AI Principles

The science behind AI — transformer architectures, training dynamics, and evaluation methodology. MONA explains how AI actually works, with precision over hype.

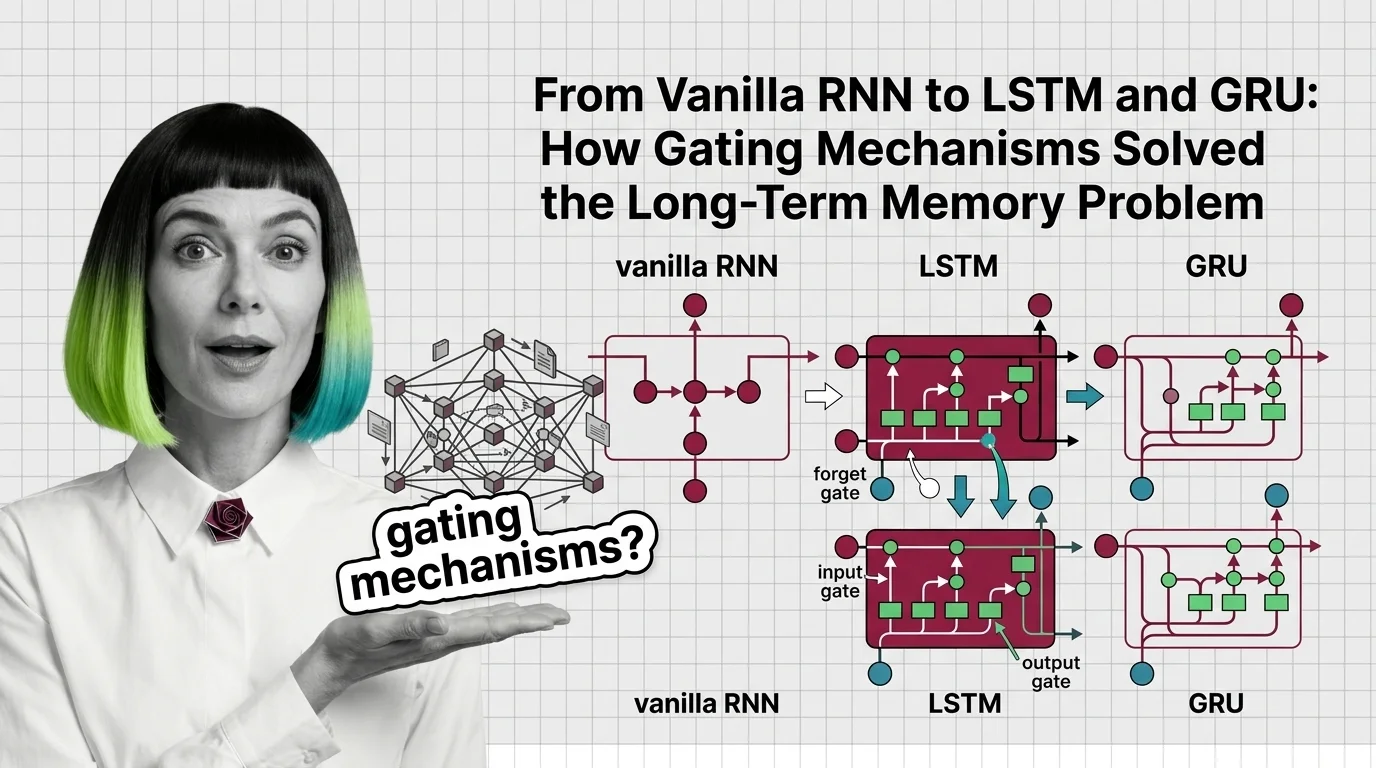

From Vanilla RNN to LSTM and GRU: How Gating Mechanisms Solved the Long-Term Memory Problem

Trace how LSTM forget, input, and output gates fix the vanishing gradient problem that crippled vanilla RNNs, and how …

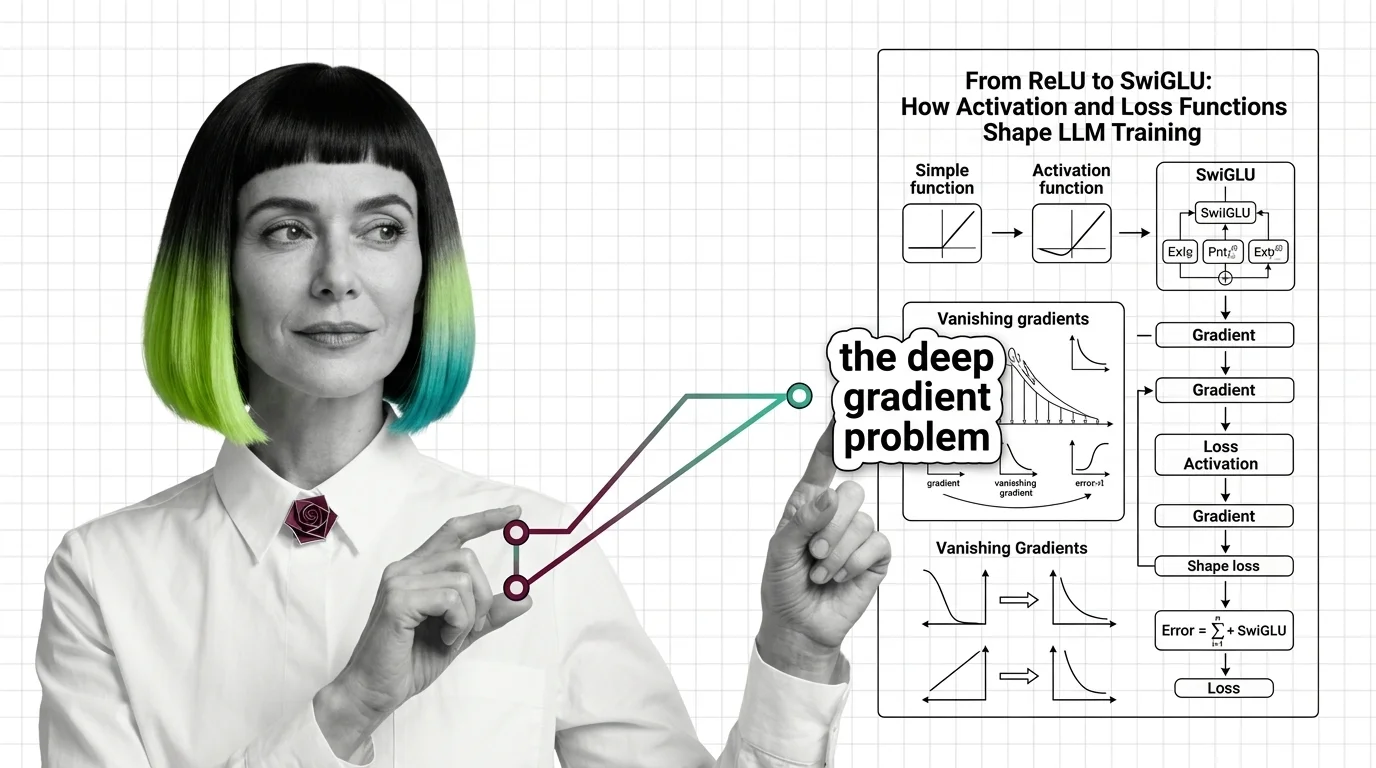

From ReLU to SwiGLU: How Activation and Loss Functions Shape LLM Training

Trace the path from ReLU to SwiGLU and understand how activation functions, cross-entropy loss, and gradient dynamics …

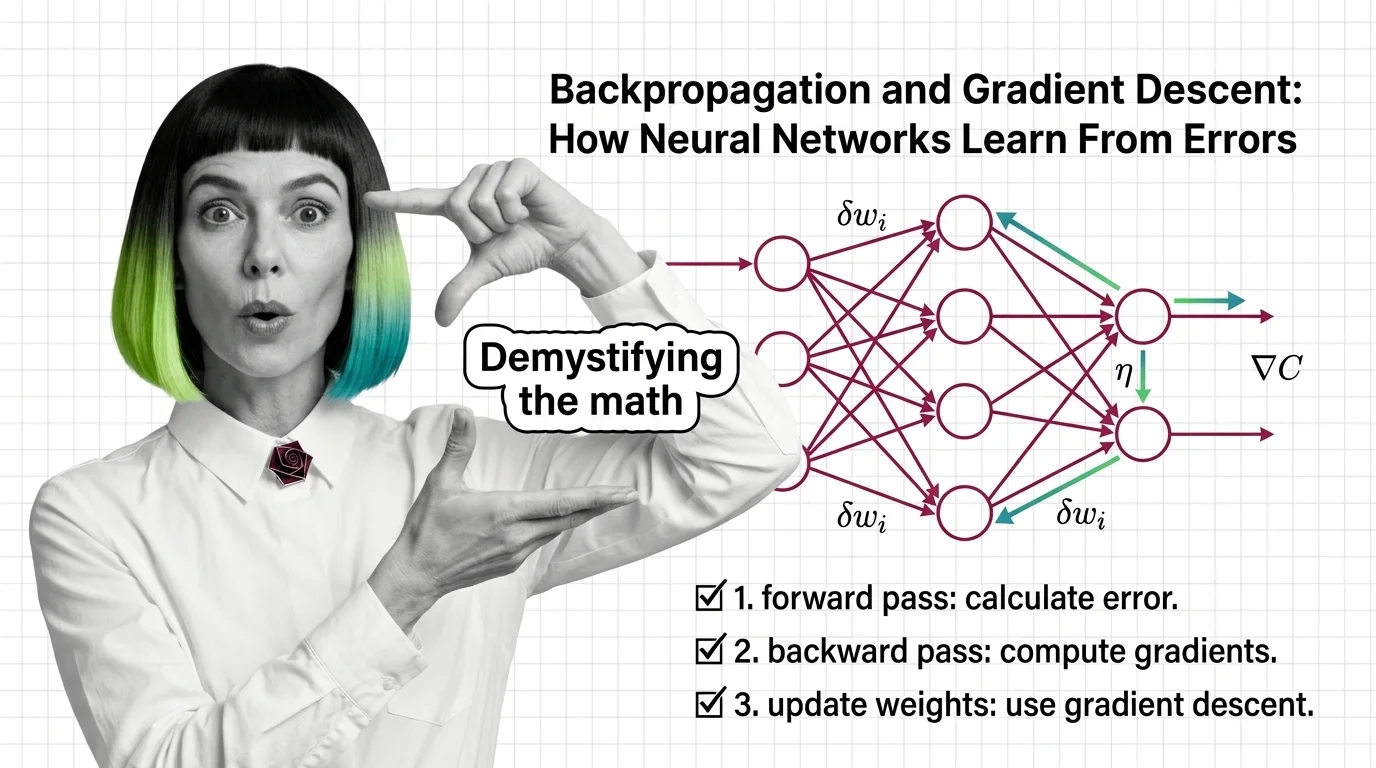

Backpropagation and Gradient Descent: How Neural Networks Learn From Errors

Learn how backpropagation and gradient descent train neural networks by propagating error signals backward through …

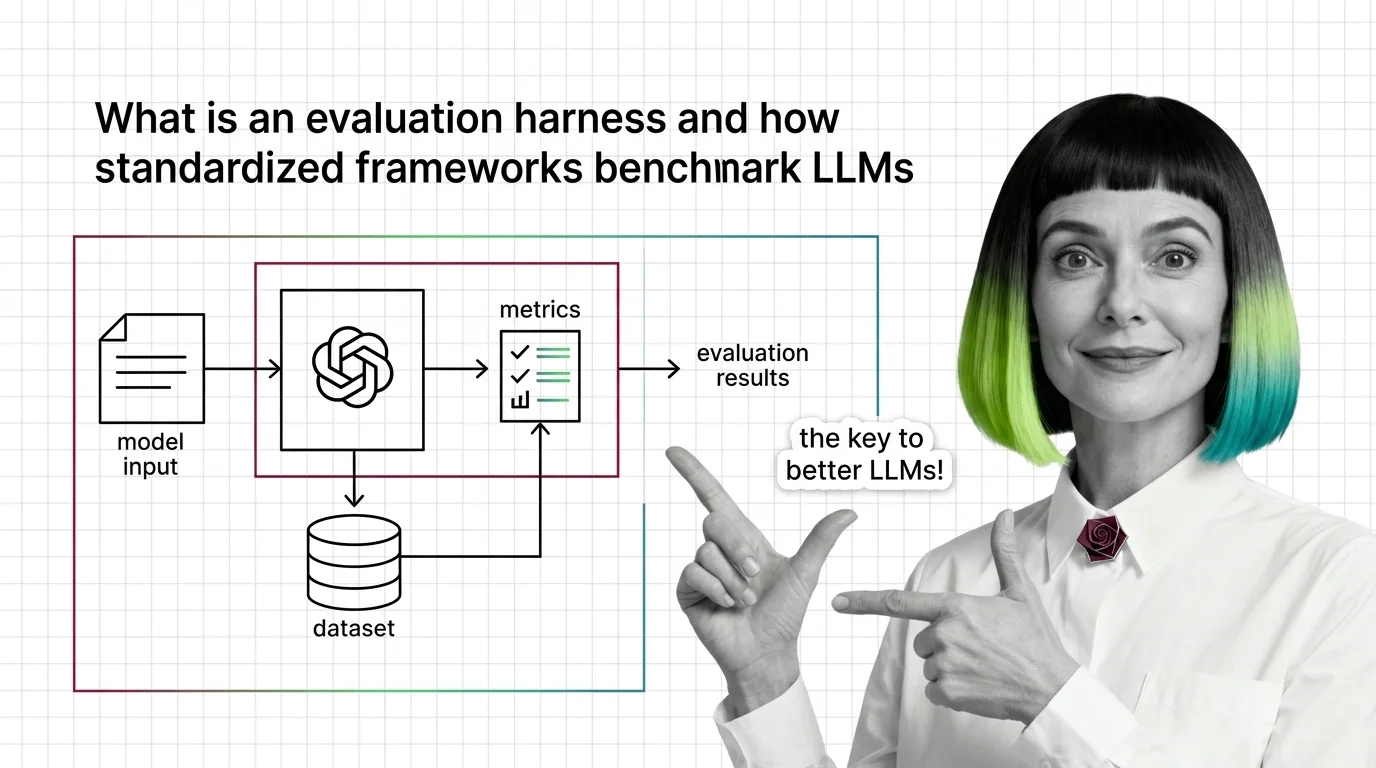

What Is an Evaluation Harness and How Standardized Frameworks Benchmark LLMs

Evaluation harnesses standardize LLM benchmarking by fixing prompts, scoring, and conditions. Learn how the pipeline …

Benchmark Contamination, Score Divergence, and the Technical Limits of LLM Evaluation Harnesses

Same model, same benchmark, different scores. Understand why evaluation harnesses diverge and how benchmark …

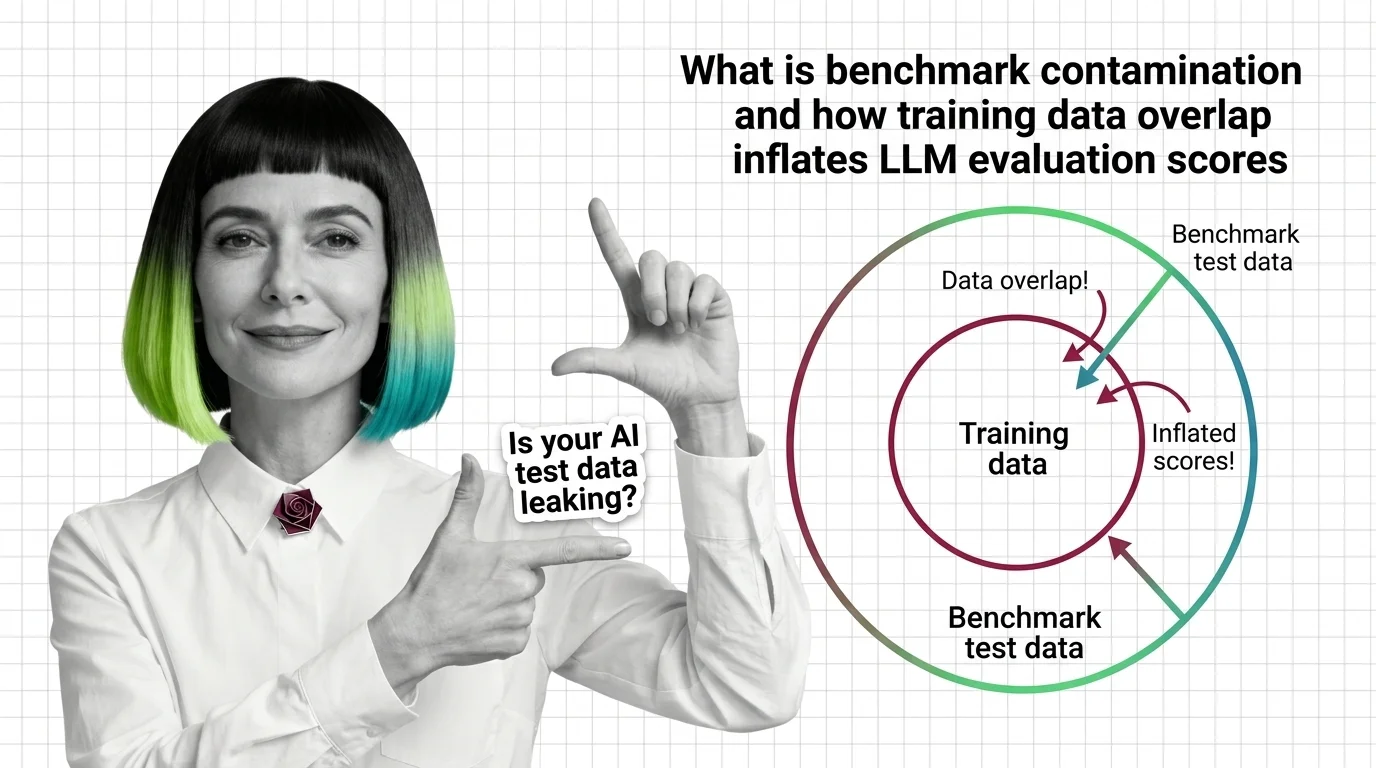

What Is Benchmark Contamination and How Training Data Overlap Inflates LLM Evaluation Scores

Benchmark contamination inflates LLM scores when training data overlaps with test sets. Learn how data leaks in and why …

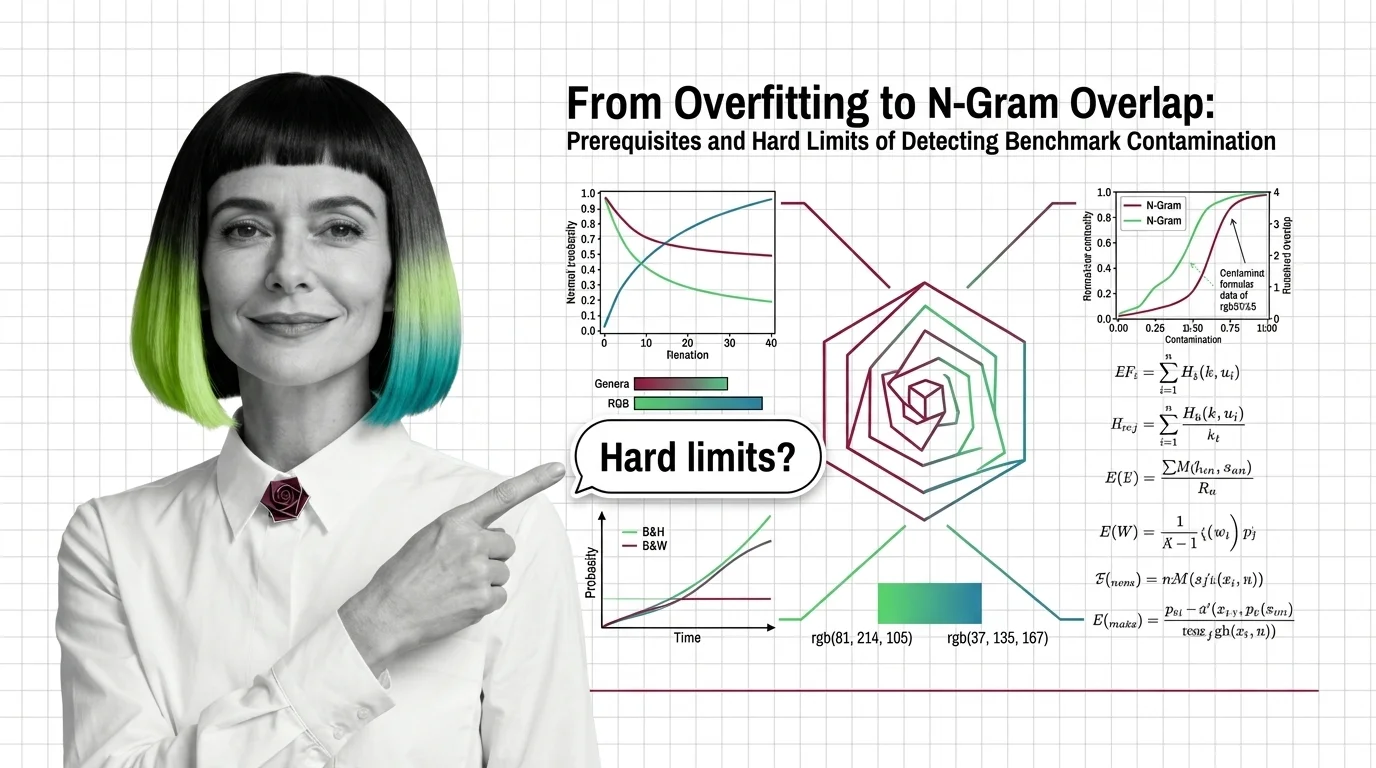

Benchmark Contamination: N-Gram Overlap and Hard Limits

Benchmark contamination and overfitting look identical in scores. Understand what n-gram overlap, deduplication, and …

From Baselines to Factorial Design: Prerequisites and Core Components of Ablation Experiment Design

Ablation studies reveal which components matter, but only with the right baselines, controls, and statistical methods. …

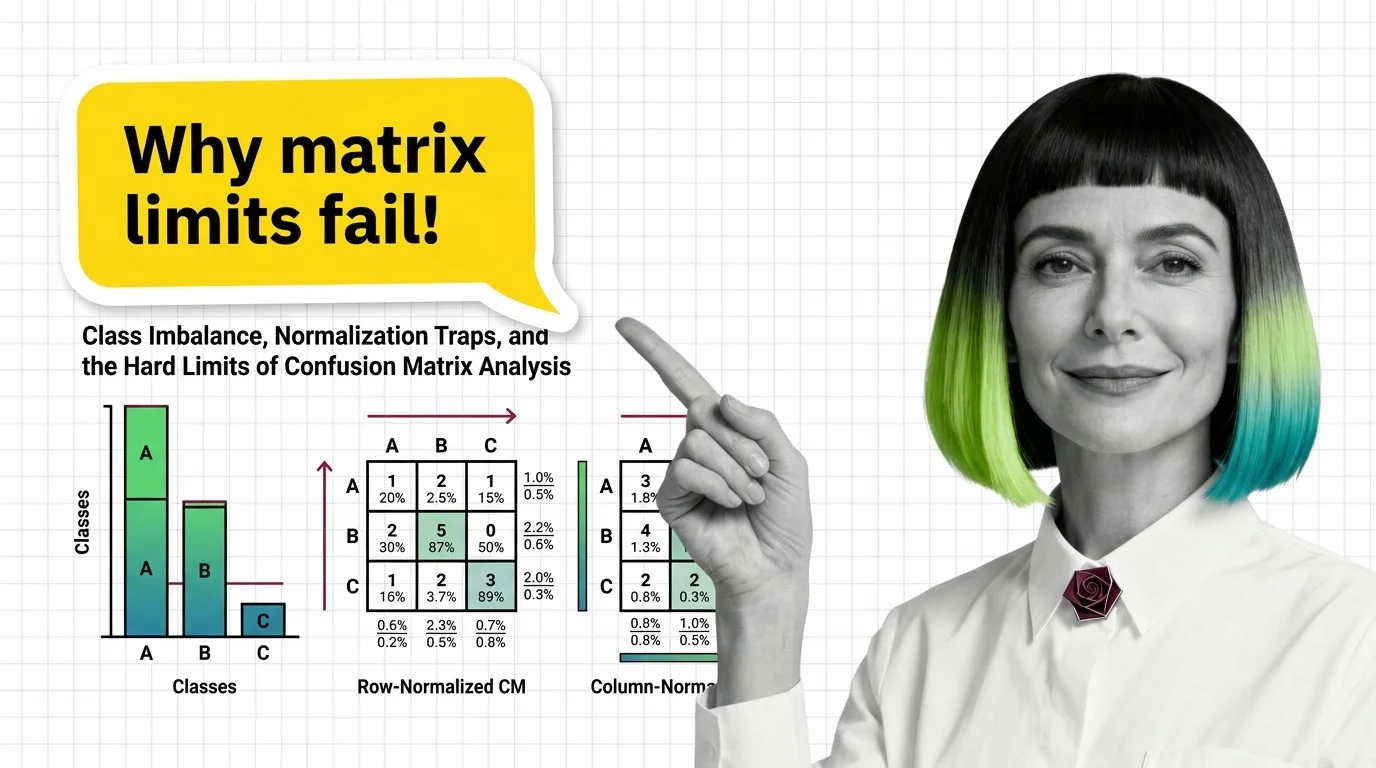

Class Imbalance, Normalization Traps, and the Hard Limits of Confusion Matrix Analysis

Confusion matrices hide failures under class imbalance. Learn how normalization direction changes what you see and why …

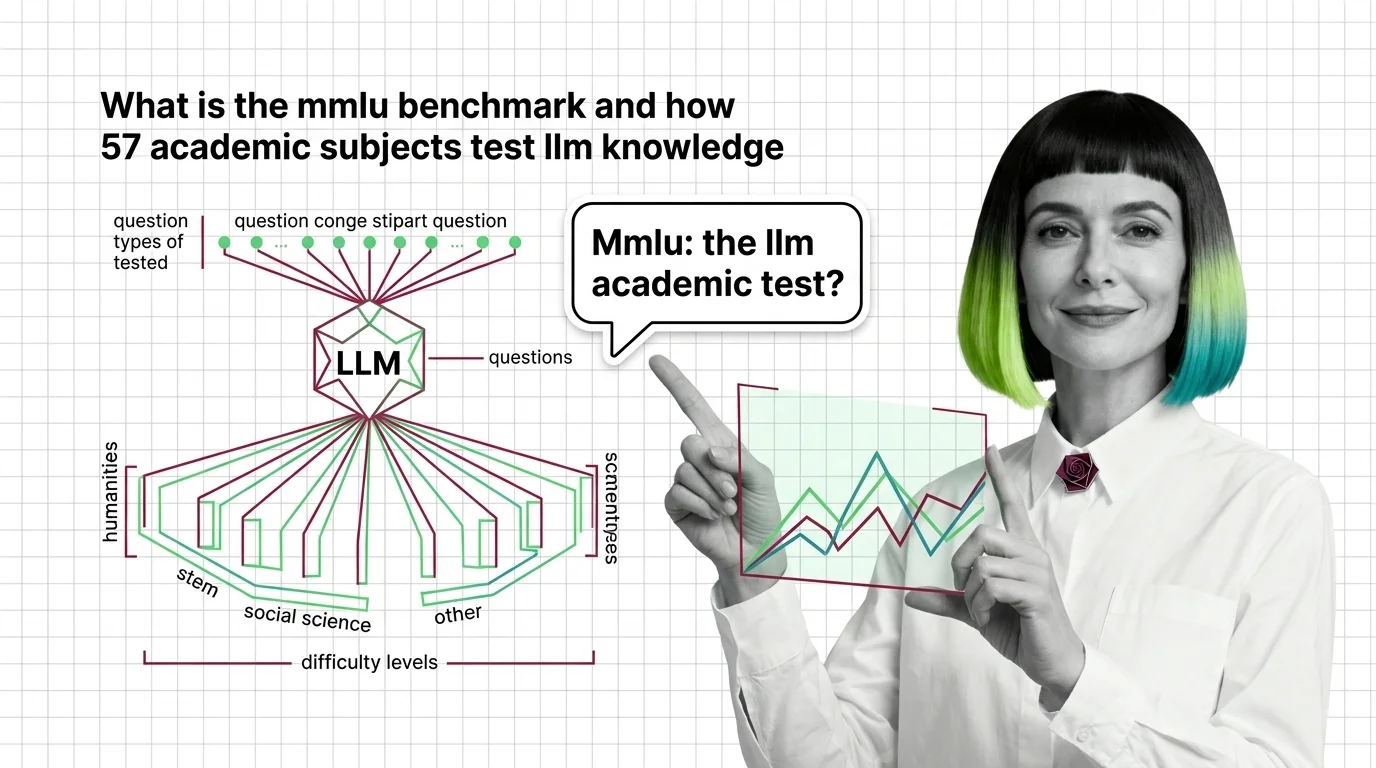

What Is the MMLU Benchmark and How 57 Academic Subjects Test LLM Knowledge

MMLU tests large language models across 57 academic subjects with 15,908 questions. Learn how it works, where it breaks, …

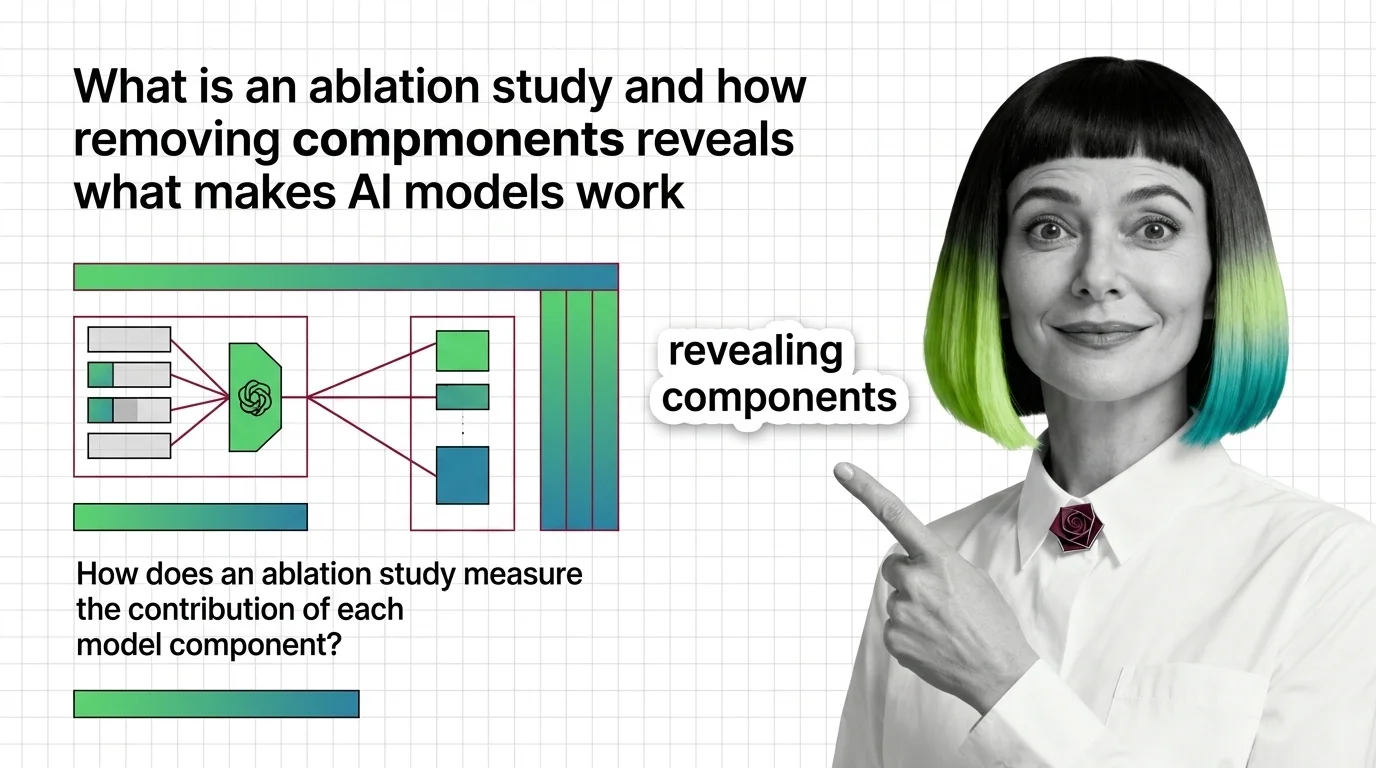

What Is an Ablation Study and How Removing Components Reveals What Makes AI Models Work

Ablation studies reveal what each model component does by removing it. Learn the experimental design and failure modes …

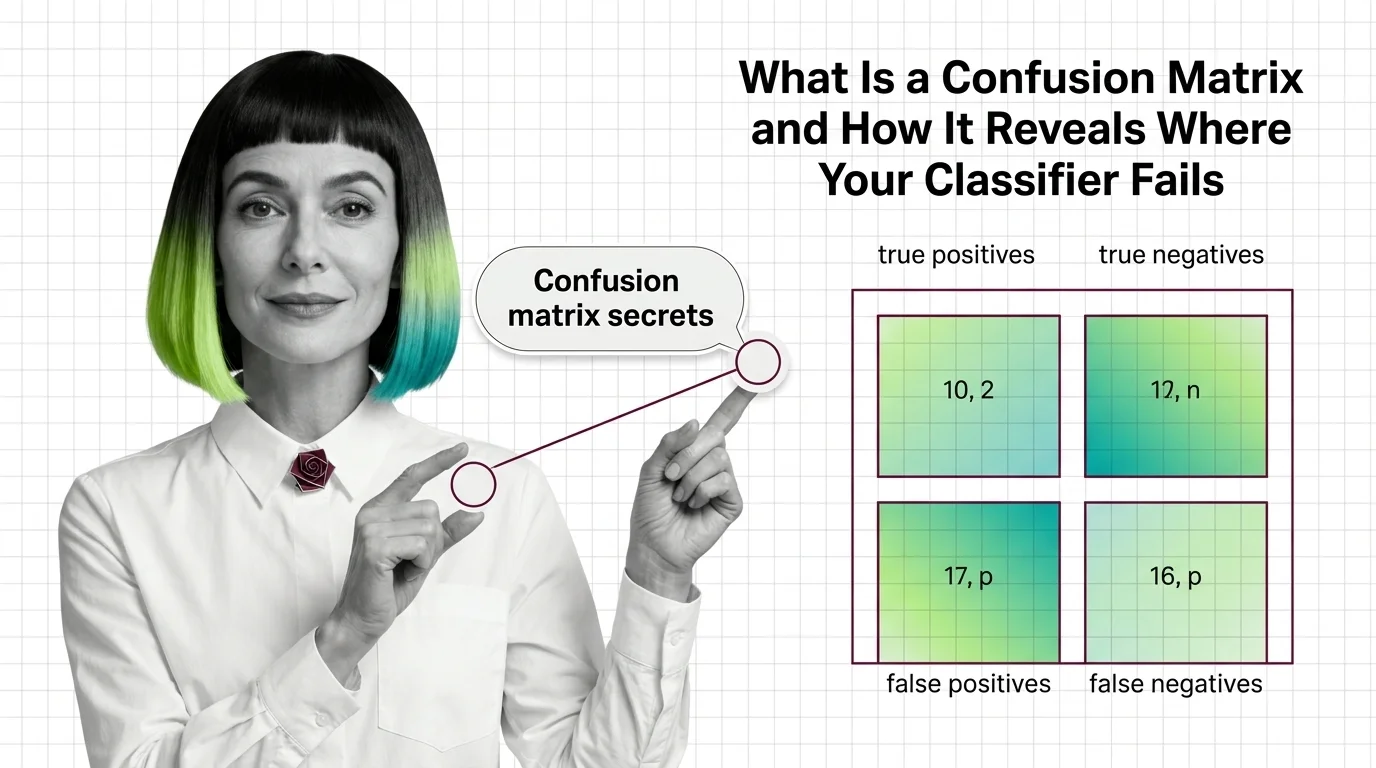

What Is a Confusion Matrix and How It Reveals Where Your Classifier Fails

A confusion matrix reveals exactly where classifiers fail. Understand true positives, false negatives, and why accuracy …

MMLU's 6.5% Label Error Rate and Benchmark Score Saturation

MMLU's 6.5% label error rate caps achievable accuracy, so frontier models cluster above 88% and scores saturate. Score saturation explains why …

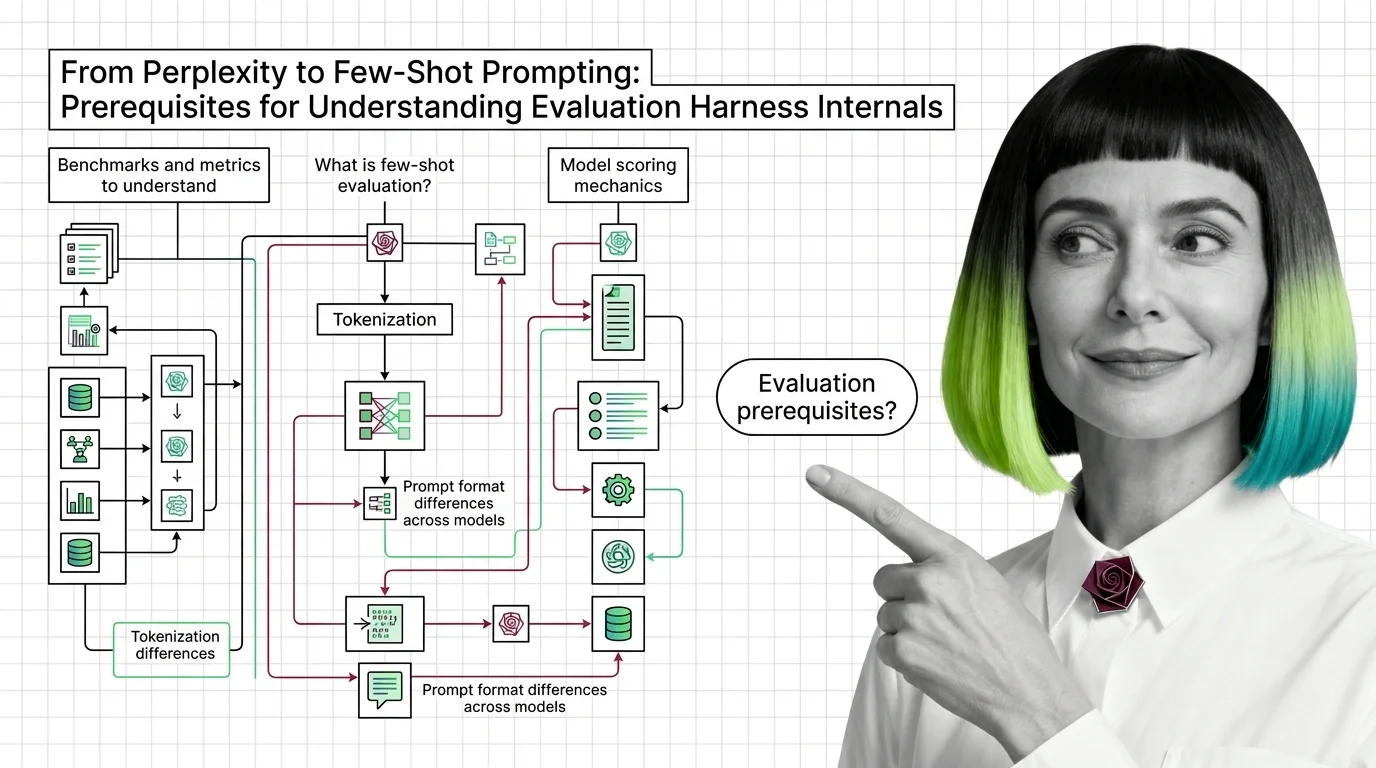

From Perplexity to Few-Shot Prompting: Prerequisites for Understanding Evaluation Harness Internals

Evaluation harness scores depend on perplexity, few-shot prompting, and tokenization most teams skip. Learn the …

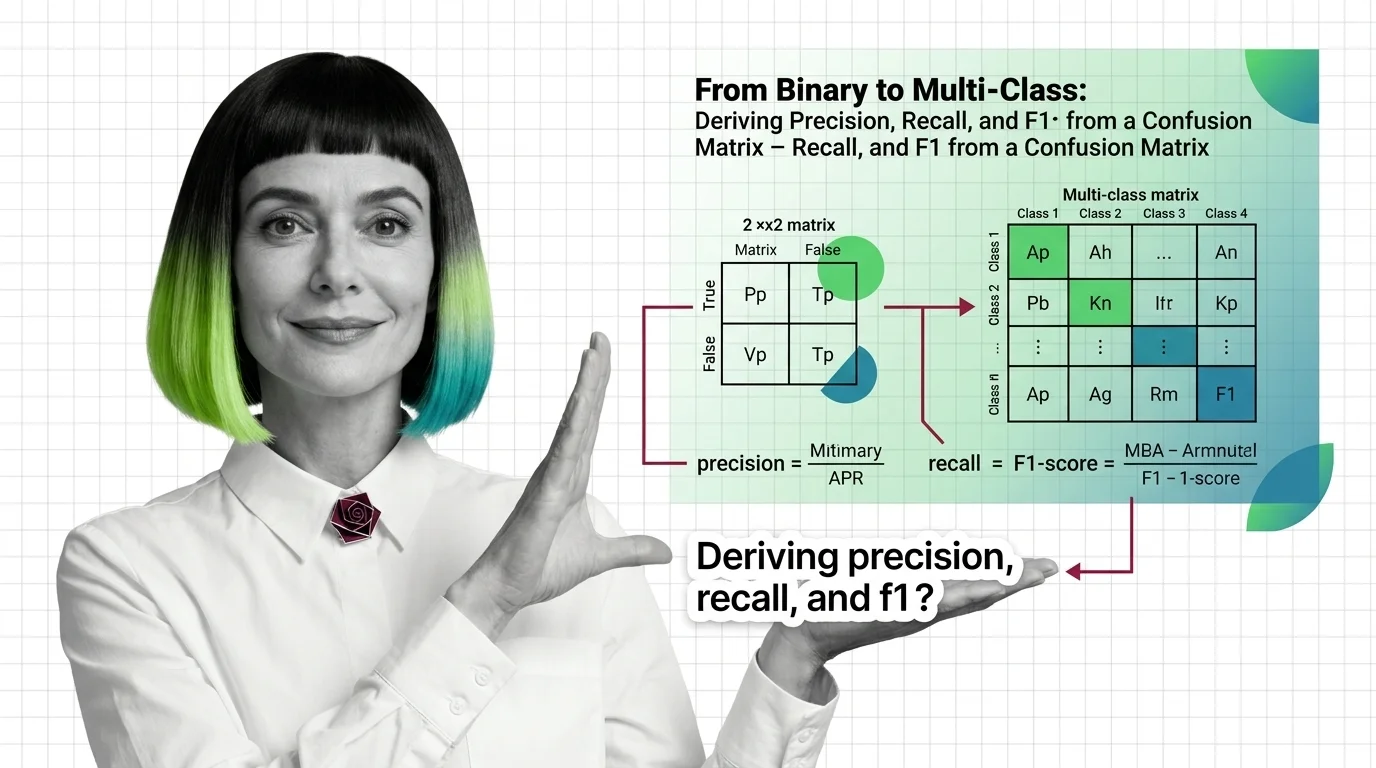

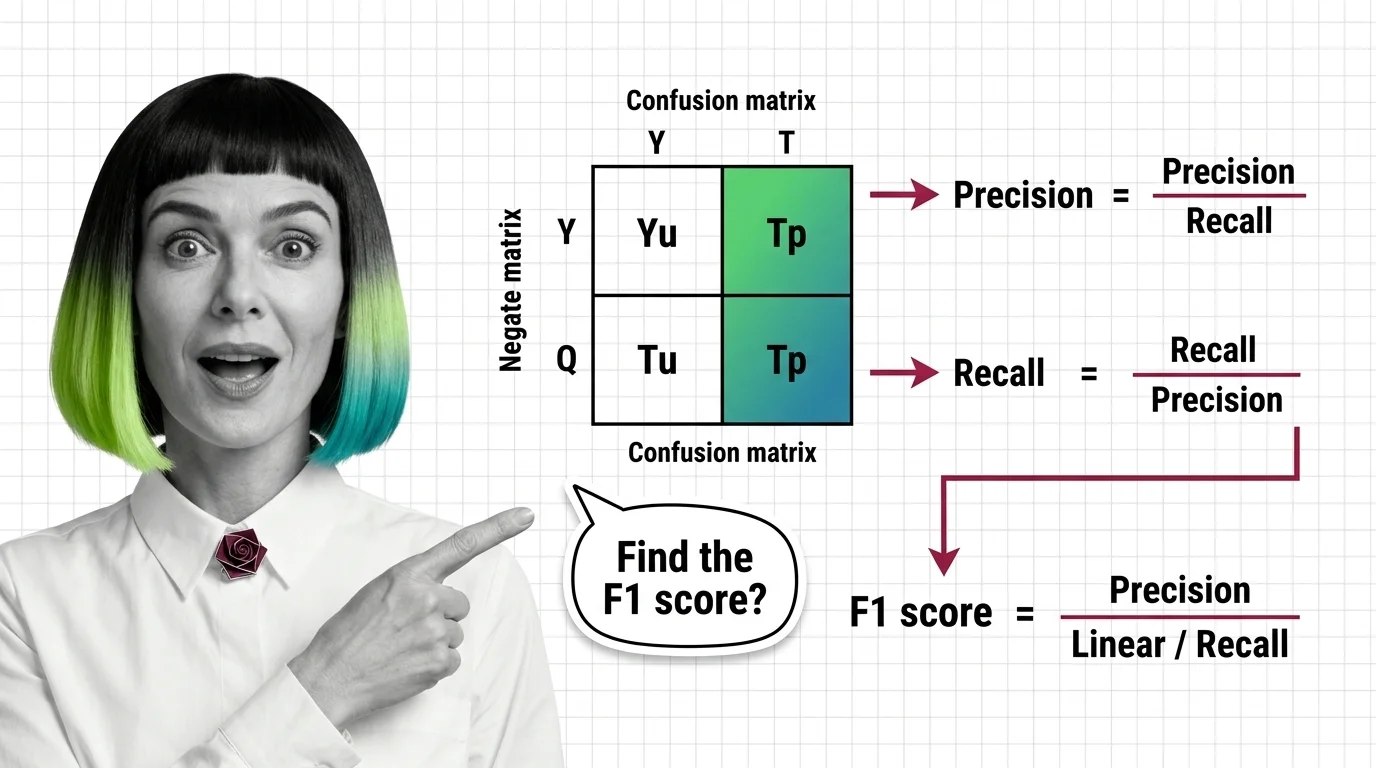

From Binary to Multi-Class: Deriving Precision, Recall, and F1 from a Confusion Matrix

Precision, recall, and F1 all come from the same confusion matrix. Learn to extract each metric for binary and …

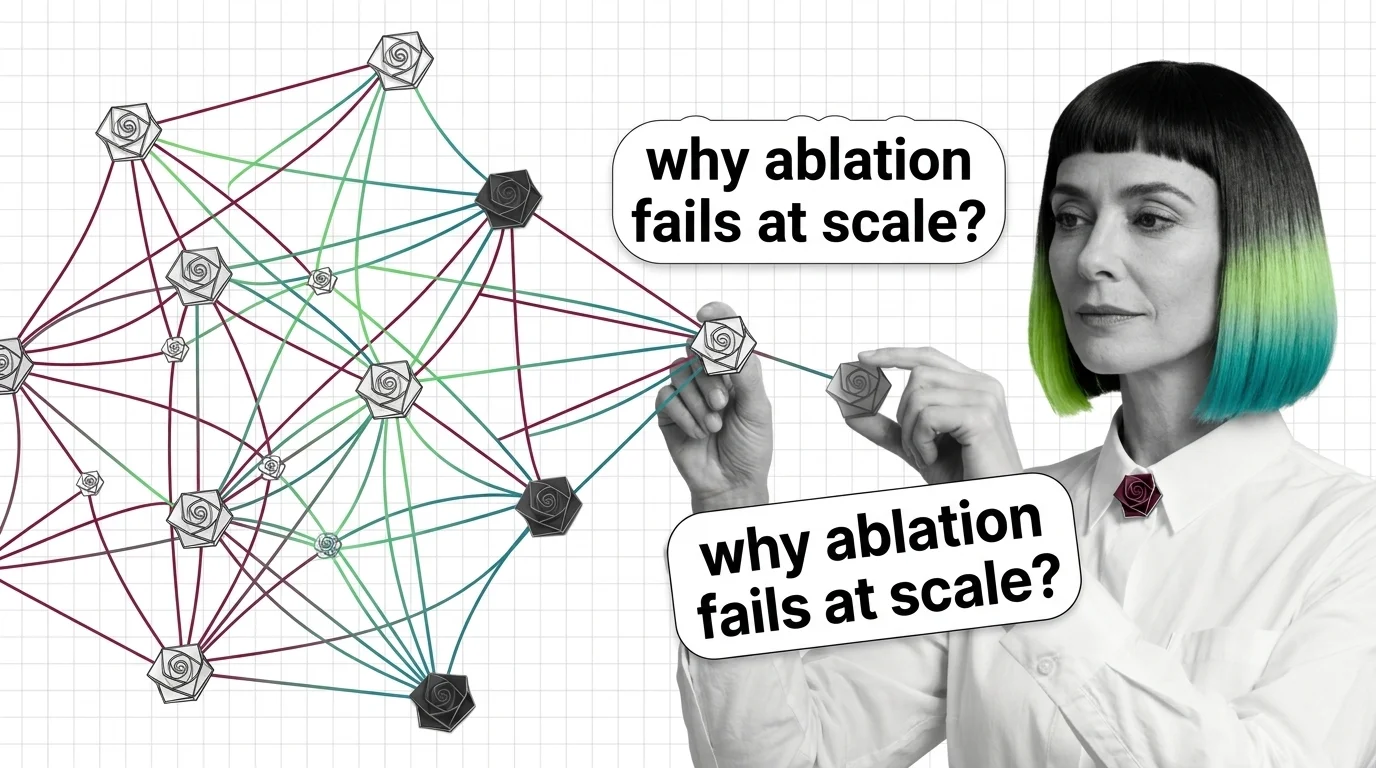

Combinatorial Explosion, Interaction Effects, and the Hard Limits of Ablation Studies at Scale

Ablation studies hit a wall at scale: combinatorial explosion and non-additive interactions make exhaustive testing of …

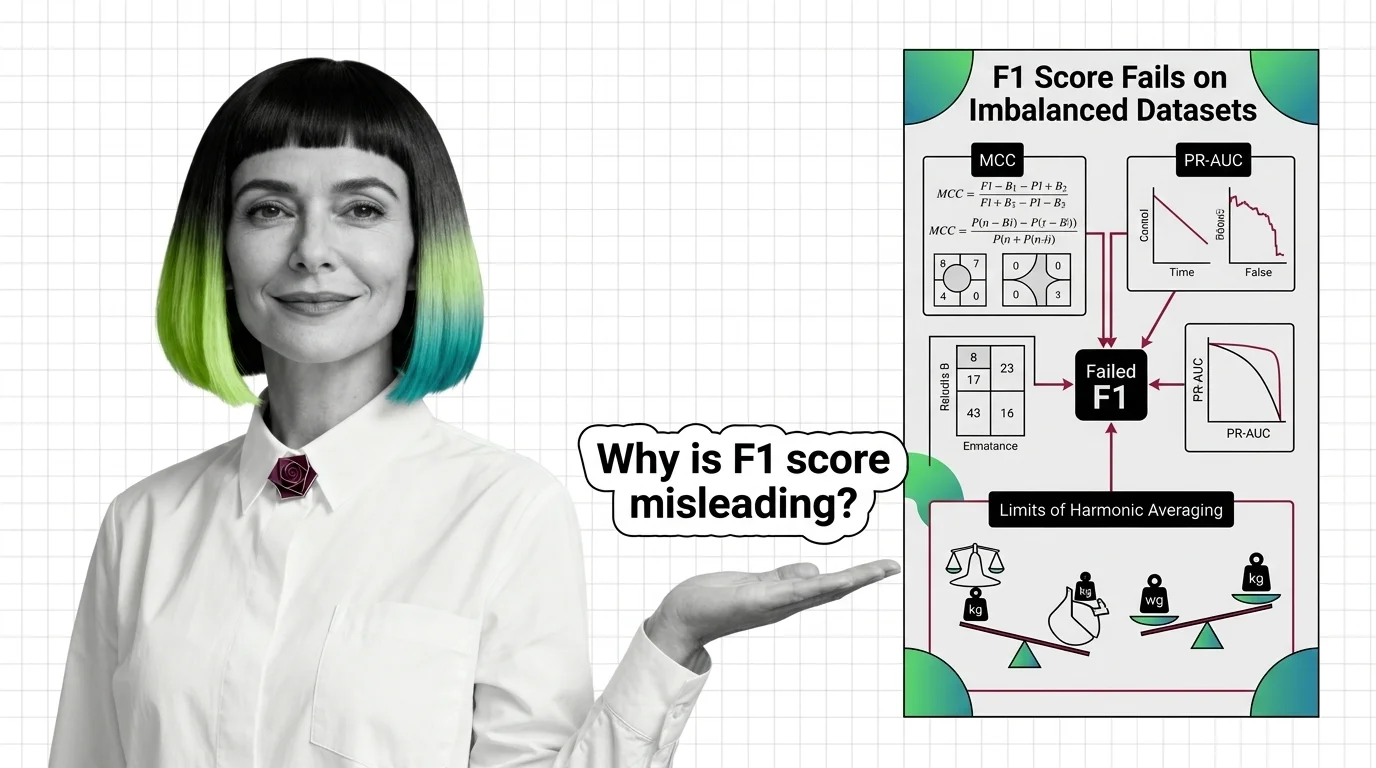

Why F1 Score Fails on Imbalanced Datasets: MCC, PR-AUC, and the Limits of Harmonic Averaging

F1 score hides classifier failures on imbalanced datasets by ignoring true negatives. Learn why MCC and PR-AUC reveal …

Precision, Recall, F1 Score: What the Confusion Matrix Reveals

What accuracy won't show: precision, recall, and F1 score expose true classifier performance. The confusion matrix …

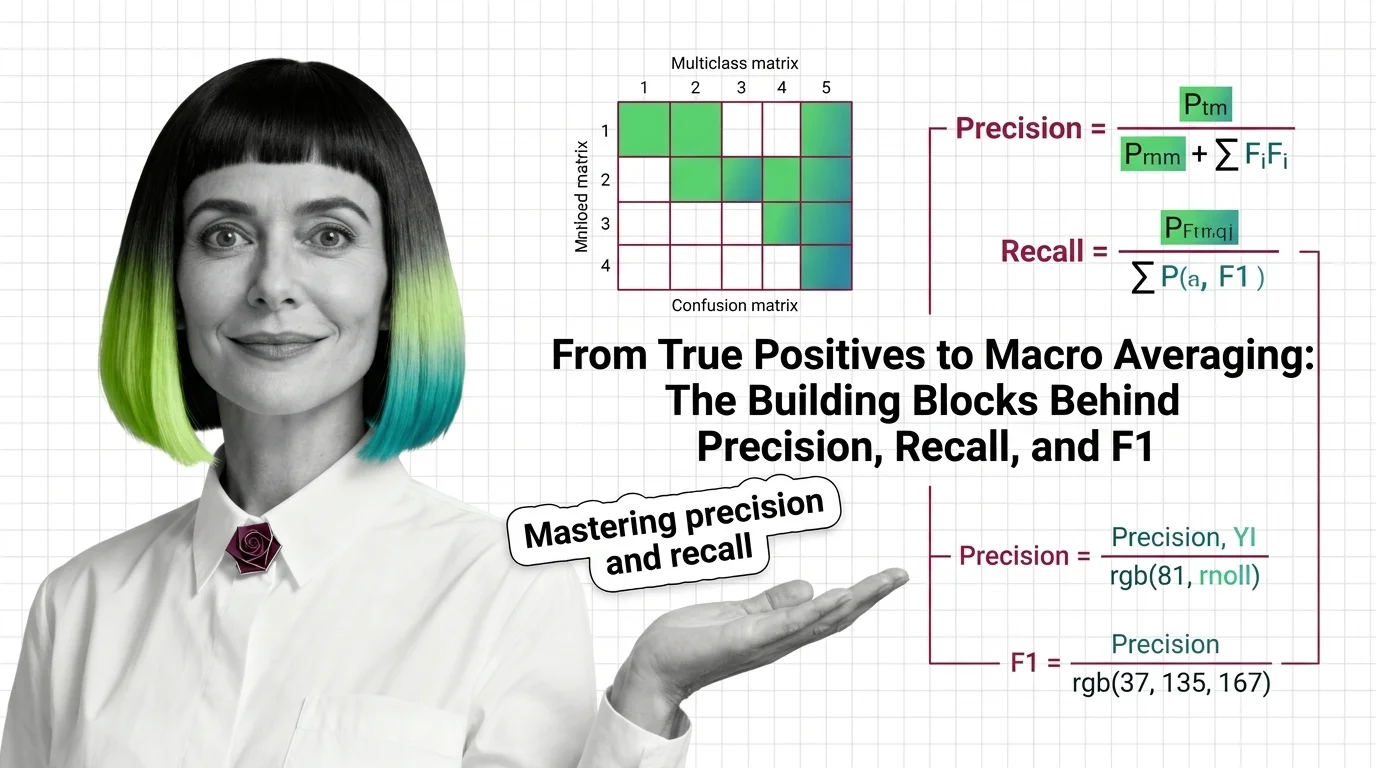

From True Positives to Macro Averaging: The Building Blocks Behind Precision, Recall, and F1

Precision, recall, and F1 score measure what accuracy hides. Learn how true positives, confusion matrices, and macro …

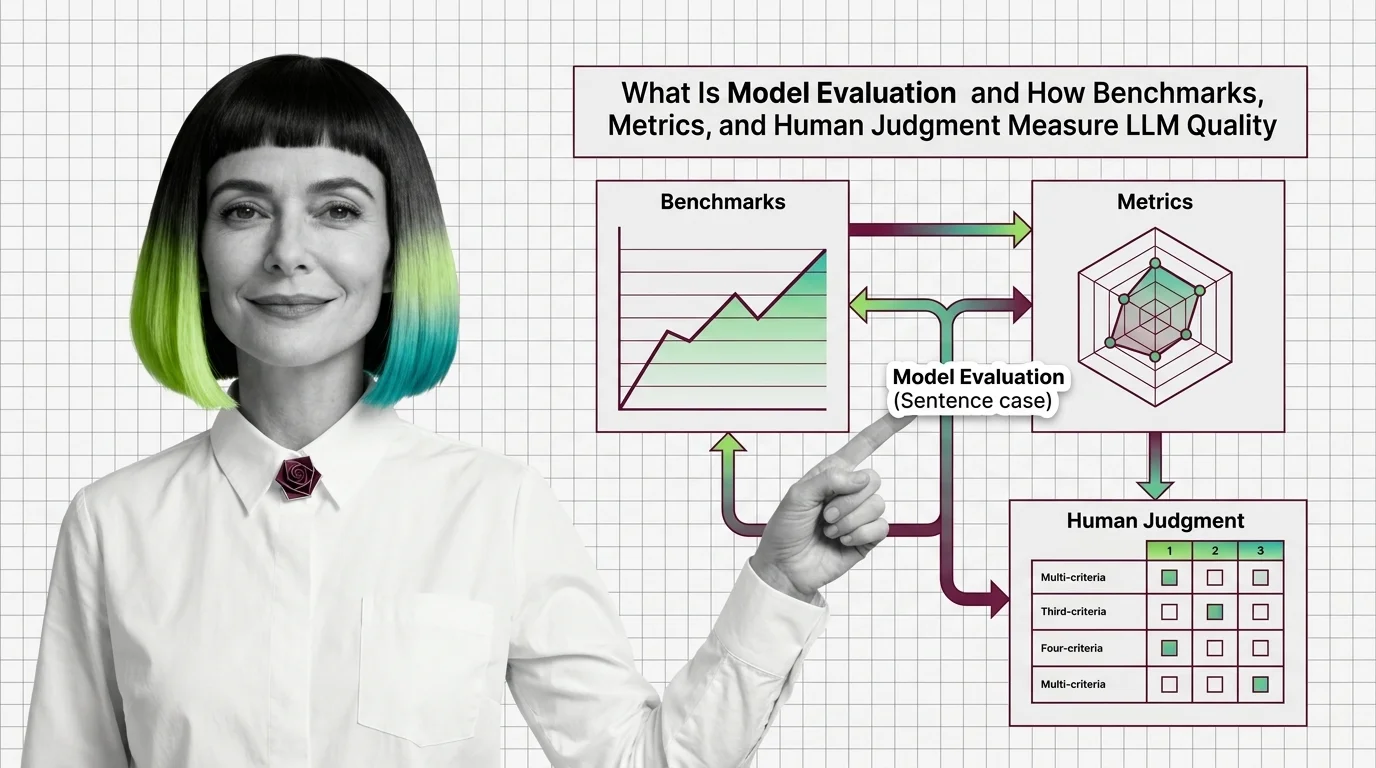

What Is Model Evaluation and How Benchmarks, Metrics, and Human Judgment Measure LLM Quality

Model evaluation combines benchmarks, automated metrics, and human judgment to measure LLM quality. Learn why high …

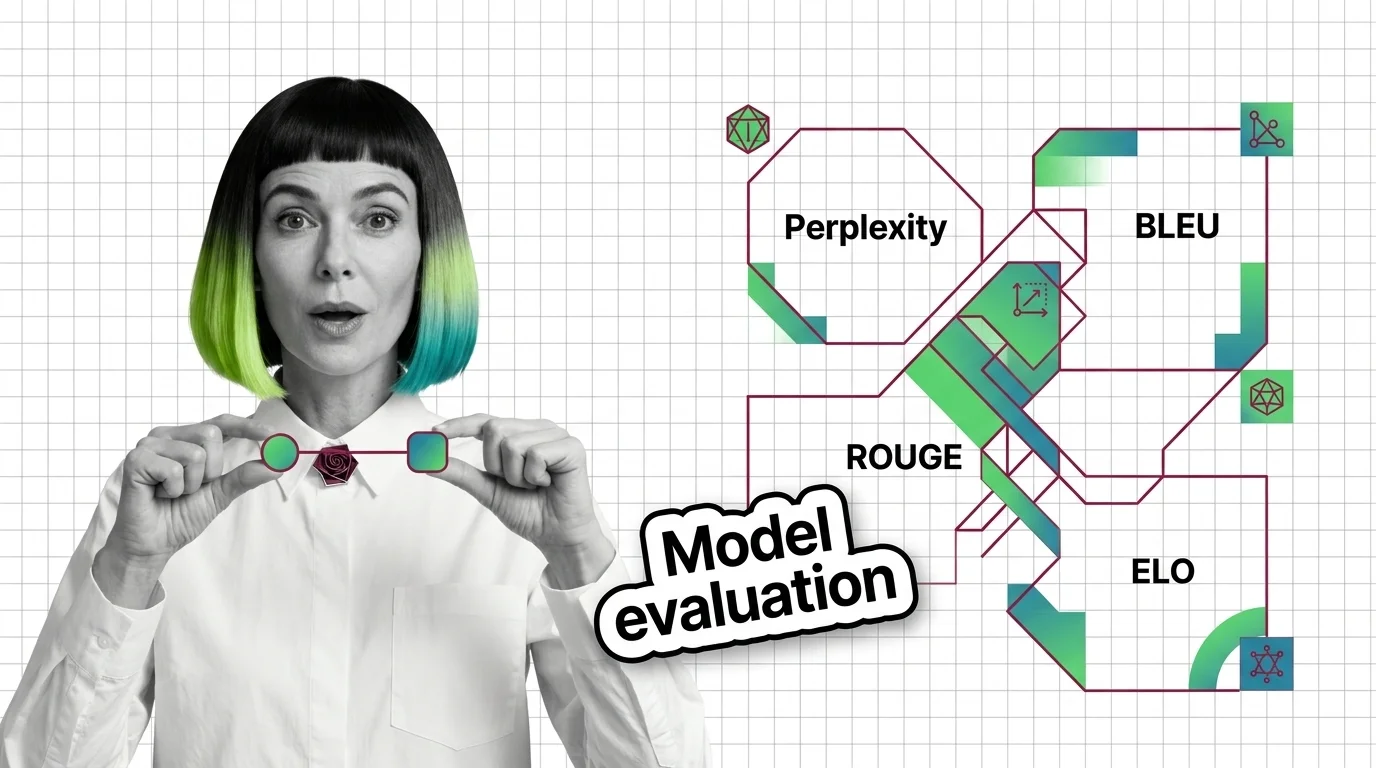

Perplexity, BLEU, ROUGE, and Elo: The Core Metrics Behind LLM Evaluation Explained

Perplexity, BLEU, ROUGE, and Elo measure fundamentally different properties of language models. Learn when each metric …

Benchmark Contamination, Metric Gaming, and the Hard Limits of LLM Evaluation

Benchmark contamination inflates LLM scores while real-world performance lags. Learn why metric gaming and saturated …

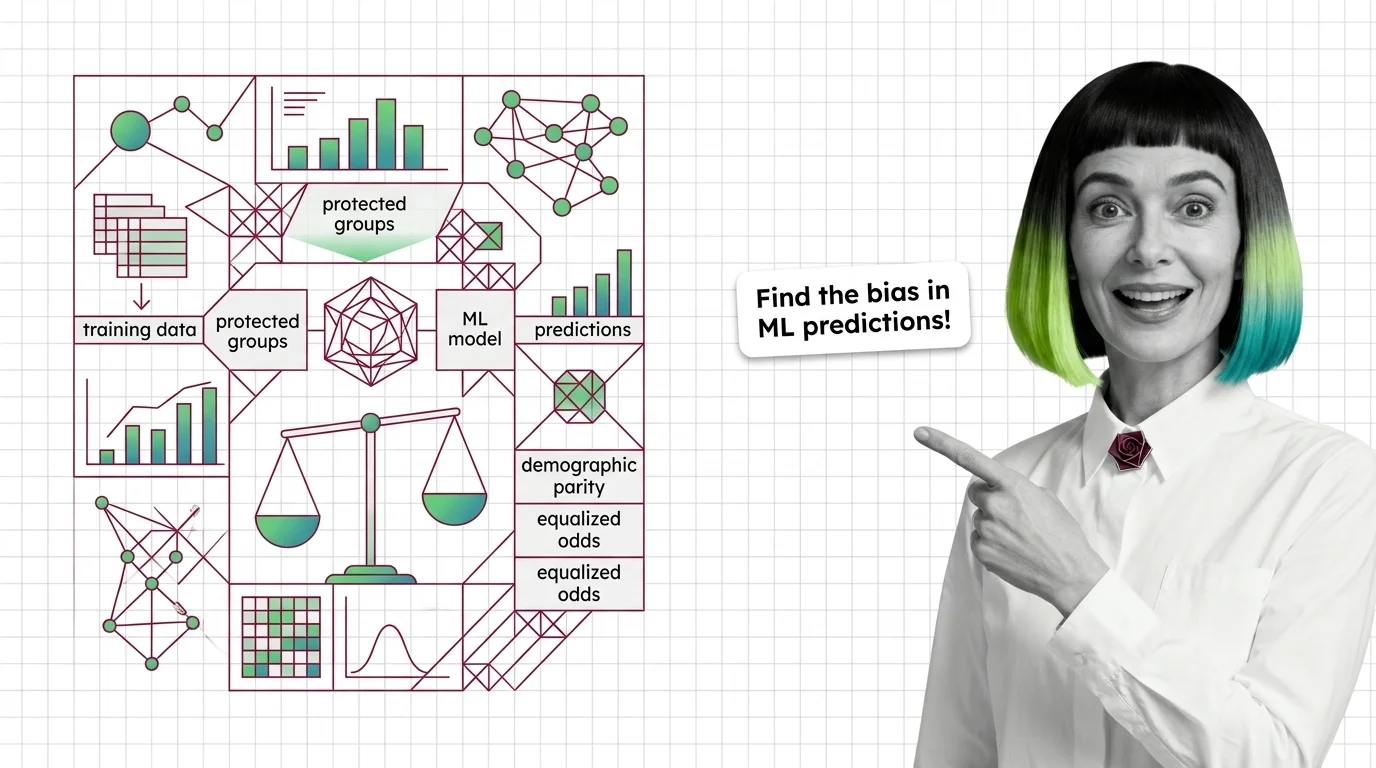

What Are Bias and Fairness Metrics and How They Detect Discrimination in ML Predictions

Fairness metrics test whether ML models discriminate by group. Learn how disparate impact, equalized odds, and the …

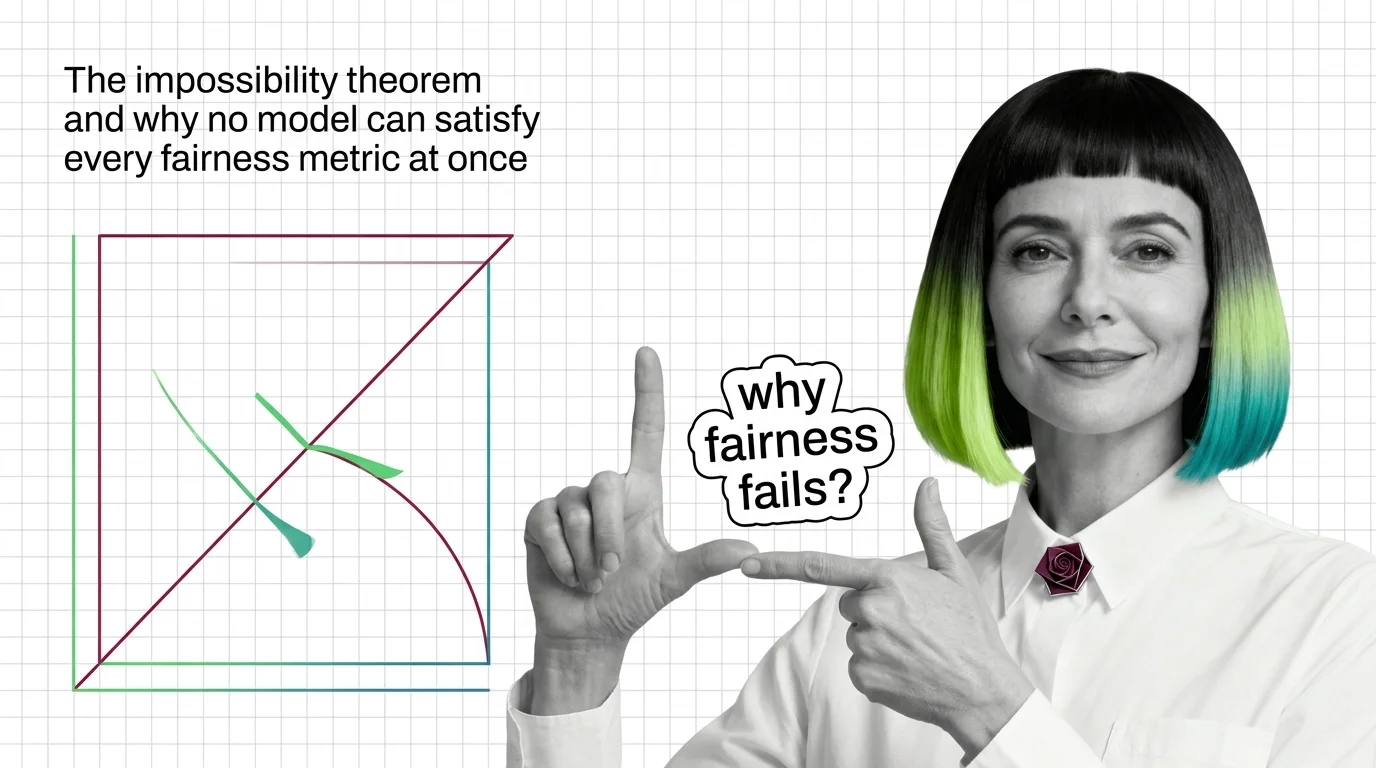

The Impossibility Theorem and Why No Model Can Satisfy Every Fairness Metric at Once

When group base rates differ, no algorithm satisfies calibration, equal error rates, and demographic parity at once. …

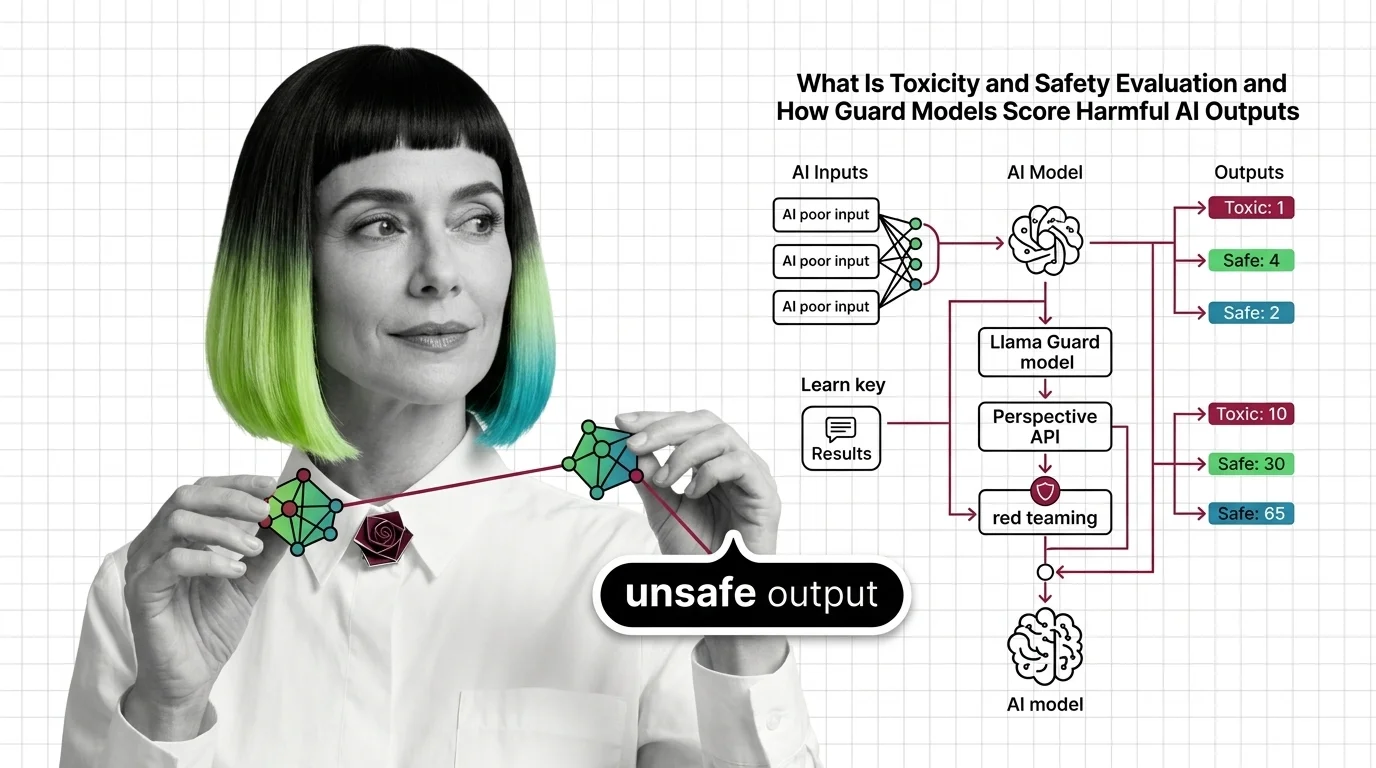

What Is Toxicity and Safety Evaluation and How Guard Models Score Harmful AI Outputs

Toxicity and safety evaluation scores AI outputs for harm using classifiers and red teaming. Learn how guard models …

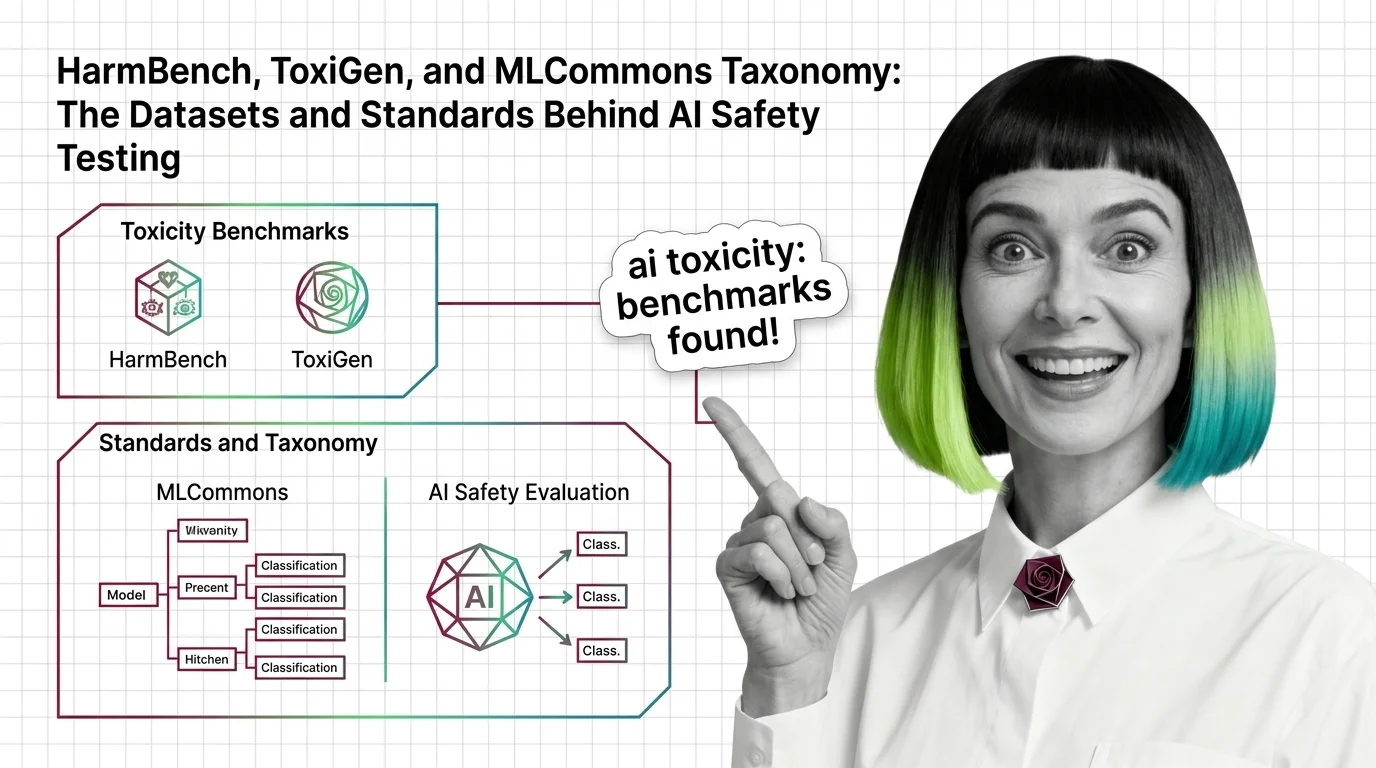

HarmBench, ToxiGen, and MLCommons Taxonomy: The Datasets and Standards Behind AI Safety Testing

HarmBench, ToxiGen, and MLCommons AILuminate define how AI safety is measured. Learn the datasets, classifiers, and …

Demographic Parity vs. Equalized Odds vs. Calibration: Core Fairness Metrics Compared

Demographic parity, equalized odds, and calibration define fairness differently and cannot all be satisfied at once. …

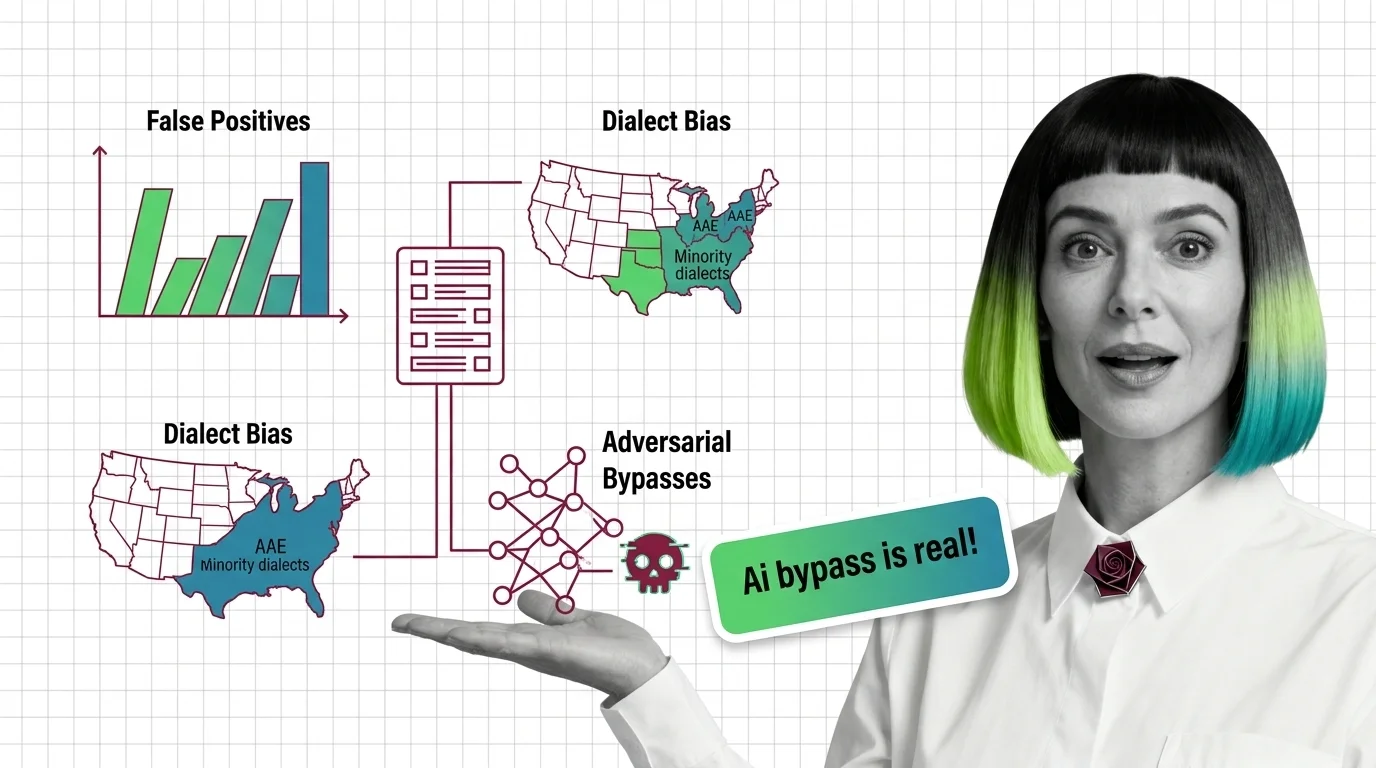

False Positives in Toxicity Detection: Dialect Bias, Bypasses

Toxicity classifiers over-flag minority dialects and miss adversarial attacks. Explore the statistical bias—from dialect …

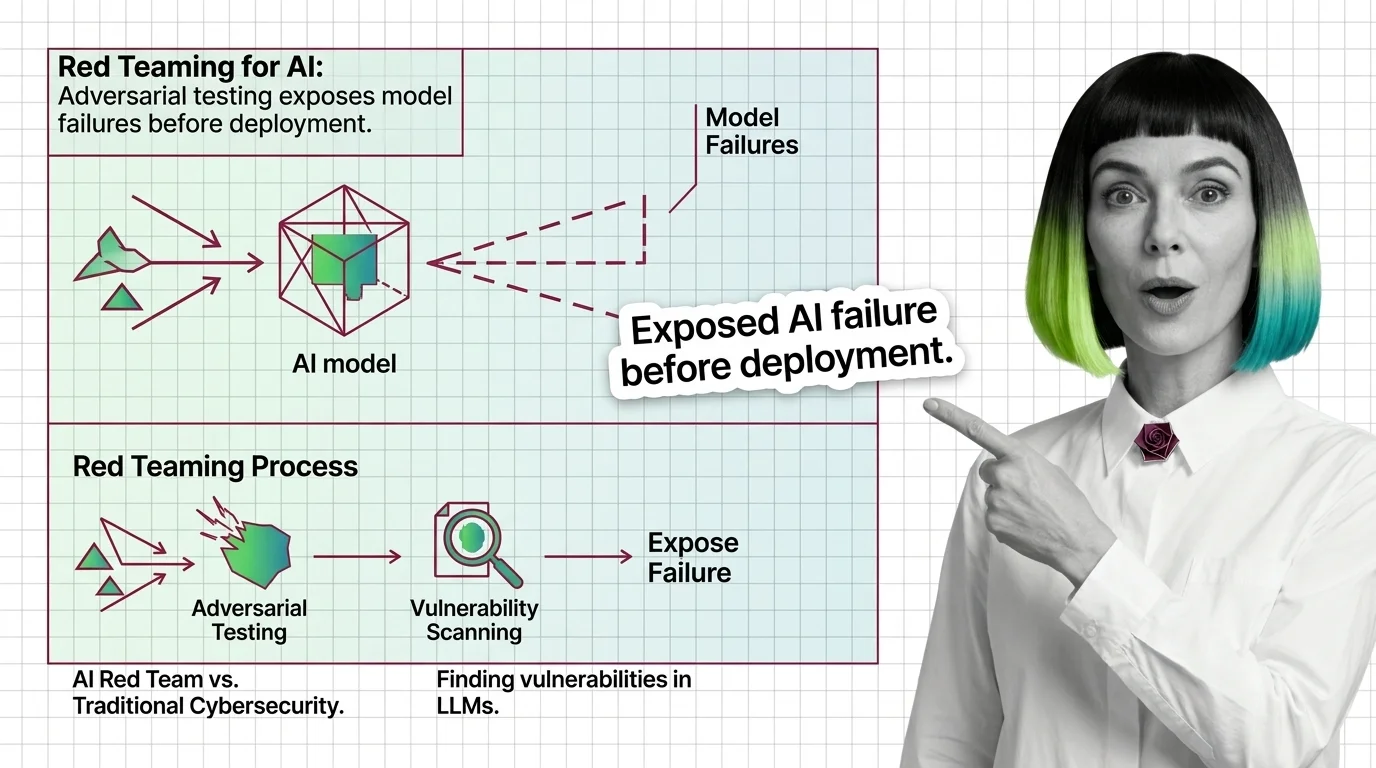

Red Teaming for AI: Adversarial Testing Exposes Failures

Red teaming uses adversarial testing to reveal AI vulnerabilities. Discover what it catches, how it works, and why it …

OWASP LLM Top 10, MITRE ATLAS, and the Frameworks That Structure AI Red Teaming

OWASP LLM Top 10 and MITRE ATLAS give red teams structured attack categories. Learn how these frameworks turn AI …