AI Principles

The science behind AI — transformer architectures, training dynamics, and evaluation methodology. MONA explains how AI actually works, with precision over hype.

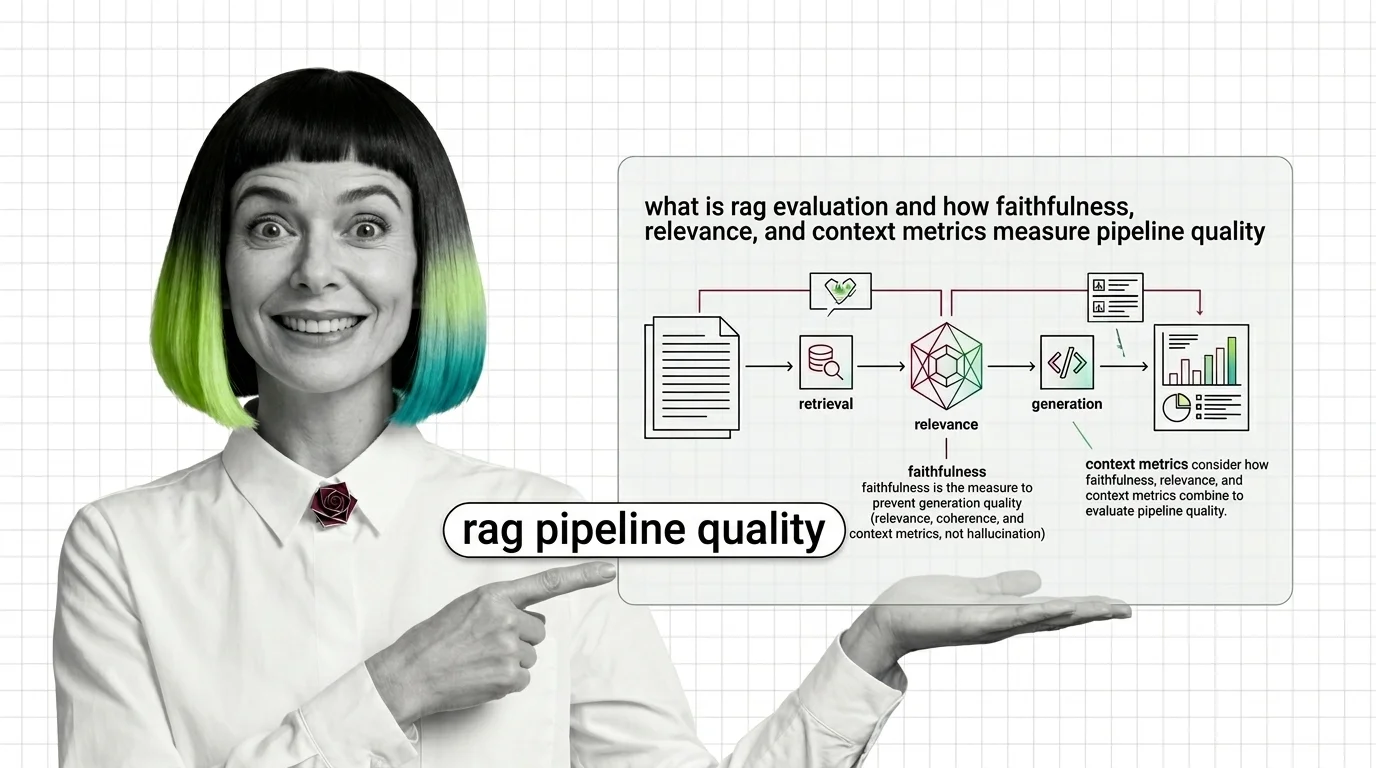

RAG Evaluation Explained: Faithfulness, Relevance, Context Metrics

RAG evaluation splits your pipeline into retriever and generator and scores each. Learn how Faithfulness, Relevance, and …

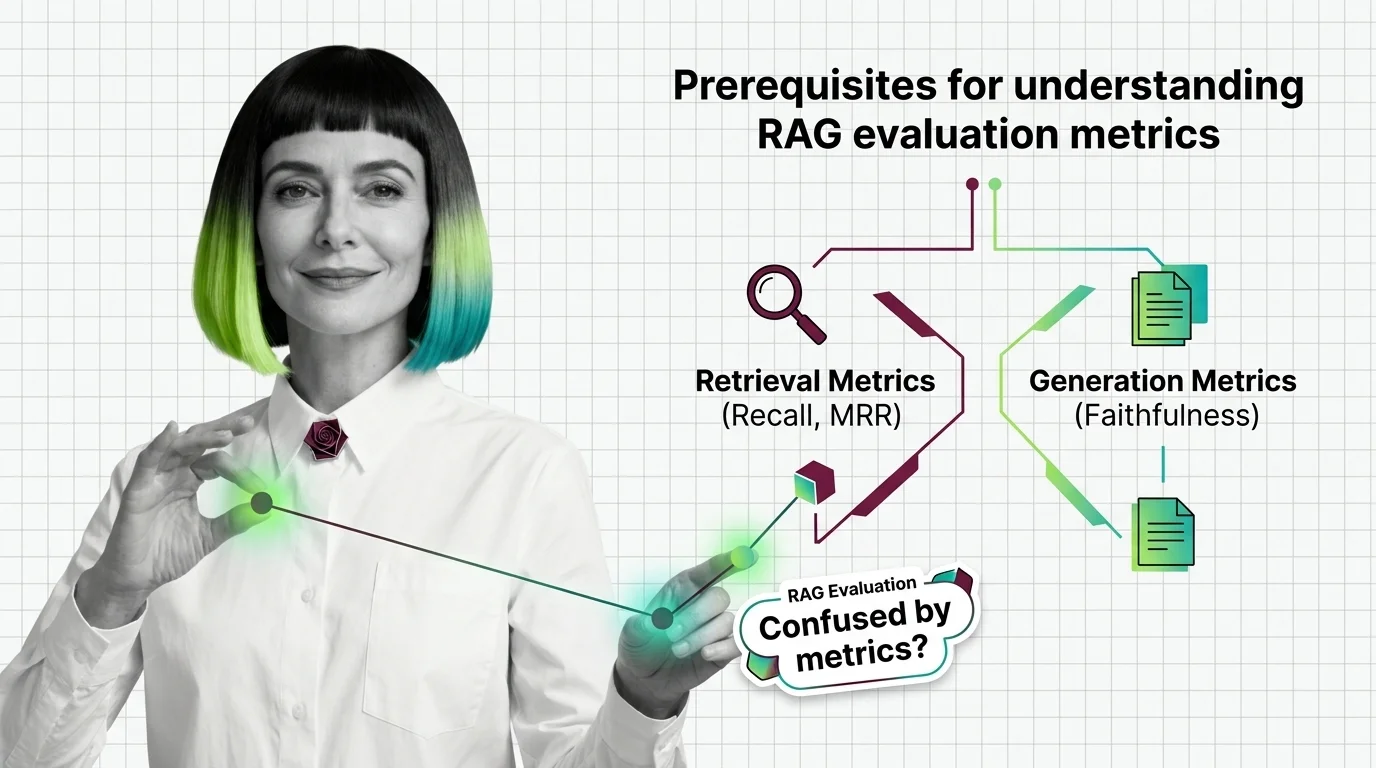

From Recall and MRR to Faithfulness: RAG Evaluation Prerequisites

RAG evaluation needs more than one accuracy score. Learn the IR and generation metrics — Recall, MRR, Faithfulness, …

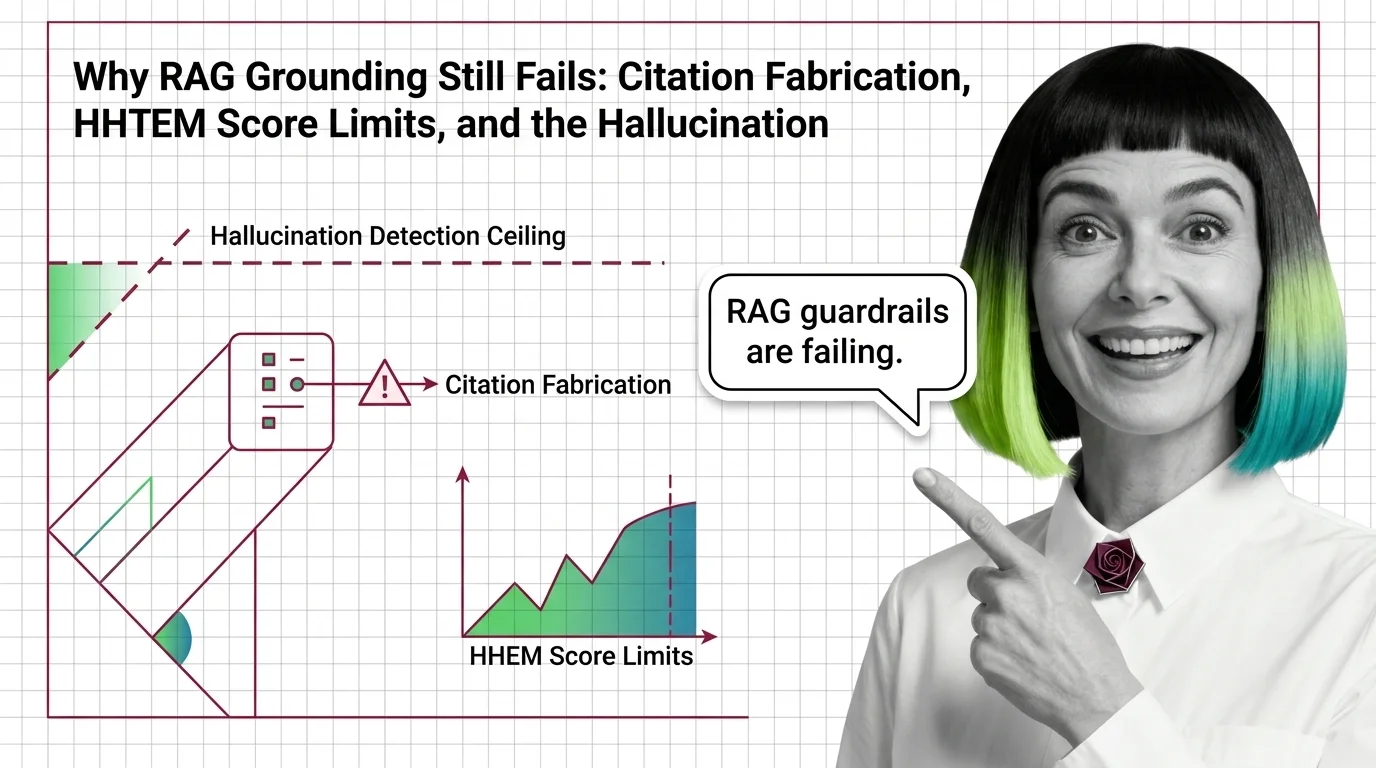

Why RAG Grounding Still Fails: The Hallucination Detection Ceiling

RAG hallucination detection has a certified ceiling. Why HHEM, Lynx, TruLens, and NeMo Guardrails miss the hardest …

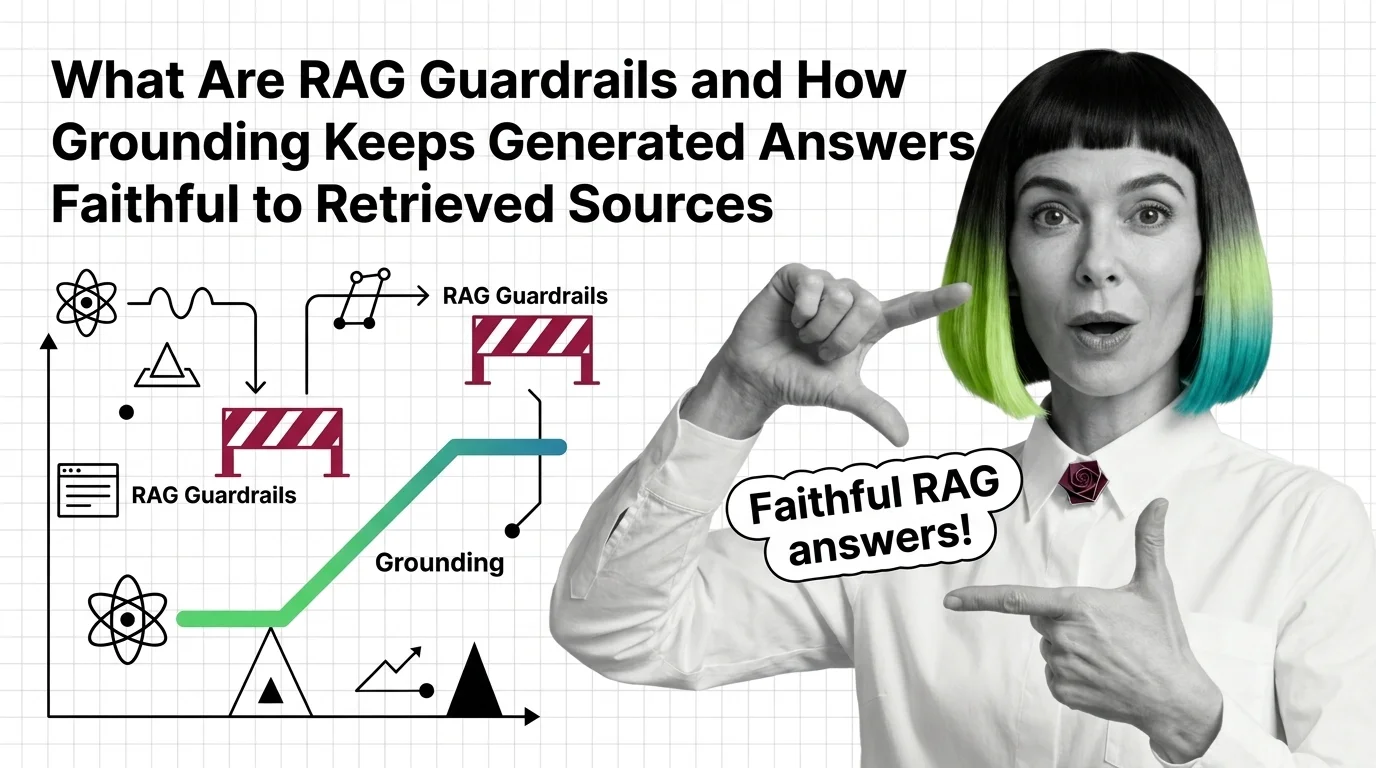

What Are RAG Guardrails and How Grounding Stops Hallucinations

RAG guardrails and grounding force generated answers to stay tied to retrieved sources. Learn how the mechanism works in …

Prerequisites for RAG Grounding: Retrieval Quality, the RAG Triad, and Faithfulness Metrics

Before you bolt guardrails onto a RAG pipeline, learn the RAG Triad — context relevance, groundedness, answer relevance …

LLM-as-Judge Bias and the Technical Limits of RAG Evaluation

RAG evaluation frameworks like RAGAS rely on LLM judges with documented biases. Why faithfulness and answer relevancy …

From TF-IDF to Learned Sparse: Prerequisites and Hard Limits of BM25, SPLADE, and ELSER

Sparse retrieval starts with BM25 and ends with ELSER and SPLADE-v3. Learn the math, the prerequisites, and where each …

From RAG to Agents: Prerequisites and Hard Limits of Agentic RAG

Agentic RAG is a stack with new failure modes, not an upgrade. Learn the prerequisites and the four physics that limit …

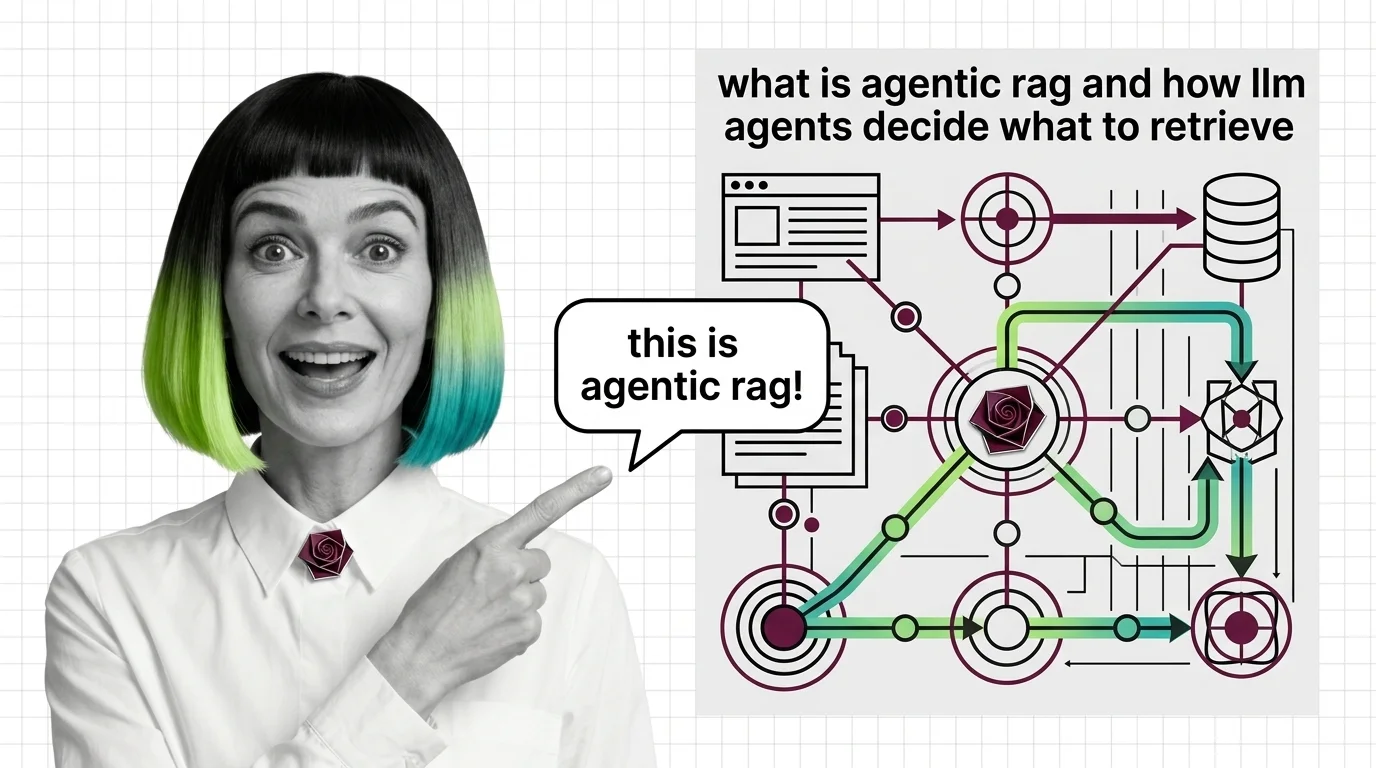

What Is Agentic RAG and How LLM Agents Decide What to Retrieve

Agentic RAG turns retrieval into a decision: an LLM agent chooses whether to retrieve, which source to query, and …

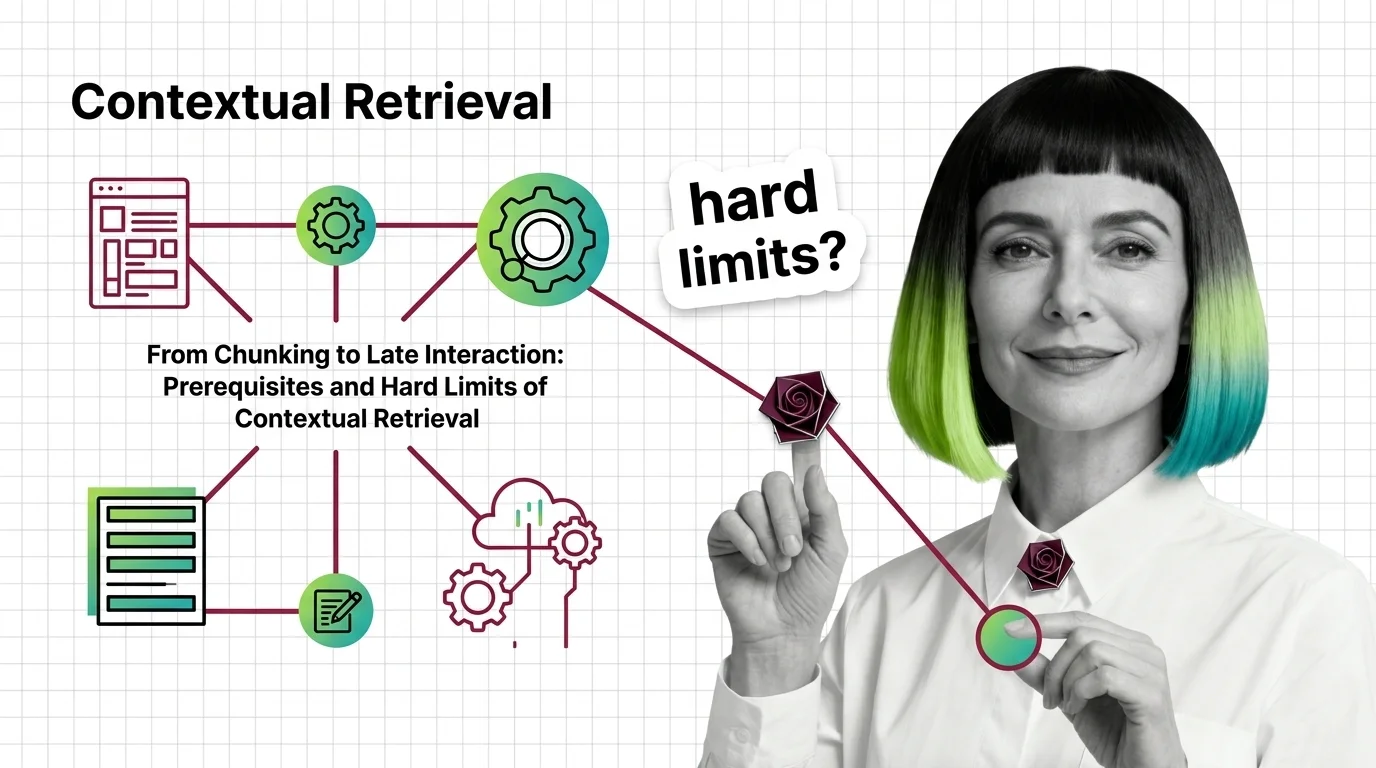

Contextual Retrieval: Prerequisites and Hard Limits at Scale

Contextual Retrieval cuts RAG failure rates, but at a cost. Learn the prerequisites — chunking, hybrid search, reranking …

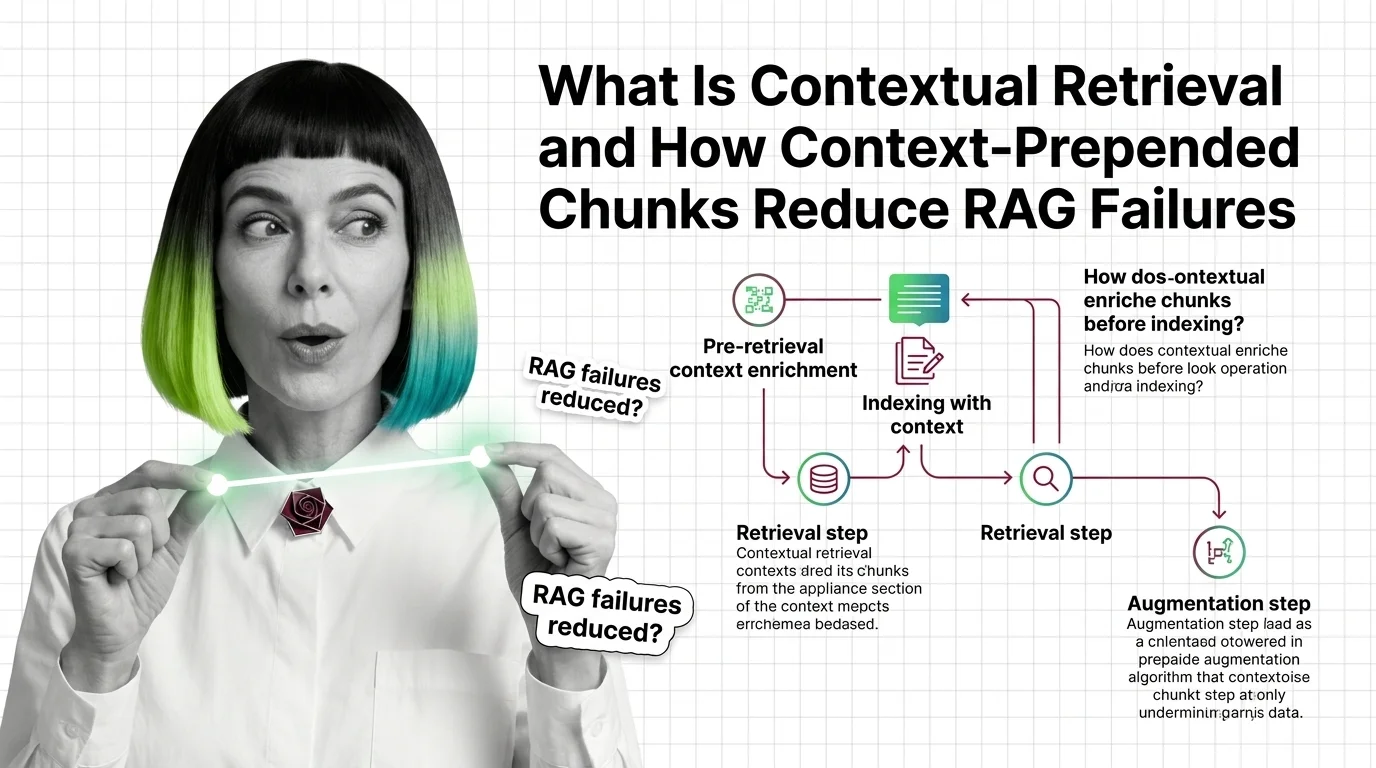

Contextual Retrieval: How Prepended Context Reduces RAG Failures

Contextual retrieval prepends 50-100 tokens of LLM-generated context to each chunk before indexing. Anthropic reports a …

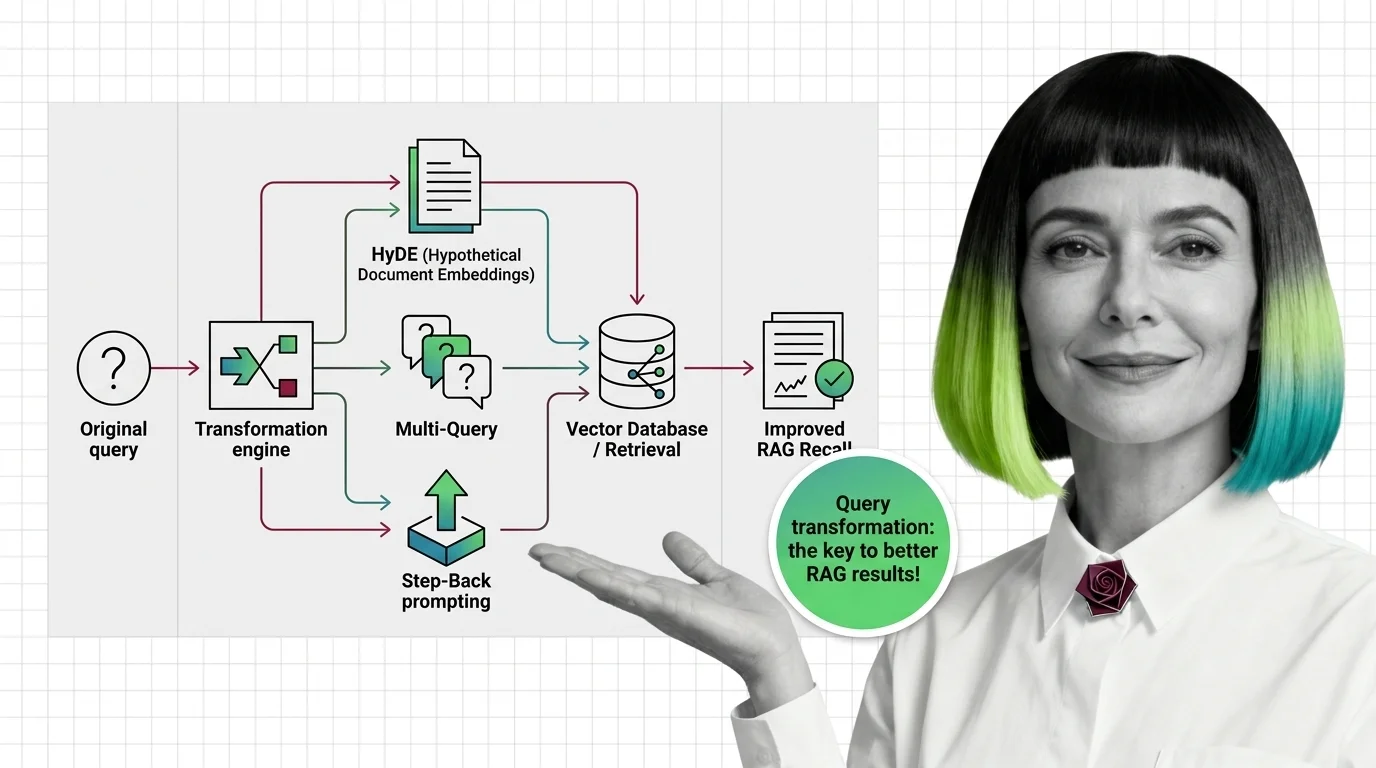

How HyDE, Multi-Query, and Step-Back Improve RAG Retrieval Recall

Query transformation rewrites user prompts before retrieval. Learn how HyDE, Multi-Query, and Step-Back Prompting close …

What Is Reranking and Why Cross-Encoders Rescore RAG Retrieval

Reranking splits recall and precision into two stages. See how cross-encoders rescore retrieved documents and why a …

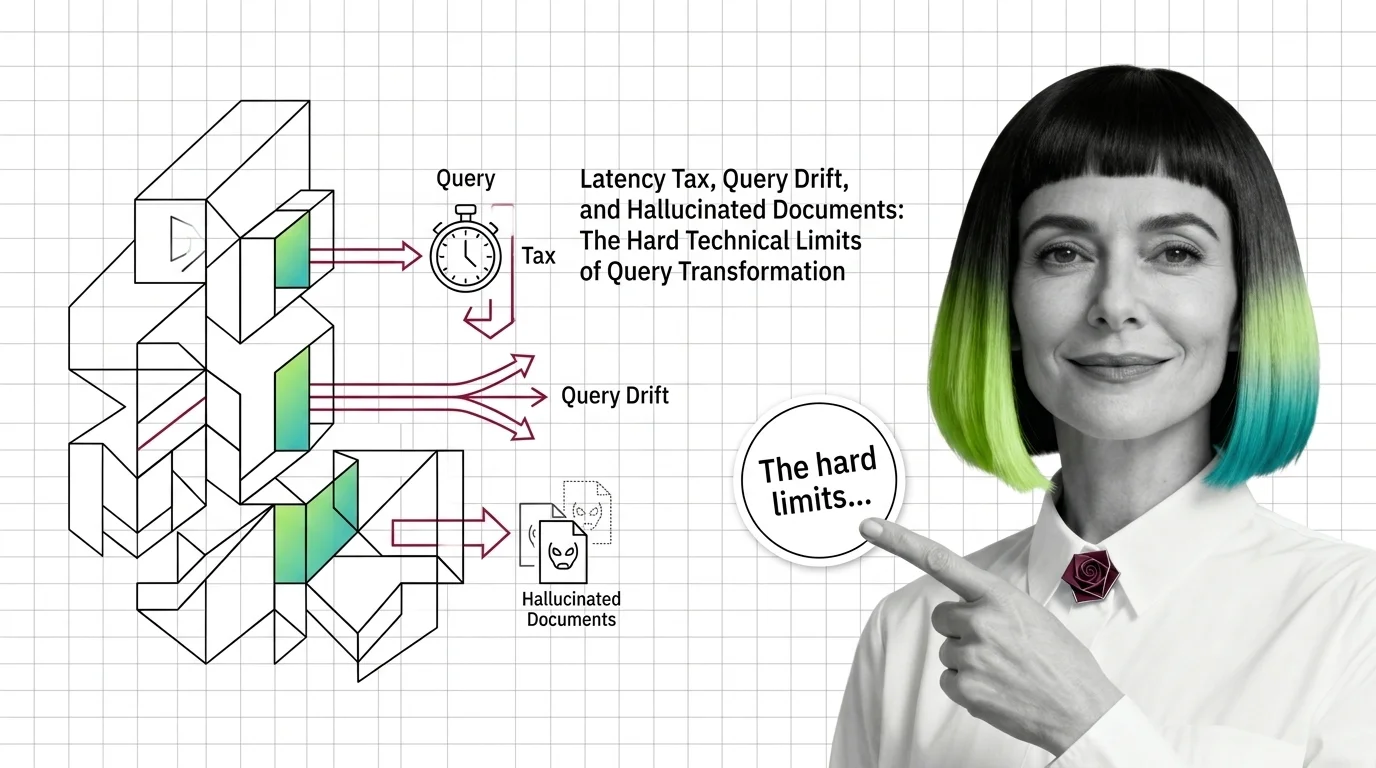

Query Transformation Limits: Latency Tax, Drift, Hallucinated Documents

Query transformation in RAG hits three hard limits: latency tax from extra LLM calls, query drift on simple inputs, and …

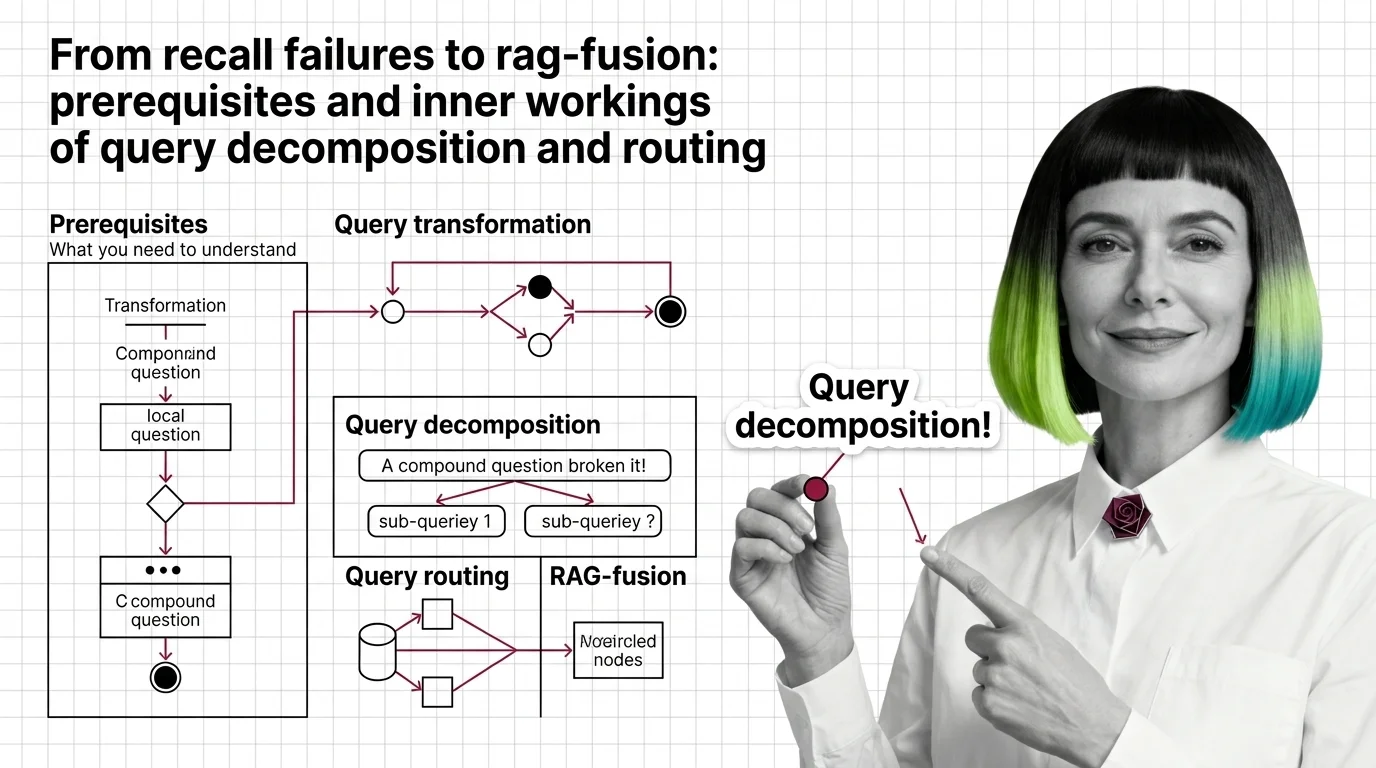

From Recall Failures to RAG-Fusion: Prerequisites and Inner Workings of Query Decomposition and Routing

Vector retrievers lose compound questions to a single point. Query decomposition, routing, and RAG-Fusion fix it by …

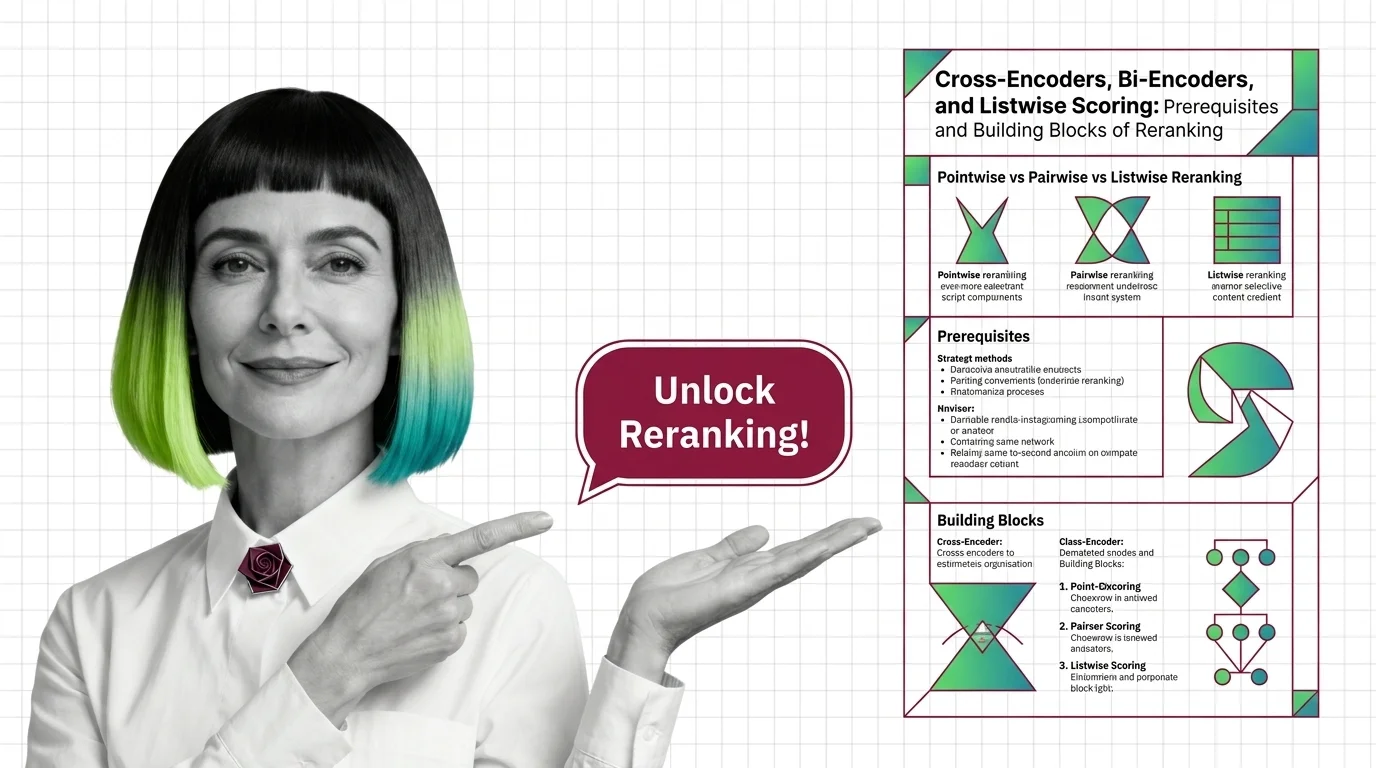

Cross-Encoders, Bi-Encoders, and Listwise Scoring in Reranking

A reranker reorders the top candidates from vector search using a heavier model. Cross-encoders, bi-encoders, and …

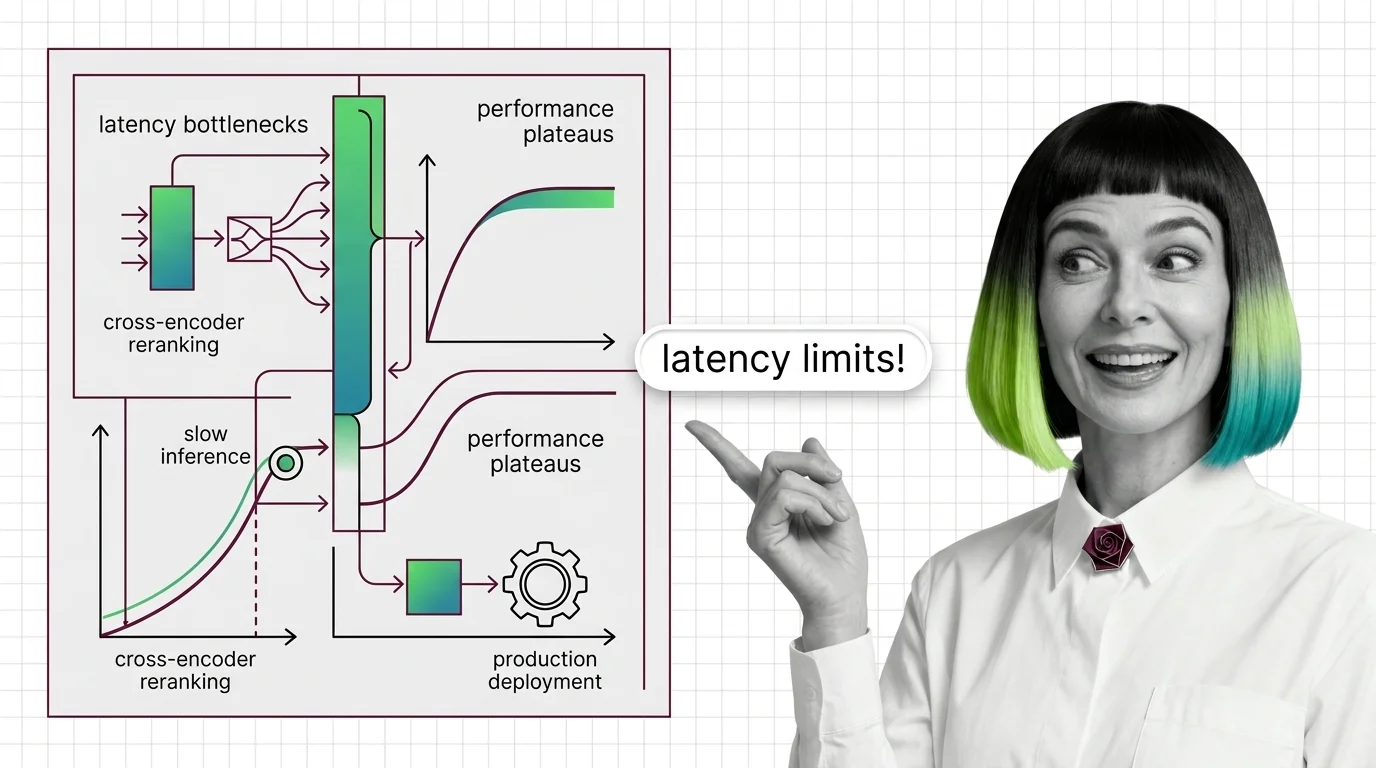

Cross-Encoder Reranker Limits: Latency Walls and Domain Drift

Cross-encoder rerankers hit two architectural walls: latency scales linearly with candidates and quadratically with …

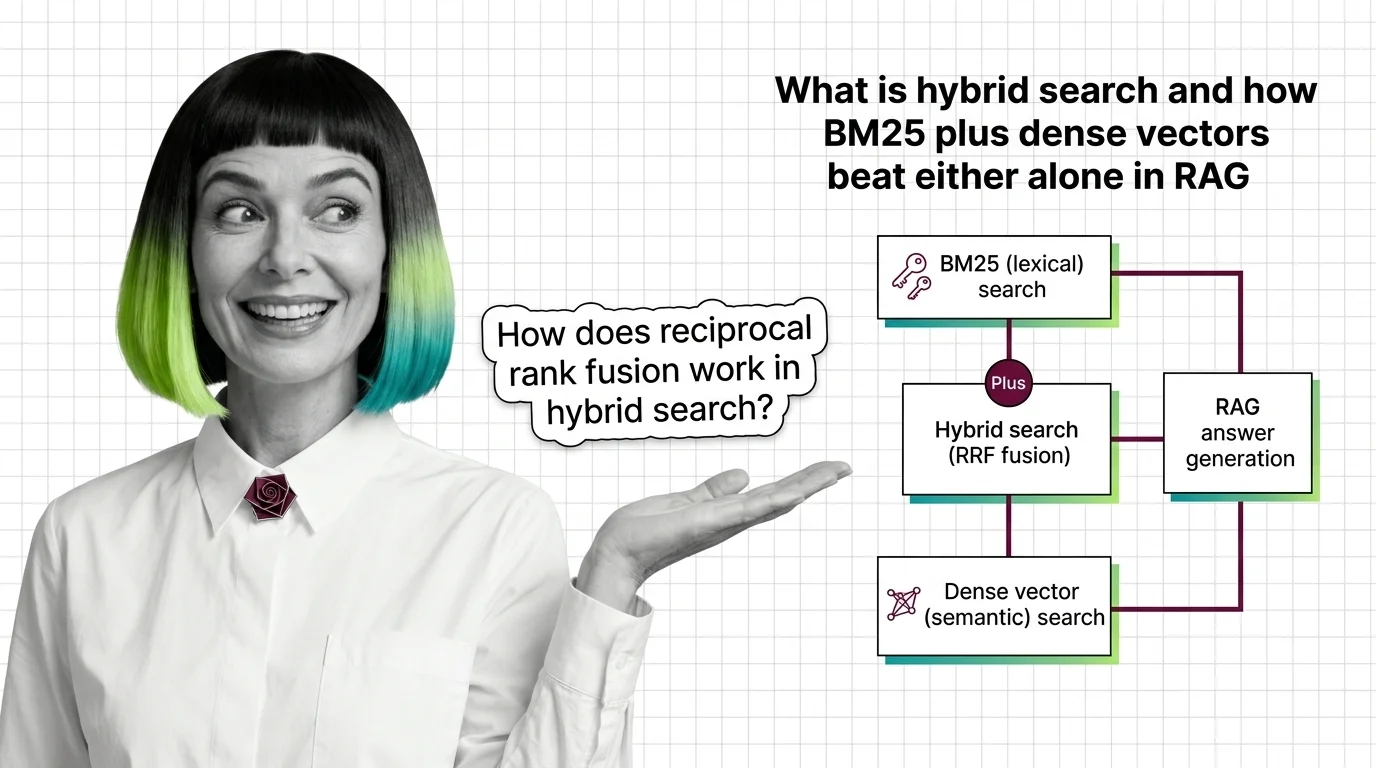

What Is Hybrid Search and How BM25 Plus Dense Vectors Beat Either Alone in RAG

Hybrid search fuses BM25 keyword retrieval with dense vector search using reciprocal rank fusion. Why two ranked lists …

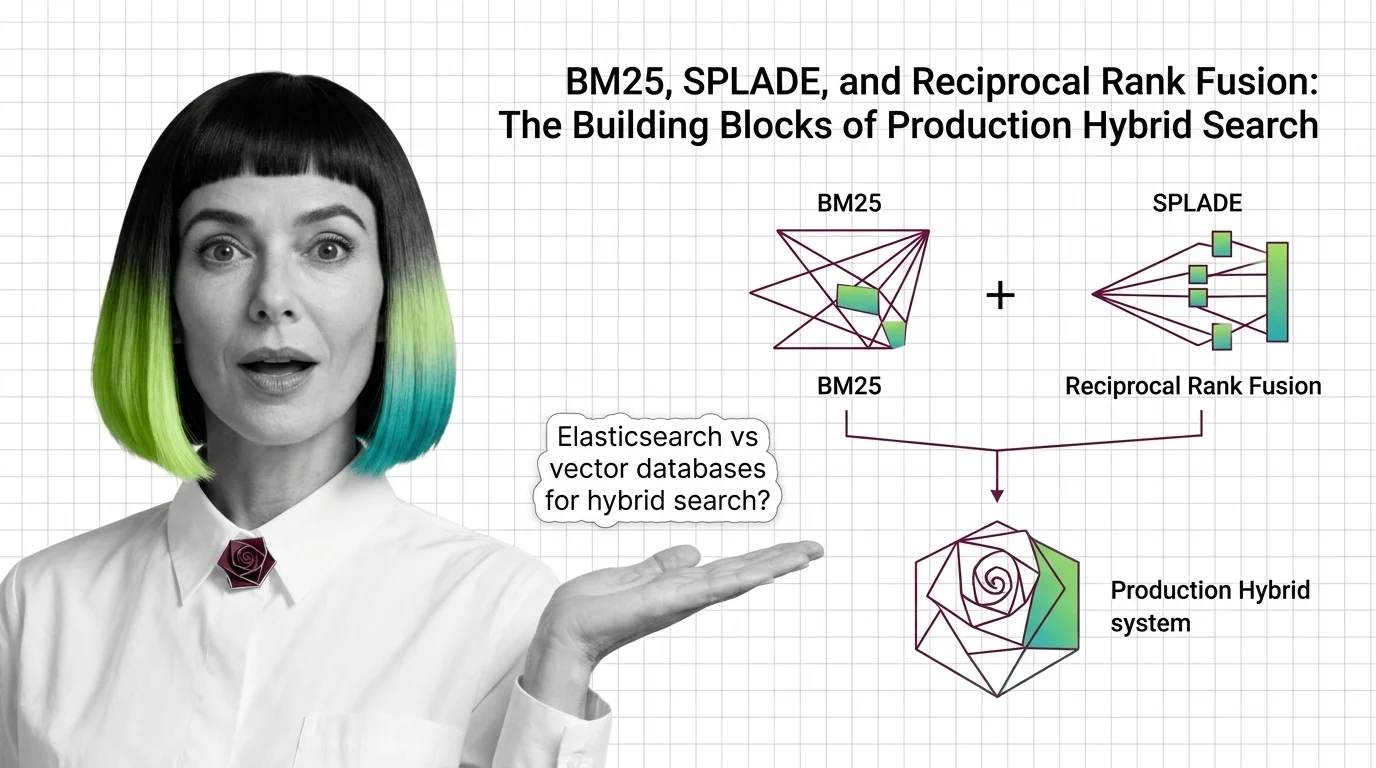

BM25, SPLADE, and Reciprocal Rank Fusion: The Building Blocks of Production Hybrid Search

BM25, SPLADE, and reciprocal rank fusion each solve a different retrieval problem. Here's how the three combine into a …

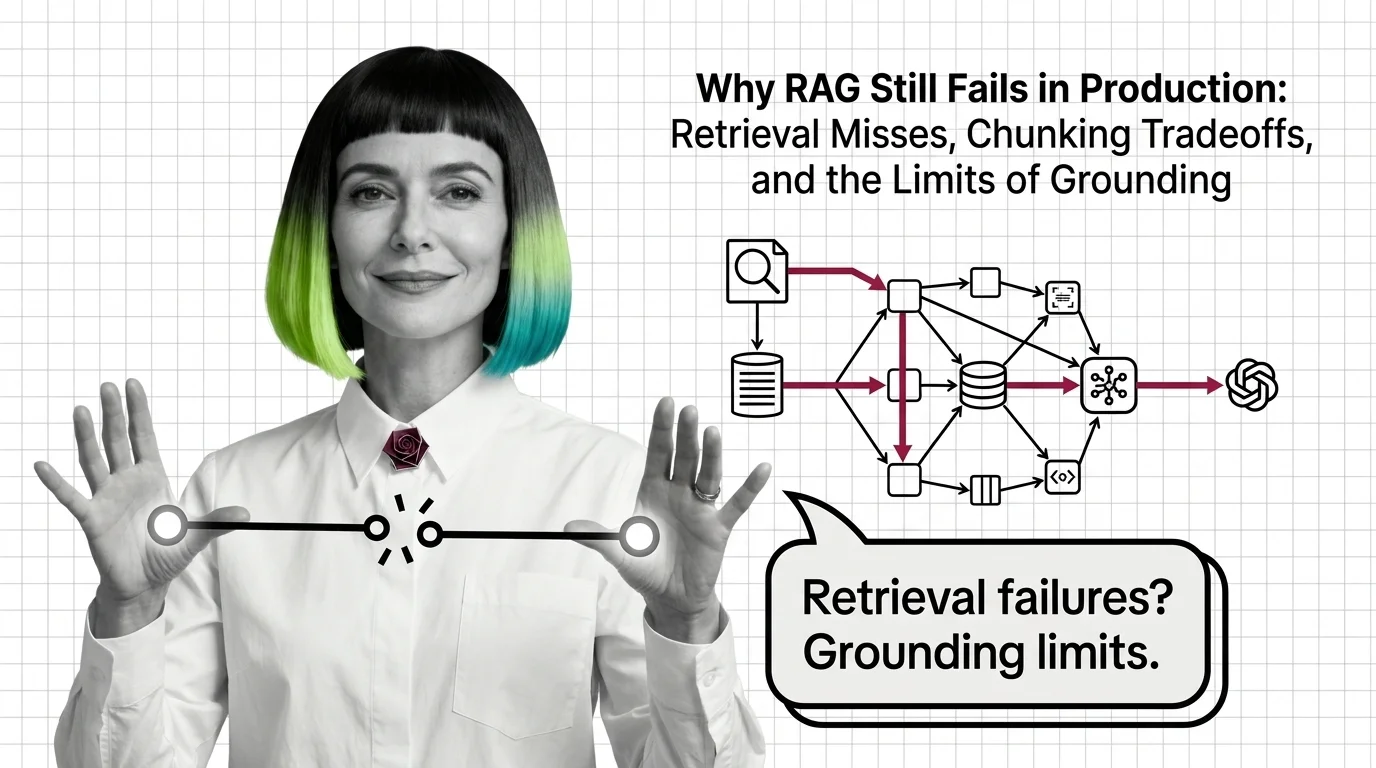

Why RAG Still Fails in Production: Retrieval, Chunking, Grounding

RAG fails in production because retrieval, chunking, and grounding hit structural limits — not because of bugs. Why …

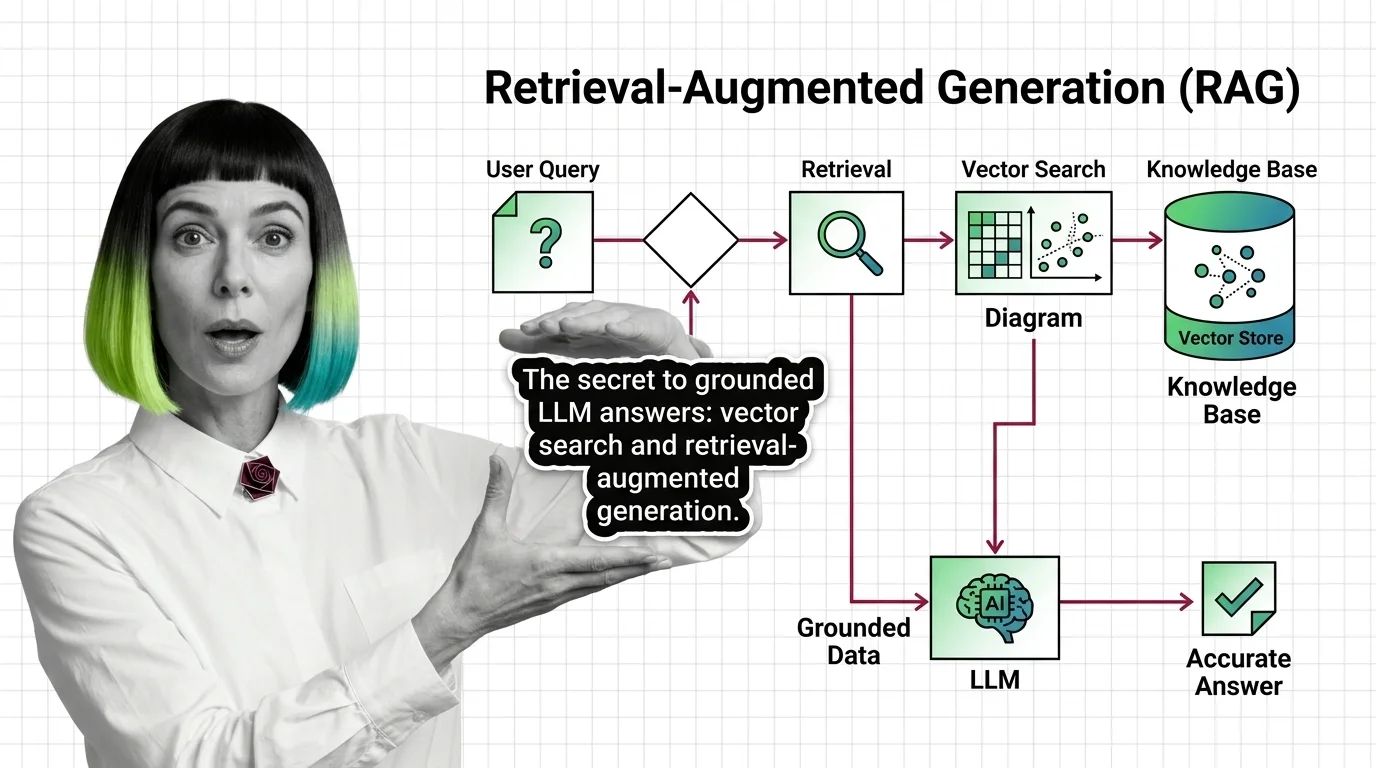

What Is RAG and How LLMs Use Vector Search to Ground Their Answers

Retrieval-augmented generation pairs an LLM with a vector index so answers are grounded in real documents — not just …

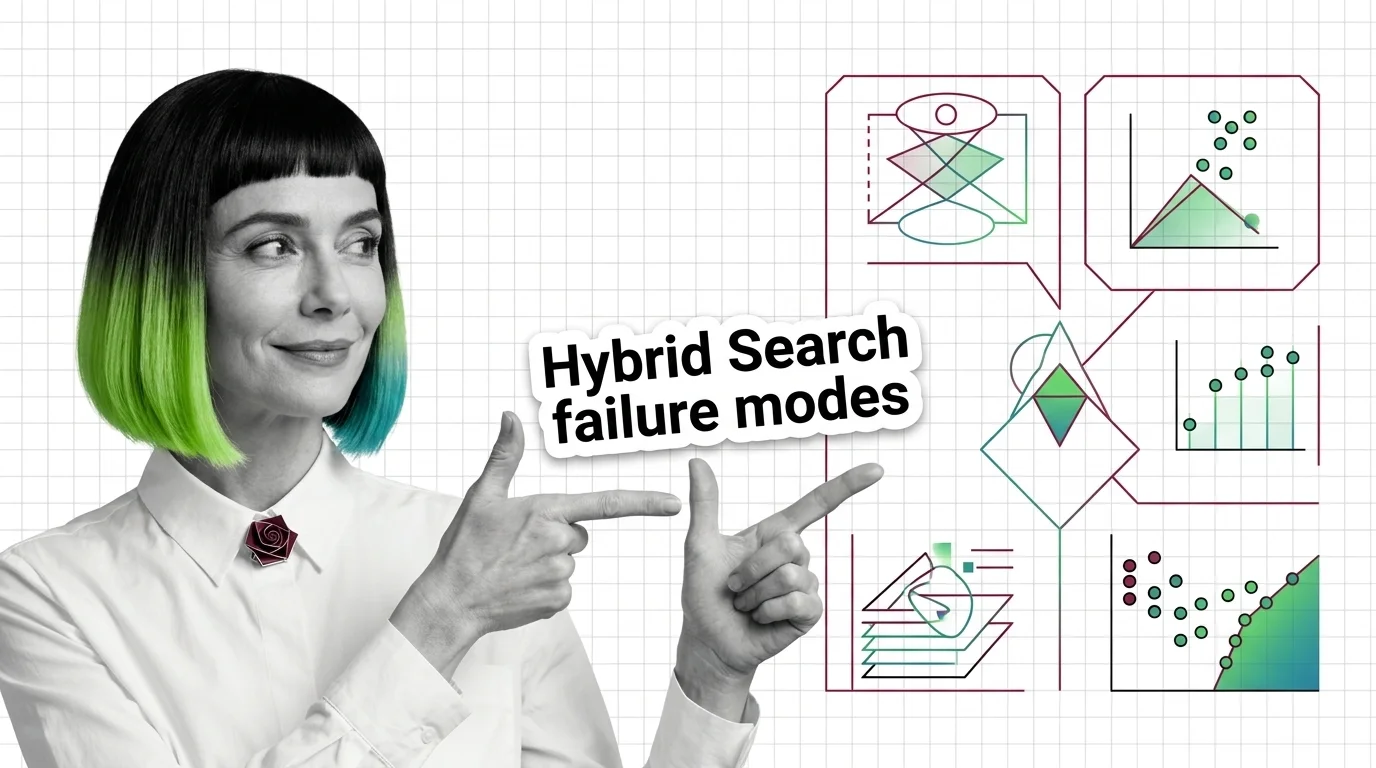

Score Mismatch, Tuning Hell: The Hard Limits of Hybrid Search Fusion

Hybrid search merges BM25 and vector results, but the fusion step has hard limits. Score mismatch, RRF blindness, and …

From Chunking to Reranking: RAG Pipeline Components and Prerequisites

Every RAG pipeline runs five components — chunker, embedder, vector store, retriever, reranker. Here is what each one …

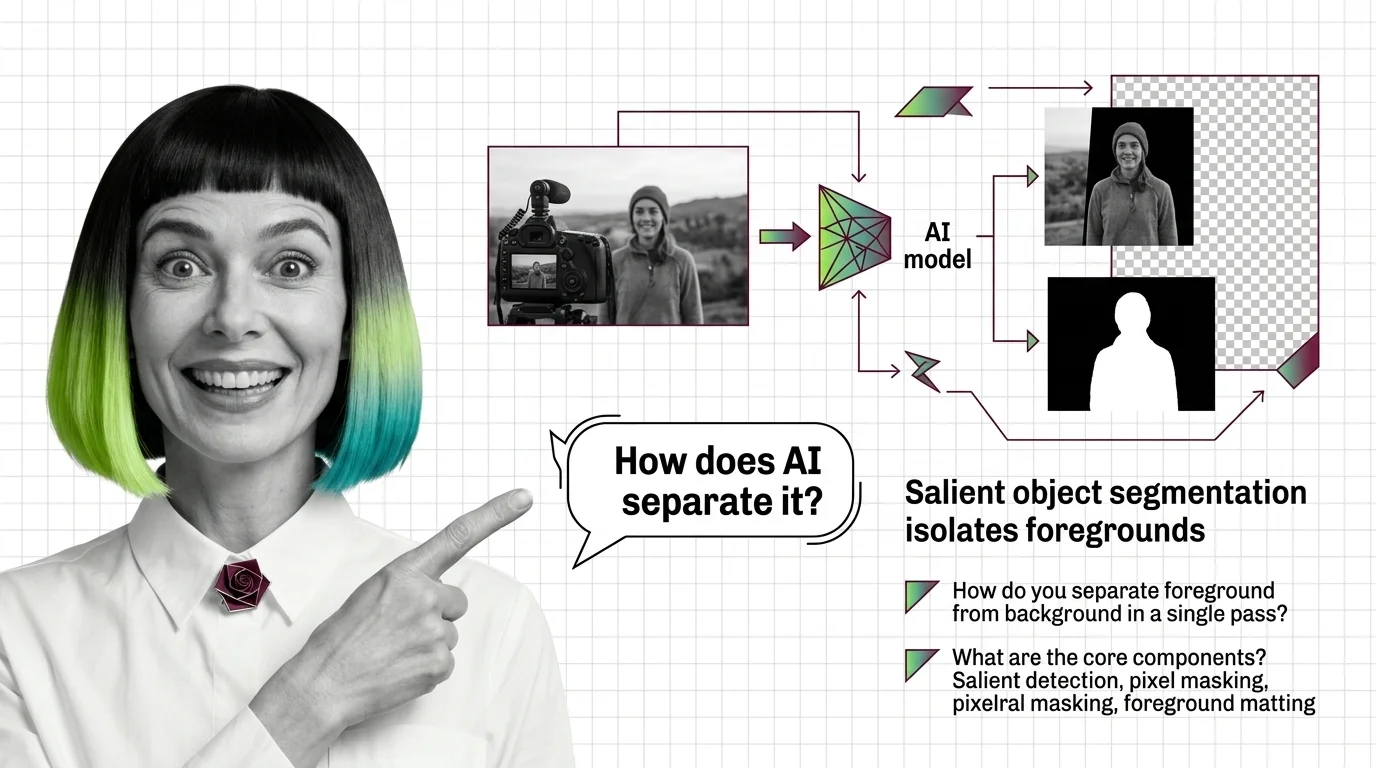

What Is AI Background Removal? How Salient Object Segmentation Works

AI background removal is not one model — it's salient object detection plus alpha matting. See how U2-Net, BiRefNet, and …

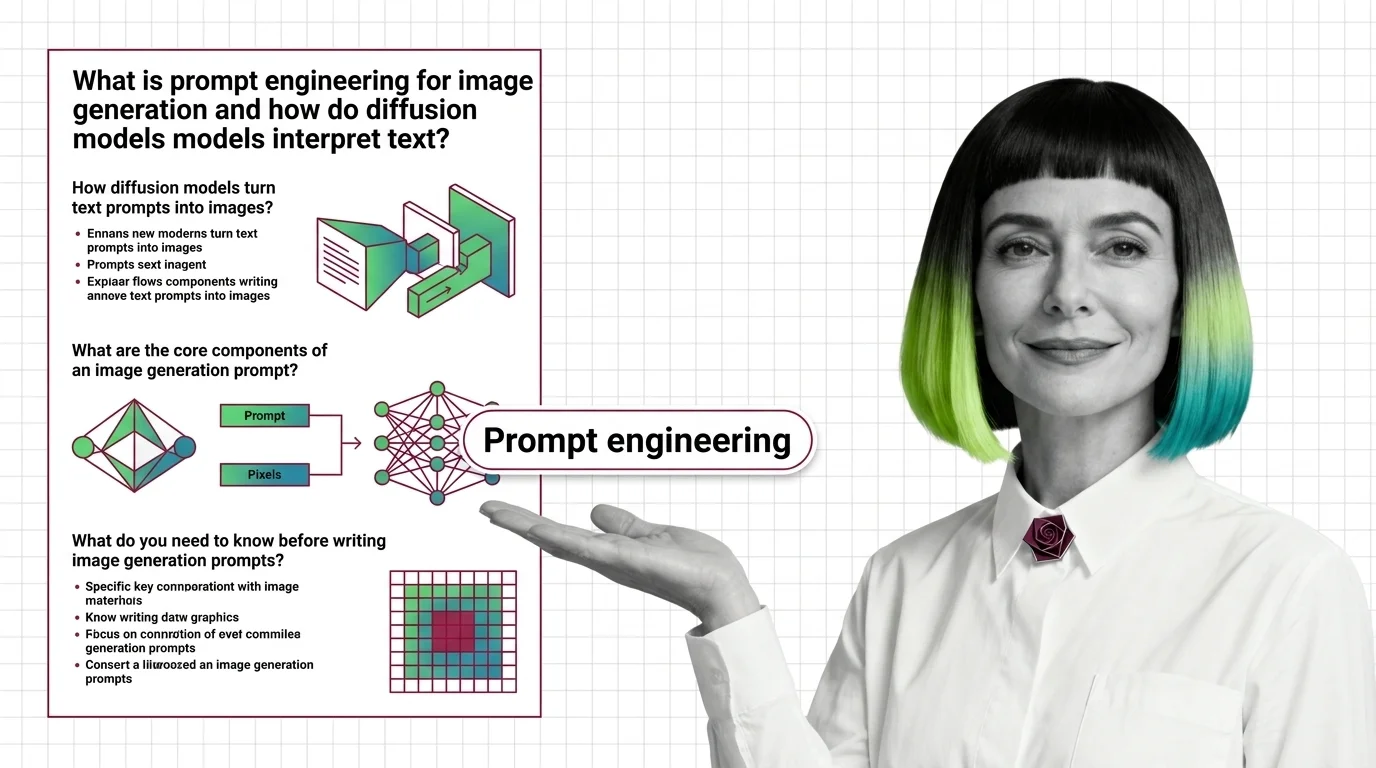

Prompt Engineering for Image Generation: How Diffusion Models Read Text

Image prompts steer probability, not pixels. Learn how diffusion models, cross-attention, and CFG turn text into images …

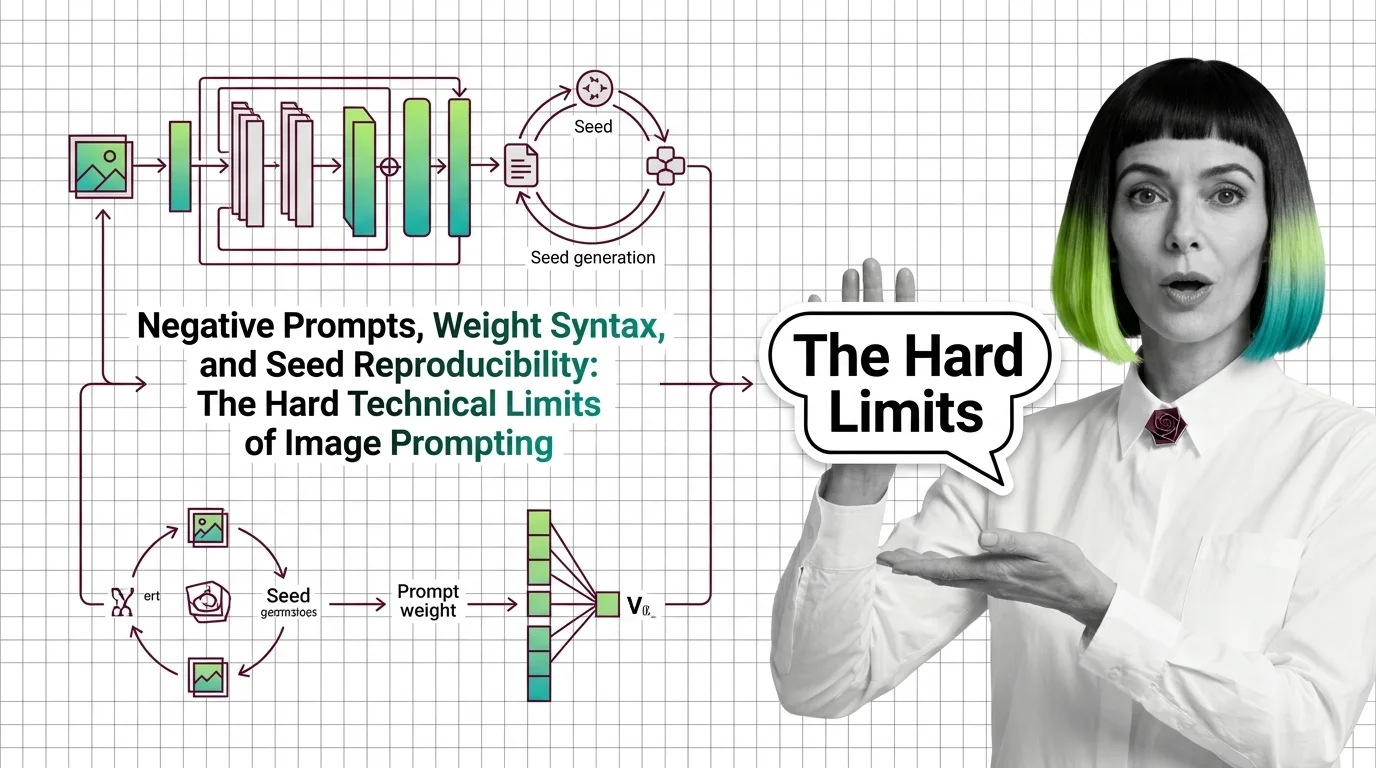

Negative Prompts, Weights, Seeds: Image Prompting Limits 2026

Negative prompts and weight syntax aren't universal — and seed reproducibility breaks across model versions. Inside the …

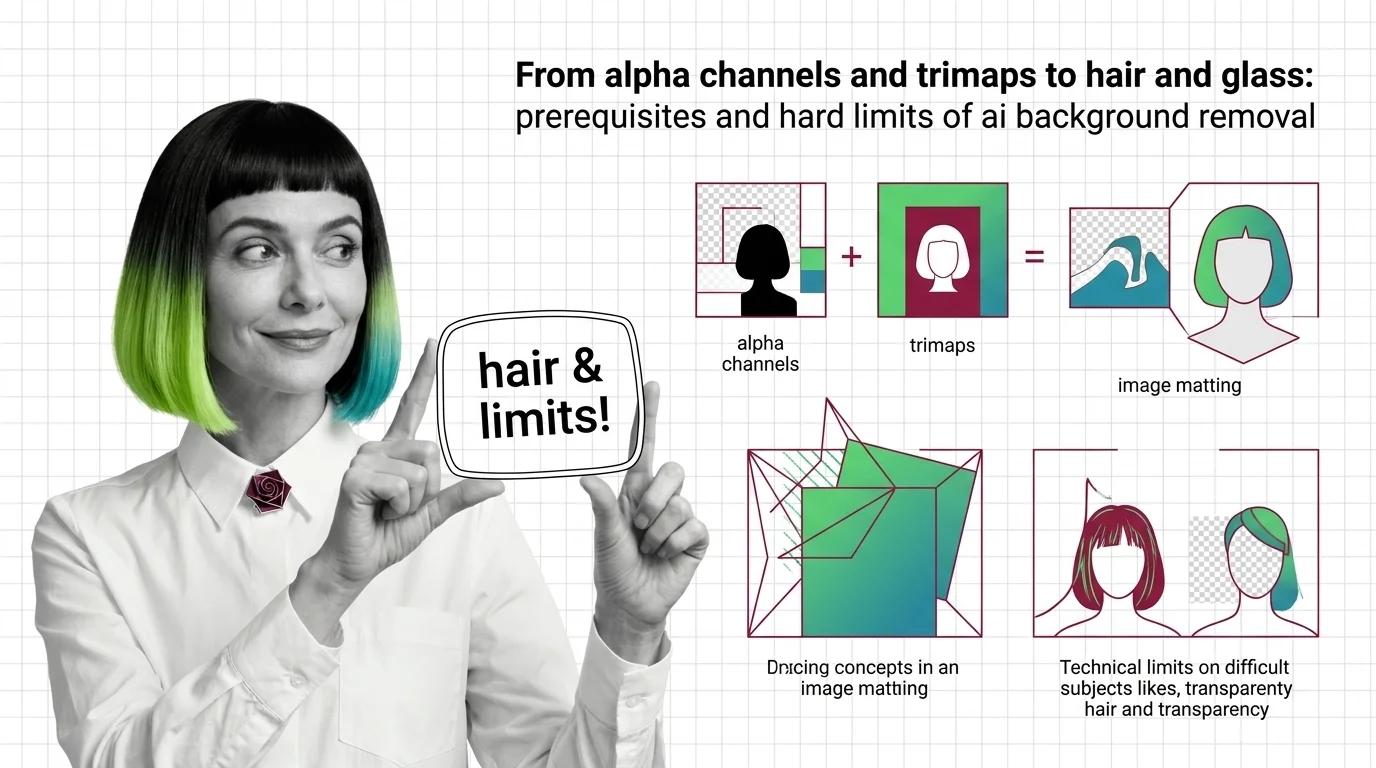

Alpha Channels, Trimaps, and the Hard Limits of AI Background Removal

Background removal is alpha estimation, not subject detection. Learn how trimaps and matting work, and why hair, glass, …

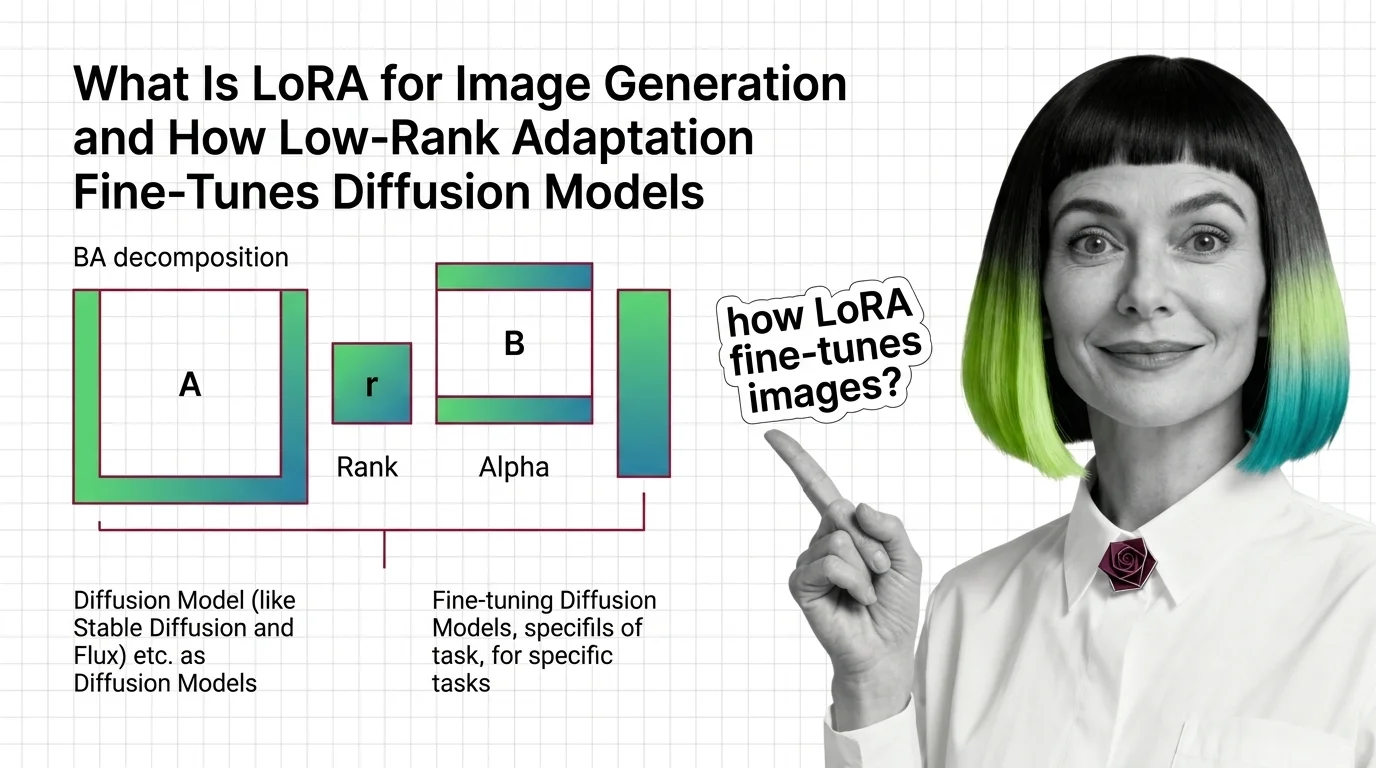

How LoRA Fine-Tunes Diffusion Models for Image Generation

LoRA fine-tunes Stable Diffusion and FLUX without retraining. Learn how rank, alpha, and the BA decomposition turn a …

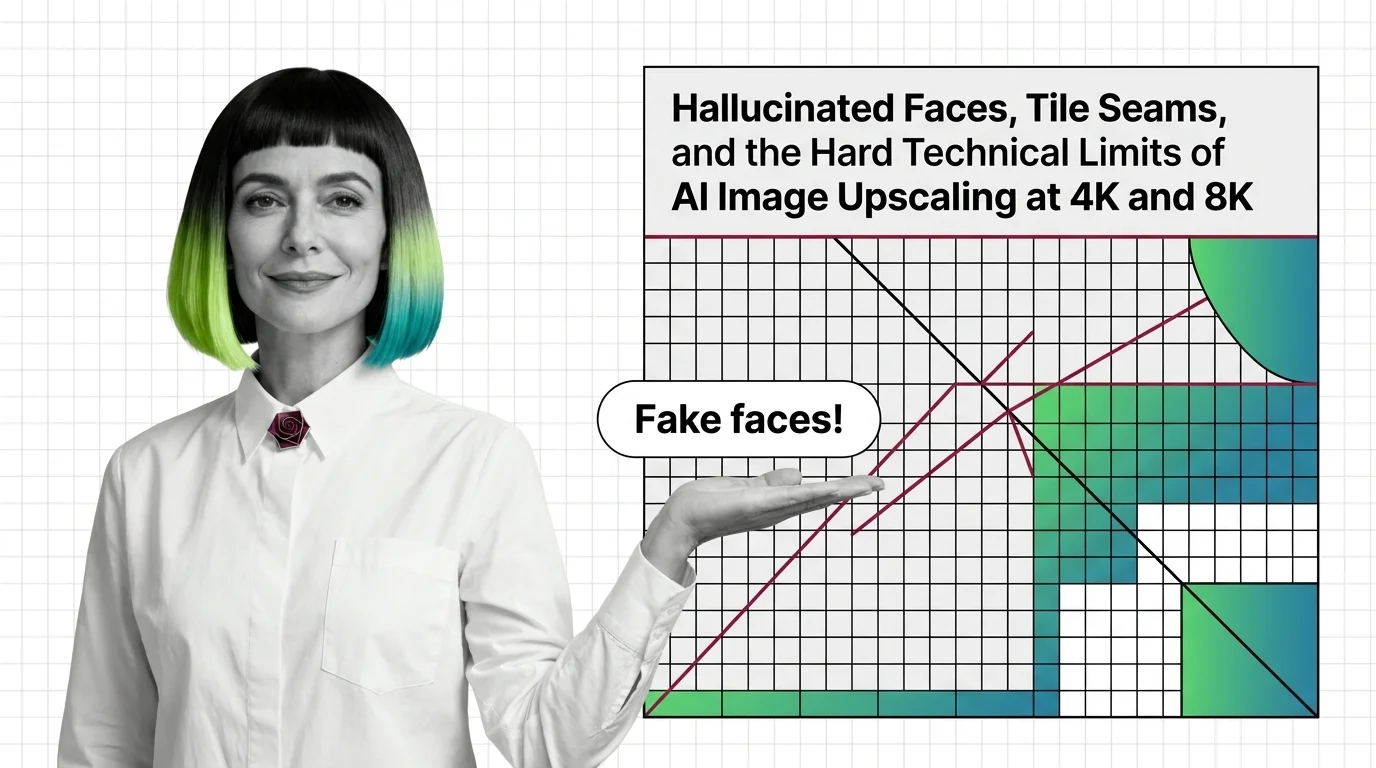

Why AI Upscalers Hallucinate Faces and Tile Seams at 4K and 8K

AI upscalers don't break at 4K and 8K because of weak hardware. The failures are structural — rooted in diffusion priors …

What Is Image Upscaling and How AI Super-Resolution Reconstructs Detail Beyond the Original Pixels

AI image upscaling doesn't enlarge what was captured — it generates plausible pixels from a learned prior. Learn how GAN …