Neural Network Architectures for Developers: What Maps and What Breaks

Your inference endpoint’s latency doubled last Tuesday. Nothing in your deployment changed — same instance, same container, same code. The only variable was input length. Your image classification service held steady at twelve milliseconds regardless. Your time-series anomaly detector crept up linearly. Your LLM-backed summarizer — the one with the clean request-response contract and the fixed-price SLA — jumped from 200ms to 800ms when a user pasted a longer document. Three services, three cost curves, and your capacity planning spreadsheet treated them all as linear.

That spreadsheet is wrong. And the reason it is wrong traces directly to an architectural property you were never briefed on: different neural network architecture families make different mathematical bets about how to process input, and those bets determine everything from inference cost to failure modes to what you can debug and what you cannot.

Three Bets Under One Abstraction

If you have been consuming ML models through API endpoints, the differences between architectures are invisible. Every model looks like the same thing: JSON in, JSON out, a latency number, and a price tag. But underneath that contract, three fundamentally different structures are doing fundamentally different work.

A Convolutional Neural Network slides small filters across spatial input — images, spectrograms, sensor grids — looking for local patterns that compose into larger ones. Edges become textures become objects. The key structural bet is locality: adjacent pixels matter more than distant ones, and the same pattern is worth detecting everywhere in the image. This is why your image classifier’s latency doesn’t change with content complexity. The filter slides the same number of times regardless.
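The fixed-cost claim can be made concrete with a minimal sketch: a single-filter 2D convolution in plain Python, assuming stride 1 and no padding. The function name `conv2d_valid` and the toy inputs are hypothetical, chosen only for illustration. The number of multiply-adds depends on the image and kernel shapes, never on the pixel values.

```python
def conv2d_valid(image, kernel):
    """Slide one kernel over a 2D image (stride 1, no padding).

    Returns the output feature map and the number of multiply-adds performed.
    """
    ih, iw = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    oh, ow = ih - kh + 1, iw - kw + 1
    out = [[0.0] * ow for _ in range(oh)]
    macs = 0
    for r in range(oh):
        for c in range(ow):
            acc = 0.0
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
                    macs += 1
            out[r][c] = acc
    return out, macs


# Two 5x5 "images" with very different content (made-up values).
flat = [[0.0] * 5 for _ in range(5)]
busy = [[float((r * 7 + c * 3) % 5) for c in range(5)] for r in range(5)]
edge_kernel = [[1.0, 0.0, -1.0] for _ in range(3)]  # simple vertical-edge filter

out_flat, macs_flat = conv2d_valid(flat, edge_kernel)
out_busy, macs_busy = conv2d_valid(busy, edge_kernel)
print(macs_flat, macs_busy)  # identical counts for both inputs
```

A real CNN stacks many such filters across many layers, but the same property holds at every layer: the work is set by tensor shapes, which is why the latency stays flat for a fixed input resolution.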

A Recurrent Neural Network processes input one element at a time, carrying a hidden state forward. Each step compresses everything the network has seen so far into a fixed-size vector. The bet is temporal: order matters, and context accumulates. This is the architecture behind streaming anomaly detection, time-series prediction, and — until recently — most sequence-to-sequence tasks.
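A minimal sketch of that recurrence, assuming a plain tanh cell with a scalar input per step. The weights `W_HH` and `W_XH` and the function names are made up for illustration; LSTMs and GRUs add gating on top of this shape. The point is that the hidden state keeps the same size however long the sequence gets, while the number of steps grows with it.

```python
import math


def rnn_step(hidden, x, w_hh, w_xh):
    """One step: h_new[i] = tanh(sum_j w_hh[i][j] * h[j] + w_xh[i] * x)."""
    return [
        math.tanh(sum(w * h for w, h in zip(row, hidden)) + w_in * x)
        for row, w_in in zip(w_hh, w_xh)
    ]


def run_rnn(sequence, w_hh, w_xh):
    """Process a sequence one element at a time, carrying the hidden state."""
    hidden = [0.0] * len(w_xh)
    steps = 0
    for x in sequence:
        hidden = rnn_step(hidden, x, w_hh, w_xh)
        steps += 1
    return hidden, steps


# Hypothetical 3-unit cell with hand-picked weights.
W_HH = [[0.5, -0.2, 0.1], [0.0, 0.3, 0.4], [-0.1, 0.2, 0.6]]
W_XH = [0.8, -0.5, 0.3]

h_short, steps_short = run_rnn([0.1] * 100, W_HH, W_XH)
h_long, steps_long = run_rnn([0.1] * 200, W_HH, W_XH)
print(len(h_short), len(h_long))  # hidden state size does not change
print(steps_short, steps_long)    # step count doubles with sequence length
```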

Transformers — the architecture underneath your LLM endpoint — make a third bet entirely. Every element in the input attends to every other element simultaneously. No locality assumption, no sequential processing. The cost of that flexibility is that computation scales with the square of input length. When your user pasted a longer document, the attention mechanism had to compute relevance scores between every pair of tokens.
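The pairwise cost can be seen in a minimal sketch of single-head scaled dot-product self-attention. As a simplifying assumption, it skips the learned query, key, and value projections and feeds the same toy vectors in as all three; `attention` and `toy_tokens` are hypothetical names. The counter tracks how many token-pair scores get computed.

```python
import math


def attention(queries, keys, values):
    """Scaled dot-product attention for one head, no masking.

    Returns the attended outputs and the number of pairwise scores computed.
    """
    d = len(keys[0])
    outputs, pair_scores = [], 0
    for q in queries:
        scores = []
        for k in keys:
            scores.append(sum(a * b for a, b in zip(q, k)) / math.sqrt(d))
            pair_scores += 1
        # Numerically stable softmax over the scores for this query.
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        outputs.append(
            [sum(w * v[i] for w, v in zip(weights, values))
             for i in range(len(values[0]))]
        )
    return outputs, pair_scores


def toy_tokens(n, d=4):
    """Made-up token vectors, just to have something to attend over."""
    return [[math.sin(t + i) for i in range(d)] for t in range(n)]


for n in (8, 16):
    x = toy_tokens(n)
    _, pairs = attention(x, x, x)
    print(n, pairs)  # 8 -> 64 scores, 16 -> 256 scores
```

Doubling the token count from 8 to 16 quadruples the score count from 64 to 256, which is the scaling behind the latency jump described above.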

Your pipeline instincts still apply here. These are layered systems with input validation, preprocessing stages, and output contracts. Batch-size tuning, request queuing, data format contracts — all of that transfers directly. The architecture families diverge on what happens inside the layers, not on how you feed them data or collect results.

That distinction matters.

Where Your Engineering Vocabulary Maps

The mapping between classical software concepts and neural network architectures is real, but it is shallower than the shared vocabulary suggests. Some instincts transfer cleanly. Others transfer the shape but not the substance.

| SW Concept | CNN Mapping | RNN Mapping | Transformer Mapping | Where It Breaks |
|---|---|---|---|---|
| Stateless request processing | Holds — each image is independent | Breaks — hidden state carries across inputs | Holds per request, but context window acts like session | RNN “state” is a compressed vector, not serializable session data |
| Input validation contracts | Exact shape required (height, width, channels) | Sequence length and feature dimension matter | Token count and format matter | Shape mismatch produces silent wrong answers, not errors |
| Preprocessing pipeline | Normalization, resizing, augmentation | Windowing, padding, feature scaling | Tokenization, truncation, padding | Each architecture needs its own preprocessing — swapping backbones without updating preprocessing is the most common silent failure |
| Cost scales with request volume | Correct — fixed compute per image | Partially — compute also scales with sequence length | Breaks — compute scales quadratically with input length | Capacity planning based on request count alone underestimates transformer costs |

The data contract concept — specifying what goes in, what comes out, and what shape failures take — transfers perfectly. If you already think in interface contracts and pipeline stages, you are not starting from zero. You are applying those instincts to a system where the failure modes are less visible.
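One way to make such a contract explicit is to validate shape and value range at the service boundary, before the model sees the input. The sketch below assumes a nested-list image in height x width x channels layout with values normalized to [0, 1]; `ImageContract` and `validate_image` are hypothetical names, not part of any library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImageContract:
    """Hypothetical input contract for an image model."""
    height: int
    width: int
    channels: int
    min_value: float = 0.0
    max_value: float = 1.0


def validate_image(image, contract):
    """Raise ValueError if a nested-list image (H x W x C) violates the contract."""
    if len(image) != contract.height:
        raise ValueError(f"expected height {contract.height}, got {len(image)}")
    for row in image:
        if len(row) != contract.width:
            raise ValueError(f"expected width {contract.width}, got {len(row)}")
        for pixel in row:
            if len(pixel) != contract.channels:
                raise ValueError(
                    f"expected {contract.channels} channels, got {len(pixel)}"
                )
            for v in pixel:
                if not contract.min_value <= v <= contract.max_value:
                    raise ValueError(
                        f"value {v} outside [{contract.min_value}, "
                        f"{contract.max_value}]; was normalization skipped?"
                    )


contract = ImageContract(height=2, width=2, channels=3)
normalized = [[[0.5, 0.5, 0.5] for _ in range(2)] for _ in range(2)]
raw_bytes = [[[128, 64, 255] for _ in range(2)] for _ in range(2)]  # right shape

validate_image(normalized, contract)  # passes silently
try:
    validate_image(raw_bytes, contract)
except ValueError as err:
    print("rejected:", err)
```

The second input has the correct shape, so a shape-only check would let it through and the model would return a confident answer on data it was never trained to read. The range check turns that silent failure into a loud one.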

What does not transfer is the assumption that intermediate state is inspectable. In your service architecture, you can log the output of each pipeline stage, set breakpoints between components, and trace a failure to a specific module. In a neural network, the “intermediate state” between layers is a high-dimensional tensor — a 512-element vector in an RNN, a stack of feature maps in a CNN — with no human-readable meaning.

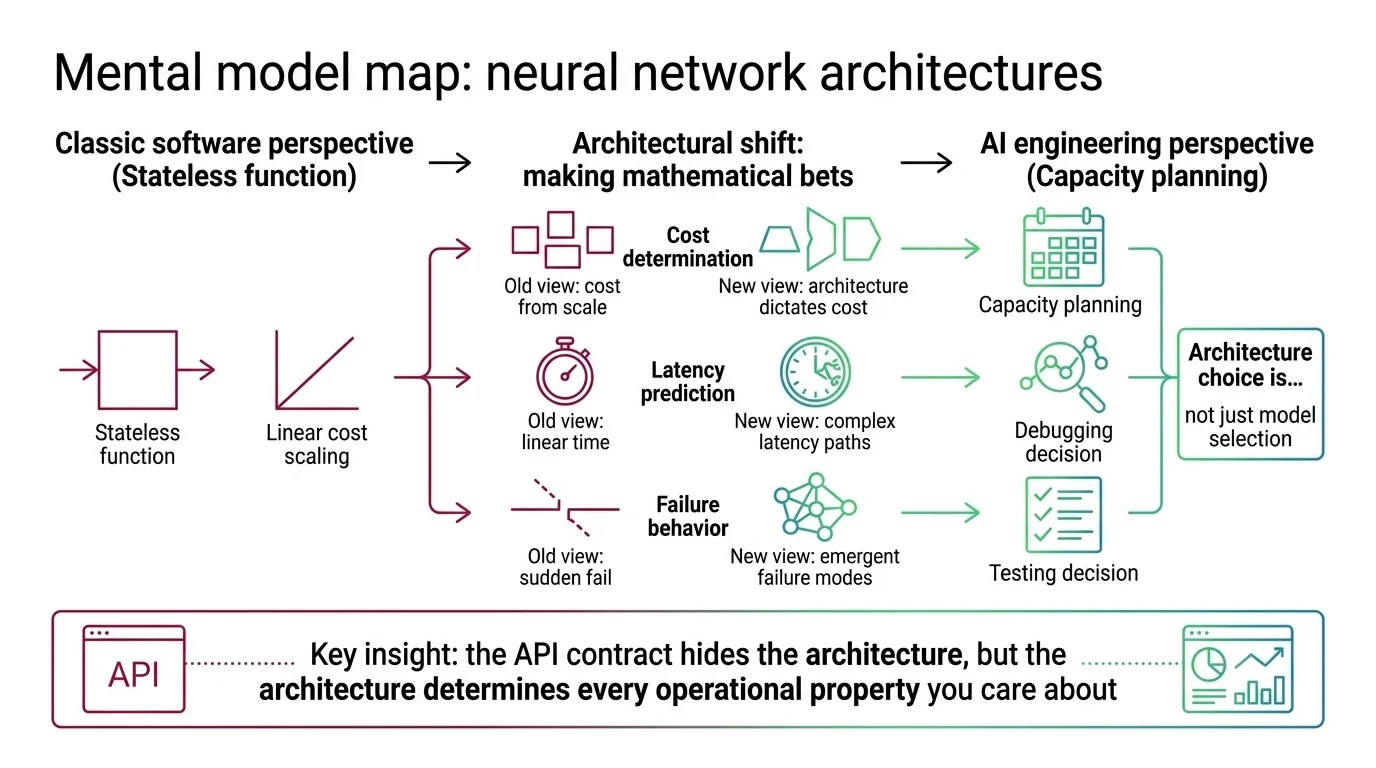

Mental Model Map: Neural Network Architectures

From: All ML models are stateless functions with linear cost scaling

Shift: Different architectures make different mathematical bets that determine cost, latency, and failure behavior

To: Architecture choice is a capacity planning, debugging, and testing decision, not just a model selection decision

Key insight: The API contract hides the architecture, but the architecture determines every operational property you care about

Why Your Cost Model Breaks at Scale

Which inference cost model breaks the ‘compute scales linearly’ assumption?

If you have been capacity-planning ML services the way you plan database or API workloads — requests per second times average latency — one of these architectures will surprise you.

CNN inference cost is fixed per input. A 224x224 image takes the same compute whether it contains a cat or a medical scan. Your load balancer and autoscaler work exactly as expected. This is why CNNs remain dominant for real-time vision tasks, edge deployment, and anywhere latency budgets are tight. MONA’s explainer on how CNNs extract visual features covers the mechanism underneath — what matters here is that your request-count-based pricing model holds.

RNN inference cost is linear with sequence length. Each additional time step adds one pass through the recurrent cell. If your anomaly detector processes 100-step sequences, doubling the window doubles inference time. Predictable, plannable, but dependent on a parameter — sequence length — that your standard monitoring dashboards may not track. MONA’s explainer on RNN hidden states covers why that linear cost comes with a memory constraint that sets an upper bound on what the model can remember.

Transformer inference cost grows quadratically with input length. The attention mechanism computes a relevance score for every pair of tokens, so doubling the input roughly quadruples the attention compute. This is the property that made your summarizer’s latency jump, and it is one that most API pricing pages fold into their per-token rates without explaining why.

The practical consequence: if your SLA guarantees latency in milliseconds, and the endpoint runs a transformer, that guarantee is only valid for a specific input length range. Your contract needs a token-count ceiling, or your first long-context request rewrites the economics.
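A back-of-the-envelope version of that ceiling, as a sketch. It assumes latency follows a pure power law in token count (exponent 2 for attention-dominated transformers, 1 for RNNs, 0 for fixed-size CNN inputs) and ignores fixed overhead, batching, and caching, so treat the result as a first estimate to check against measurements. The function names and the 1,000-token reference point are hypothetical; the 200 ms and 800 ms figures echo the summarizer example from the introduction.

```python
import math


def projected_latency_ms(ref_latency_ms, ref_tokens, tokens, exponent):
    """Scale a measured latency to a new input length.

    Assumes latency is proportional to tokens ** exponent.
    """
    return ref_latency_ms * (tokens / ref_tokens) ** exponent


def token_ceiling(ref_latency_ms, ref_tokens, sla_ms, exponent=2.0):
    """Largest token count whose projected latency stays within the SLA."""
    return math.floor(ref_tokens * (sla_ms / ref_latency_ms) ** (1.0 / exponent))


# Assumed measurement: 200 ms at 1,000 tokens.
doubled = projected_latency_ms(200, 1000, 2000, exponent=2)
print(doubled)  # 800.0 under the quadratic assumption

# Token ceiling for a 500 ms SLA under the same assumption.
ceiling = token_ceiling(200, 1000, sla_ms=500)
print(ceiling)  # 1581

# The same doubling under a linear (RNN-style) cost curve.
print(projected_latency_ms(200, 1000, 2000, exponent=1))  # 400.0
```

Enforcing the ceiling at the request boundary (reject, truncate, or route long inputs to a different tier) keeps the latency guarantee honest.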

The Debugging Dead End Inside the Layer

Why does ‘same input always produces same output’ break during neural network training?

During inference with fixed weights and no sampling step, a neural network is deterministic: same input, same output. Your integration tests work. (An LLM endpoint that samples tokens at a nonzero temperature adds randomness on top of that by design.) But during training, stochastic elements are everywhere: random weight initialization, random minibatch sampling, dropout layers that randomly zero activations. Run the same training code on the same data twice, and you get a different model. This means your CI pipeline cannot enforce “training produces identical results.” Reproducibility requires pinning random seeds, fixing hardware, and accepting that even then, floating-point non-determinism on GPUs may produce divergent outcomes.
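The effect of seed pinning can be shown with a toy stand-in for training: fitting a single weight by stochastic gradient descent using only the standard library. The function `train_toy_model` and its hyperparameters are hypothetical. A real framework has more sources of randomness (its own RNGs, data loader workers, GPU kernels), so a pinned Python seed alone would not be enough there.

```python
import random


def train_toy_model(seed=None, epochs=50, lr=0.05):
    """Fit y = w * x by SGD with random init and random minibatch sampling."""
    rng = random.Random(seed)  # seed=None pulls entropy from the OS
    data = [(x / 10.0, 2.0 * x / 10.0) for x in range(1, 11)]
    w = rng.uniform(-1.0, 1.0)       # random weight initialization
    for _ in range(epochs):
        batch = rng.sample(data, 4)  # random minibatch
        grad = sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)
        w -= lr * grad
    return w


# Unseeded runs: same code, same data, different resulting weight.
print(train_toy_model(), train_toy_model())

# Seeded runs: identical results on the same machine and Python build.
seeded_a = train_toy_model(seed=42)
seeded_b = train_toy_model(seed=42)
print(seeded_a == seeded_b)
```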

The deeper shift is what “testing” means when the system under test is learned rather than written. You did not author the decision boundary. You trained it. The model’s behavior is a statistical consequence of data, architecture, and optimization — not a logical consequence of instructions you wrote. Your test strategy shifts from “does the code do what I specified” to “does the model behave acceptably across a distribution of inputs.”
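In practice that shift often looks like threshold checks over labeled evaluation slices rather than exact-output assertions. The sketch below uses a trivial threshold classifier as a stand-in for a trained model; `toy_predict`, the slice names, and the 0.9 accuracy floor are all assumptions for illustration.

```python
def accuracy(predict, labeled_examples):
    """Fraction of (input, label) pairs the model gets right."""
    correct = sum(1 for x, label in labeled_examples if predict(x) == label)
    return correct / len(labeled_examples)


def check_model_behavior(predict, eval_slices, min_accuracy):
    """Return {slice_name: accuracy} for every slice below the threshold."""
    failures = {}
    for name, examples in eval_slices.items():
        acc = accuracy(predict, examples)
        if acc < min_accuracy:
            failures[name] = acc
    return failures


def toy_predict(x):
    """Stand-in for a trained model: a fixed decision boundary at 0.5."""
    return 1 if x >= 0.5 else 0


# Hypothetical evaluation slices: (input, expected label) pairs.
eval_slices = {
    "typical": [(0.1, 0), (0.9, 1), (0.7, 1), (0.2, 0)],
    "near_boundary": [(0.49, 1), (0.51, 1), (0.5, 1), (0.48, 0)],
}

failures = check_model_behavior(toy_predict, eval_slices, min_accuracy=0.9)
print(failures)  # {'near_boundary': 0.75}
```

Slicing the evaluation set matters because an aggregate score can look healthy while one region of the input distribution fails, as the near-boundary slice does here.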

Where does the ‘inspect intermediate state’ debugging instinct fail?

When your microservice returns wrong results, you trace the request through each service, inspect the intermediate payloads, and find the module that transformed the data incorrectly. When a neural network produces wrong output, the “intermediate state” is a 512-dimensional feature map or a hidden vector with no human-readable labels. There is no module with a testable responsibility and an inspectable interface.

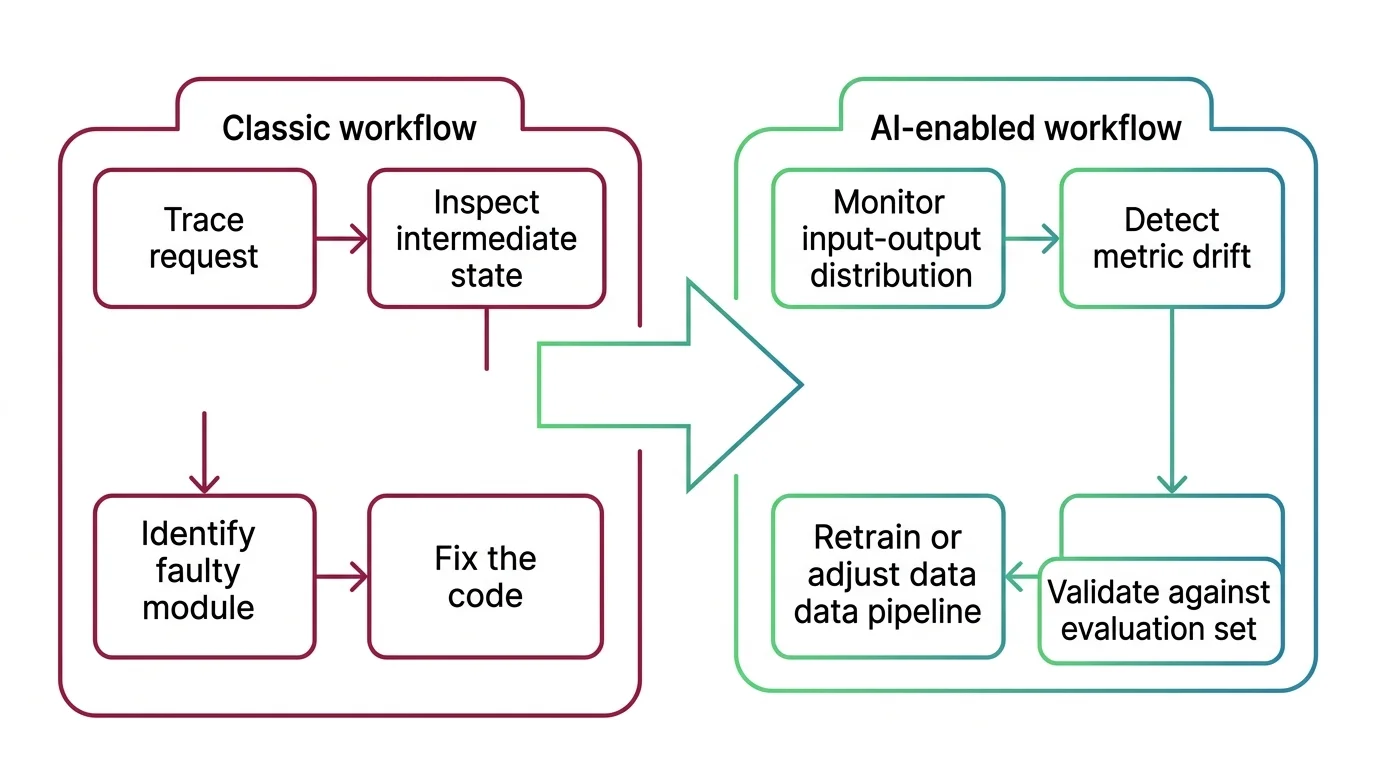

This is not a tooling gap waiting to be filled. It is a structural property of how these systems encode knowledge — as distributed patterns across millions of weights, not as discrete rules in identifiable components. ALAN’s essay on neural network opacity traces the accountability implications. For debugging purposes, what matters is the practical shift: your investigation moves from component inspection to behavioral observation. You monitor input-output distributions, track metric drift over time, and compare against held-out evaluation sets.
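One common way to watch an output distribution is the population stability index (PSI), which compares a current histogram of model scores against a baseline histogram. The sketch below is a minimal stdlib version; the bin edges, the synthetic score lists, and the 0.25 alert threshold are assumptions to tune per service, and values outside the bin edges are simply not counted in any bin.

```python
import math


def histogram(values, edges):
    """Fraction of values in each bin [edges[i], edges[i+1]); last bin is closed."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                counts[i] += 1
                break
    return [c / len(values) for c in counts]


def population_stability_index(baseline, current, edges, eps=1e-6):
    """PSI = sum over bins of (q - p) * ln(q / p), with empty bins clamped to eps."""
    p = [max(x, eps) for x in histogram(baseline, edges)]
    q = [max(x, eps) for x in histogram(current, edges)]
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


EDGES = [0.0, 0.25, 0.5, 0.75, 1.0]
DRIFT_THRESHOLD = 0.25  # assumed alert threshold

# Synthetic model scores: baseline spread evenly, current shifted upward.
baseline_scores = [i / 100 for i in range(100)]
current_scores = [0.5 + i / 200 for i in range(100)]

psi_same = population_stability_index(baseline_scores, baseline_scores, EDGES)
psi_shifted = population_stability_index(baseline_scores, current_scores, EDGES)
print(psi_same)                       # 0.0 for identical distributions
print(psi_shifted > DRIFT_THRESHOLD)  # True: the shift trips the alert
```

A check like this says nothing about why the outputs moved. It only flags that they did, which is the trigger for inspecting the input data and rerunning the held-out evaluation.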

Shift Diagram: Debugging neural network failures

Classic: Trace request → Inspect intermediate state → Identify faulty module → Fix the code

AI: Monitor input-output distribution → Detect metric drift → Retrain or adjust data pipeline → Validate against evaluation set

The Architecture Zoo Is Smaller Than It Looks

A common misconception among developers encountering this space for the first time is that transformers replaced everything else. They did not. They dominate language tasks and are expanding into vision, but specific engineering constraints keep older architectures in production.

CNNs remain the default for real-time vision (image classification, object detection, medical imaging) wherever spatial locality is a strong prior and latency budgets are tight. ConvNeXt demonstrated that much of the transformer’s apparent advantage in vision was training methodology, not architecture. Hybrid CNN-transformer models are increasingly common in production deployments such as autonomous vehicles and medical devices. MONA’s explainer on CNN evolution from LeNet to ConvNeXt traces that convergence.

RNNs, specifically LSTMs and GRUs, hold niches where transformers are overkill: edge devices with constrained memory, streaming time-series where you cannot buffer a full context window, and low-data settings where a simpler architecture generalizes better. The recurrent revival is real: xLSTM and minLSTM report quality competitive with transformers on the benchmarks their authors tested, at linear inference cost. DAN’s analysis of the recurrent revival covers the production implications.

Generative Adversarial Network architectures hold where latency is the binding constraint: real-time super-resolution, AR pipelines, and specialized generation tasks where a single-pass generator beats iterative diffusion. Variational Autoencoder architectures power the compression layer inside latent diffusion models and show up in anomaly detection and drug discovery. Graph Neural Network architectures handle data that is neither a grid nor a sequence (molecular structures, social networks, fraud detection on transaction graphs), where the topology itself is the signal.

The practical consequence for your architecture decisions: the right question is not “which architecture is best” but “which structural bet matches my data, my latency budget, and my deployment constraints.” The answer varies by service, not by era.

The Updated Mental Model

Neural network architectures are not interchangeable black boxes behind an API. They are structural bets with distinct cost profiles, failure modes, and debugging surfaces — and the architecture your service runs on determines which of your engineering instincts you can trust. Start with MONA’s explainer on how neural networks learn for the shared training mechanism, then follow the architecture that matches your production workload into its deep articles.

FAQ

Q: Do I need to understand neural network internals to use ML APIs in production? A: Not the math — but you need the operational model. Architecture determines cost scaling, failure modes, and debugging strategy. Treating all endpoints as identical black boxes breaks your capacity planning and incident response.

Q: Which neural network architecture should I choose for a new ML service in 2026? A: Match the structural bet to your constraints. CNNs for spatial data with tight latency. RNNs for streaming or edge-constrained sequences. Transformers for flexible language and multimodal tasks where compute budget allows quadratic scaling.

Q: Are CNNs and RNNs still relevant now that transformers dominate? A: Yes. CNNs dominate real-time vision and medical imaging. RNNs hold edge deployment and streaming niches. Hybrid architectures combining recurrent and attention layers are an active production trend. The architecture that fits your deployment constraint matters more than the architecture that leads benchmarks.

AI-assisted content, human-reviewed. Images AI-generated.