AI Image Stacks for Developers: What Maps and What Breaks


Your /generate endpoint shipped Tuesday. Snapshot tests pinned to seed 42, perceptual-hash assertions tolerant within a few bits. Green for six weeks. Wednesday morning, fifty failed builds — identical prompt, identical seed, the picture is just different. OpenAI rotated system_fingerprint overnight. Your hosted Diffusers pipeline picked up a new attention processor in the latest container. The assertions that were supposed to catch drift were calibrated for one provider, on one runtime, on one Tuesday.

The pipeline did not break. The contract did. And nothing in your old debugging playbook tells you which of those moving parts to look at first.

The Pipeline That Shipped Without a Spec

Image generation entered your stack through one of four doors. A user-facing feature wrapped a diffusion-model API. A marketing automation flow grew an “auto-create variants” step. A Shopify-style asset pipeline added AI background removal to clean catalog photos. A creative tool grew AI image-editing hooks behind a quick-edit button. Maybe all four.

In every case the integration surface looks familiar. You POST a JSON body, you get back a URL or a base64 blob, you ship it. You already know how to wrap that in a service. You already know how to retry, log, queue, and meter. The instincts that built your payment integration are doing real work here.

The trouble is that “send a request, get a response” hides at least three different image jobs behind one HTTP shape. Generation samples a new image from a prompt. Editing samples a new image conditioned on an old one and an instruction. Upscaling samples additional detail from a learned prior. Each one looks like an API call. Each one is, underneath, an iterative sampling process running across a billion-plus parameters with knobs you did not configure.

The good news: API gateway instincts transfer cleanly. The thing you are building is a router with per-backend contracts and a fallback ladder. MAX’s image editing pipeline guide treats it exactly that way: “which model edits this image” is a routing decision, not an architecture commitment. The bad news: every backend has a different contract about what counts as the same job. And that is where the weeks disappear.

Same Prompt, Same Seed, Different Picture

You expect a hash function. Same input bytes, same output bytes, every time. That instinct will quietly destroy your test suite.

Why does the same prompt with the same seed produce different images across model versions, runtimes, and even attention processors?

A diffusion or flow-matching model is not running a hash function. It is running an iterative sampler over a high-dimensional vector field, and the trajectory through that field depends on a stack of things you did not pin: the model weights, the scheduler implementation, the attention processor, the GPU’s RNG, the output resolution, and — for managed APIs — an opaque server-side fingerprint that rotates without a version bump.

Hugging Face’s Diffusers documentation says it bluntly: you can limit randomness, but reproducibility is not guaranteed across releases, GPUs, or CUDA versions, even with an identical seed. OpenAI documents seed as best-effort and tells you to consult system_fingerprint to know when results may have drifted. Midjourney documents seeded reruns as “99% identical,” not pixel-identical. FLUX.2 supports seeds. GPT Image 2 exposes only quality, size, format, compression, and n, with no seed or negative_prompt. Nano Banana Pro does not expose a seed parameter at all and asks you to anchor output with reference images instead.

| Provider | Seed semantics | What “same job” actually means |
|---|---|---|
| OpenAI GPT Image 2 | Best-effort, gated by system_fingerprint | Same fingerprint, same params, same prompt |
| Midjourney V7 / V8.1 | Seeded reruns ~99% identical | Visually similar, not byte-identical |
| FLUX.2 (BFL API) | Seed supported on the latent flow-matching transformer | Same model variant, same provider build |
| Diffusers self-host | torch.Generator + enable_full_determinism() | CPU-RNG generator, pinned attention processor, fixed CUDA |
| Nano Banana Pro | No seed parameter | Reference-image conditioning instead |
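
Because the “same job” definition differs per provider, it helps to record every pinned input next to each snapshot so a failing test can say which moving part changed. A minimal sketch, assuming you can read these values from your runtime or response metadata: `RunContract`, `contract_key`, and `drift` are hypothetical names, and the fields simply mirror the stack of inputs listed earlier in this section rather than any provider’s API.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, fields
from typing import List, Optional


@dataclass(frozen=True)
class RunContract:
    """Inputs a sampled image depends on (illustrative field names)."""
    provider: str
    model_version: str
    scheduler: str
    attention_processor: str
    resolution: str
    seed: Optional[int]                # None where the provider exposes no seed
    system_fingerprint: Optional[str]  # None where the provider reports none


def contract_key(contract: RunContract) -> str:
    """Stable short hash of the contract, usable as a snapshot directory key."""
    payload = json.dumps(asdict(contract), sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:16]


def drift(baseline: RunContract, current: RunContract) -> List[str]:
    """Names of the fields that differ between the baseline run and this one."""
    return [
        f.name
        for f in fields(RunContract)
        if getattr(baseline, f.name) != getattr(current, f.name)
    ]


if __name__ == "__main__":
    tuesday = RunContract("diffusers-selfhost", "sdxl-base-1.0",
                          "EulerDiscreteScheduler", "AttnProcessor2_0",
                          "1024x1024", 42, None)
    # "NewAttnProcessor" is a hypothetical stand-in for whatever the container swapped in.
    wednesday = RunContract("diffusers-selfhost", "sdxl-base-1.0",
                            "EulerDiscreteScheduler", "NewAttnProcessor",
                            "1024x1024", 42, None)
    print(drift(tuesday, wednesday))  # ['attention_processor']
```

Keying snapshots by `contract_key` means a rotated fingerprint or a new attention processor produces a new baseline to review instead of fifty red builds, and `drift` names the part that moved.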

Why are upscalers, background removers, and instruction-based editors all sampling steps rather than deterministic image transforms?

Because the model has no concept of “the original image.” It has a learned prior — built from millions of unrelated images — and your input acts as a soft constraint that pulls the sample toward something plausible. An upscaler is not adding the missing pixels. It is hallucinating pixels that are statistically consistent with what survived. A background remover is not detecting your subject. It is solving an alpha-estimation problem on the compositing equation. An instruction-based editor is conditioning on two inputs — image plus instruction — and sampling a fresh image that happens to preserve the parts you did not mention.
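
For reference, the compositing equation is the standard matting model: each observed pixel I is a blend of a foreground color F and a background color B, weighted by an opacity α.

```latex
I = \alpha F + (1 - \alpha)\, B, \qquad 0 \le \alpha \le 1
```

For an RGB pixel that is three equations in seven unknowns (three channels each for F and B, plus α), so many different mattes fit the same photo. The model’s learned prior, not the equation, picks which one you get.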

That changes what your tests should assert. Equality is the wrong contract. Your CI pipeline needs perceptual similarity, embedding distance, or task-specific assertions — not a byte-equal snapshot. MAX’s reproducible prompt testing guide walks through Promptfoo, seed planes, and assertion suites built for this. Your debugger will not help here.
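
As one concrete instance of a perceptual assertion, here is a minimal average-hash (aHash) check in plain Python. It is a sketch that assumes each image has already been reduced to an 8x8 grayscale grid (with Pillow, `convert("L")` followed by `resize((8, 8))` produces one); the function names and the 6-bit tolerance are illustrative, not values taken from any of the guides mentioned here.

```python
from typing import List

Grid = List[List[int]]  # 8x8 grayscale thumbnail, values 0-255


def average_hash(gray: Grid) -> int:
    """64-bit average hash: one bit per pixel, set when brighter than the mean."""
    pixels = [p for row in gray for p in row]
    if len(pixels) != 64:
        raise ValueError("expected an 8x8 grayscale thumbnail")
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def assert_perceptually_close(baseline: Grid, candidate: Grid, max_bits: int = 6) -> None:
    """Fail only when the images drift apart visually, not when bytes change."""
    distance = hamming(average_hash(baseline), average_hash(candidate))
    if distance > max_bits:
        raise AssertionError(f"perceptual drift of {distance} bits exceeds {max_bits}")


if __name__ == "__main__":
    ramp = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
    brighter = [[v + 1 for v in row] for row in ramp]  # every byte differs
    assert_perceptually_close(ramp, brighter)           # passes: hash distance is 0
```

aHash is coarse; embedding distance catches subtler drift, but the shape of the assertion (a distance under a declared threshold) stays the same.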

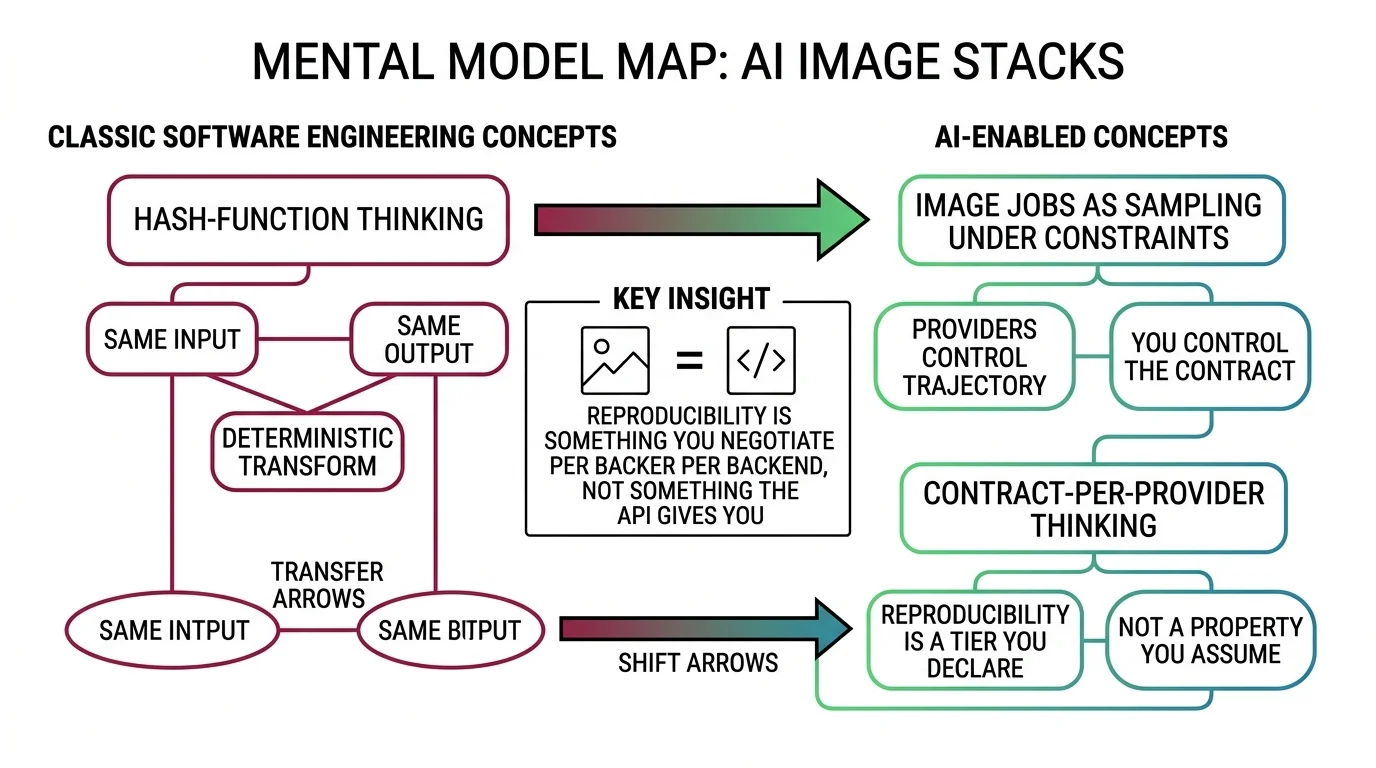

Mental Model Map: AI Image Stacks

From: Hash-function thinking (same input, same output, deterministic transform)

Shift: Image jobs are sampling under constraints; providers control the trajectory, you control the contract

To: Contract-per-provider thinking; reproducibility is a tier you declare, not a property you assume

Key insight: Reproducibility is something you negotiate per backend, not something the API gives you

The Prompt-as-Query-String Trap

The most expensive misconception in this domain is that a prompt is a string. It is not. It is a configuration tuple.

Why does the “prompt is a query string” analogy collapse the moment you switch from Midjourney to Stable Diffusion to FLUX to GPT Image?

Each model parses the prompt with a different text encoder. SD 1.5 used CLIP. SDXL stacked CLIP-L with OpenCLIP-G. SD 3.5 added a T5-XXL of roughly 4.7 billion parameters. FLUX.2 swapped both encoders for Mistral Small 3.2, a vision-language model of roughly 24 billion parameters. GPT Image 2 is autoregressive and reads paragraphs. Nano Banana Pro is the same Gemini family that runs your text app — a multimodal LLM that wants conversation.

That divergence is not cosmetic. CLIP-based pipelines treat prompts as tagged keyword bags and respond to weight syntax like (masterpiece:1.3) and [bad_hands]. T5 and Mistral encoders parse natural-language sentences with grammar, so weight syntax reaches them as literal punctuation. Midjourney V7 expects parameter flags like --sref, --sw, and --ar. GPT Image 2 explicitly rejects every shorthand the previous generation taught you. Negative prompts work on Stable Diffusion. FLUX.2 documentation states plainly that it does not support negative prompts and tells you to describe what you want, not what you don’t.

This is where the “translation bug, not a model bug” pattern shows up in production. A team writes a prompt-engineering library for image generation that works on SDXL. It silently degrades on FLUX. It produces gibberish on GPT Image 2. The team blames the model. The bug is in the encoder mismatch. MAX’s prompt-grammar guide names the three grammar families (parameter-flag, weight-syntax, natural-language) and gives you the contract checklist for each.

The instinct that does transfer: spec the contract, then translate. Write the spec once in plain English — what the image must contain, what it must avoid, what output mode you want, what edge cases break the brief — then render that spec into each tool’s grammar separately. The spec is portable. The prompt is not.
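
A minimal sketch of spec-then-translate, assuming one renderer per grammar family named above. `ImageSpec` and the `render_*` functions are hypothetical; the `--ar` and `--no` flags follow Midjourney’s parameter syntax, the `(term:1.2)` weight follows the CLIP-era convention described earlier, and the natural-language renderer drops `must_avoid` because FLUX.2 does not take negative prompts and GPT Image 2 exposes no negative_prompt.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class ImageSpec:
    """Plain-English brief, written once and rendered per grammar family."""
    subject: str
    details: List[str] = field(default_factory=list)
    background: str = "plain white"
    must_avoid: List[str] = field(default_factory=list)
    aspect_ratio: str = "1:1"


def render_parameter_flag(spec: ImageSpec) -> str:
    """Midjourney-style: comma-separated description plus --ar and --no flags."""
    body = ", ".join([spec.subject, *spec.details, f"{spec.background} background"])
    prompt = f"{body} --ar {spec.aspect_ratio}"
    if spec.must_avoid:
        prompt += " --no " + ", ".join(spec.must_avoid)
    return prompt


def render_weight_syntax(spec: ImageSpec) -> Tuple[str, str]:
    """CLIP-era Stable Diffusion: weighted keyword bag plus a separate negative prompt.

    Aspect ratio belongs in the width/height parameters, not the prompt.
    """
    positive = ", ".join(
        [f"({spec.subject}:1.2)", *spec.details, f"{spec.background} background"]
    )
    return positive, ", ".join(spec.must_avoid)


def render_natural_language(spec: ImageSpec) -> str:
    """Natural-language style (FLUX.2, GPT Image): one sentence describing the target.

    must_avoid is deliberately dropped; exclusions have to be expressed as positive
    detail (here, the background field). Aspect ratio goes in the size parameter.
    """
    text = spec.subject[:1].upper() + spec.subject[1:]
    if spec.details:
        text += " with " + " and ".join(spec.details)
    return text + f", shown against a {spec.background} background."
```

Only `ImageSpec` gets reviewed and versioned; the rendered strings are build artifacts, so a grammar change on one backend cannot leak into the others.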

When “Just Train a LoRA” Stops Being Cheap

Marketing decks make this sound easy. Twenty photos, fifteen minutes, a 24 GB consumer GPU, and you have a brand-aligned model. Most of that is technically true. None of it is the part that bites you.

Why is “we’ll just train a LoRA for our brand” rarely a small task, and which dataset, caption, VRAM, and rank-alpha assumptions cause silent failure?

A LoRA adapter for image generation is a small file, often a few megabytes, that retargets attention layers in a frozen base model. The math is a low-rank decomposition: the update ΔW factors into two skinny matrices B and A, and α/r controls how loud the adapter speaks at inference. That part is clean.
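
Written out, the merge is W′ = W + (α/r)·BA, with B of shape d×r and A of shape r×k. A minimal pure-Python sketch of that formula follows; real trainers apply it per attention projection on tensors, and `matmul` and `lora_merge` are illustrative helpers rather than any trainer’s API.

```python
from typing import List

Matrix = List[List[float]]


def matmul(x: Matrix, y: Matrix) -> Matrix:
    """Plain nested-list matrix product (x is n x m, y is m x p)."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)] for row in x]


def lora_merge(W: Matrix, B: Matrix, A: Matrix, alpha: float) -> Matrix:
    """Return W + (alpha / r) * (B @ A), with B: d x r, A: r x k, W: d x k."""
    r = len(A)
    if any(len(row) != r for row in B):
        raise ValueError("B must be d x r to match A (r x k)")
    delta = matmul(B, A)
    if len(delta) != len(W) or any(len(dr) != len(wr) for dr, wr in zip(delta, W)):
        raise ValueError("B @ A must have the same shape as W")
    scale = alpha / r
    return [[w + scale * d for w, d in zip(wr, dr)] for wr, dr in zip(W, delta)]


if __name__ == "__main__":
    W = [[0.0, 0.0], [0.0, 0.0]]
    # Rank 1: B is 2x1, A is 1x2, so B @ A = [[3, 4], [6, 8]].
    print(lora_merge(W, [[1.0], [2.0]], [[3.0, 4.0]], alpha=1.0))
    # [[3.0, 4.0], [6.0, 8.0]]
    # Same product padded to rank 2: alpha/r halves, and so does the update.
    print(lora_merge(W, [[1.0, 0.0], [2.0, 0.0]], [[3.0, 4.0], [0.0, 0.0]], alpha=1.0))
    # [[1.5, 2.0], [3.0, 4.0]]
```

The second call shows why r and α need to be set explicitly: change r while leaving α at a default and the adapter’s loudness shifts even if nothing else does.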

The part that is not clean: the trainer is dictated by the base model, the learning rate by the encoder, the dataset by the failure mode, and the trigger word by the encoder again. Use Kohya for SDXL and FLUX.1; switch to ai-toolkit or fal.ai for FLUX.2 [klein], because Kohya’s main branch did not officially support FLUX.2 at last check. Set the learning rate to 5e-4 because that worked on SDXL, and FLUX.1’s T5-conditioned 12B-parameter backbone overfits at that rate. Throw in 30 portrait photos with the same lighting and you do not train a “character” LoRA; you train a “specific lighting” LoRA wearing a face.

| Surface | Spec required before training | What goes wrong if you skip it |
|---|---|---|
| Base model | SDXL, FLUX.1 [dev], or FLUX.2 [klein]/[dev] | Silent collapse: gray noise output |
| Trainer | Kohya, ai-toolkit, or fal.ai | Key-load errors, “unused weights” warnings |
| Dataset | 15–30 images, varied lighting, captioned for the encoder | Model carries unwanted style bias |
| Trigger word | Unique non-English token at caption start | Collisions with base-model vocabulary |
| Rank / alpha | r and α set explicitly, not as defaults | α/r ratio changes adapter loudness across runs |
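
The table translates directly into a pre-flight check that runs before any GPU time is spent. A minimal sketch, assuming captions are available as plain strings: `LoraRunSpec`, `preflight`, and `SUPPORTED_TRAINERS` are hypothetical names, and the trainer pairings only encode what this section states, so anything else fails closed until you add it.

```python
from dataclasses import dataclass
from typing import List, Optional

# Trainer pairings as stated in this section; anything missing fails closed.
# Re-check against each trainer's current release notes before relying on it.
SUPPORTED_TRAINERS = {
    "SDXL": {"kohya"},
    "FLUX.1 [dev]": {"kohya"},
    "FLUX.2 [klein]": {"ai-toolkit", "fal.ai"},
}


@dataclass
class LoraRunSpec:
    base_model: str
    trainer: str
    captions: List[str]            # one caption per training image
    trigger_word: str
    rank: Optional[int] = None
    alpha: Optional[float] = None


def preflight(spec: LoraRunSpec) -> List[str]:
    """Return every spec problem found; an empty list means the run may start."""
    problems = []
    allowed = SUPPORTED_TRAINERS.get(spec.base_model)
    if allowed is None:
        problems.append(f"no trainer pairing recorded for {spec.base_model!r}")
    elif spec.trainer not in allowed:
        problems.append(f"{spec.trainer!r} is not a recorded trainer for {spec.base_model!r}")
    if not 15 <= len(spec.captions) <= 30:
        problems.append(f"{len(spec.captions)} images; the spec calls for 15-30")
    missing = [i for i, c in enumerate(spec.captions) if not c.startswith(spec.trigger_word)]
    if missing:
        problems.append(f"trigger word not at caption start for images {missing}")
    if spec.rank is None or spec.alpha is None:
        problems.append("rank and alpha must be set explicitly, not left to defaults")
    # Lighting variety and trigger-word uniqueness still need a human review.
    return problems
```

Collecting every problem instead of stopping at the first keeps one review cycle per dataset rather than one per failed check.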

Build-vs-buy instincts still apply. The artifact you are buying is just smaller and stranger than usual — a few-megabyte adapter file with a rank-alpha contract, plus a base-model dependency that can break on the next FLUX release. LoRAs trained on FLUX.1 [dev] must be retrained for FLUX.2 [dev] because the architecture changed. MAX’s LoRA training guide treats it as a four-surface specification problem. Your dataset-quality instincts from any classical ML project transfer almost intact. Your “small file = small task” instinct does not.

The Router Decision You Already Know

This is the place where your existing instincts hold up best, and the most useful place to anchor the rest of the cluster.

Which API gateway and vendor-router instincts transfer once “which model edits this image” becomes a runtime routing decision?

You already know how to build a multi-vendor router. Per-request routing rules. Per-backend contracts. Fallback ladders. Rate limiters. Observability with per-vendor labels. License-flag propagation. Cost ceilings per tenant. All of that transfers, line for line, into an image stack with multiple backends.
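
A minimal sketch of that router, assuming each vendor is wrapped in an adapter that takes and returns image bytes. `Backend`, `BackendError`, and `route` are hypothetical names; the license flag and cost ceiling act as routing filters, a vendor error falls through to the next rung, and per-vendor metrics and rate limiting are left out for brevity.

```python
from dataclasses import dataclass
from typing import Callable, List, Set, Tuple


class BackendError(Exception):
    """Raised by a vendor adapter on timeout, rate limit, or 5xx."""


@dataclass
class Backend:
    name: str
    jobs: Set[str]                  # e.g. {"edit", "upscale", "cutout"}
    commercial_ok: bool             # license flag carried into routing
    cost_cents: int                 # per-call cost estimate
    call: Callable[[bytes], bytes]  # vendor adapter


def route(job: str, image: bytes, ladder: List[Backend], *,
          commercial: bool, budget_cents: int) -> Tuple[str, bytes]:
    """Walk the fallback ladder in order and return (backend name, output)."""
    errors = []
    for backend in ladder:
        if job not in backend.jobs:
            continue
        if commercial and not backend.commercial_ok:
            continue  # license propagation: never serve non-commercial weights here
        if backend.cost_cents > budget_cents:
            continue  # per-tenant cost ceiling
        try:
            return backend.name, backend.call(image)
        except BackendError as exc:
            errors.append(f"{backend.name}: {exc}")  # fall through to the next rung
    raise RuntimeError(f"no eligible backend succeeded for {job!r}: {errors}")
```

Ordering the ladder is the routing policy; swapping vendors means editing a list, not rewriting call sites.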

The 2026 frontier rewards exactly this pattern. The image-editing arena currently sits in a four-model cluster — GPT Image 1.5, Nano Banana Pro, Nano Banana 2, and HunyuanImage 3.0-Instruct — separated by an Elo spread tight enough that benchmark noise could reorder them tomorrow. The upscaler market split into two camps that share a leaderboard but solve different jobs: re-imagine upscalers (Magnific, SUPIR, Krea Enhance) and preserve upscalers (Real-ESRGAN, Topaz Gigapixel). The background removal market forked into open-weights self-host (BRIA RMBG-2.0, SAM 2.1, rembg) and commercial workflow APIs (Photoroom, remove.bg). None of these markets are stable. All of them benefit from the same architectural answer: route the work, do not commit to a vendor.

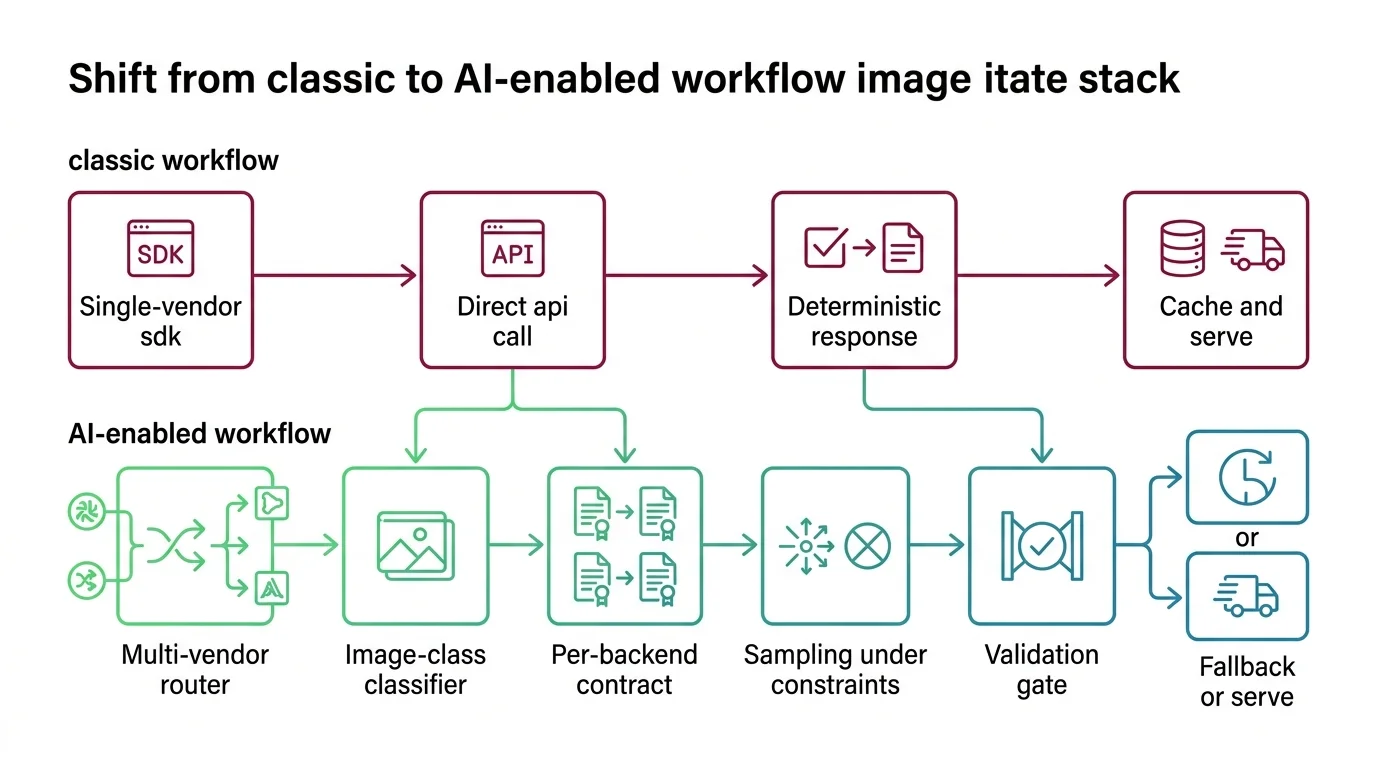

Shift Diagram: AI Image Stacks

Classic: Single-vendor SDK → Direct API call → Deterministic response → Cache and serve

AI: Multi-vendor router → Image-class classifier → Per-backend contract → Sampling under constraints → Validation gate → Fallback or serve

What changes is the validation step. A router for payment providers either gets a 200 or it does not. A router for image backends gets a sample from a learned prior — one that may have white halos around hair, painted-over fabric, the wrong character on the packaging, or a cutout running on a non-commercial license your engineer swapped in last week. Image editing, upscaling, and cutouts each have a known short list of failure modes. Validation is part of the contract, not part of QA. MAX’s editing pipeline guide shows the routing-plus-validation pattern in detail.
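
A minimal sketch of a validation gate for the cutout case, assuming the backend returns an RGBA pixel grid and a license label. `Sample`, `halo_ratio`, and `validate` are hypothetical, and the halo heuristic (near-white pixels in the semi-transparent fringe) with its 0.3 threshold is an illustrative stand-in rather than an established metric; painted-over fabric or wrong packaging text would need their own checks.

```python
from dataclasses import dataclass
from typing import List, Tuple

Pixel = Tuple[int, int, int, int]  # RGBA, 0-255


@dataclass
class Sample:
    backend: str
    license: str                 # e.g. "commercial" or "non-commercial"
    pixels: List[List[Pixel]]    # RGBA cutout returned by the backend


def halo_ratio(pixels: List[List[Pixel]], white_threshold: int = 230) -> float:
    """Share of semi-transparent fringe pixels that are near-white (rough halo proxy)."""
    fringe = [p for row in pixels for p in row if 0 < p[3] < 255]
    if not fringe:
        return 0.0
    white = [p for p in fringe if min(p[:3]) >= white_threshold]
    return len(white) / len(fringe)


def validate(sample: Sample, *, commercial: bool, max_halo: float = 0.3) -> List[str]:
    """Return failure reasons; a non-empty list means fall back instead of serving."""
    failures = []
    if commercial and sample.license != "commercial":
        failures.append(f"{sample.backend}: license {sample.license!r} not allowed")
    ratio = halo_ratio(sample.pixels)
    if ratio > max_halo:
        failures.append(f"{sample.backend}: halo ratio {ratio:.2f} exceeds {max_halo}")
    return failures
```

A non-empty failure list feeds back into the router as one more reason to try the next backend, which is what makes validation part of the contract rather than a QA afterthought.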

You now know which instincts survive the trip into image AI and which ones quietly betray you. Spec contracts, route work, validate samples, and treat reproducibility as a tier you declare per provider — not a property you assume of the API. The next reading depends on which surface bites first: the prompt-grammar guide for translation bugs, the editing pipeline guide for routing, the LoRA training guide for customization, or the reproducible prompt testing guide for the assertions your CI needs.

FAQ

Q: Why are my image generation snapshot tests failing after a provider update?

A: Image models are not hash functions. The same prompt and seed produce different bytes when the provider rotates system_fingerprint, ships a new attention processor, or changes scheduler implementation. Switch from byte-equal snapshots to perceptual similarity or embedding-distance assertions, and pin every version your pipeline depends on.

Q: Can the same prompt work across Midjourney, FLUX, and GPT Image 2?

A: No. Each model uses a different text encoder and expects a different prompt grammar: parameter flags for Midjourney, weight syntax for Stable Diffusion, and natural-language paragraphs for FLUX, GPT Image, and Gemini. Write the spec once in plain English, then render it into each tool’s grammar separately.

Q: Do I need to train a custom LoRA to get brand-consistent output?

A: Sometimes. A LoRA is a small adapter file with a rank-alpha contract that retargets attention layers in a frozen base model. Most “training problems” are dataset and caption problems wearing optimizer clothes, and a LoRA trained for FLUX.1 must be retrained for FLUX.2. Spec the four surfaces (base model, trainer, dataset, trigger) before you start the run.

AI-assisted content, human-reviewed. Images AI-generated.