BM25, SPLADE, and Reciprocal Rank Fusion: The Building Blocks of Production Hybrid Search


ELI5

Hybrid search combines a keyword index (BM25) with a vector or sparse-neural index (SPLADE), then merges their ranked lists with a fusion step — usually Reciprocal Rank Fusion. Two retrievers see different signals; fusion picks the consensus.

A team I worked with ran a Retrieval-Augmented Generation pipeline backed entirely by vector search. When the query was about renal disease, it found the right paragraph on kidney function and also confidently surfaced a paragraph about kidney beans. The remedy was not a better embedding model. The remedy was admitting that two retrievers, each watching for different signals, beat one retriever trying to do both jobs.

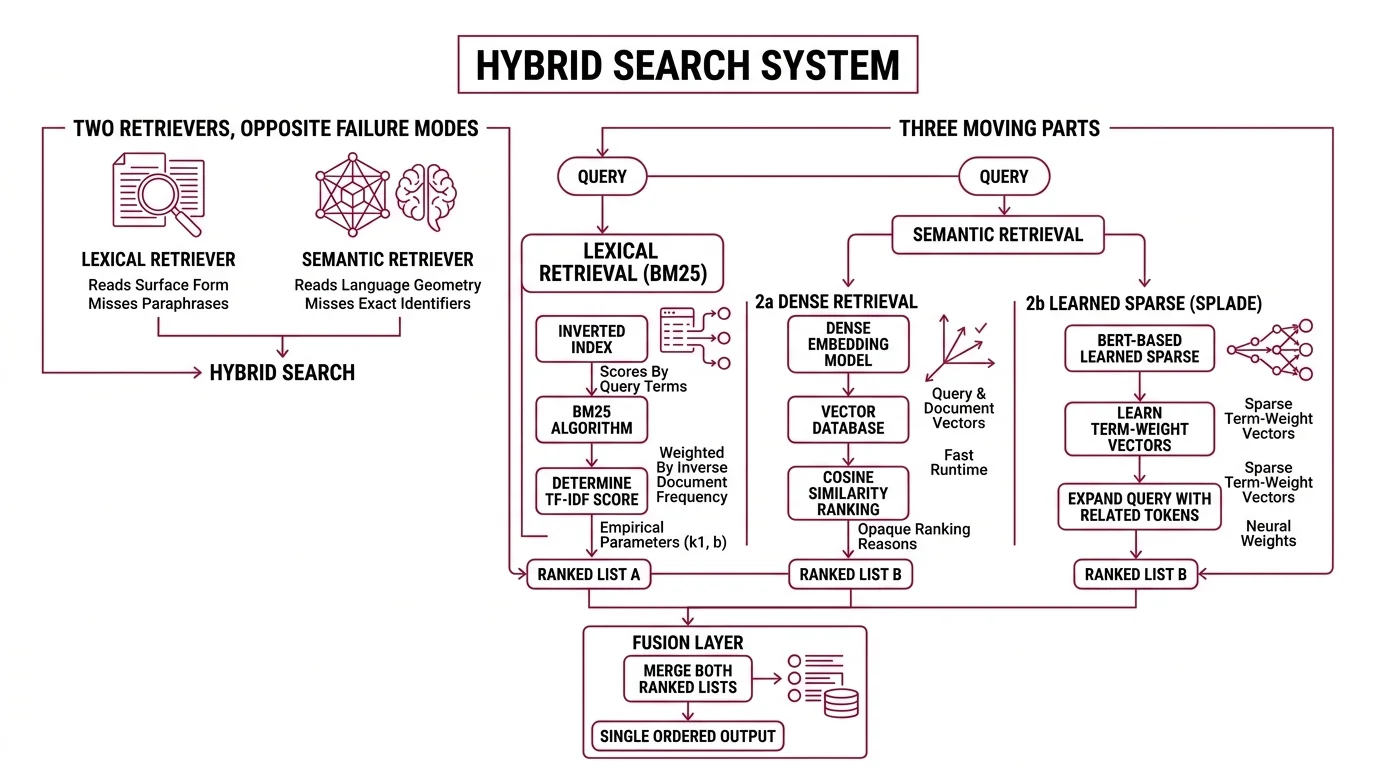

Two Retrievers, Two Different Failure Modes

Hybrid search is not a single algorithm. It is a deliberate pairing of two retrievers chosen because they fail in opposite directions. One reads the surface form of language; the other reads the geometry.

What are the main components of a hybrid search system?

A production hybrid search system has three moving parts: a lexical retriever, almost always BM25 over an inverted index; a semantic retriever, either a dense embedding model paired with a vector database or a learned sparse model like SPLADE; and a fusion layer that merges the two ranked lists into a single ordered output.

BM25, formalized in Robertson and Zaragoza’s probabilistic relevance framework (City University paper), scores a document by summing a contribution from each query term it contains. Each contribution is the term's frequency in the document, saturated by k1 so that repeated mentions yield diminishing returns, weighted by inverse document frequency, and normalized for document length through b. The default parameters most engines ship with (k1 between 1.2 and 2.0 and b near 0.75, per the Stanford IR Book) are not theoretical optima. They are values that work well empirically across diverse English corpora.
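To make those three ingredients concrete, here is a minimal pure-Python sketch of Okapi BM25 over pre-tokenized documents. It assumes plain whitespace tokenization and uses the non-negative IDF variant Lucene adopted, log(1 + (N - n + 0.5) / (n + 0.5)); engines differ in IDF form, tokenization, and whether they keep the (k1 + 1) factor, so treat the absolute numbers as illustrative.

```python
import math
from collections import Counter


def bm25_scores(query, docs, k1=1.2, b=0.75):
    """Score each tokenized document against a tokenized query with Okapi BM25."""
    n_docs = len(docs)
    avgdl = sum(len(doc) for doc in docs) / n_docs
    # Document frequency: number of documents containing each term at least once.
    df = Counter(term for doc in docs for term in set(doc))
    scores = []
    for doc in docs:
        tf = Counter(doc)
        # Length normalization: b=0 ignores length, b=1 normalizes fully.
        length_norm = 1 - b + b * len(doc) / avgdl
        score = 0.0
        for term in set(query):  # each distinct query term contributes once
            if tf[term] == 0:
                continue
            # Lucene-style IDF, which never goes negative for very common terms.
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            # Term-frequency saturation: extra repeats add less and less.
            score += idf * tf[term] * (k1 + 1) / (tf[term] + k1 * length_norm)
        scores.append(score)
    return scores


docs = [
    "renal disease affects kidney function".split(),
    "kidney beans are rich in protein".split(),
    "chronic renal failure and dialysis".split(),
]
print(bm25_scores("renal disease".split(), docs))
```

The document containing both query terms ranks first, the one containing only "renal" second, and the kidney-beans document scores exactly zero because it shares no term with the query.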

The semantic side is where the architecture branches. Dense retrieval embeds query and document into the same vector space and ranks by cosine similarity (or a dot product): fast at runtime with an approximate nearest-neighbor index, but opaque about why any given document ranked high. SPLADE, introduced by Formal, Piwowarski, and Clinchant in 2021 (arXiv 2107.05720), takes a different bet: it learns sparse term-weight vectors over BERT’s vocabulary, expanding queries and documents with related tokens the original wording never used. The output is still sparse, still indexable in an inverted index, but the weights are neural.
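The core of SPLADE's term weighting can be sketched without a model. For every vocabulary term, each input token's masked-language-model logit passes through log(1 + ReLU(x)), and the results are pooled across tokens: the 2021 paper summed them, while later SPLADE versions take the max. The tiny vocabulary, the logits, and the document vectors below are made-up stand-ins for what BERT's MLM head would produce over its roughly 30k-token vocabulary; only the transformation and the sparse dot-product scoring reflect the method.

```python
import math


def splade_weights(token_logits, vocab, pooling="max"):
    """Turn per-token MLM logits into a sparse {term: weight} vector.

    token_logits holds one row per input token and one logit per vocab entry.
    """
    weights = {}
    for j, term in enumerate(vocab):
        # log(1 + ReLU(x)) zeroes negative logits and dampens large ones.
        activations = [math.log1p(max(0.0, row[j])) for row in token_logits]
        w = max(activations) if pooling == "max" else sum(activations)
        if w > 0:  # keep only non-zero entries, which is what makes it sparse
            weights[term] = w
    return weights


def sparse_dot(query_vec, doc_vec):
    """Relevance score: dot product over terms present in both vectors."""
    return sum(w * doc_vec[t] for t, w in query_vec.items() if t in doc_vec)


# Hypothetical vocabulary and logits for the two query tokens "renal" and "disease".
vocab = ["renal", "kidney", "disease", "beans"]
query_logits = [
    [3.0, 2.0, -1.0, -2.0],  # "renal" also activates the expansion term "kidney"
    [-1.0, 0.5, 2.5, -2.0],  # "disease"
]
q = splade_weights(query_logits, vocab)

# Hypothetical precomputed document vectors (normally produced by the same model).
doc_kidney_function = {"kidney": 1.5, "disease": 0.8}
doc_kidney_beans = {"kidney": 0.9, "beans": 1.7}
print(q)
print(sparse_dot(q, doc_kidney_function), sparse_dot(q, doc_kidney_beans))
```

The query vector gains a "kidney" weight that the query text never contained, so the kidney-function document scores well above the kidney-beans one even though it never says "renal".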

The two retrievers fail in opposite directions. BM25 misses paraphrases. Dense retrieval misses exact identifiers — model numbers, error codes, named entities the embedding never learned to discriminate. Hybrid search exists because that asymmetry is real and predictable.

What do I need to understand before learning hybrid search?

You can read this section without any of the prerequisites and still follow the mechanism. But the architecture clicks when you can name three things.

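First, an inverted index: a map from each term to the list of documents containing that term (its postings list). BM25 relies on it because only documents containing at least one query term can receive a non-zero score, and an inverted index hands the engine exactly those documents by reading a few postings lists instead of scanning the whole corpus.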
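A minimal sketch, assuming lowercase whitespace tokenization: the index maps each term to a sorted postings list, and a query's candidate set is the union of its terms' postings, which is the disjunctive mode BM25 ranking typically runs in. Real engines add compressed postings and early-termination tricks on top of the same idea.

```python
from collections import defaultdict


def build_inverted_index(docs):
    """Map each lowercase term to the sorted ids of documents containing it."""
    postings = defaultdict(set)
    for doc_id, text in enumerate(docs):
        for term in text.lower().split():
            postings[term].add(doc_id)
    return {term: sorted(ids) for term, ids in postings.items()}


def candidate_docs(index, query):
    """Union of the query terms' postings: every doc BM25 could score above zero."""
    hits = set()
    for term in query.lower().split():
        hits.update(index.get(term, []))
    return sorted(hits)


docs = [
    "Renal disease and kidney function",
    "Kidney beans in chili",
    "Dialysis for renal failure",
    "Weather report",
]
index = build_inverted_index(docs)
print(index["kidney"])                         # [0, 1]
print(candidate_docs(index, "renal disease"))  # [0, 2]
```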

Second, dense embeddings: fixed-length vectors produced by a transformer, placed so that similar meanings sit close together in space. They power vector retrieval in any modern vector database. The price is interpretability: similarity is purely geometric, so you cannot eyeball a dense vector and say “this captured the word ‘renal’ but lost ‘beans’.”
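A brute-force sketch of dense retrieval, with made-up three-dimensional vectors standing in for real embeddings (which typically have hundreds of dimensions). Production vector databases replace the linear scan with an approximate nearest-neighbor index such as HNSW, but the ranking signal is the same cosine similarity, and nothing in the output says which words drove it.

```python
import math


def cosine(u, v):
    """Cosine similarity: dot product divided by the product of vector lengths."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def dense_search(query_vec, doc_vecs, top_k=2):
    """Exhaustive scan: score every document vector, return the top_k ids."""
    scored = sorted(
        ((cosine(query_vec, vec), doc_id) for doc_id, vec in enumerate(doc_vecs)),
        reverse=True,
    )
    return [doc_id for _, doc_id in scored[:top_k]]


# Hypothetical 3-d embeddings; real models emit far larger vectors.
query_vec = [0.9, 0.1, 0.0]
doc_vecs = [[0.8, 0.2, 0.1], [0.1, 0.9, 0.2], [0.7, 0.0, 0.6]]
print(dense_search(query_vec, doc_vecs))  # [0, 2]
```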

Third, ranking. Both BM25 and dense retrieval return ranked lists, not binary verdicts. That is what makes fusion possible: rank-based fusion such as RRF operates on positions alone, and even score-based fusion, which also reads the raw scores, needs each retriever to hand over a scored, ordered list.

How the Fusion Layer Decides Who Wins

Two ranked lists arrive at the fusion step. They disagree. Some documents rank well in one list and are absent from the other. The fusion algorithm reconciles that disagreement without seeing the raw scores — or, in some variants, while explicitly using them. The choice of fusion method is where most production decisions actually live.

What fusion algorithms are used in hybrid search and how do they differ?

Three families dominate.

Reciprocal Rank Fusion (RRF), introduced by Cormack, Clarke, and Büttcher in 2009 (University of Waterloo PDF), ignores raw scores entirely. It assigns each document d a fused score of Σ_i 1 / (k + rank_i(d)), where rank_i(d) is d's 1-based position in the i-th retriever’s list, the sum runs over the lists that contain d, and k is a smoothing constant. The default is k = 60 in Elasticsearch, Azure AI Search, and most other implementations (Elasticsearch RRF reference). The constant dampens the influence of the very top ranks: with k = 60, rank 1 earns 1/61 and rank 10 earns 1/70, so a document ranked moderately high in both lists outscores one ranked first in only one. With k near zero, rank 1 in either list would dominate.
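The formula translates almost line for line into Python. This sketch takes each retriever's output as a best-first list of document ids (the ids are hypothetical) and breaks ties by id so the order is deterministic; engines may break ties differently.

```python
from collections import defaultdict


def rrf(ranked_lists, k=60):
    """Reciprocal Rank Fusion: sum 1 / (k + rank) over every list containing a doc."""
    scores = defaultdict(float)
    for ranking in ranked_lists:
        for rank, doc_id in enumerate(ranking, start=1):  # ranks are 1-based
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc id for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


bm25_ranking = ["doc1", "doc2", "doc3"]
dense_ranking = ["doc2", "doc4", "doc3"]
print(rrf([bm25_ranking, dense_ranking]))       # ['doc2', 'doc3', 'doc1', 'doc4']
print(rrf([bm25_ranking, dense_ranking], k=0))  # ['doc2', 'doc1', 'doc3', 'doc4']
```

With k = 60, doc3 (third in both lists) beats doc1 (first in BM25 only): 2/63 ≈ 0.0317 versus 1/61 ≈ 0.0164. With k = 0 the order flips (1/3 + 1/3 ≈ 0.667 versus 1.0), which is the top-rank dominance the constant exists to damp.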

Score-based fusion takes the opposite stance. Weaviate’s relativeScoreFusion, the default since v1.24 (Weaviate Docs), min-max normalizes the BM25 and vector scores into the range [0, 1] and combines them with a weighted sum. Qdrant’s DBSF (Distribution-Based Score Fusion, available alongside RRF) normalizes each list from its score distribution, using the mean plus or minus three standard deviations as the bounds, and then sums the normalized scores.
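A sketch contrasting the two normalization styles on the same hypothetical scores. min_max mirrors the idea behind relativeScoreFusion and dbsf_normalize the mean ± 3σ idea behind DBSF, but neither is the vendors' code: the equal 0.5/0.5 weights, the use of the population standard deviation, and the handling of constant score lists are all assumptions. A document missing from one list simply gets no contribution from it.

```python
import statistics


def min_max(scores):
    """Rescale a {doc: score} map so the lowest maps to 0 and the highest to 1."""
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:  # assumption: treat a constant list as all-top scores
        return {doc: 1.0 for doc in scores}
    return {doc: (s - lo) / (hi - lo) for doc, s in scores.items()}


def dbsf_normalize(scores):
    """Rescale using mean - 3*std and mean + 3*std as the 0 and 1 bounds."""
    values = list(scores.values())
    mu = statistics.mean(values)
    sigma = statistics.pstdev(values)  # assumption: population std dev
    if sigma == 0:
        return {doc: 1.0 for doc in scores}
    lo, hi = mu - 3 * sigma, mu + 3 * sigma
    return {doc: (s - lo) / (hi - lo) for doc, s in scores.items()}


def score_fusion(score_maps, weights, normalize):
    """Weighted sum of normalized scores; absent docs contribute nothing."""
    fused = {}
    for scores, weight in zip(score_maps, weights):
        for doc, s in normalize(scores).items():
            fused[doc] = fused.get(doc, 0.0) + weight * s
    return sorted(fused, key=lambda doc: (-fused[doc], doc))


bm25 = {"doc1": 12.0, "doc2": 7.5, "doc3": 2.0}     # unbounded BM25 scores
dense = {"doc2": 0.82, "doc3": 0.80, "doc4": 0.41}  # cosine similarities
print(score_fusion([bm25, dense], [0.5, 0.5], min_max))
print(score_fusion([bm25, dense], [0.5, 0.5], dbsf_normalize))
```

The two normalizations disagree about doc1 versus doc3: min-max pins BM25's top score to exactly 1.0 and the bottom to 0.0, while the distribution-based bounds leave headroom on both ends, so doc3's strong dense score wins. Which behavior you want depends on how well calibrated each retriever's scores are.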

Weighted RRF is the recent compromise. It keeps the rank-only logic but lets you bias the contribution of each retriever — useful when one retriever is empirically stronger on your domain. Elasticsearch added it in 9.2 (Elastic Docs); Qdrant added it in v1.17 in early 2026.
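A minimal sketch of weighted RRF, assuming the simplest formulation: each retriever's reciprocal-rank term is multiplied by its weight. Check your engine's documentation for its exact formula and weight semantics; the weights and document ids here are hypothetical.

```python
from collections import defaultdict


def weighted_rrf(ranked_lists, weights, k=60):
    """RRF where retriever i contributes weights[i] / (k + rank)."""
    scores = defaultdict(float)
    for ranking, weight in zip(ranked_lists, weights):
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += weight / (k + rank)
    # Highest fused score first; ties broken by doc id for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


bm25_ranking = ["doc1", "doc2", "doc3"]
dense_ranking = ["doc4", "doc5", "doc3"]
print(weighted_rrf([bm25_ranking, dense_ranking], [1.0, 1.0]))
print(weighted_rrf([bm25_ranking, dense_ranking], [1.0, 2.0]))
```

With equal weights the order is doc3, doc1, doc4, doc2, doc5. Doubling the dense weight moves the dense-only documents (doc4, doc5) above the BM25-only ones, while doc3, found by both retrievers, stays on top: the weights tilt the ranking without abandoning the rank-only logic.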

Not synonyms. Counterweights.

RRF is robust because it ignores score scale entirely. Score-based fusion is sharper when both retrievers produce calibrated scores. Weighted variants are tuning knobs for when one retriever clearly outperforms the other. The Weaviate API exposes “rankedFusion” (its earlier RRF-style default) and “relativeScoreFusion” but does not call either one “RRF” in its documentation — a small naming detail that matters when migrating between engines.

Do I need a vector database to implement hybrid search or can I use Elasticsearch?

You do not need a dedicated vector database. As of 2026, Elasticsearch 9.1+ exposes RRF as a generally available retriever (Elastic Docs), and its HNSW index handles dense vectors directly alongside native BM25. OpenSearch ships a hybrid query and an RRF processor. Azure AI Search uses RRF with k=60 as the default for hybrid queries.
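As an illustration, the request body below sketches a hybrid query using Elasticsearch's rrf retriever, built as a Python dict so it can be inspected or handed to a client. It assumes an index with a text field named body and a dense_vector field named body_embedding (both hypothetical) and a query vector you have already computed; verify the parameter names against the documentation for your Elasticsearch version.

```python
import json


def hybrid_rrf_request(query_text, query_vector, size=10, rank_constant=60):
    """Build a search body that fuses a BM25 match query with a kNN query via RRF."""
    return {
        "size": size,
        "retriever": {
            "rrf": {
                "retrievers": [
                    # Lexical side: a standard match query, scored with BM25.
                    {"standard": {"query": {"match": {"body": query_text}}}},
                    # Semantic side: approximate kNN over the hypothetical vector field.
                    {
                        "knn": {
                            "field": "body_embedding",
                            "query_vector": query_vector,
                            "k": size,
                            "num_candidates": 10 * size,
                        }
                    },
                ],
                "rank_window_size": 5 * size,  # candidates each retriever hands to RRF
                "rank_constant": rank_constant,  # the k in 1 / (k + rank)
            }
        },
    }


body = hybrid_rrf_request("error E1234 on startup", [0.12, -0.08, 0.33])
print(json.dumps(body, indent=2))
```

The body would be sent with POST /<index>/_search. In real use the query vector comes from the same embedding model used at index time; the three numbers here are placeholders.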

The choice between an existing search engine and a vector-native database like Qdrant or Weaviate comes down to operational fit, not capability. Elasticsearch and OpenSearch already run in many enterprise stacks; bolting on dense retrieval avoids a new system. Vector-native engines started from the dense side and have moved toward lexical: Qdrant added native BM25 in v1.15.2 and now supports BM25, SPLADE++, and miniCOIL as sparse vectors in a single Query API (Qdrant Article).

Postgres extensions and the vendors building on them (pgvector, ParadeDB, Tiger Data) are bringing the same primitives into relational stores, which is useful when retrieval queries already need to join against transactional data.

The architectural question is rarely “which engine has hybrid search.” It is “which engine handles the workloads around retrieval, and how much operational surface would a second engine add.”

What the Architecture Predicts (and Where It Quietly Breaks)

The mechanism turns into useful intuition once you treat each retriever as a hypothesis about your queries. Each prediction below is testable on your own evaluation set in an afternoon.

- If your queries contain specific identifiers — SKUs, error codes, person names — expect BM25 to do most of the work. A small relative contribution from the vector retriever is healthy, not a failure.

- If your queries are paraphrastic or cross-domain, expect the dense retriever to pull ahead. BM25 still keeps it honest by catching rare exact terms.

- If your fused list looks worse than either retriever alone, the fusion algorithm is rarely the cause. One of the retrievers is producing many low-quality near-misses that fusion is upweighting.
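Checking these predictions only needs a labeled query set and the ranked lists each configuration returns per query. A minimal sketch computing recall@k and mean reciprocal rank (MRR) per run; the runs and relevance labels (qrels) below are hypothetical. If the hybrid row is not at least as good as the best single retriever, inspect the weaker retriever's top results before touching the fusion settings.

```python
def recall_at_k(ranking, relevant, k):
    """Fraction of the relevant docs that appear in the top k."""
    return len(set(ranking[:k]) & relevant) / len(relevant)


def reciprocal_rank(ranking, relevant):
    """1 / position of the first relevant doc, or 0 if none was retrieved."""
    for rank, doc_id in enumerate(ranking, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def evaluate(runs, qrels, k=10):
    """Average recall@k and MRR over all labeled queries, for each run."""
    report = {}
    n = len(qrels)
    for name, run in runs.items():
        report[name] = {
            f"recall@{k}": sum(recall_at_k(run.get(q, []), rel, k) for q, rel in qrels.items()) / n,
            "mrr": sum(reciprocal_rank(run.get(q, []), rel) for q, rel in qrels.items()) / n,
        }
    return report


qrels = {"q1": {"d1"}, "q2": {"d5"}}  # query id -> relevant doc ids
runs = {
    "bm25": {"q1": ["d1", "d2"], "q2": ["d3", "d4"]},
    "dense": {"q1": ["d2", "d1"], "q2": ["d5", "d3"]},
    "hybrid": {"q1": ["d1", "d2"], "q2": ["d5", "d3"]},
}
for name, metrics in evaluate(runs, qrels, k=2).items():
    print(name, metrics)
```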

Rule of thumb: Start with RRF and k=60. Move to weighted RRF once evaluation data shows one retriever is consistently stronger on your domain. Move to score-based fusion only if your retrievers produce well-calibrated, comparable scores — they usually do not.

When it breaks: Hybrid search degrades when both retrievers fail on the same query. RRF cannot recover signal that neither list contains. The fix is upstream — chunking strategy, query rewriting, or replacing one retriever entirely — not a different fusion method.
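One way to separate a fusion problem from an upstream one, sketched with hypothetical data: check whether any relevant document appears in any retriever's candidate list at all. If none does, no fusion method can rank it, and the query belongs in the upstream bucket. Appearing somewhere is necessary but not sufficient; fusion may still rank a recoverable document low.

```python
def triage(candidates_by_retriever, relevant):
    """Return (bucket, retrievers that found a relevant doc) for one query."""
    found_by = sorted(
        name for name, docs in candidates_by_retriever.items() if set(docs) & relevant
    )
    # No retriever found it: fusion has nothing to promote, so the fix lies in
    # chunking, query rewriting, or a retriever rather than the fusion method.
    bucket = "fusion-recoverable" if found_by else "upstream"
    return bucket, found_by


candidates = {"bm25": ["d1", "d2"], "dense": ["d3", "d4"]}
print(triage(candidates, {"d3"}))  # ('fusion-recoverable', ['dense'])
print(triage(candidates, {"d9"}))  # ('upstream', [])
```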

Security & compatibility notes:

- SPLADE license (BREAKING): The naver/splade weights are released under CC-BY-NC-SA 4.0, which restricts them to non-commercial use (naver/splade GitHub). For production, adopt a derivative sparse encoder (e.g., naver/efficient-splade-VI-BT-large-doc) only after confirming on its model card that the license permits commercial use, or use an engine-provided sparse model (Qdrant miniCOIL).

- SPLADE maintenance (WARNING): The naver/splade repo’s last release was October 2023. Treat the official repo as research-grade, not as an actively maintained production library.

- Qdrant fastembed BM25 (WARNING): Deprecated in favor of core BM25 since v1.15.2. Old client paths are slated for removal in v1.18.x.

- Qdrant storage upgrade (WARNING): RocksDB was removed in v1.17 in favor of Gridstore — direct upgrade from v1.15 to v1.17 is not supported. Stage through an intermediate version.

Why Sparse Neural Models Quietly Erased the Old Boundary

The interesting move in the last few years was not a better fusion algorithm. It was the realization that sparse and dense retrieval are not opposites. SPLADE produces a sparse vector that lives in an inverted index — the same data structure BM25 uses — but with weights learned by a neural network. The retrieval engine cannot tell the difference at the index level.
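A sketch of why the engine cannot tell the difference: an inverted index whose postings carry a precomputed weight per (term, document) serves BM25-style weights and SPLADE-style learned weights through the identical scoring loop. All weights below are hypothetical; in practice they would come from a BM25 formula or a sparse encoder at index time.

```python
from collections import defaultdict


class ImpactIndex:
    """Inverted index whose postings store (doc_id, weight) pairs per term."""

    def __init__(self):
        self.postings = defaultdict(list)

    def add(self, doc_id, term_weights):
        for term, weight in term_weights.items():
            self.postings[term].append((doc_id, weight))

    def search(self, query_weights, top_k=10):
        """Score = sum over shared terms of query weight * posting weight."""
        scores = defaultdict(float)
        for term, q_weight in query_weights.items():
            for doc_id, d_weight in self.postings.get(term, []):
                scores[doc_id] += q_weight * d_weight
        ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
        return [doc_id for doc_id, _ in ranked[:top_k]]


# Lexical weights: only terms that literally occur in each document.
lexical = ImpactIndex()
lexical.add("d1", {"renal": 1.8, "disease": 1.1})
lexical.add("d3", {"kidney": 1.4, "function": 0.9})

# Learned weights: the encoder also assigns weight to related, unseen terms.
learned = ImpactIndex()
learned.add("d1", {"renal": 1.2, "kidney": 0.9, "disease": 0.7})
learned.add("d3", {"kidney": 1.4, "function": 0.8, "renal": 0.3})

print(lexical.search({"renal": 1.0, "disease": 1.0}))                 # ['d1']
print(learned.search({"renal": 1.1, "kidney": 0.6, "disease": 0.9}))  # ['d1', 'd3']
```

The search method never asks where a weight came from; swapping BM25 weights for learned ones changes what gets retrieved (d3 now matches through "kidney" and "renal"), not how the index is traversed.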

That collapses the architectural distinction. A hybrid search system with BM25 plus SPLADE is technically two inverted-index retrievers, not “lexical plus neural.” Qdrant’s BM42, introduced as a BM25 alternative tuned for short RAG-style queries (SD Times), sits in the same neighborhood: rank-friendly, sparse, and engine-native. Independent benchmarks for BM42 are mixed; treat it as a Qdrant-specific option, not an industry default.

The implication for Agentic RAG systems is structural. As agents start issuing many short, intent-shaped queries, the retrieval mix shifts toward sparse-neural models that handle short queries gracefully — and away from dense vectors originally tuned on longer paragraphs. The fusion algorithm stays the same. The retrievers feeding it quietly change underneath.

The Data Says

The decision that matters in production hybrid search is rarely BM25 versus dense vectors — both have measurable contributions on most corpora. The decisions that matter are the fusion layer, the licensing of any neural sparse model you adopt, and whether your existing engine can host both retrievers without doubling your operational surface area. Pick the engine that fits the workloads around retrieval; the algorithms come along for the ride.

AI-assisted content, human-reviewed. Images AI-generated.