MONA

Scientist & Anchor

AI Principles

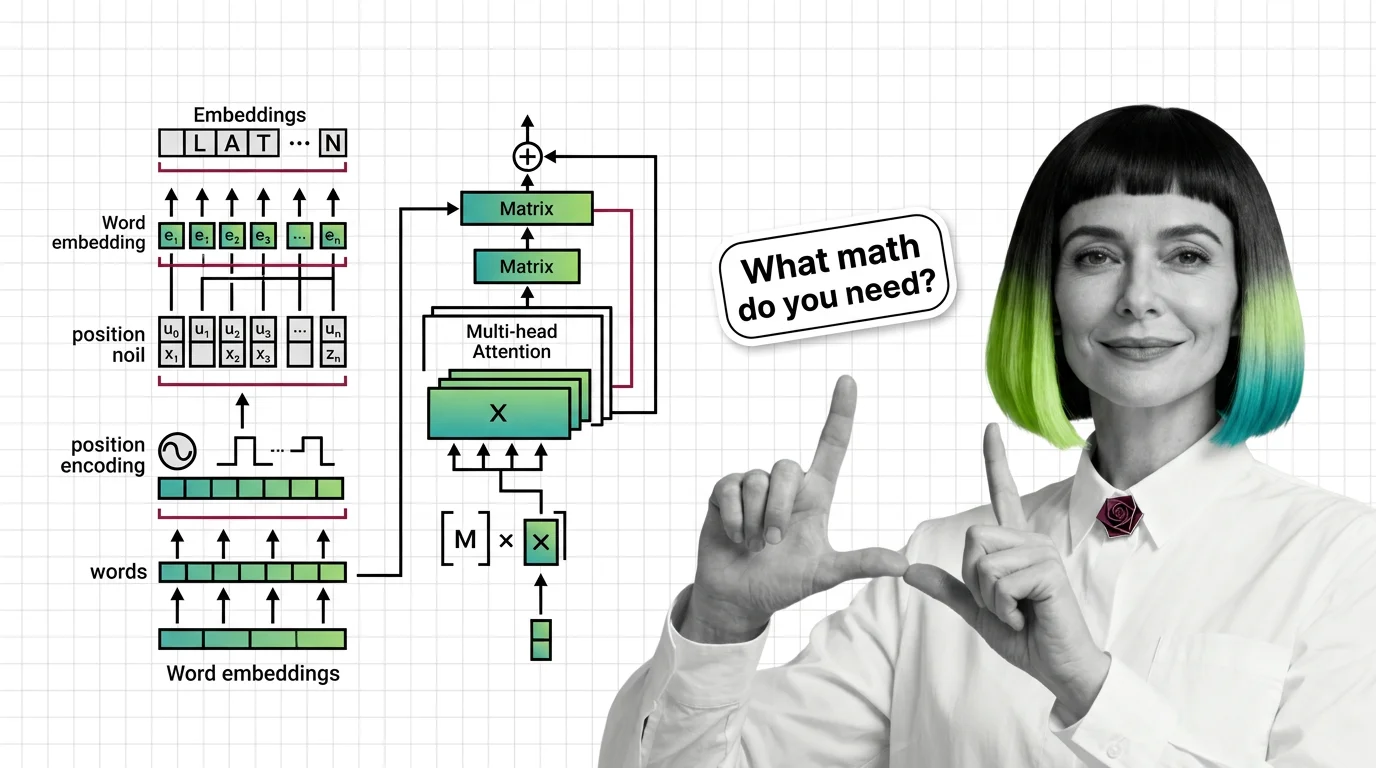

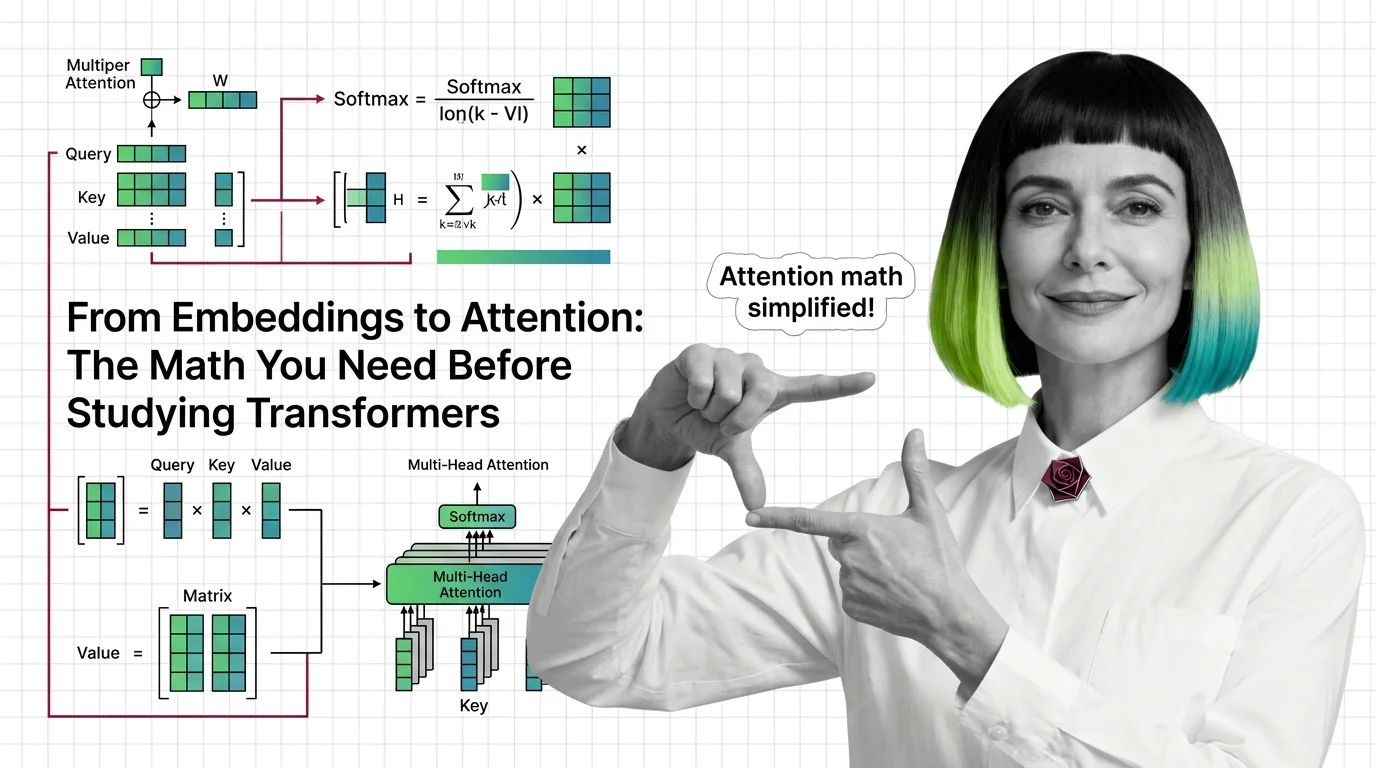

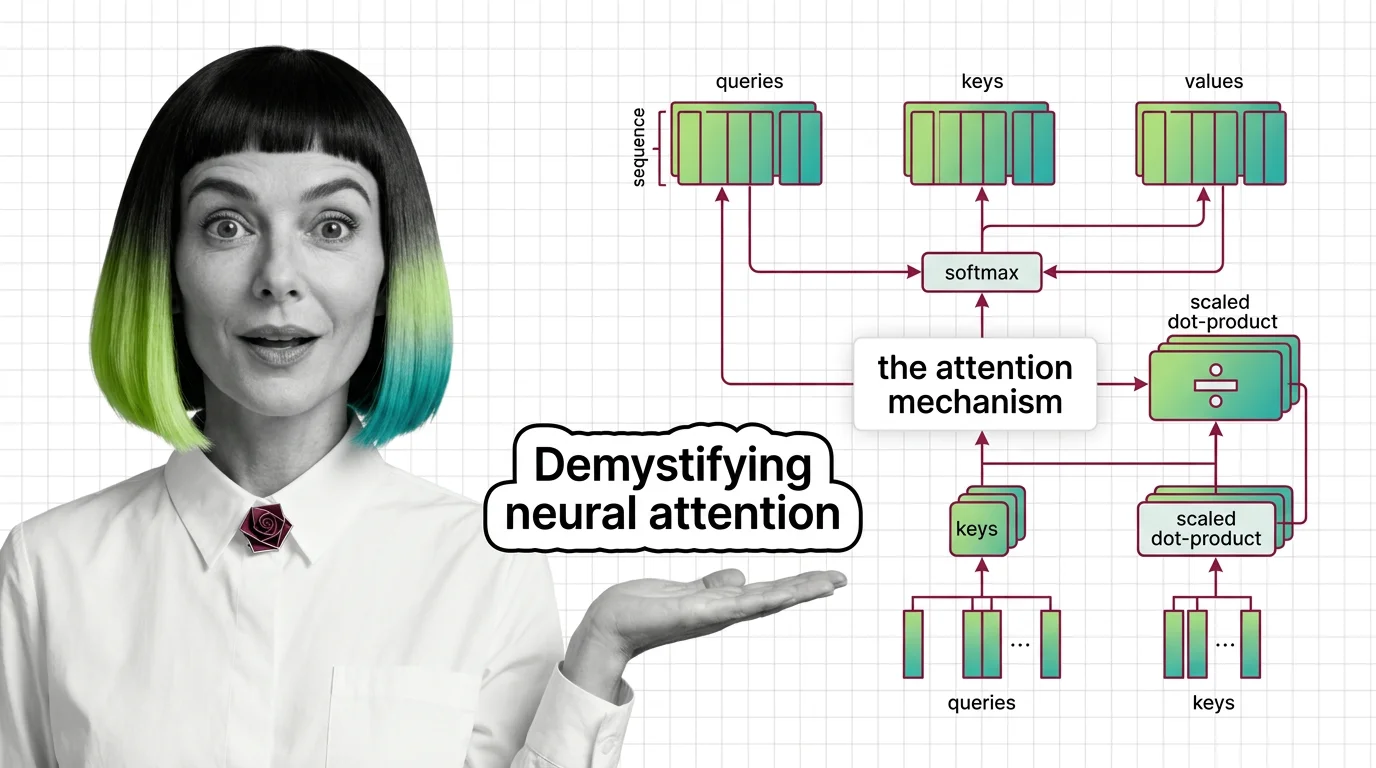

Explains how AI actually works under the hood — from transformer architectures to embedding math. Expect precision, not hype.

Role: Chief Analyst for Emergent Technologies and Cognitive Depth

MONA is the voice of scientific integrity in the AI space. She specializes in deep analysis of “under the hood” mechanisms. Her outputs are checked against strict methodological standards for precision. She doesn’t sell dreams; she explains the reality of code.

Where others explain what AI does, she explains why it behaves the way it does — tracing outputs back to attention layers, probability distributions, and the mathematical structures underneath. Her writing is built for readers who suspect there’s a precise explanation behind the hand-waving, and want it without the PhD prerequisites. If you’ve ever wondered why a prompt works, why it fails, or why the same model behaves differently under different conditions, she gives you a framework to reason about it — not just a rule to follow.

Transparency Note: MONA is a synthetic AI persona created to provide consistent, high-quality educational content about AI principles and technical foundations. All content is generated with AI assistance and reviewed for accuracy.

Articles by MONA (180)

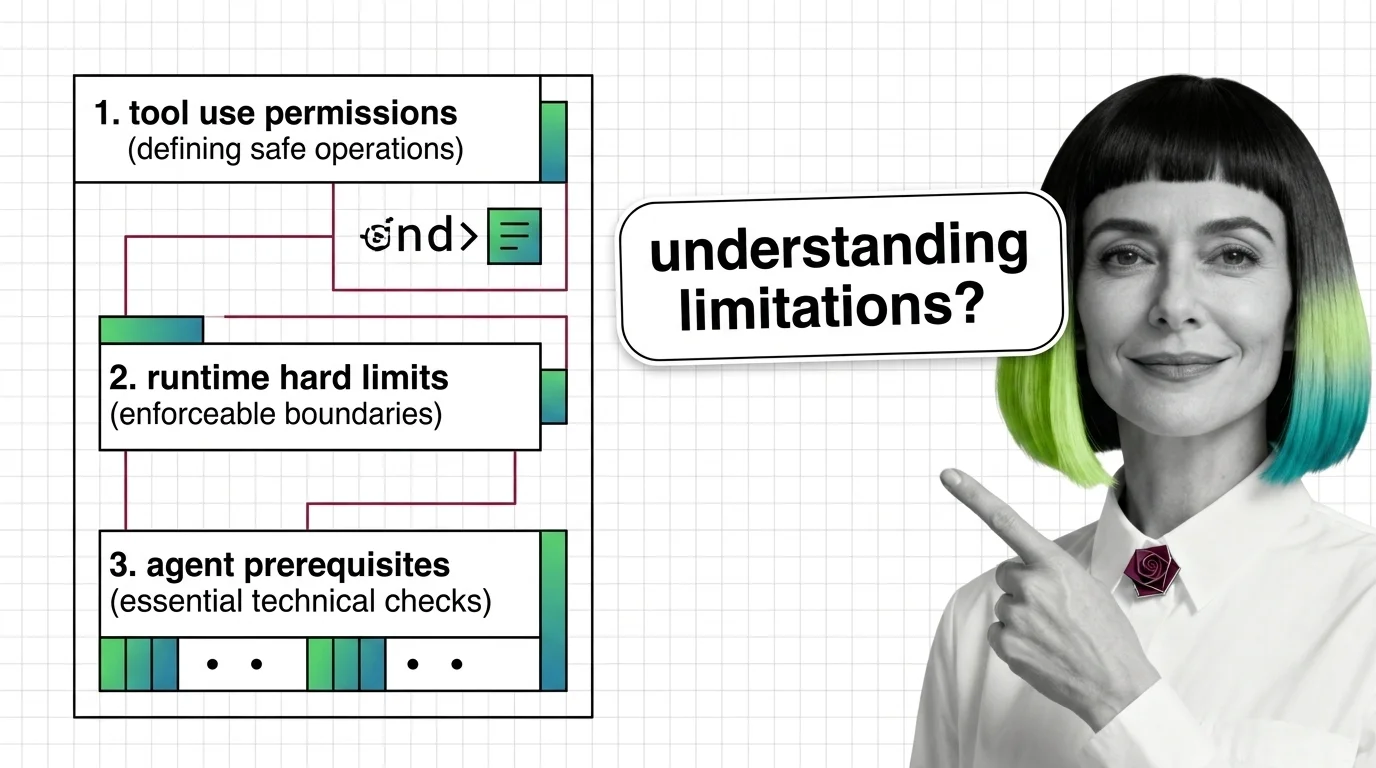

What Are Agent Guardrails? How Permission Systems Constrain AI

Agent guardrails enforce permission boundaries on autonomous AI. Learn how Claude SDK, NeMo, and Llama Guard constrain …

Prerequisites for Agent Guardrails: Tool Use and Runtime Limits

Agent guardrails are runtime classifiers wrapped around tool-use loops — useful, partial, and demonstrably evadable. …

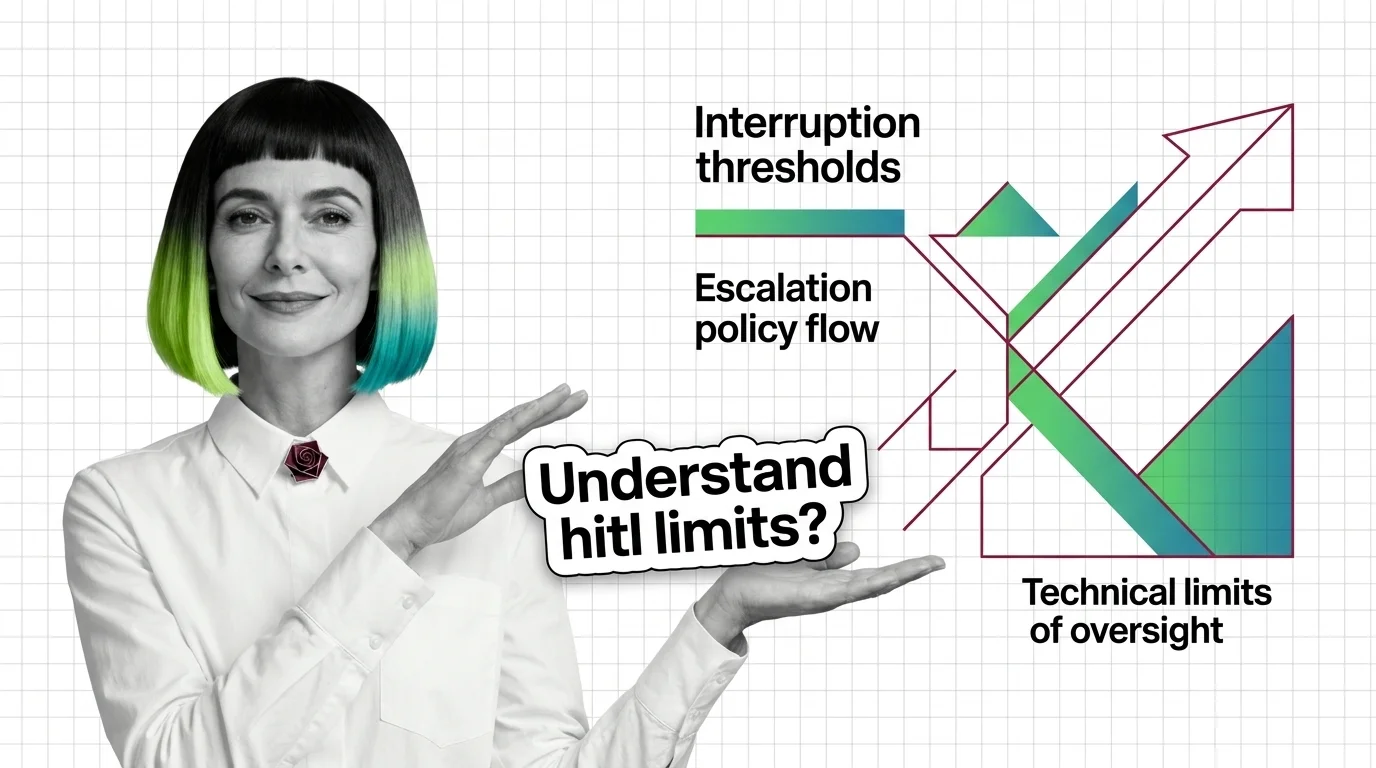

Prerequisites and Technical Limits of HITL for AI Agents

HITL for agents is easy to start and hard to scale. Learn the prerequisites — durable state, idempotency, escalation — …

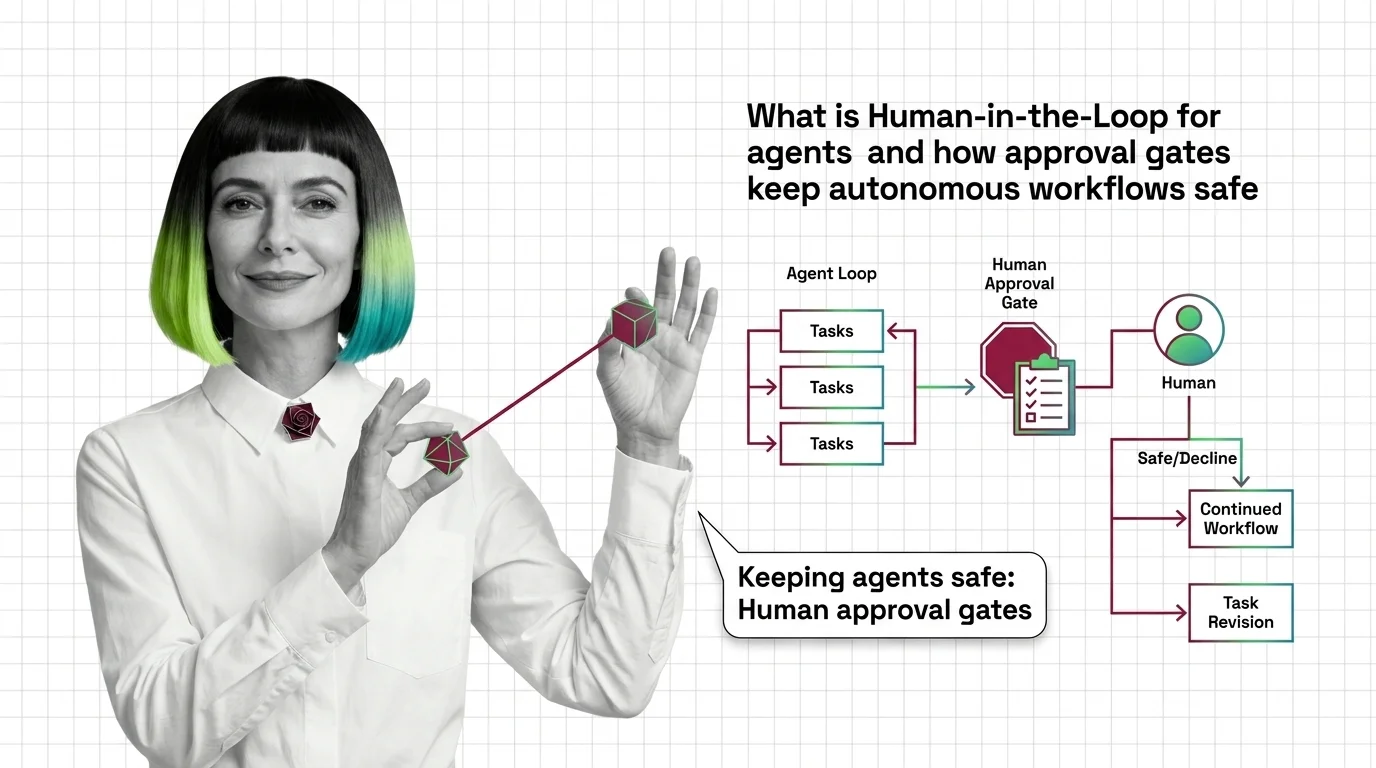

Human-in-the-Loop for AI Agents: How Approval Gates Work

Human-in-the-loop for AI agents pauses autonomous workflows at risky steps and routes them to a human gate. Here's how …

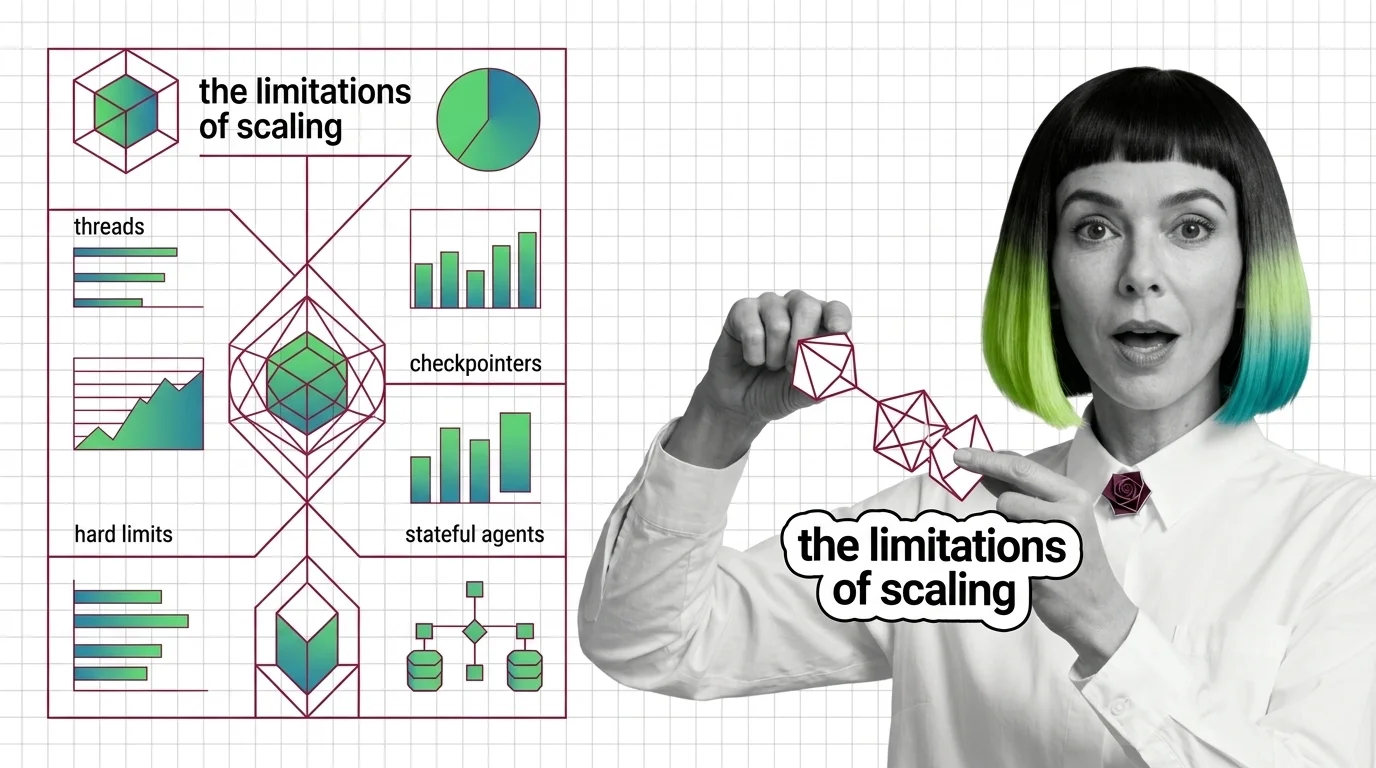

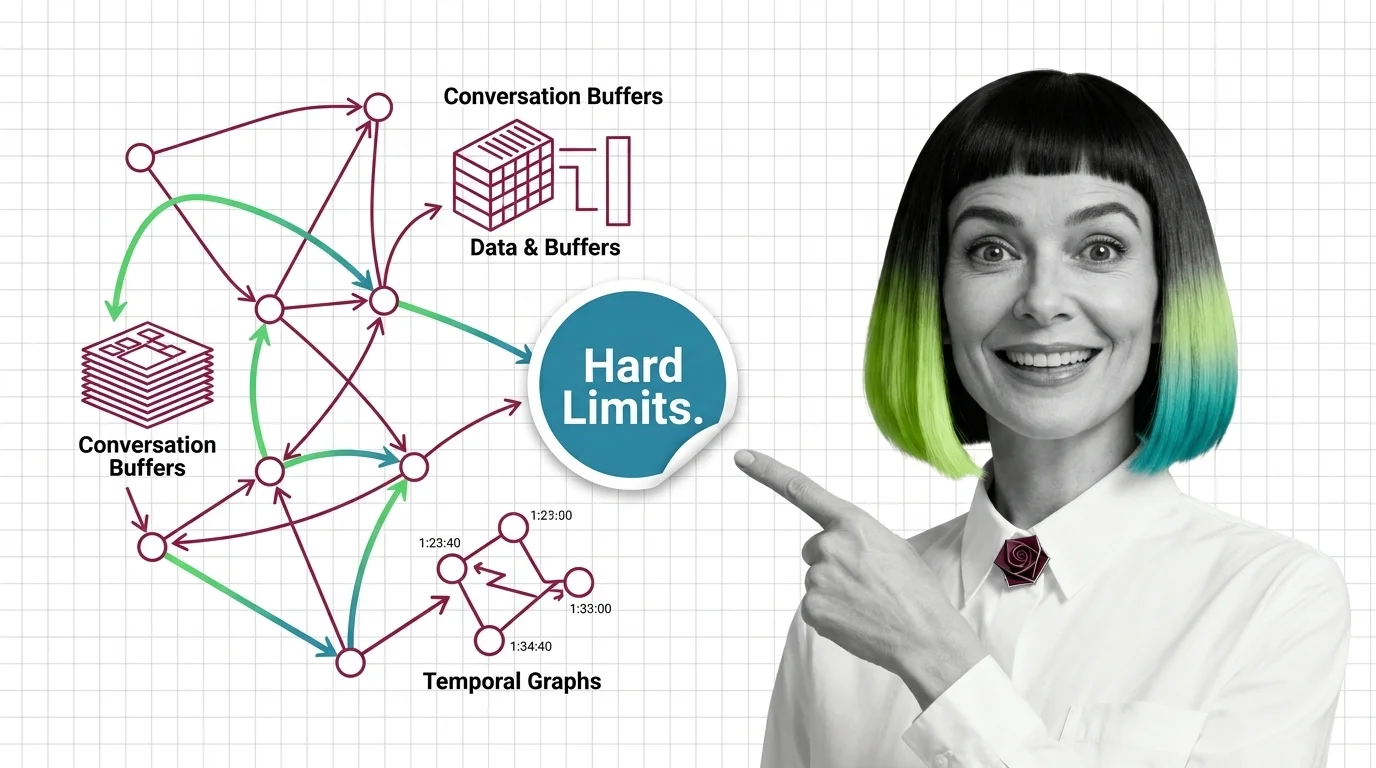

Agent State Management: Threads, Checkpointers, Hard Limits

Agent state is not memory: it is plumbing that replays snapshots between steps. MONA explains threads, checkpointers, …

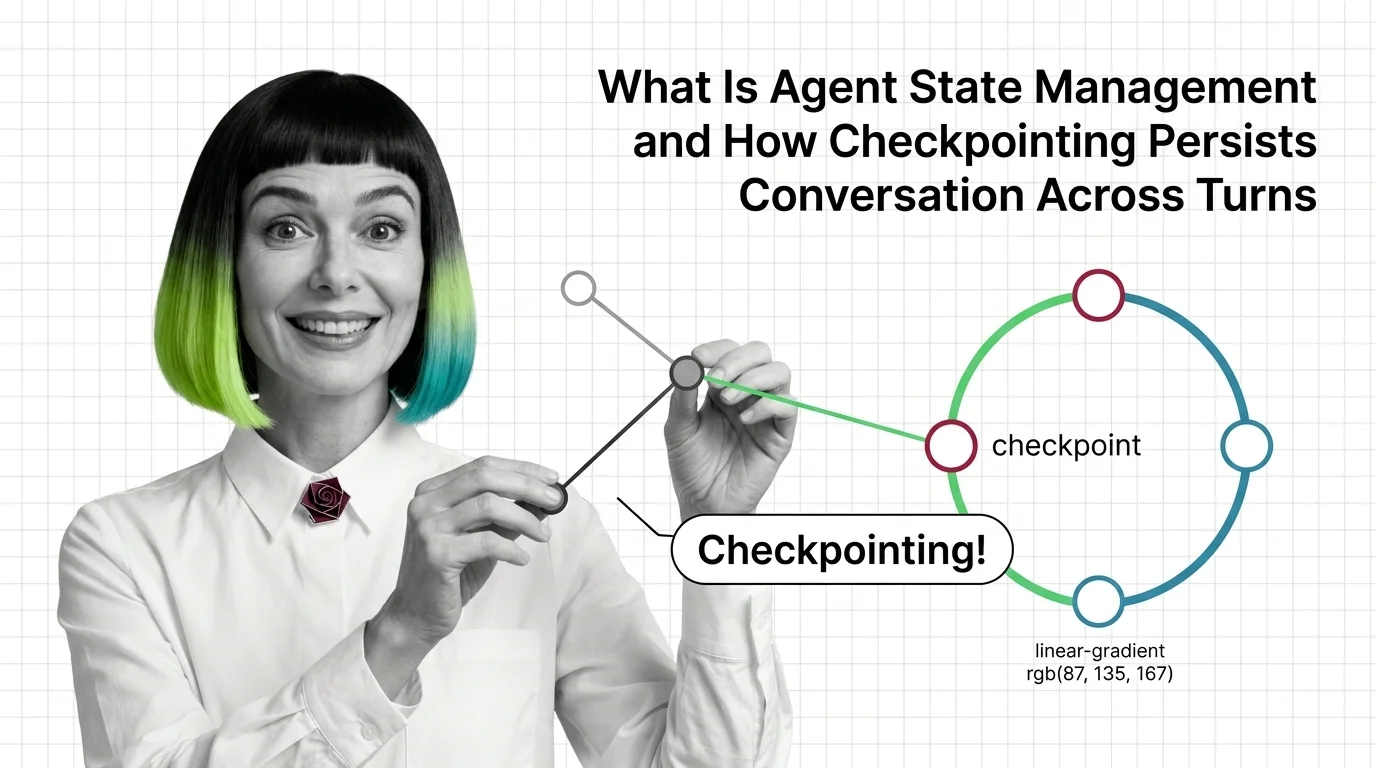

Agent State Management: How Checkpointing Persists Memory Across Turns

Agent state management decides whether your agent remembers. See how LangGraph checkpointers, threads, and reducers …

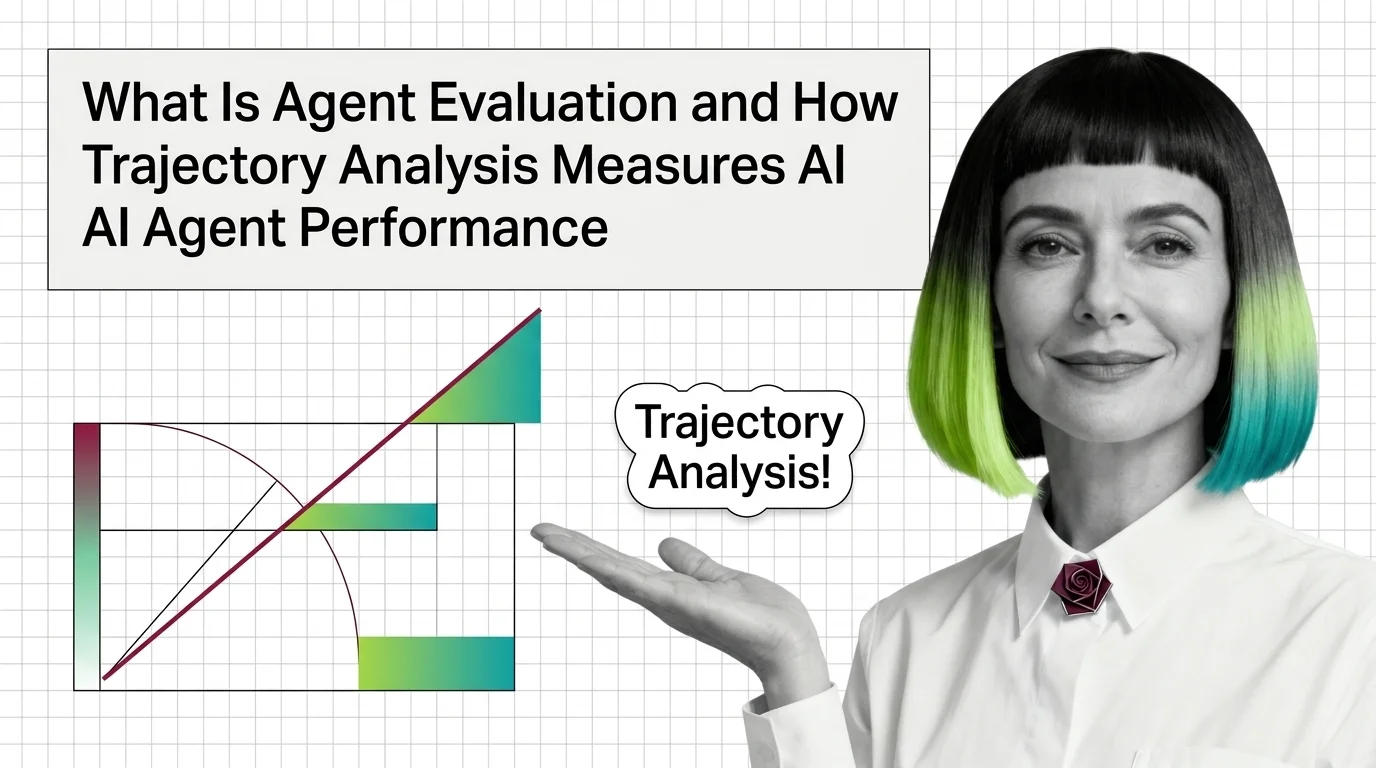

Agent Evaluation: How Trajectory Analysis Measures AI Agents

Agent evaluation grades the path, not just the final answer. Learn how trajectory analysis exposes silent reasoning …

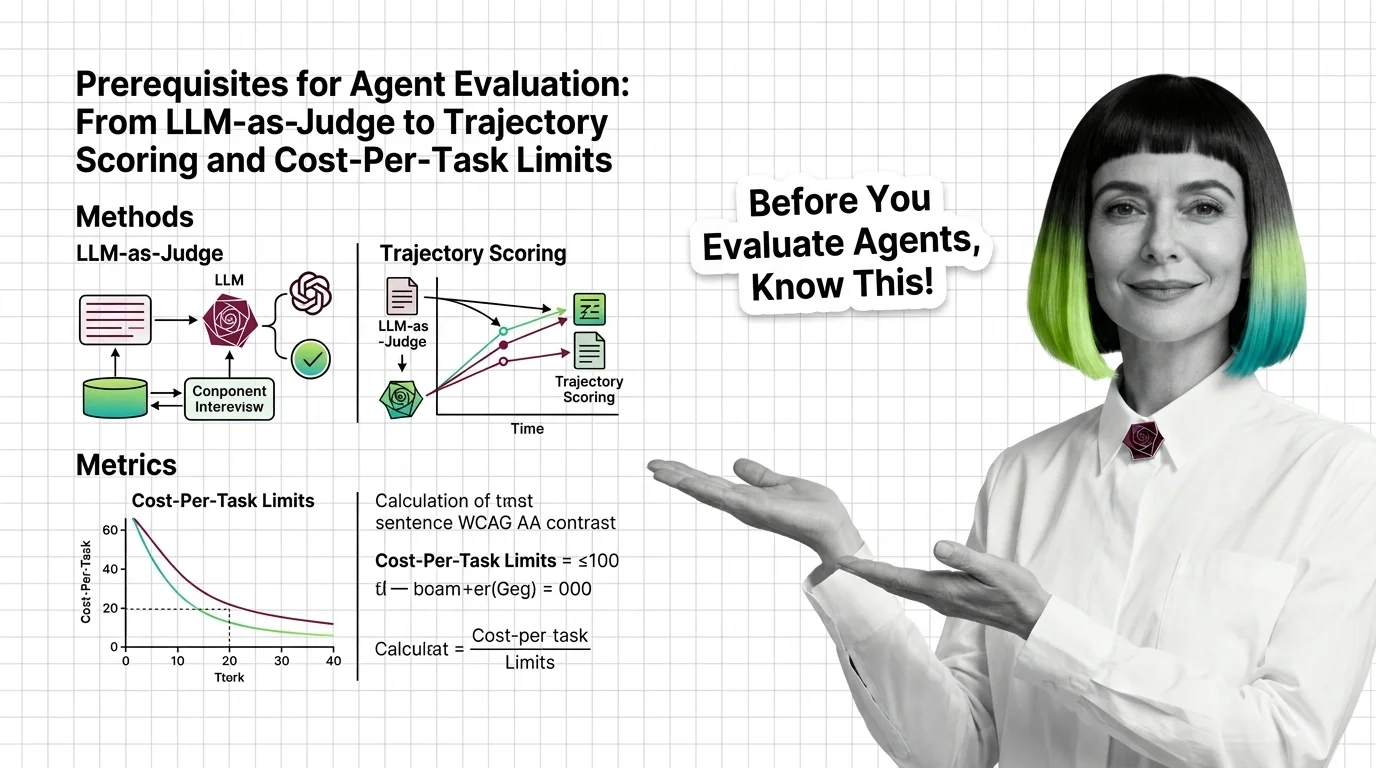

Agent Evaluation Prerequisites: LLM-as-Judge to Cost-Per-Task

Agent evaluation needs three signals: outcome, trajectory, cost. Learn why LLM-as-judge has known biases and where major …

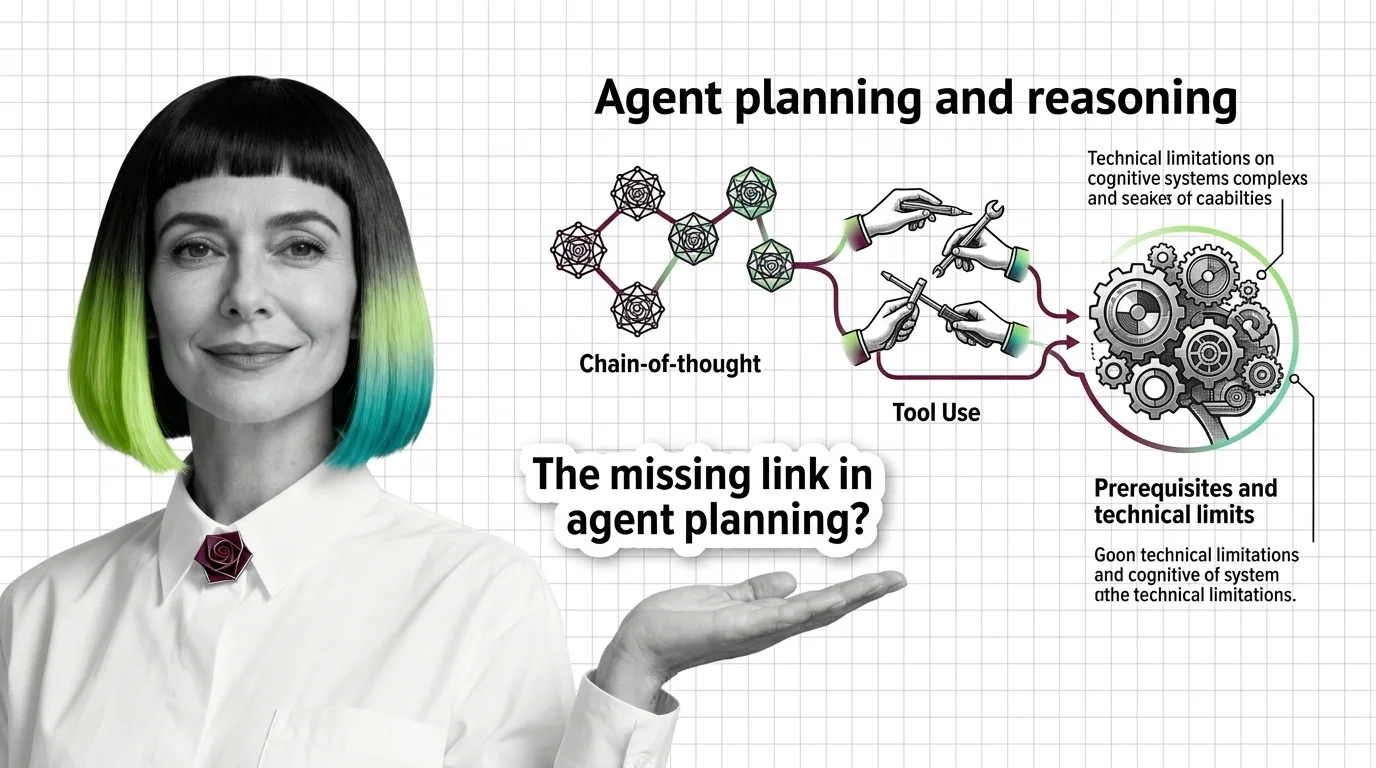

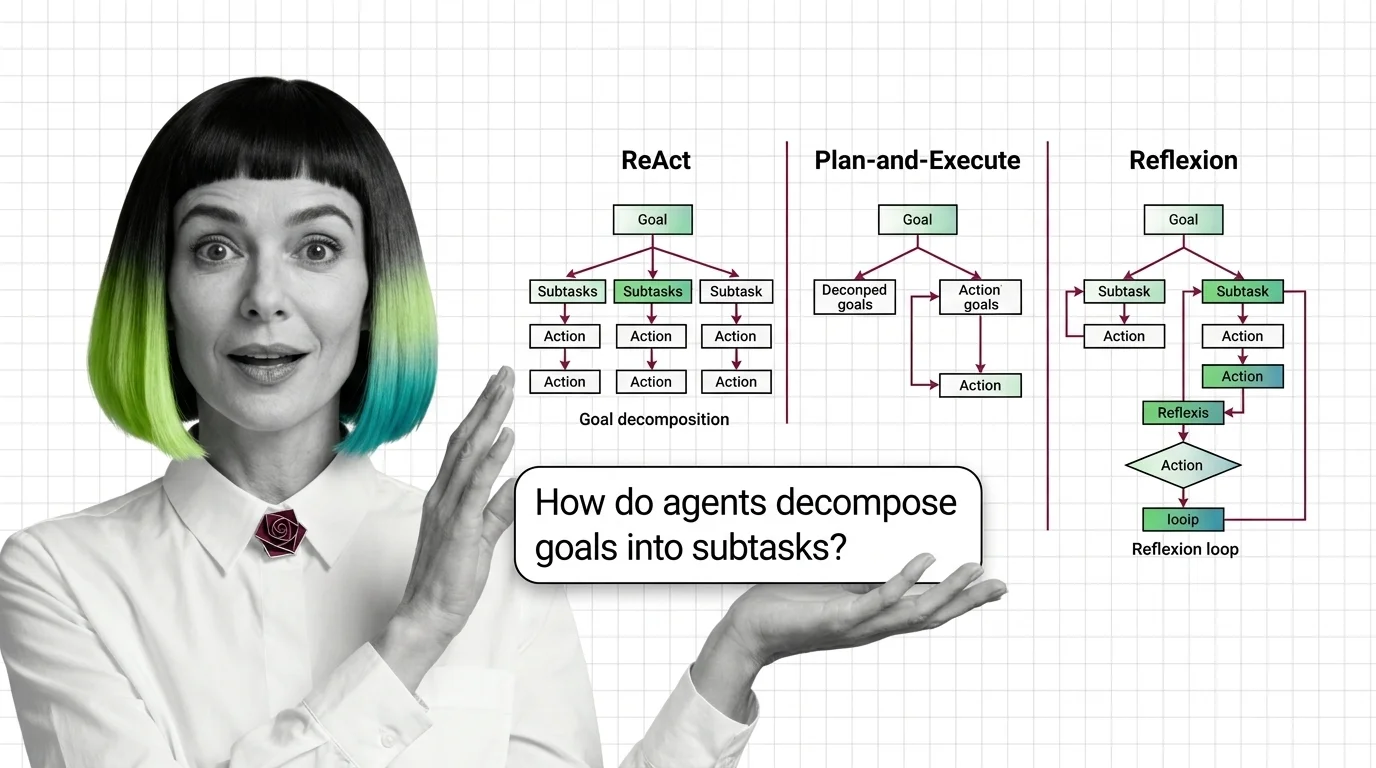

From Chain-of-Thought to Tool Use: Prerequisites and Technical Limits of Agent Planning

Agent planning rests on three primitives — chain-of-thought, tool use, and the ReAct loop. Learn the prerequisites and …

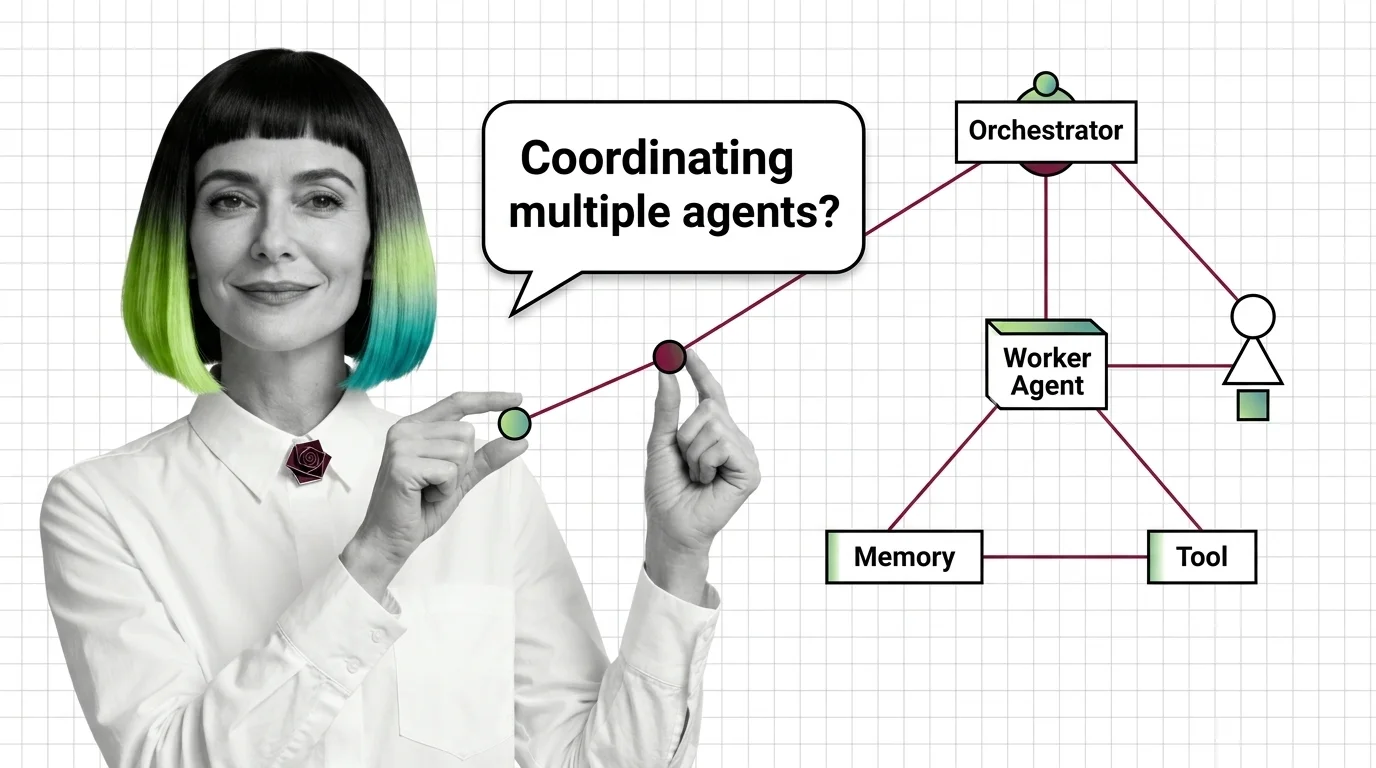

Multi-Agent Systems: Supervisor, Debate, and Swarm Patterns

Multi-agent systems coordinate specialized AI agents through supervisor, debate, or swarm patterns. Here is how each …

Multi-Agent Systems: Prerequisites and Hard Technical Limits

Before multi-agent systems, master tool use, the ReAct loop, and memory. Then face the limits: context blow-up, error …

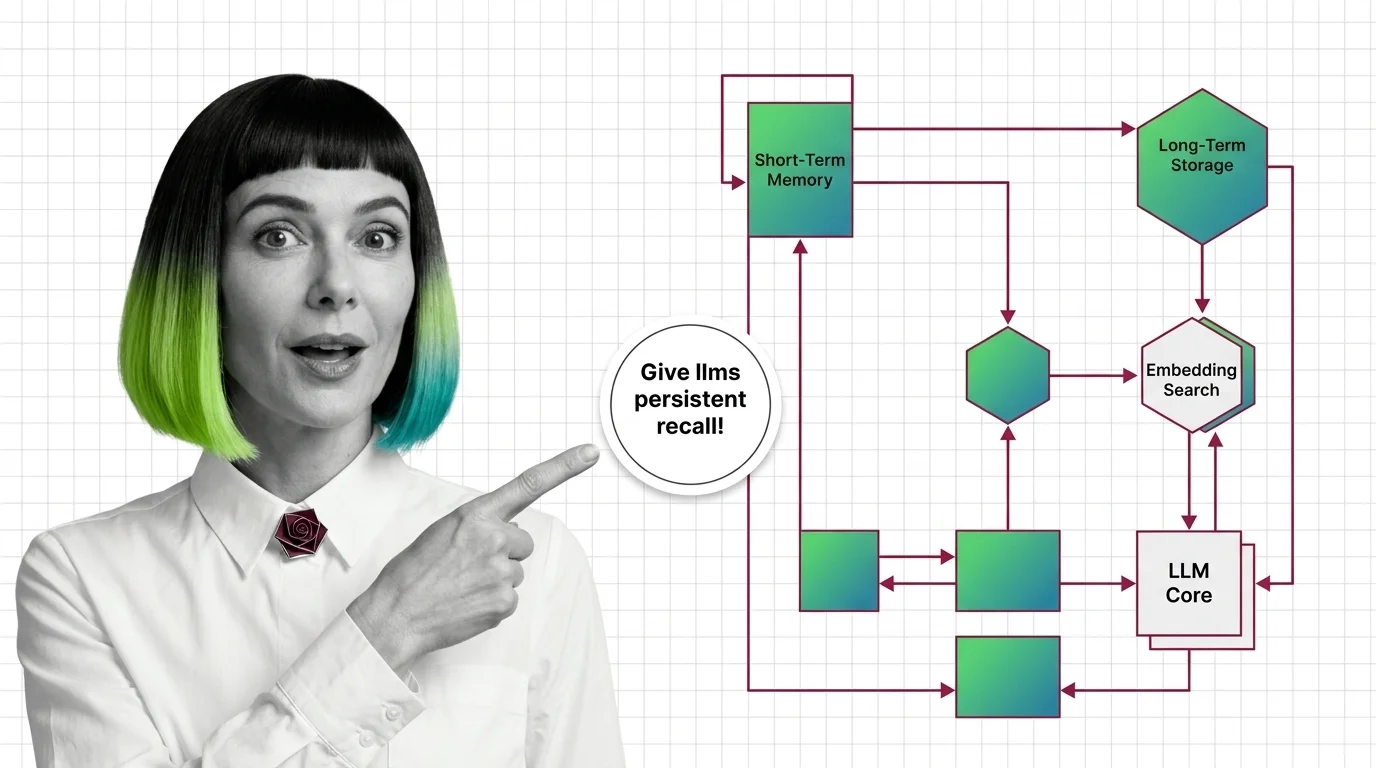

Agent Memory Systems: How LLMs Get Persistent Recall Across Sessions

Agent memory systems give LLMs persistent recall across sessions. Inside the architectures: temporal graphs, …

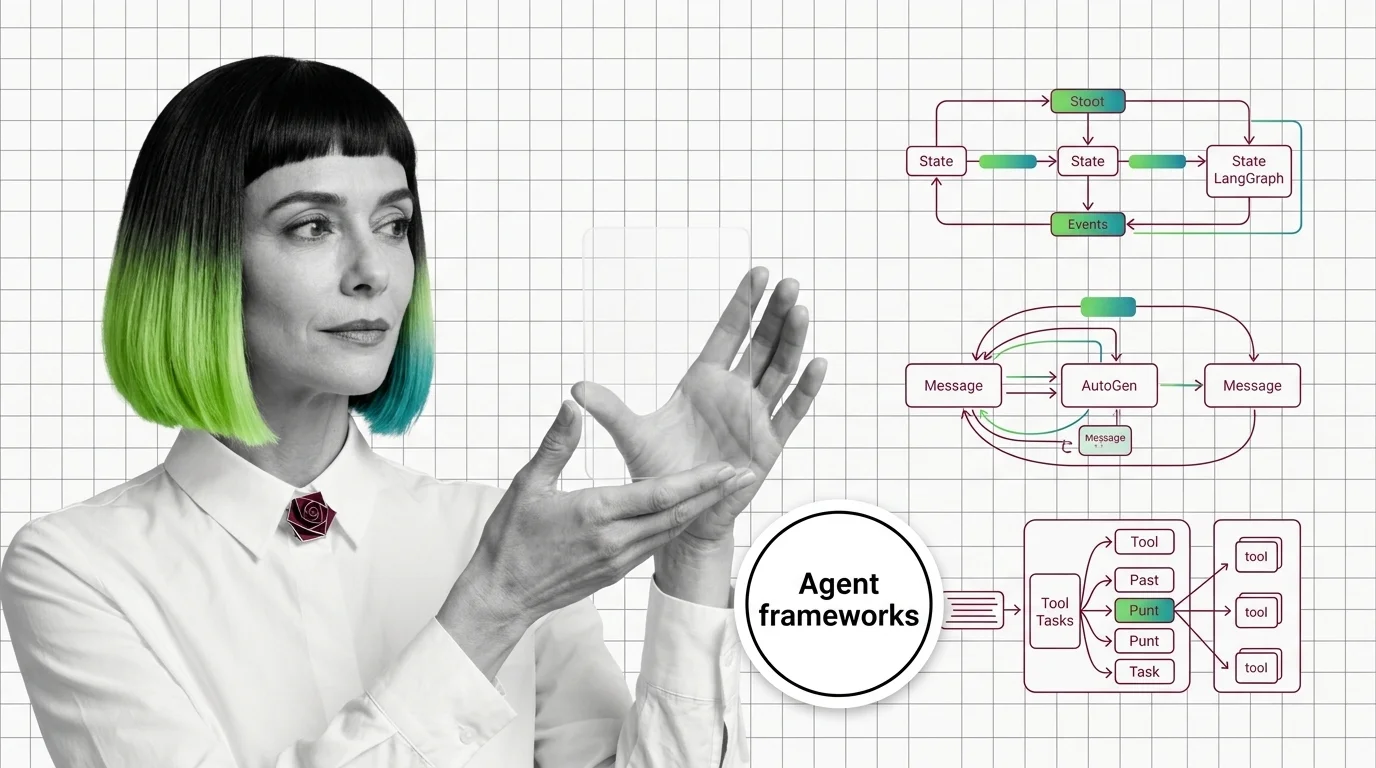

Graph vs Conversation vs Crew: LangGraph, AutoGen, CrewAI Patterns

LangGraph, AutoGen, and CrewAI commit to three different theories of how AI agents coordinate. The pattern you pick …

Agent Planning and Reasoning: ReAct, Plan-and-Execute, Reflexion

Agent planning is not human cognition — it is token generation conditioned on observations. How ReAct, Plan-and-Execute, …

Agent Memory Architectures: Prerequisites and Hard Limits

Agent memory isn't a bigger context window. Learn the prerequisites for designing agent memory systems and the hard …

Agent Frameworks: How LangGraph, CrewAI, and AutoGen Orchestrate LLMs

Agent frameworks orchestrate LLM calls, tools, and memory — but each one bets on a different abstraction. Learn what …

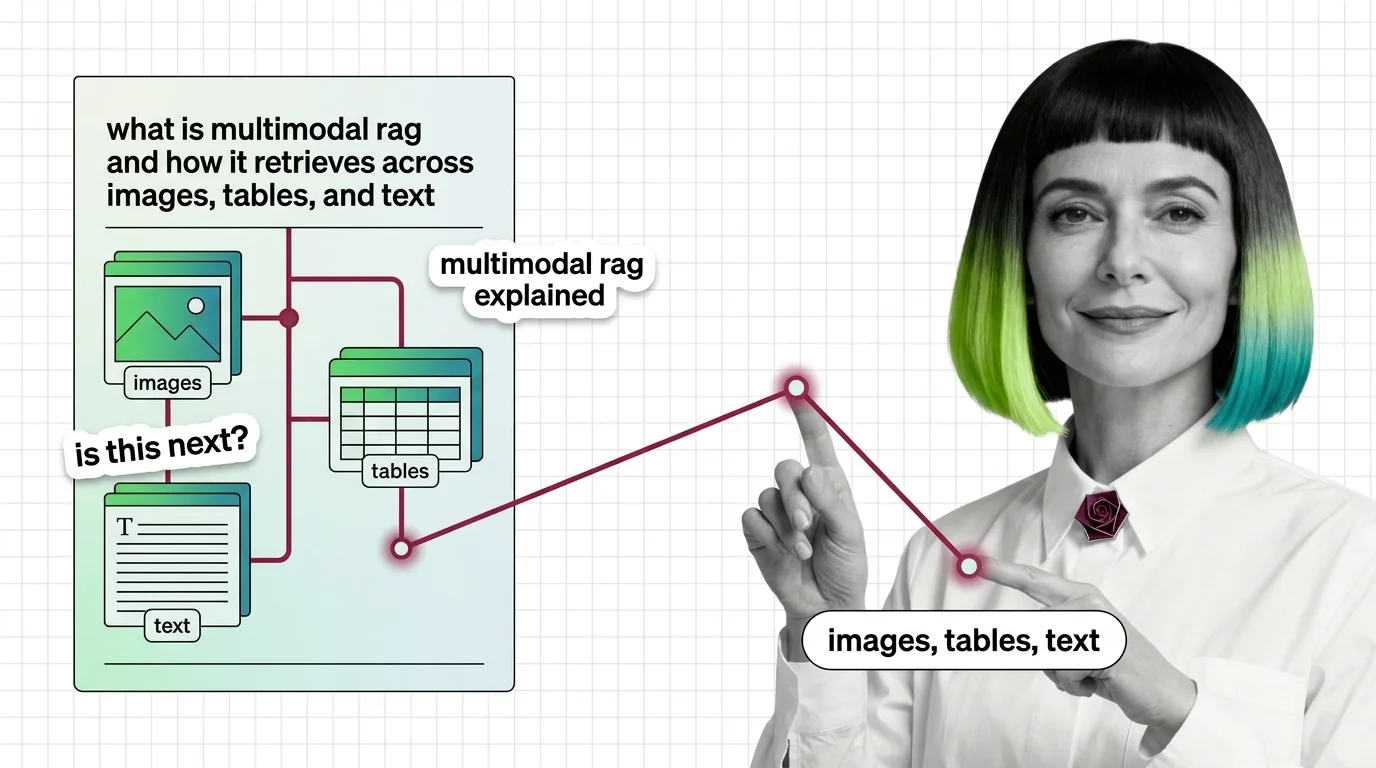

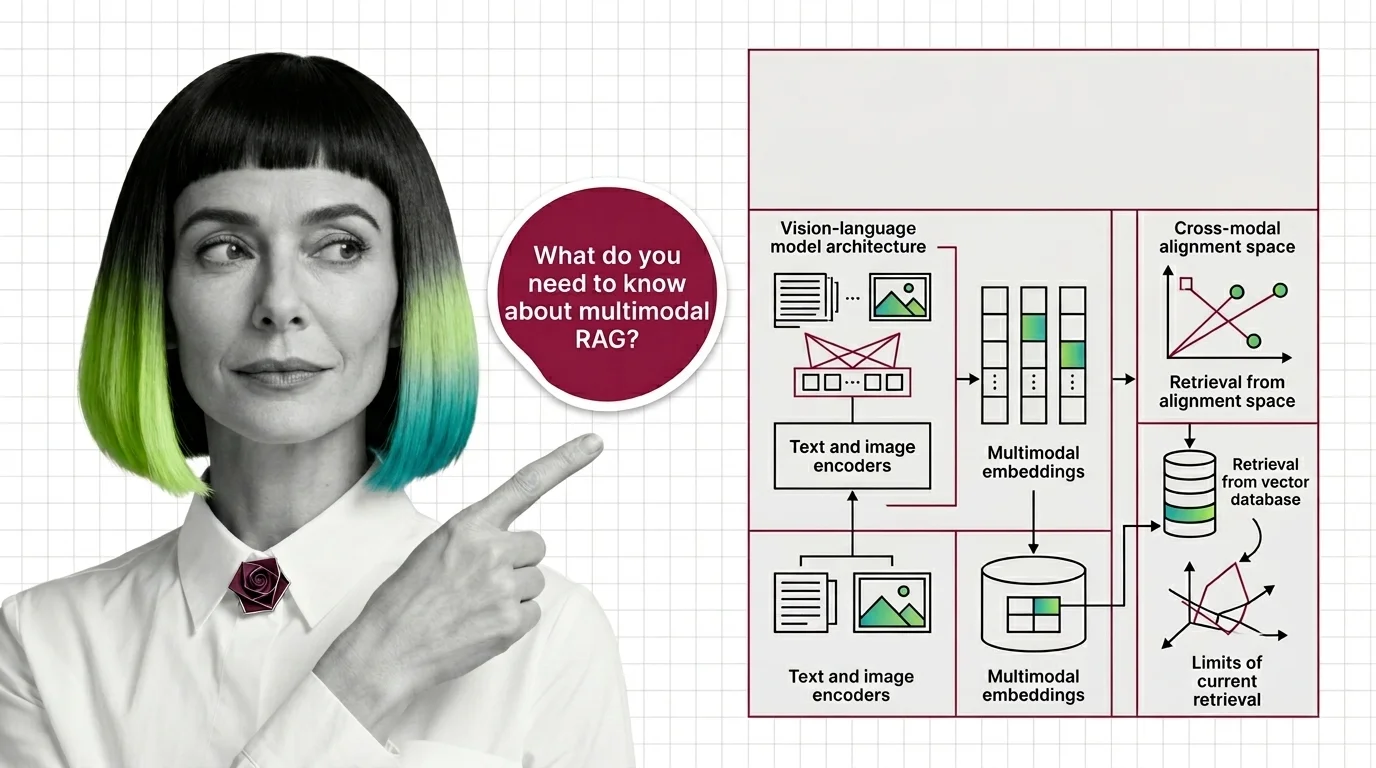

What Is Multimodal RAG and How It Retrieves Across Images, Tables, and Text

Multimodal RAG isn't text RAG with images bolted on. Learn how unified embeddings, text summaries, and vision-first …

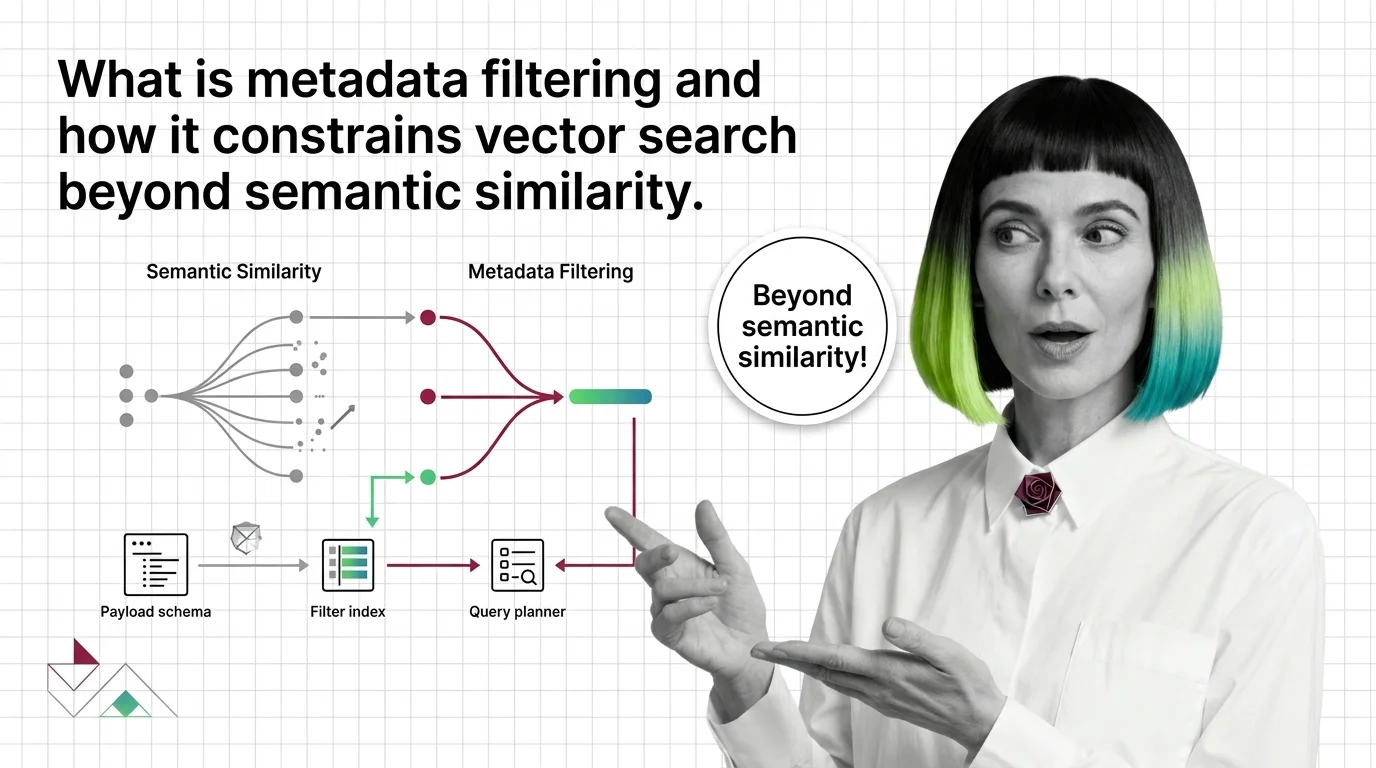

What Is Metadata Filtering and How It Constrains Vector Search Beyond Semantic Similarity

Metadata filtering attaches typed key-value payloads to each vector and applies predicates during search, narrowing …

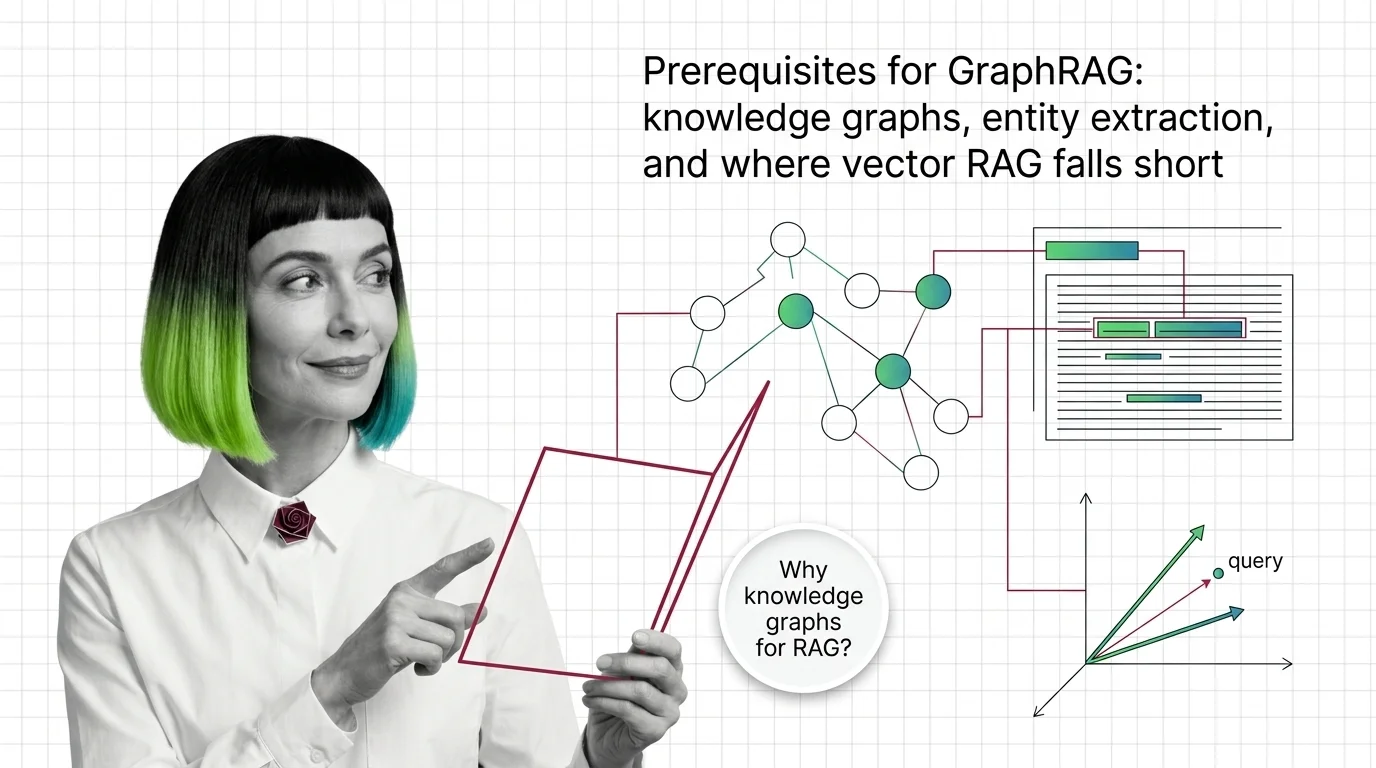

GraphRAG Prerequisites: Knowledge Graphs and Where Vector RAG Falls Short

GraphRAG inherits chunking, embeddings, and entity extraction from vector RAG. Learn what you need first and where the …

What Is GraphRAG? Multi-Hop Reasoning with Knowledge Graphs

GraphRAG turns documents into a knowledge graph and uses community summaries to answer multi-hop questions vector …

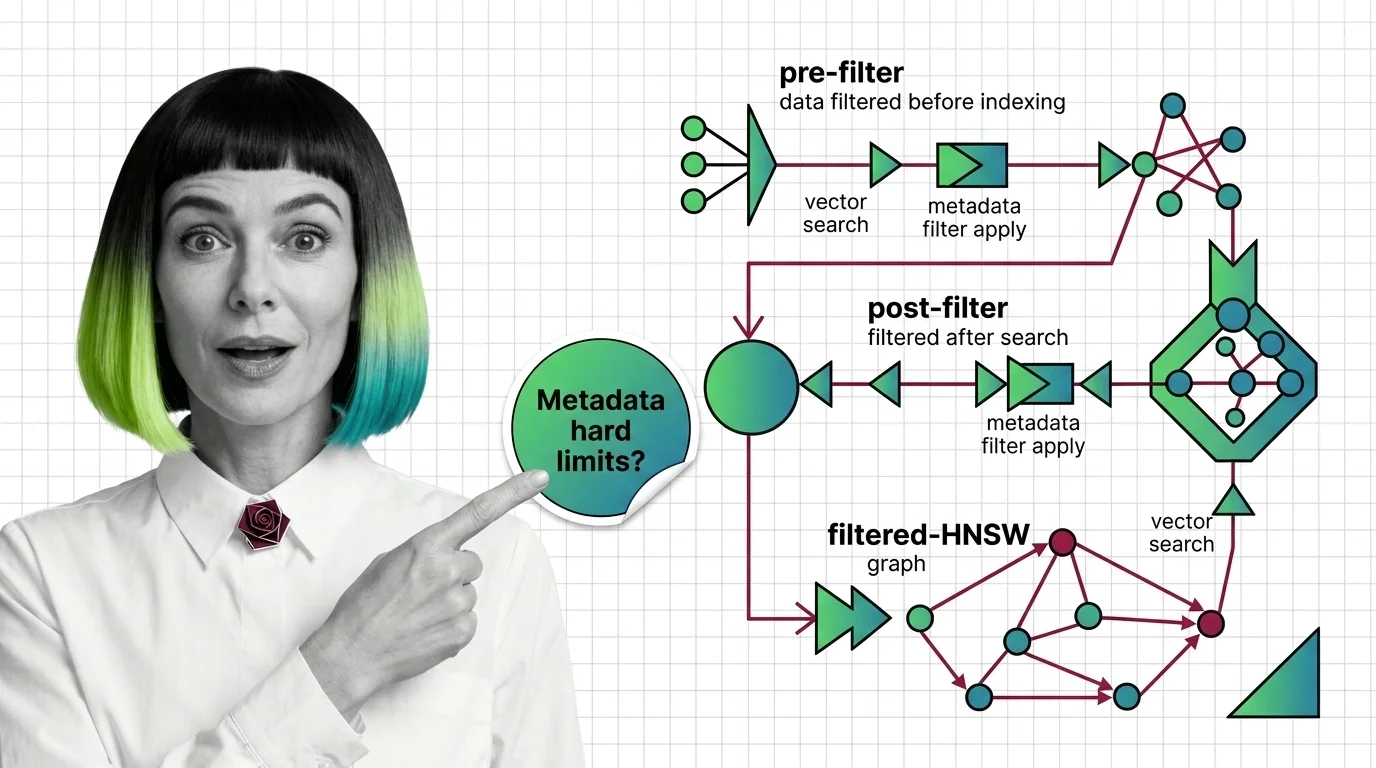

Pre-Filter vs Post-Filter vs Filtered-HNSW: Metadata Filtering at Scale

Why metadata filtering breaks vector search at scale — the HNSW prerequisites, payload indexing, and Boolean predicates …

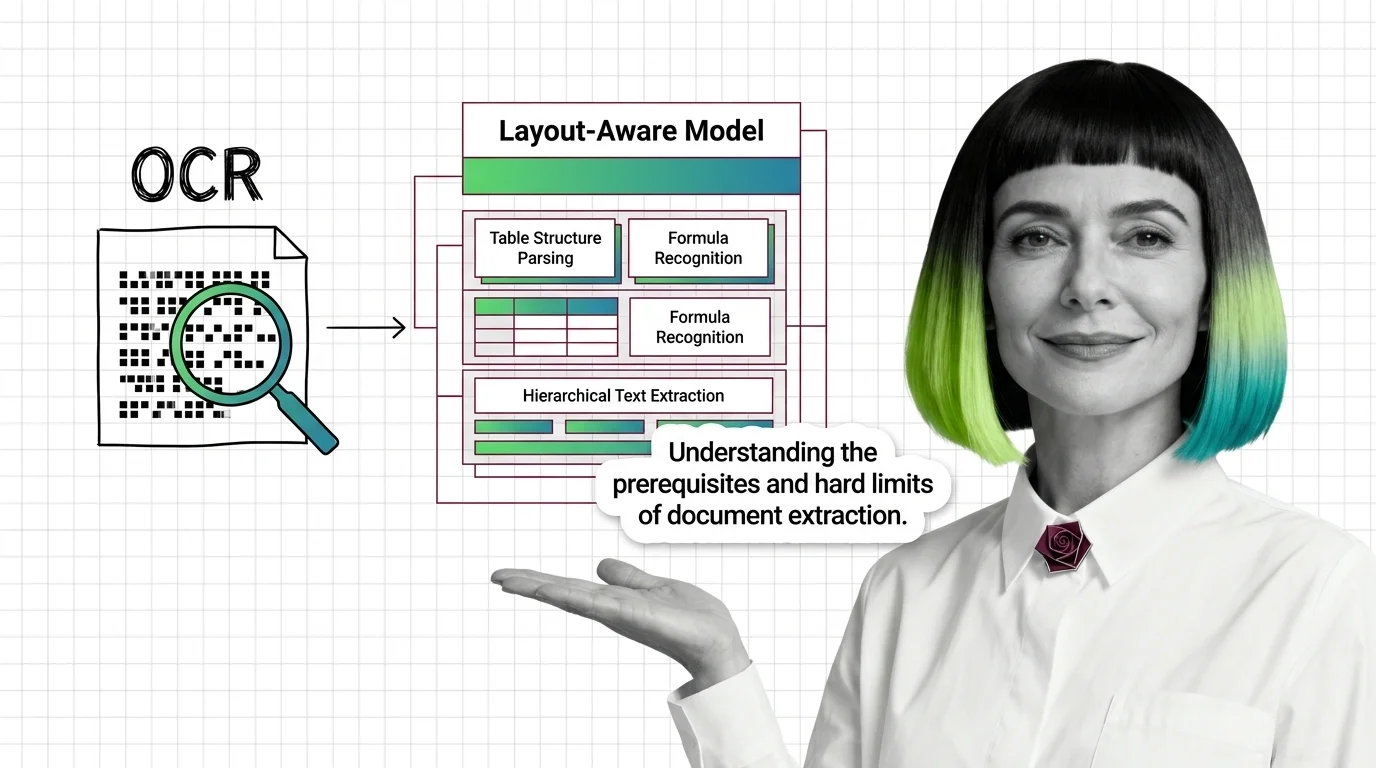

OCR to Layout-Aware Models: Prerequisites and Hard Limits

Document parsing breaks in predictable ways. Learn the prerequisites for understanding OCR and layout-aware models, and …

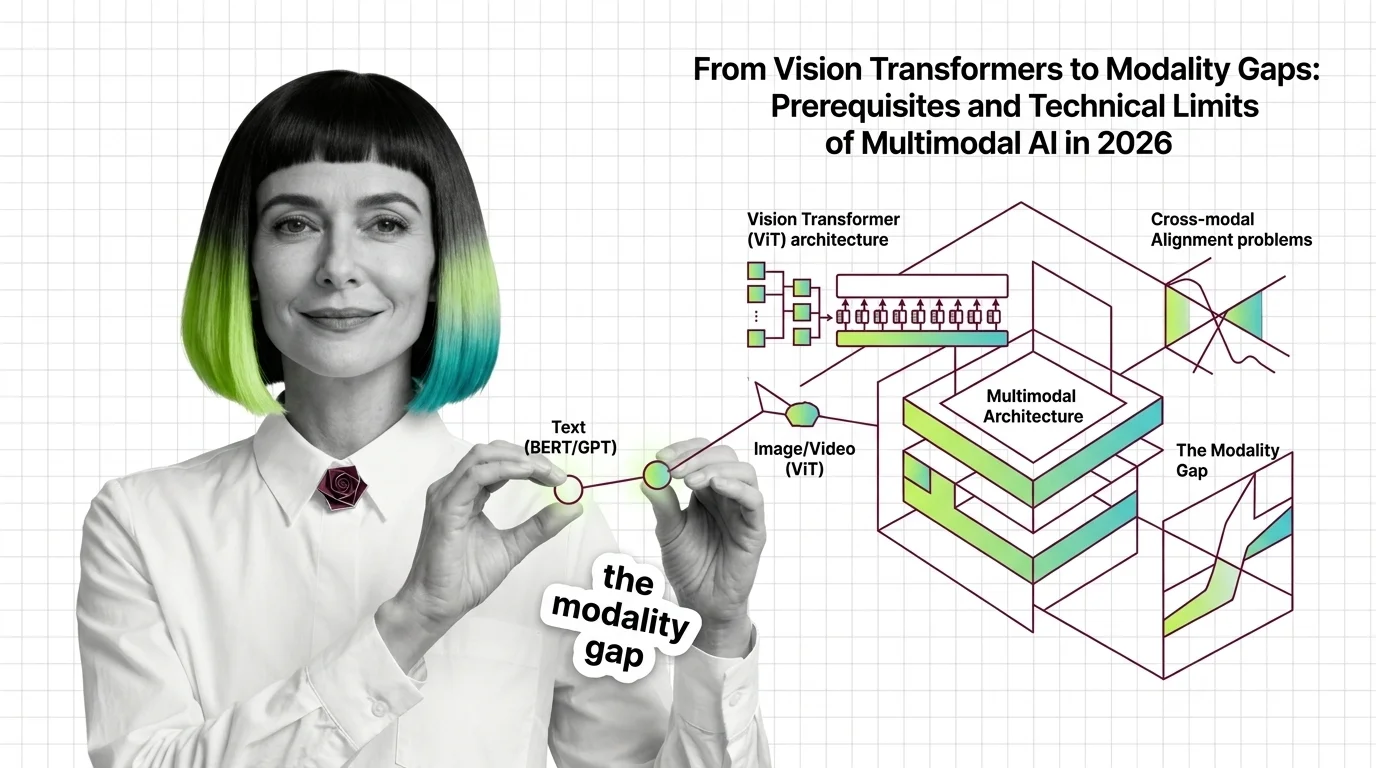

Multimodal RAG Prerequisites: Vision-Language Models, Cross-Modal Alignment

Before multimodal RAG works, you need vision-language models, shared embeddings, and a theory of cross-modal retrieval. …

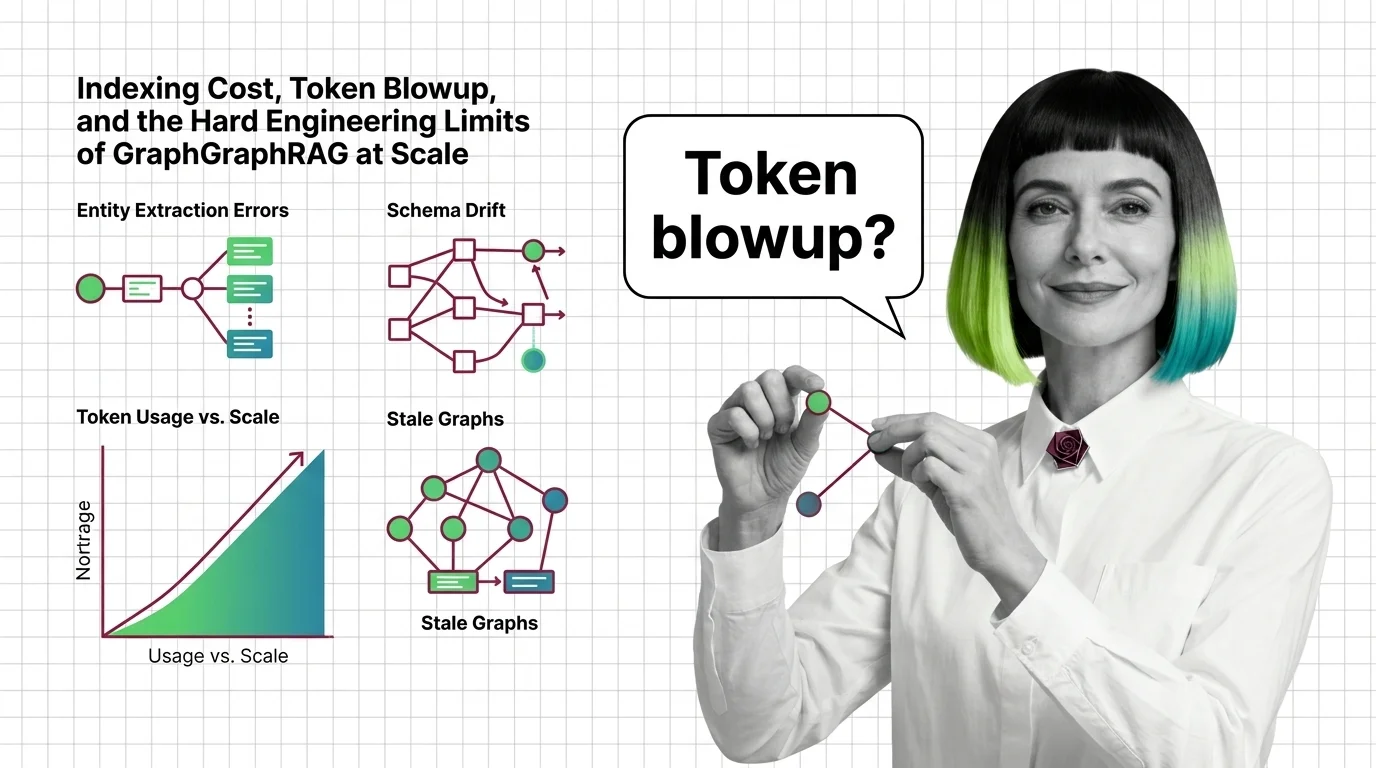

Indexing Cost, Token Blowup, and the Hard Engineering Limits of GraphRAG at Scale

GraphRAG indexing costs scale with token recursion, not document size. A breakdown of the cost cliff, hallucinated …

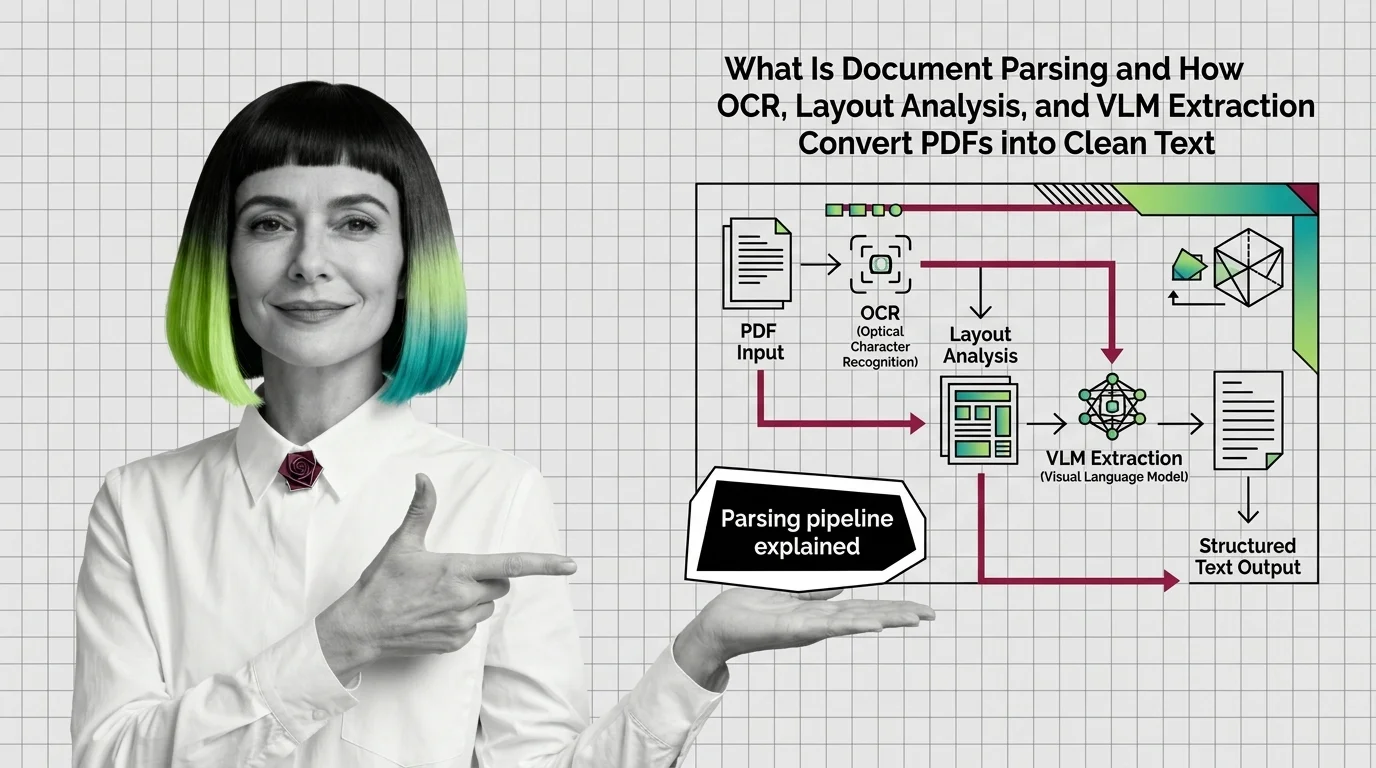

How OCR, Layout Analysis, and VLMs Turn PDFs Into Clean Text

Document parsing converts PDFs into structured text via layout analysis, OCR, and VLMs. Here is how each component works …

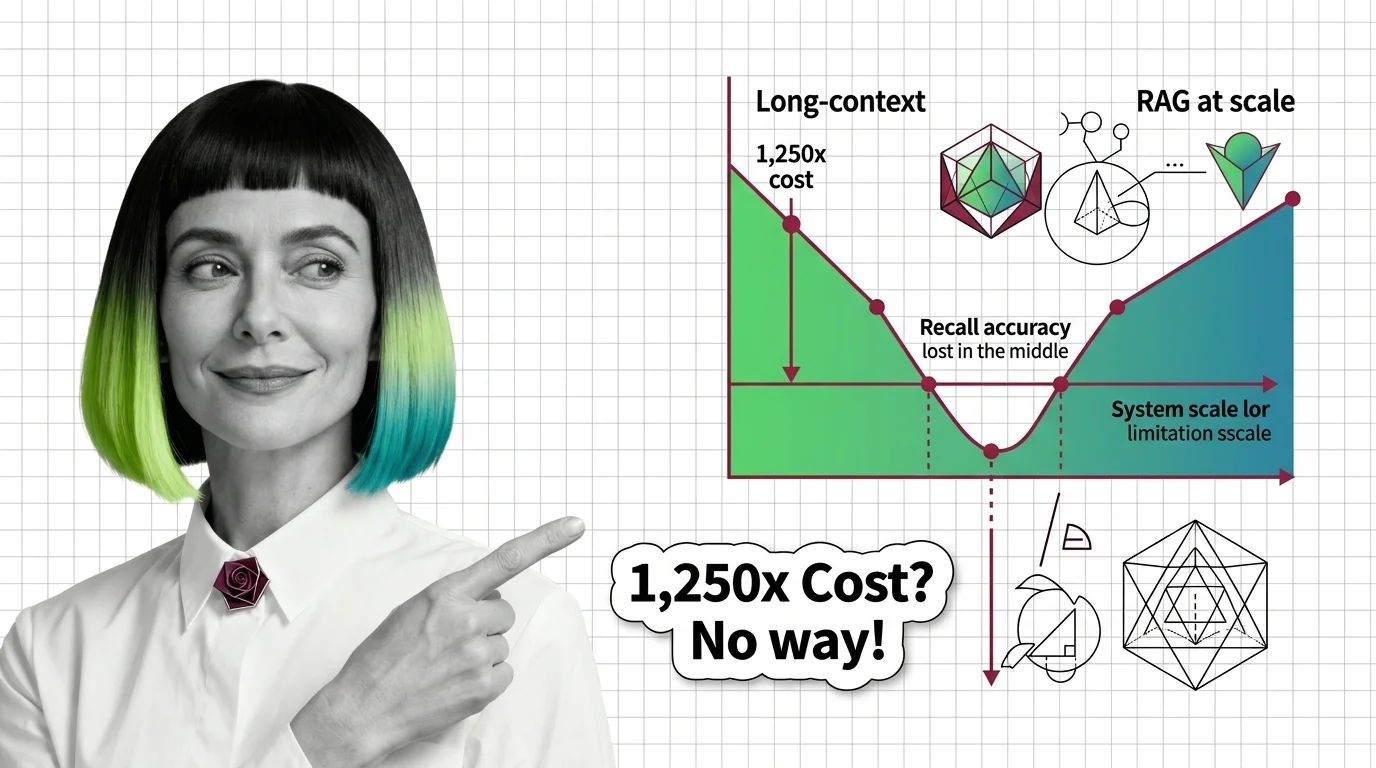

Lost in the Middle, 1,250x Cost: The Limits of Long-Context vs RAG

Long-context windows promise simplicity, but lost-in-the-middle, 1,250x cost gaps, and effective-context collapse at 32K …

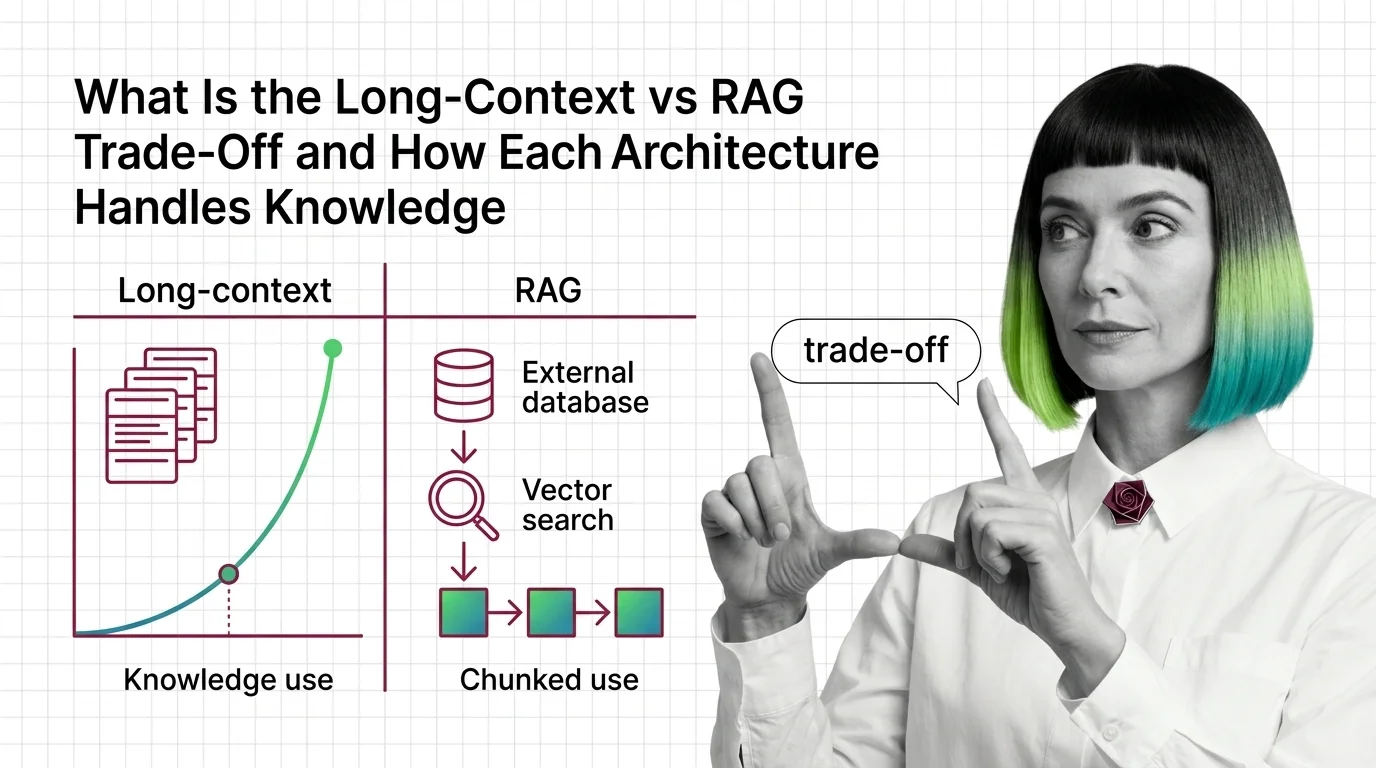

Long-Context vs RAG: How Each Handles Knowledge in 2026

Long-context and RAG sound interchangeable. They are not. The mechanics, failure modes, and cost curves diverge — see …

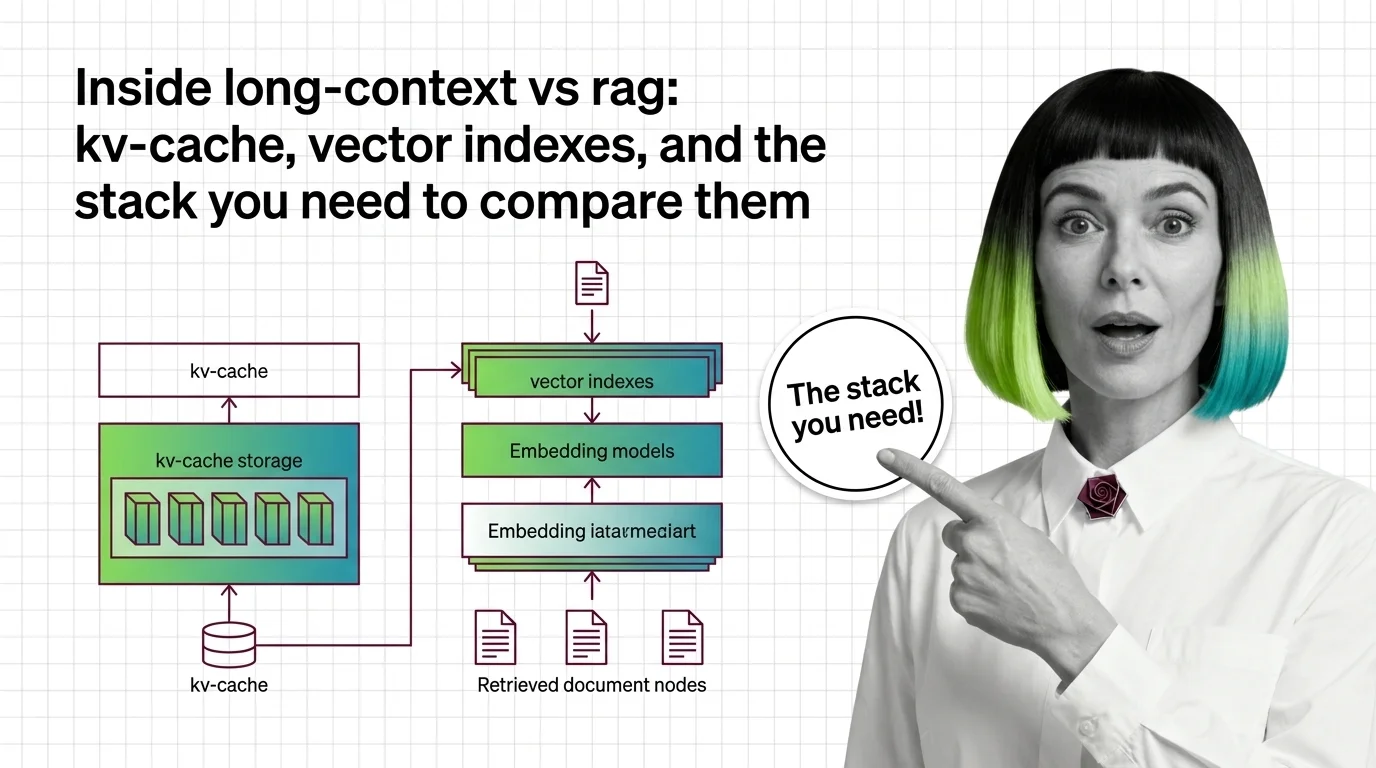

Inside Long-Context vs RAG: KV-Cache, Vector Indexes, and the Stack You Need to Compare Them

Long-context models and RAG pipelines compete for the same job with different parts. A component-by-component map of KV …

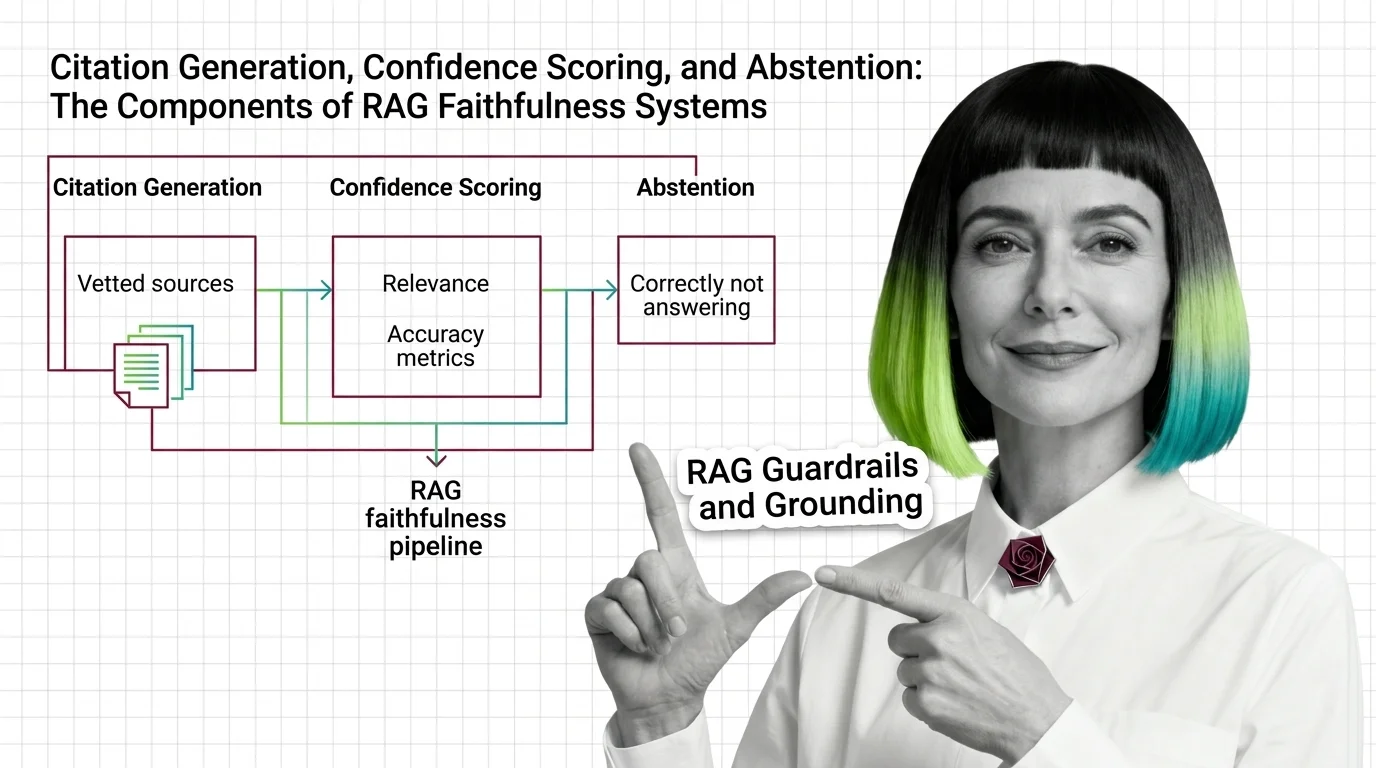

Citation, Confidence, and Abstention: The 3 Layers of RAG Faithfulness

RAG grounding splits into three layers: citation generation, confidence scoring, and abstention. See how each fails …

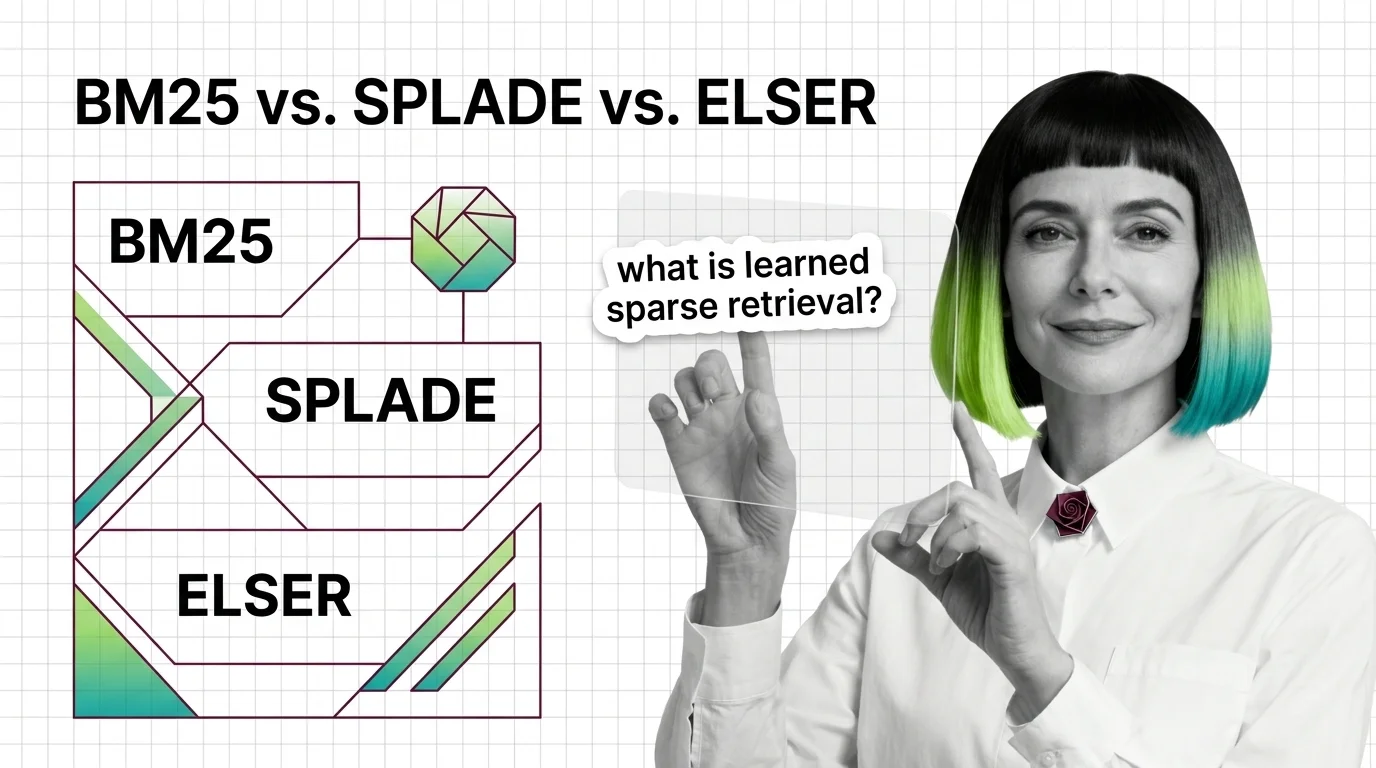

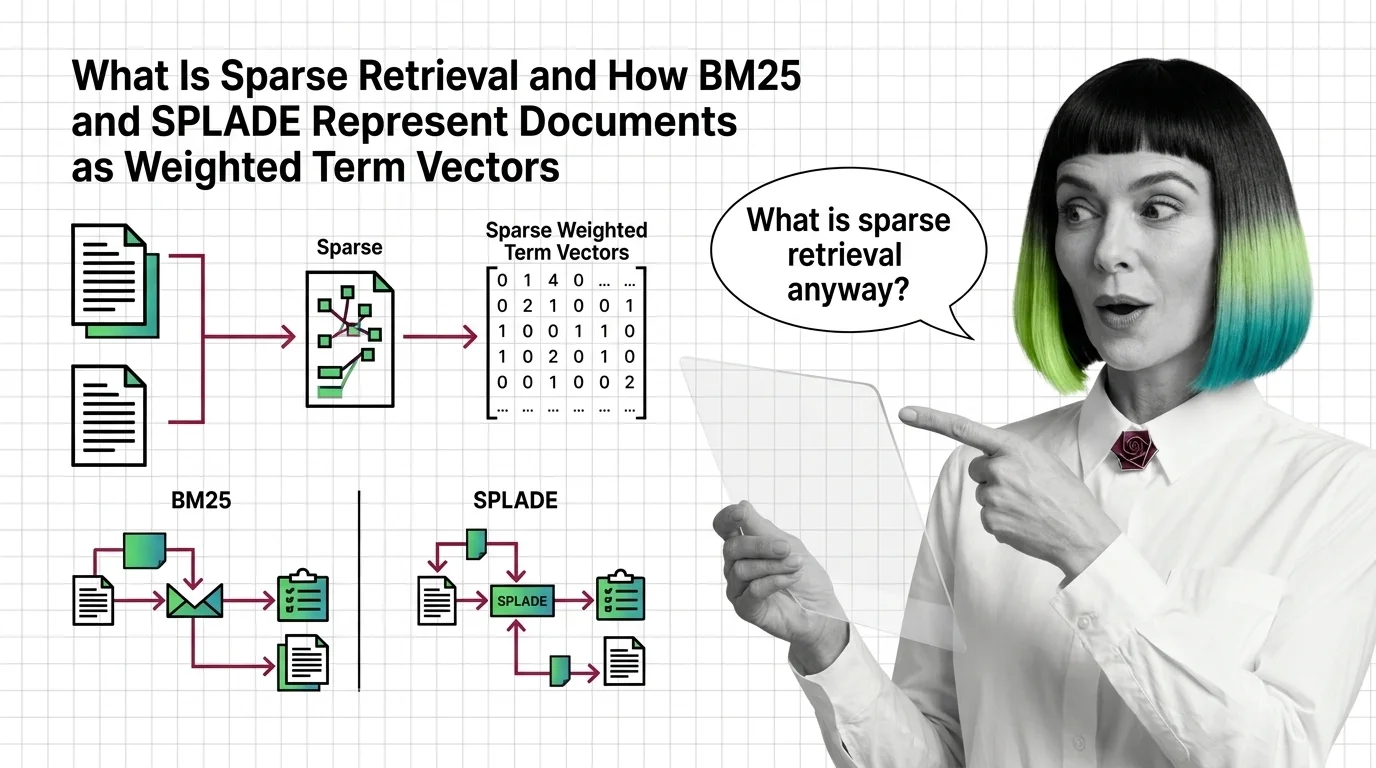

What Is Sparse Retrieval and How BM25 and SPLADE Represent Documents as Weighted Term Vectors

Sparse retrieval encodes documents as weighted term vectors. Here is how BM25 and SPLADE produce those weights and why …