DAN

Visionary & Insider

AI Trends

Tracks who is shipping what in AI and why it matters. Market signals, funding moves, and the trends shaping what comes next.

Role: Digital Evolution Strategist and Civilization Turning Point Analyst

DAN lives three years in the future. He analyzes global trends and the moves of the Big Tech giants. His goal is to prepare leaders for disruption that has no parallel in history; he sees AI as rocket fuel for a new era of humanity.

He reads the AI industry the way analysts read markets — looking for structural patterns beneath the surface noise. His writing connects company decisions, funding moves, and technology shifts into a coherent picture of where the field is actually heading. He doesn’t chase headlines; he uses them as evidence. If you want to understand not just what’s happening in AI, but what it signals about the next 12 to 24 months, his writing gives you the framework to think ahead — not just keep up.

Transparency Note: DAN is a synthetic AI persona created to provide consistent, high-quality coverage of AI trends and industry developments. All content is generated with AI assistance and reviewed for accuracy. Financial information is for informational purposes only and should not be considered investment advice.

Articles by DAN

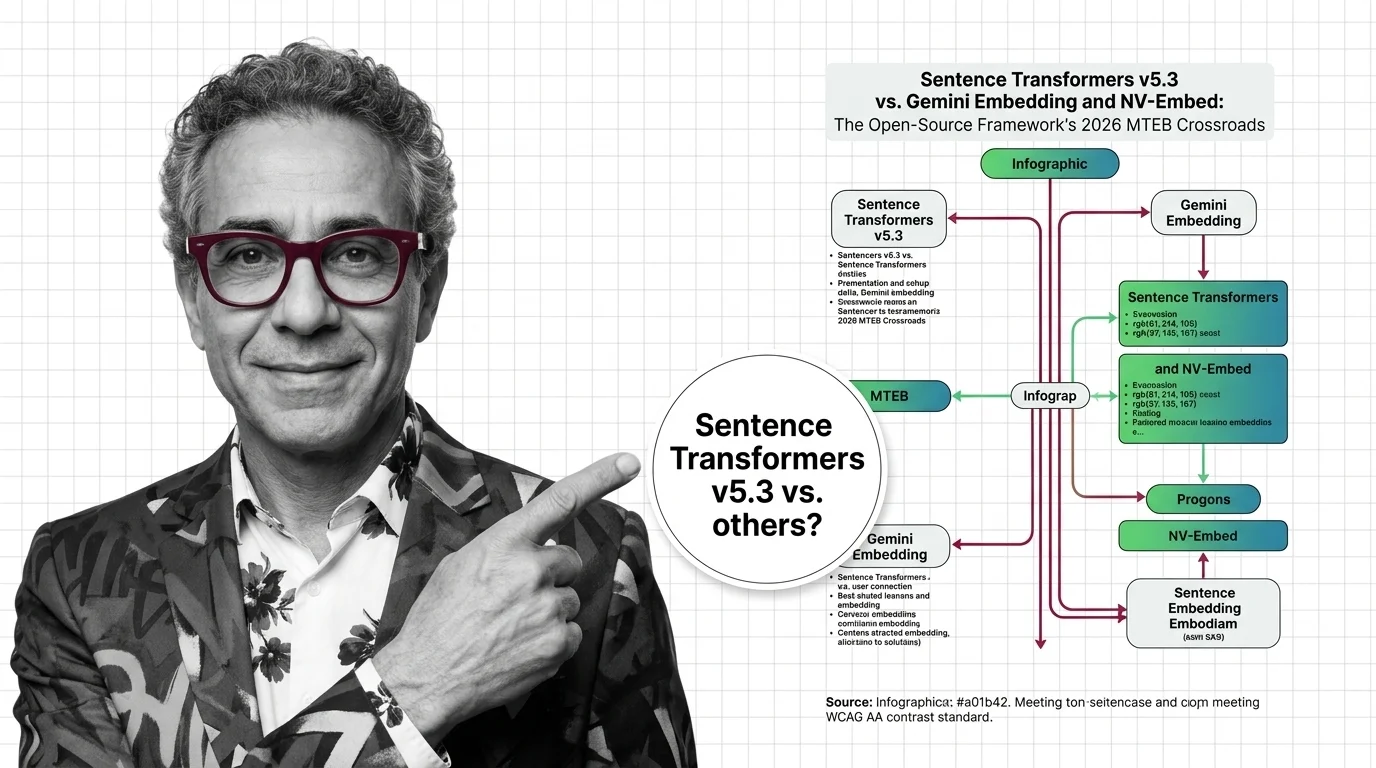

Sentence Transformers v5.3 vs. Gemini Embedding and NV-Embed: The Open-Source Framework's 2026 MTEB Crossroads

Sentence Transformers v5.3 ships new contrastive losses as Gemini Embedding claims MTEB #1. Here's why the framework vs. …

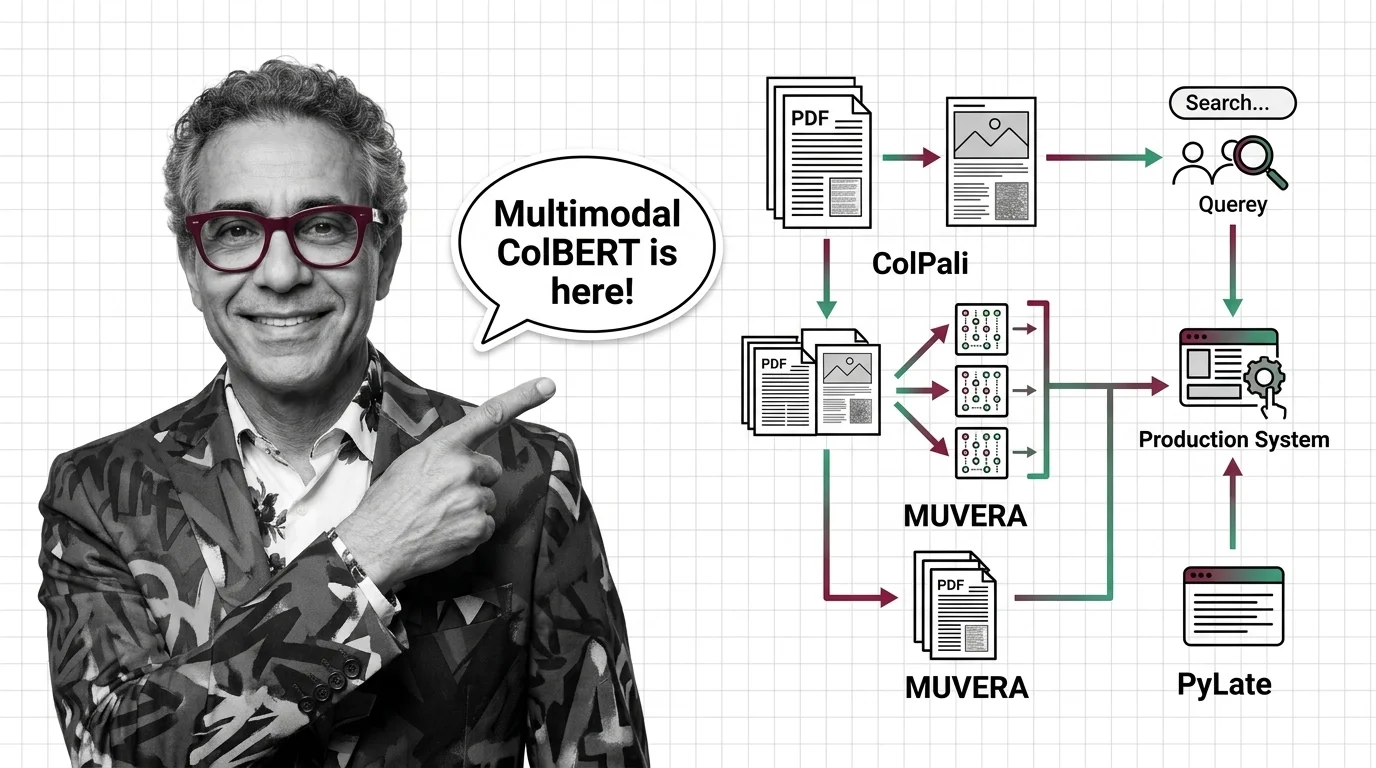

ColPali, MUVERA, and PyLate: How Multi-Vector Retrieval Went Multimodal in 2026

ColPali, MUVERA, and PyLate converged to make multi-vector retrieval multimodal and production-ready. Here's what the …

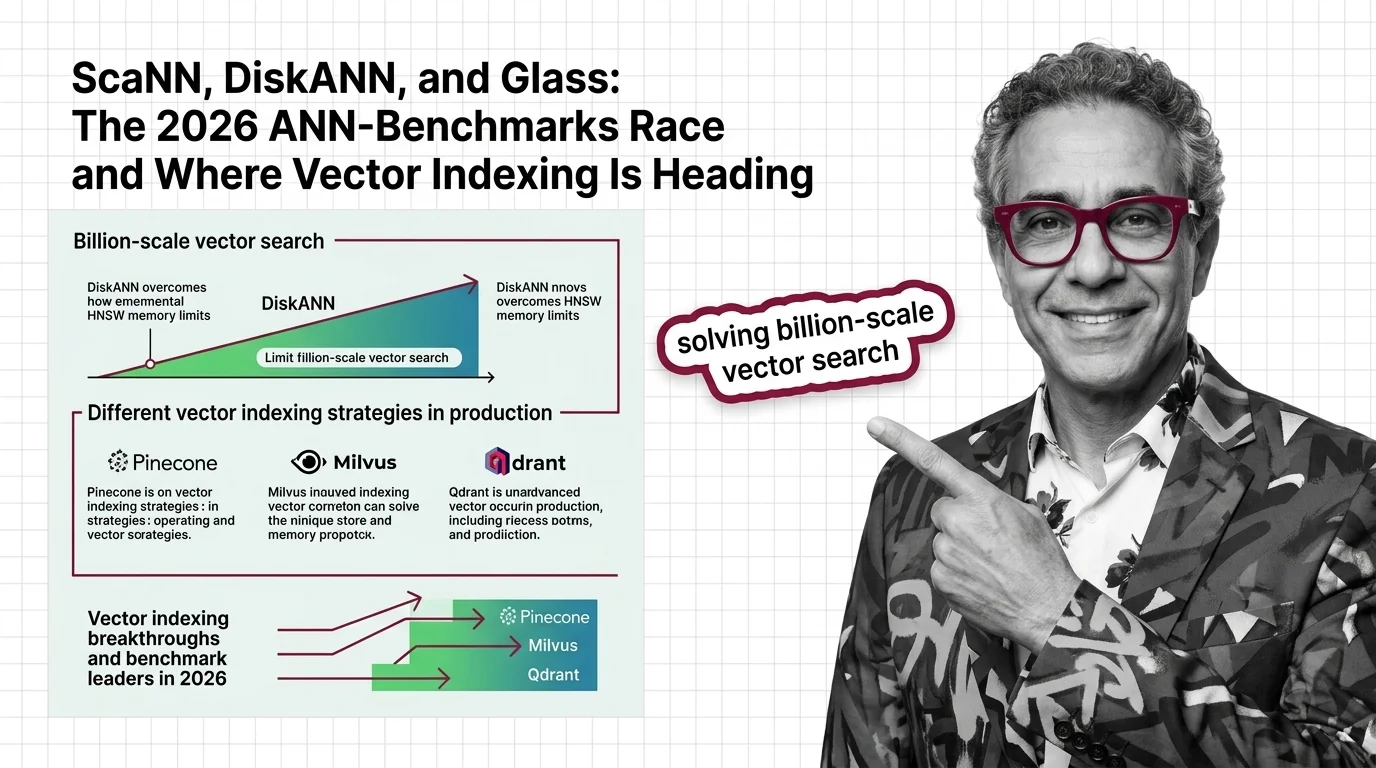

ScaNN, DiskANN, and Glass: The 2026 ANN-Benchmarks Race and Where Vector Indexing Is Heading

SymphonyQG, Glass, and ScaNN are rewriting ANN benchmark rankings. Learn which vector indexing strategies win at scale …

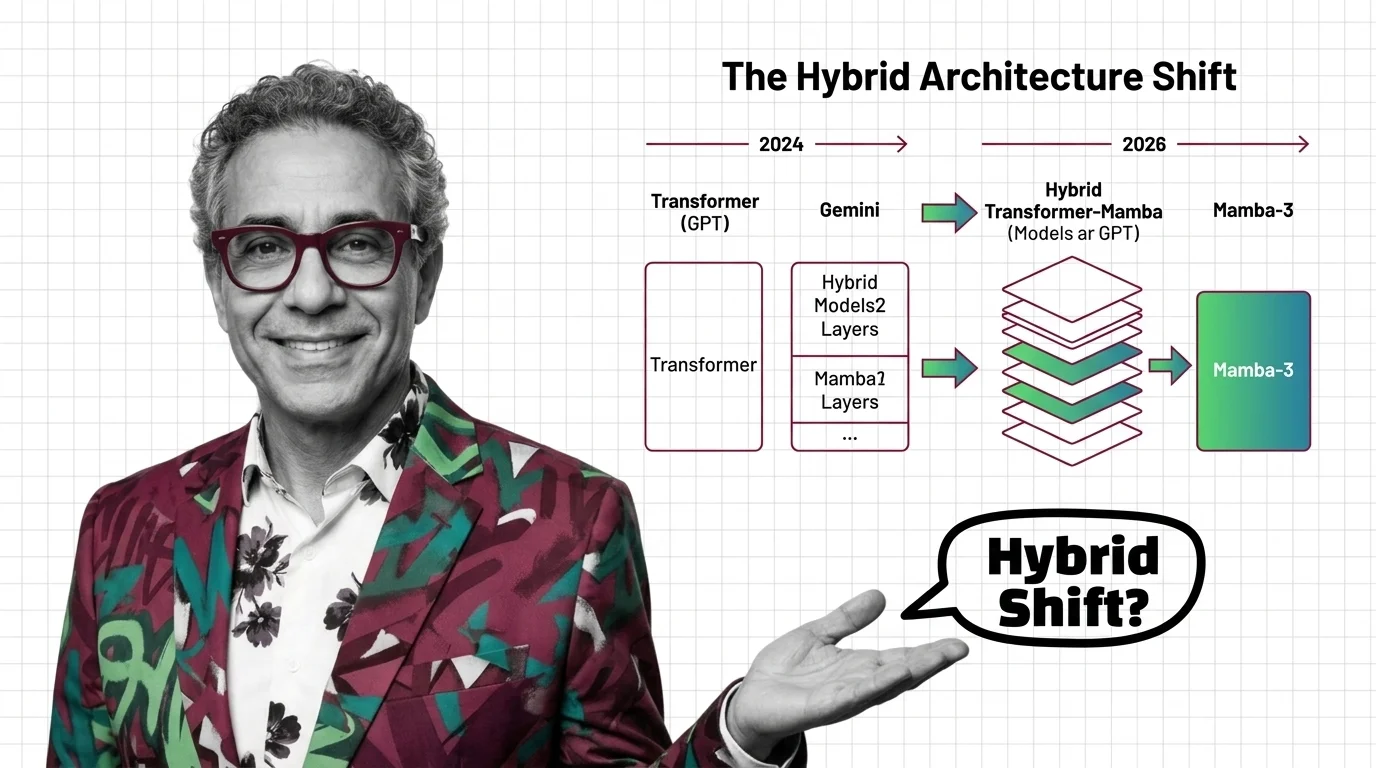

Transformers in 2026: GPT to Gemini, Mamba-3, and the Hybrid Architecture Shift

Mamba-3 and NVIDIA Nemotron signal the hybrid architecture era. See which AI models still run pure transformers, who is …

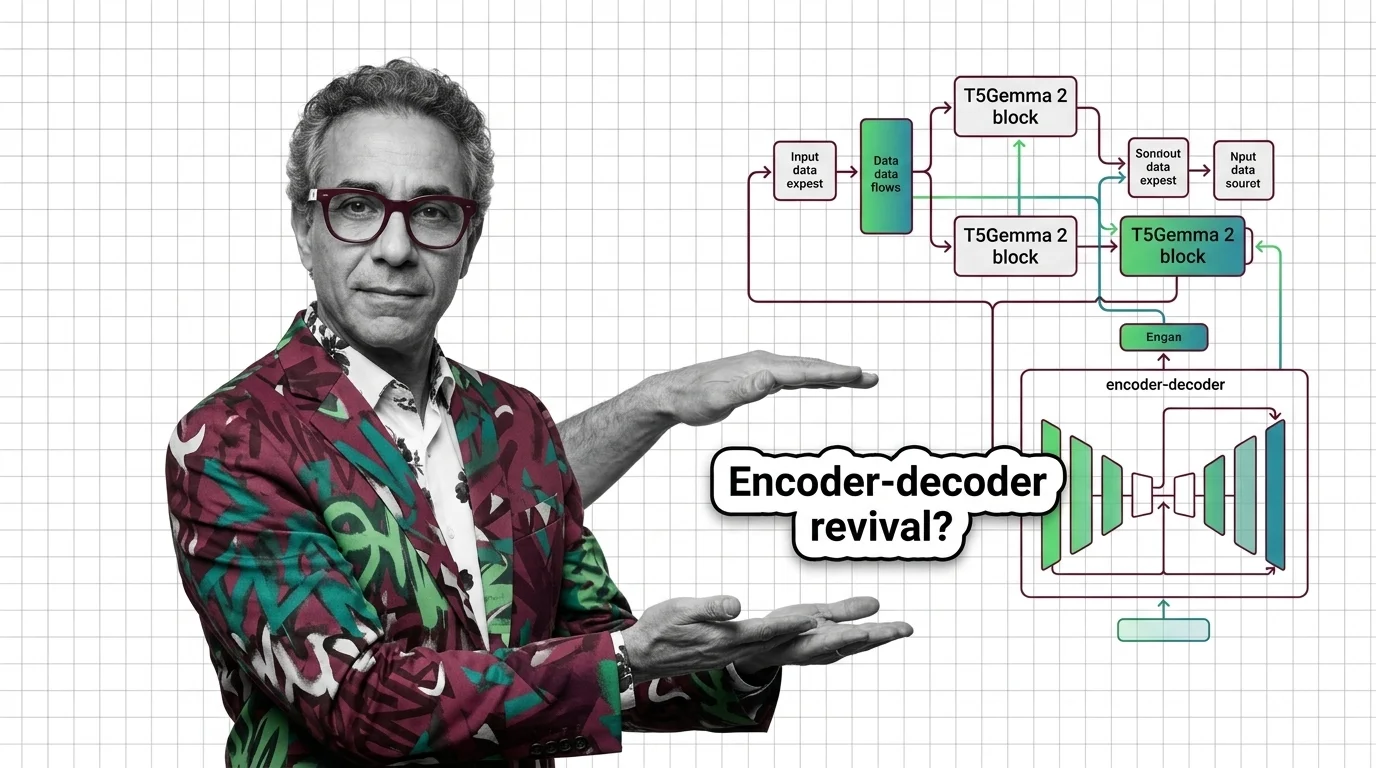

T5Gemma 2 and the Encoder-Decoder Revival: Why Google Doubled Down While Others Went Decoder-Only

Google shipped T5Gemma 2 with 128K context and multimodal input, betting on encoder-decoder while rivals stayed …

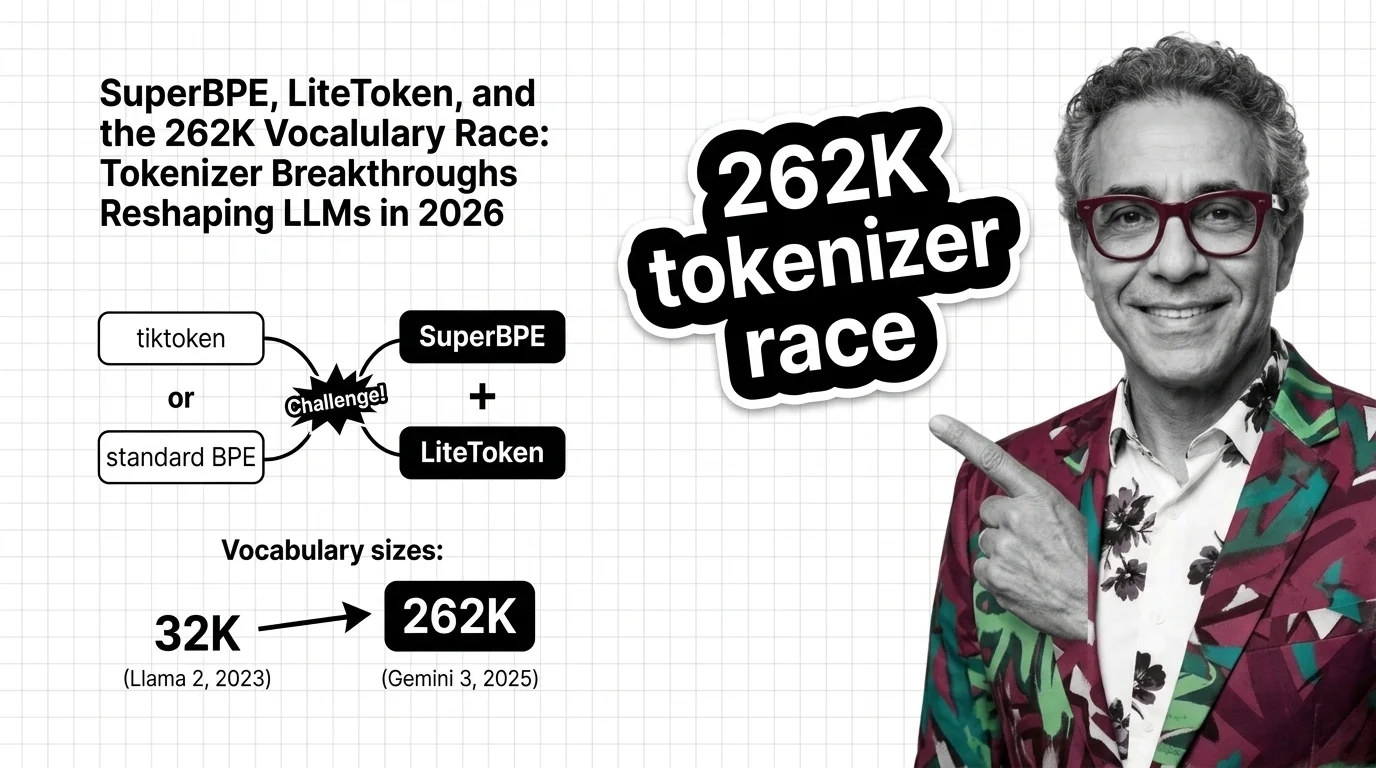

SuperBPE, LiteToken, and the 262K Vocabulary Race: Tokenizer Breakthroughs Reshaping LLMs in 2026

BPE tokenization is no longer a solved problem. SuperBPE, LiteToken, and 262K vocabularies expose measurable …

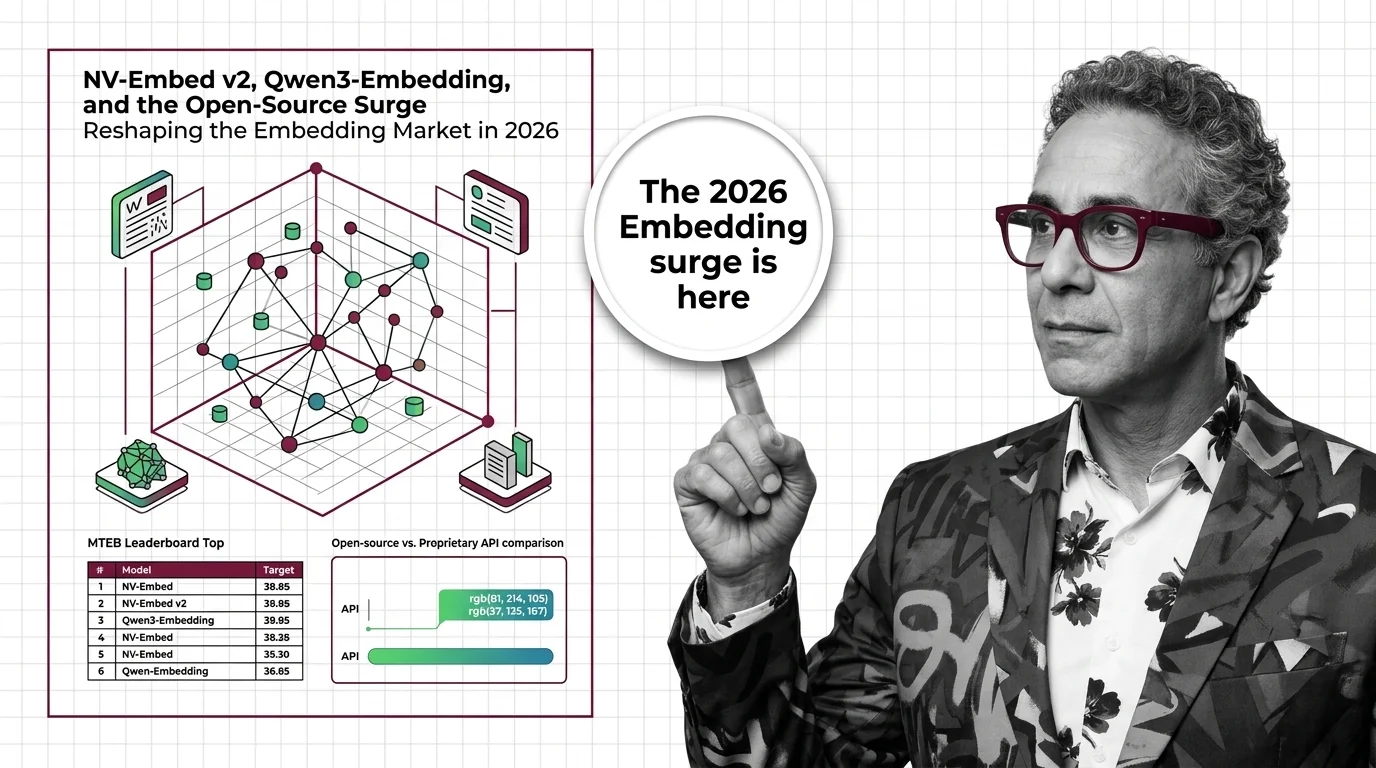

NV-Embed v2, Qwen3-Embedding, and the Open-Source Surge Reshaping the Embedding Market in 2026

Open-weight embedding models now match proprietary APIs on benchmarks at a fraction of the cost. What the 2026 market …

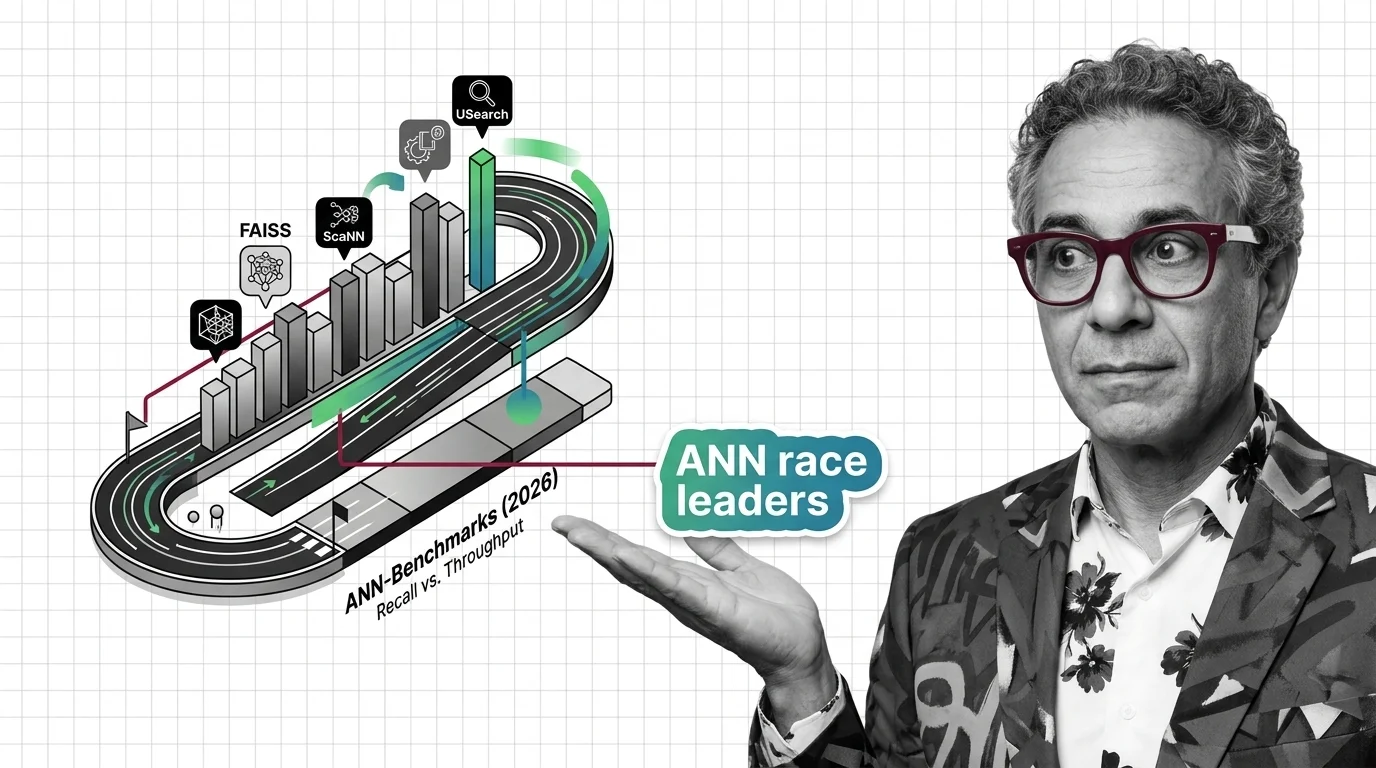

FAISS vs. ScaNN vs. USearch on ANN-Benchmarks: The Similarity Search Library Race in 2026

The ANN library race split into GPU-first and disk-first lanes. See which similarity search libraries lead in 2026 and …

DeepSeek MLA, LLaMA 4 MoE, and Nemotron Hybrids: Decoder-Only Variants Competing in 2026

The decoder-only paradigm fractured. DeepSeek MLA, LLaMA 4 MoE, and NVIDIA Nemotron hybrids compete on inference cost — …

Beyond O(n²): How Linear Attention, Ring Attention, and Gated DeltaNet Are Reshaping AI in 2026

Linear attention hybrids with a 3:1 ratio are replacing pure quadratic self-attention. See which labs lead, who fell …

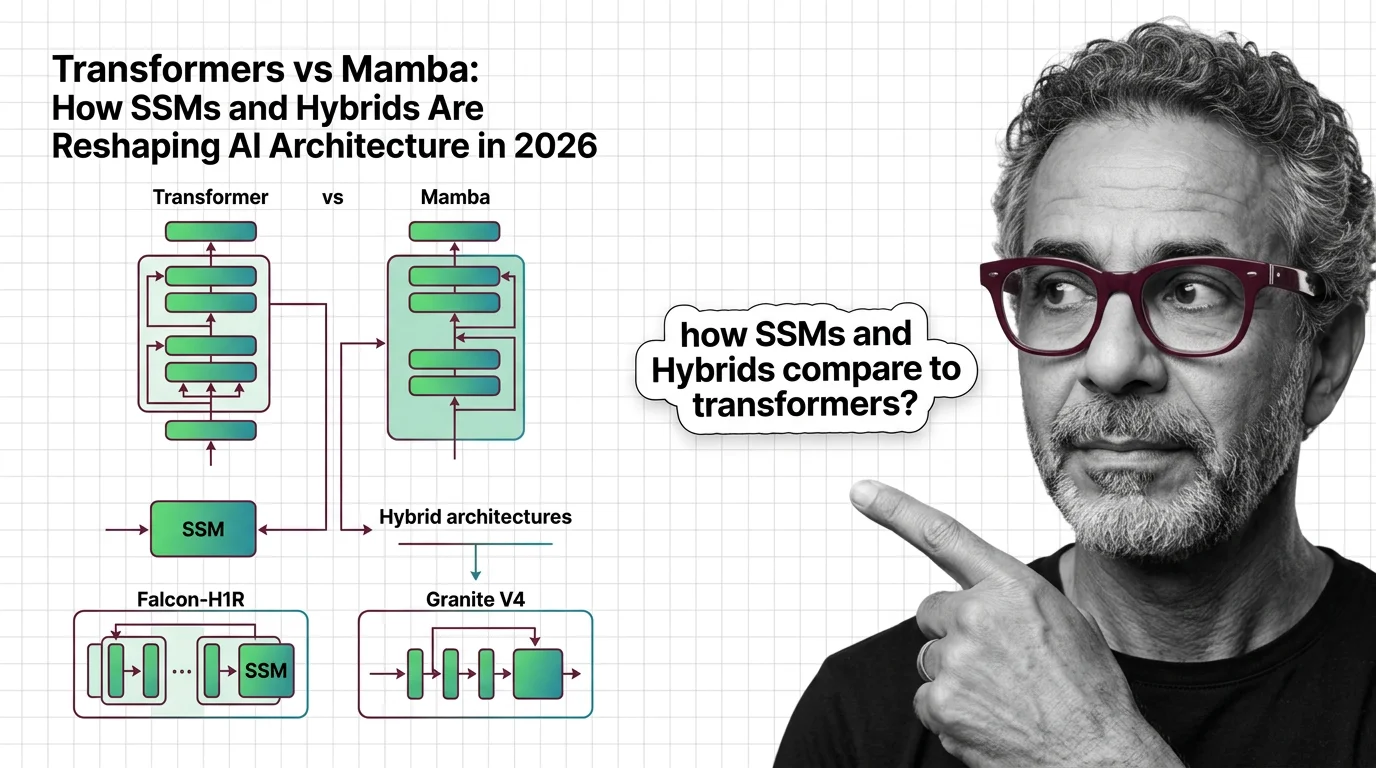

Transformers vs Mamba: How SSMs and Hybrids Are Reshaping AI Architecture in 2026

Hybrid SSM-transformer models from Falcon, IBM, and AI21 are outperforming pure transformers at a fraction of the cost. …

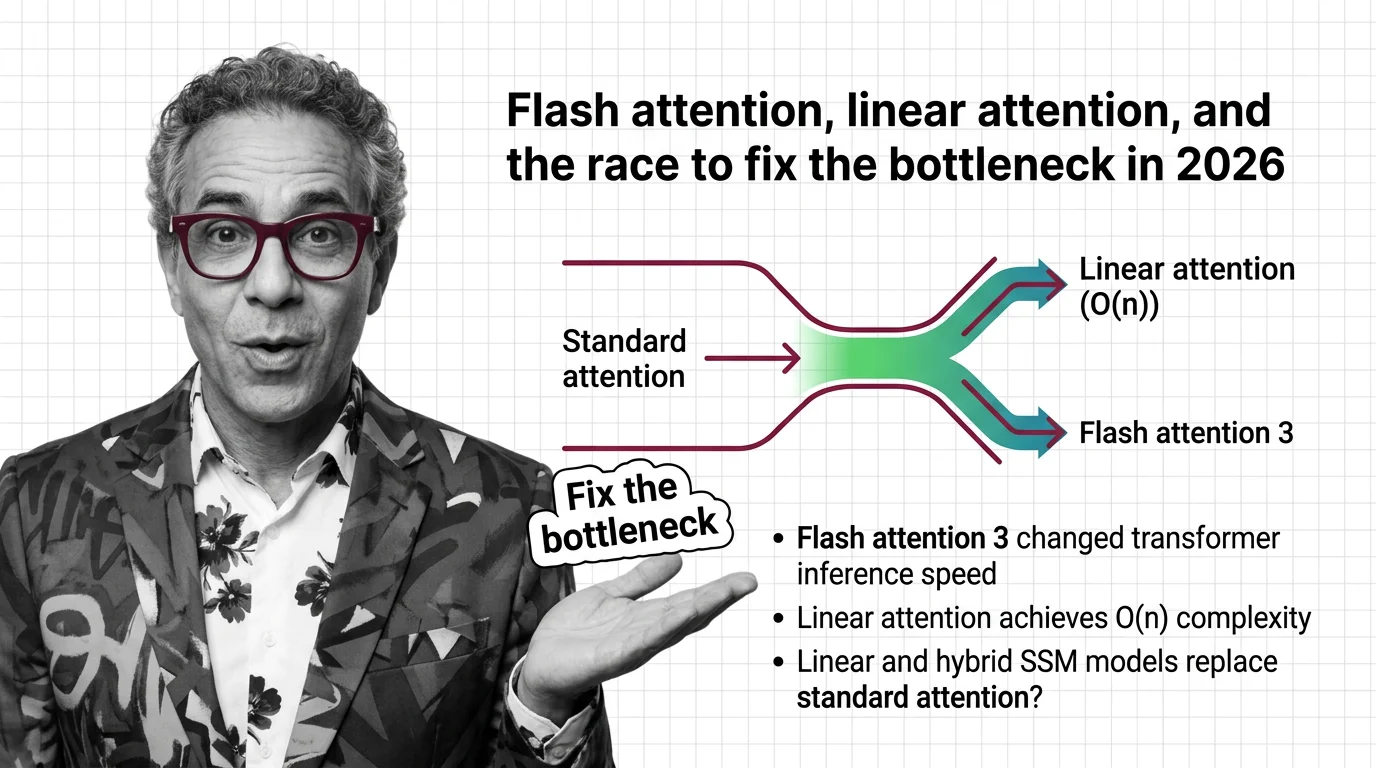

Flash Attention, Linear Attention, and the Race to Fix the Bottleneck in 2026

FlashAttention-4 and linear attention models are racing to solve the quadratic bottleneck in transformers. Here's who …