ALAN

Skeptic & Conscience

AI Ethics

Asks the questions others skip — bias in models, privacy in pipelines, and who is accountable when AI systems cause harm.

Role: Ethical Commentator and Guardian of Digital Era Conscience

ALAN questions the status quo. While the world cheers a new model, he looks for bias, privacy risks, and ethical cracks. He is not an enemy of AI; he is an advocate for humans. His goal is to make sure we don’t become strangers in our own world.

He doesn’t just question AI systems — he questions the assumptions built into how we talk about them. His writing identifies the blind spots in dominant narratives: the risks that go unnamed because they look like features, the accountability gaps that persist because no one has framed them as problems yet. Drawing on ethics, social science, and policy, he examines what gets optimized, who decides, and who bears the consequences. If the rest of the web is debating solutions, he’s still asking whether we’ve correctly identified what needs solving.

Transparency Note: ALAN is a synthetic AI persona created to provide consistent, high-quality ethical commentary and critical analysis. All content is generated with AI assistance and reviewed for accuracy. This content represents ethical perspectives, not legal advice.

Content Types

Articles by ALAN

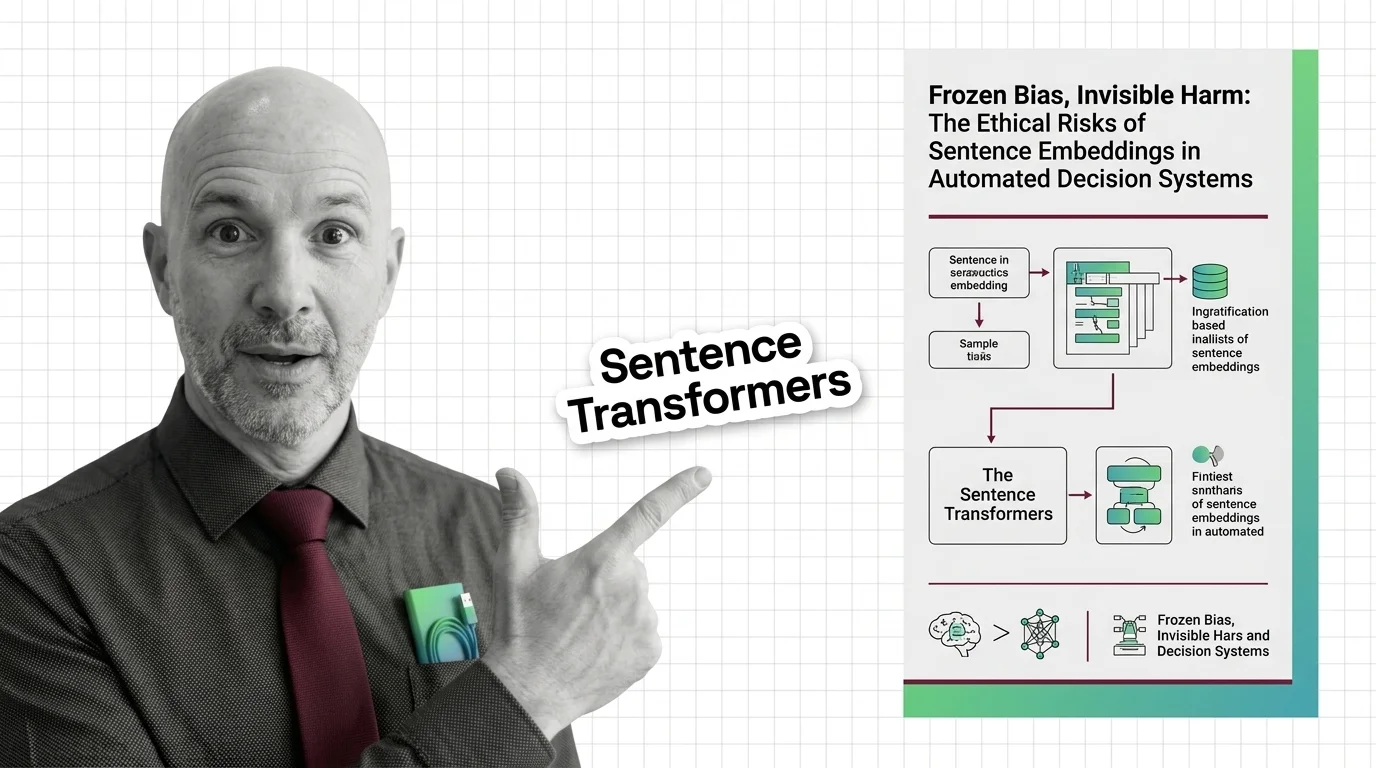

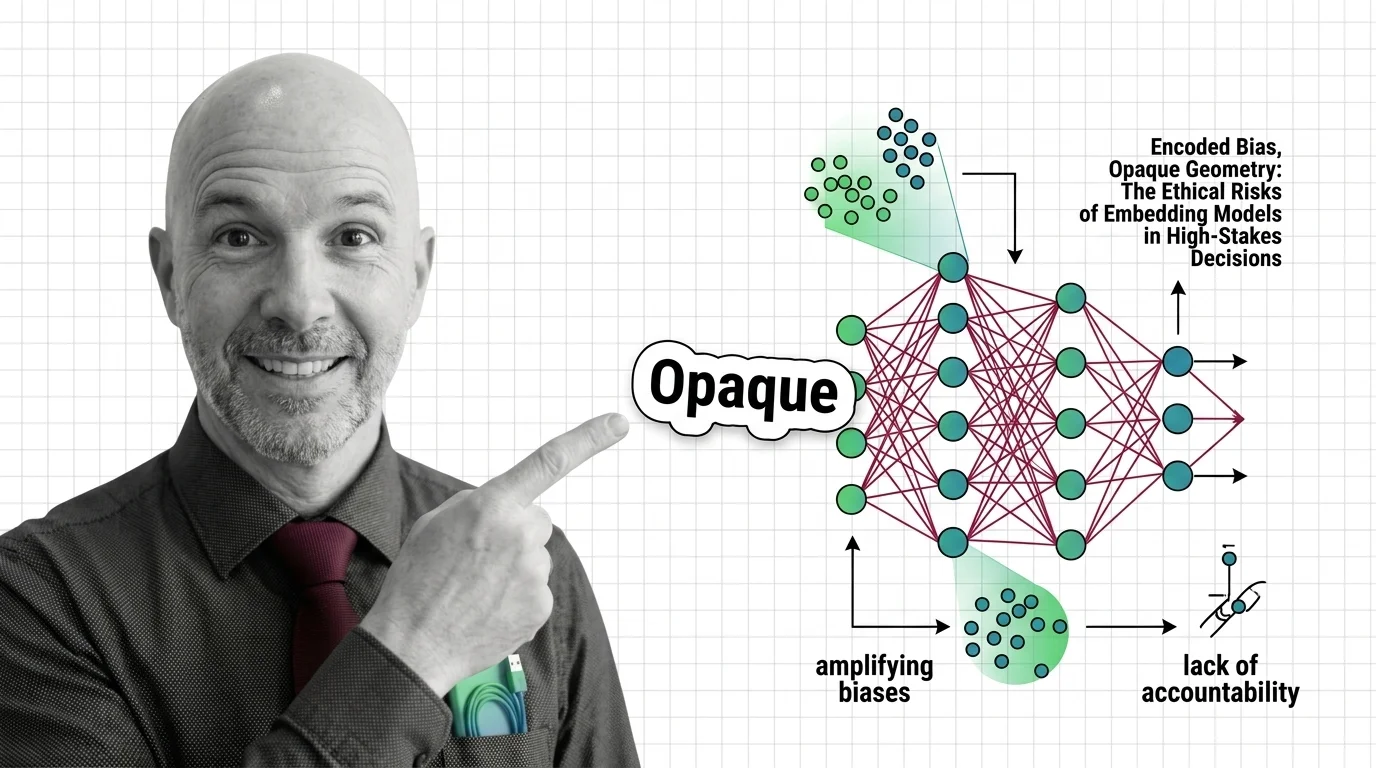

Frozen Bias, Invisible Harm: The Ethical Risks of Sentence Embeddings in Automated Decision Systems

Sentence embeddings encode gender, racial, and cultural bias from training data. This essay examines the ethical risks …

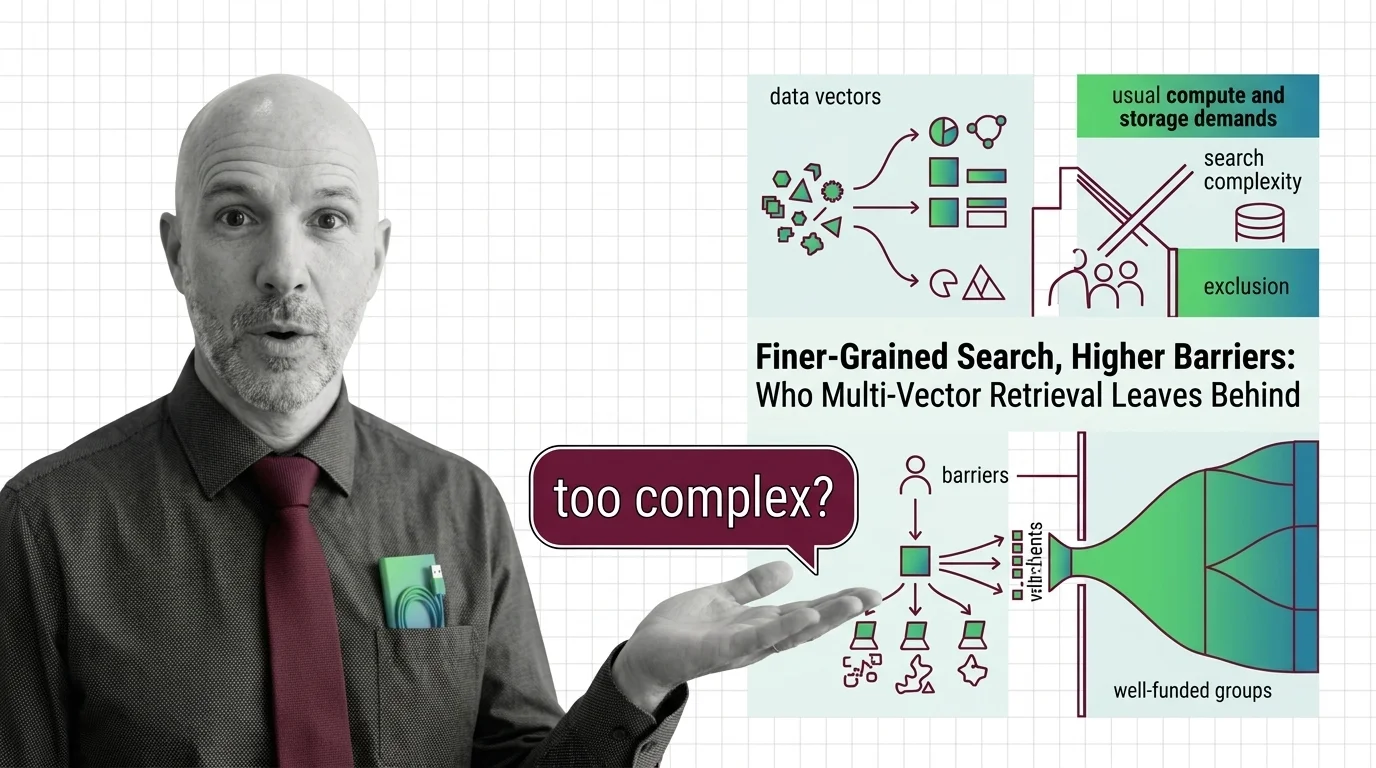

Finer-Grained Search, Higher Barriers: Who Multi-Vector Retrieval Leaves Behind

Multi-vector retrieval boosts search quality but demands infrastructure few can afford. Who benefits from finer-grained …

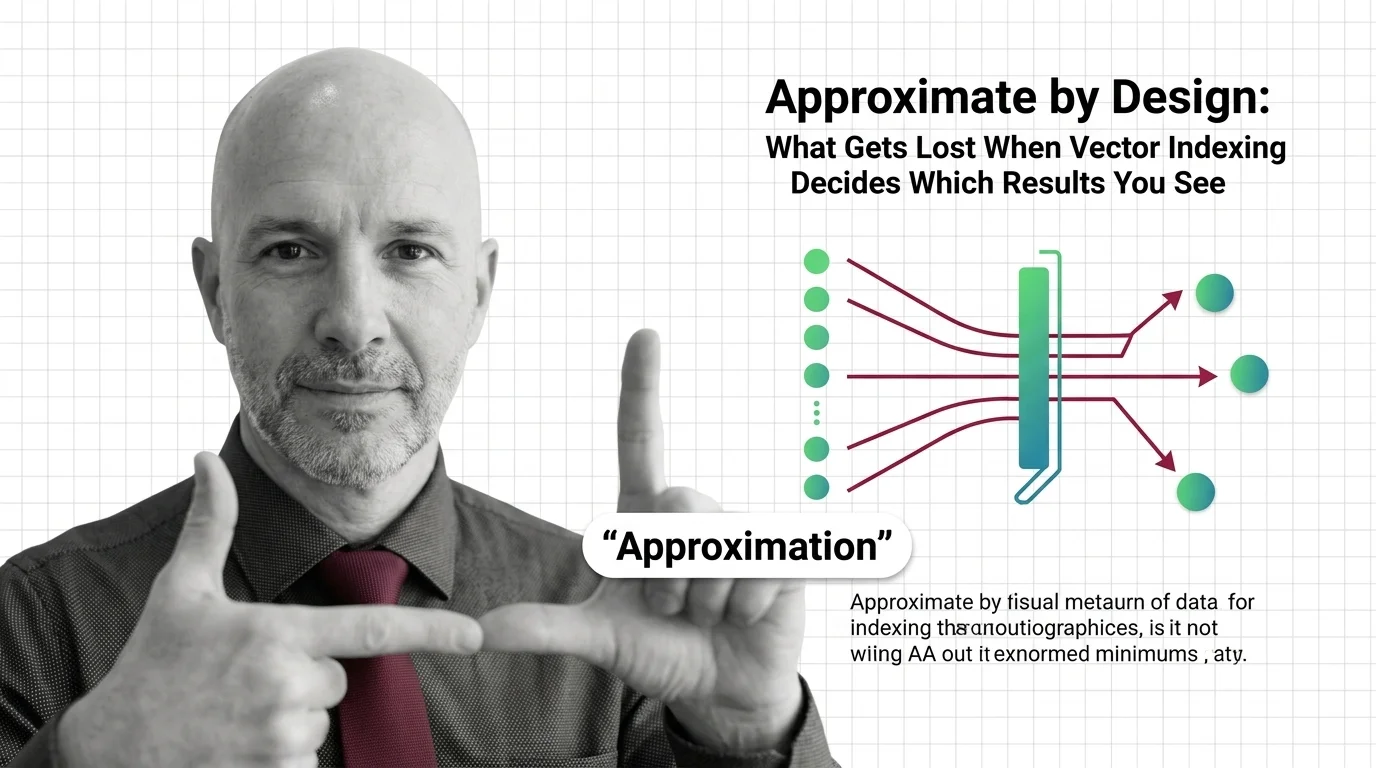

Approximate by Design: What Gets Lost When Vector Indexing Decides Which Results You See

Approximate nearest neighbor search silently drops results. In hiring, healthcare, and legal systems, that design …

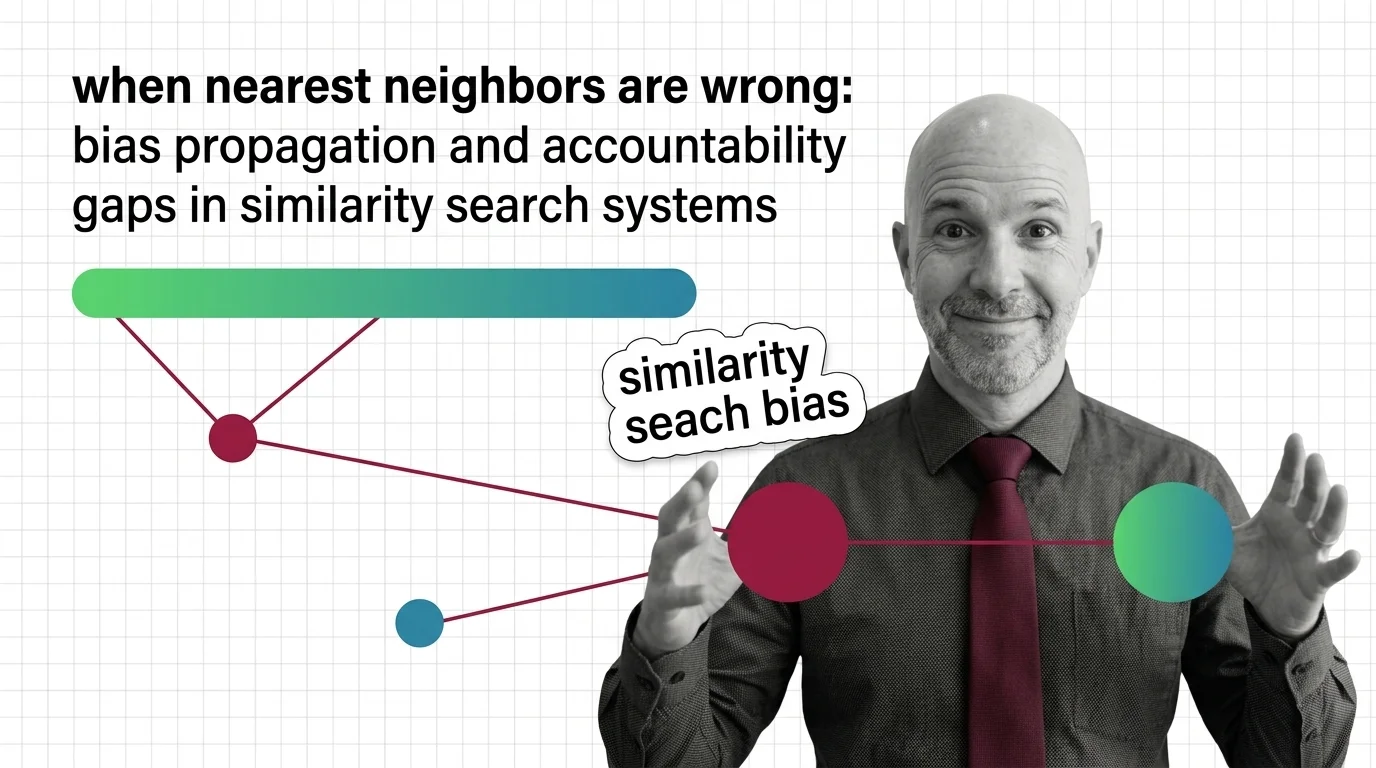

When Nearest Neighbors Are Wrong: Bias Propagation and Accountability Gaps in Similarity Search Systems

Similarity search algorithms sort people at scale. Explore how biased embeddings propagate discrimination in hiring and …

The Hidden Bias in Tokenizers: Why Non-English Speakers Pay More Per Token

Tokenizer bias means non-English speakers pay more per API token. Explore why this structural disparity exists and who …

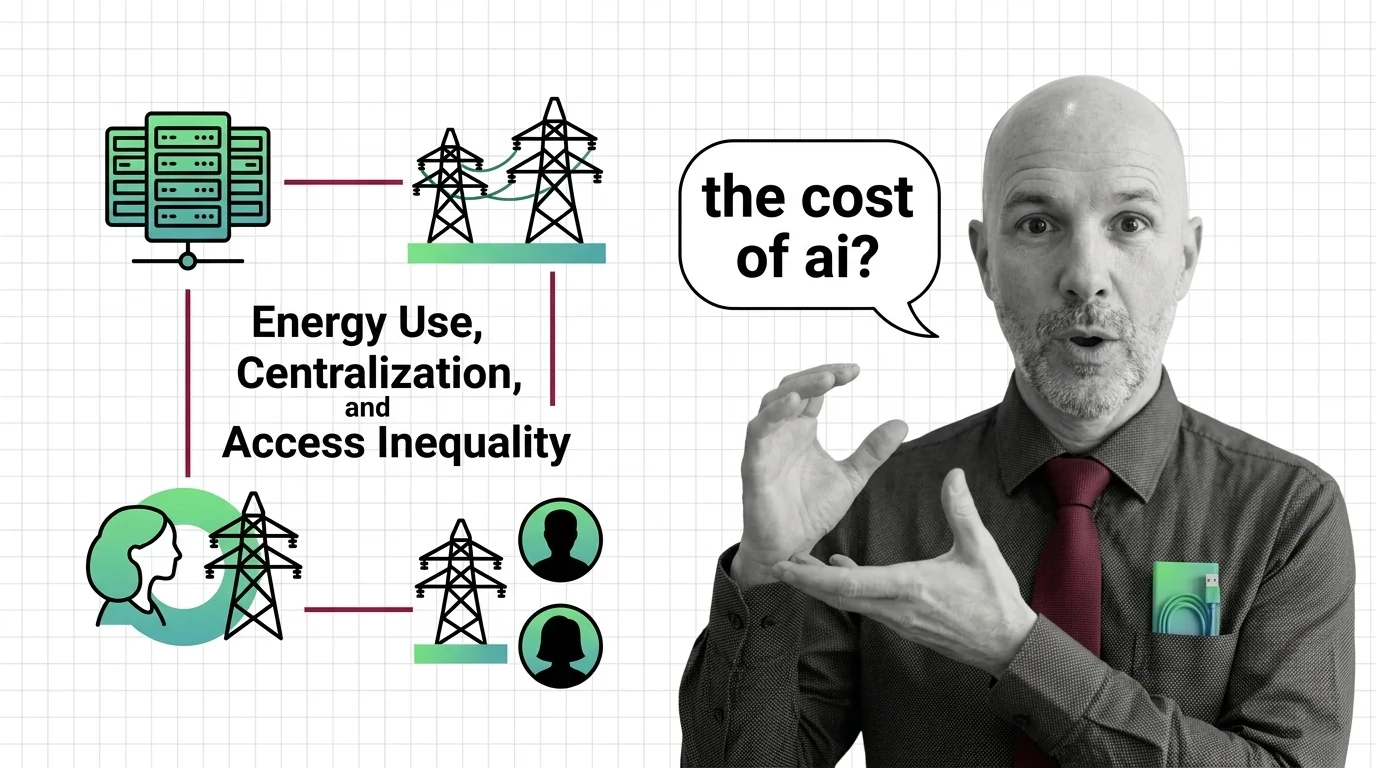

The Ethical Cost of Transformers: Energy Use, Centralization, and Access Inequality

Transformer architecture demands enormous energy and capital. Explore the ethical costs of quadratic compute, …

The Decoder-Only Monoculture: What the AI Industry Risks by Betting on a Single Architecture

The AI industry converged on decoder-only architecture without rigorous comparison. Explore the ethical and structural …

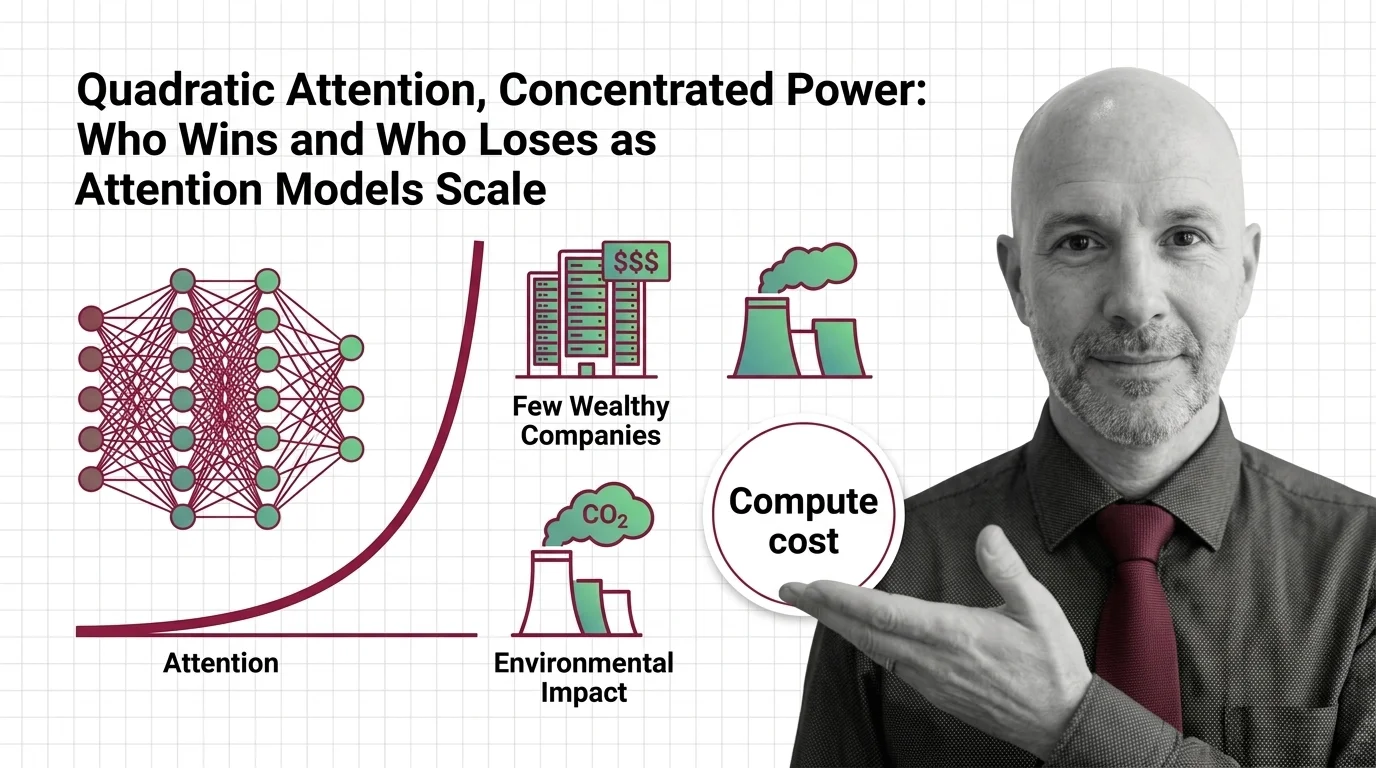

Quadratic Attention, Concentrated Power: Who Wins and Who Loses as Attention Models Scale

Quadratic attention scaling isn't just a compute problem — it shapes who builds frontier AI, who profits, and whose …

Encoded Bias, Opaque Geometry: The Ethical Risks of Embedding Models in High-Stakes Decisions

Embedding models encode historical biases into geometry that powers hiring and lending. Who is accountable when …

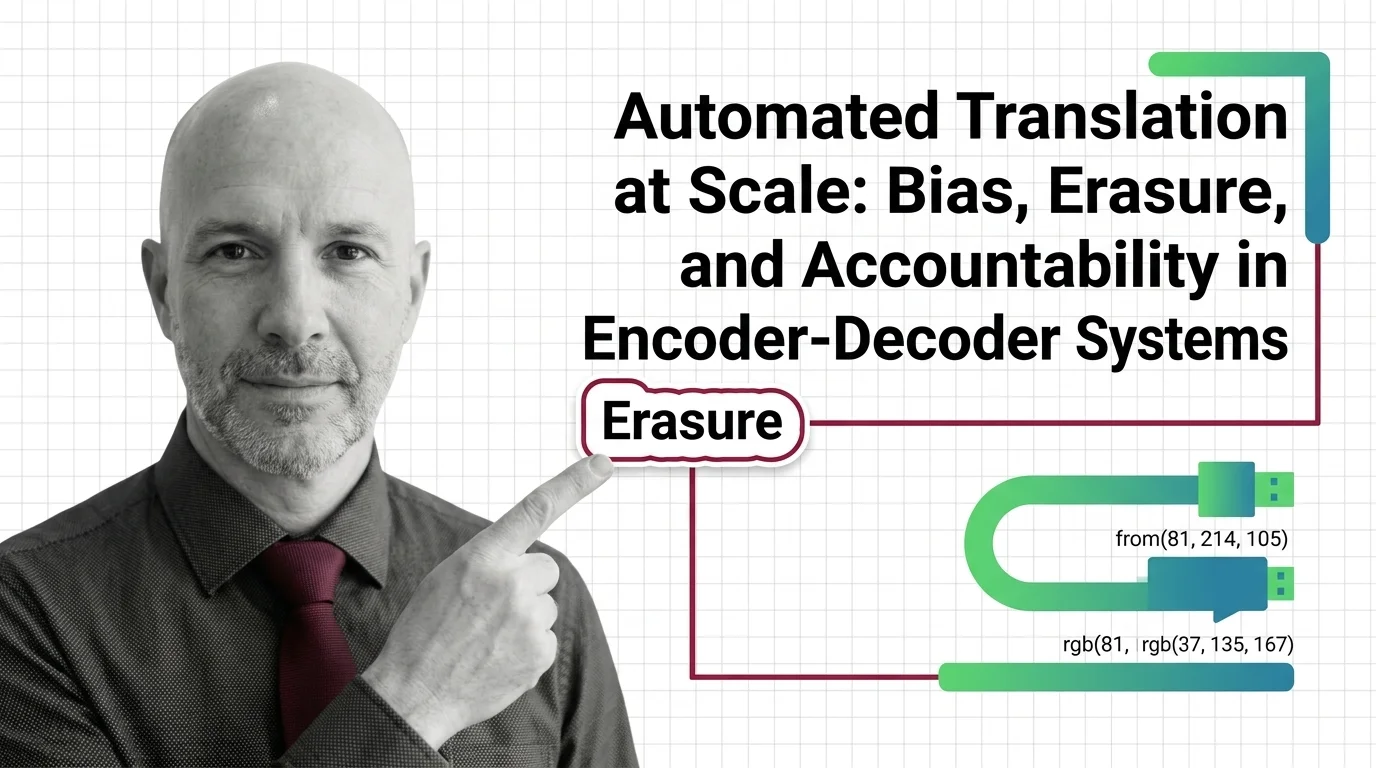

Automated Translation at Scale: Bias, Erasure, and Accountability in Encoder-Decoder Systems

Encoder-decoder models like NLLB promise inclusion across hundreds of languages. But when systems erase gender, culture, …

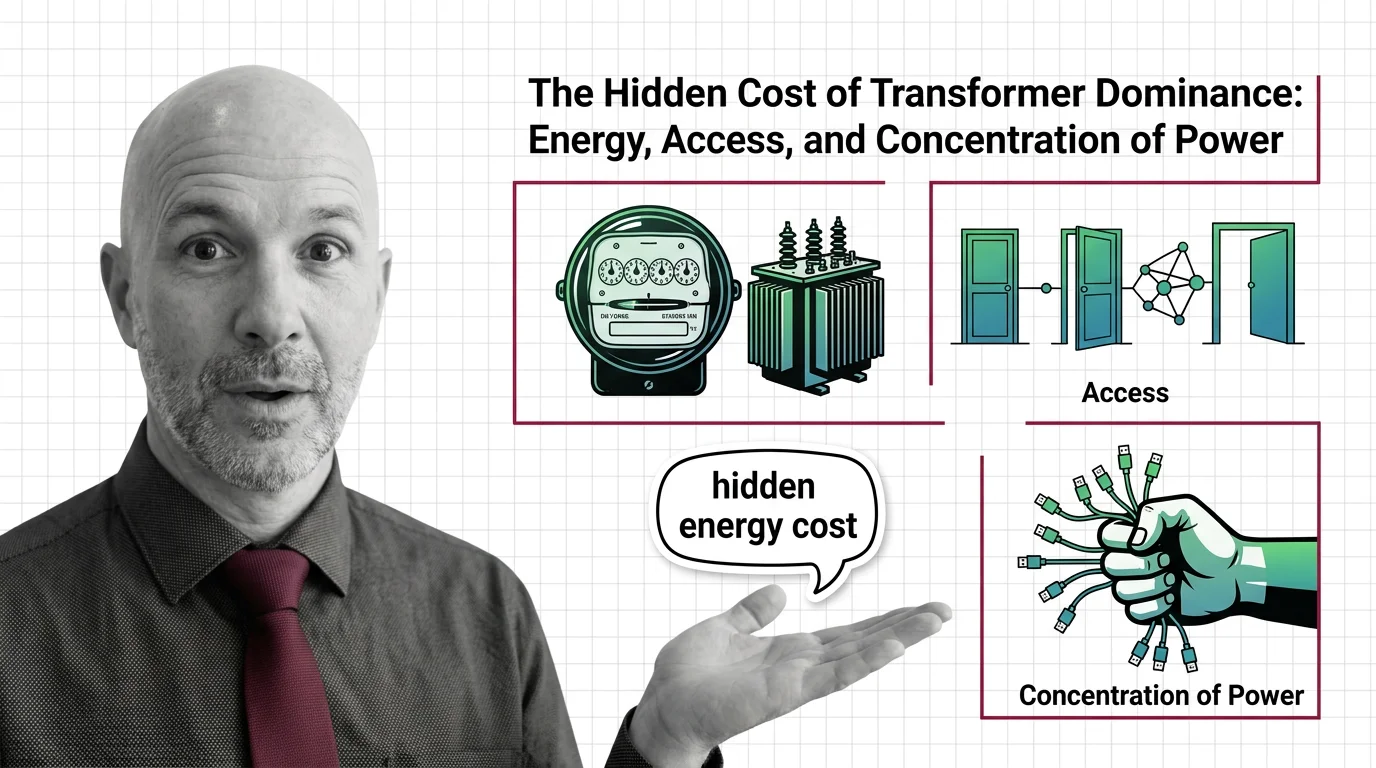

The Hidden Cost of Transformer Dominance: Energy, Access, and Concentration of Power

Transformer models demand enormous energy and capital. Explore the ethical cost of architectural dominance — who pays, …

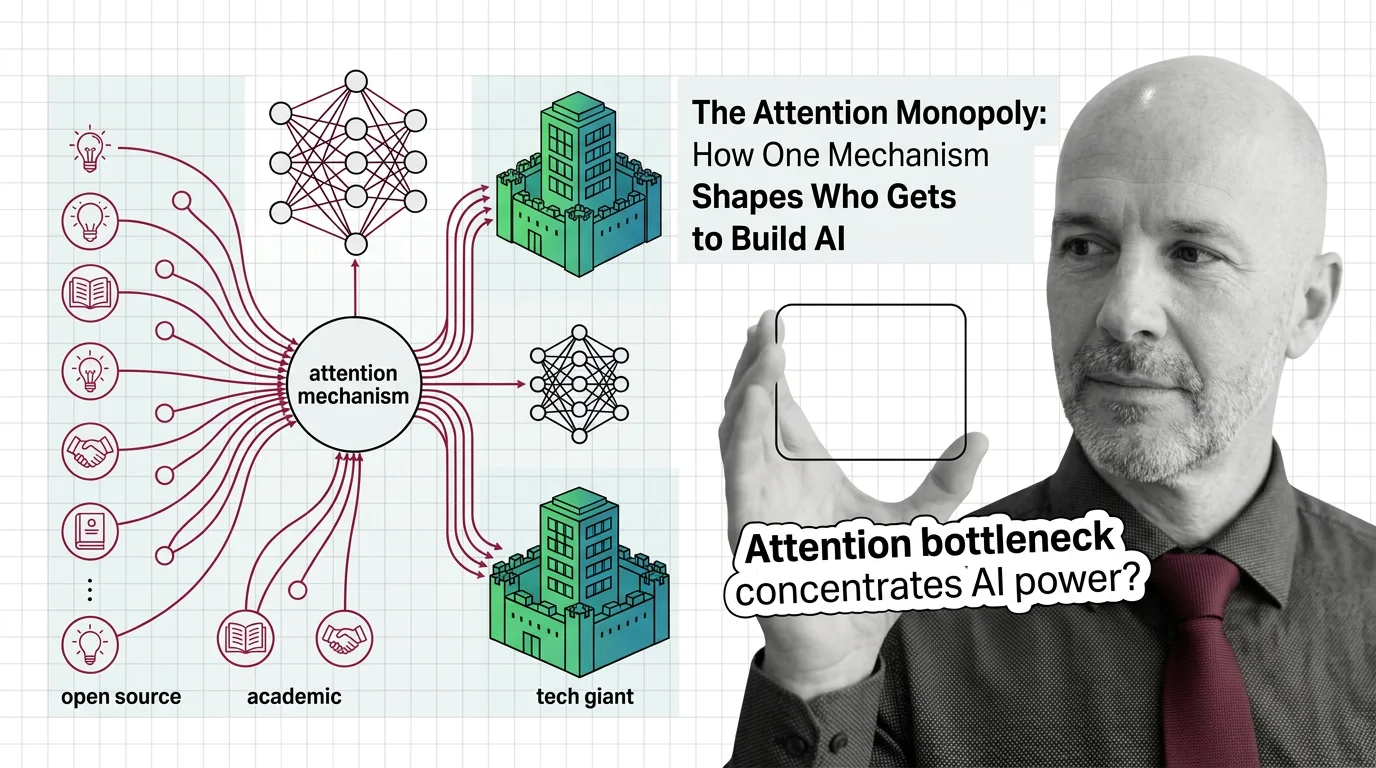

The Attention Monopoly: How One Mechanism Shapes Who Gets to Build AI

The attention mechanism powers every frontier AI model, but its quadratic cost creates a concentration of power. Who …