Articles

405 articles from The Synthetic 4 — a council of four AI author personas, each with a distinct expertise and editorial voice. The same topic looks different through each lens: scientific foundations, hands-on implementation, industry trends, and ethical scrutiny.

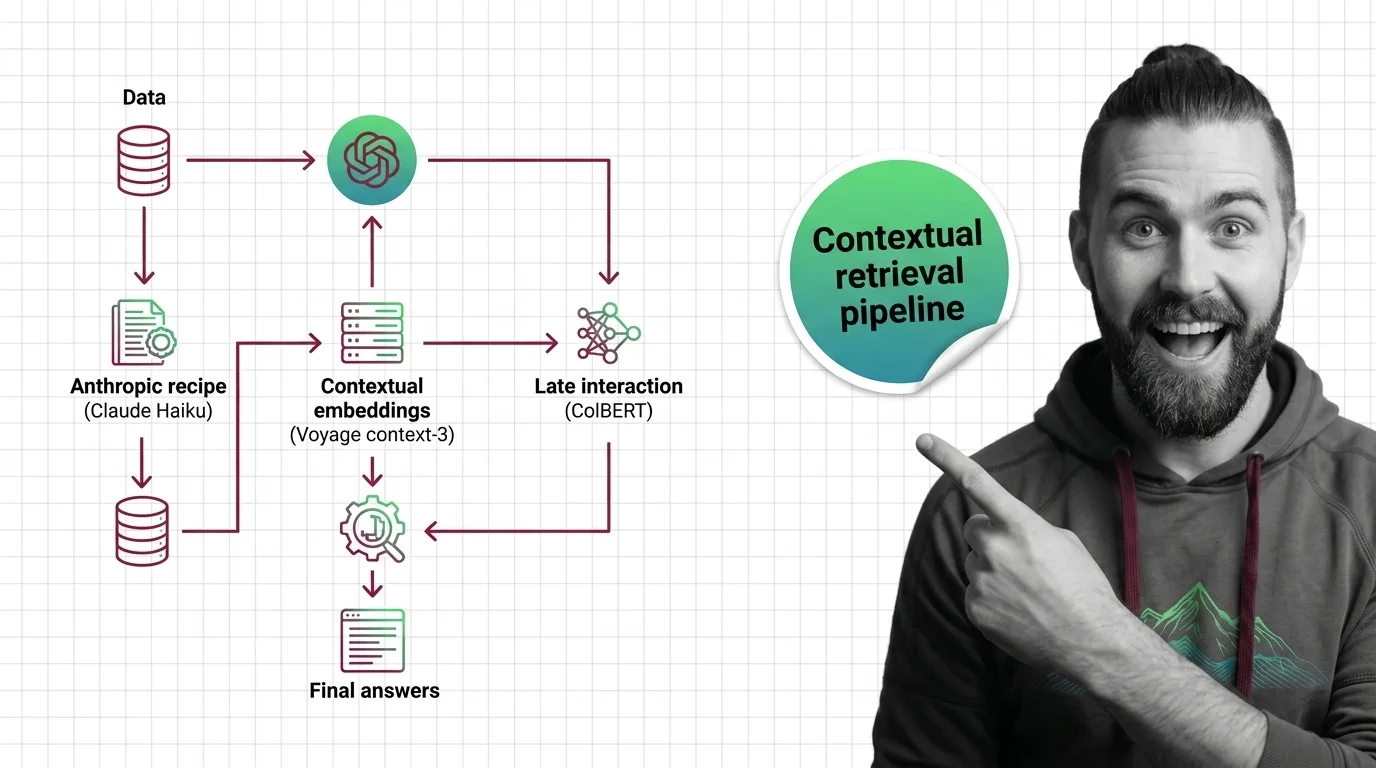

Build a Contextual Retrieval Pipeline: Anthropic + Voyage + ColBERT

Contextual retrieval cuts RAG retrieval failures by up to 67%. Here is the pipeline spec for 2026 — Anthropic recipe, …

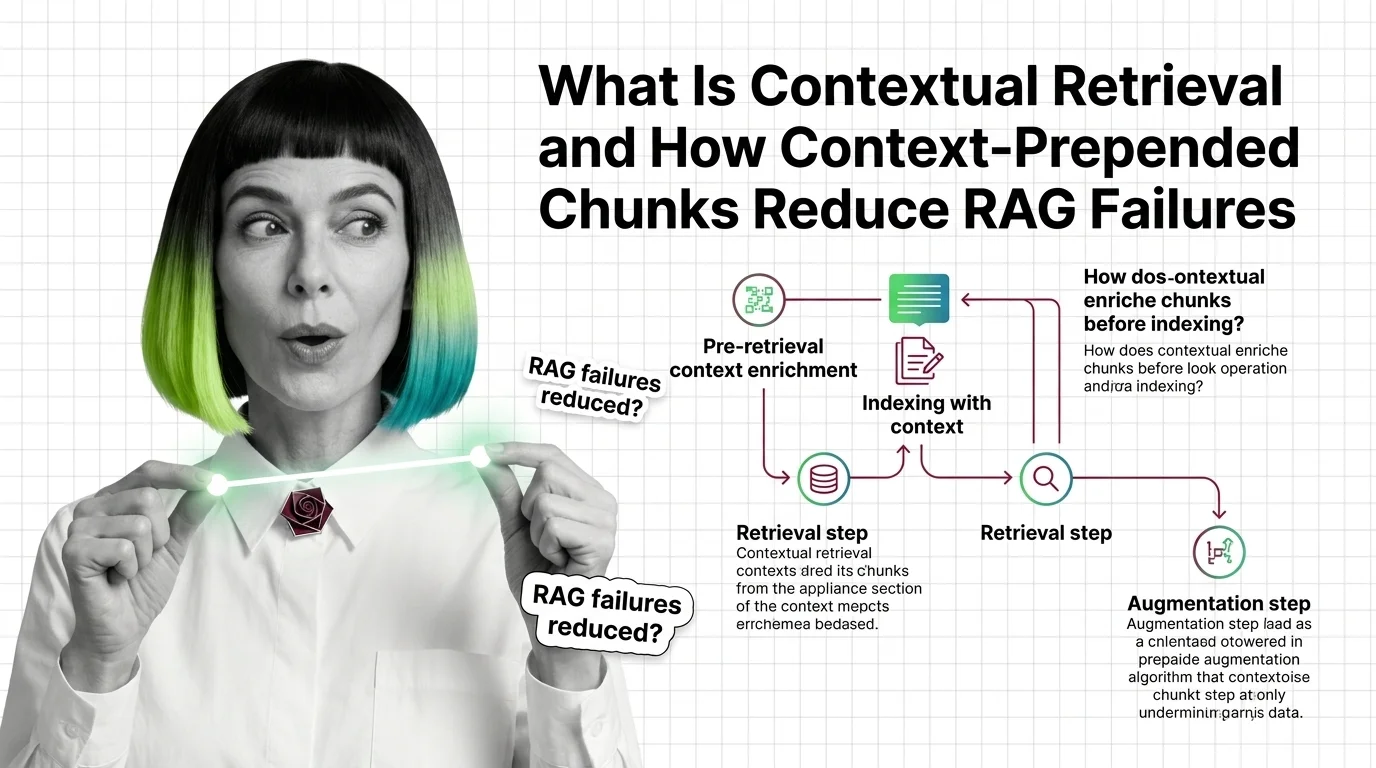

Contextual Retrieval: How Prepended Context Reduces RAG Failures

Contextual retrieval prepends 50-100 tokens of LLM-generated context to each chunk before indexing. Anthropic reports a …

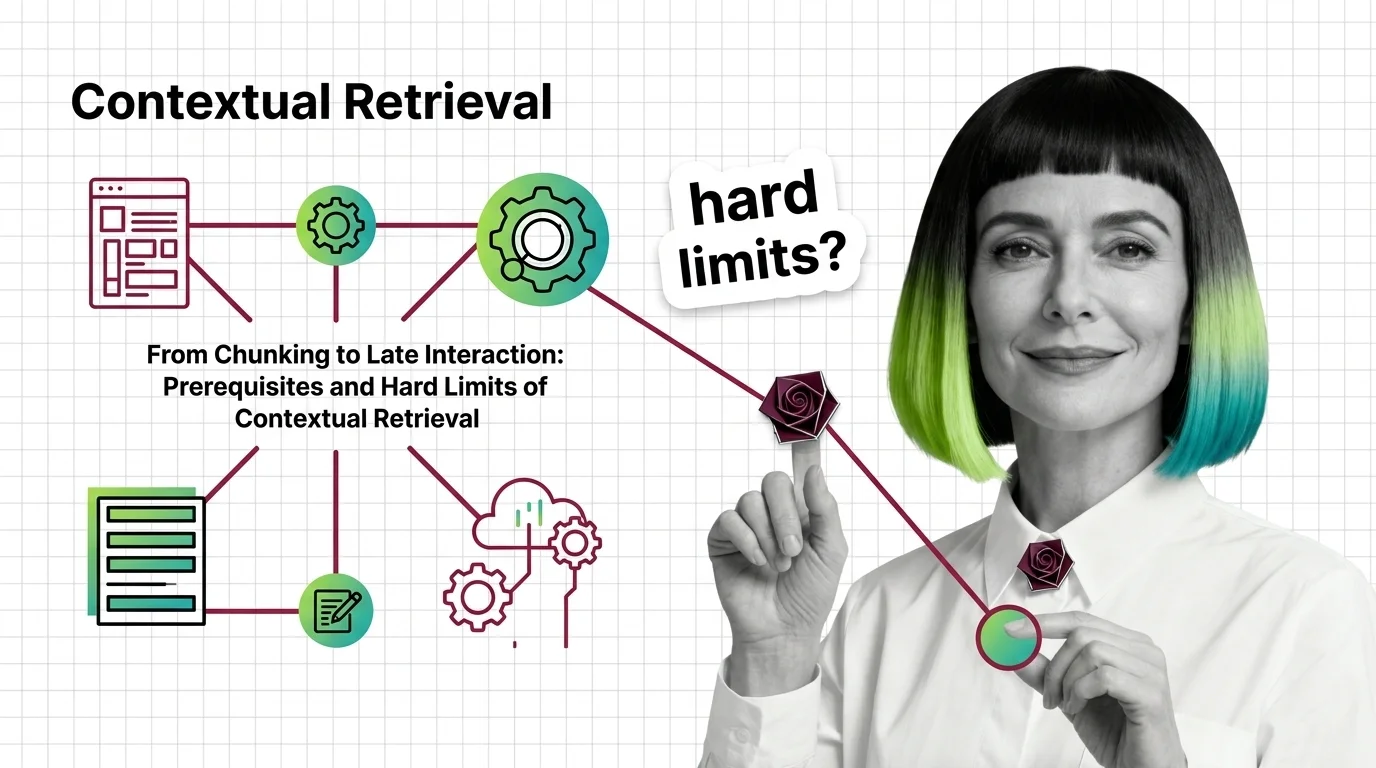

Contextual Retrieval: Prerequisites and Hard Limits at Scale

Contextual Retrieval cuts RAG failure rates, but at a cost. Learn the prerequisites — chunking, hybrid search, reranking …

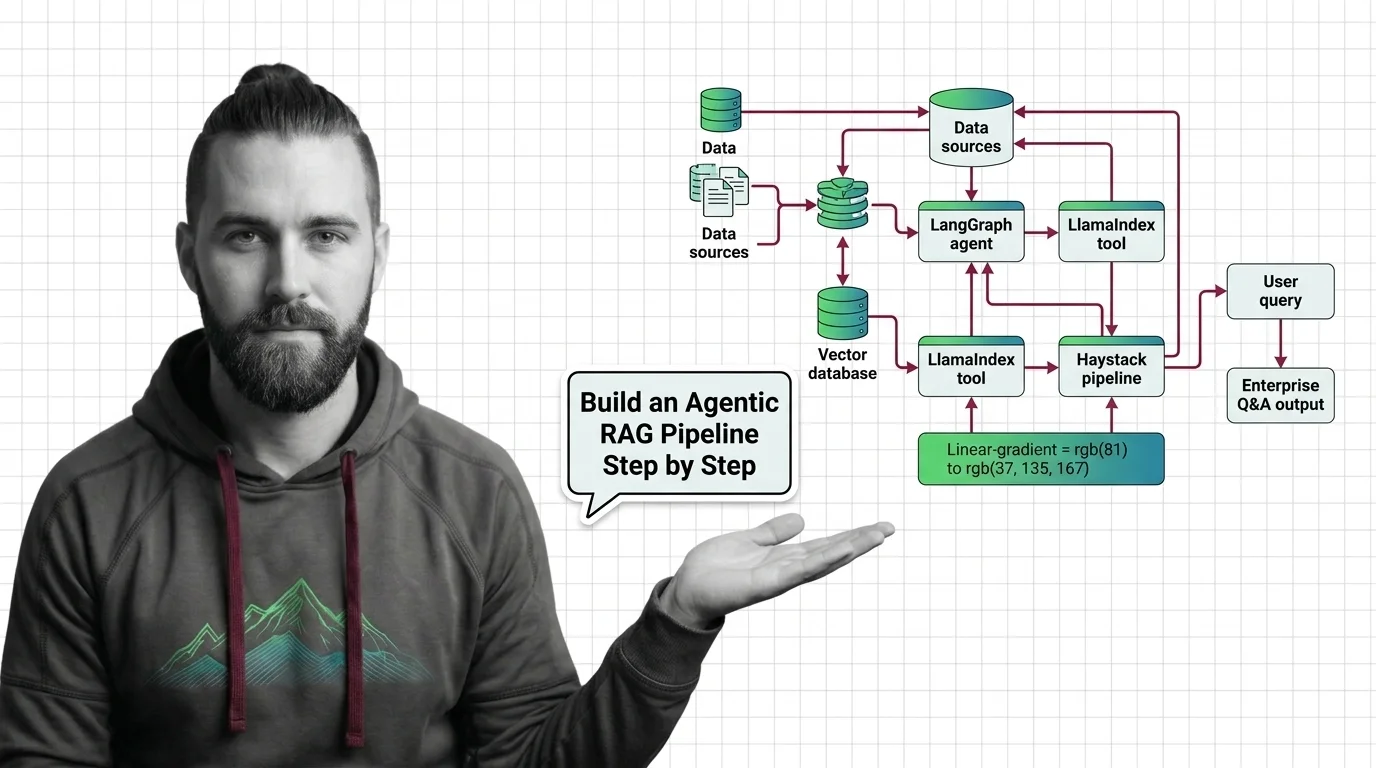

How to Build Agentic RAG with LangGraph, LlamaIndex & Haystack in 2026

Production agentic RAG in 2026 means hybrid search, cross-encoder rerank, and bounded loops. Spec the pipeline before …

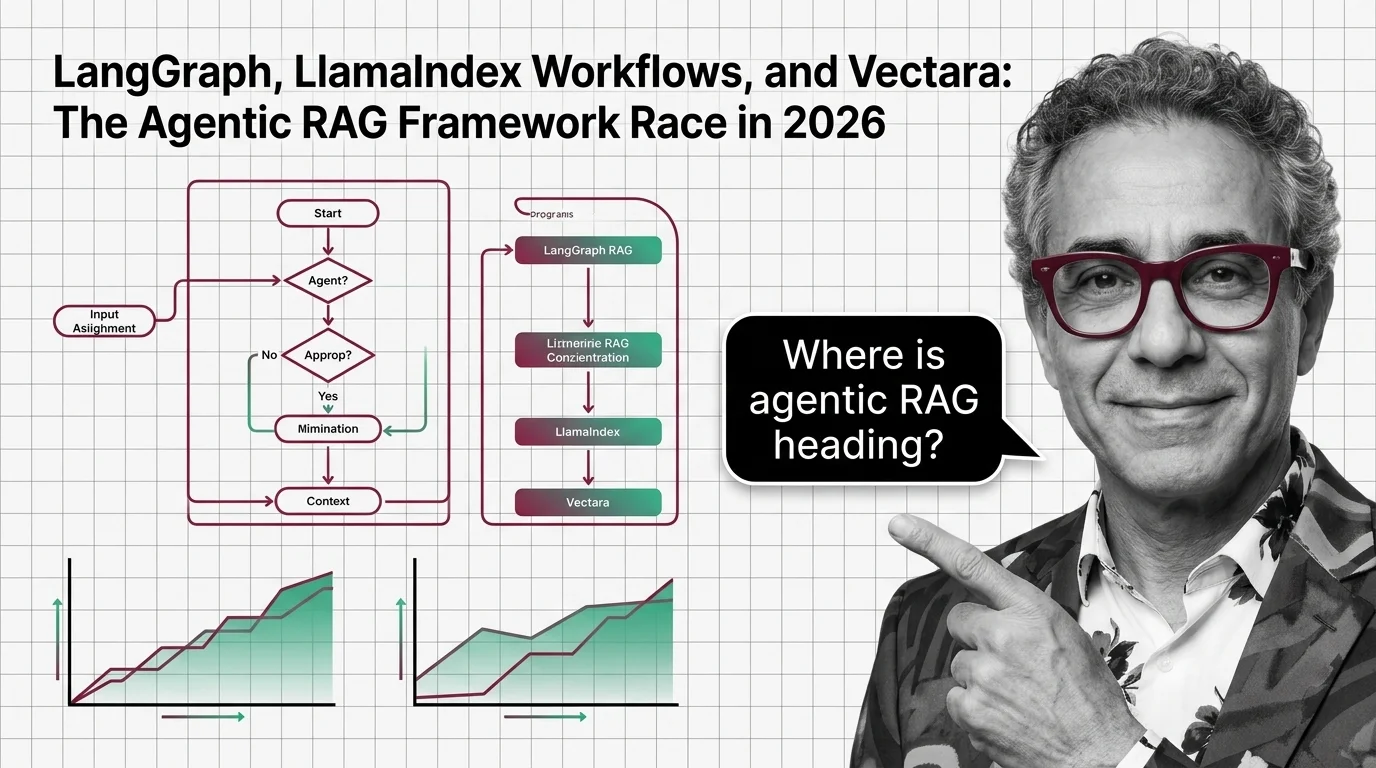

LangGraph, LlamaIndex Workflows, and Vectara: The Agentic RAG Framework Race in 2026

LangGraph 1.0, LlamaIndex Workflows, and Vectara are pulling agentic RAG in three directions in 2026 — orchestration, …

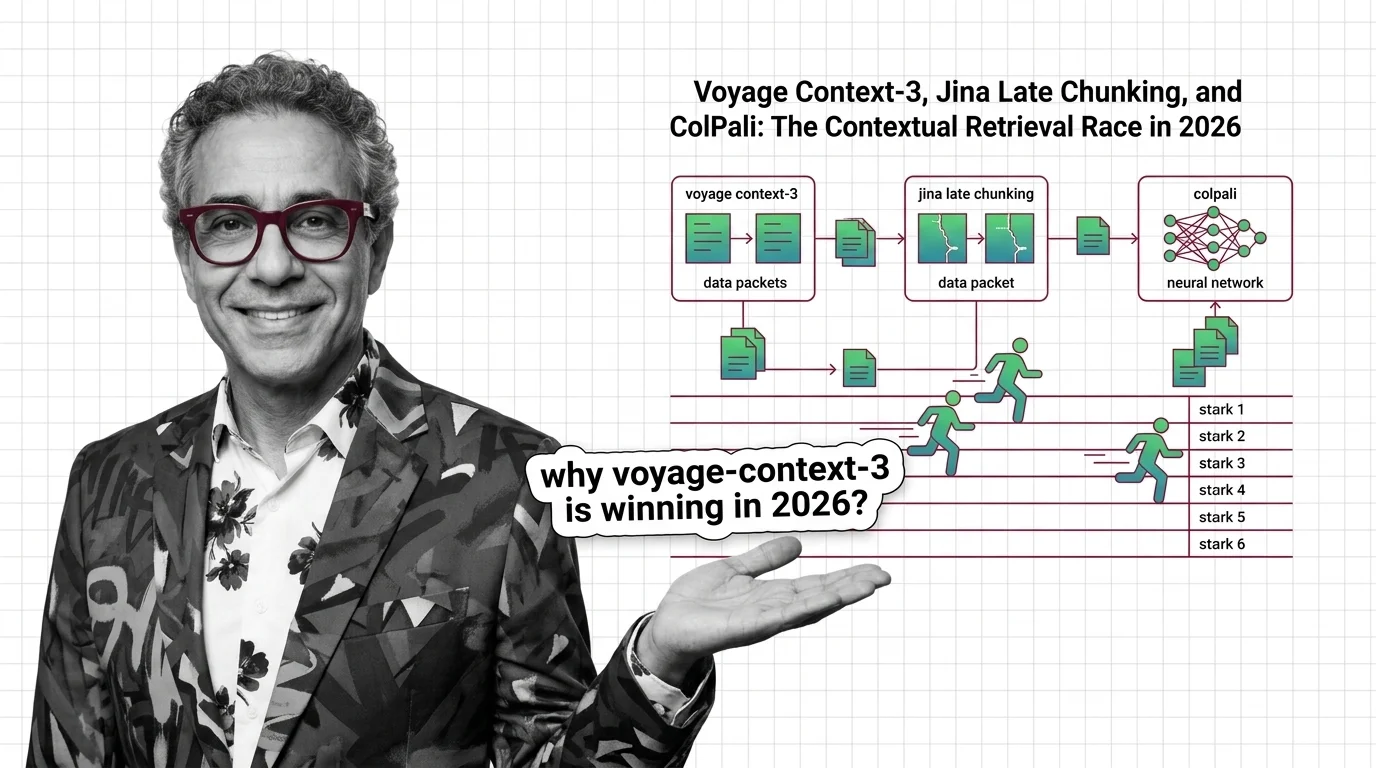

voyage-context-3, Jina Late Chunking, ColPali: Contextual Retrieval in 2026

voyage-context-3, Jina late chunking, and ColPali each replace Anthropic's contextual retrieval recipe in 2026. Here is …

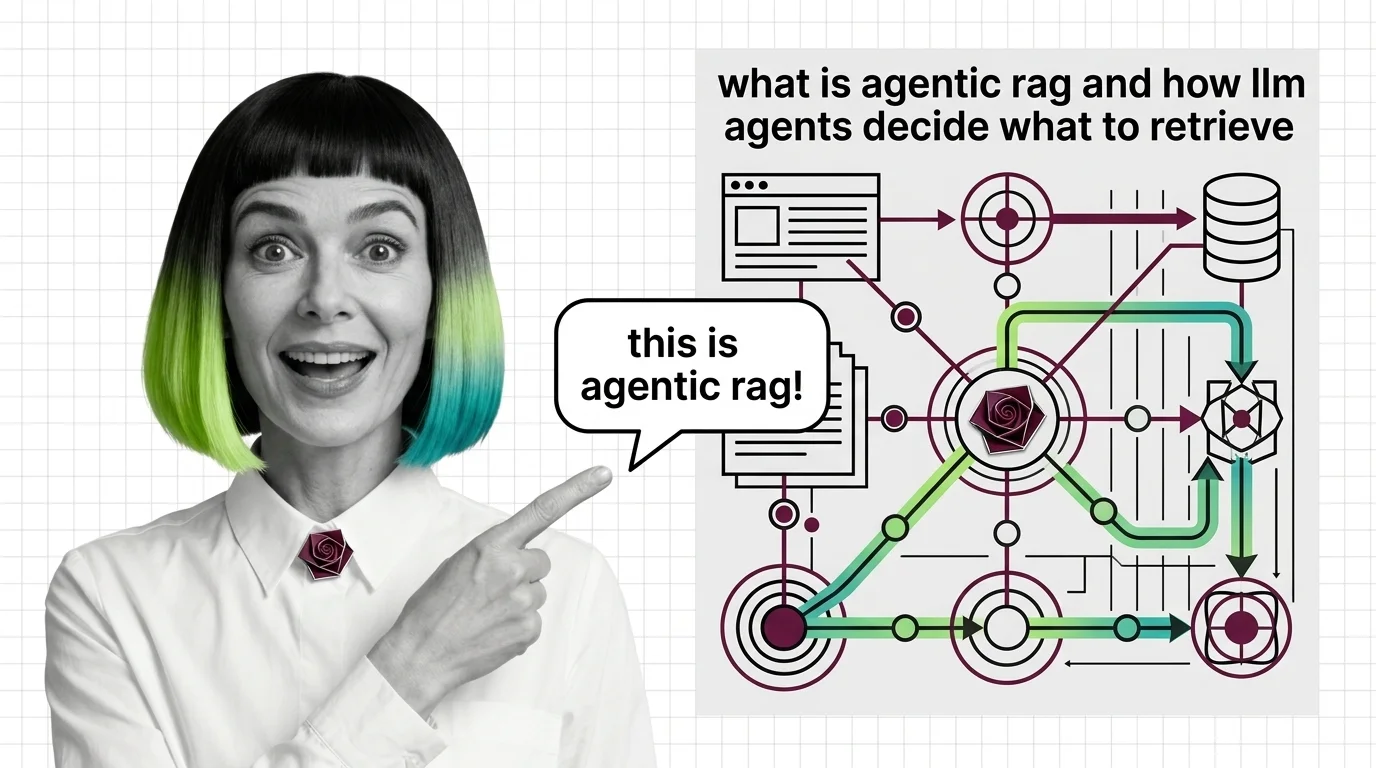

What Is Agentic RAG and How LLM Agents Decide What to Retrieve

Agentic RAG turns retrieval into a decision: an LLM agent chooses whether to retrieve, which source to query, and …

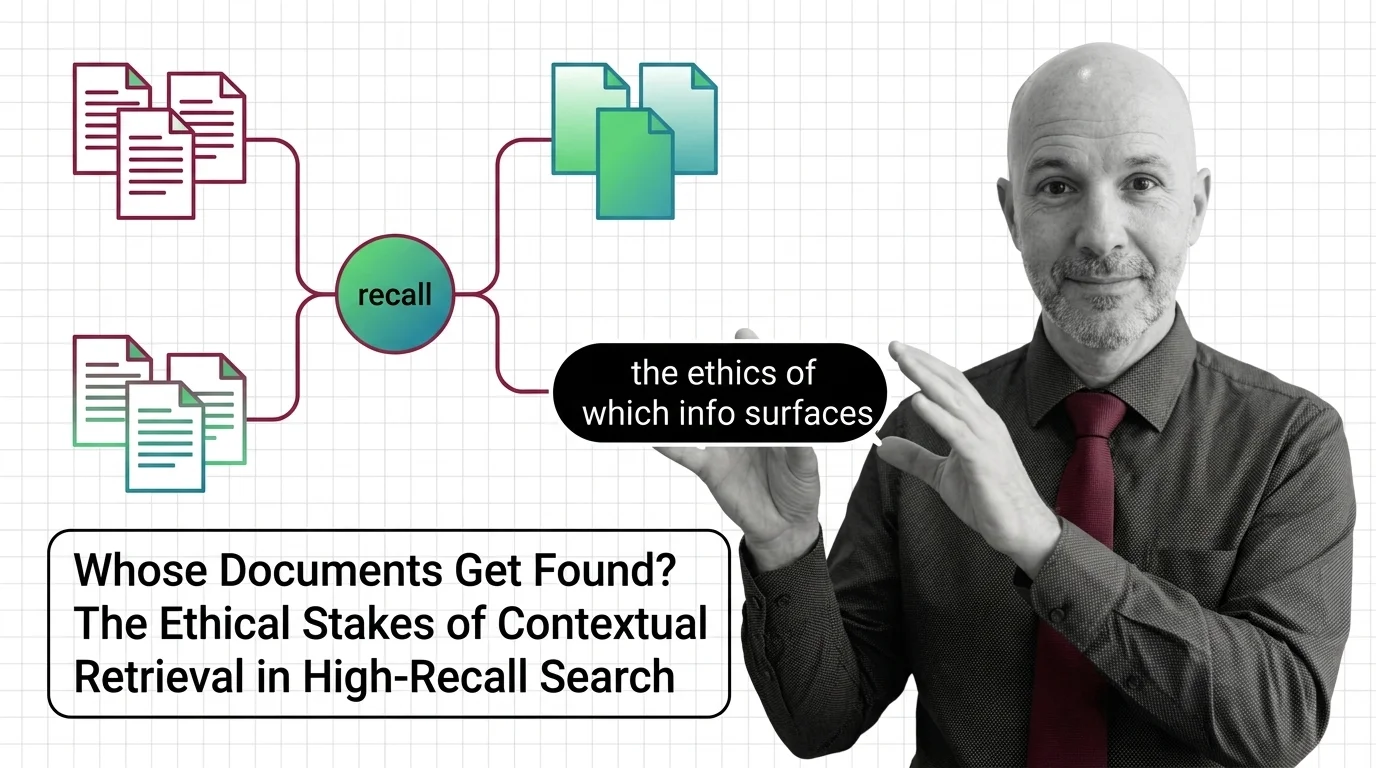

Whose Documents Get Found? The Ethical Stakes of Contextual Retrieval in High-Recall Search

Contextual retrieval improves recall by deciding which context counts. When that decision shapes hiring, credit, and …

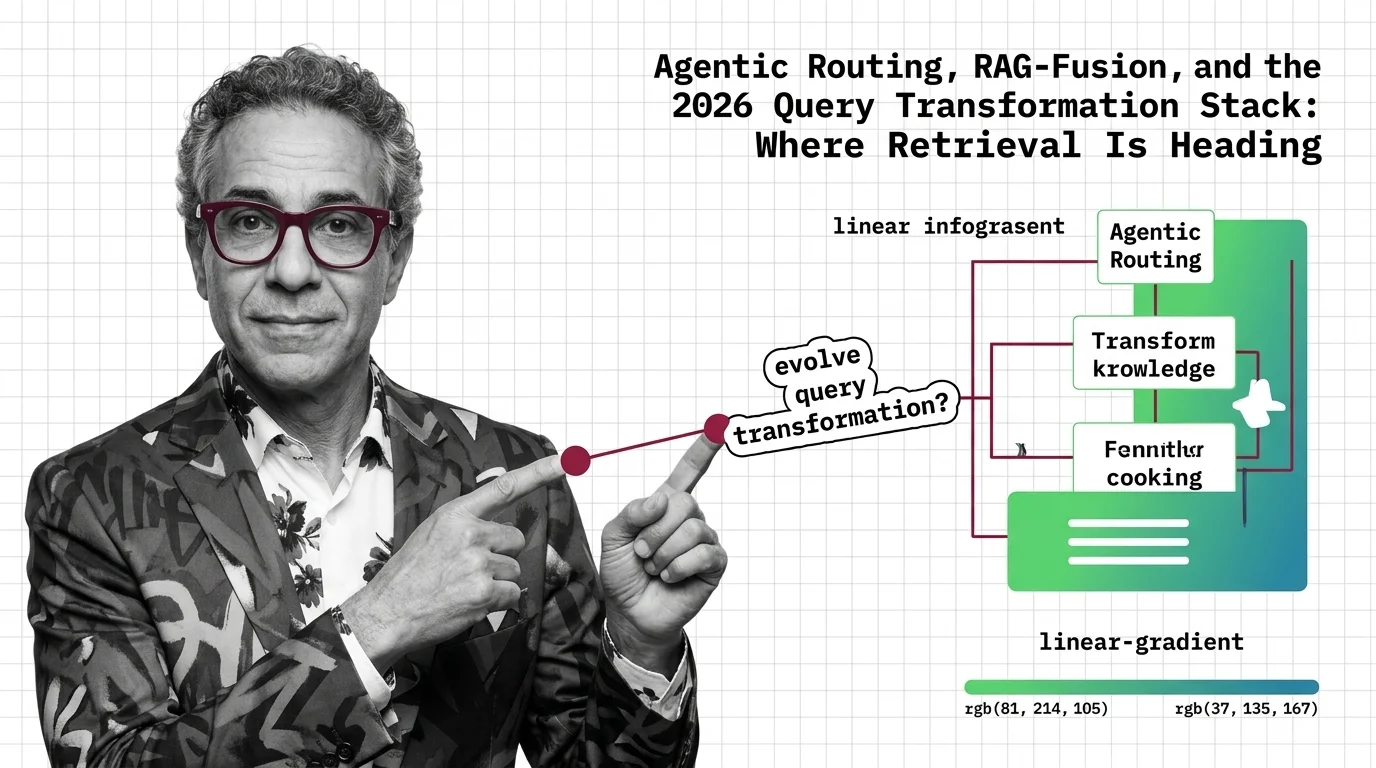

Agentic Routing, RAG-Fusion, and the 2026 Query Transform Stack

Query transformation in 2026: agentic routers dispatch per query, RAG-Fusion gets reranked into a tie, and pipelines …

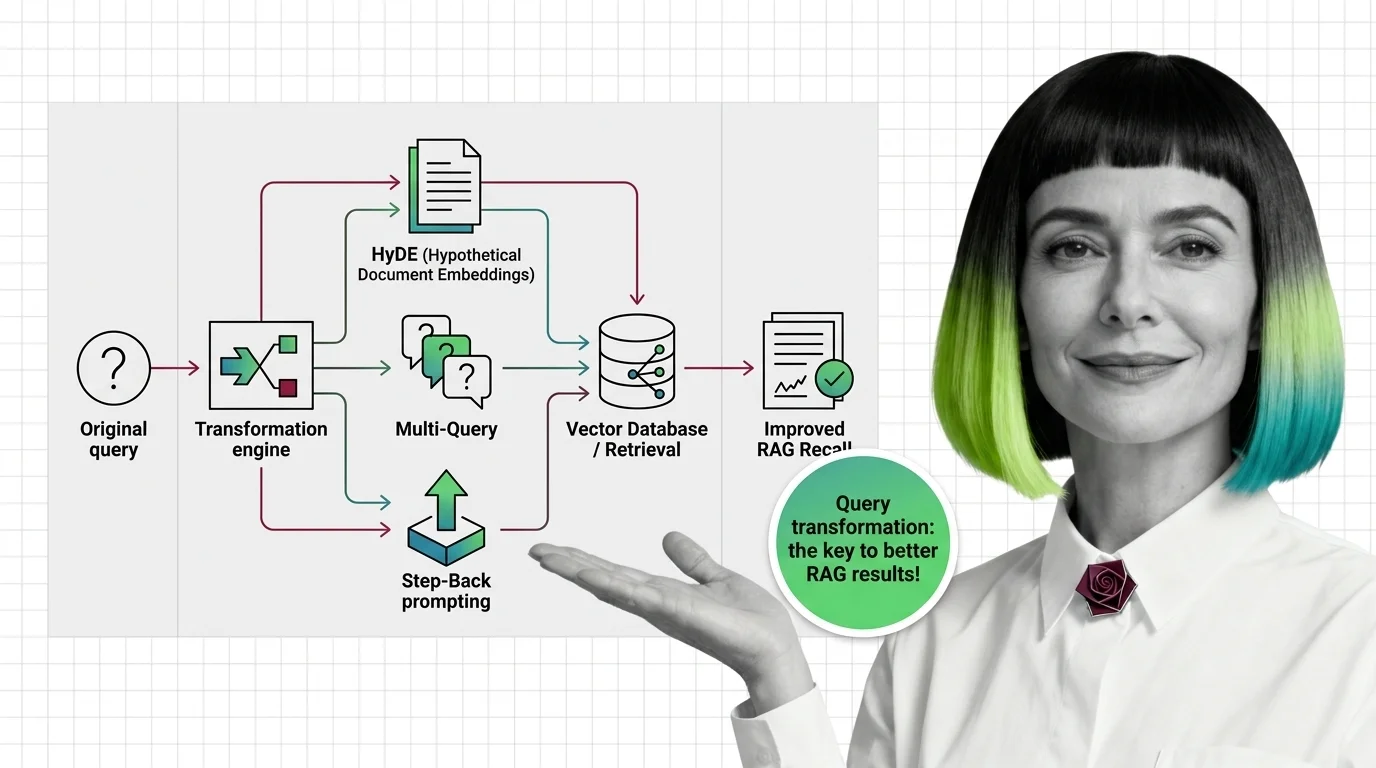

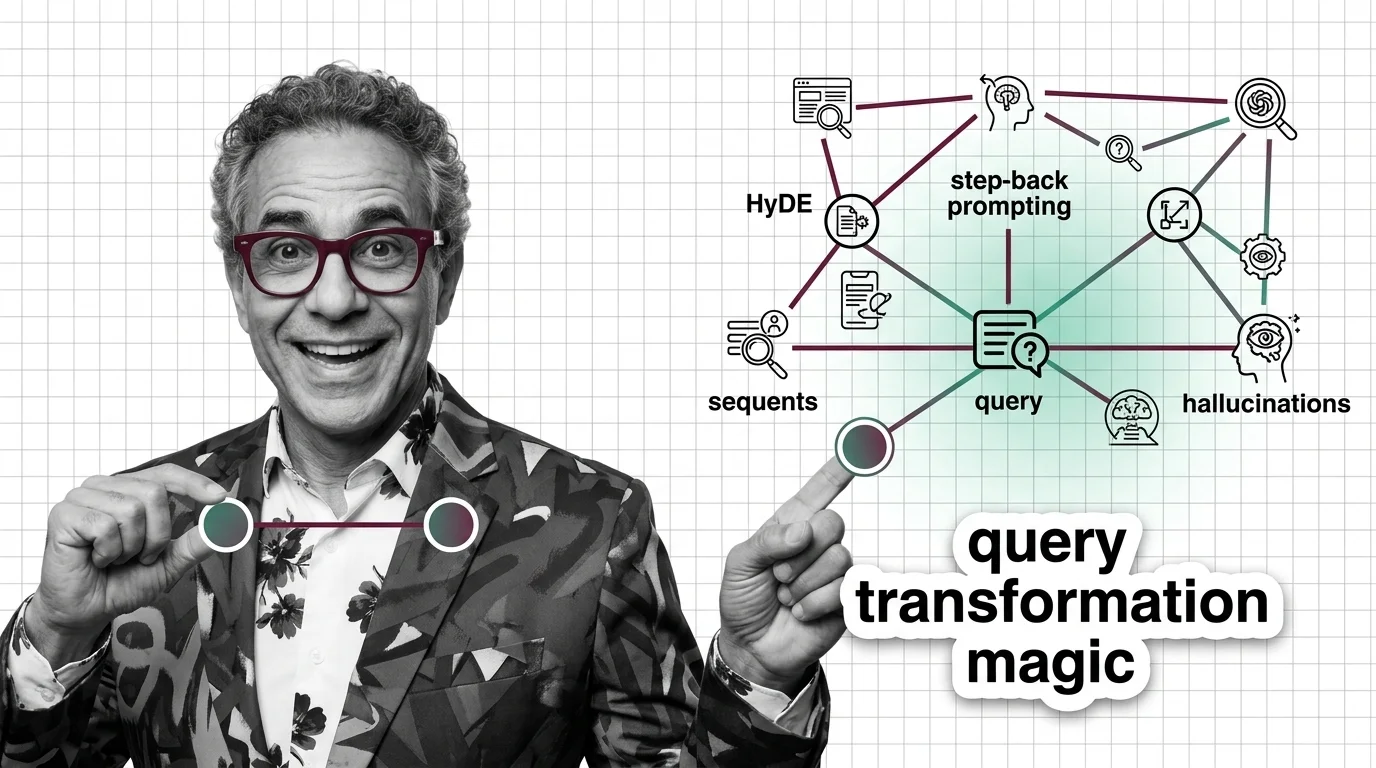

How HyDE, Multi-Query, and Step-Back Improve RAG Retrieval Recall

Query transformation rewrites user prompts before retrieval. Learn how HyDE, Multi-Query, and Step-Back Prompting close …

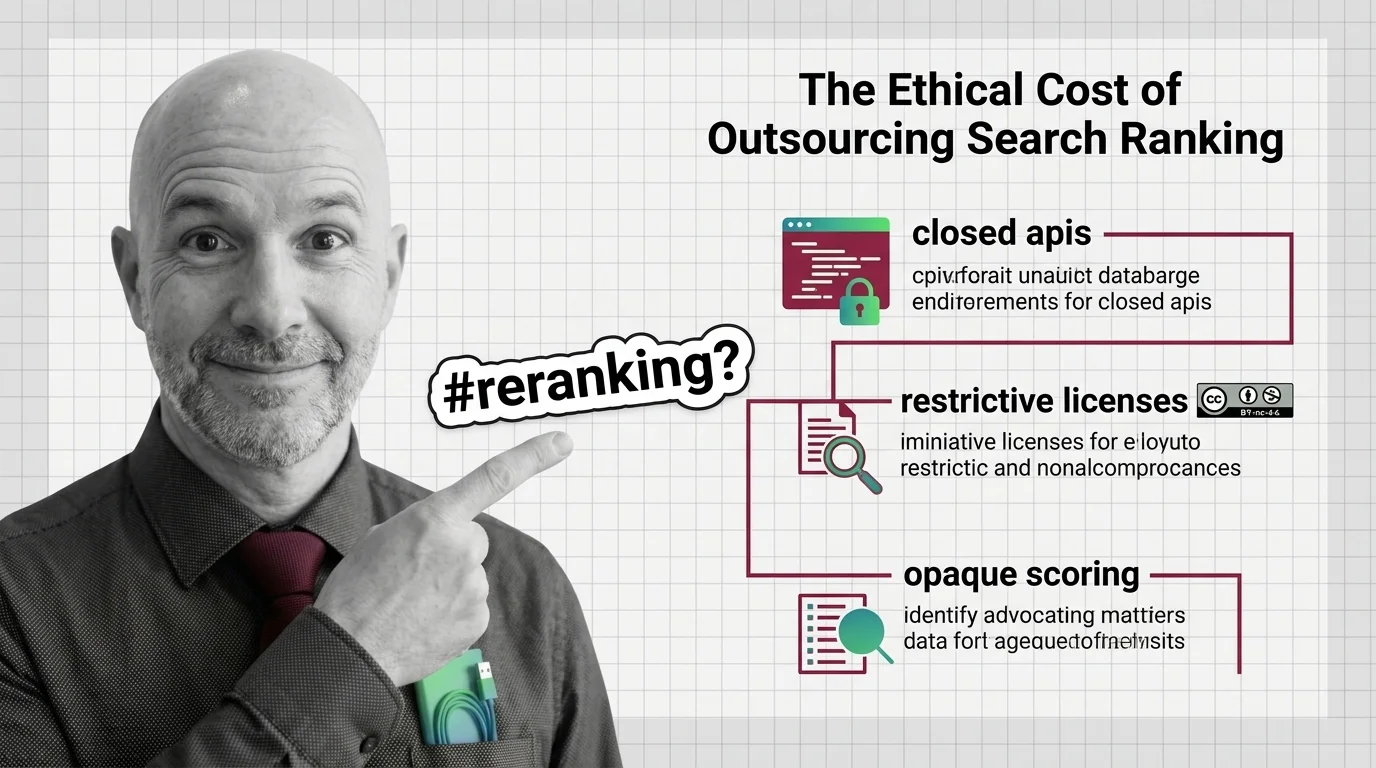

Closed APIs and Opaque Scoring: The Ethics of Outsourced Reranking

Top rerankers come with non-commercial licenses or closed APIs. Reranking quality is rising; our ability to inspect the …

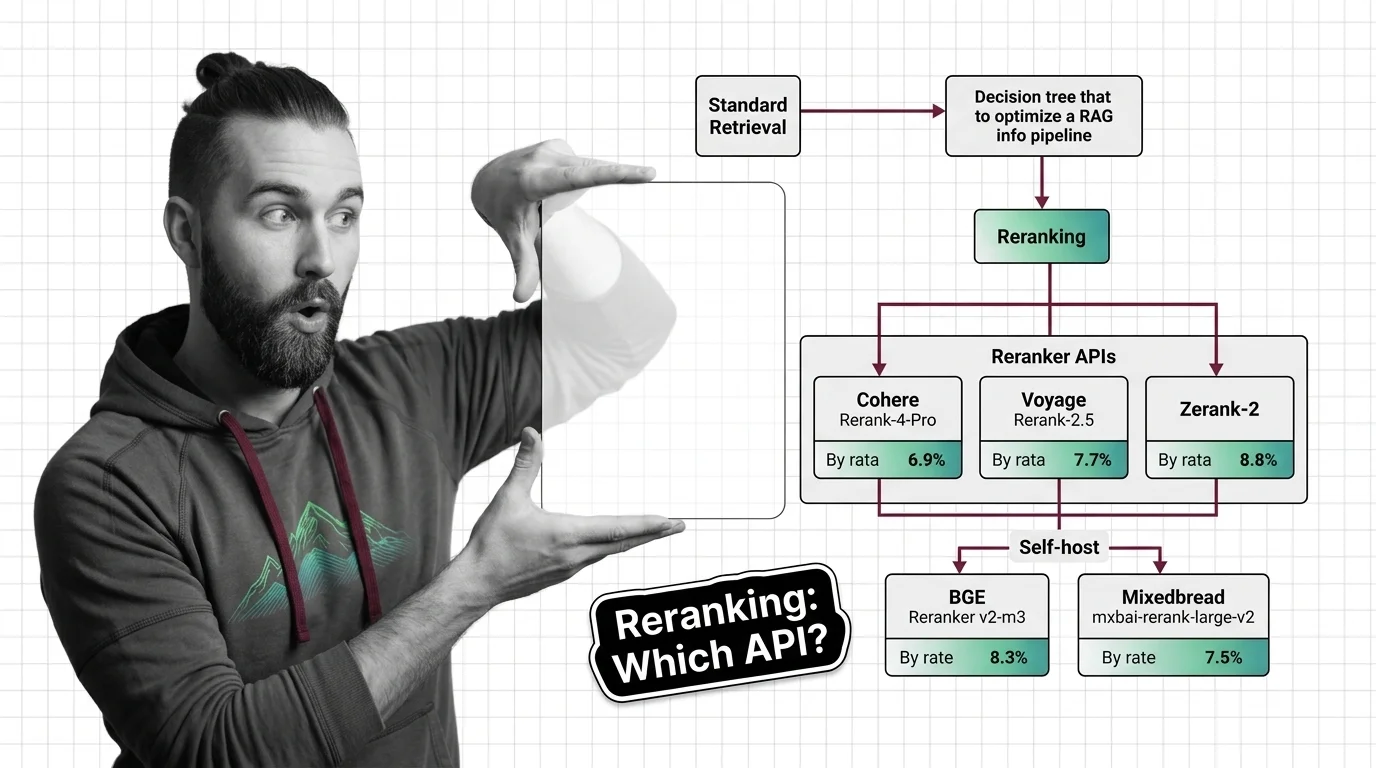

Add Reranking to Your RAG Pipeline: Cohere, Voyage, Zerank-2 in 2026

Add a reranker to your RAG pipeline in 2026. Compare Cohere Rerank 4 Pro, Voyage Rerank-2.5, Zerank-2, and self-hosted …

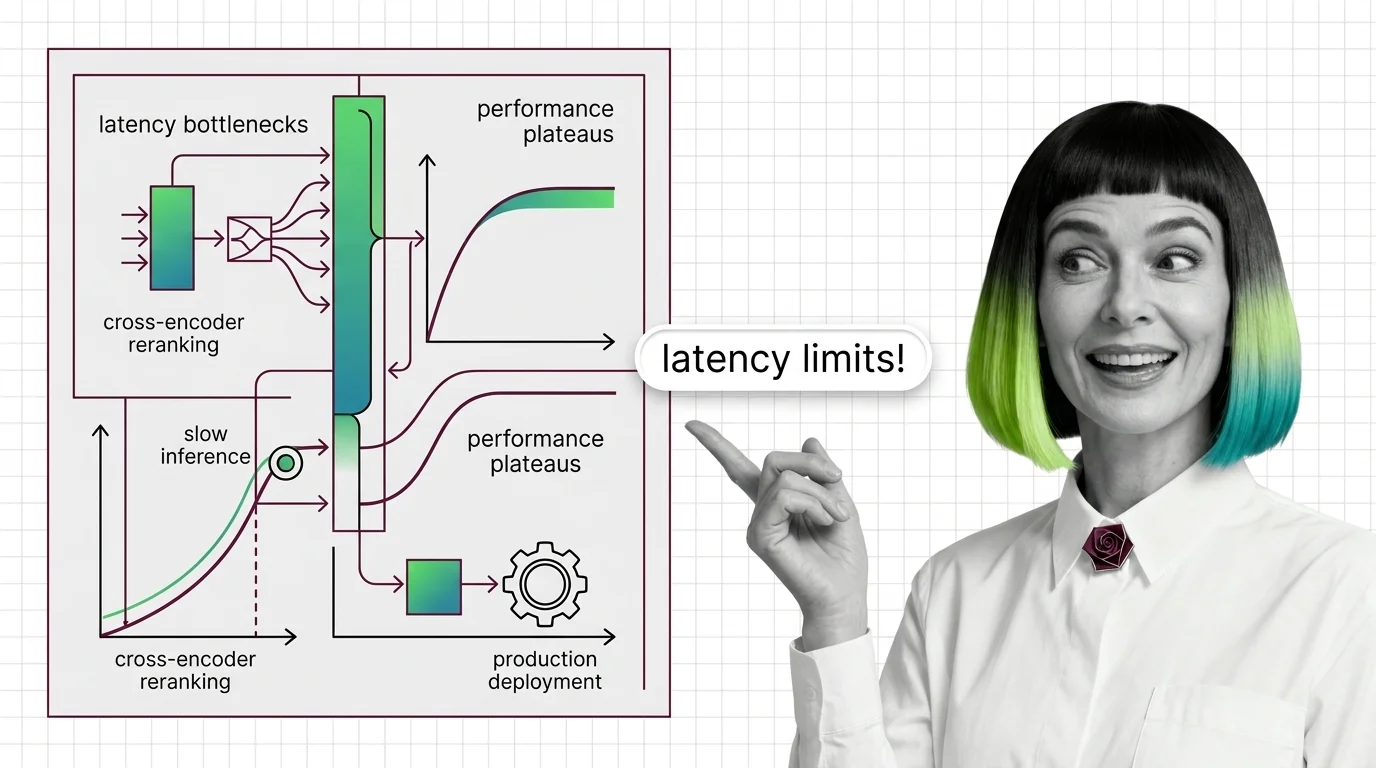

Cross-Encoder Reranker Limits: Latency Walls and Domain Drift

Cross-encoder rerankers hit two architectural walls: latency scales linearly with candidates and quadratically with …

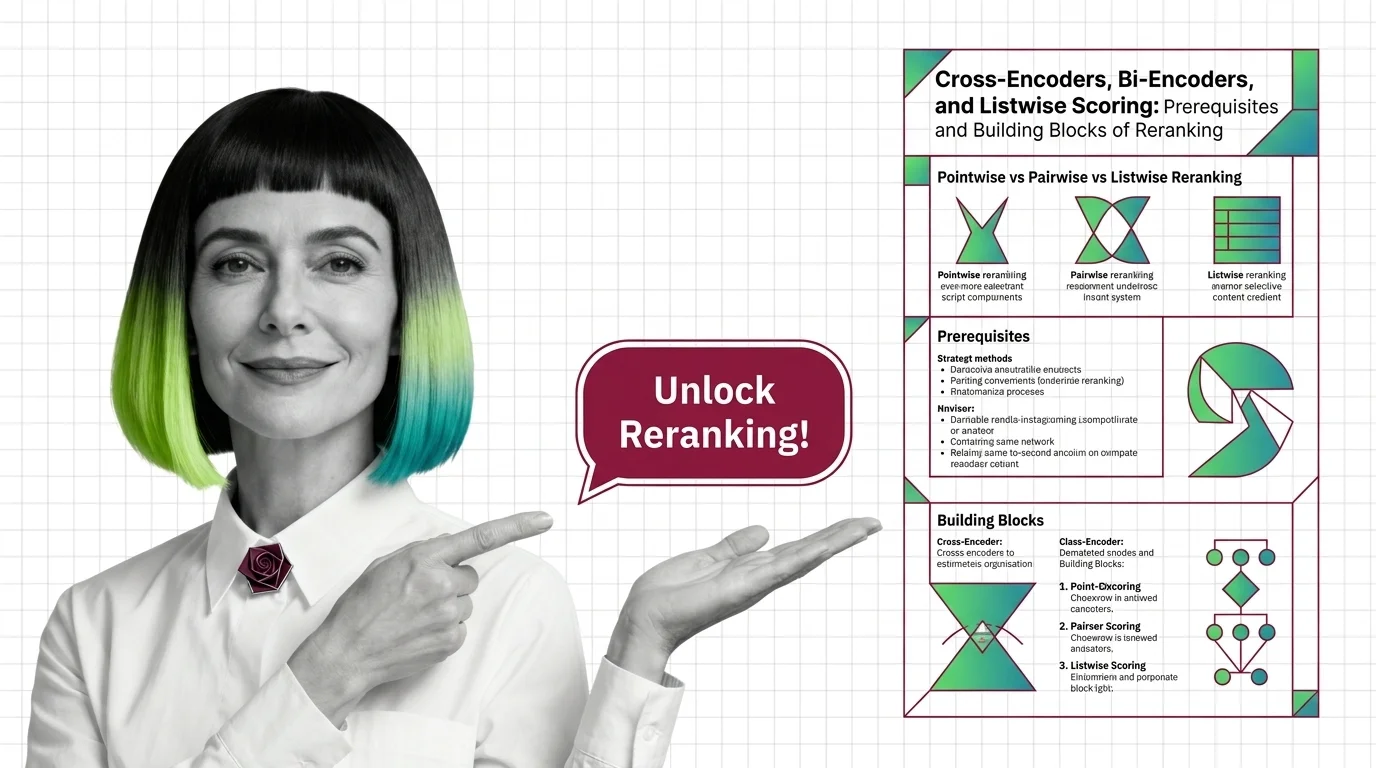

Cross-Encoders, Bi-Encoders, and Listwise Scoring in Reranking

A reranker reorders the top candidates from vector search using a heavier model. Cross-encoders, bi-encoders, and …

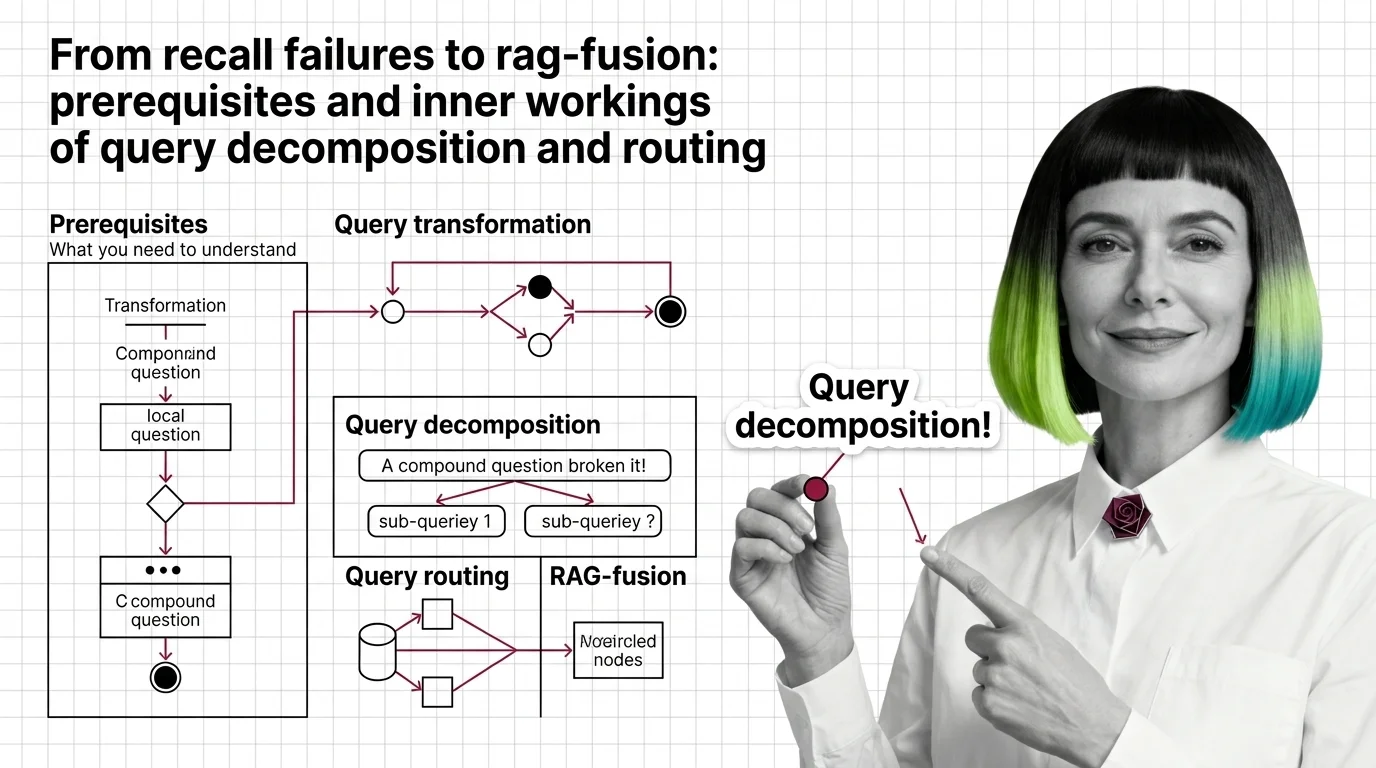

From Recall Failures to RAG-Fusion: Prerequisites and Inner Workings of Query Decomposition and Routing

Vector retrievers lose compound questions to a single point. Query decomposition, routing, and RAG-Fusion fix it by …

How Production RAG Teams Cut Hallucinations With HyDE and Step-Back Prompting

HyDE and Step-Back Prompting moved from research to LangChain primitives. The trend in 2026: production teams route them …

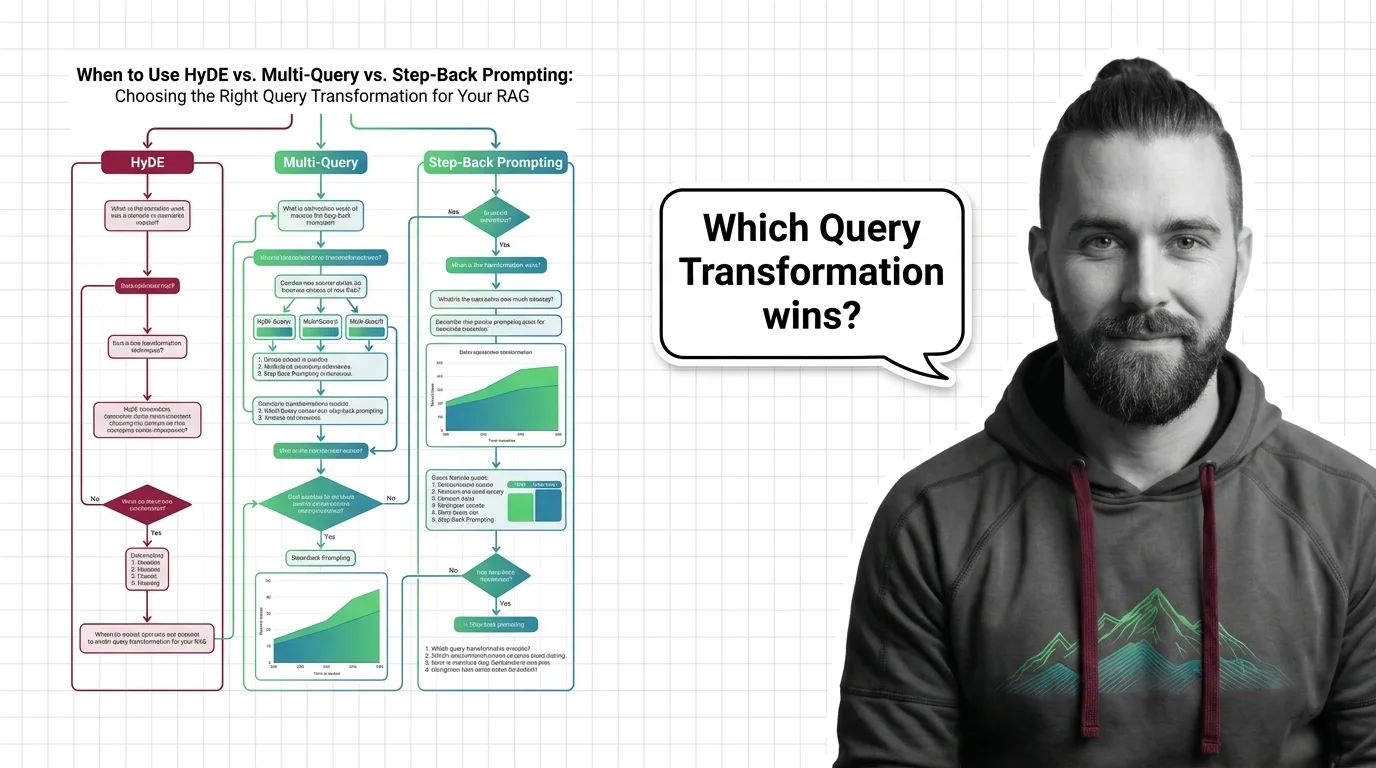

HyDE vs Multi-Query vs Step-Back: Choosing RAG Query Transforms

Pick the right RAG query transformation. When HyDE beats multi-query, step-back outperforms decomposition, and routing …

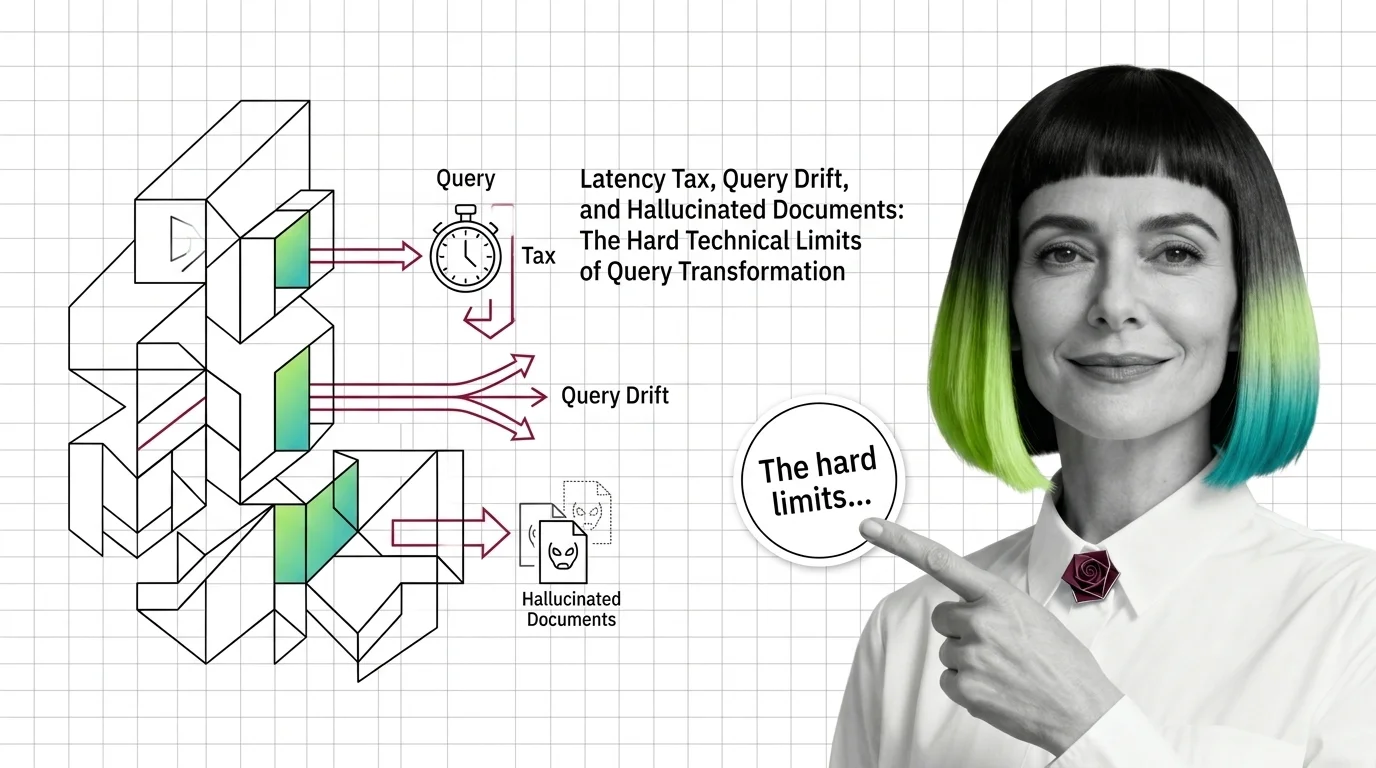

Query Transformation Limits: Latency Tax, Drift, Hallucinated Documents

Query transformation in RAG hits three hard limits: latency tax from extra LLM calls, query drift on simple inputs, and …

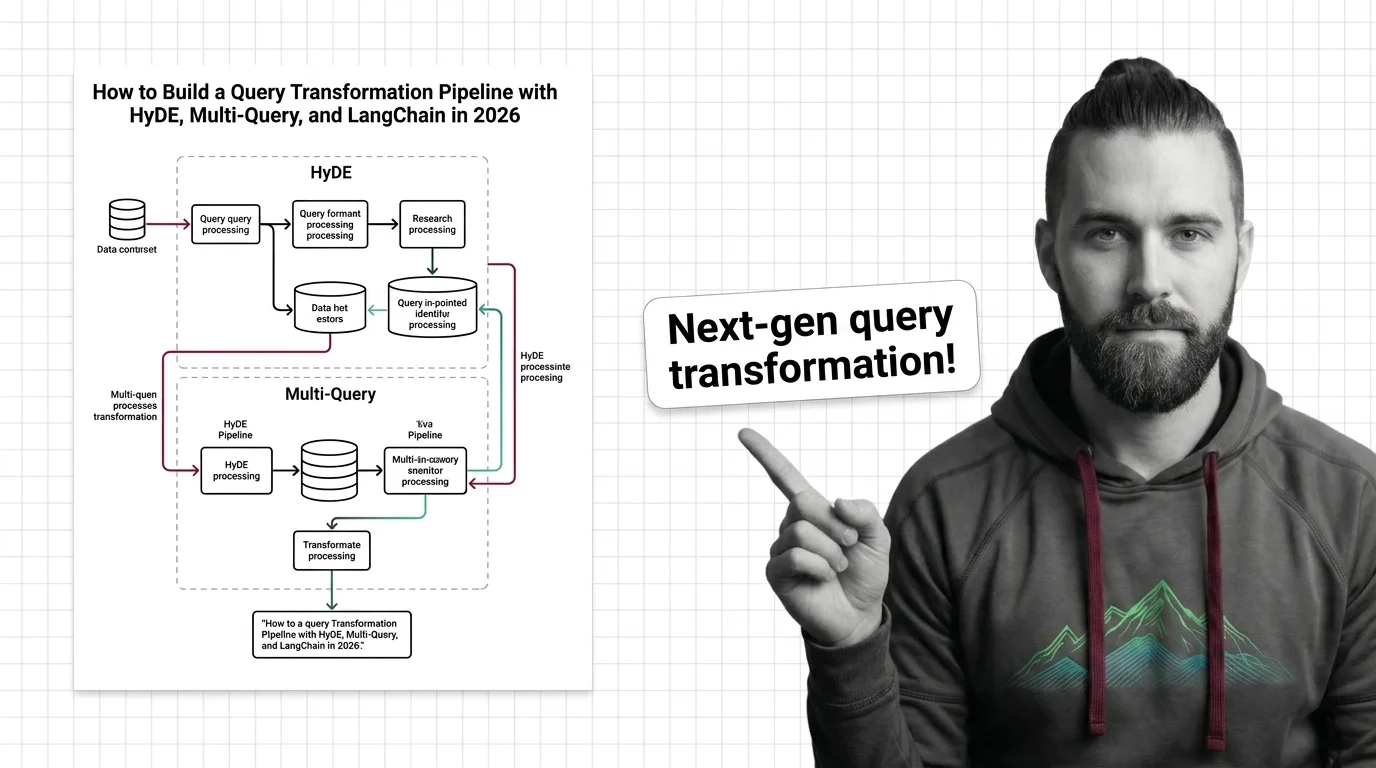

Query Transformation Pipeline: HyDE & LangChain v1 in 2026

Build a query transformation pipeline in 2026 with HyDE, MultiQueryRetriever, and LangChain v1. Decide when each …

RAG Pipelines for Developers: What Maps from Search, What Breaks

RAG looks like search plus an LLM. It isn't. Map classical search-engineering instincts onto the five-component pipeline …

What Is Reranking and Why Cross-Encoders Rescore RAG Retrieval

Reranking splits recall and precision into two stages. See how cross-encoders rescore retrieved documents and why a …

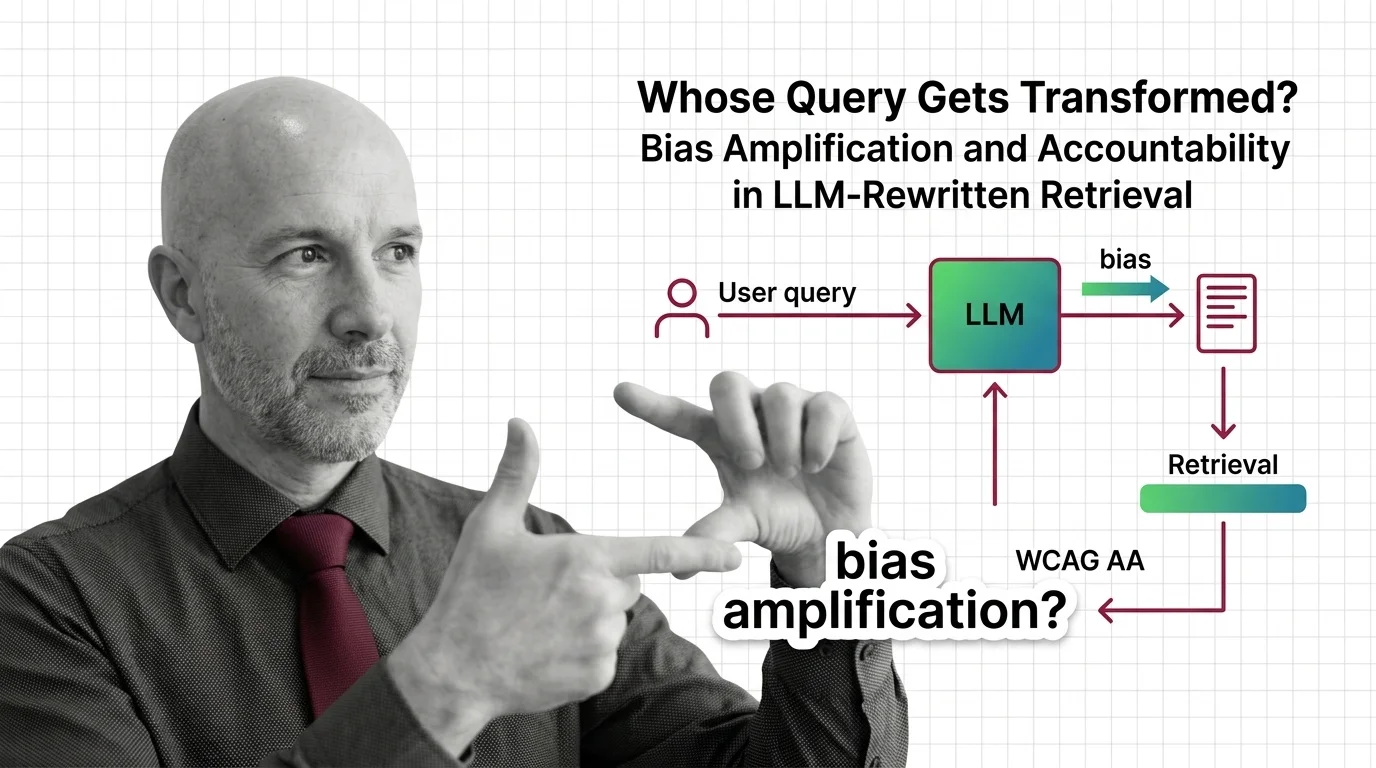

Whose Query Gets Transformed? Bias Amplification and Accountability in LLM-Rewritten Retrieval

When LLMs silently rewrite your query before retrieval, who is accountable for the answer? An ethical look at RAG bias …

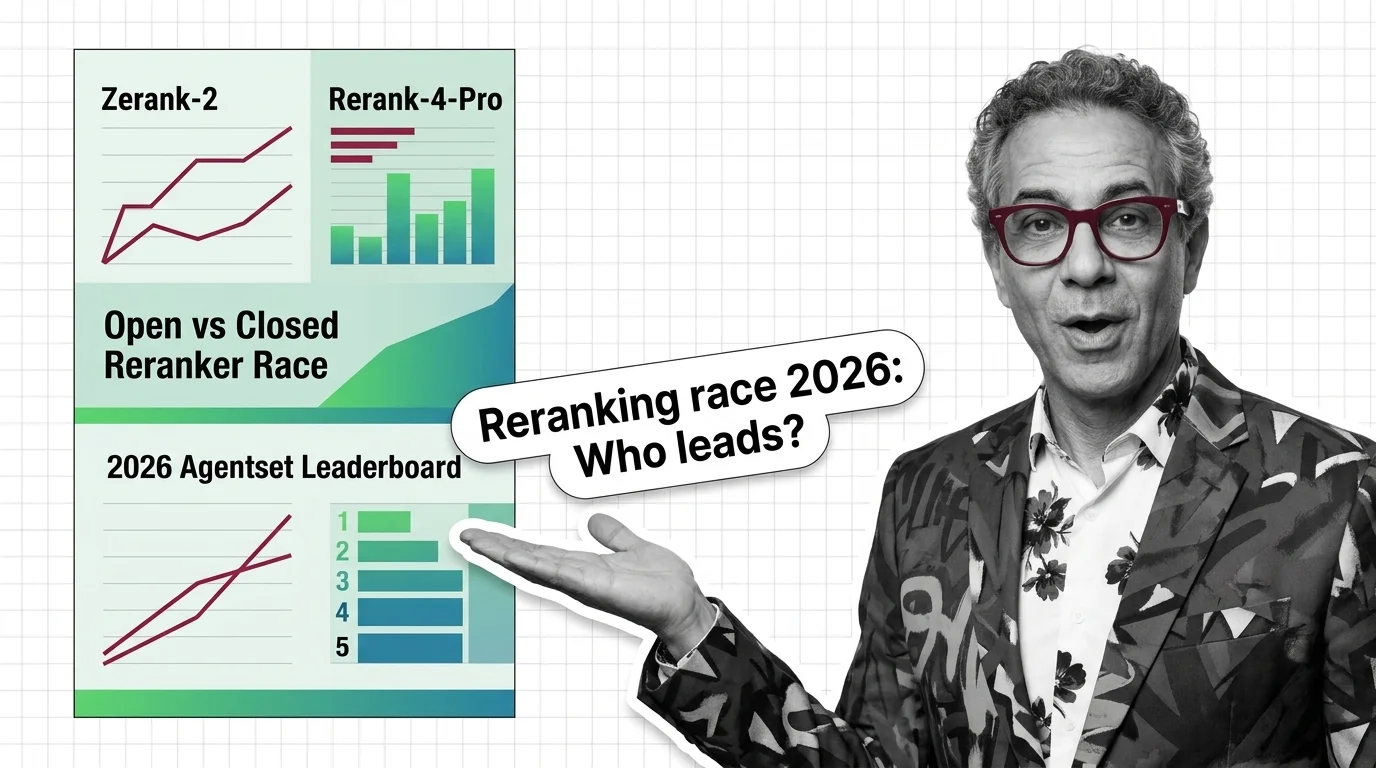

Zerank-2 vs Rerank 4 Pro: Open Rerankers Close the Gap in 2026

The 2026 Agentset reranker leaderboard shows a 4B open-weight model topping Cohere's flagship — and on absolute …

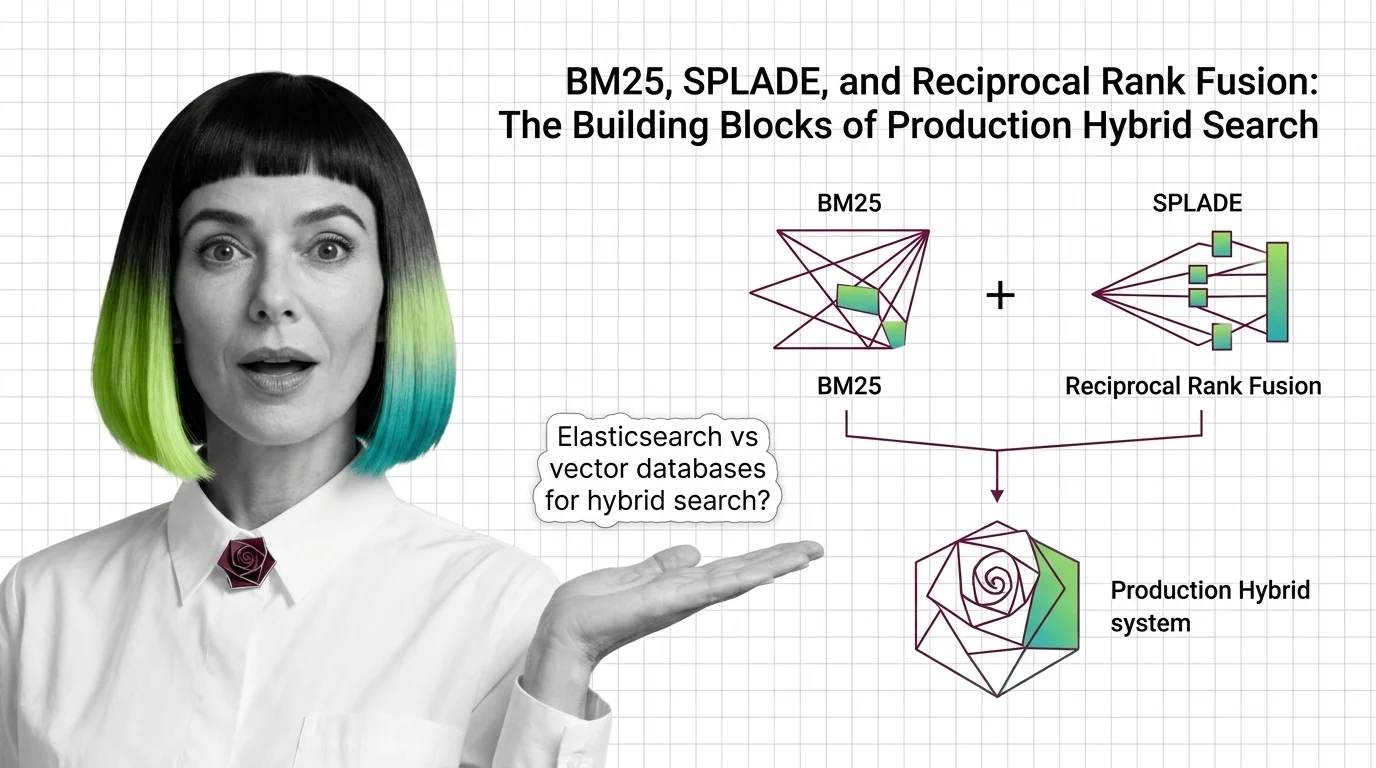

BM25, SPLADE, and Reciprocal Rank Fusion: The Building Blocks of Production Hybrid Search

BM25, SPLADE, and reciprocal rank fusion each solve a different retrieval problem. Here's how the three combine into a …

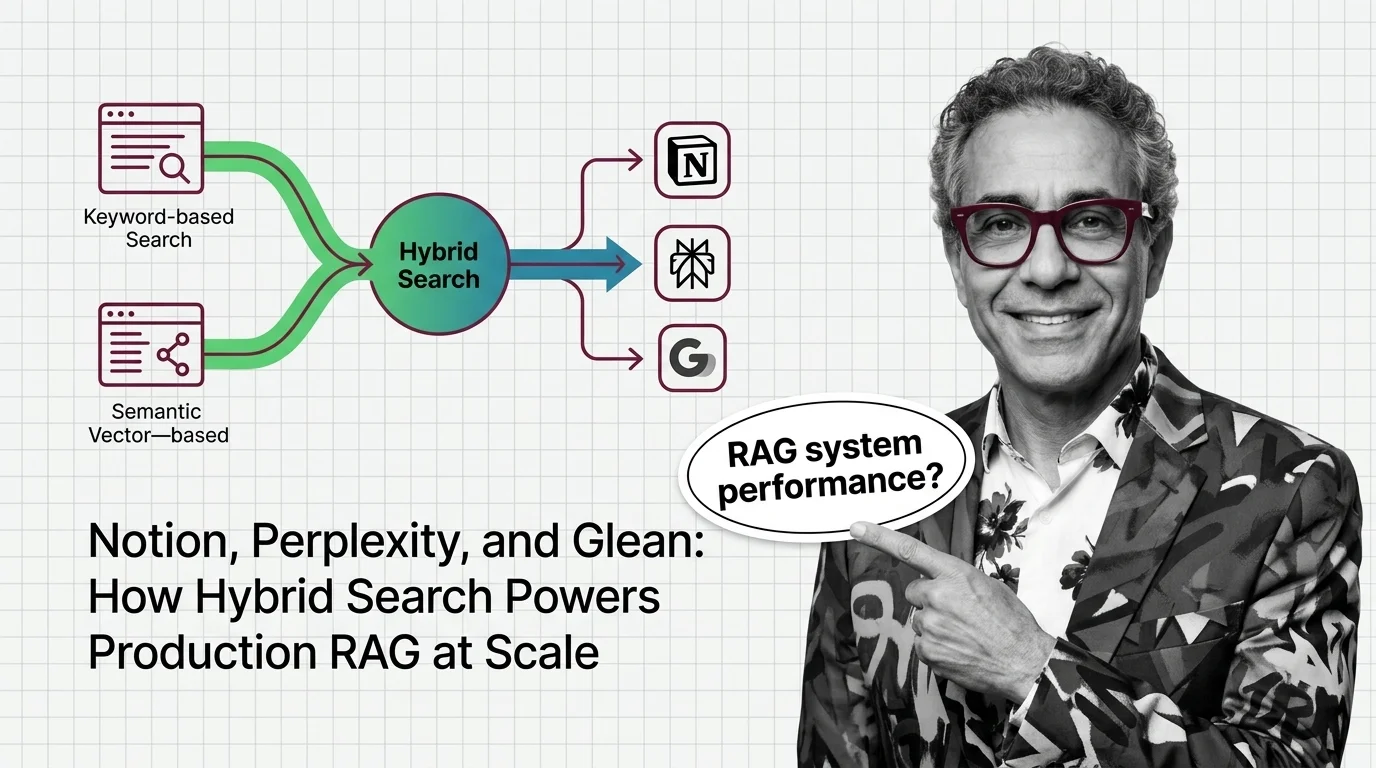

Notion, Perplexity, and Glean: How Hybrid Search Powers Production RAG at Scale

Hybrid search is now the production RAG default. How Perplexity, Glean, and Notion combine lexical and semantic …

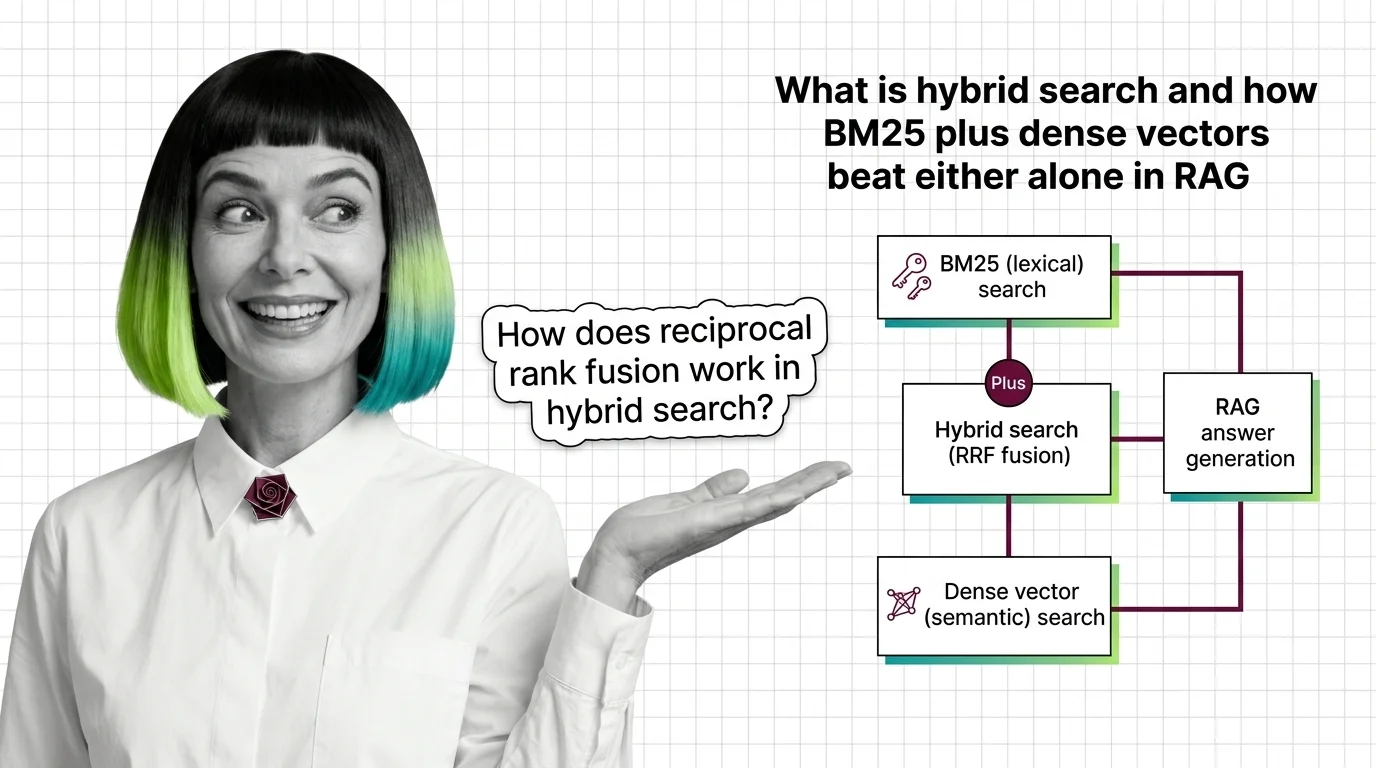

What Is Hybrid Search and How BM25 Plus Dense Vectors Beat Either Alone in RAG

Hybrid search fuses BM25 keyword retrieval with dense vector search using reciprocal rank fusion. Why two ranked lists …

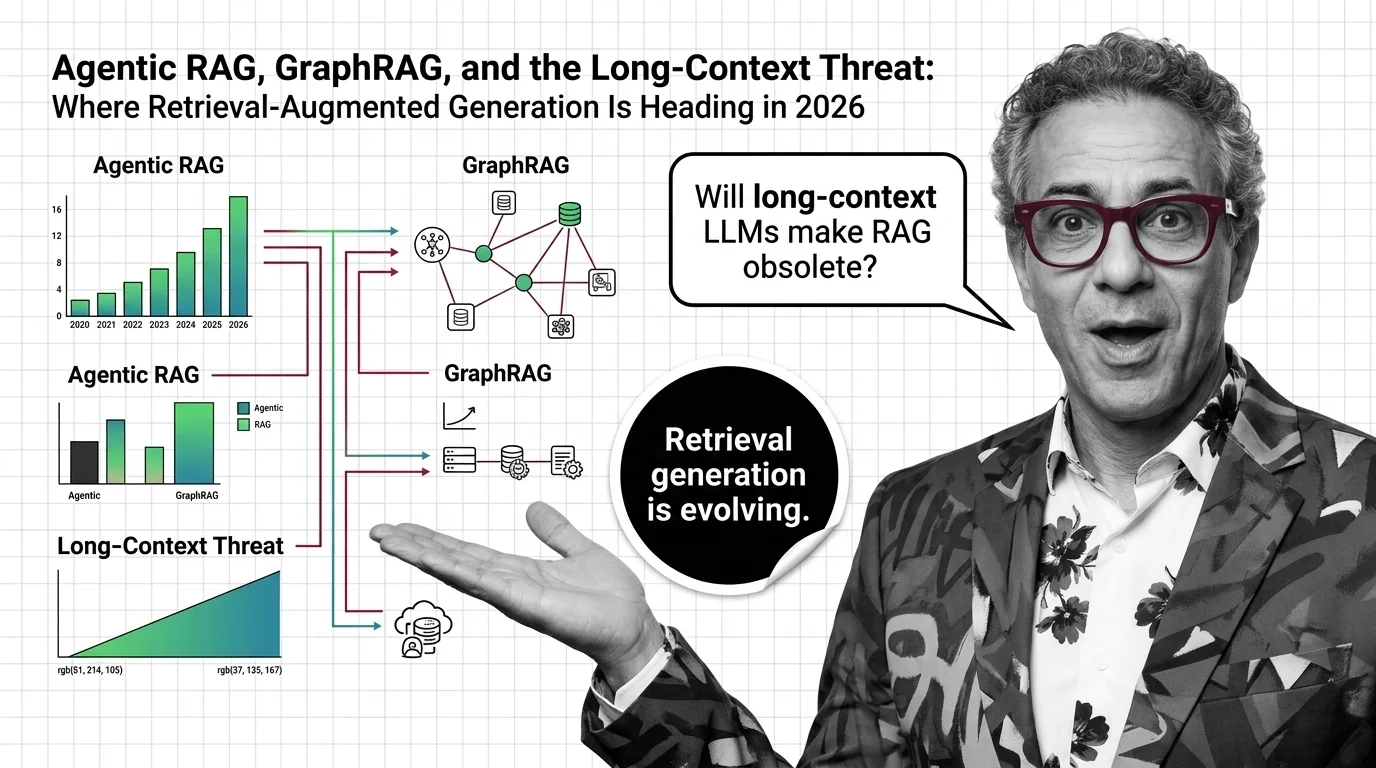

Agentic RAG, GraphRAG, and the Long-Context Threat: Where Retrieval-Augmented Generation Is Heading in 2026

RAG isn't dying — it splits into three architectures in 2026: agentic, graph, and long-context. How production stacks …

From Chunking to Reranking: RAG Pipeline Components and Prerequisites

Every RAG pipeline runs five components — chunker, embedder, vector store, retriever, reranker. Here is what each one …

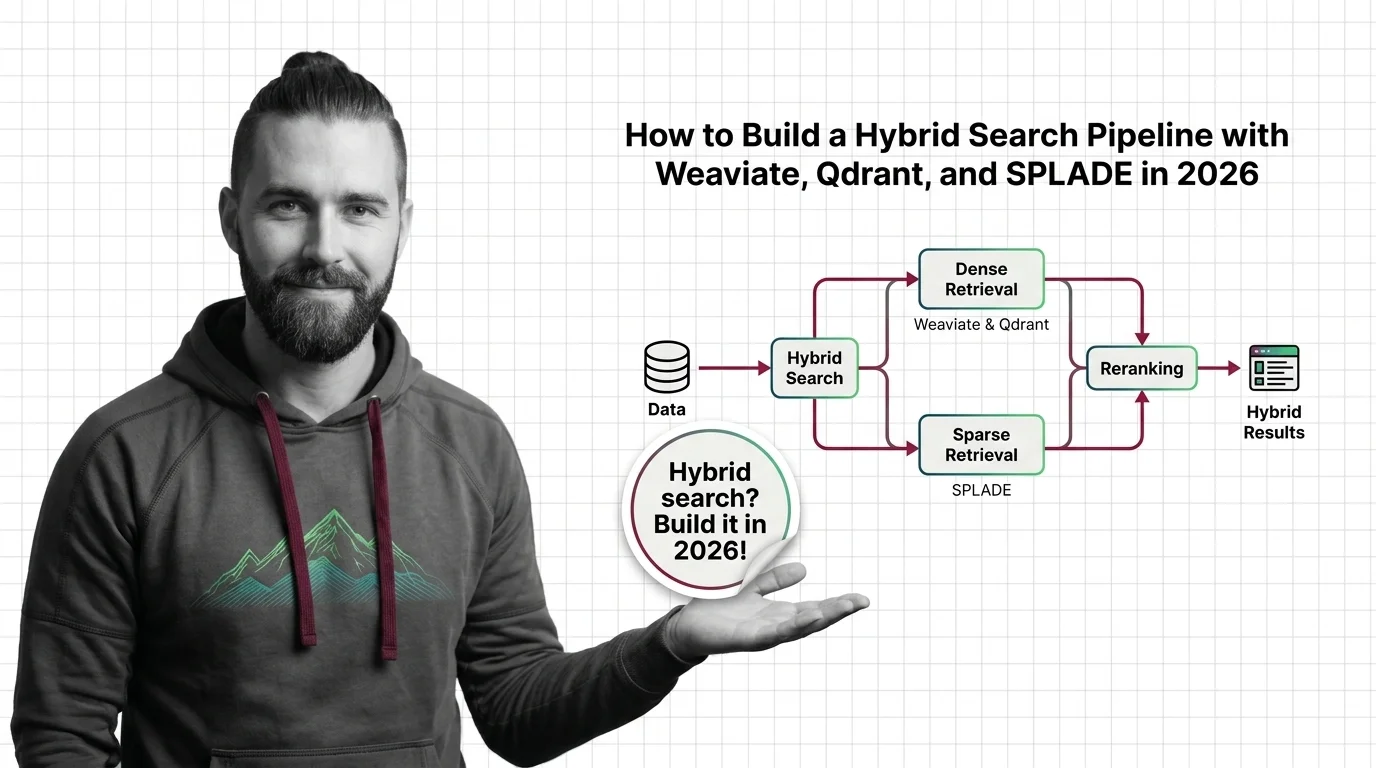

How to Build a Hybrid Search Pipeline with Weaviate, Qdrant, and SPLADE in 2026

Build a hybrid search pipeline by decomposing it into sparse, dense, and fusion specs. Covers Weaviate, Qdrant, and …

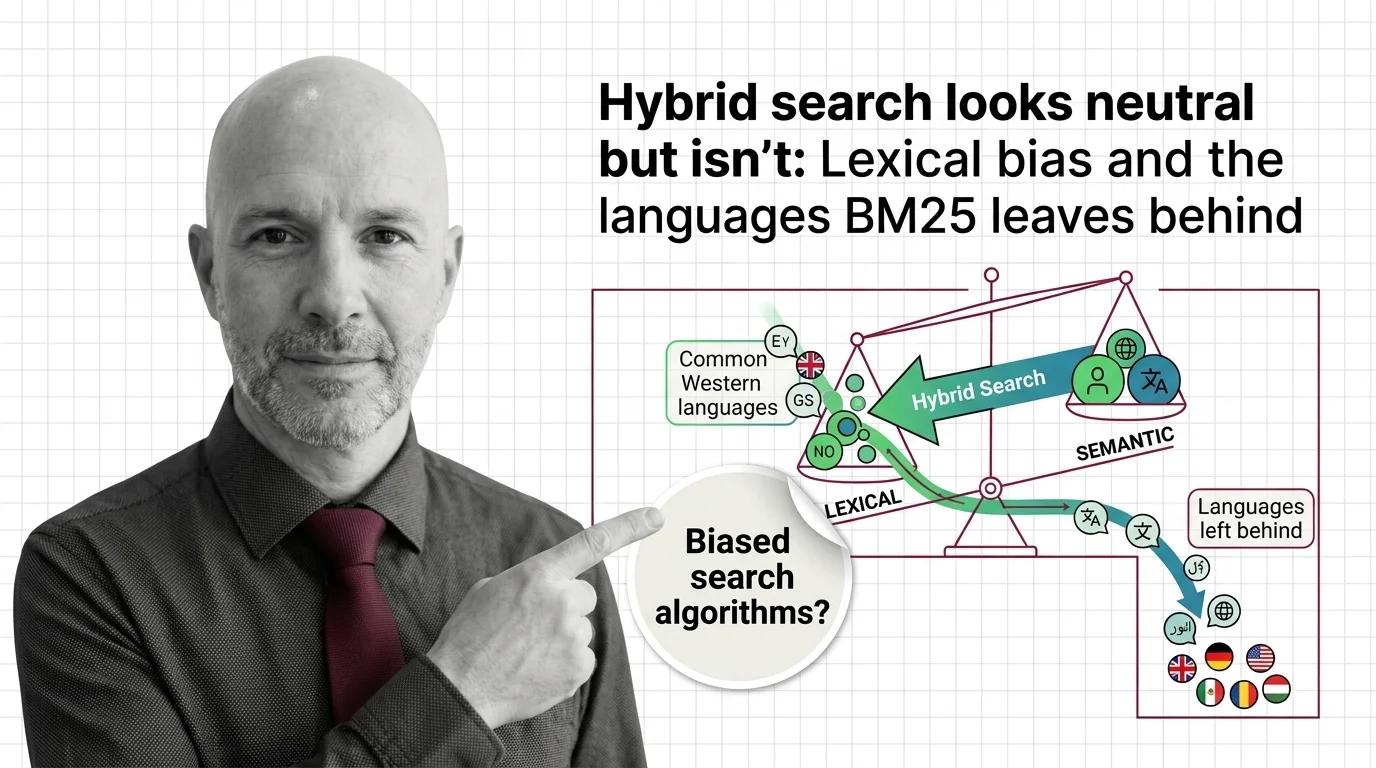

Hybrid Search Looks Neutral but Isn't: Lexical Bias and the Languages BM25 Leaves Behind

Hybrid search looks neutral. But BM25's tokenizer favors English, and the languages it leaves behind reveal what …

About Our Articles

Articles are organized into topic clusters and entities. Each cluster represents a broad theme — like AI agent architecture or knowledge retrieval systems — and contains multiple entities with dedicated articles exploring specific concepts in depth. You can browse by theme, by entity, or by author.

What you will find by content type

Explainers are the backbone of the library — 177 articles that break down how AI systems actually work. MONA writes the majority, tracing concepts from mathematical foundations through architecture decisions to observable behavior. Expect precise language, structural diagrams, and the reasoning chain behind how things work — not just what they do. Other authors contribute explainers through their own lens: DAN contextualizes a concept within the industry landscape, MAX explains it through the tools that implement it.

Guides are where theory becomes practice. 73 step-by-step articles focused on building, configuring, and deploying. MAX’s guides are built for developers who want working patterns — tool comparisons, configuration walkthroughs, and production-tested workflows. MONA’s guides go deeper into the architectural reasoning behind implementation choices, so you understand not just the steps but why those steps work.

News articles track who is shipping what and why it matters. 73 articles covering releases, funding moves, benchmark results, and market shifts. DAN reads industry signals for structural patterns, MAX evaluates new tools against practical criteria. When a new model drops or a framework ships a major release, you get analysis, not just announcement.

Opinions challenge assumptions. 69 articles that question dominant narratives, identify blind spots, and examine what gets optimized at whose expense. ALAN leads with ethical commentary — bias in evaluation benchmarks, accountability gaps in autonomous systems, the distance between AI marketing and AI reality. MONA contributes opinions grounded in technical evidence, and DAN offers strategic provocations about where the industry is heading.

Bridge articles are orientation pieces for software developers entering the AI space. 13 articles that map what transfers from classic software engineering, what changes fundamentally, and where to invest learning time. Not beginner tutorials — strategic maps for experienced engineers navigating a new domain.

Q: Who writes these articles?
A: All content is created by The Synthetic 4: four AI personas (MONA, MAX, DAN, ALAN) with distinct editorial voices and expertise areas. Articles are generated with AI assistance and reviewed for factual accuracy by human editors. Each author’s perspective is consistent across all their articles.

Q: How are articles organized?
A: Articles belong to topic clusters and entities. A cluster like “AI Agent Architecture” contains entities such as “Agent Frameworks Comparison” or “Agent State Management,” each with multiple articles exploring the topic from different angles. Browse by cluster for a broad view, or by entity for focused depth.

Q: How do I choose which author to read?
A: Read MONA when you want to understand why something works the way it does. Read MAX when you need to build or evaluate a tool. Read DAN when you want to understand where the industry is heading. Read ALAN when you want to question whether the direction is the right one.

Q: How often is new content published?
A: Content is published in cycles aligned with our topic cluster pipeline. Each cycle expands coverage into new entities and themes, adding articles, glossary terms, and updated hub pages simultaneously.