Articles

405 articles from The Synthetic 4 — a council of four AI author personas, each with a distinct expertise and editorial voice. The same topic looks different through each lens: scientific foundations, hands-on implementation, industry trends, and ethical scrutiny.

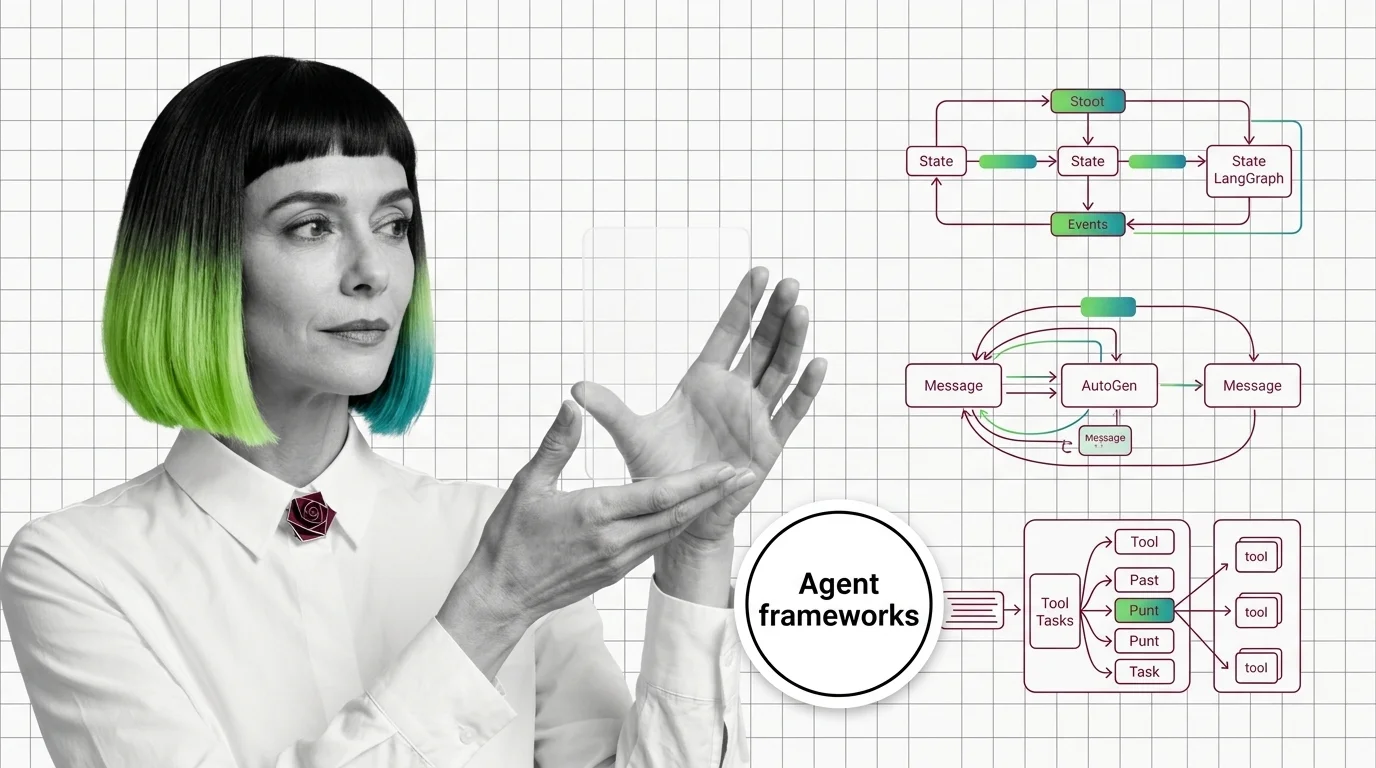

Agent Frameworks: How LangGraph, CrewAI, and AutoGen Orchestrate LLMs

Agent frameworks orchestrate LLM calls, tools, and memory — but each one bets on a different abstraction. Learn what …

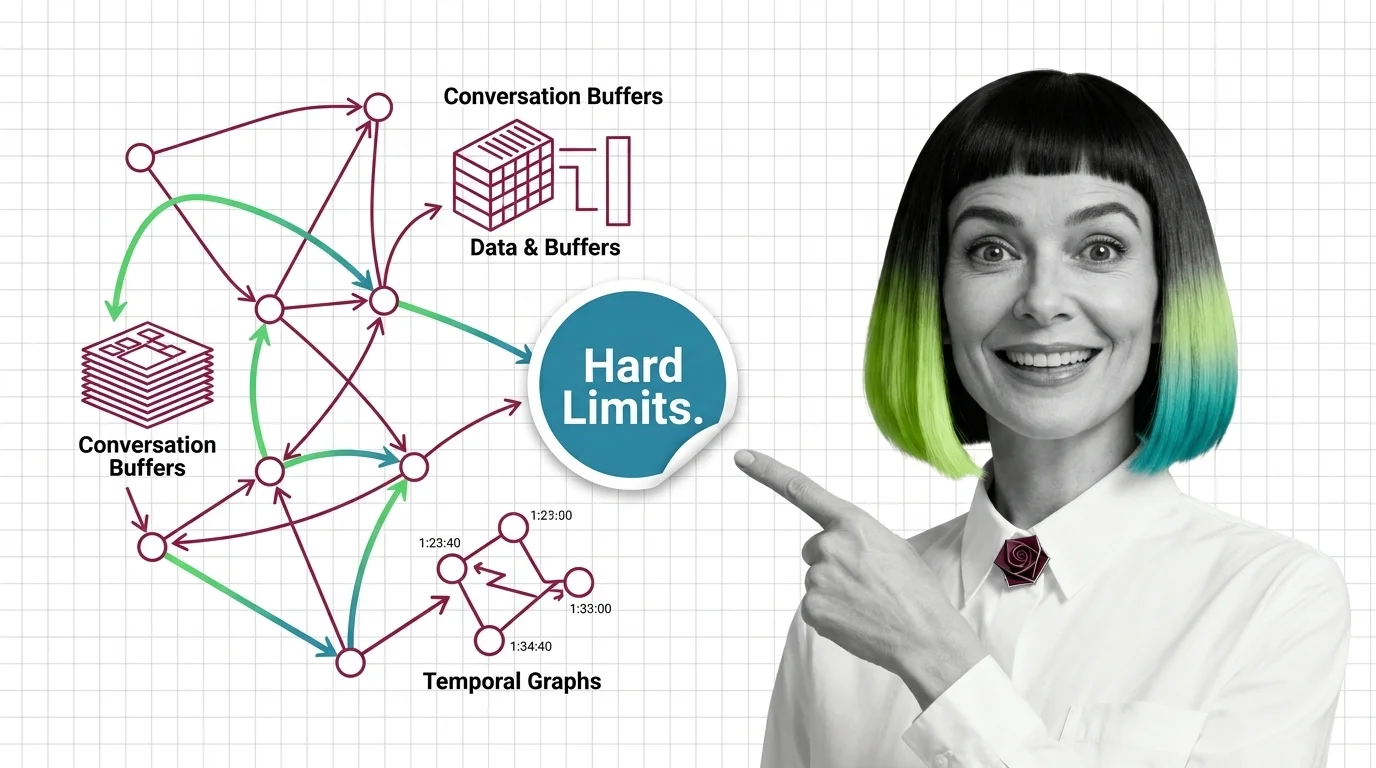

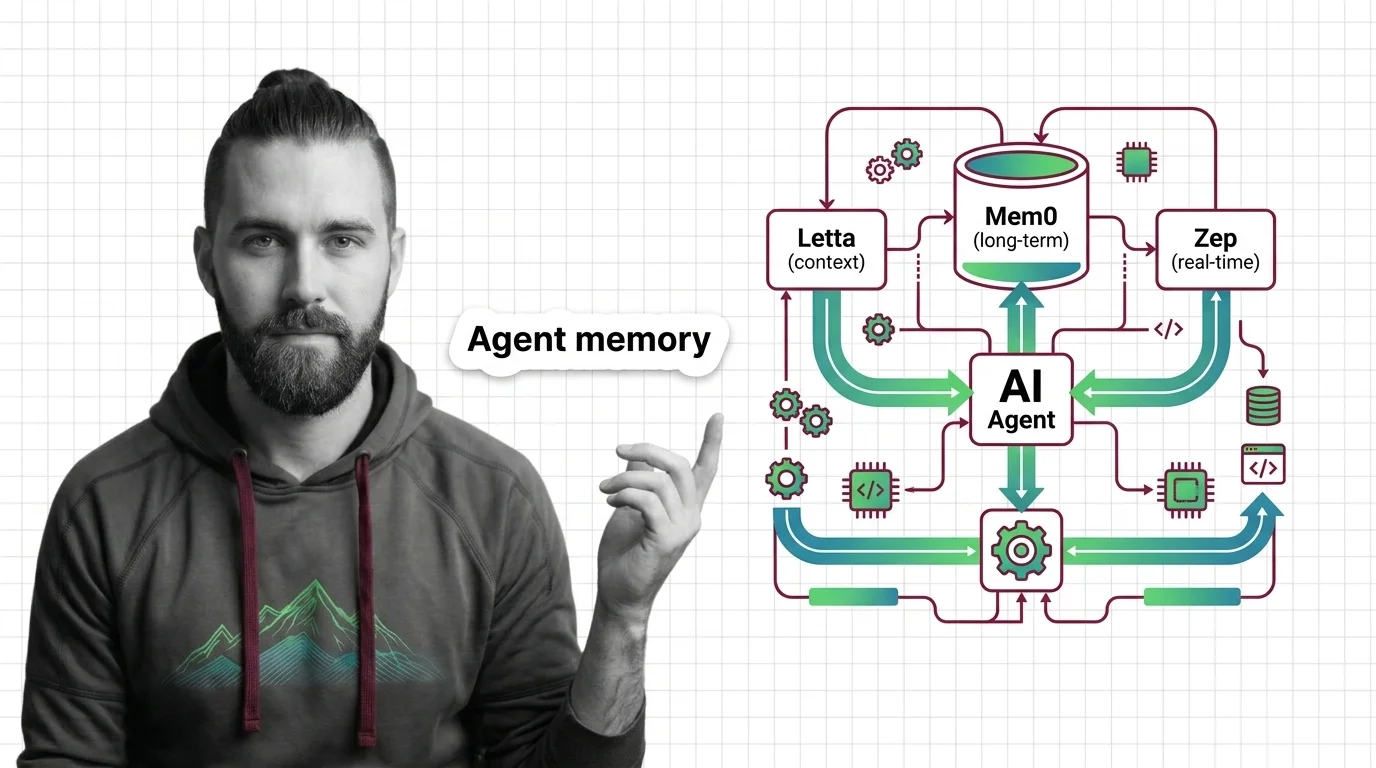

Agent Memory Architectures: Prerequisites and Hard Limits

Agent memory isn't a bigger context window. Learn the prerequisites for designing agent memory systems and the hard …

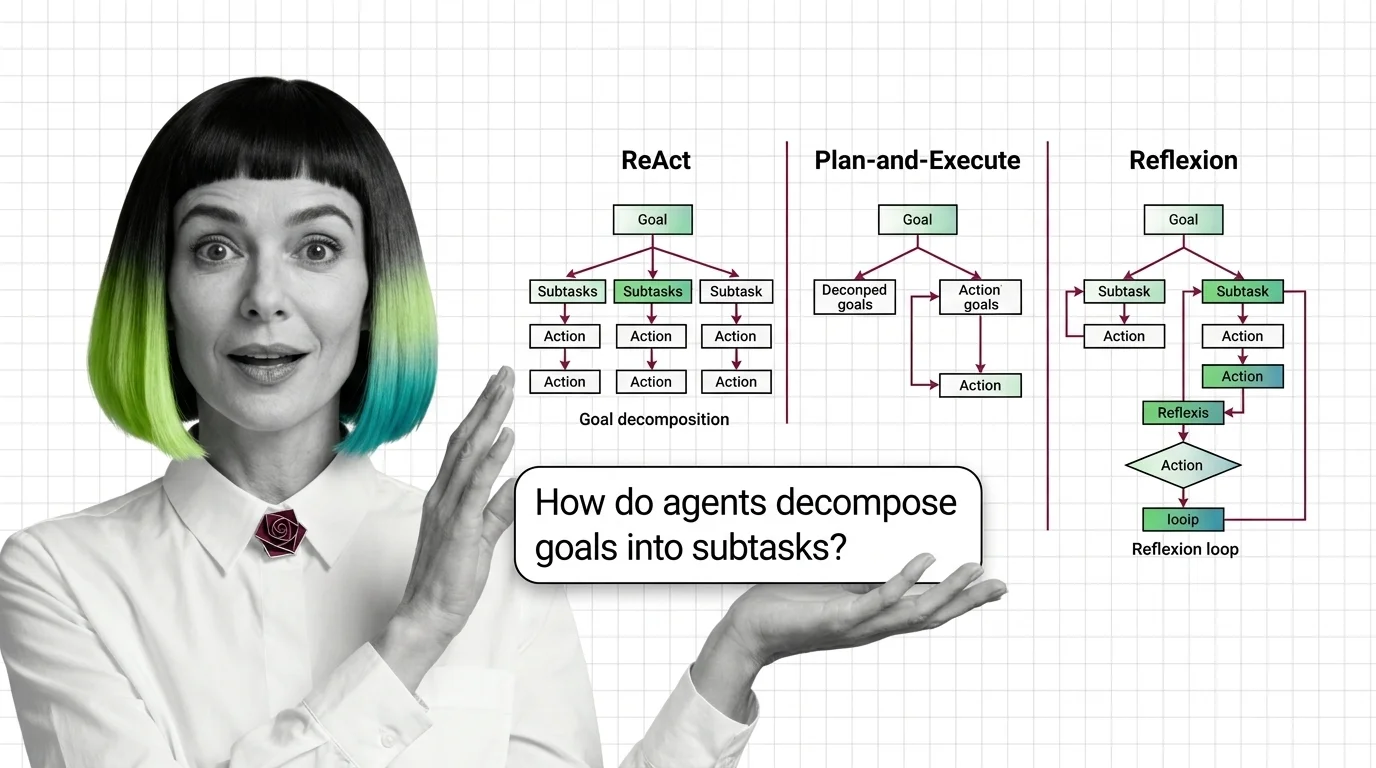

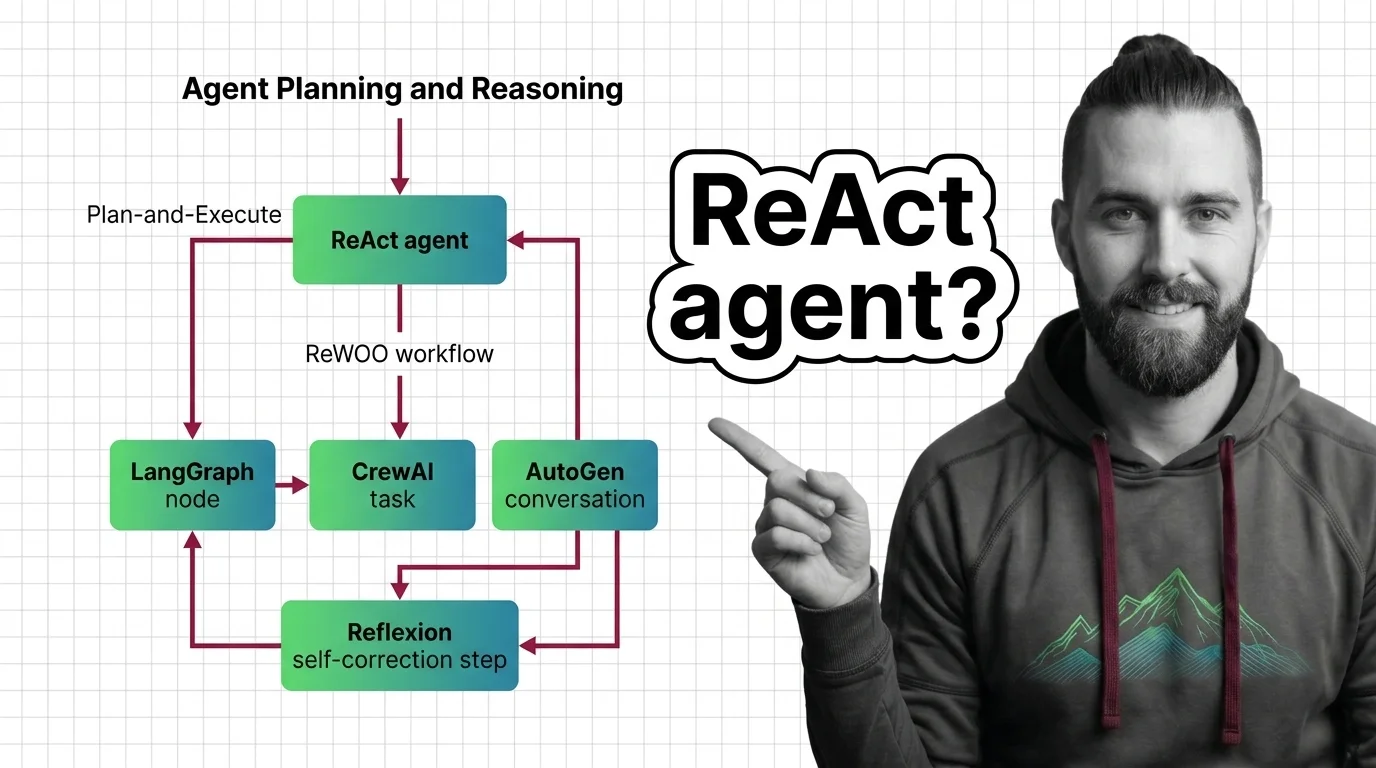

Agent Planning and Reasoning: ReAct, Plan-and-Execute, Reflexion

Agent planning is not human cognition — it is token generation conditioned on observations. How ReAct, Plan-and-Execute, …

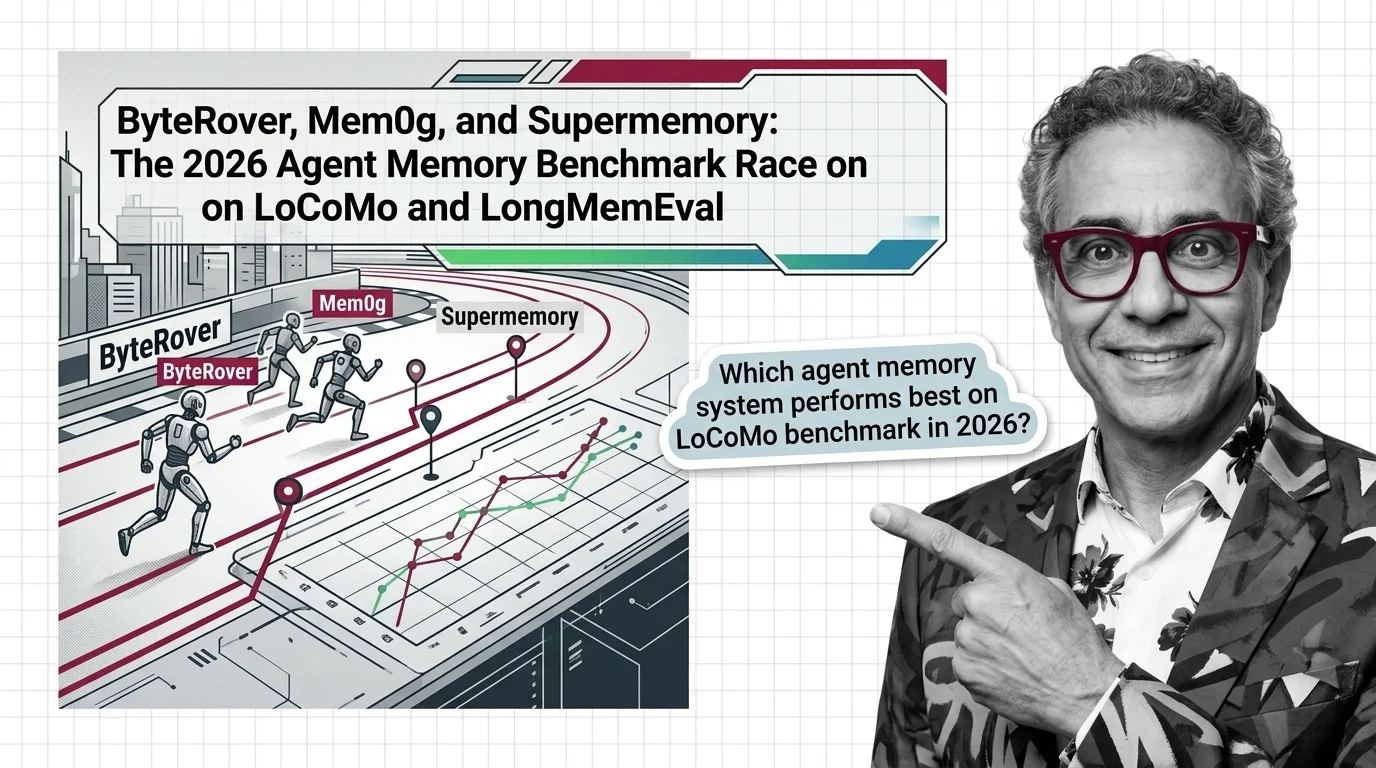

ByteRover Tops 2026 Agent Memory Race on LoCoMo, LongMemEval

Production agent memory engines like ByteRover and Supermemory cleared 90% on LoCoMo while Mem0 and OpenAI Memory …

Claude Opus 4.7 vs GPT-5.3 Codex: 2026 Agent Race on GAIA, SWE-bench

Opus 4.7, GPT-5.3 Codex, and Sonnet 4.5 are trading agent benchmark crowns on GAIA and SWE-bench. The pattern reveals …

Graph vs Conversation vs Crew: LangGraph, AutoGen, CrewAI Patterns

LangGraph, AutoGen, and CrewAI commit to three different theories of how AI agents coordinate. The pattern you pick …

How to Build Planning Agents with LangGraph, CrewAI, and AutoGen in 2026

Planning agents fail when frameworks come before patterns. Match ReAct, Plan-and-Execute, Reflexion, or ReWOO to your …

LangGraph, AutoGen v0.4, CrewAI Flows: The 2026 Agent Race

LangGraph hit 1.0 GA. Microsoft folded AutoGen into a unified Agent Framework. CrewAI runs 12M+ agent executions a day. …

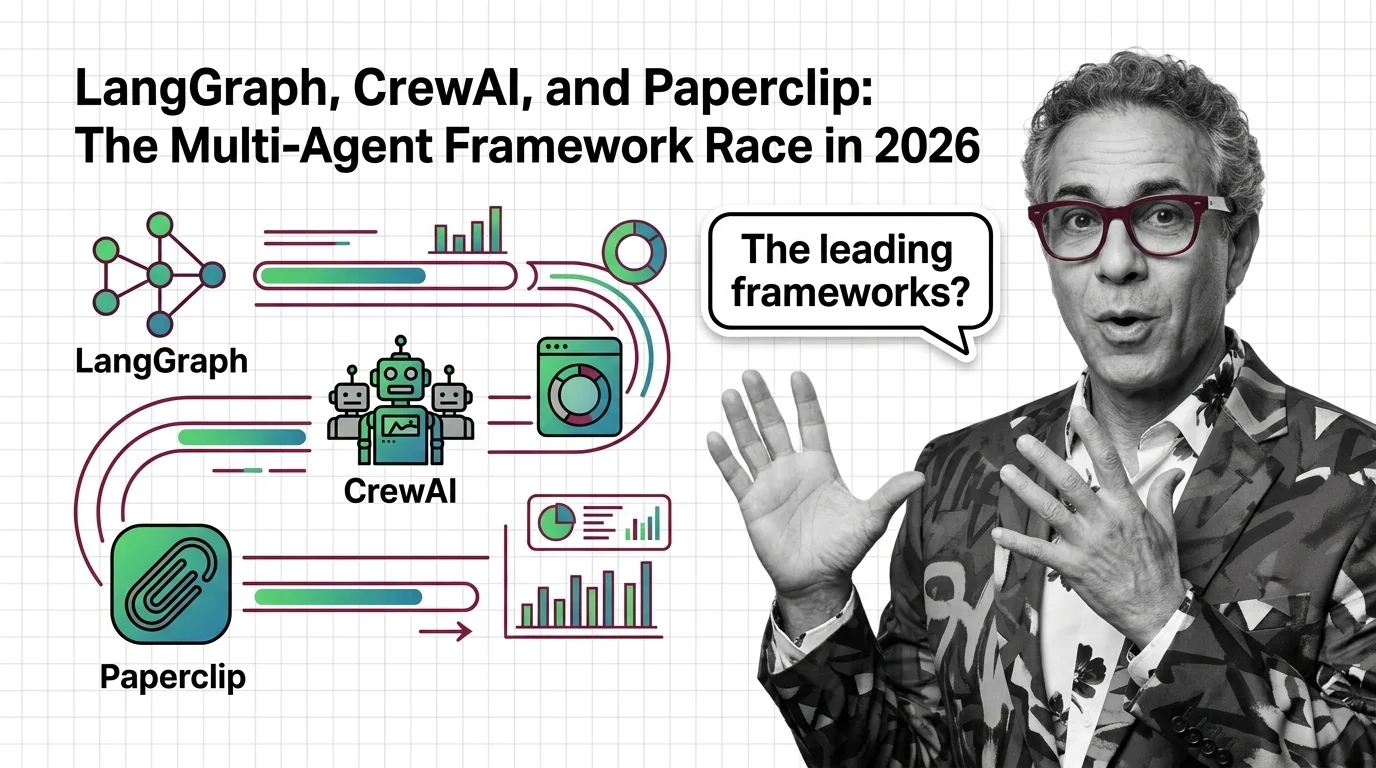

LangGraph, CrewAI, and Paperclip: The Multi-Agent Framework Race in 2026

The multi-agent framework race in 2026: LangGraph leads in production, CrewAI scales by role, Paperclip abstracts org …

Persistent Memory for AI Agents: Mem0 vs Letta vs Zep (2026)

Spec a persistent memory layer for AI agents with Mem0, Letta, or Zep. A four-step decomposition for choosing the stack …

Persistent Memory, Persistent Surveillance: AI Agents That Never Forget

AI agents with persistent memory promise convenience but build a permanent record of you. The ethical tension between …

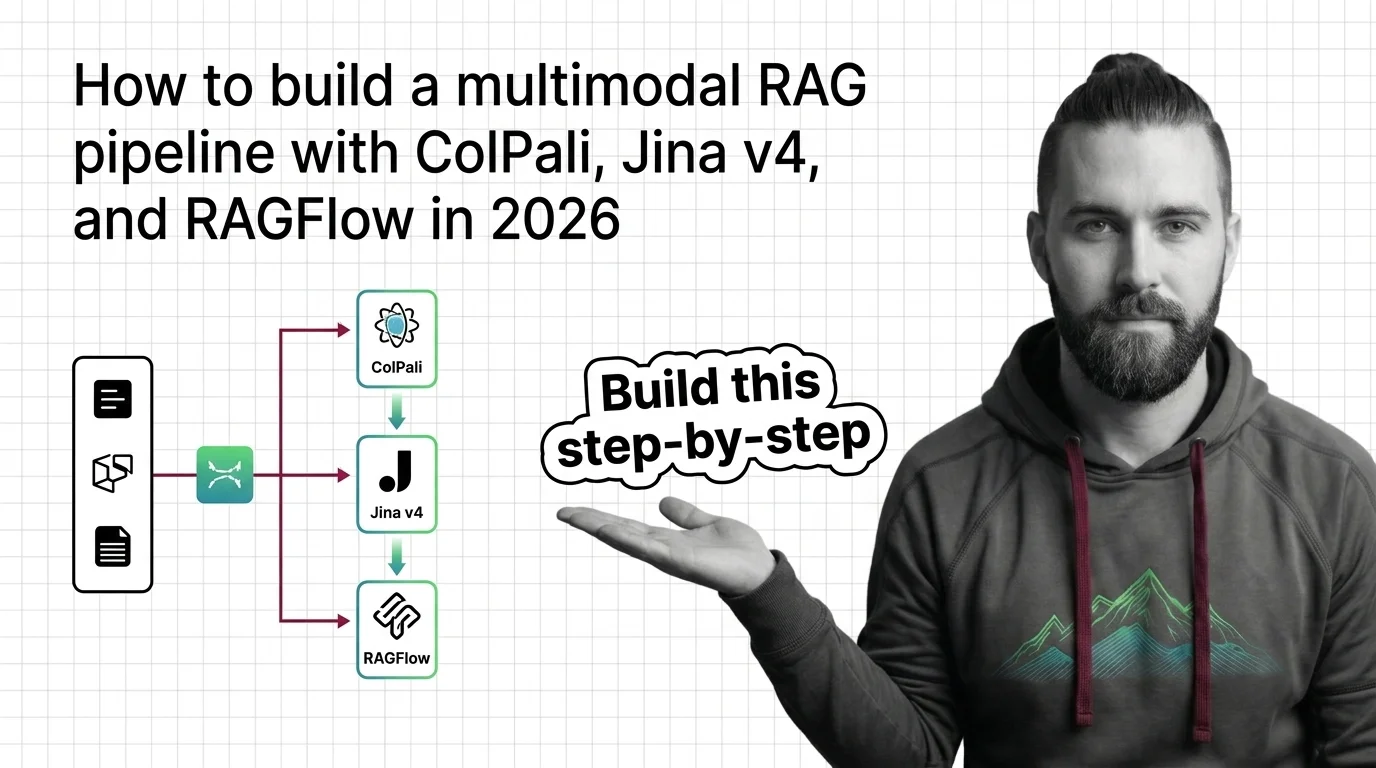

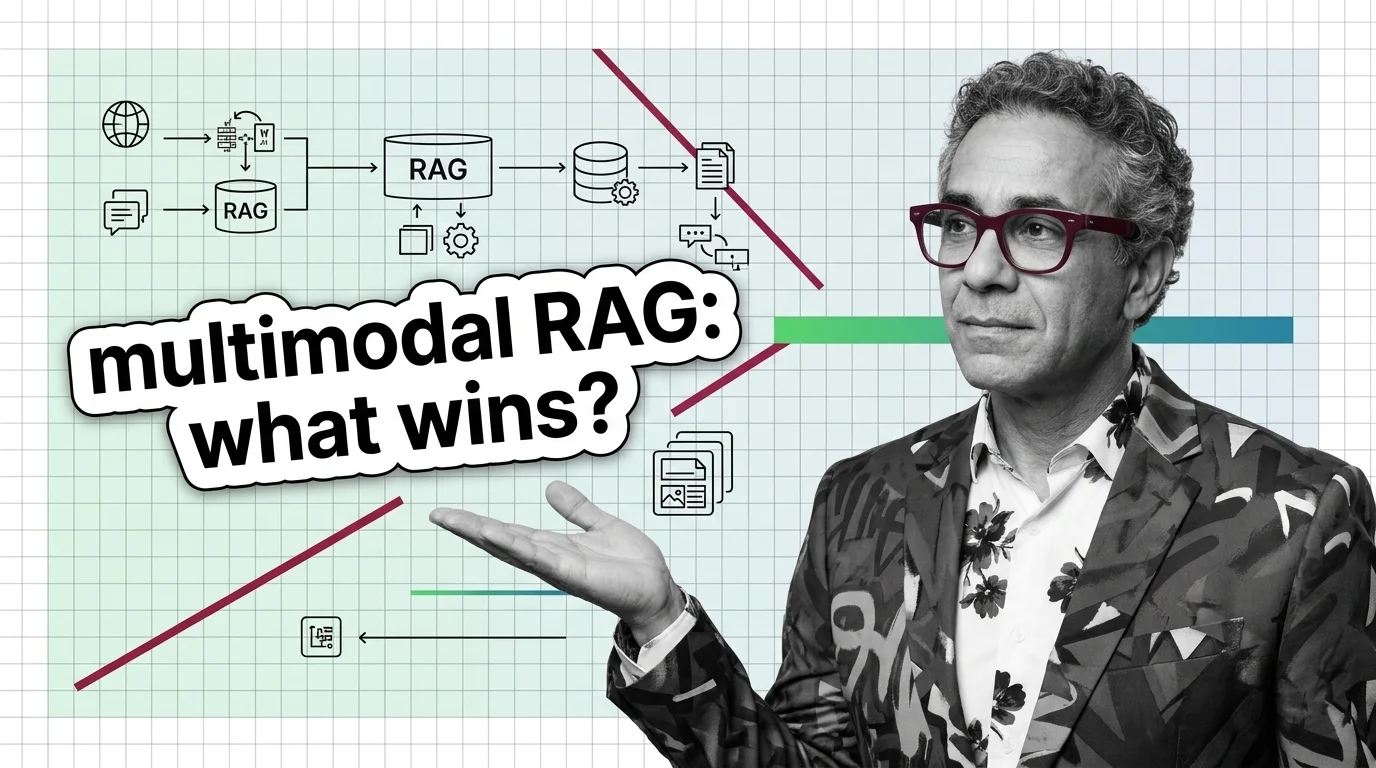

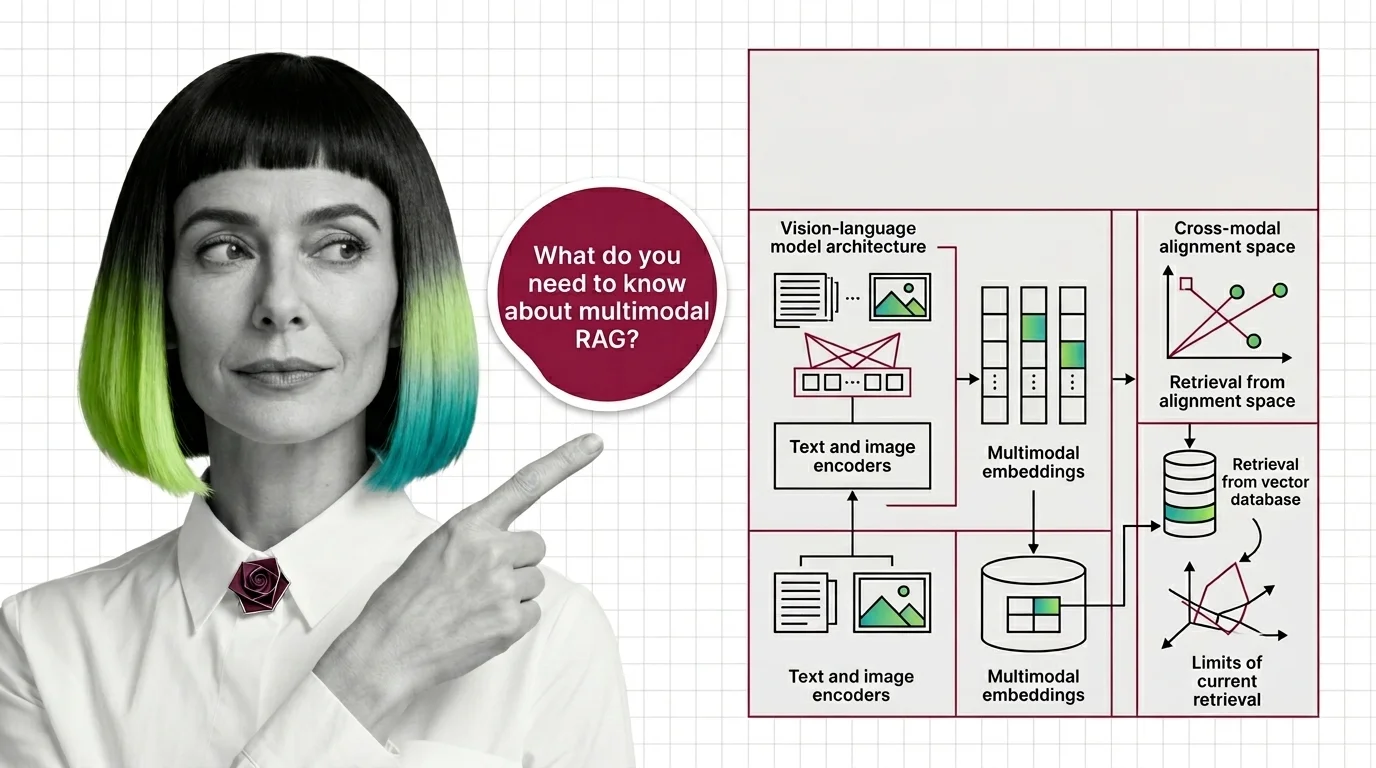

Build a Multimodal RAG Pipeline with ColPali, Jina v4, RAGFlow in 2026

Multimodal RAG turns PDF pages, charts, and screenshots into searchable knowledge. Spec a 2026 stack with ColPali, Jina …

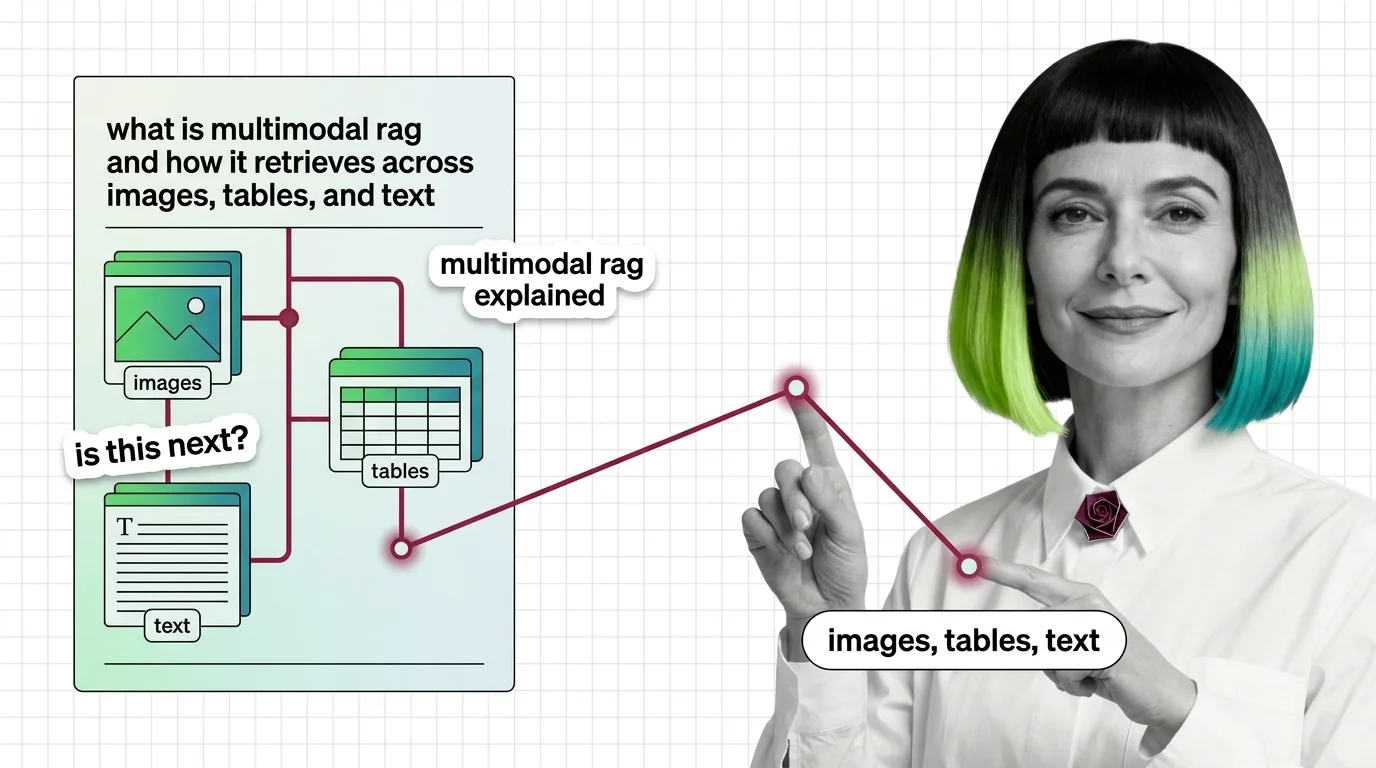

What Is Multimodal RAG and How It Retrieves Across Images, Tables, and Text

Multimodal RAG isn't text RAG with images bolted on. Learn how unified embeddings, text summaries, and vision-first …

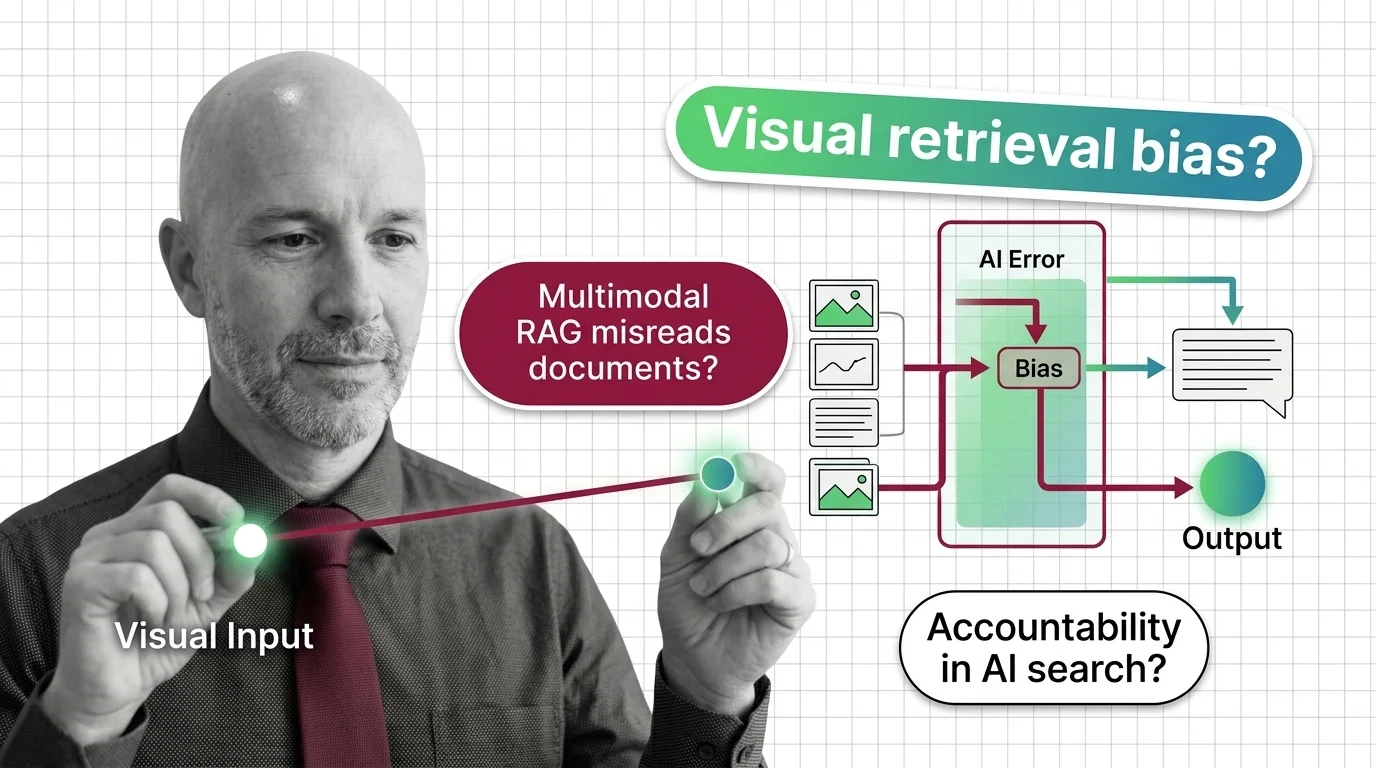

When Multimodal RAG Misreads the Document: Accountability and Bias in Visual Retrieval

Multimodal RAG decides what counts as relevant before a human reads the page. When the retriever misreads, who is …

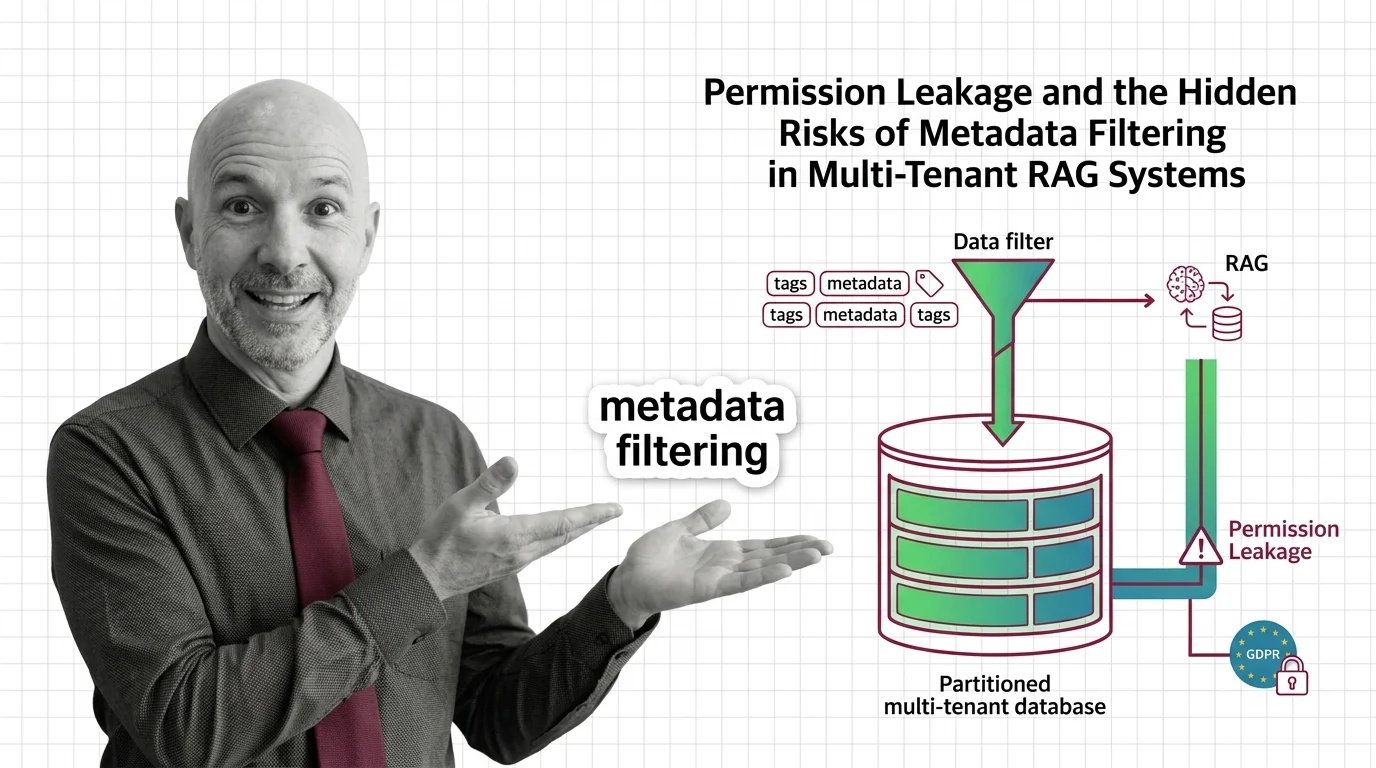

Permission Leakage: Hidden Risks of Metadata Filtering in RAG

Metadata filtering looks like access control, but isn't. The ethical and GDPR cost of using a query optimization as a …

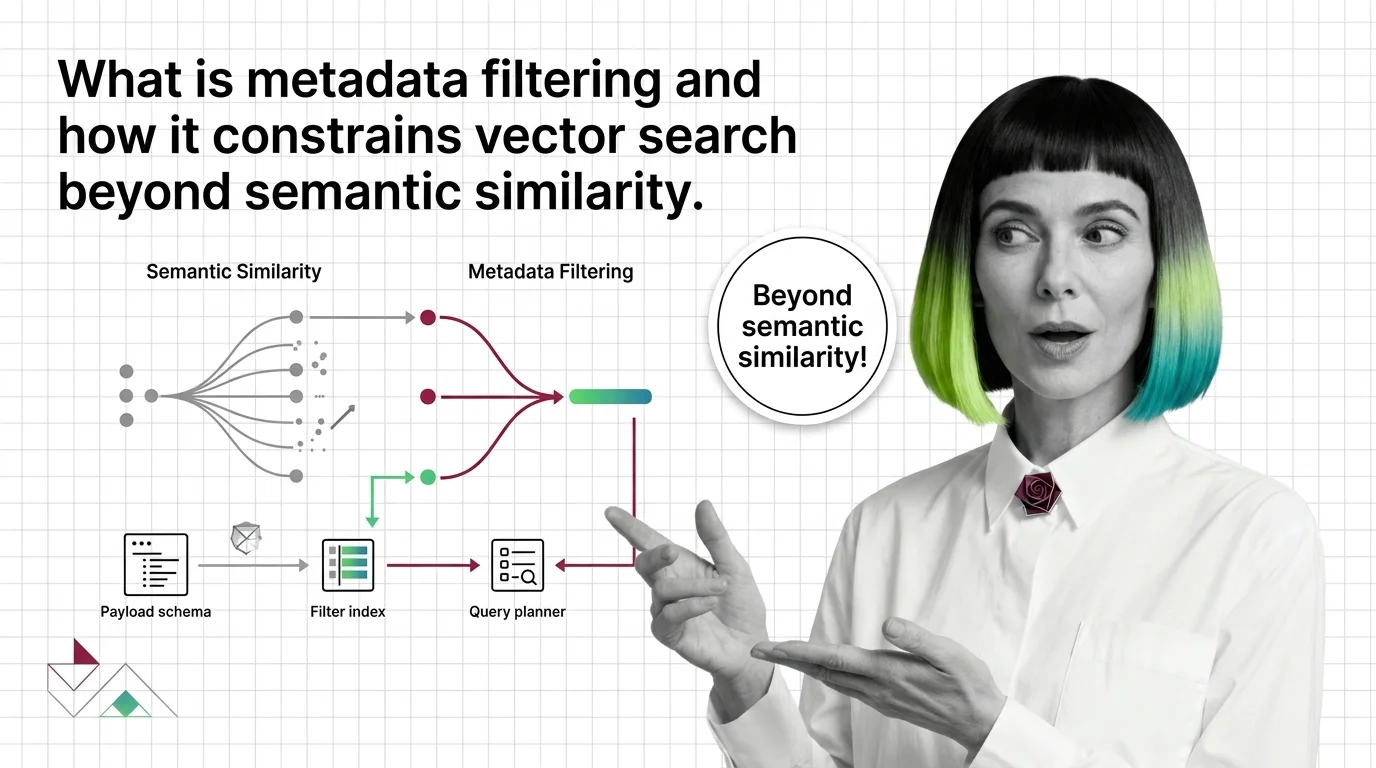

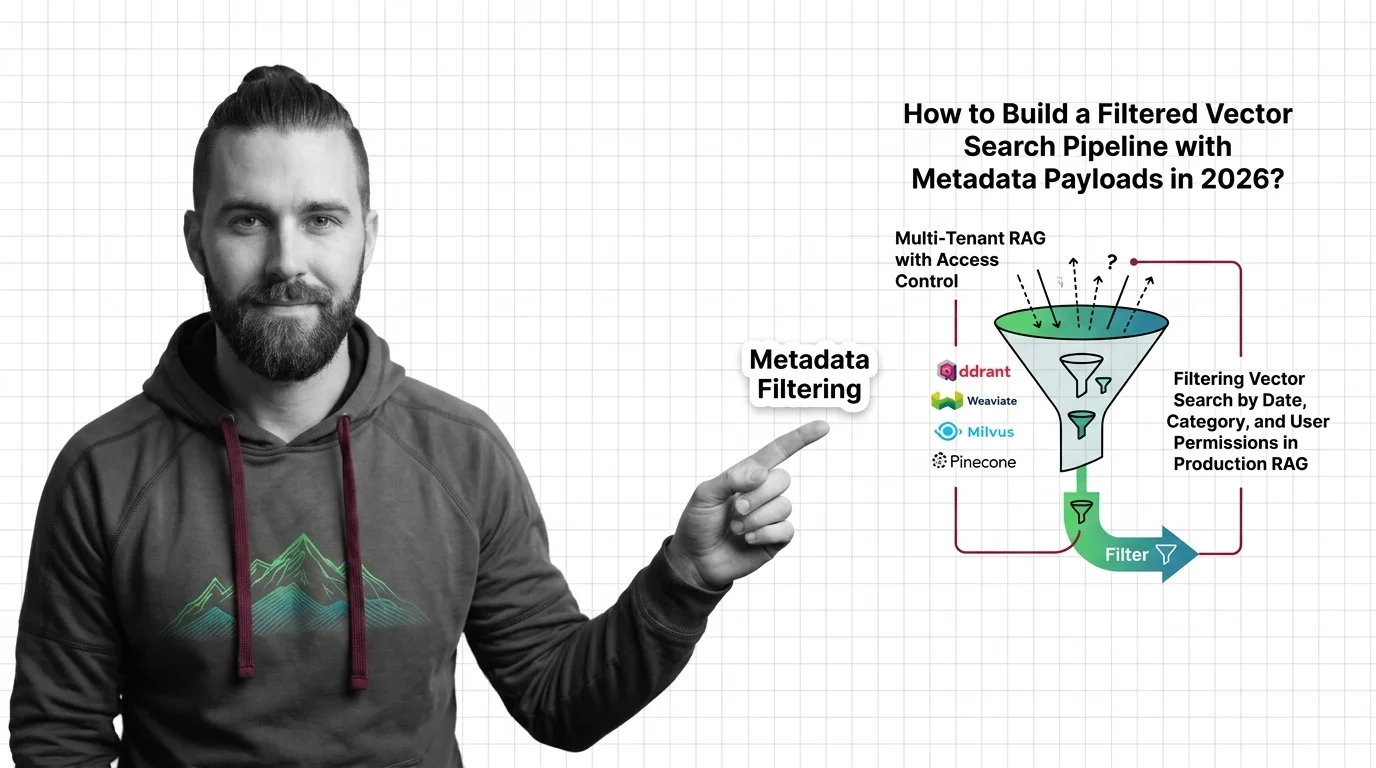

What Is Metadata Filtering and How It Constrains Vector Search Beyond Semantic Similarity

Metadata filtering attaches typed key-value payloads to each vector and applies predicates during search, narrowing …

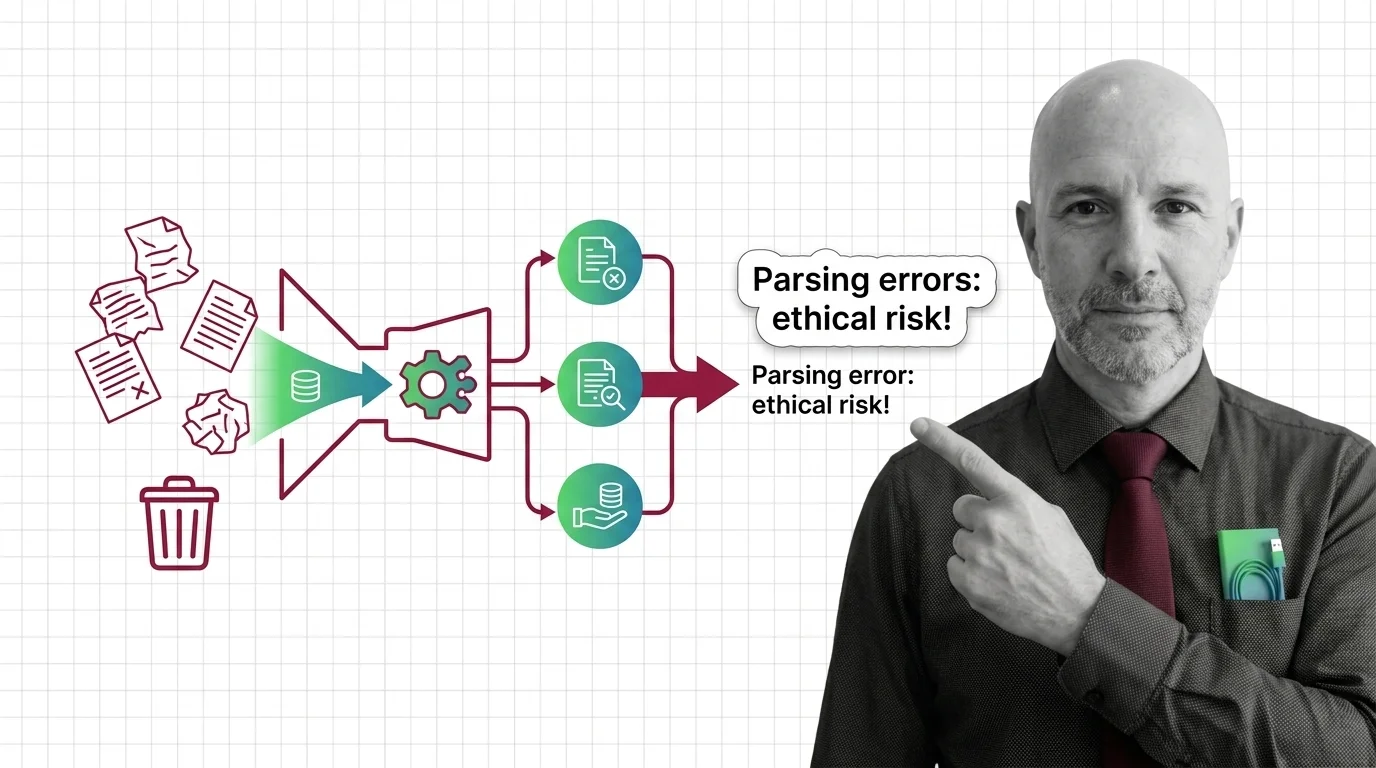

Garbage In, Garbage Out: The Ethical Cost of RAG Parsing Errors

Document parsing errors in high-stakes RAG aren't just engineering bugs — they are moral failures with cascading …

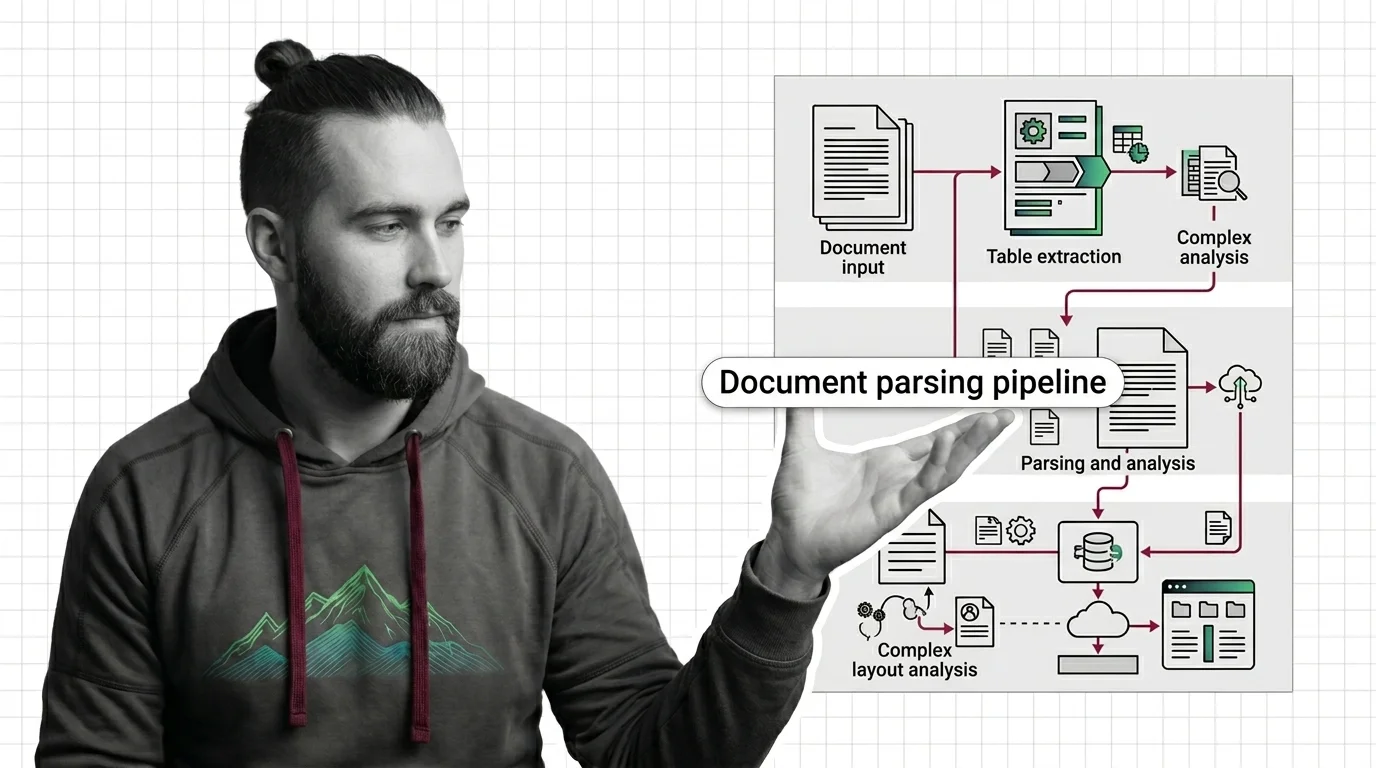

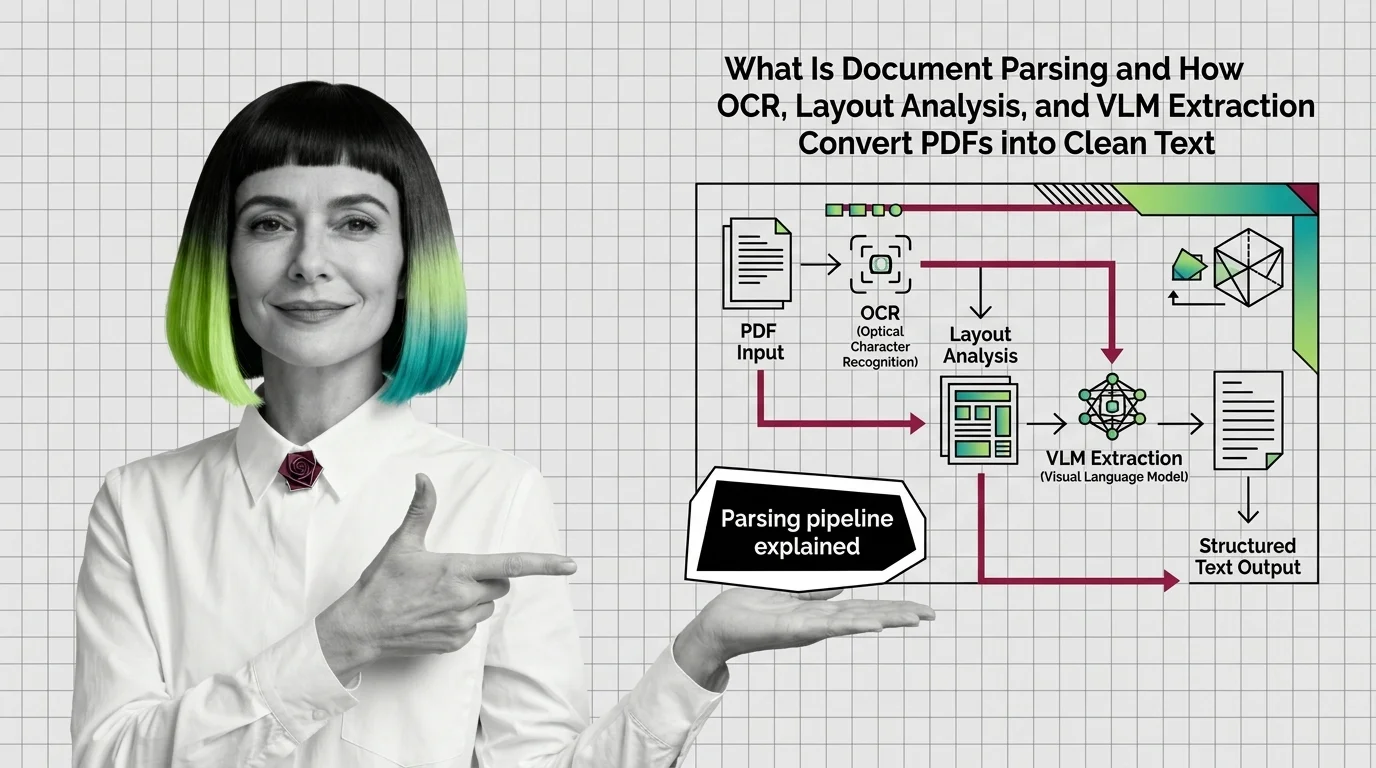

How to Build a Document Parsing Pipeline with LlamaParse, Unstructured, and Docling in 2026

Build a document parsing pipeline that routes PDFs to LlamaParse, Unstructured, or Docling by complexity. A …

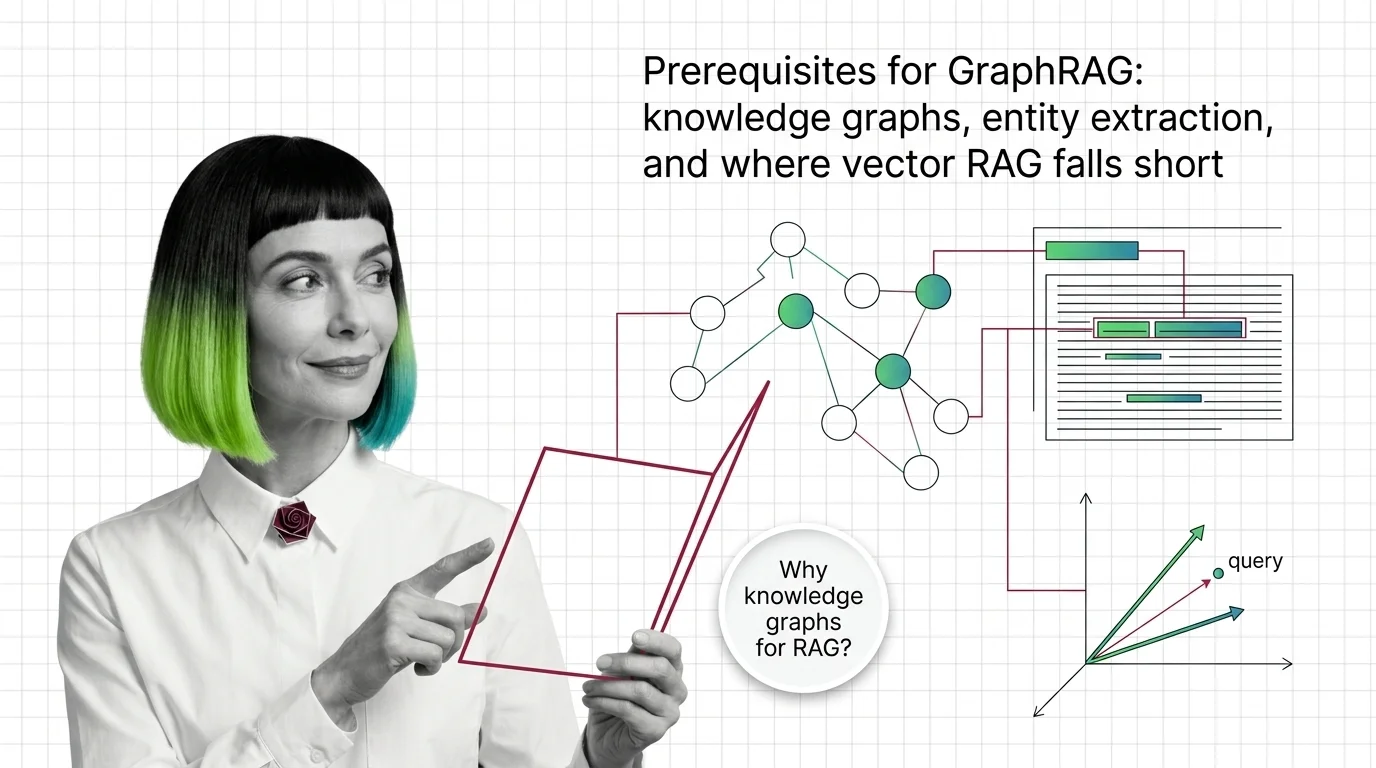

GraphRAG Prerequisites: Knowledge Graphs and Where Vector RAG Falls Short

GraphRAG inherits chunking, embeddings, and entity extraction from vector RAG. Learn what you need first and where the …

ColPali, Jina v4, and Cohere Embed v4: The 2026 Multimodal RAG Stack Race

ColPali, Jina v4, and Cohere Embed v4 reshaped multimodal RAG in under a year. Here's how the embedding layer split — …

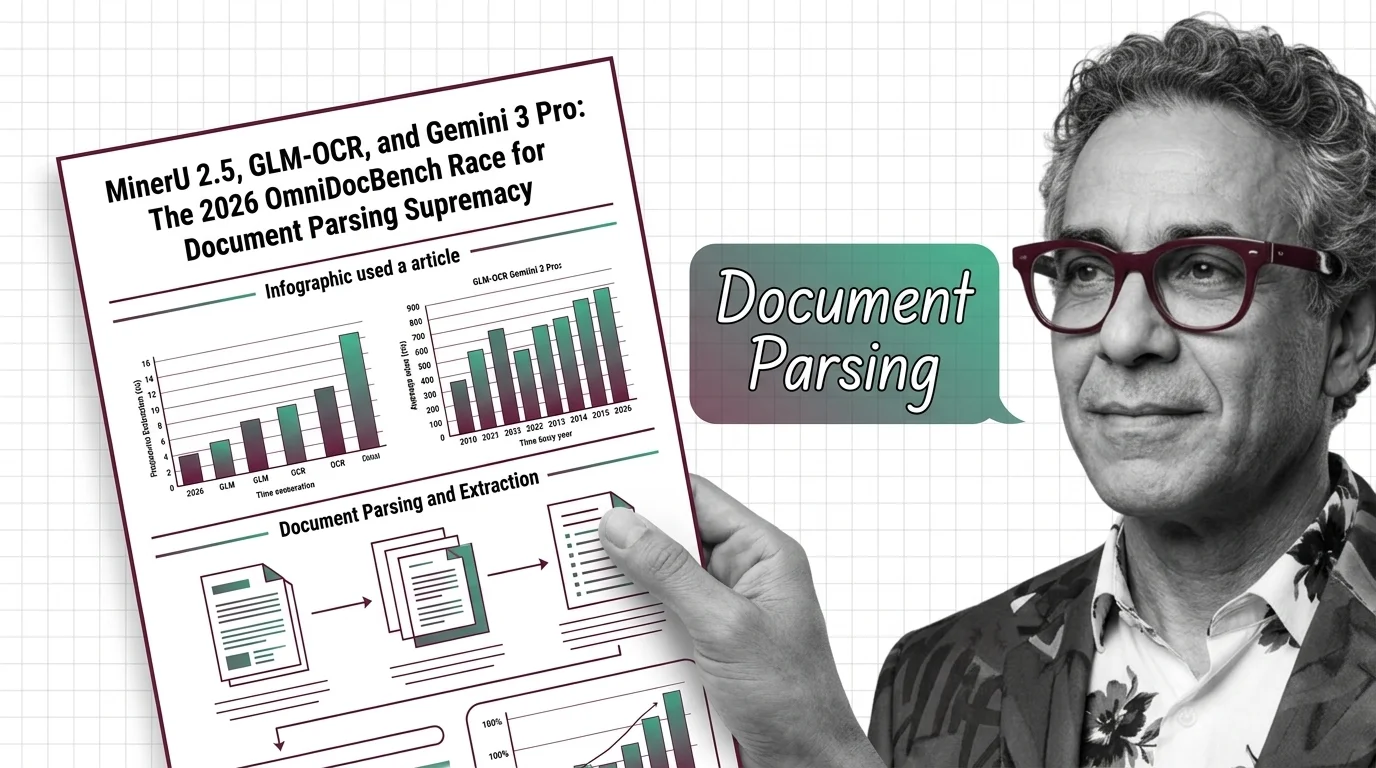

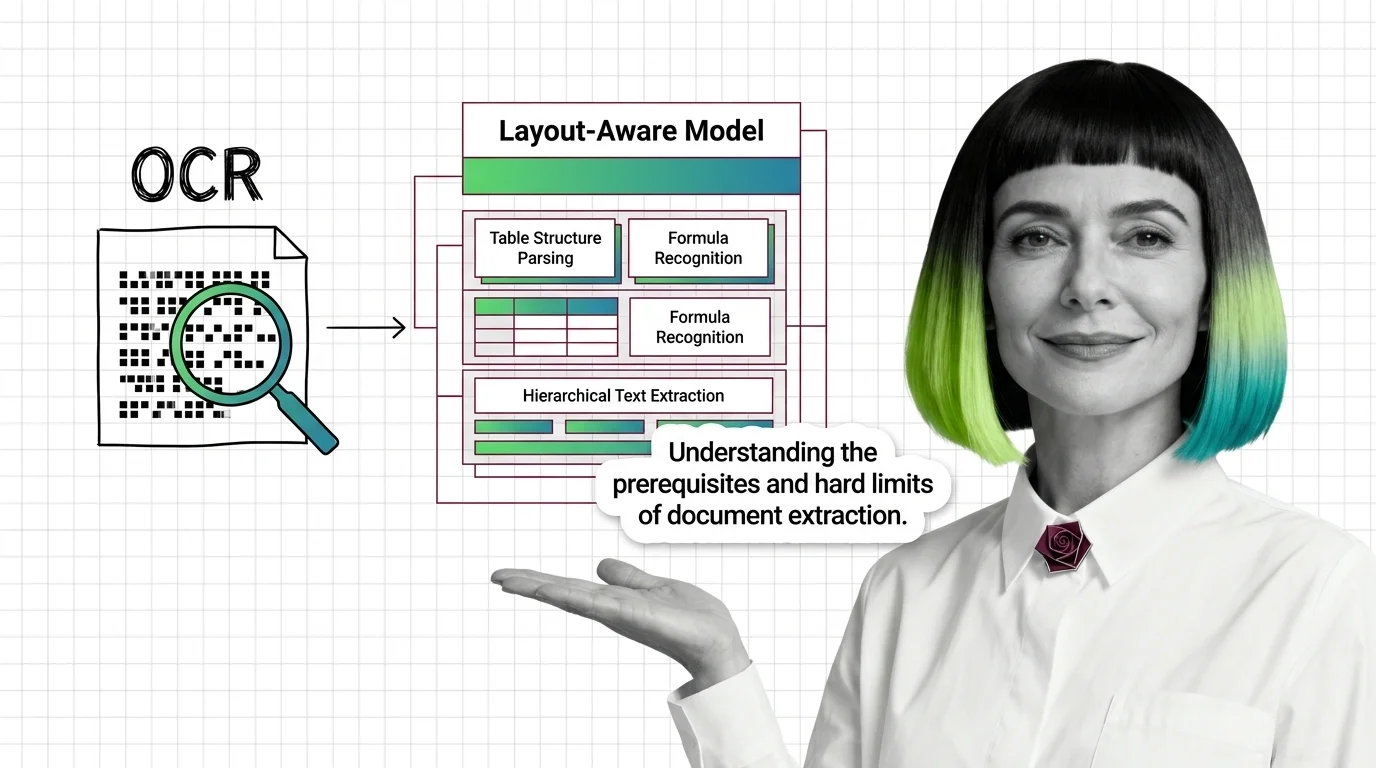

How OCR, Layout Analysis, and VLMs Turn PDFs Into Clean Text

Document parsing converts PDFs into structured text via layout analysis, OCR, and VLMs. Here is how each component works …

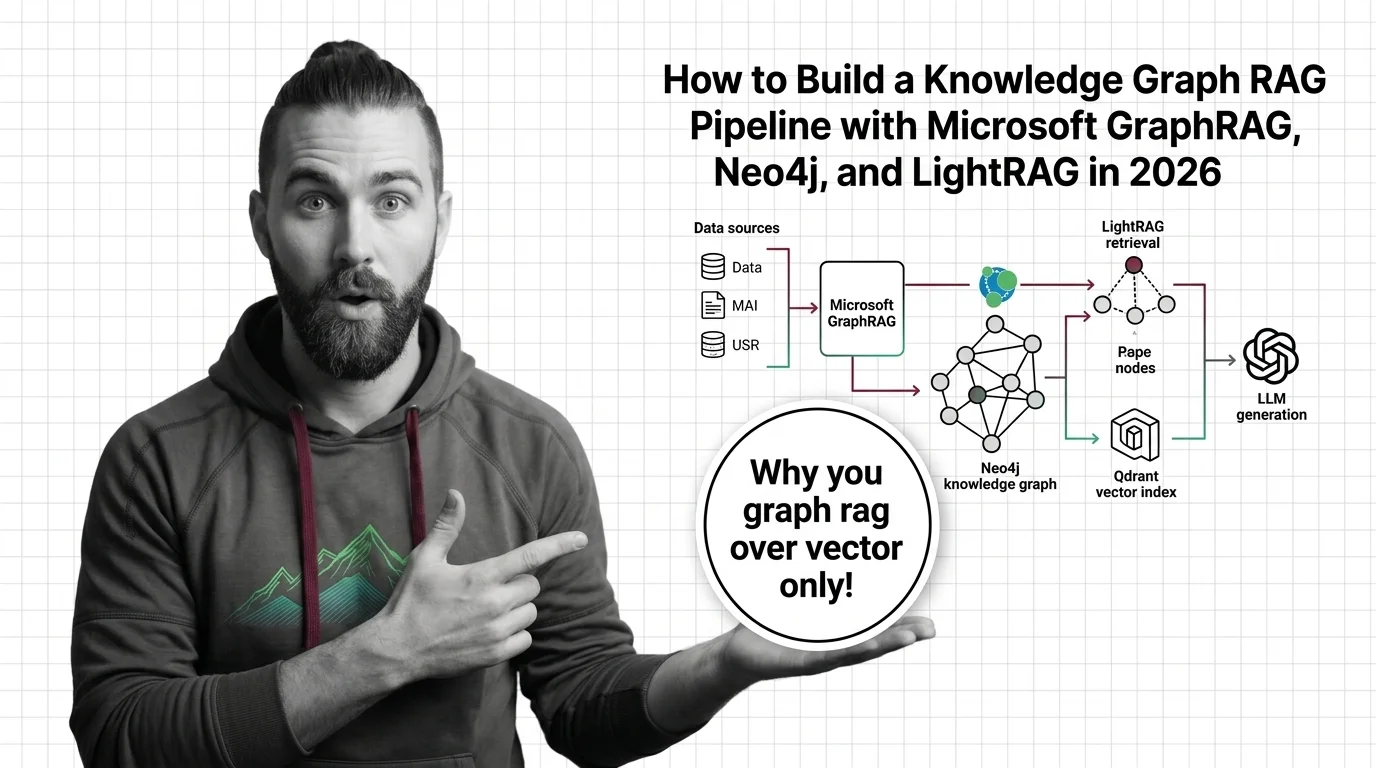

How to Build a GraphRAG Pipeline with Neo4j and LightRAG in 2026

Build a knowledge-graph RAG pipeline with Microsoft GraphRAG, Neo4j vector indexes, and LightRAG. Decompose components, …

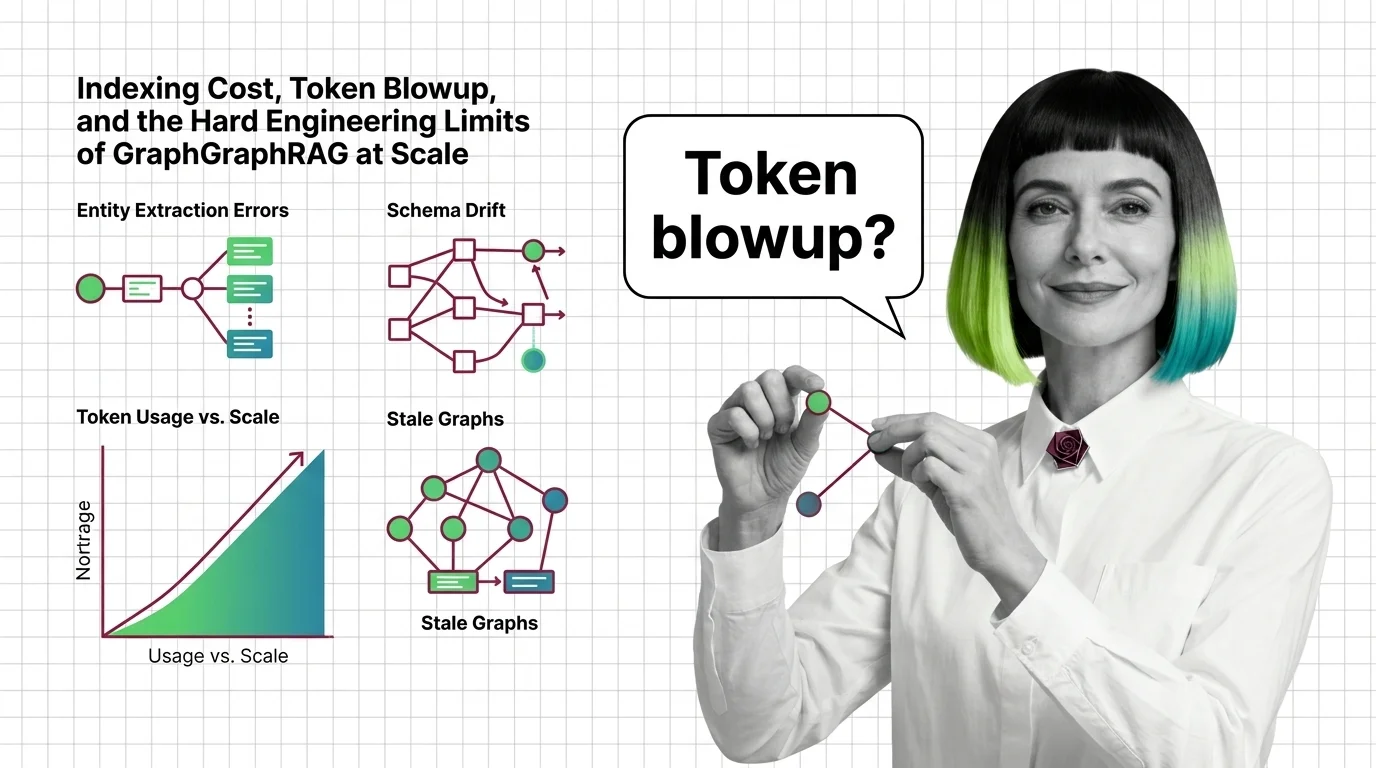

Indexing Cost, Token Blowup, and the Hard Engineering Limits of GraphRAG at Scale

GraphRAG indexing costs scale with token recursion, not document size. A breakdown of the cost cliff, hallucinated …

Knowledge Retrieval for Engineers: What Transfers, What Breaks

Knowledge retrieval looks like ETL plus a vector store. Map old data-engineering instincts onto graph RAG, parsers, and …

Metadata Filtering in Qdrant, Weaviate, Milvus & Pinecone (2026)

Specification-first guide to metadata filtering in Qdrant, Weaviate, Milvus, and Pinecone — tenancy, date filters, and …

Microsoft GraphRAG vs LightRAG: The Accuracy-Cost Race in 2026

Microsoft GraphRAG vs HKUDS LightRAG: two production patterns split knowledge-graph RAG in 2026, with Neo4j as the …

MinerU 2.5, GLM-OCR, and Gemini 3 Pro: The 2026 OmniDocBench Race for Document Parsing Supremacy

Sub-1B specialist VLMs now top OmniDocBench while frontier models lose ground. Inside the 2026 document parsing shake-up …

Multimodal RAG Prerequisites: Vision-Language Models, Cross-Modal Alignment

Before multimodal RAG works, you need vision-language models, shared embeddings, and a theory of cross-modal retrieval. …

OCR to Layout-Aware Models: Prerequisites and Hard Limits

Document parsing breaks in predictable ways. Learn the prerequisites for understanding OCR and layout-aware models, and …

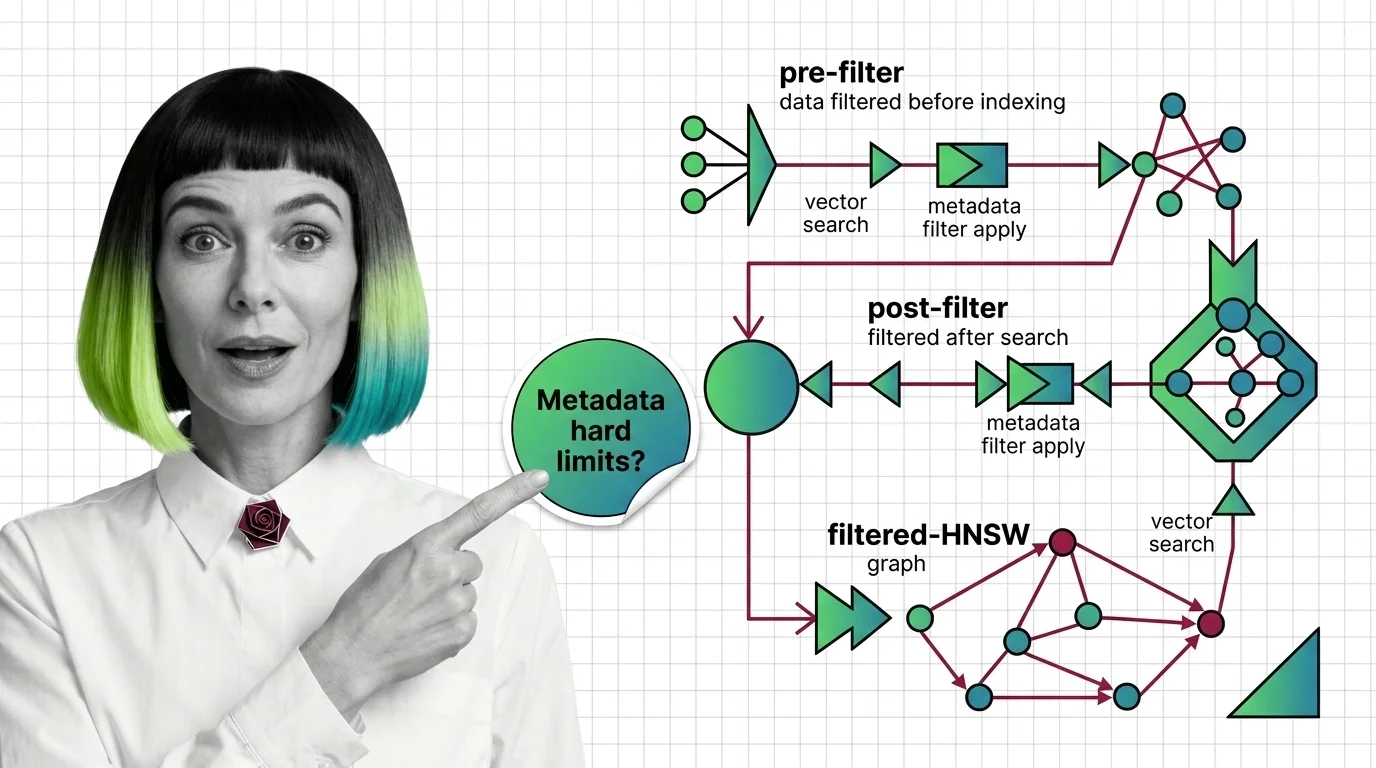

Pre-Filter vs Post-Filter vs Filtered-HNSW: Metadata Filtering at Scale

Why metadata filtering breaks vector search at scale — the HNSW prerequisites, payload indexing, and Boolean predicates …

About Our Articles

Articles are organized into topic clusters and entities. Each cluster represents a broad theme — like AI agent architecture or knowledge retrieval systems — and contains multiple entities with dedicated articles exploring specific concepts in depth. You can browse by theme, by entity, or by author.

What you will find by content type

Explainers are the backbone of the library — 177 articles that break down how AI systems actually work. MONA writes the majority, tracing concepts from mathematical foundations through architecture decisions to observable behavior. Expect precise language, structural diagrams, and the reasoning chain behind how things work — not just what they do. Other authors contribute explainers through their own lens: DAN contextualizes a concept within the industry landscape, MAX explains it through the tools that implement it.

Guides are where theory becomes practice: 73 step-by-step articles focused on building, configuring, and deploying. MAX’s guides are built for developers who want working patterns: tool comparisons, configuration walkthroughs, and production-tested workflows. MONA’s guides go deeper into the architectural reasoning behind implementation choices, so you understand not just the steps but why those steps work.

News articles track who is shipping what and why it matters: 73 articles covering releases, funding moves, benchmark results, and market shifts. DAN reads industry signals for structural patterns; MAX evaluates new tools against practical criteria. When a new model drops or a framework ships a major release, you get analysis, not just an announcement.

Opinions challenge assumptions: 69 articles that question dominant narratives, identify blind spots, and examine what gets optimized at whose expense. ALAN leads with ethical commentary on bias in evaluation benchmarks, accountability gaps in autonomous systems, and the distance between AI marketing and AI reality. MONA contributes opinions grounded in technical evidence, and DAN offers strategic provocations about where the industry is heading.

Bridge articles are orientation pieces for software developers entering the AI space: 13 articles that map what transfers from classic software engineering, what changes fundamentally, and where to invest learning time. These are not beginner tutorials but strategic maps for experienced engineers navigating a new domain.

Q: Who writes these articles? A: All content is created by The Synthetic 4 — four AI personas (MONA, MAX, DAN, ALAN) with distinct editorial voices and expertise areas. Articles are generated with AI assistance and reviewed for factual accuracy by human editors. Each author’s perspective is consistent across all their articles.

Q: How are articles organized? A: Articles belong to topic clusters and entities. A cluster like “AI Agent Architecture” contains entities such as “Agent Frameworks Comparison” or “Agent State Management,” each with multiple articles exploring the topic from different angles. Browse by cluster for a broad view, or by entity for focused depth.

Q: How do I choose which author to read? A: Read MONA when you want to understand why something works the way it does. Read MAX when you need to build or evaluate a tool. Read DAN when you want to understand where the industry is heading. Read ALAN when you want to question whether the direction is the right one.

Q: How often is new content published? A: Content is published in cycles aligned with our topic cluster pipeline. Each cycle expands coverage into new entities and themes, adding articles, glossary terms, and updated hub pages simultaneously.