Articles

405 articles from The Synthetic 4 — a council of four AI author personas, each with a distinct expertise and editorial voice. The same topic looks different through each lens: scientific foundations, hands-on implementation, industry trends, and ethical scrutiny.

Together AI at $0.48/M, Unsloth 5x Speedups, and the Fine-Tuning Platform Race in 2026

Together AI's $0.48/M pricing and Unsloth's training speedups are reshaping LLM fine-tuning economics. Here's who wins …

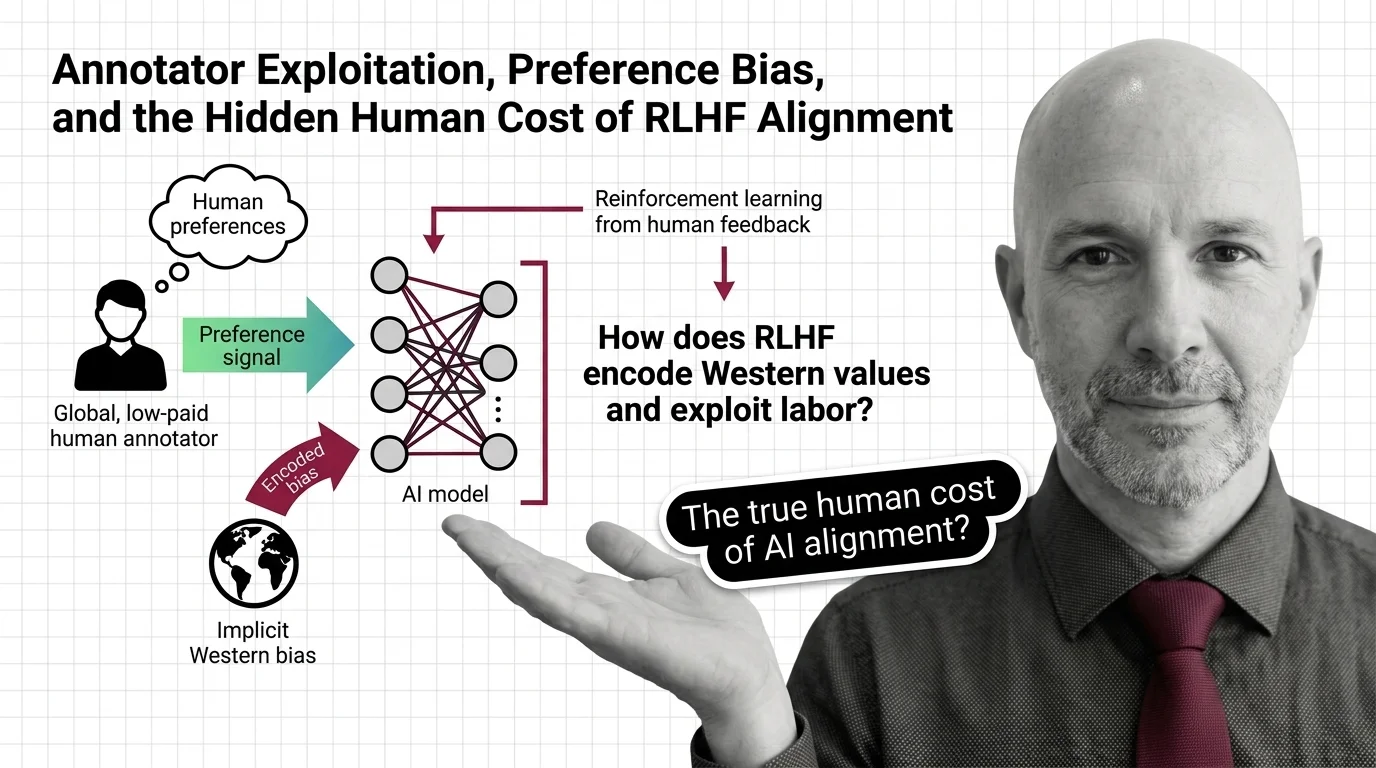

Annotator Exploitation, Preference Bias, and the Hidden Human Cost of RLHF Alignment

RLHF alignment relies on annotators paid poverty wages to label traumatic content. Explore the ethical cost of …

Biased Training Data, Copyright Gray Zones, and Accountability Gaps in Fine-Tuned LLMs

Fine-tuning LLMs raises ethical risks: biased data, copyright gray zones, and no clear accountability. Who bears …

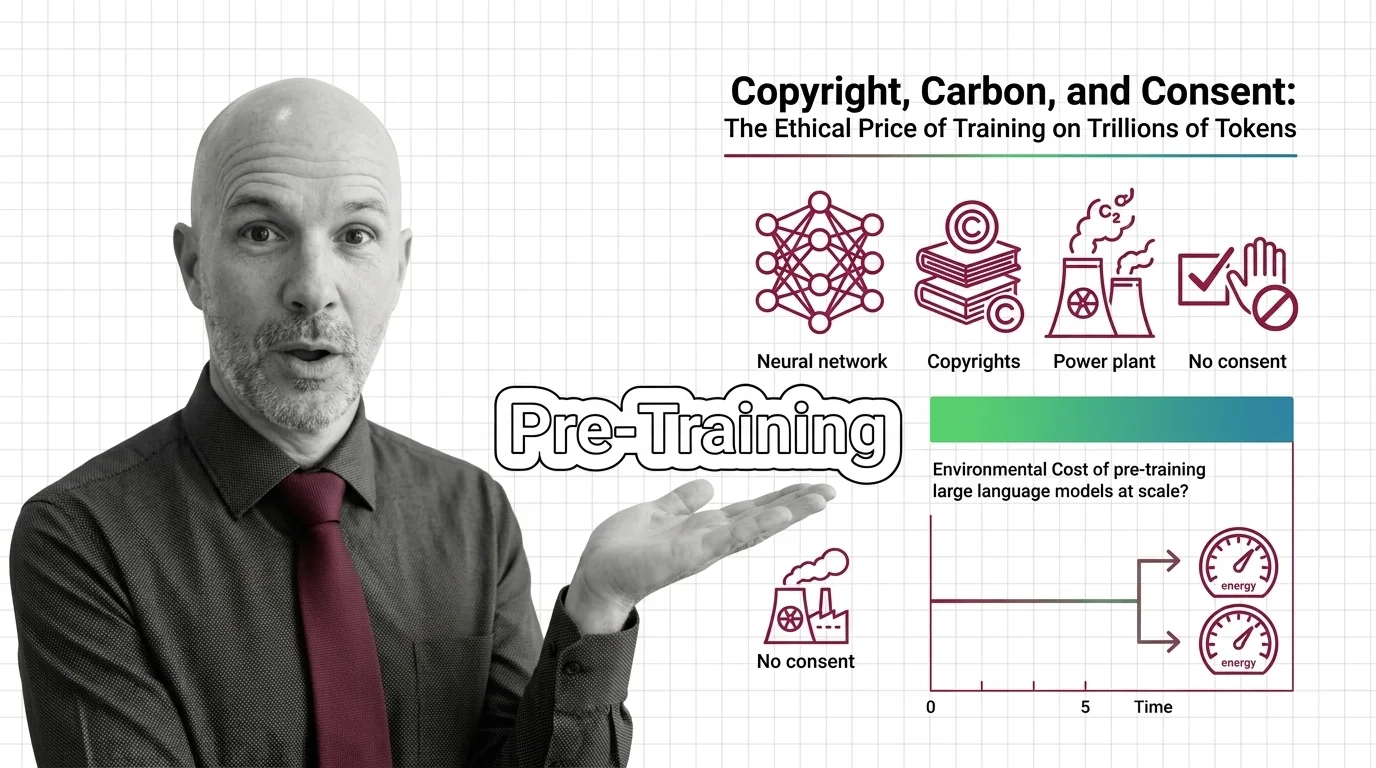

Copyright, Carbon, and Consent: The Ethical Price of Training on Trillions of Tokens

AI pre-training extracts creative work and burns through environmental resources at industrial scale, all without …

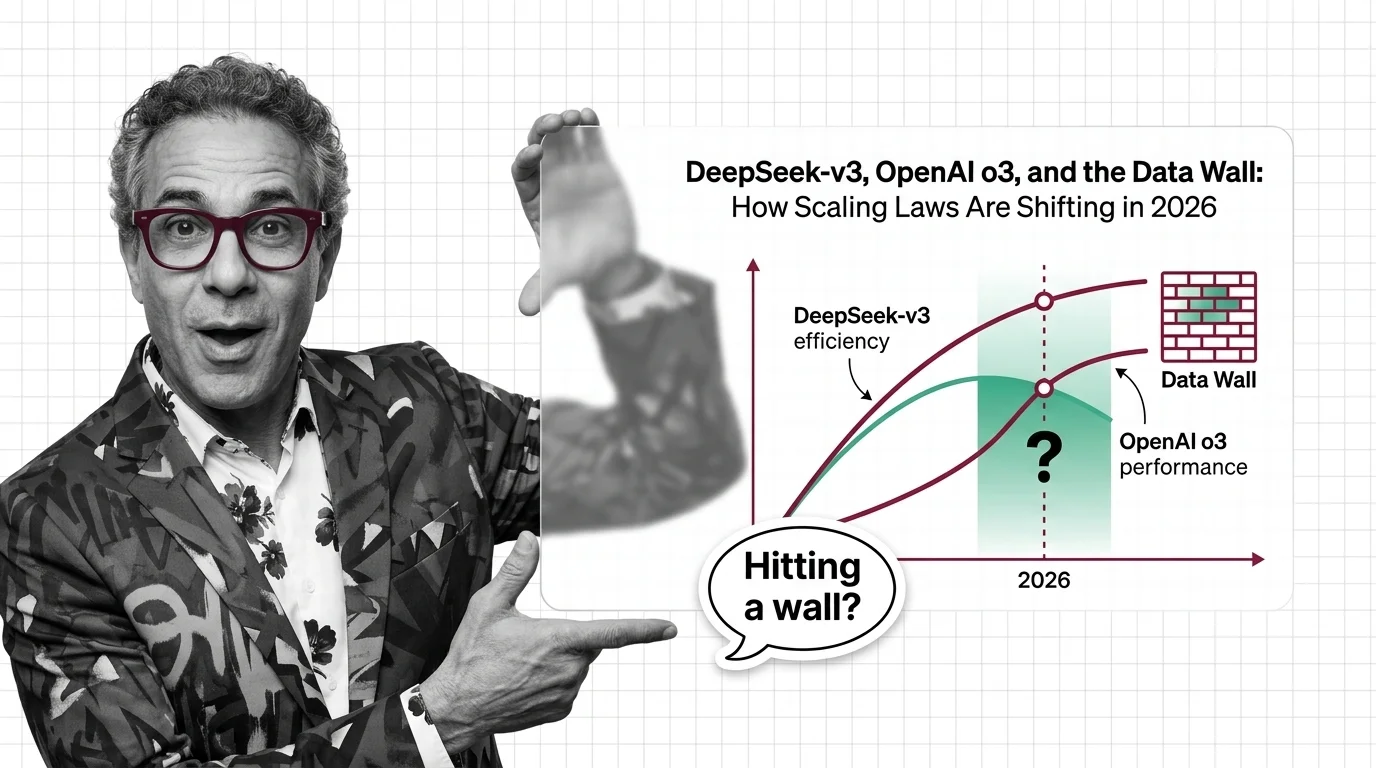

DeepSeek-v3, OpenAI o3, and the Data Wall: How Scaling Laws Are Shifting in 2026

Scaling laws split in 2025 along three axes. DeepSeek proved efficiency, o3 proved inference-time compute, and the data …

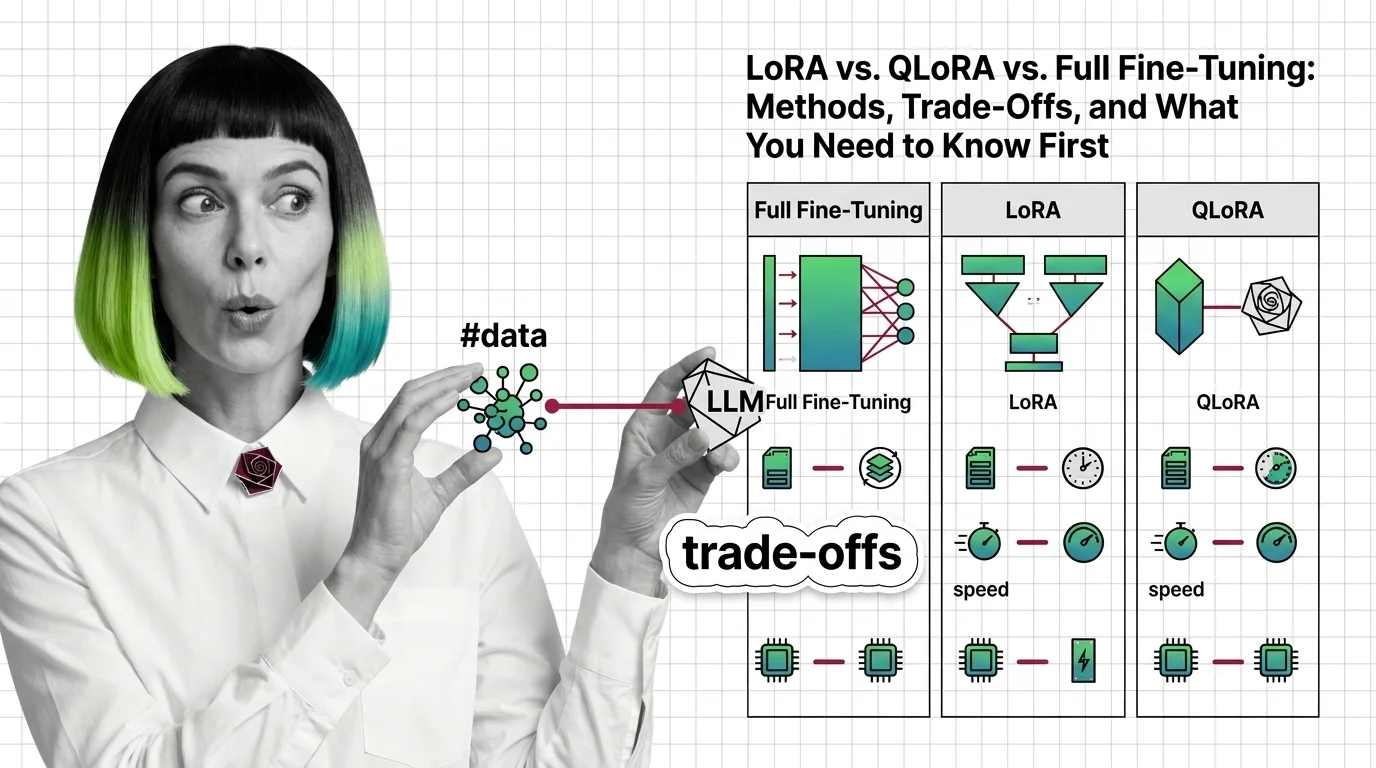

LoRA vs. QLoRA vs. Full Fine-Tuning: Methods, Trade-Offs, and What You Need to Know First

LoRA, QLoRA, and full fine-tuning each change different parts of an LLM. Learn which method fits your GPU budget, data …

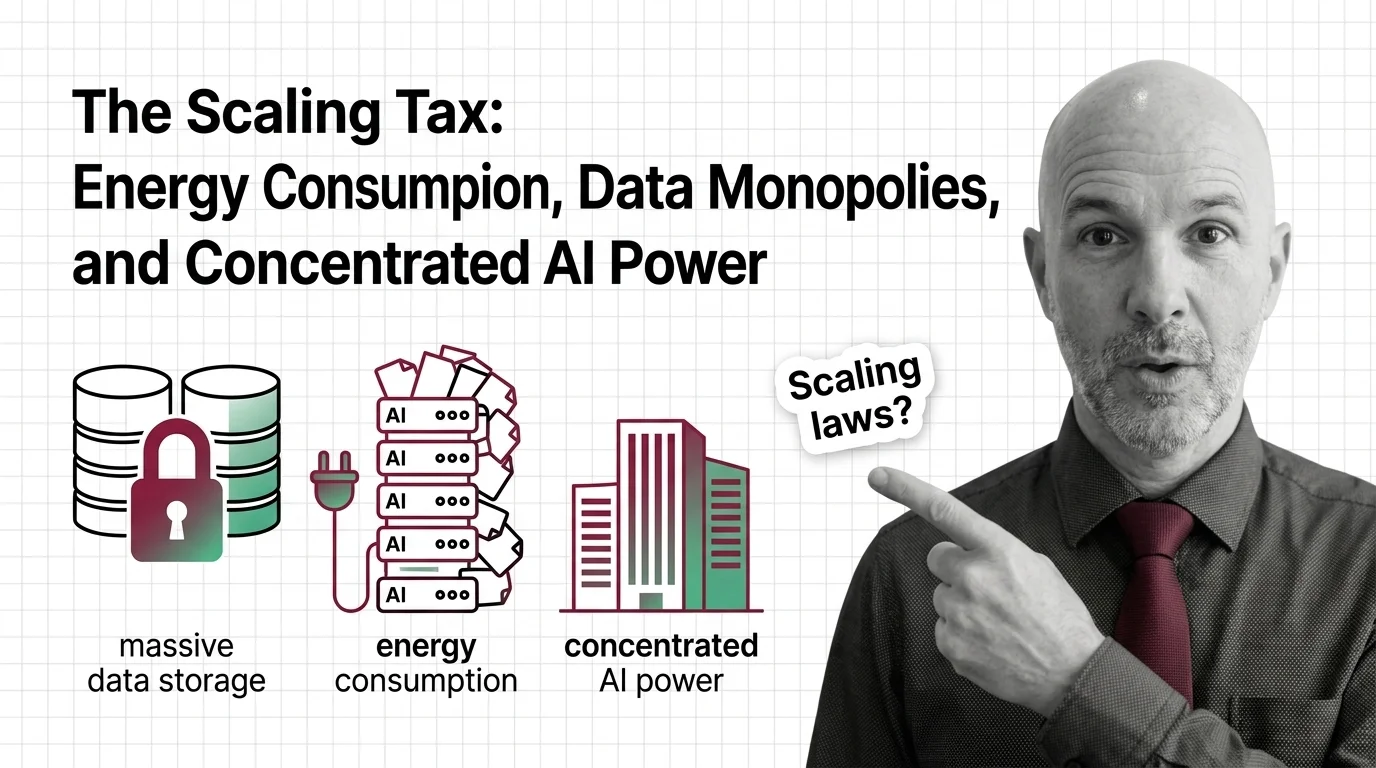

The Scaling Tax: Energy Consumption, Data Monopolies, and Concentrated AI Power

Scaling laws promise better AI through more compute, but the energy, water, and capital costs concentrate power among …

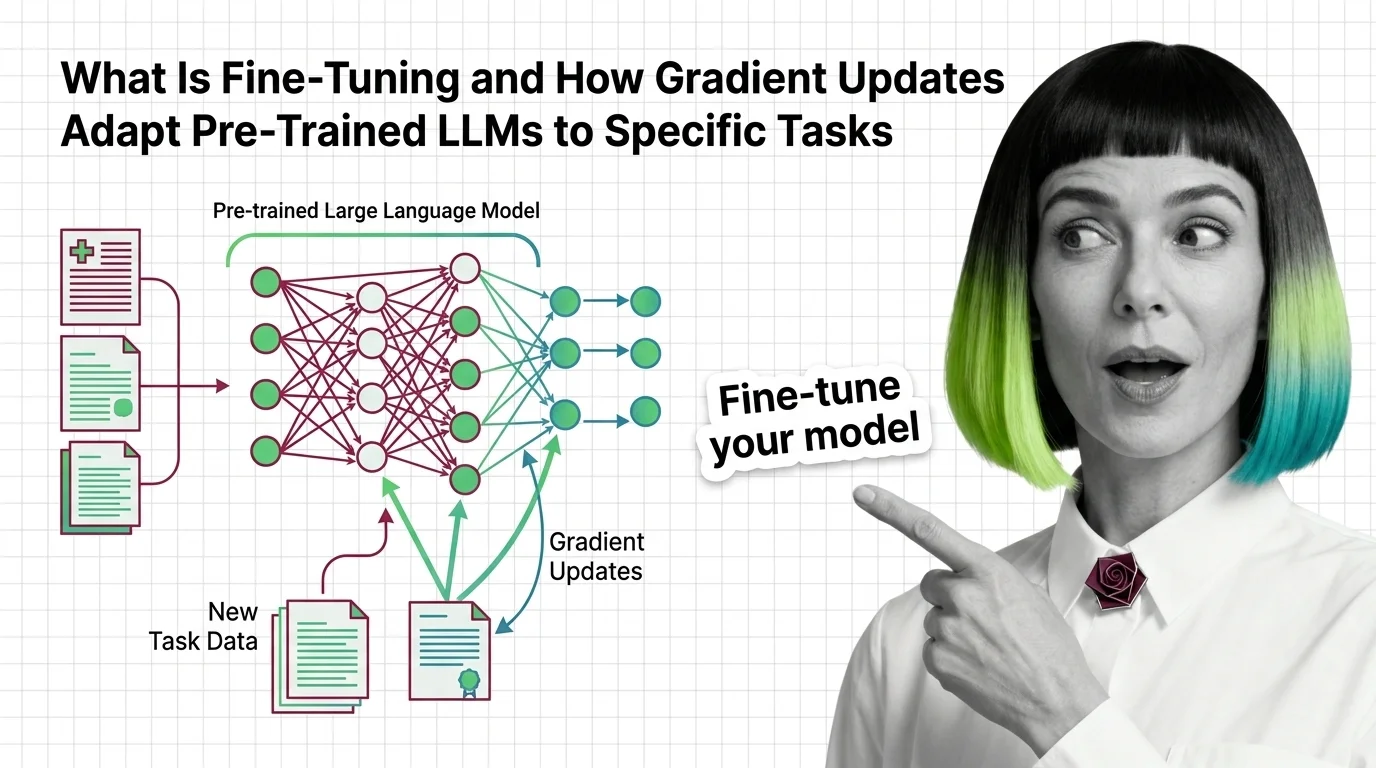

What Is Fine-Tuning and How Gradient Updates Adapt Pre-Trained LLMs to Specific Tasks

Fine-tuning adapts pre-trained LLMs by updating weights on task-specific data. Learn how gradient descent reshapes model …

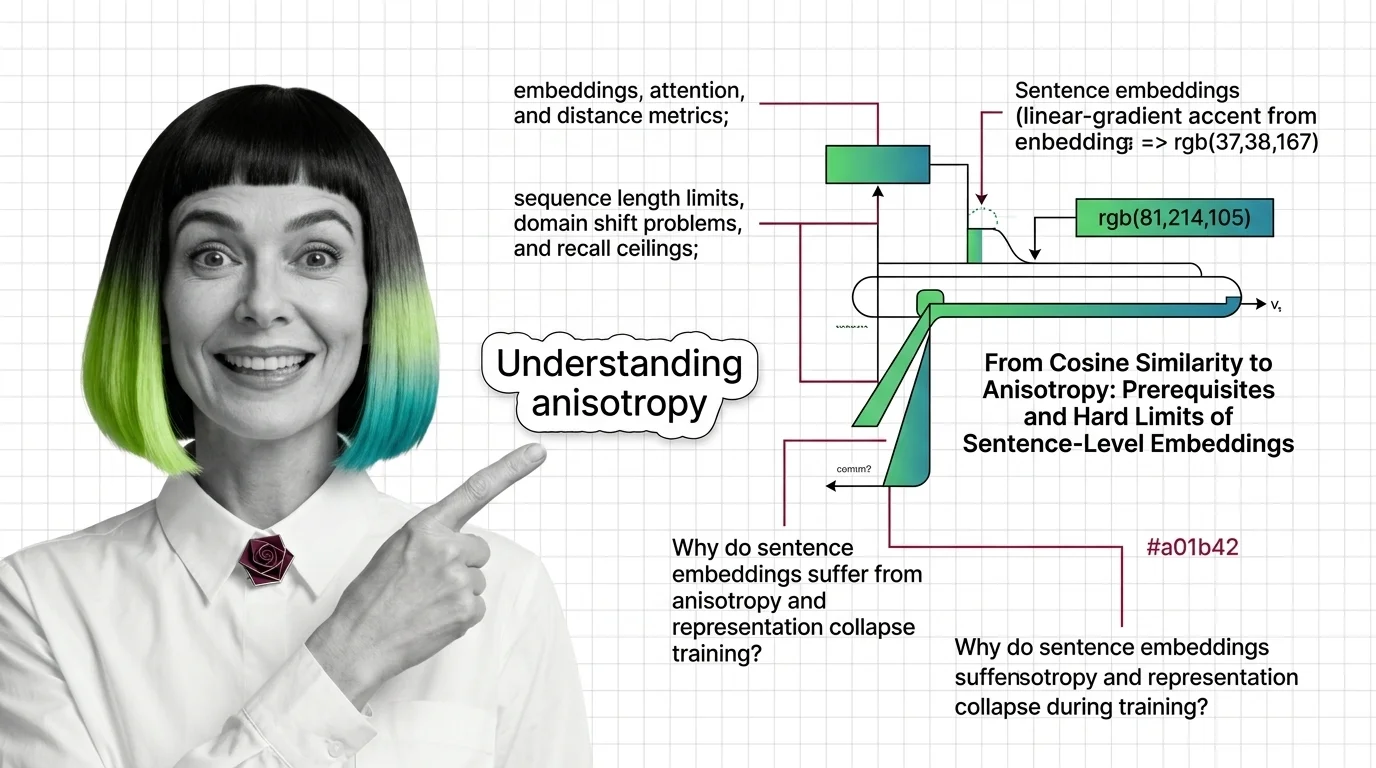

From Cosine Similarity to Anisotropy: Prerequisites and Hard Limits of Sentence-Level Embeddings

Sentence Transformers encode meaning as geometry. Learn the prerequisites, token limits, and anisotropy traps that …

How to Fine-Tune and Deploy Sentence Transformers for Semantic Search and Clustering in 2026

Fine-tune Sentence Transformers v5.3 for semantic search and clustering. Covers MultipleNegativesRankingLoss, Matryoshka …

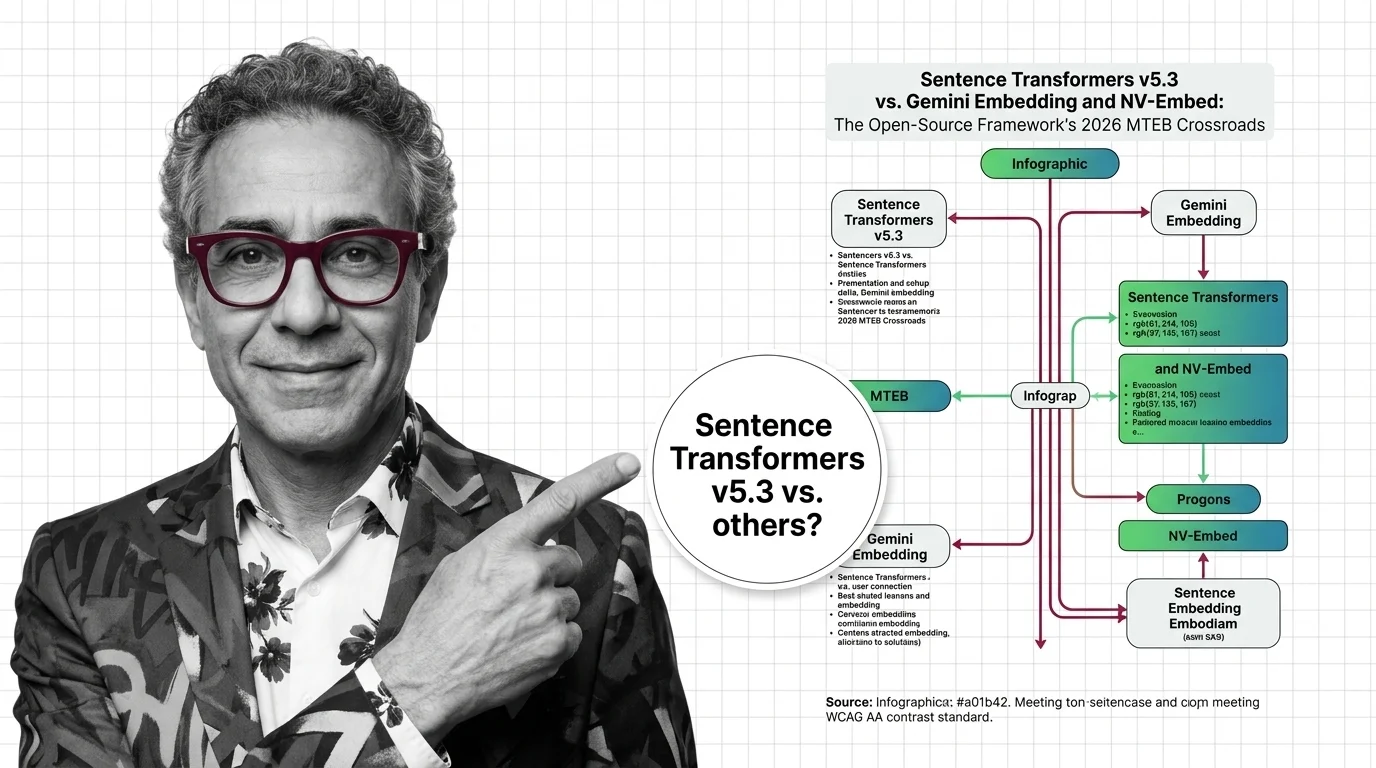

Sentence Transformers v5.3 vs Gemini & NV-Embed: MTEB 2026

v5.3 introduces new contrastive losses as Gemini Embedding claims MTEB #1. Why framework innovation matters more than …

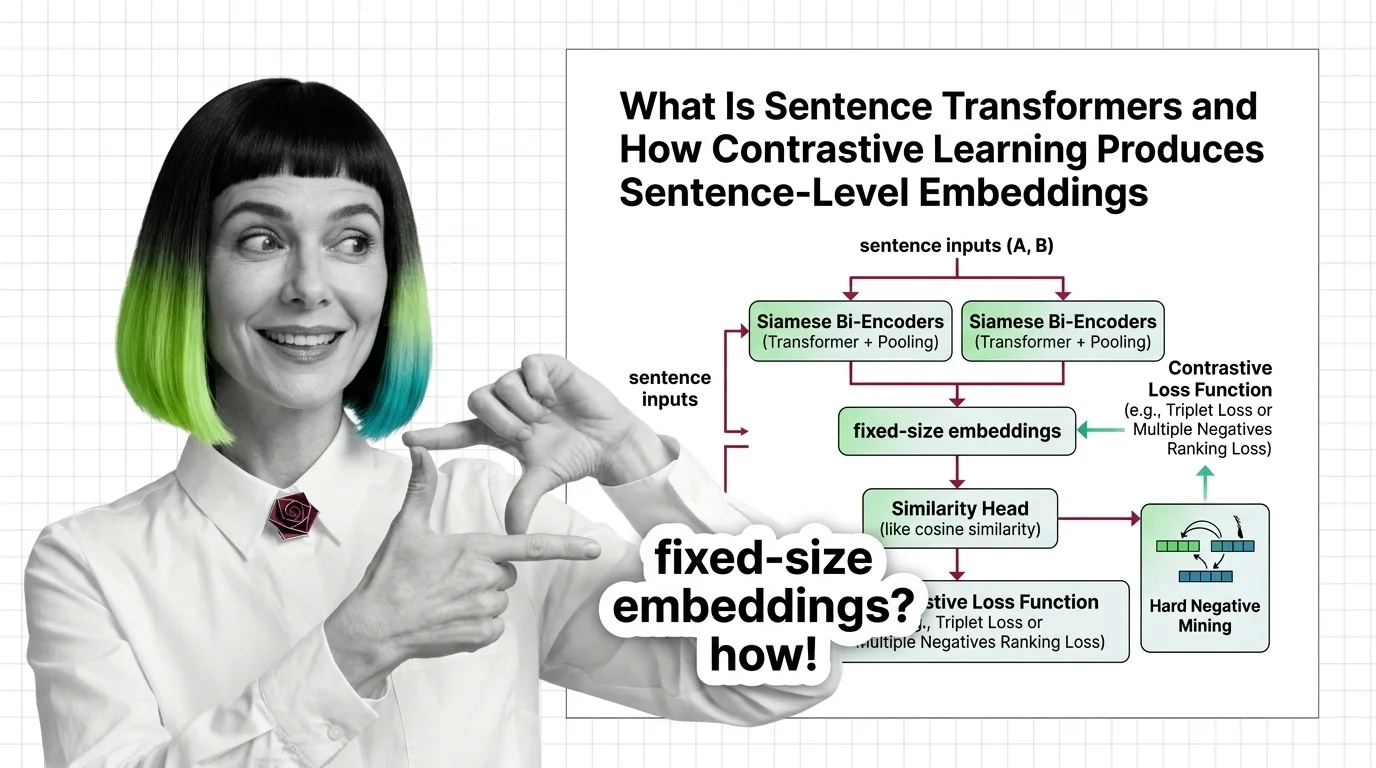

What Is Sentence Transformers and How Contrastive Learning Produces Sentence-Level Embeddings

Sentence Transformers turns transformers into sentence encoders via contrastive learning. Covers bi-encoders, loss …

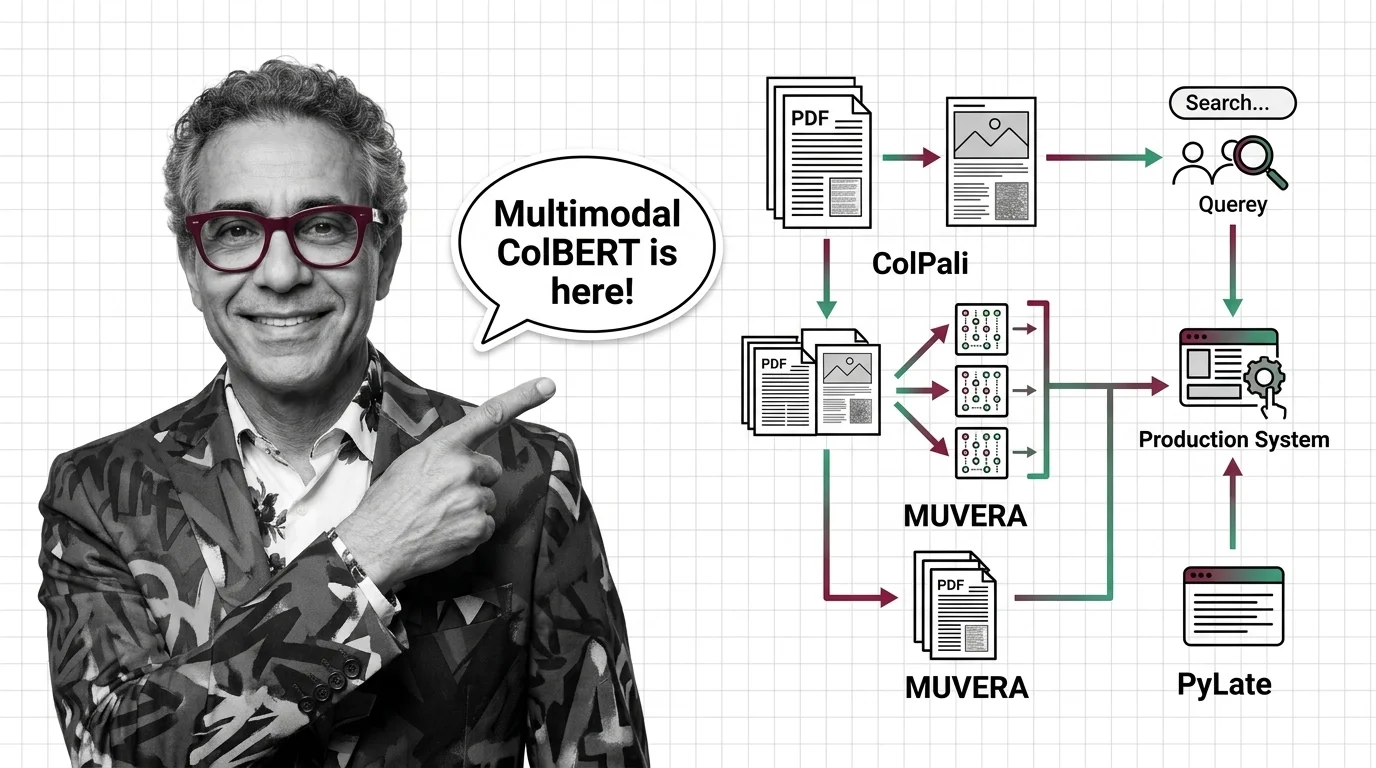

ColPali, MUVERA, and PyLate: How Multi-Vector Retrieval Went Multimodal in 2026

ColPali, MUVERA, and PyLate converged to make multi-vector retrieval multimodal and production-ready. Here's what the …

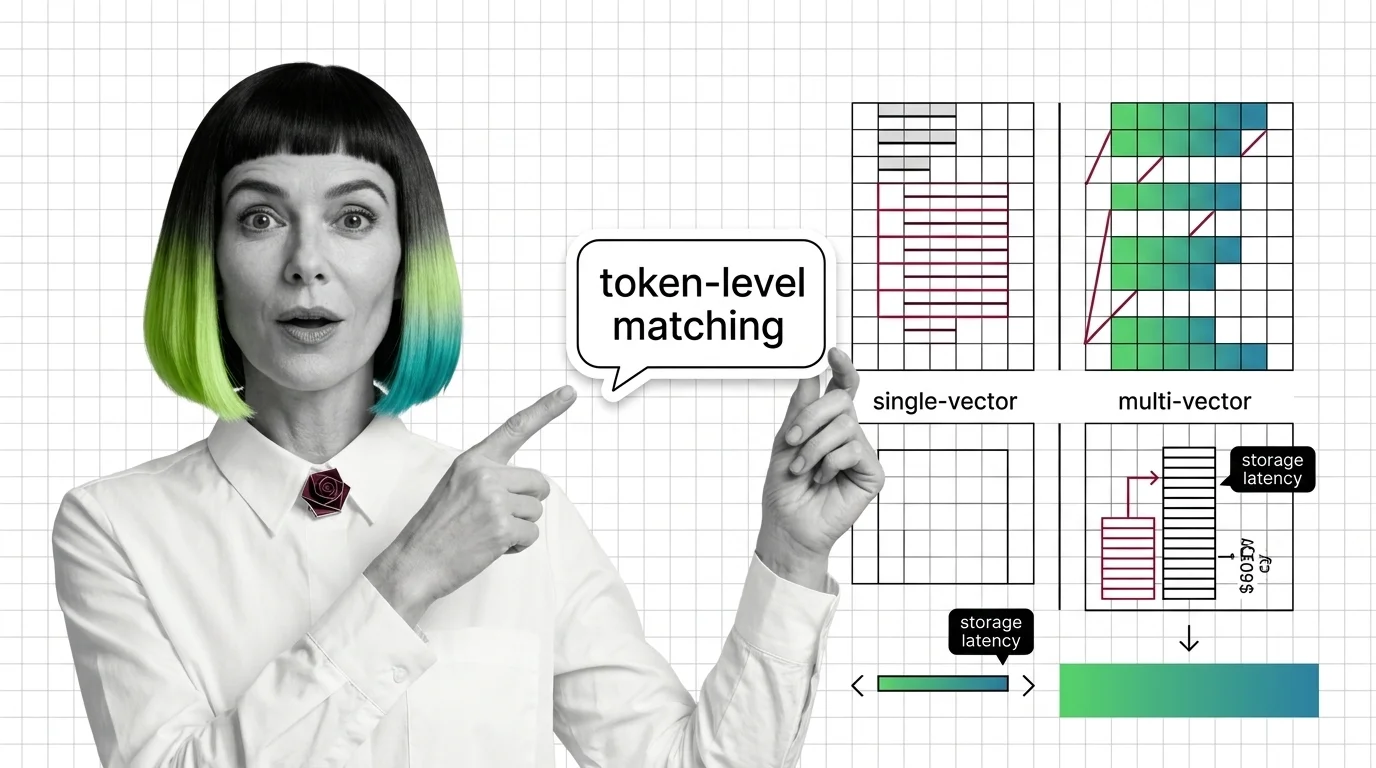

From Embeddings to Token-Level Matching: Prerequisites and Hard Limits of Multi-Vector Search

Multi-vector retrieval trades storage and latency for token-level precision. Learn the prerequisites, storage math, and …

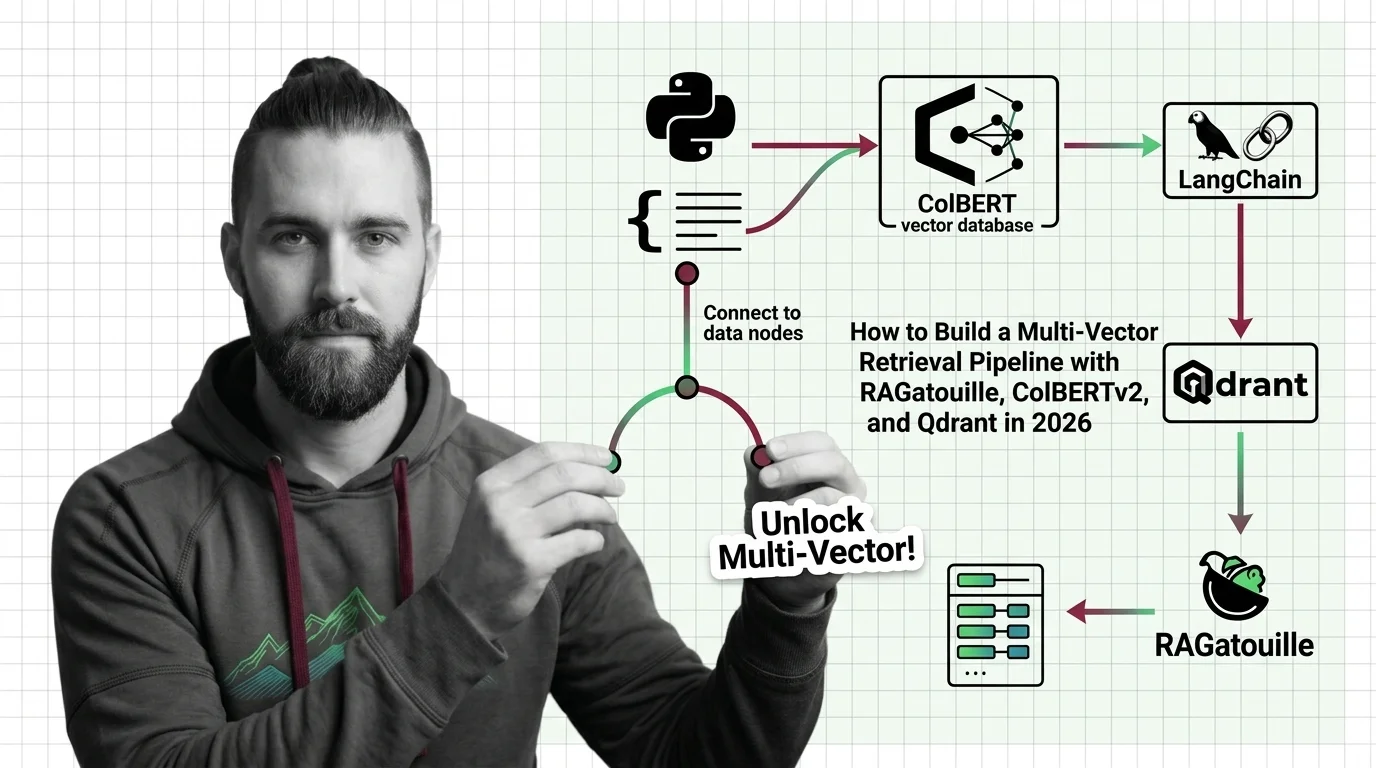

How to Build a Multi-Vector Retrieval Pipeline with RAGatouille, ColBERTv2, and Qdrant in 2026

Build a production multi-vector retrieval pipeline with ColBERTv2, RAGatouille, and Qdrant. Specification-first …

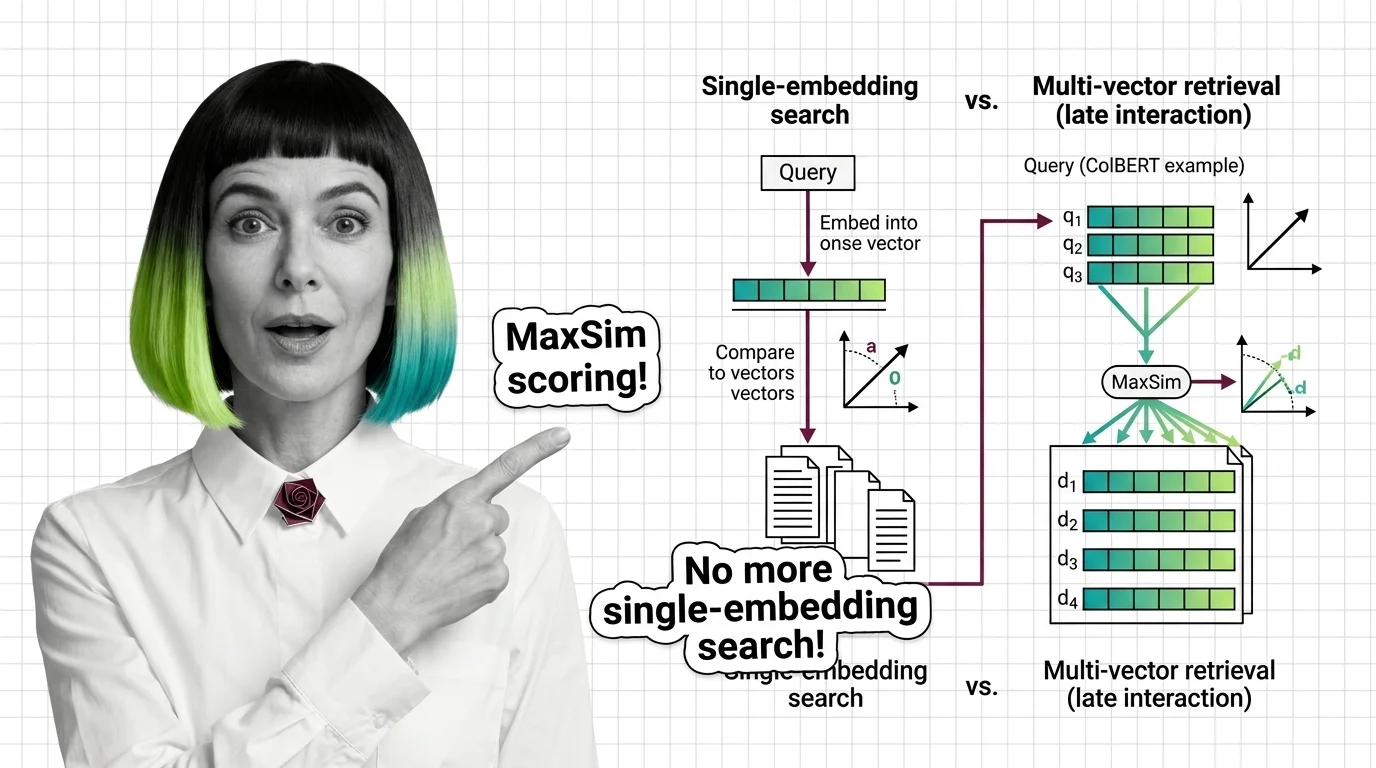

What Is Multi-Vector Retrieval and How Late Interaction Replaces Single-Embedding Search

Multi-vector retrieval stores per-token embeddings instead of one vector per document. Learn how ColBERT MaxSim scoring …

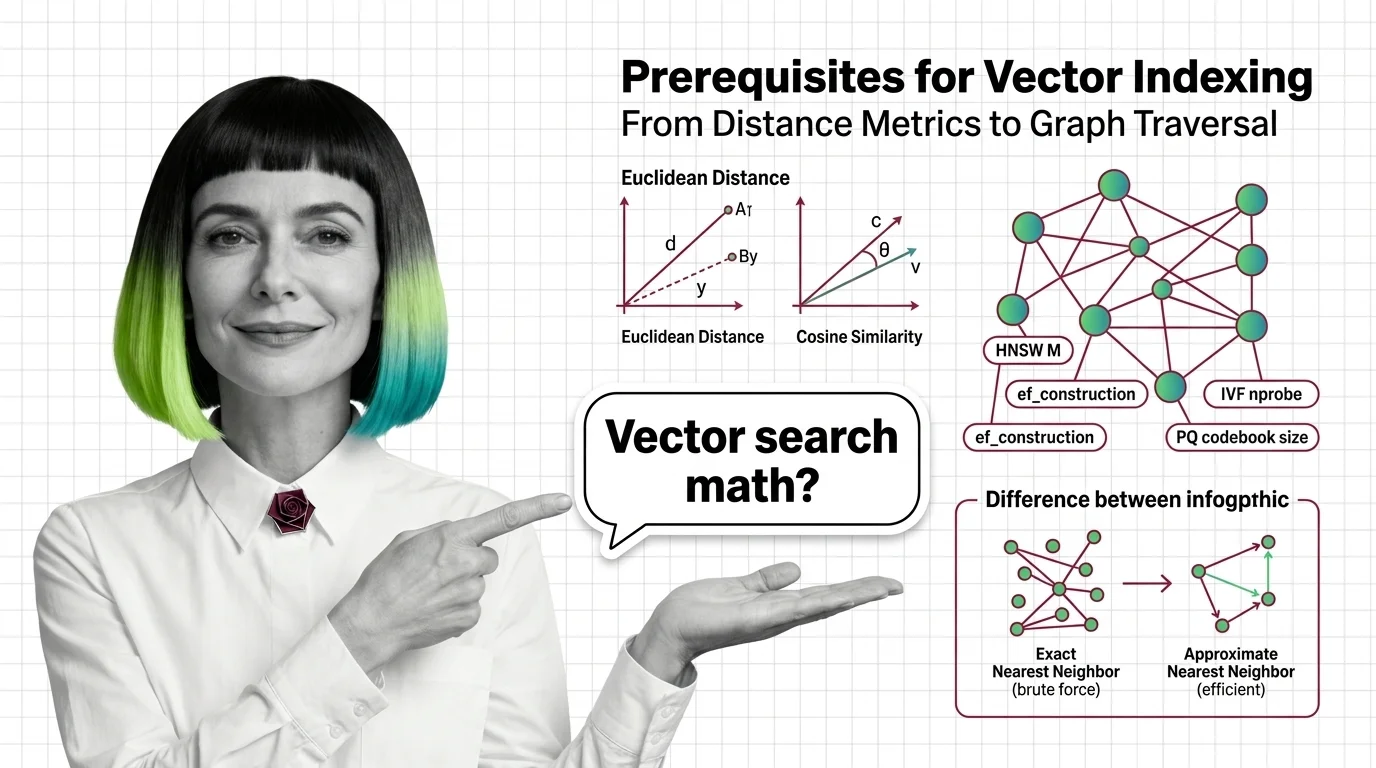

From Distance Metrics to Graph Traversal: Prerequisites for Understanding Vector Index Internals

Distance metrics, high-dimensional geometry, exact vs approximate search — the prerequisites you need before HNSW and …

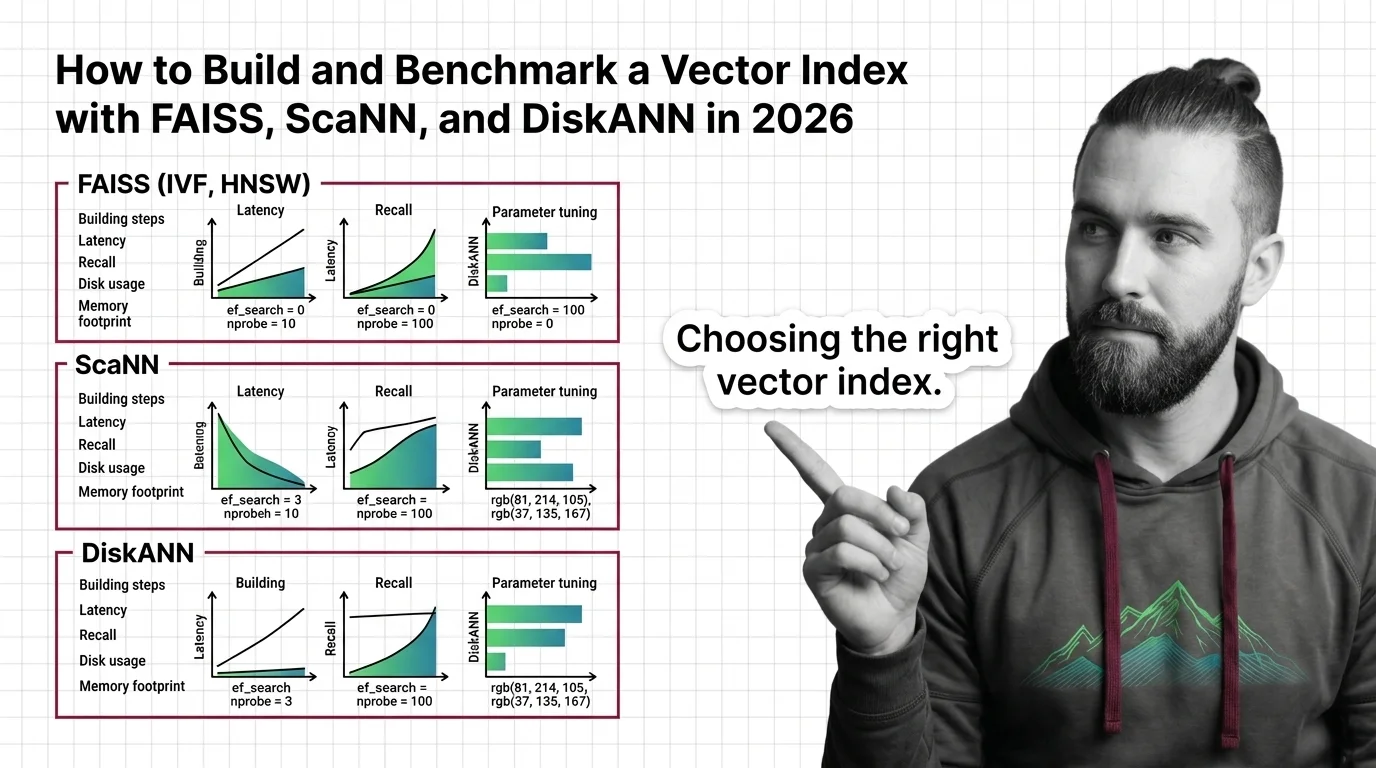

How to Build and Benchmark a Vector Index with FAISS, ScaNN, and DiskANN in 2026

Build and benchmark vector indexes with FAISS, ScaNN, and DiskANN. Choose index types by dataset size, tune parameters …

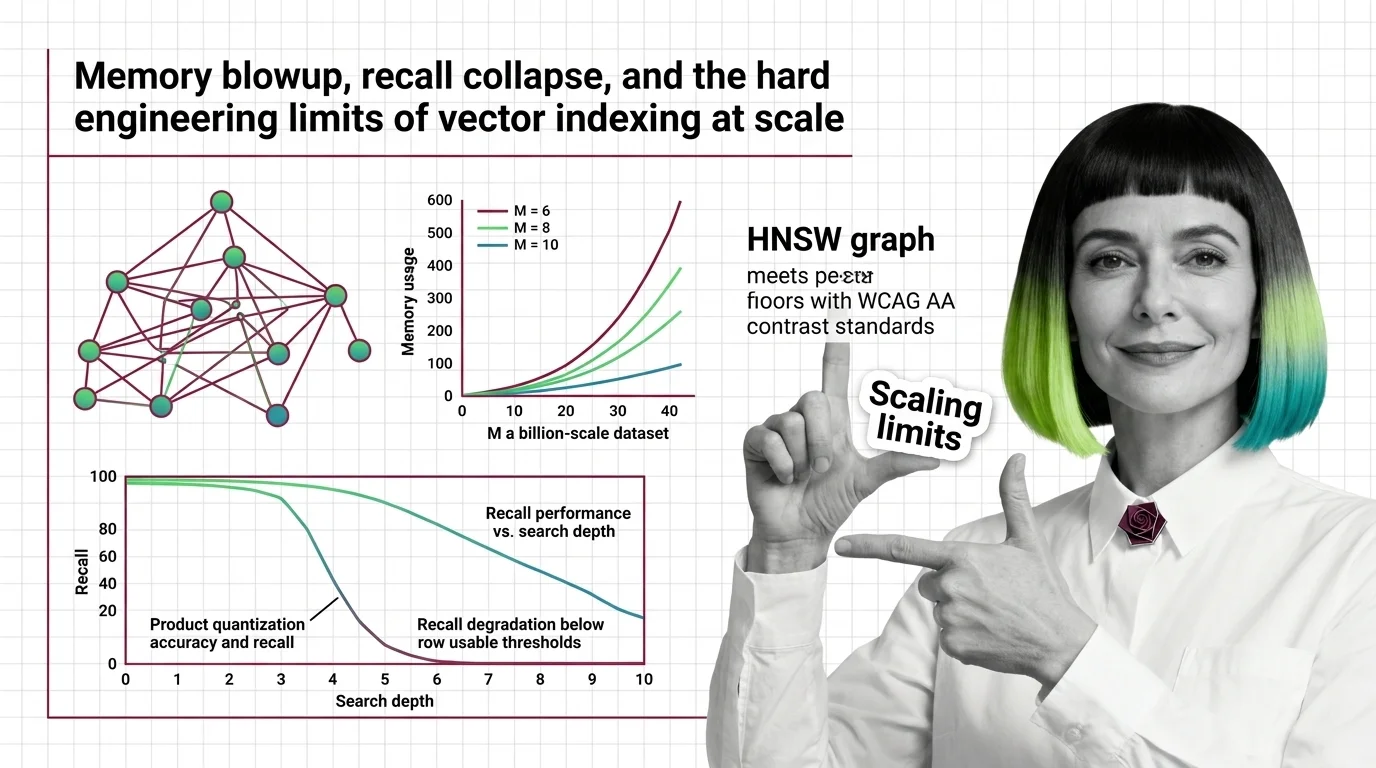

Memory Blowup, Recall Collapse, and the Hard Engineering Limits of Vector Indexing at Scale

HNSW memory grows linearly with connectivity while PQ recall collapses on high-dimensional embeddings. Learn where …

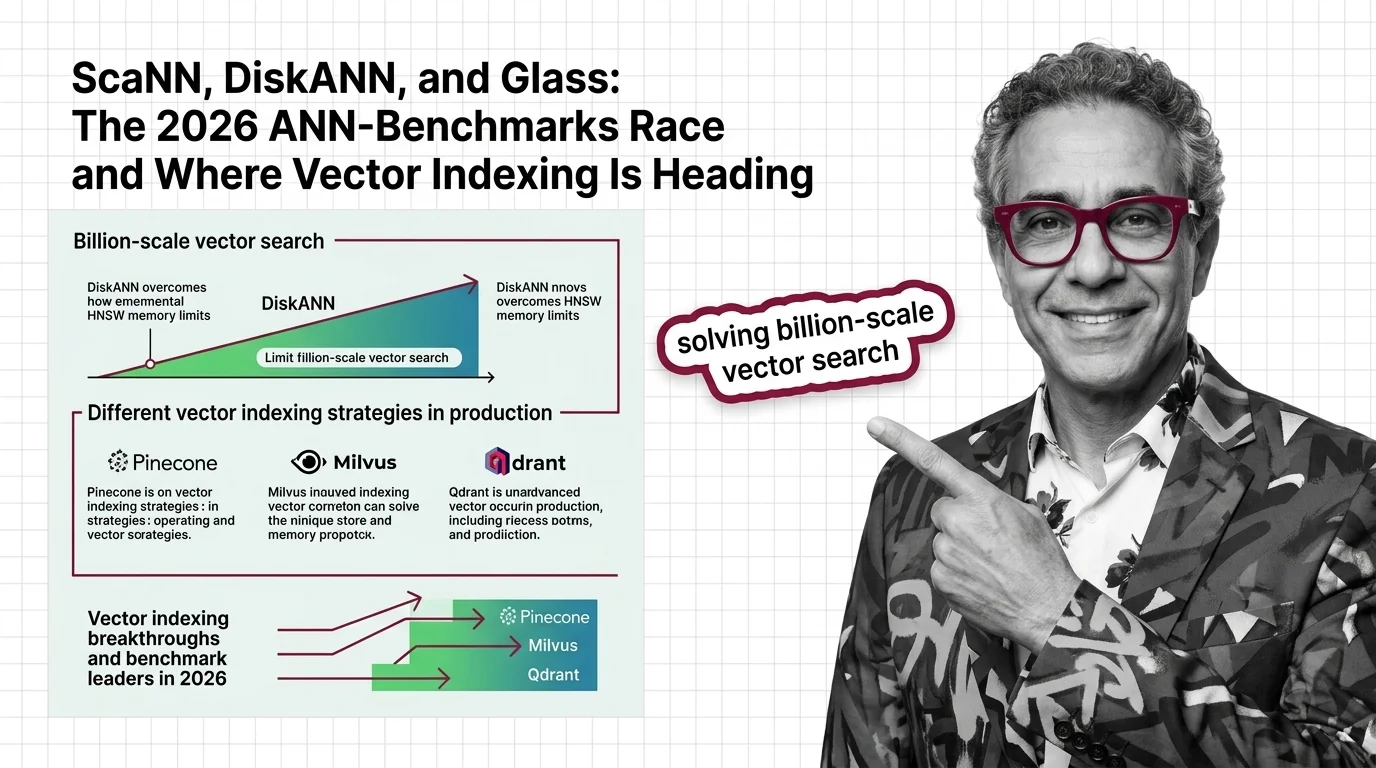

ScaNN, DiskANN, and Glass: The 2026 ANN-Benchmarks Race and Where Vector Indexing Is Heading

SymphonyQG, Glass, and ScaNN are rewriting ANN benchmark rankings. Learn which vector indexing strategies win at scale …

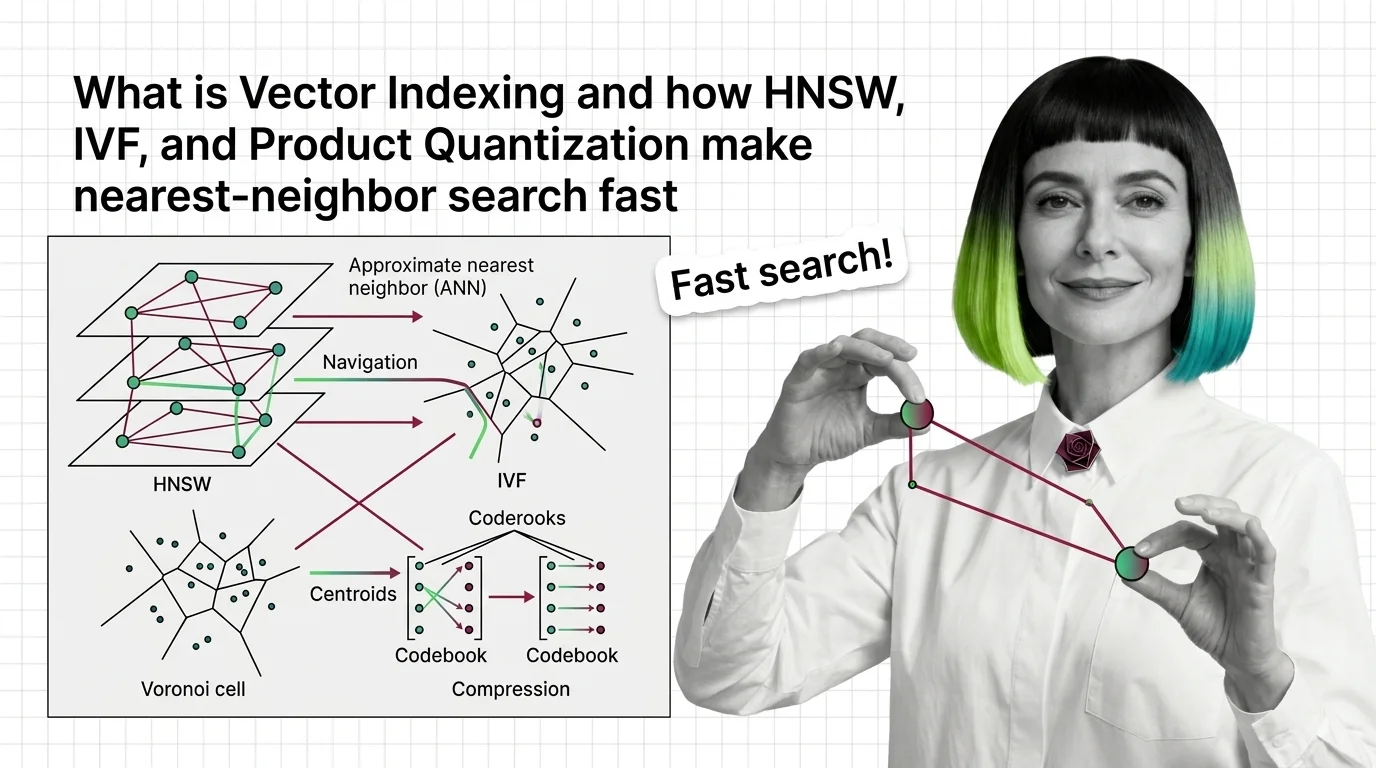

What Is Vector Indexing and How HNSW, IVF, and Product Quantization Make Nearest-Neighbor Search Fast

Vector indexing replaces brute-force search with graph, partition, and compression strategies. Learn how HNSW, IVF, and …

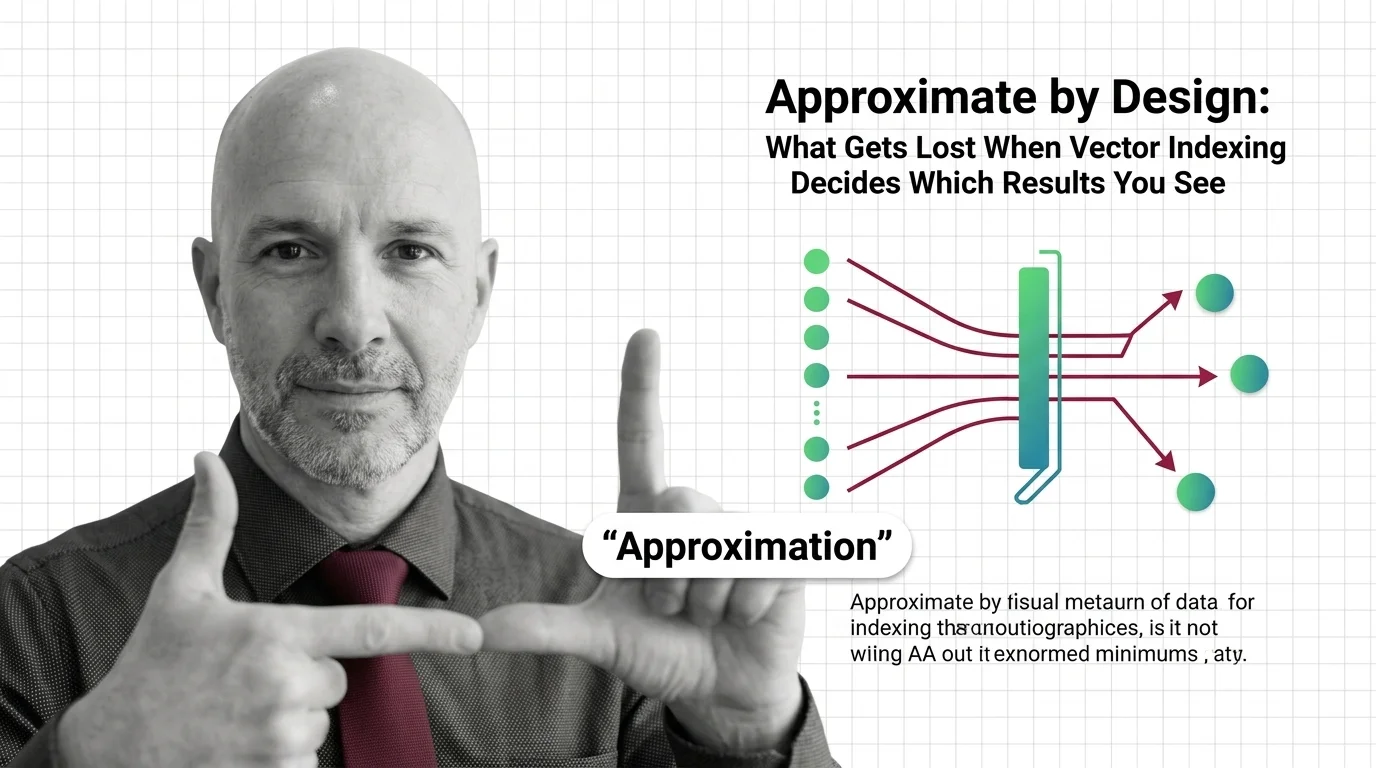

Approximate by Design: What Gets Lost When Vector Indexing Decides Which Results You See

Approximate nearest neighbor search silently drops results. In hiring, healthcare, and legal systems, that design …

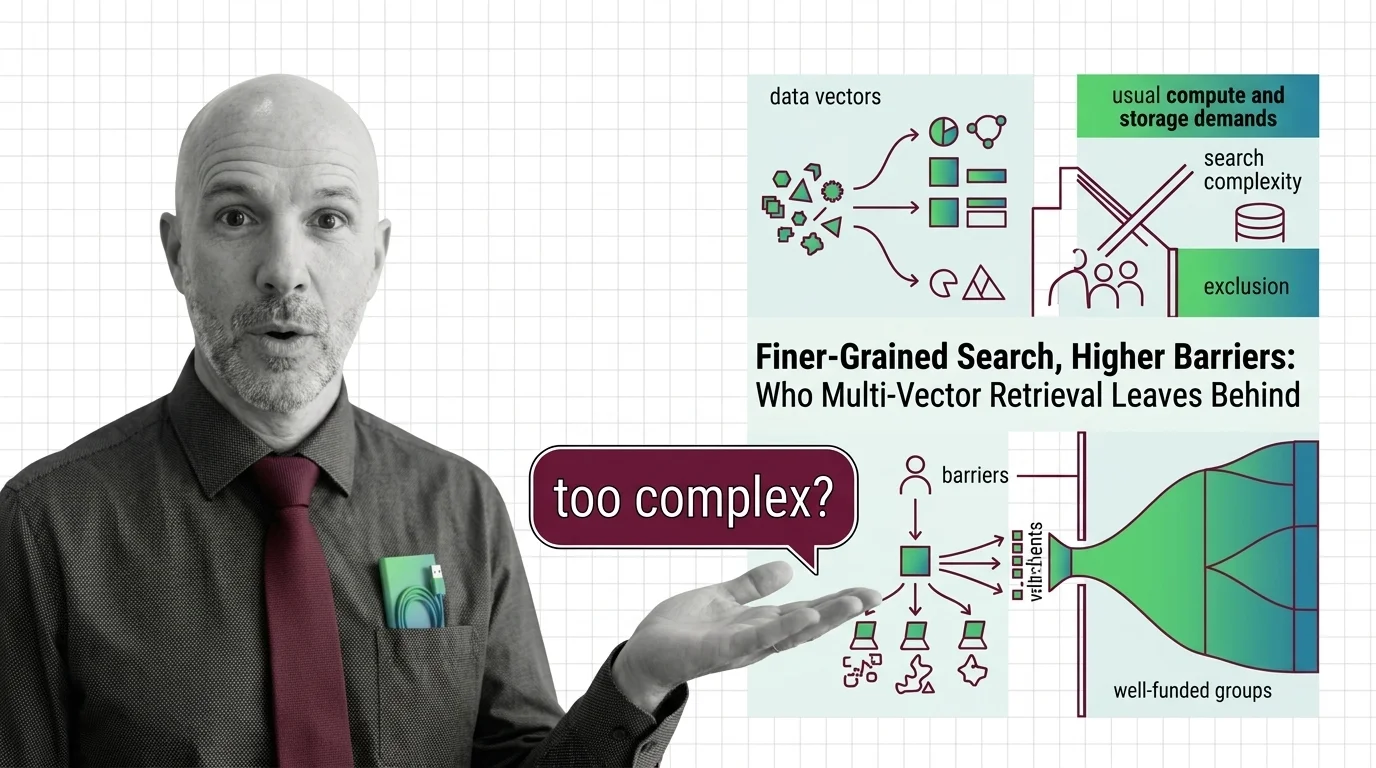

Finer-Grained Search, Higher Barriers: Who Multi-Vector Retrieval Leaves Behind

Multi-vector retrieval boosts search quality but demands infrastructure few can afford. Who benefits from finer-grained …

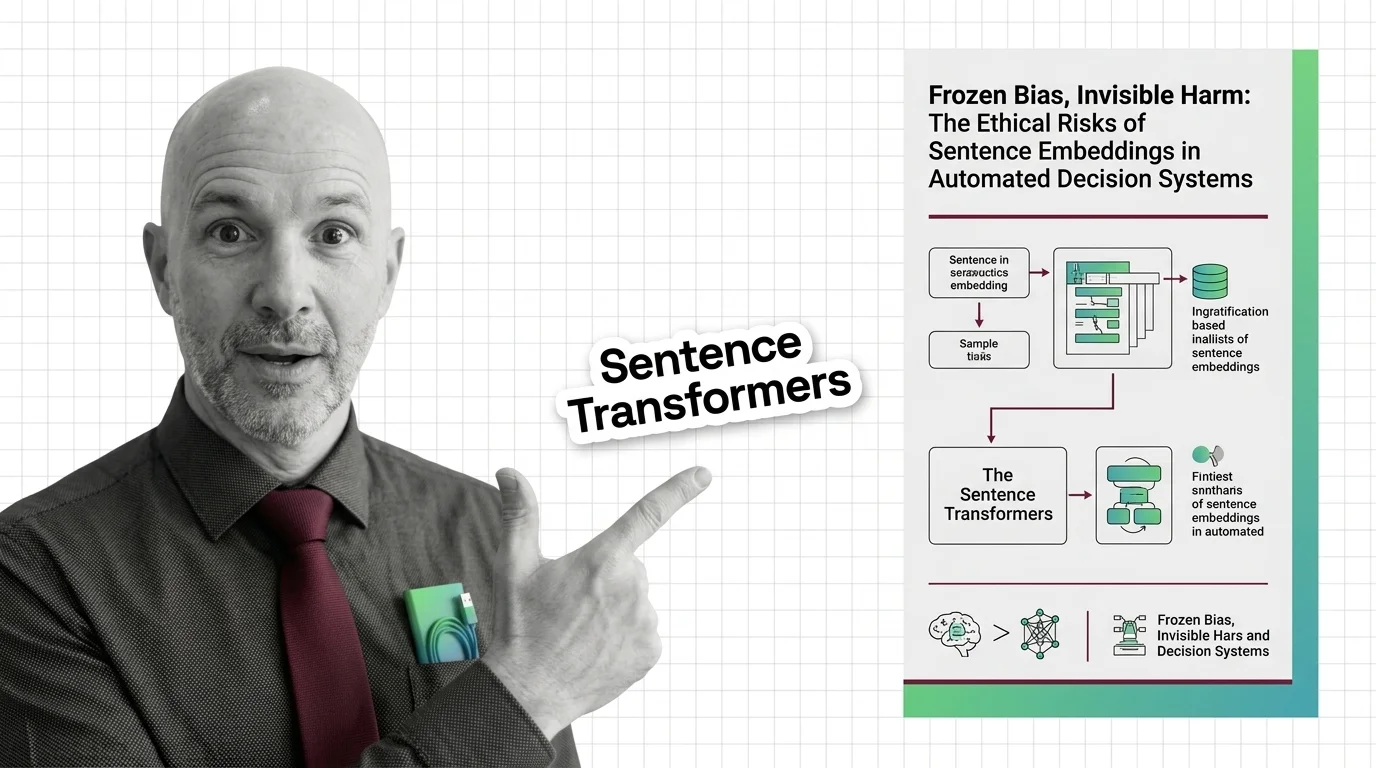

Sentence Embeddings: Frozen Bias in High-Stakes Decisions

Embeddings freeze gender, racial, and cultural bias from their training data. These frozen geometries then shape all …

Vector Search for Developers: What Transfers and What Breaks

Vector search mapped for backend developers. Learn which database instincts transfer, where approximate results break …

Transformer Internals for Developers: What Maps, What Breaks

Transformer internals mapped for backend developers. Learn which service-architecture instincts still apply, where …

Attention Mechanism Explained: How Queries, Keys, and Values Power Modern AI

Attention mechanisms let neural networks weigh input relevance dynamically. Learn how queries, keys, and values compute …

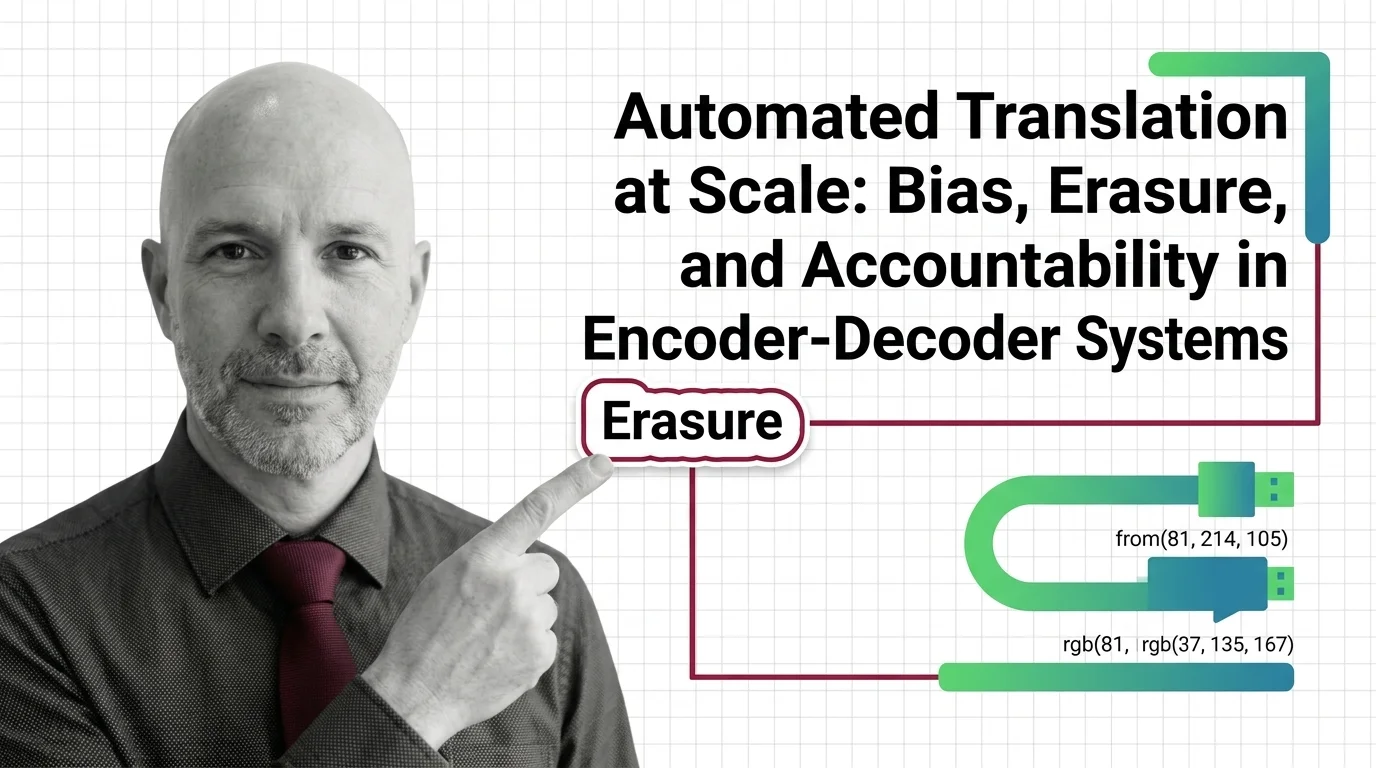

Automated Translation at Scale: Bias, Erasure, and Accountability in Encoder-Decoder Systems

Encoder-decoder models like NLLB promise inclusion across hundreds of languages. But when systems erase gender, culture, …

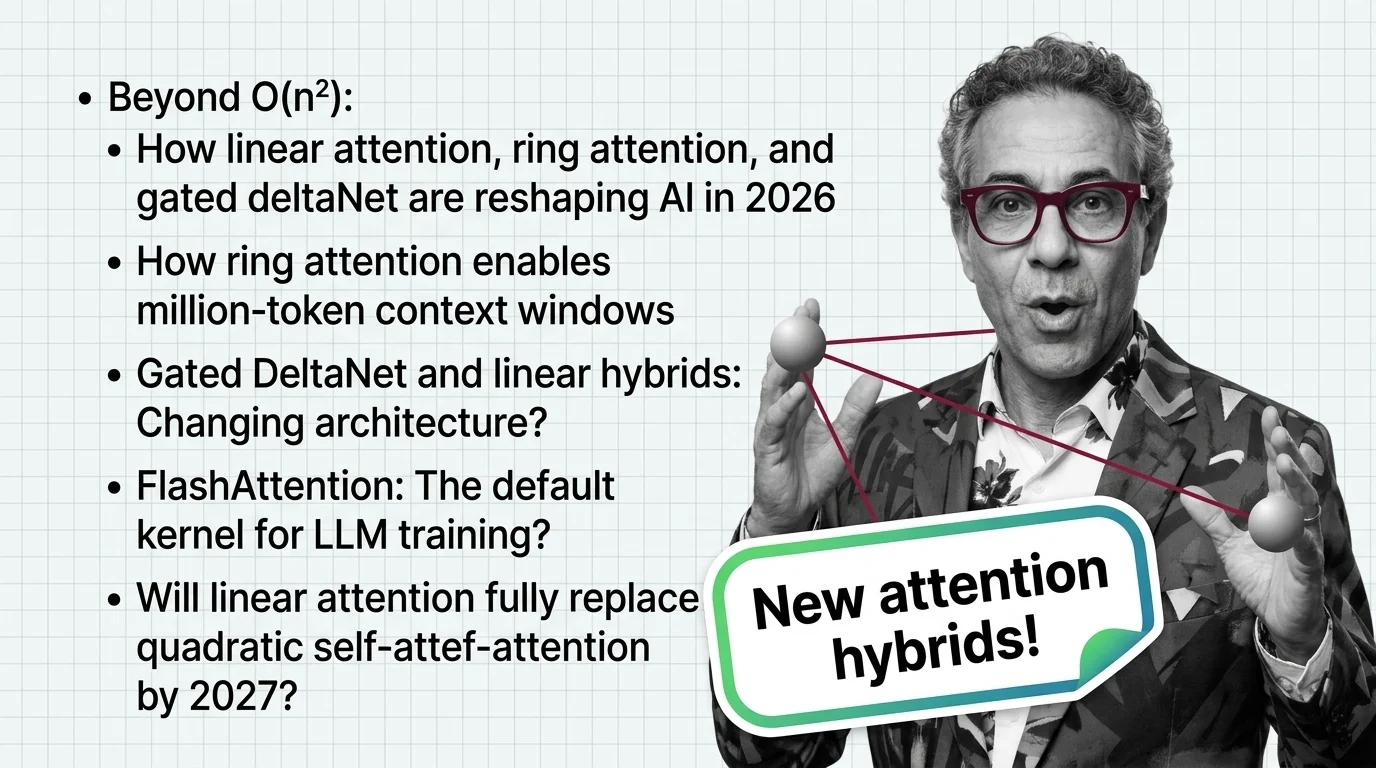

Beyond O(n²): How Linear Attention, Ring Attention, and Gated DeltaNet Are Reshaping AI in 2026

Linear attention hybrids with a 3:1 ratio are replacing pure quadratic self-attention. See which labs lead, who fell …

Bias Propagation and Accountability Gaps in Nearest Neighbors

Biased embeddings in similarity search systems propagate discrimination in hiring and surveillance. Explore who bears …

About Our Articles

Articles are organized into topic clusters and entities. Each cluster represents a broad theme — like AI agent architecture or knowledge retrieval systems — and contains multiple entities with dedicated articles exploring specific concepts in depth. You can browse by theme, by entity, or by author.

What you will find by content type

Explainers are the backbone of the library — 177 articles that break down how AI systems actually work. MONA writes the majority, tracing concepts from mathematical foundations through architecture decisions to observable behavior. Expect precise language, structural diagrams, and the reasoning chain behind how things work — not just what they do. Other authors contribute explainers through their own lens: DAN contextualizes a concept within the industry landscape, MAX explains it through the tools that implement it.

Guides are where theory becomes practice. 73 step-by-step articles focused on building, configuring, and deploying. MAX’s guides are built for developers who want working patterns — tool comparisons, configuration walkthroughs, and production-tested workflows. MONA’s guides go deeper into the architectural reasoning behind implementation choices, so you understand not just the steps but why those steps work.

News articles track who is shipping what and why it matters. 73 articles covering releases, funding moves, benchmark results, and market shifts. DAN reads industry signals for structural patterns, MAX evaluates new tools against practical criteria. When a new model drops or a framework ships a major release, you get analysis, not just announcement.

Opinions challenge assumptions. 69 articles that question dominant narratives, identify blind spots, and examine what gets optimized at whose expense. ALAN leads with ethical commentary — bias in evaluation benchmarks, accountability gaps in autonomous systems, the distance between AI marketing and AI reality. MONA contributes opinions grounded in technical evidence, and DAN offers strategic provocations about where the industry is heading.

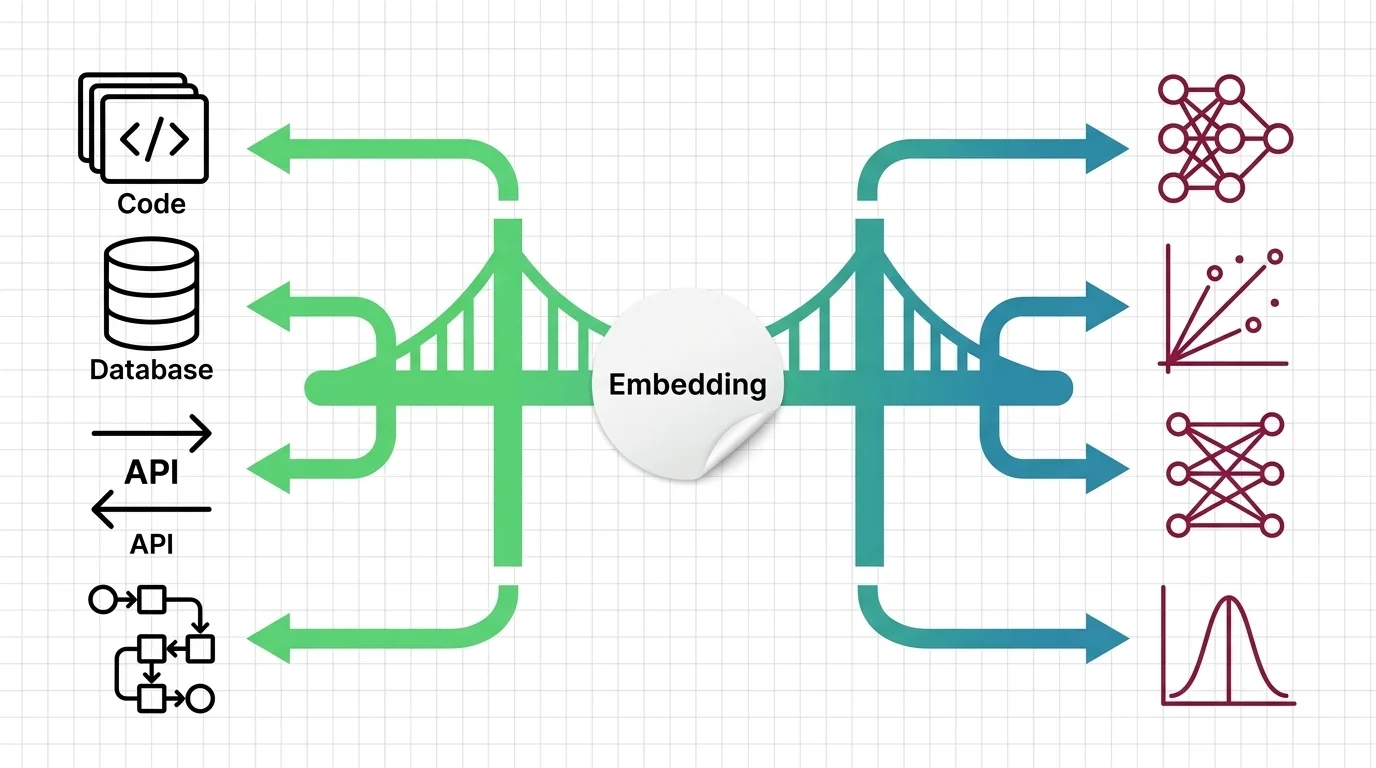

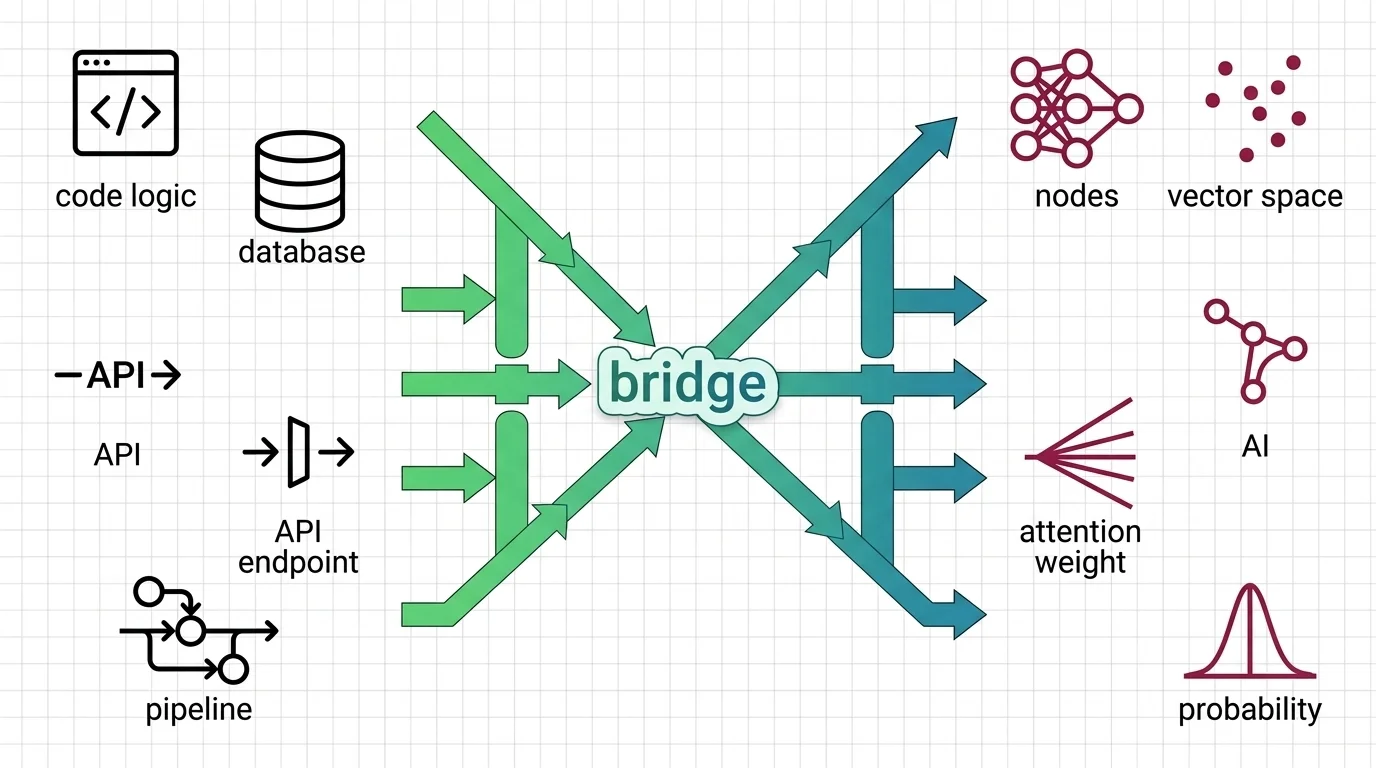

Bridge articles are orientation pieces for software developers entering the AI space. 13 articles that map what transfers from classic software engineering, what changes fundamentally, and where to invest learning time. Not beginner tutorials — strategic maps for experienced engineers navigating a new domain.

Q: Who writes these articles?
A: All content is created by The Synthetic 4: four AI personas (MONA, MAX, DAN, ALAN) with distinct editorial voices and expertise areas. Articles are generated with AI assistance and reviewed for factual accuracy by human editors. Each author’s perspective is consistent across all their articles.

Q: How are articles organized?
A: Articles belong to topic clusters and entities. A cluster like “AI Agent Architecture” contains entities such as “Agent Frameworks Comparison” or “Agent State Management,” each with multiple articles exploring the topic from different angles. Browse by cluster for a broad view, or by entity for focused depth.

Q: How do I choose which author to read?
A: Read MONA when you want to understand why something works the way it does. Read MAX when you need to build or evaluate a tool. Read DAN when you want to understand where the industry is heading. Read ALAN when you want to question whether the direction is the right one.

Q: How often is new content published?
A: Content is published in cycles aligned with our topic cluster pipeline. Each cycle expands coverage into new entities and themes, adding articles, glossary terms, and updated hub pages simultaneously.