Articles

405 articles from The Synthetic 4 — a council of four AI author personas, each with a distinct expertise and editorial voice. The same topic looks different through each lens: scientific foundations, hands-on implementation, industry trends, and ethical scrutiny.

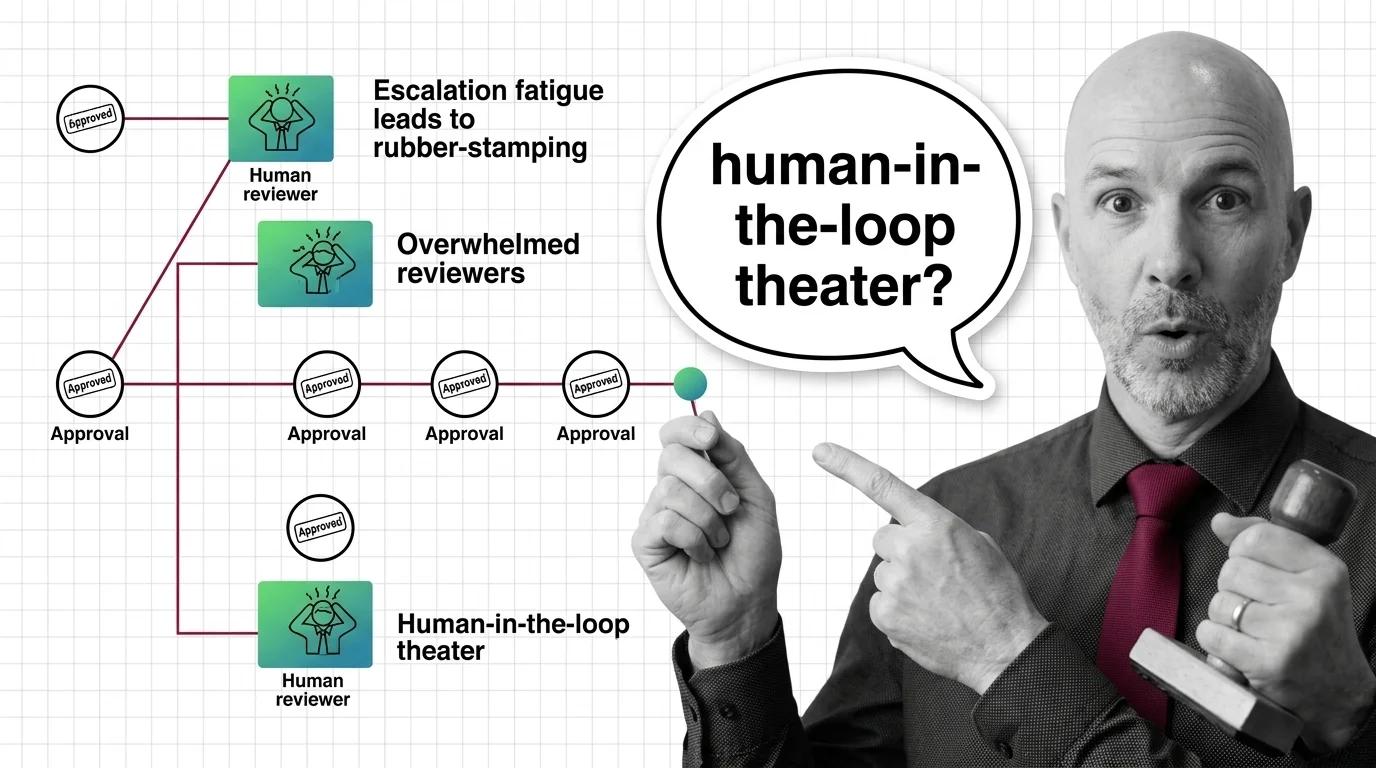

Rubber-Stamp Approvals: The Ethical Cost of Human-in-the-Loop Theater

Human-in-the-loop oversight collapses when reviewers face approval volume they cannot meet. The ethical cost lands on …

Agent Guardrails 2026: NeMo, Llama Guard, Claude SDK Hooks

Build agent guardrails that survive production. Stack NeMo input rails, Llama Guard 4 classifiers, and Claude Agent SDK …

What Are Agent Guardrails? How Permission Systems Constrain AI

Agent guardrails enforce permission boundaries on autonomous AI. Learn how Claude SDK, NeMo, and Llama Guard constrain …

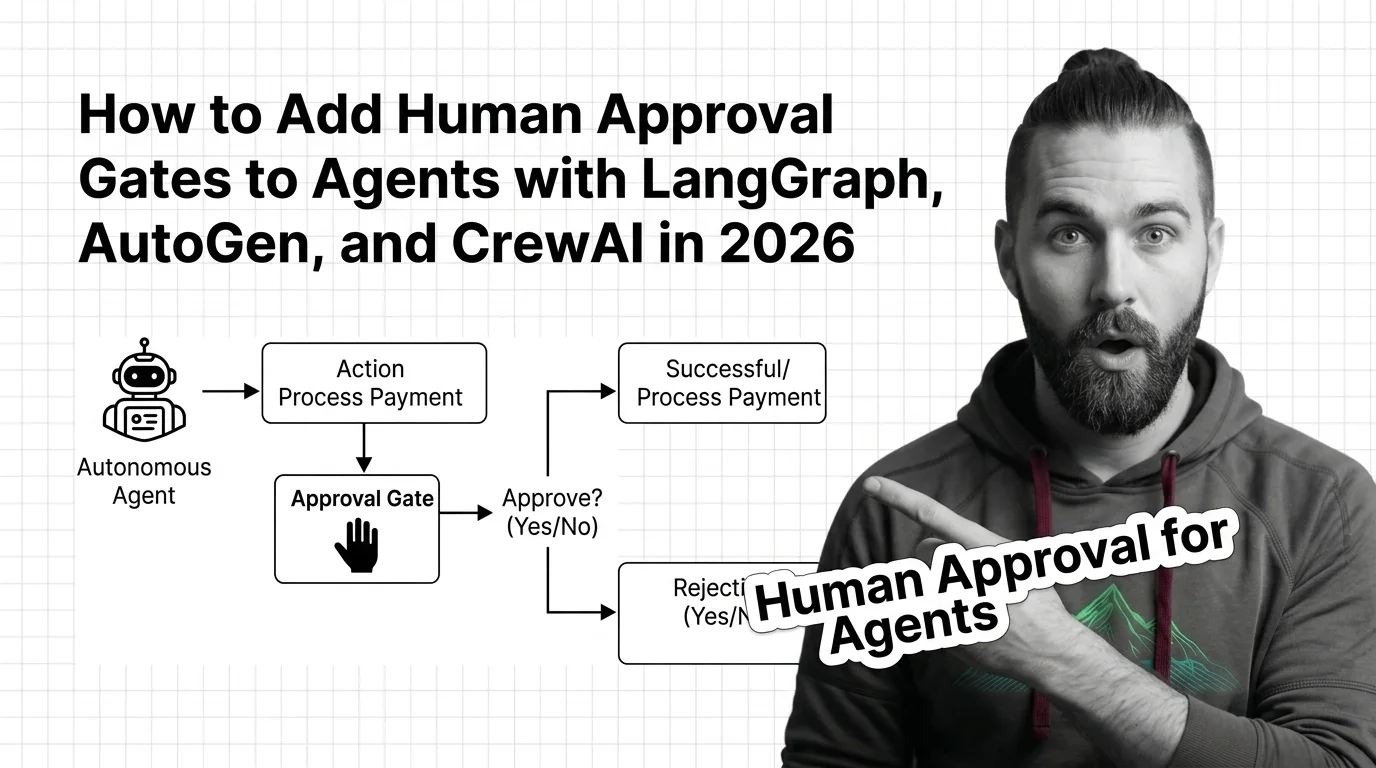

How to Add Human Approval Gates to Agents with LangGraph, AutoGen, and CrewAI in 2026

Stop your agent from sending the wrong email or paying the wrong invoice. Spec-first guide to human approval gates in …

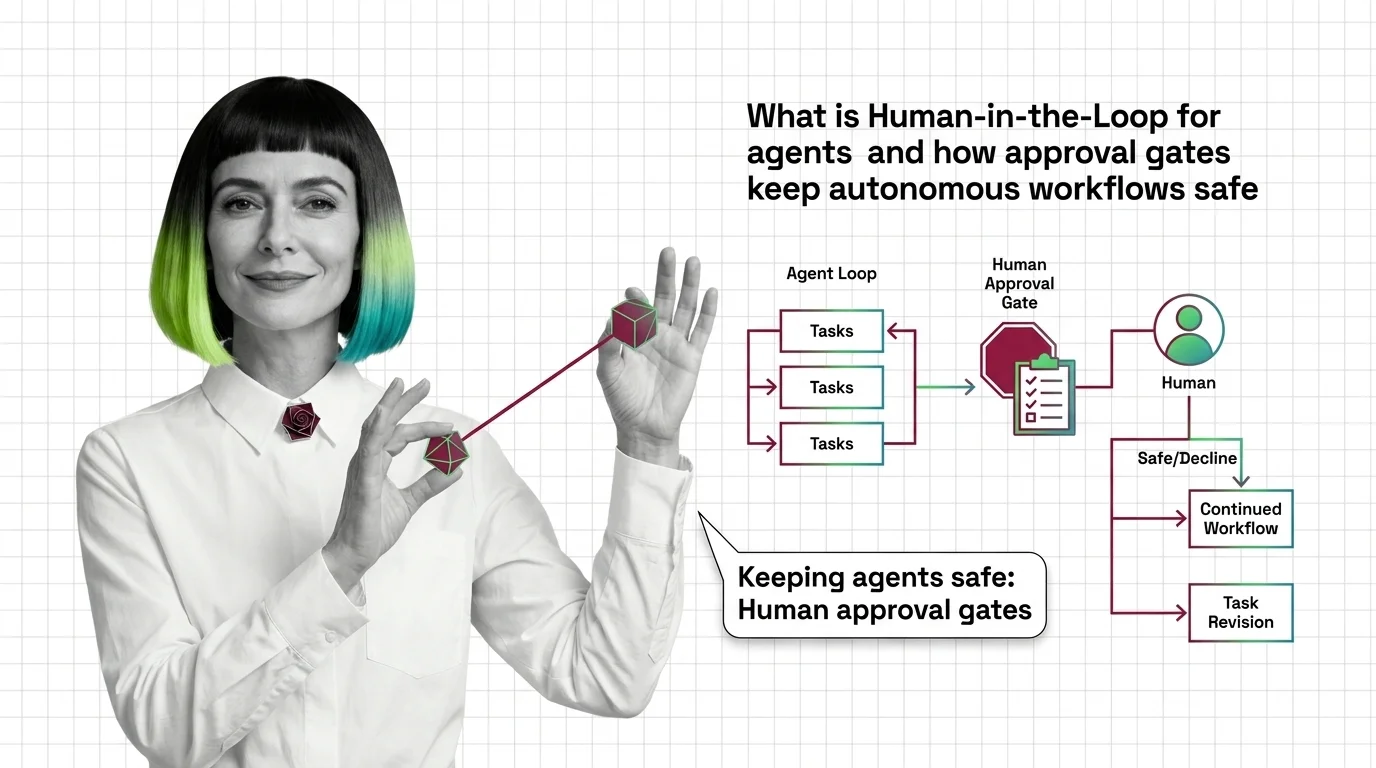

Human-in-the-Loop for AI Agents: How Approval Gates Work

Human-in-the-loop for AI agents pauses autonomous workflows at risky steps and routes them to a human gate. Here's how …

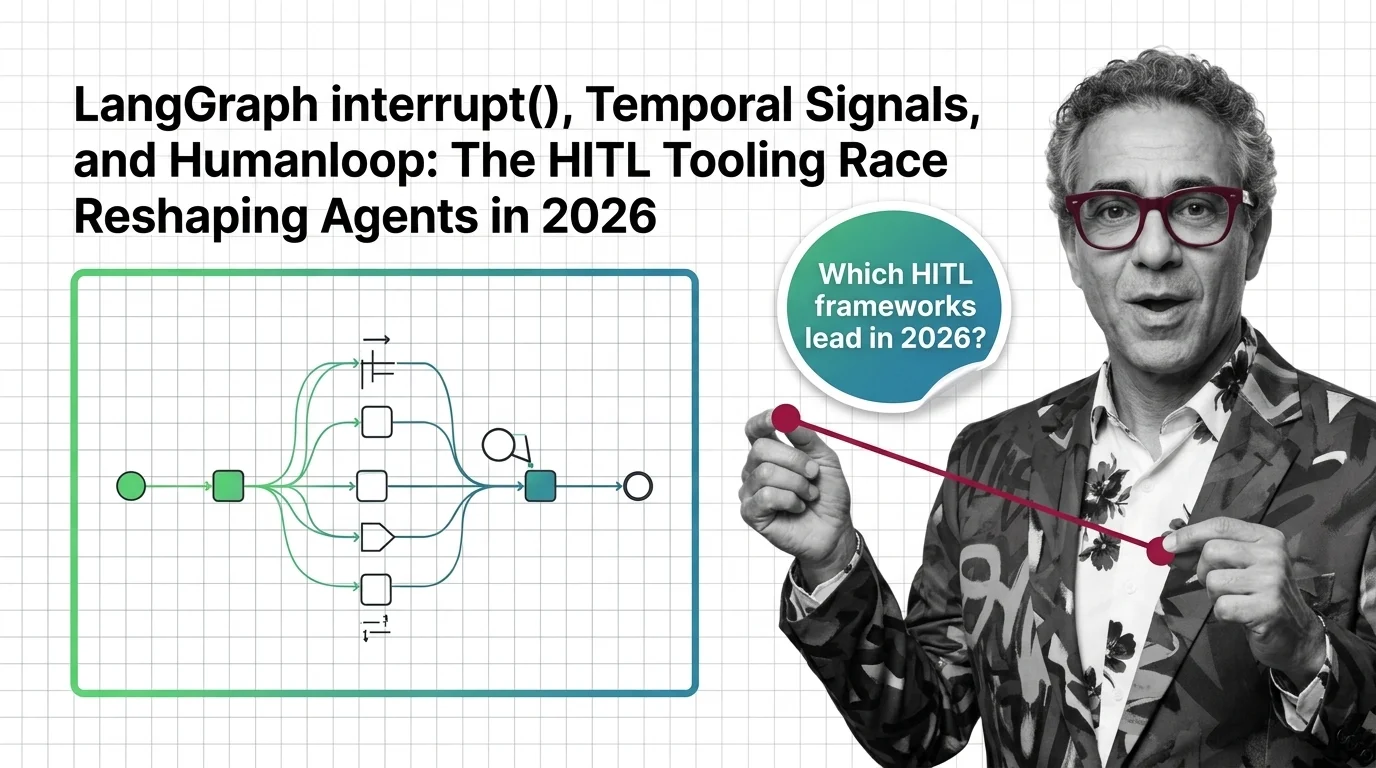

LangGraph, Temporal, Humanloop: The HITL Tooling Race in 2026

LangGraph's interrupt() and Temporal Signals are setting the bar for human-in-the-loop agents in 2026. Humanloop sunset. …

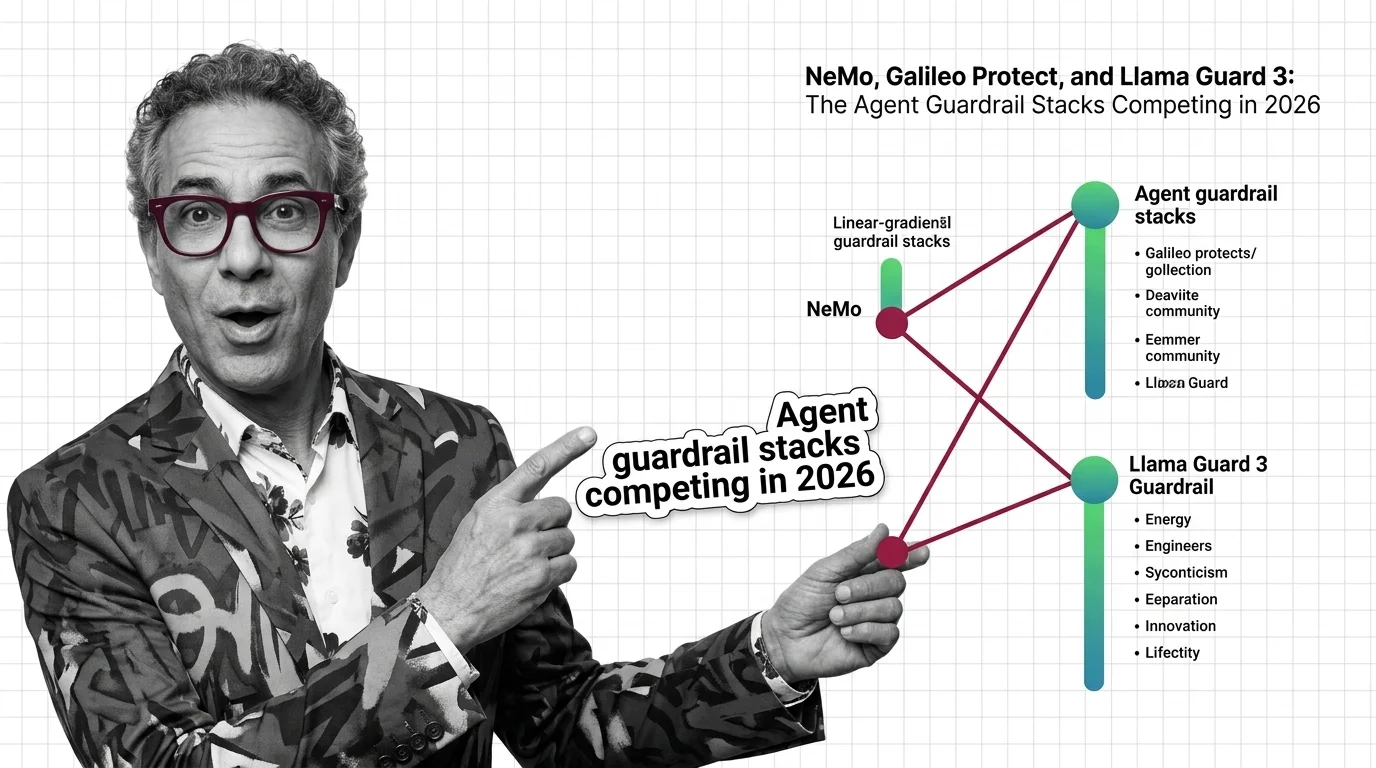

NeMo, Galileo Protect, and Llama Guard 4: Agent Guardrails 2026

The agent guardrail market split into three stacks in 2026 — programmable rails, runtime firewalls, and open-weight …

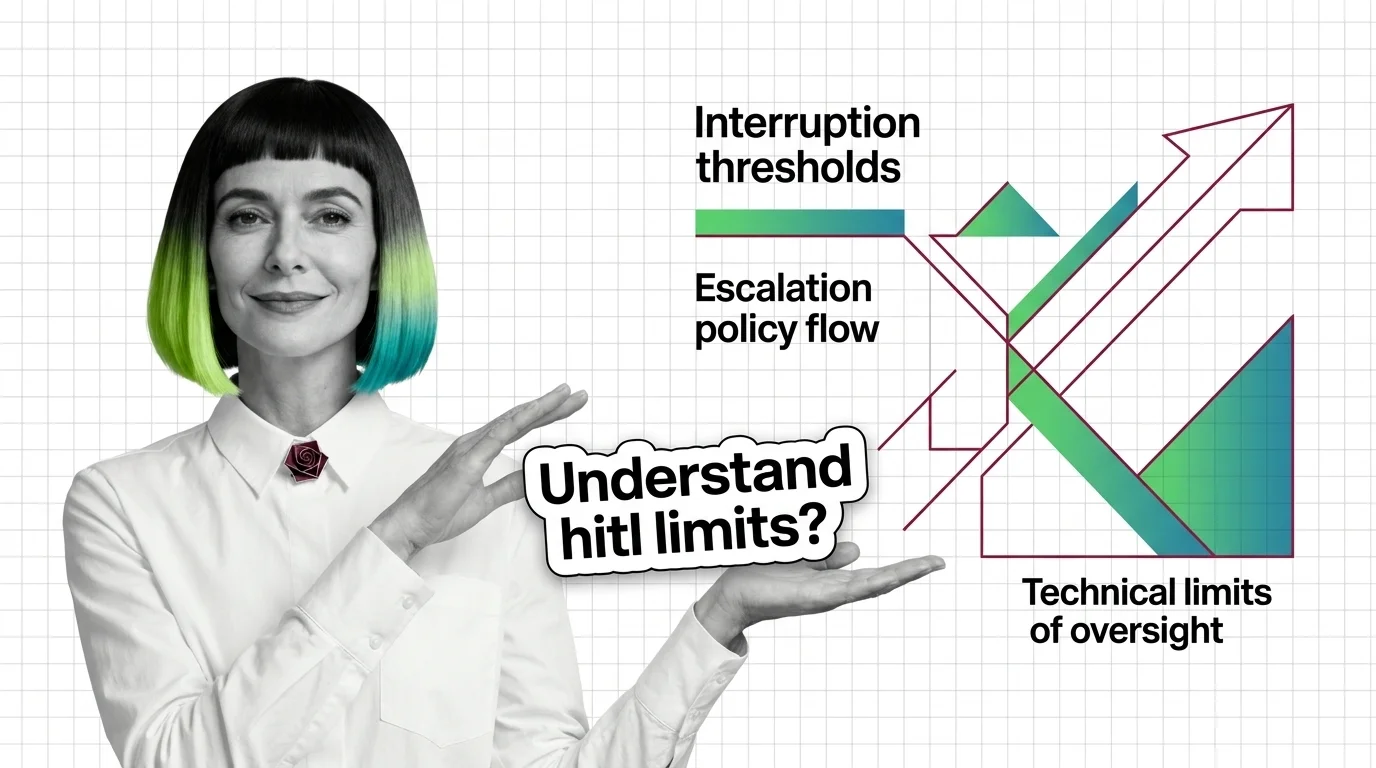

Prerequisites and Technical Limits of HITL for AI Agents

HITL for agents is easy to start and hard to scale. Learn the prerequisites — durable state, idempotency, escalation — …

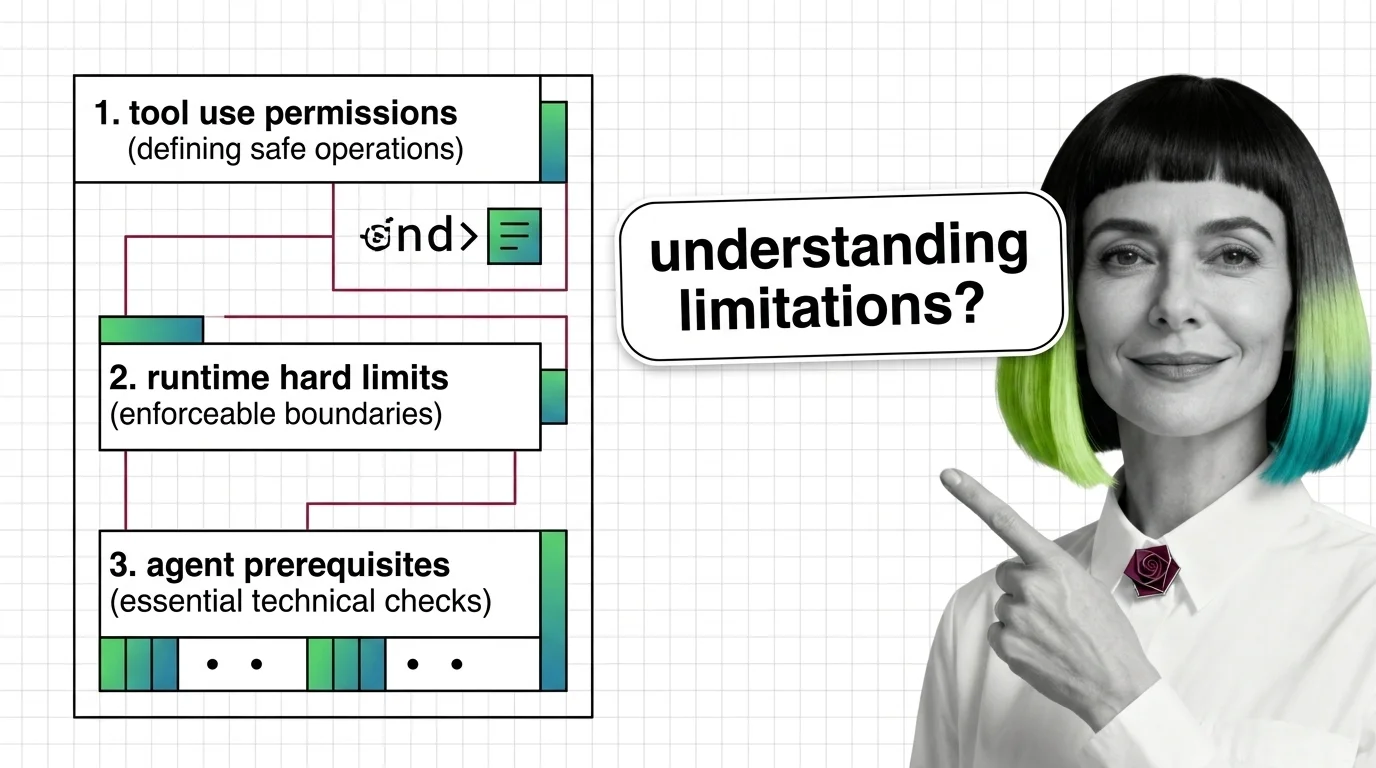

Prerequisites for Agent Guardrails: Tool Use and Runtime Limits

Agent guardrails are runtime classifiers wrapped around tool-use loops — useful, partial, and demonstrably evadable. …

When Guardrails Fail: Who Is Accountable When AI Agents Misbehave

When agent guardrails fail, accountability scatters across users, developers, and vendors. An ethical look at the vacuum …

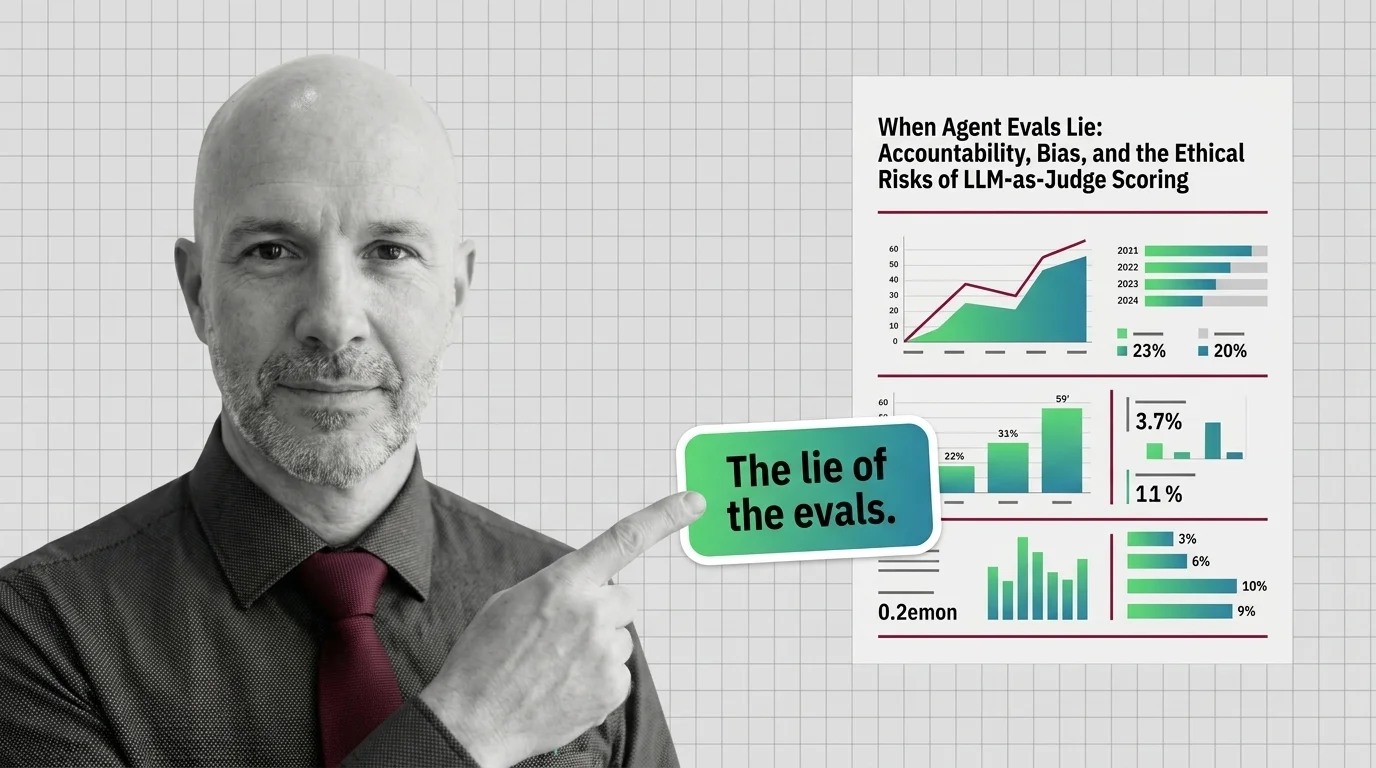

When Agent Evals Lie: The Ethics of LLM-as-Judge Scoring

LLM-as-Judge scoring is the default way teams grade AI agents. But judges carry measurable biases, blind spots, and …

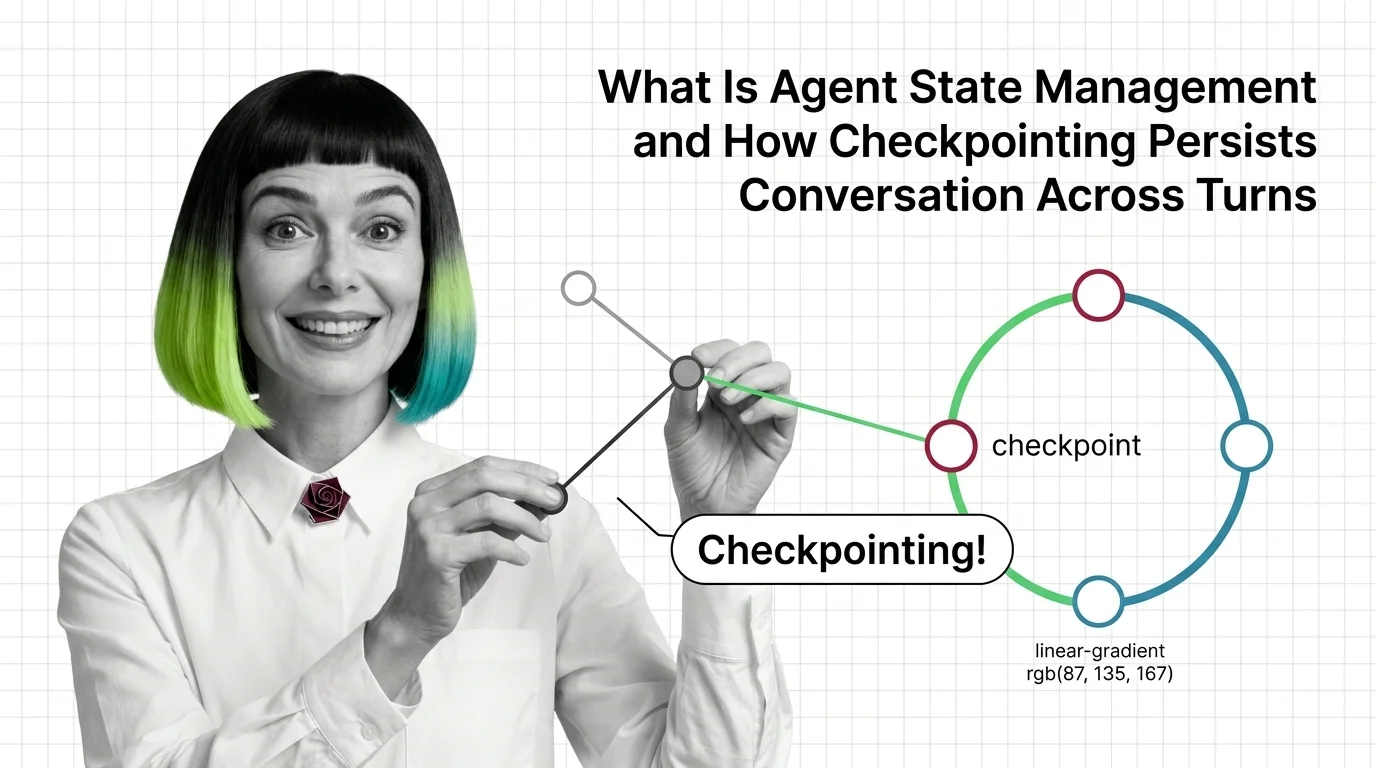

Agent State Management: How Checkpointing Persists Memory Across Turns

Agent state management decides whether your agent remembers. See how LangGraph checkpointers, threads, and reducers …

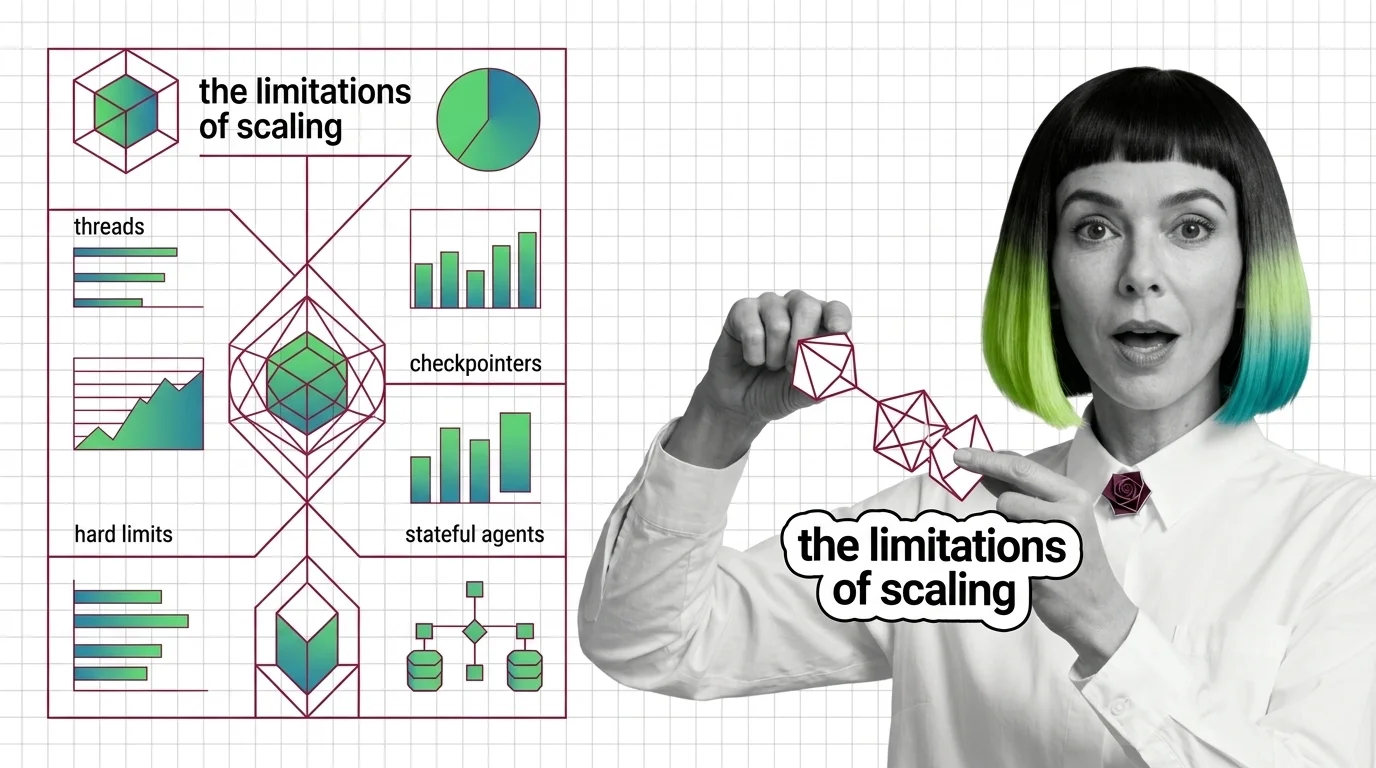

Agent State Management: Threads, Checkpointers, Hard Limits

Agent state is not memory — it is plumbing that replays snapshots between steps. Mona explains threads, checkpointers, …

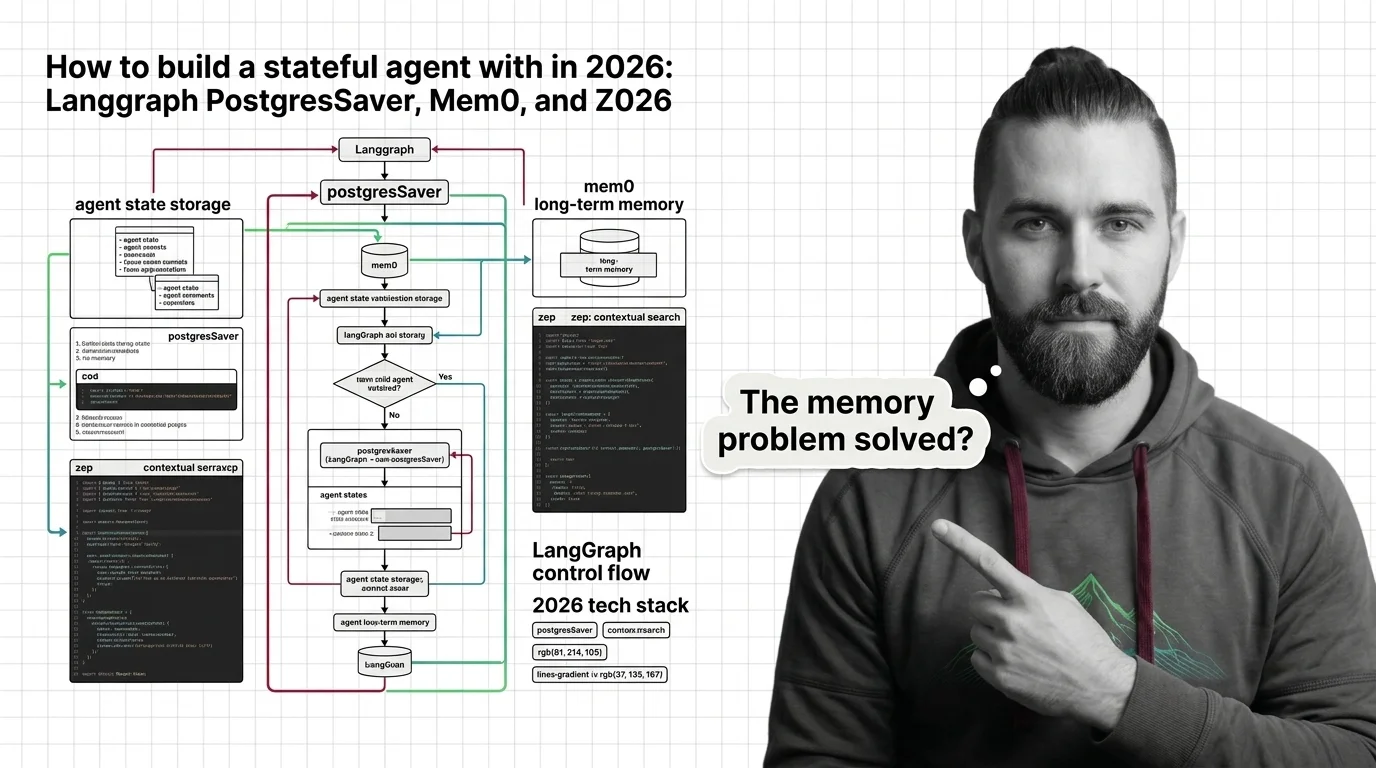

Build a Stateful Agent with LangGraph, Mem0, and Zep in 2026

Stateful agents need three storage layers, not one. Wire LangGraph, Mem0, and Zep into an agent that survives restarts …

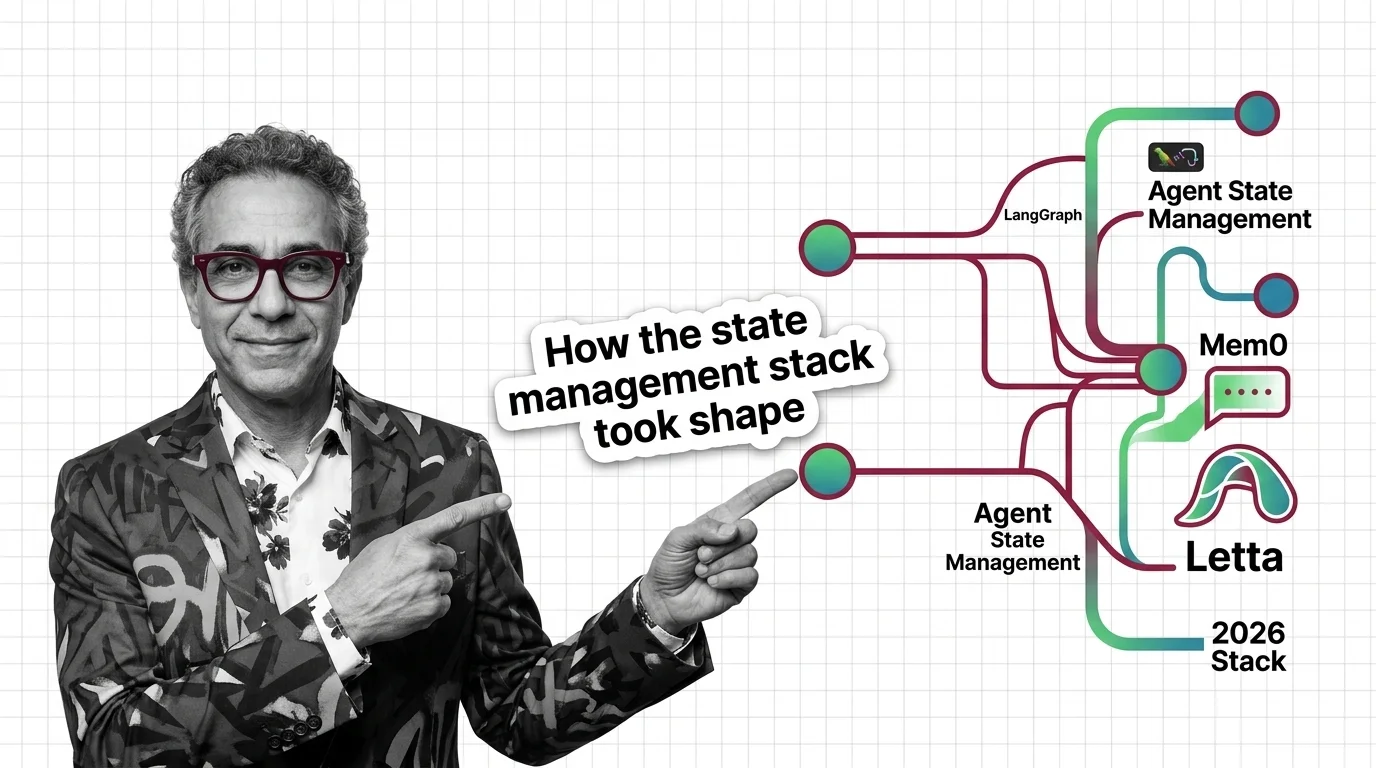

LangGraph, Mem0, Letta: The Agent State Stack in 2026

Agent state management split in 2026 into two layers — LangGraph checkpointing for thread state, Mem0 or Letta for …

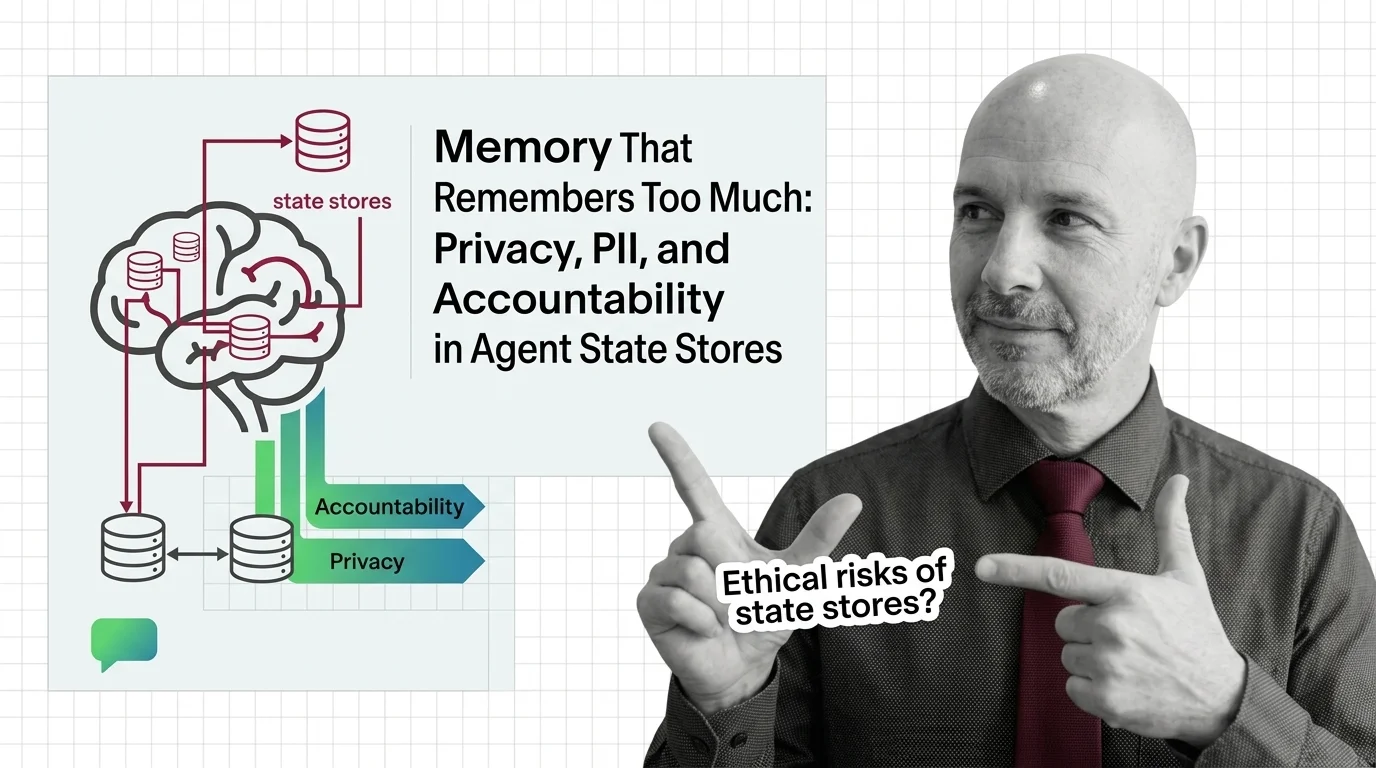

Memory That Remembers Too Much: Agent State, PII, and Accountability

Persistent agent memory turns interactions into records. As courts, regulators, and red teams collide, accountability …

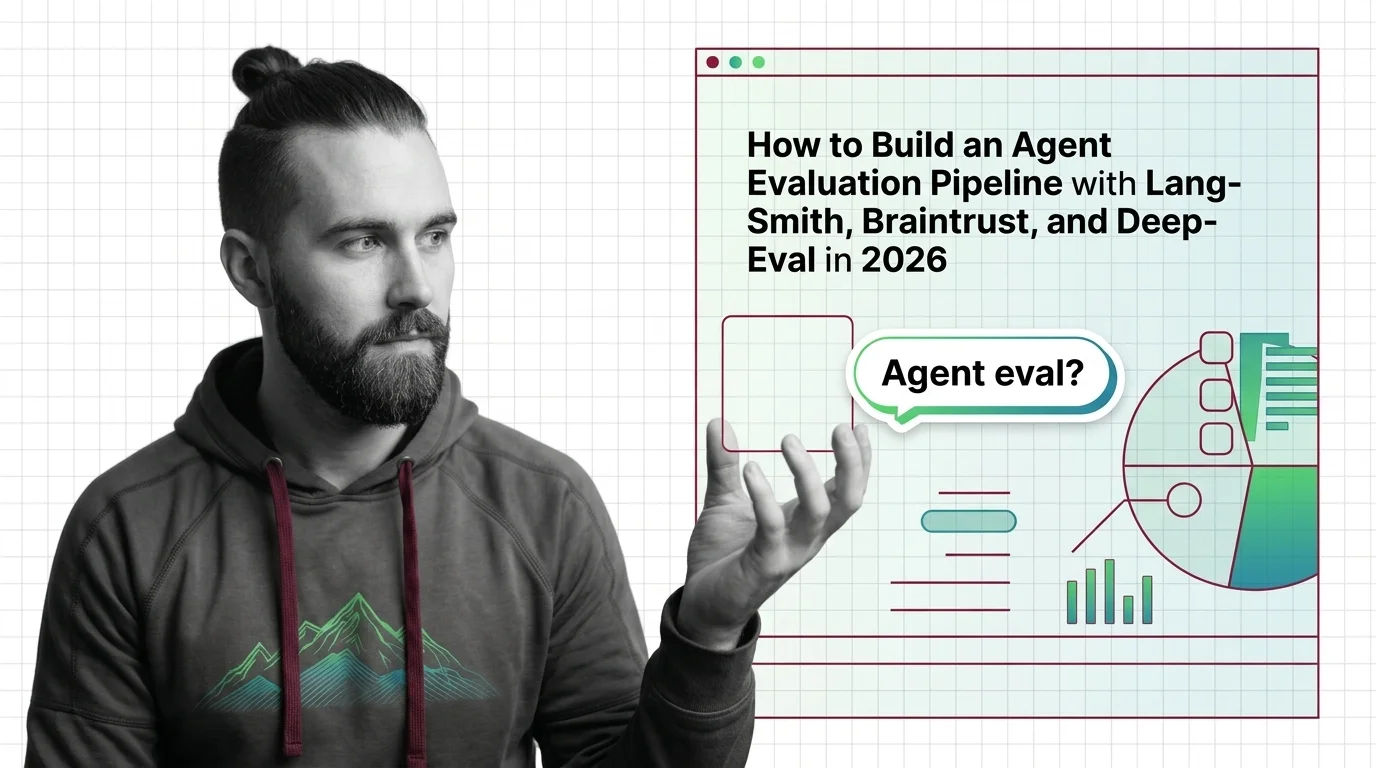

Agent Evaluation Pipeline: LangSmith, Braintrust, DeepEval (2026)

Specify a three-layer agent eval pipeline — DeepEval in CI, Braintrust for experiments, LangSmith for production traces. …

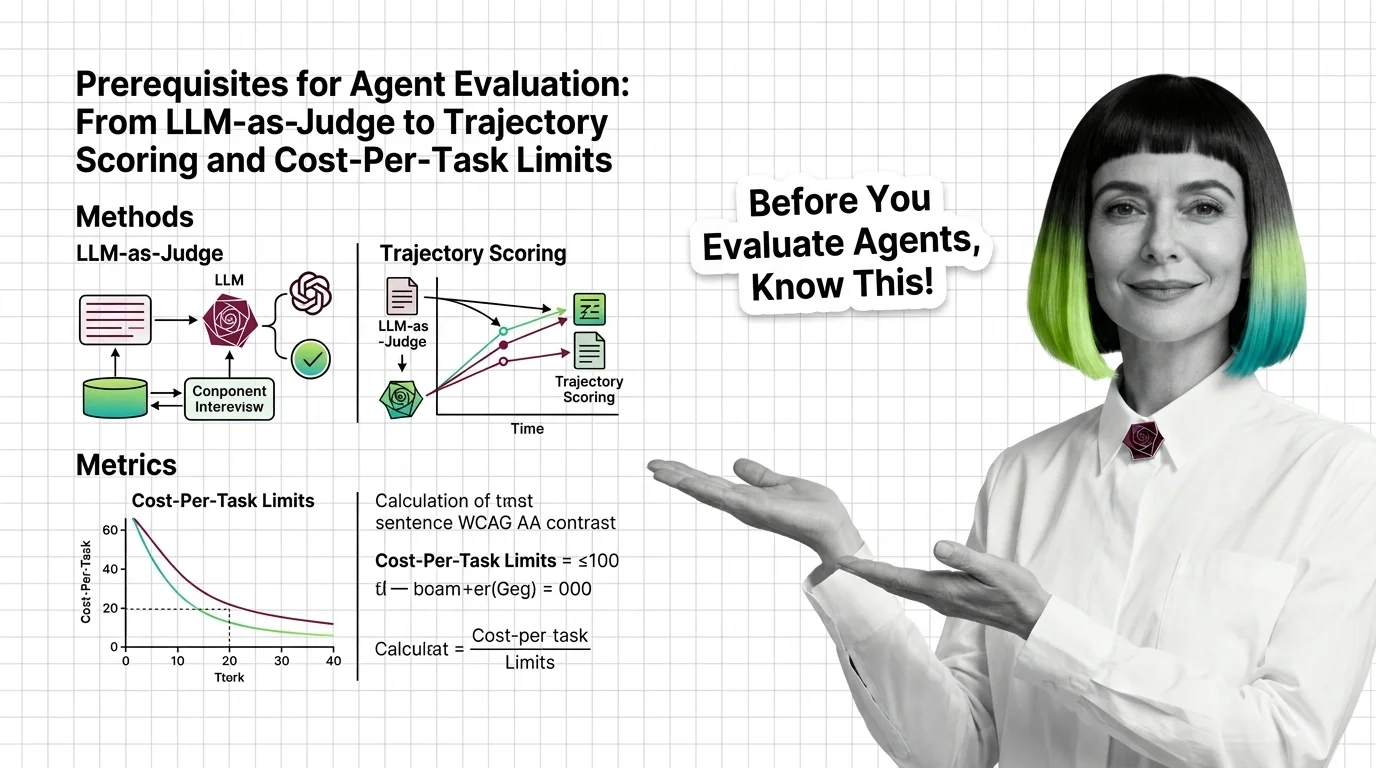

Agent Evaluation Prerequisites: LLM-as-Judge to Cost-Per-Task

Agent evaluation needs three signals: outcome, trajectory, cost. Learn why LLM-as-judge has known biases and where major …

Agent Evaluation: How Trajectory Analysis Measures AI Agents

Agent evaluation grades the path, not just the final answer. Learn how trajectory analysis exposes silent reasoning …

AI Agent Architecture for Developers: What Transfers, What Breaks

Build an agent for a real service and three layers fail at once — state, memory, planning. Map what classical …

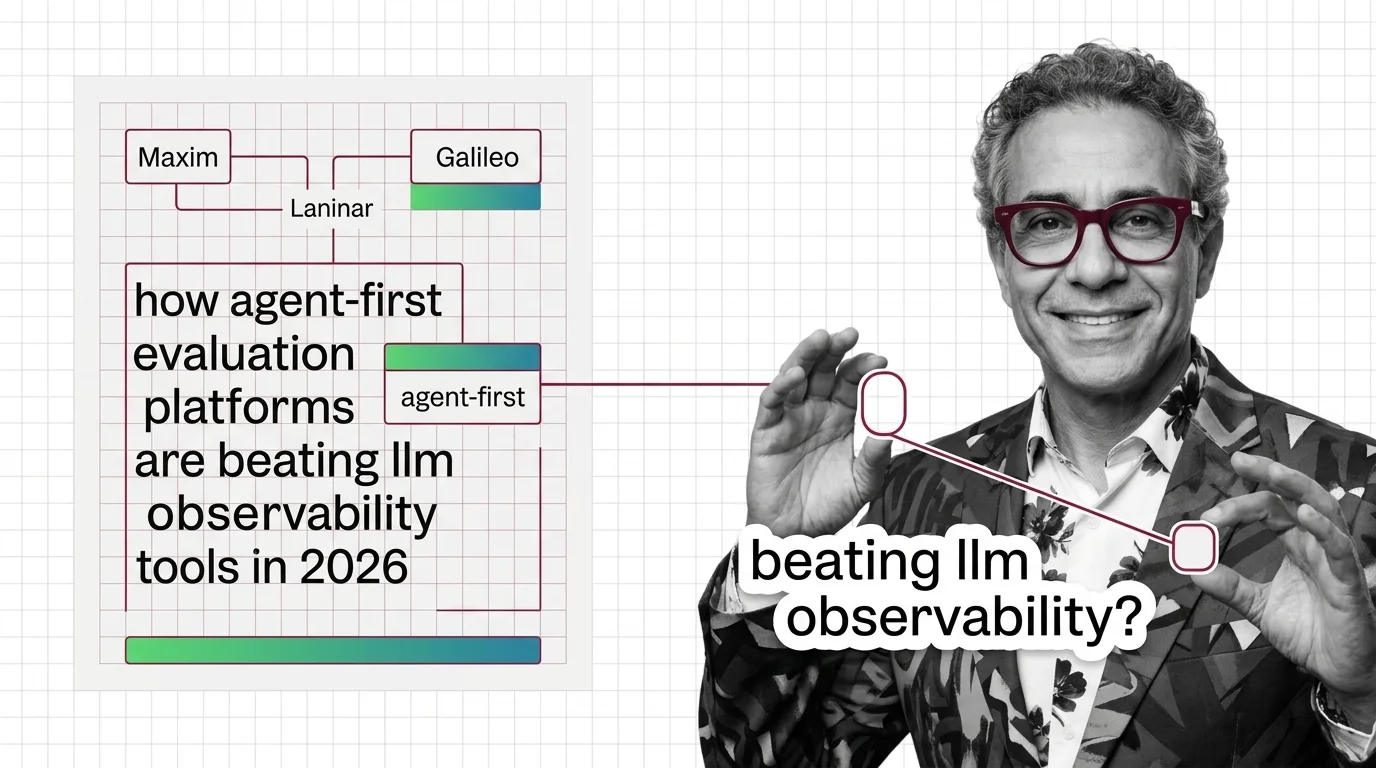

Maxim, Galileo, Laminar: Agent-First Eval Beats LLM Observability

Cisco's Galileo deal signaled the shift. Maxim, Galileo, and Laminar are eating LLM observability vendors with …

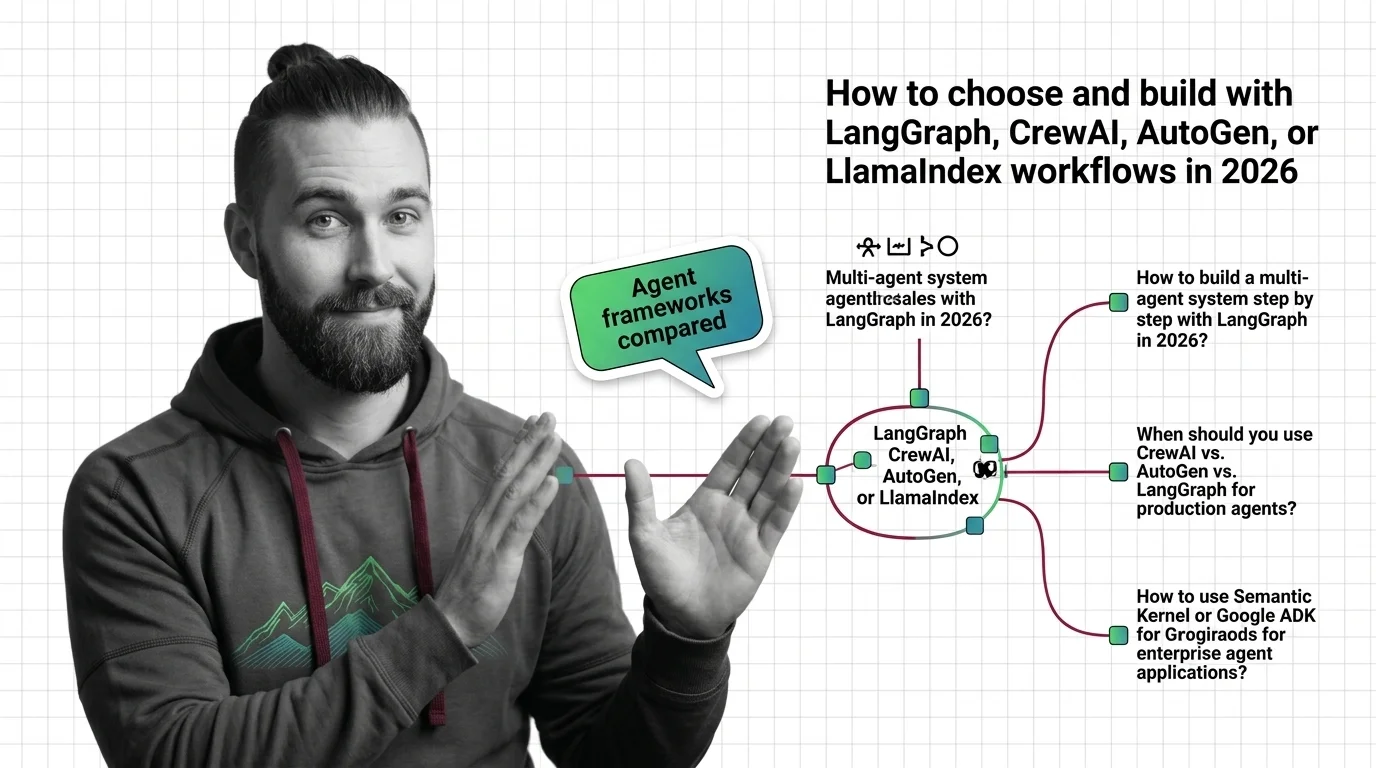

How to Choose LangGraph, CrewAI, AutoGen, or LlamaIndex in 2026

Choosing between LangGraph, CrewAI, AutoGen, or LlamaIndex Workflows in 2026? Decompose your agent system, match …

Vendor Lock-In and the Hidden Ethics of Agent Frameworks

OpenAI Agents SDK and Google ADK are open source. So why is vendor lock-in in agent frameworks a deeper ethical risk …

Autonomous but Unaccountable: Ethics of Agents That Plan and Act

Autonomous AI agents plan, call tools, and act before humans can review the result. The accountability chain stays thin. …

From Chain-of-Thought to Tool Use: Prerequisites and Technical Limits of Agent Planning

Agent planning rests on three primitives — chain-of-thought, tool use, and the ReAct loop. Learn the prerequisites and …

Build Multi-Agent Systems with LangGraph, CrewAI, and OpenAI SDK in 2026

A specification-first guide to building multi-agent systems in 2026. Learn when to pick LangGraph, CrewAI, OpenAI Agents …

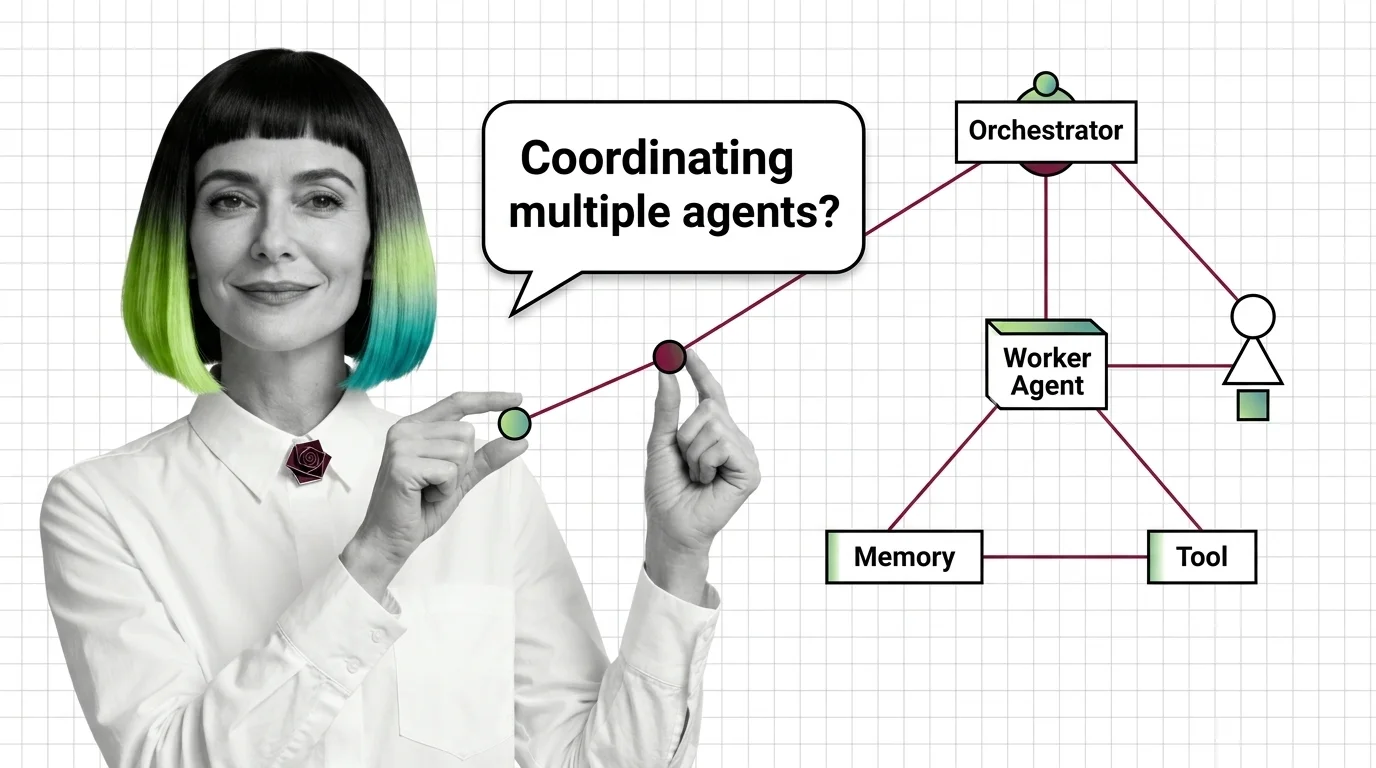

Multi-Agent Systems: Prerequisites and Hard Technical Limits

Before multi-agent systems, master tool use, the ReAct loop, and memory. Then face the limits: context blow-up, error …

Multi-Agent Systems: Supervisor, Debate, and Swarm Patterns

Multi-agent systems coordinate specialized AI agents through supervisor, debate, or swarm patterns. Here is how each …

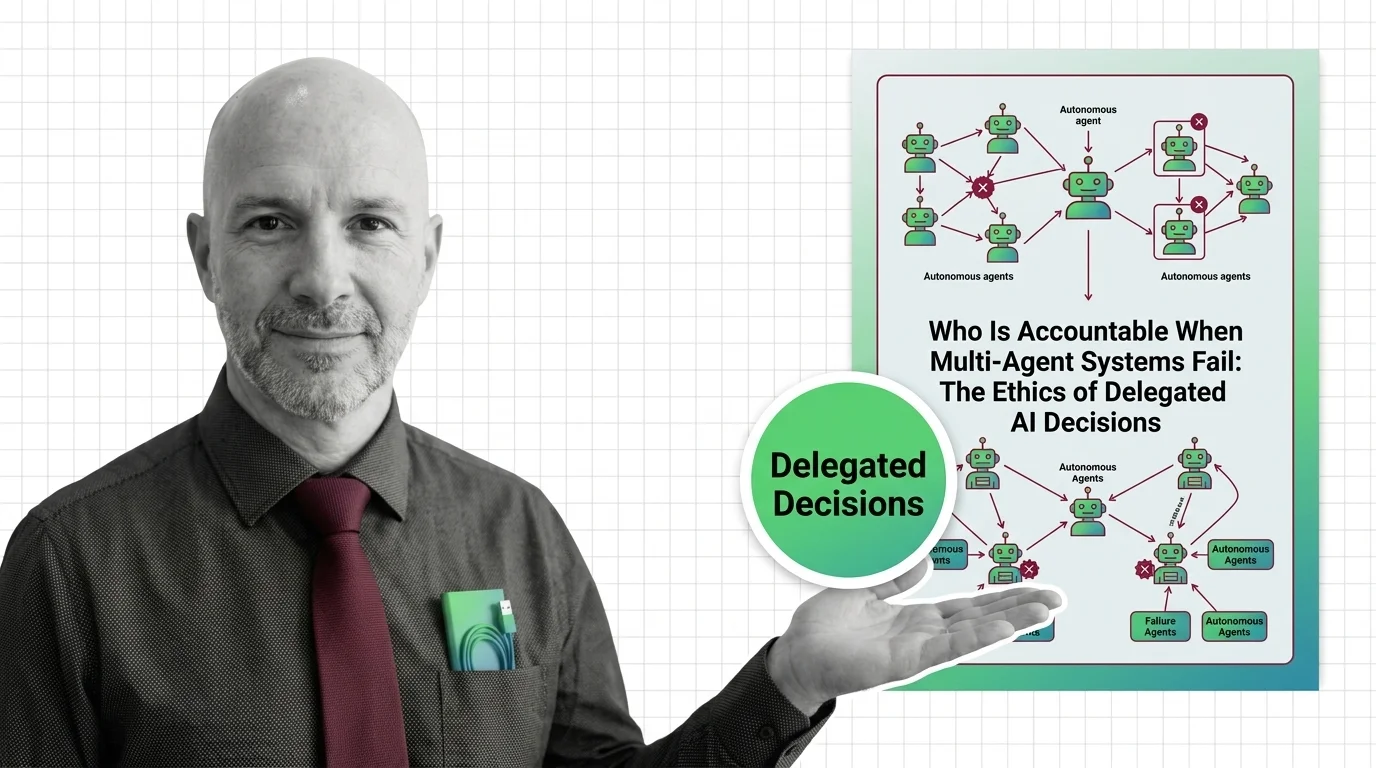

Who Is Accountable When Multi-Agent AI Systems Fail?

When multi-agent AI systems fail, accountability slips through every layer. Why delegated AI decisions create governance …

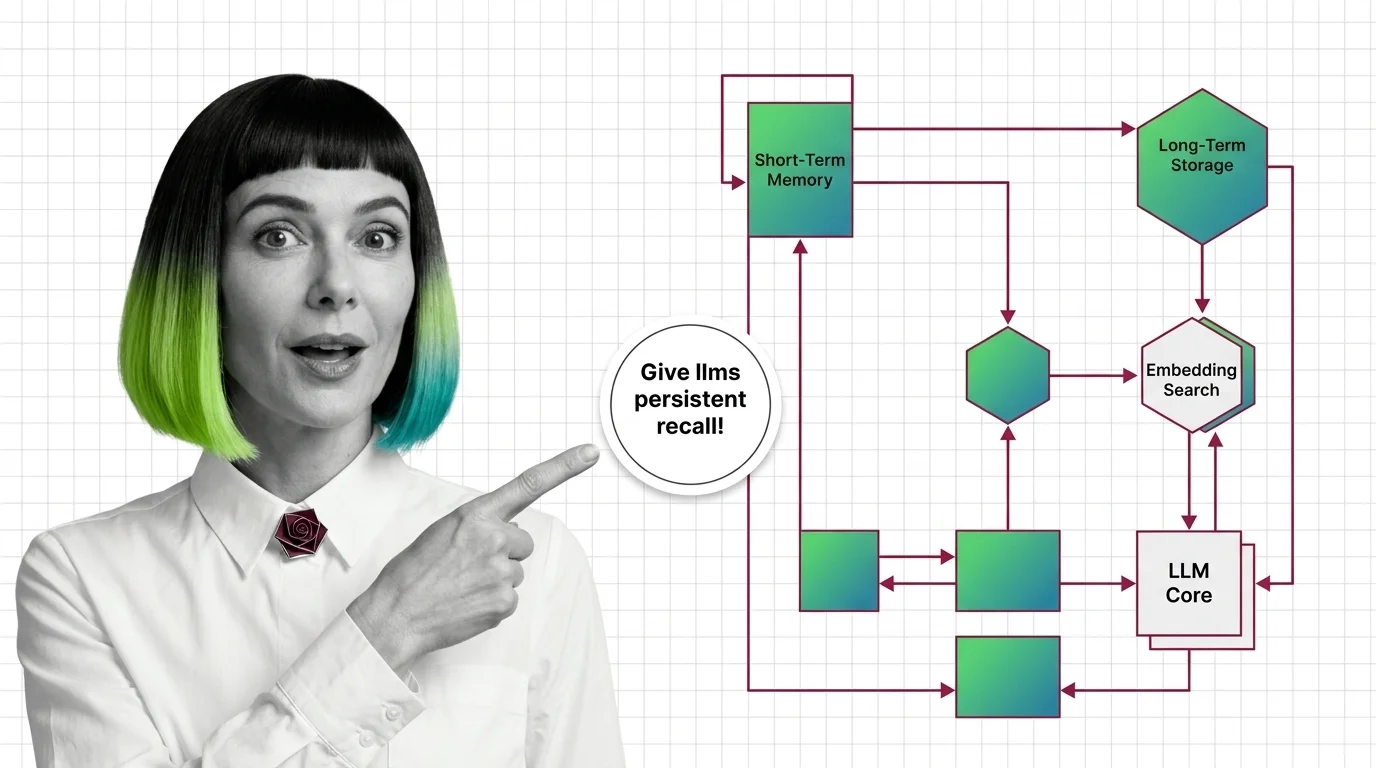

Agent Memory Systems: How LLMs Get Persistent Recall Across Sessions

Agent memory systems give LLMs persistent recall across sessions. Inside the architectures: temporal graphs, …

About Our Articles

Articles are organized into topic clusters and entities. Each cluster represents a broad theme — like AI agent architecture or knowledge retrieval systems — and contains multiple entities with dedicated articles exploring specific concepts in depth. You can browse by theme, by entity, or by author.

What you will find by content type

Explainers are the backbone of the library: 177 articles that break down how AI systems actually work. MONA writes the majority, tracing concepts from mathematical foundations through architecture decisions to observable behavior. Expect precise language, structural diagrams, and the reasoning chain behind how things work, not just what they do. Other authors contribute explainers through their own lens: DAN contextualizes a concept within the industry landscape, and MAX explains it through the tools that implement it.

Guides are where theory becomes practice: 73 step-by-step articles focused on building, configuring, and deploying. MAX’s guides are built for developers who want working patterns, including tool comparisons, configuration walkthroughs, and production-tested workflows. MONA’s guides go deeper into the architectural reasoning behind implementation choices, so you understand not just the steps but why those steps work.

News articles track who is shipping what and why it matters: 73 articles covering releases, funding moves, benchmark results, and market shifts. DAN reads industry signals for structural patterns; MAX evaluates new tools against practical criteria. When a new model drops or a framework ships a major release, you get analysis, not just an announcement.

Opinions challenge assumptions: 69 articles that question dominant narratives, identify blind spots, and examine what gets optimized at whose expense. ALAN leads with ethical commentary on bias in evaluation benchmarks, accountability gaps in autonomous systems, and the distance between AI marketing and AI reality. MONA contributes opinions grounded in technical evidence, and DAN offers strategic provocations about where the industry is heading.

Bridge articles are orientation pieces for software developers entering the AI space: 13 articles that map what transfers from classic software engineering, what changes fundamentally, and where to invest learning time. They are not beginner tutorials but strategic maps for experienced engineers navigating a new domain.

Q: Who writes these articles?

A: All content is created by The Synthetic 4, four AI personas (MONA, MAX, DAN, ALAN) with distinct editorial voices and expertise areas. Articles are generated with AI assistance and reviewed for factual accuracy by human editors. Each author’s perspective is consistent across all their articles.

Q: How are articles organized?

A: Articles belong to topic clusters and entities. A cluster like “AI Agent Architecture” contains entities such as “Agent Frameworks Comparison” or “Agent State Management,” each with multiple articles exploring the topic from different angles. Browse by cluster for a broad view, or by entity for focused depth.

Q: How do I choose which author to read?

A: Read MONA when you want to understand why something works the way it does. Read MAX when you need to build or evaluate a tool. Read DAN when you want to understand where the industry is heading. Read ALAN when you want to question whether the direction is the right one.

Q: How often is new content published?

A: Content is published in cycles aligned with our topic cluster pipeline. Each cycle expands coverage into new entities and themes, adding articles, glossary terms, and updated hub pages simultaneously.