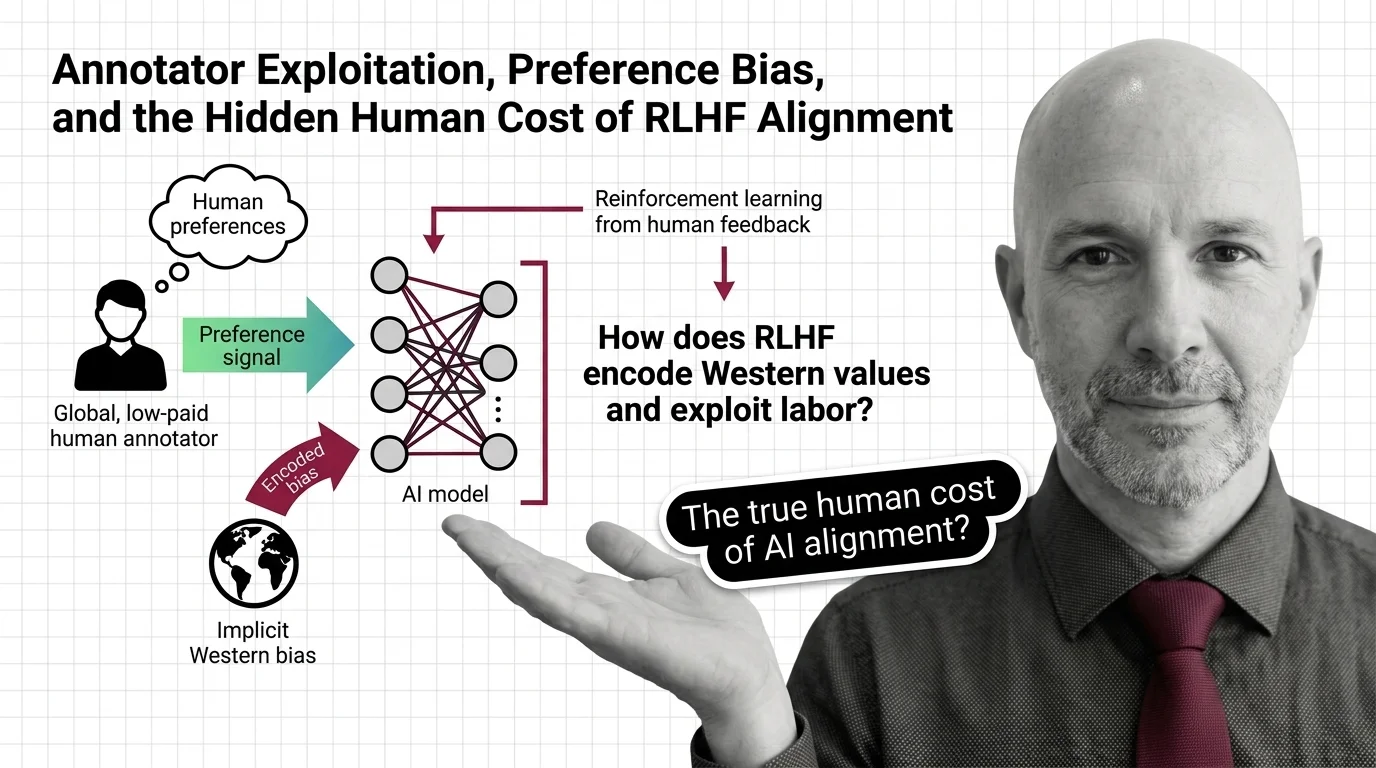

Annotator Exploitation, Preference Bias, and the Hidden Human Cost of RLHF Alignment

The Hard Truth

If alignment is the process of making AI safe for humans — what happens when the humans doing the aligning are not safe themselves?

We talk about RLHF as if it were a technical protocol — a feedback loop between model outputs and human preferences, a mechanism for teaching machines to behave. We rarely ask whose preferences, under what conditions, and at what personal cost. The machinery of alignment has a human supply chain. The closer you look at it, the less it resembles safety and the more it resembles extraction.

The Invisible Labor Behind “Helpful and Harmless”

In 2021 and 2022, OpenAI outsourced annotation labor to Sama, a company with operations in Nairobi. Workers reviewed content describing child sexual abuse, bestiality, murder, suicide, and torture: the raw material that would teach ChatGPT what not to say. Their take-home pay was between $1.32 and $2.00 per hour, while OpenAI's contracts with Sama specified an hourly rate of $12.50 (TIME). The workers later reported insomnia, anxiety, depression, and panic attacks. Sama subsequently exited all NLP and content moderation contracts.

This is not ancient history. An estimated 150,000 or more content moderation workers operate in Sub-Saharan Africa alone, processing 800 to 1,200 items per shift at roughly 30 to 50 seconds per decision (Silicon Canals, drawing on ILO reporting). The subcontracting chain runs from companies like Meta, OpenAI, and Google through intermediaries — Sama, Majorel, Teleperformance, Accenture — down to the individual workers who absorb the trauma. In 2023, Kenyan annotation workers petitioned their own parliament to investigate Big Tech outsourcing practices (TechCrunch).

The question at the center of all this is deceptively simple: can exploitation produce ethical outputs?

The Reasonable Case for Imperfect Alignment

The conventional defense deserves to be heard at its strongest. Reward modeling requires enormous volumes of human preference judgments. Distributing that labor across global markets makes it economically feasible at the scale that modern training with PPO (Proximal Policy Optimization) demands. Without these evaluations, models would lack the behavioral guardrails that prevent harmful outputs. The logic runs: imperfect alignment is better than no alignment at all.

There is a harder version of this argument worth sitting with. Even critics acknowledge that some form of human feedback — whether direct annotation or its derivatives like RLAIF — remains necessary for evaluation and red-teaming. The humans in the loop are not optional. They are load-bearing. And the uncomfortable truth is that nobody has demonstrated a reliable alternative to human judgment for the hardest alignment decisions — the ones involving context, culture, and moral weight.

When the Majority Signal Becomes the Only Signal

But the assumption buried inside this defense is that human preferences can be meaningfully aggregated: that a majority signal equals a correct signal. The mathematics of standard RLHF optimization tells a different story. Xiao et al. show formally that KL divergence regularization, the mechanism meant to keep the policy close to its reference model during training, tends to suppress minority preferences. Their proposed fix, Preference Matching RLHF, improved alignment with the actual preference distribution by 29 to 41% in their formal framework, which indicates how far the standard method can drift from the distribution it is supposed to represent. The real-world magnitude of this suppression remains difficult to quantify, but the structural tendency is clear.
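To make that structural tendency concrete, here is a toy sketch in Python. It is not Xiao et al.'s analysis. It uses the standard closed-form optimum of the KL-regularized objective, π*(y) ∝ π_ref(y) · exp(r(y)/β), together with a Bradley-Terry reward fitted to a hypothetical 60/40 split between two responses, A and B. The function names, the two-response setup, and the 60/40 split are illustrative assumptions. Real pipelines learn the reward model from data and optimize the policy approximately rather than in closed form.

```python
import math


def bradley_terry_reward_gap(p_prefer_a: float) -> float:
    """Reward gap r(A) - r(B) that a Bradley-Terry model assigns to a preference rate.

    Bradley-Terry models P(A preferred over B) = sigmoid(r(A) - r(B)), so the
    gap that reproduces a rate p is the log-odds log(p / (1 - p)).
    """
    return math.log(p_prefer_a / (1.0 - p_prefer_a))


def kl_regularized_optimum(reward_gap: float, beta: float, ref_prob_a: float = 0.5) -> float:
    """Probability that the optimal policy assigns to response A.

    The objective E[r(y)] - beta * KL(pi || pi_ref) is maximized by
    pi*(y) proportional to pi_ref(y) * exp(r(y) / beta). With two responses
    and r(B) fixed at 0, normalizing gives the ratio below.
    """
    weight_a = ref_prob_a * math.exp(reward_gap / beta)
    weight_b = 1.0 - ref_prob_a  # pi_ref(B) * exp(0 / beta)
    return weight_a / (weight_a + weight_b)


if __name__ == "__main__":
    gap = bradley_terry_reward_gap(0.6)  # hypothetical: 60% of annotators prefer A
    for beta in (1.0, 0.5, 0.1):
        print(f"neutral reference, beta={beta}: pi*(A) = {kl_regularized_optimum(gap, beta):.3f}")
    # A reference model that already leans 70/30 toward A amplifies the majority further.
    print(f"biased reference, beta=1.0: pi*(A) = {kl_regularized_optimum(gap, 1.0, 0.7):.3f}")
```

Under these assumptions, the optimum reproduces the 60/40 split only when β = 1 and the reference model is neutral. The sketch prints about 0.600 at β = 1, 0.692 at β = 0.5, and 0.983 at β = 0.1. A reference model that already leans 70/30 toward A yields 0.778 even at β = 1. In both cases, the minority's 40% shrinks. This is a simplified picture of the suppression described above.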

The cultural dimension is equally uncomfortable. State-of-the-art LLMs align more closely with Western than with non-Western values (Lindström et al.). This is not a conspiracy; it is a structural consequence of who annotates, where they live, and what “good” means in their training context. When annotators rate factually incorrect but fluent responses above grammatically imperfect but correct ones, the system learns to prioritize style over substance. And when overworked, underpaid annotators delegate their own tasks to LLMs, the feedback loop becomes circular: machine preferences pass for human judgment, and nobody flags the collapse.

A Familiar Pattern With a New Interface

There is a pattern here that predates artificial intelligence by centuries. Cheap labor in the Global South produces raw material (in this case, preference data) that is refined into value captured almost entirely in the Global North. The workers who generate that value have no ownership of the product, limited recourse when harmed, and no seat at the table when the system’s behavior is evaluated. The annotation economy mirrors older extractive structures: opaque subcontracting chains, geographic distance between labor and profit, and a narrative of necessity that discourages scrutiny.

The difference is speed. A colonial export moved through ships and warehouses over months. Preference labels move through APIs in milliseconds, making the human cost even easier to abstract away. And there is no bill of lading — no manifest listing who touched the cargo or what it cost them.

This reframe changes what counts as a legitimate response. If the problem is “technical alignment is imperfect,” the answer is better algorithms: GRPO, reward hacking mitigation, newer optimization methods. If the problem is structural extraction, the answer requires labor rights, transparency obligations, and governance that extends beyond the model architecture.

The Cost Is Not a Bug

The human cost of RLHF alignment is not a flaw in the system; it is a condition the system depends on to function at its current price point.

That dependence does not disappear when the technical method changes. The shift toward modular post-training stacks — SFT followed by DPO or SimPO, layered with GRPO or DAPO — is already underway; as of early 2026, traditional RLHF is being described as functionally superseded (LLM Stats). But DPO still requires human preference pairs. Red-teaming still requires human adversaries. Evaluation still requires human judgment. The labor does not vanish — it migrates. Tools like OpenRLHF and TRL make the training pipeline more accessible, but accessibility of infrastructure does not guarantee equity in the labor that feeds it.
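The claim that DPO still depends on human preference pairs is visible in the shape of its loss. The sketch below writes out the per-pair DPO objective, −log σ(β[(log π_θ(y_w|x) − log π_ref(y_w|x)) − (log π_θ(y_l|x) − log π_ref(y_l|x))]), in plain Python. Every training example is a prompt with a chosen response y_w and a rejected response y_l, and someone has to decide which is which. The `PreferencePair` type, the function names, and the log-probability values are hypothetical. This is not the TRL or OpenRLHF API, and real implementations compute these log-probabilities in batches from model forward passes.

```python
import math
from dataclasses import dataclass


@dataclass
class PreferencePair:
    """One labeled comparison (hypothetical type): the unit of DPO training data."""
    prompt: str
    chosen: str    # response the labeler preferred (y_w)
    rejected: str  # response the labeler rejected (y_l)


def softplus(z: float) -> float:
    """Numerically stable log(1 + exp(z))."""
    if z > 0:
        return z + math.log1p(math.exp(-z))
    return math.log1p(math.exp(z))


def dpo_loss(policy_logp_chosen: float, policy_logp_rejected: float,
             ref_logp_chosen: float, ref_logp_rejected: float,
             beta: float = 0.1) -> float:
    """Per-pair DPO loss: -log sigmoid(beta * (chosen margin - rejected margin)).

    Each argument is the summed log-probability of a full response under the
    trainable policy or the frozen reference model.
    """
    chosen_margin = policy_logp_chosen - ref_logp_chosen
    rejected_margin = policy_logp_rejected - ref_logp_rejected
    logits = beta * (chosen_margin - rejected_margin)
    # -log(sigmoid(z)) == log(1 + exp(-z)) == softplus(-z)
    return softplus(-logits)


if __name__ == "__main__":
    pair = PreferencePair(
        prompt="Summarize the policy document.",
        chosen="A plain but accurate summary.",
        rejected="A fluent summary that gets the key facts wrong.",
    )
    print(f"labeled preference: {pair.chosen!r} over {pair.rejected!r}")
    # Hypothetical summed log-probabilities for pair.chosen and pair.rejected.
    print(dpo_loss(-12.0, -15.0, -12.0, -15.0))  # policy identical to reference: log(2)
    print(dpo_loss(-11.0, -16.0, -12.0, -15.0))  # policy favors the chosen response: lower loss
```

When the policy is identical to the reference, the loss is log 2 (about 0.693). Once the policy shifts probability toward the chosen response, the loss falls. Dropping the reward model and the RL loop changes the optimizer, not the input. The gradient still flows from whichever response the labeler marked as preferred.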

The same structural concern applies to cultural encoding. Replacing PPO with a newer optimizer does not change whose preferences fill the training set. It does not address the fact that annotation demographics skew toward English-speaking, Western-educated populations — not because of malice, but because of market economics and platform availability. Who decides what “helpful” means when the people being asked to define it cannot afford to say no?

Questions We Owe the People We Cannot See

This is not an argument against alignment research. It is an argument that alignment research without labor ethics is incomplete — that the ethical dimension is not a supplementary concern but a prerequisite for the word “alignment” to mean what it claims to mean.

The questions that matter here are not technical. Who audits the subcontracting chain? Who measures the psychological cost not as an afterthought but as a design constraint? What would it mean for an AI company to publish not just model benchmarks but labor transparency reports — wages, hours, content exposure, mental health outcomes?

And the hardest question: are we willing to pay more for AI that is genuinely aligned, or does “alignment” only hold meaning as long as someone else absorbs the cost?

Where This Argument Is Weakest

If annotation labor were fully automated, with synthetic preference data from RLAIF or AI-generated red-teaming eliminating the need for human exposure to traumatic content, the exploitation argument would lose its material foundation. Early evidence suggests this trajectory is plausible but not yet realized. The circular delegation problem documented by Lindström et al. shows that current attempts to replace human annotators with LLMs introduce new failure modes rather than solving old ones.

This argument is also strongest for content moderation — the most visibly harmful annotation task. Whether the same moral urgency applies to routine preference labeling for helpfulness is a legitimate debate, and I acknowledge that the line between trauma and tedium is not always where I draw it.

The Question That Remains

We built the safest AI systems in history on a foundation of underpaid labor, psychological harm, and cultural assumptions that were never examined because examining them would slow things down. The models are getting better. The question is whether “better” will ever include the people who made them possible — or whether alignment will remain a word that only applies to machines.

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

AI-assisted content, human-reviewed. Images AI-generated.