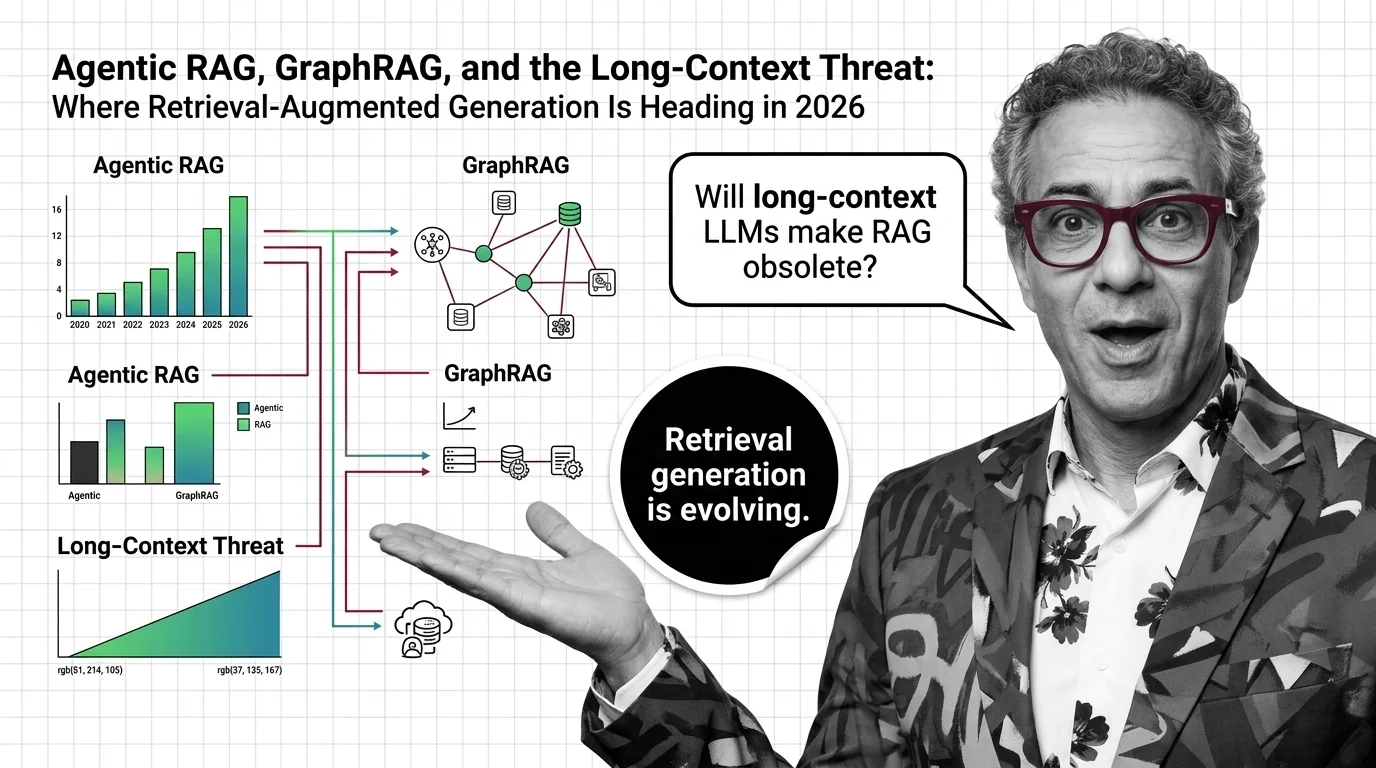

Agentic RAG, GraphRAG, and the Long-Context Threat: Where Retrieval-Augmented Generation Is Heading in 2026

TL;DR

- The shift: RAG isn’t dying — it’s being absorbed into agent toolchains while two new architectures (graph-augmented and long-context) carve out their own lanes.

- Why it matters: Teams treating RAG as a single technology are about to discover they’re actually buying three different ones.

- What’s next: Production stacks will run hybrid by default — agentic orchestration on top, vector and graph retrieval underneath, long-context for the small slice where it earns its cost.

Headlines started declaring retrieval-augmented generation dead in January 2026. They didn’t stop. They’re wrong, but the version still alive doesn’t look much like the 2023 version. Three architectural shifts are landing at the same time, and most teams still think they’re picking one.

The Three-Front Reshape

Thesis: RAG didn’t get replaced. It got split into three jobs running on three different stacks — and the engineering team that doesn’t see the split will overpay for inference, under-deliver on accuracy, or both.

The first front is Agentic RAG. The canonical survey from Singh et al. (2025) defines it across four axes — agent cardinality, control structure, autonomy, and knowledge. It’s not a research curiosity anymore. It’s the orchestration layer most production stacks are converging on.

The second front is graph-augmented retrieval. Microsoft’s GraphRAG hit GA in late 2024, and the LazyGraphRAG variant entered public preview inside Microsoft Discovery and Azure Local in 2026.

The third front is long context. Claude Opus 4.7 shipped a 1M-token window with 128k max output in April 2026 (Anthropic Docs). Gemini 2.5 Pro is at 1M; the marketed 2M expansion was announced as forthcoming but had not shipped as of April 2026. The pitch: load the corpus, skip retrieval, let the model figure it out.

Three architectures, one quarter, one direction. The architecture wars are over. The market just zoned itself.

Three Architectures, One Direction

Watch what each move actually proves.

LazyGraphRAG cut indexing cost to roughly 0.1% of full GraphRAG — a 1000x reduction landing it at vector-RAG cost levels — with query cost over 700x lower than GraphRAG global search at comparable quality (Microsoft Research). Translation: the previous reason to skip graph retrieval just evaporated.
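To make the ratios concrete, here is a minimal arithmetic sketch. The baseline dollar figures are hypothetical placeholders, not numbers from Microsoft Research; only the 1000x and 700x factors come from the claim above.

```python
def reduced_cost(baseline: float, reduction_factor: float) -> float:
    """Cost after applying an N-times reduction to a baseline cost."""
    return baseline / reduction_factor


# Hypothetical baselines, chosen only to make the arithmetic easy to read.
full_graphrag_indexing_usd = 1_000.00
graphrag_global_query_usd = 0.70

lazy_indexing_usd = reduced_cost(full_graphrag_indexing_usd, 1_000)
lazy_query_usd = reduced_cost(graphrag_global_query_usd, 700)

# Share of the original indexing bill that remains (0.001, i.e. 0.1%).
indexing_share = lazy_indexing_usd / full_graphrag_indexing_usd

print(f"indexing: ${lazy_indexing_usd:.2f} ({indexing_share:.1%} of baseline)")
print(f"query:    ${lazy_query_usd:.4f}")
```

Since the source says "over 700x", the query figure here is an upper bound on the reduced cost under these assumed baselines.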

On the long-context side, Anthropic Docs confirm 1M tokens went GA on Opus 4.6 and Sonnet 4.6 at standard pricing on March 13, 2026. Articles still quoting premium pricing for requests over 200K tokens are now stale. Long context stopped being a research demo and became a pricing line.

But long context didn’t kill retrieval. Liu et al. (2023) measured roughly a 30% accuracy drop when relevant information sits mid-context versus at the start or end. Stuff a million tokens in front of the model and you pay for tokens it doesn’t read accurately.
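One commonly used mitigation for this position sensitivity is to reorder retrieved chunks so the strongest ones sit at the edges of the prompt. The sketch below is an assumption about how a team might do that; it is not a method prescribed by Liu et al. or by any vendor named here, and `reorder_for_edges` is a hypothetical helper name.

```python
def reorder_for_edges(chunks_by_relevance: list[str]) -> list[str]:
    """Place the most relevant chunks at the start and end of the context.

    Input is sorted most-relevant first. Chunks are dealt alternately to the
    front and the back, so the least relevant ones end up in the middle.
    """
    front: list[str] = []
    back: list[str] = []
    for i, chunk in enumerate(chunks_by_relevance):
        if i % 2 == 0:
            front.append(chunk)
        else:
            back.append(chunk)
    # Reverse the back half so the second-best chunk is the very last one.
    return front + back[::-1]


ranked = ["a", "b", "c", "d", "e"]  # "a" is the most relevant
ordered = reorder_for_edges(ranked)
print(ordered)
```

With five chunks ranked `a` through `e`, the best chunk lands first, the second-best lands last, and the weakest sits in the middle. Reordering changes where the model looks; it does not reduce the token bill.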

And the demand side hasn’t budged. Menlo Ventures’ 2024 enterprise survey put RAG at 51% of techniques in production, up from 31% in 2023 — and the 2025 report still places it as dominant after prompt design.

Each architecture proves the same point: retrieval is being unbundled, not retired.

Who Moves Up

Vector infrastructure that rides the agent wave wins. Pinecone Serverless is in production at Notion, Gong, and CS Disco — RAG over billions of documents at managed-service economics (Pinecone Blog).

Rerankers won the precision argument. Cohere Rerank 3.5 — at $2.00 per 1,000 searches, with a 4,096-token context and 100+ languages (Cohere Pricing) — ships natively in Pinecone, AWS Marketplace, Oracle Generative AI, and Azure Marketplace. Hybrid retrieval plus reranking is the default production pattern, not the optimization step.
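As an illustration of the hybrid-plus-rerank pattern, the sketch below fuses a vector ranking and a lexical ranking with reciprocal rank fusion, then applies a rerank step. Everything here is a toy under stated assumptions: the document IDs and texts are made up, `overlap_rerank` is a word-overlap stand-in rather than a call to Cohere Rerank or any real API, and `k=60` is the conventional RRF constant rather than a tuned value. The cost helper uses the $2.00 per 1,000 searches list price quoted above.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked ID lists; each hit contributes 1 / (k + rank)."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


def overlap_rerank(query: str, docs: dict[str, str],
                   candidates: list[str], top_n: int) -> list[str]:
    """Toy stand-in for a hosted reranker: score by shared query terms."""
    q_terms = set(query.lower().split())

    def score(doc_id: str) -> int:
        return len(q_terms & set(docs[doc_id].lower().split()))

    return sorted(candidates, key=lambda d: (-score(d), d))[:top_n]


def rerank_cost_usd(searches: int, price_per_1k: float = 2.00) -> float:
    """Rerank spend at a per-1,000-searches list price."""
    return searches / 1_000 * price_per_1k


docs = {  # hypothetical corpus
    "d1": "graph retrieval for relationship heavy domains",
    "d2": "vector search over billions of documents",
    "d3": "reranking improves precision of vector search",
}
vector_hits = ["d2", "d3", "d1"]   # pretend output of a vector index
lexical_hits = ["d3", "d1"]        # pretend output of a keyword index

fused = reciprocal_rank_fusion([vector_hits, lexical_hits])
top = overlap_rerank("precision of vector search", docs, fused, top_n=2)
print(fused, top, rerank_cost_usd(250_000))
```

Fusion is cheap and runs over every candidate; the reranker is the metered step, so it only sees the fused shortlist. That split is one reason the pattern works as a default rather than an optimization.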

Graph infrastructure entered the conversation. LazyGraphRAG’s productization inside Microsoft Discovery and Azure Local makes graph retrieval an option a procurement team can buy, not a research project.

Frameworks that pivoted to agents kept their seat. LangChain and LlamaIndex now run side-by-side in 2026 production stacks — orchestration plus retrieval-first query engines — with DSPy rising as the compile-time prompt-optimization challenger.

The platforms that admitted retrieval is now infrastructure are pricing accordingly. The ones still pitching it as a feature are about to find out what that costs.

Who Gets Left Behind

Single-stack purists are the first casualty. Teams that bet on “vector-only” or “long-context-only” are running half a system in a market that went three-front.

The chunking-strategy-as-product crowd (vendors selling chunkers, embedders, or pipelines without retrieval evaluation) loses pricing power once the orchestration layer commoditizes the components underneath.

Anyone still quoting Gemini 2.5 Pro at 2M tokens, or Claude pricing as premium above 200K, is shipping outdated specs into procurement decks. That’s a credibility tax.

And the “RAG is dead” camp got the headline right and the architecture wrong. Retrieval moved inside the agent toolchain. The need didn’t disappear. The bill got itemized.

Security & compatibility notes:

- LangChain Serialization Injection (CVE-2025-68664): Patched in langchain-core 1.2.5 / 0.3.81 with breaking changes to load()/loads() defaults: allowlist enforcement, secrets_from_env=False, Jinja2 templates blocked. Pin or migrate before upgrading.

- LangChain Path Traversal (CVE-2026-34070): Legacy load_prompt()/load_prompt_from_config() deprecated; removal scheduled for langchain-core 2.0.0.
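A quick way to audit an environment against the patched versions listed above is a version-floor check. This is a minimal sketch, not an official LangChain tool: `is_patched` and `PATCHED_FLOORS` are hypothetical names, and the rule that any release line other than 0.3.x and 1.x is reported as unverified is an assumption, since the note only names those two floors.

```python
import re

# Patched floors taken from the note above: 1.2.5 on the 1.x line,
# 0.3.81 on the 0.3.x line. Other lines are not covered by the note.
PATCHED_FLOORS = {1: (1, 2, 5), 0: (0, 3, 81)}


def is_patched(version: str) -> bool:
    """Return True if a langchain-core version meets a known patched floor."""
    match = re.match(r"(\d+)\.(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    parsed = tuple(int(g) for g in match.groups())
    floor = PATCHED_FLOORS.get(parsed[0])
    if floor is None:
        return False  # unknown major line: treat as unverified (assumption)
    if parsed[0] == 0 and parsed[1] != 3:
        return False  # only the 0.3.x line is named for the 0.x series
    return parsed >= floor


for candidate in ("1.2.5", "1.2.4", "0.3.81", "0.3.80"):
    print(candidate, is_patched(candidate))
```

In a live environment the installed version could be read with `importlib.metadata.version("langchain-core")` and passed to the same function. Because the patch changes load()/loads() defaults, a passing check still calls for testing any code that deserializes LangChain objects.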

What Happens Next

Base case (most likely): Production stacks run hybrid by default through 2026. Agentic orchestration on top. Hybrid search (vector + lexical + reranker) underneath for most queries. Graph retrieval for relationship-heavy domains. Long context for the narrow slice where the corpus fits and recall matters more than precision. Signal to watch: Major framework releases shipping native graph and long-context tools as first-class citizens, not extensions. Timeline: Through Q4 2026.
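The base-case stack can be read as a routing decision made by the orchestration layer. The sketch below is one possible reading under stated assumptions: the `Query` fields, the priority order (graph first, then long context, then hybrid search), and the 1M-token budget are illustrative choices, not a specification from any framework.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Route(Enum):
    HYBRID_SEARCH = "hybrid_search"    # vector + lexical + reranker
    GRAPH = "graph_retrieval"          # relationship-heavy domains
    LONG_CONTEXT = "long_context"      # corpus fits in the window


@dataclass
class Query:
    text: str
    relationship_heavy: bool = False        # hypothetical upstream classifier flag
    corpus_tokens: Optional[int] = None     # size of the candidate corpus, if known
    recall_over_precision: bool = False     # caller's stated preference


# Assumed budget, matching the 1M-token windows discussed in this article.
CONTEXT_BUDGET_TOKENS = 1_000_000


def choose_route(query: Query) -> Route:
    """Pick a retrieval path; hybrid search is the default for most queries."""
    if query.relationship_heavy:
        return Route.GRAPH
    fits = (query.corpus_tokens is not None
            and query.corpus_tokens <= CONTEXT_BUDGET_TOKENS)
    if fits and query.recall_over_precision:
        return Route.LONG_CONTEXT
    return Route.HYBRID_SEARCH


print(choose_route(Query("who reports to whom?", relationship_heavy=True)))
print(choose_route(Query("summarize the contract", corpus_tokens=400_000,
                         recall_over_precision=True)))
print(choose_route(Query("refund policy for EU orders")))
```

In practice the flags would come from a classifier or from the agent's own planning step, and the thresholds would be tuned against cost and accuracy measurements rather than hard-coded.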

Bull case: LazyGraphRAG-class cost curves drop graph retrieval to cheaper-than-vector for relationship-dense corpora, and Cohere Rerank-class precision becomes table stakes. Enterprise retrieval spend compounds rather than fragments. Signal: Procurement RFPs explicitly listing “graph retrieval” alongside vector search. Timeline: Late 2026 into 2027.

Bear case: Long-context pricing keeps falling and “lost in the middle” gets engineered around. Mid-tier RAG vendors lose deal velocity to “just stuff the context window” defaults from frontier model providers. Signal: Frontier labs shipping production-grade in-context retrieval primitives. Timeline: 2027.

Frequently Asked Questions

Q: How are companies deploying RAG at scale in 2026? A: Hybrid stacks are the default: agentic orchestration on top, vector retrieval plus reranking underneath, graph retrieval where relationships dominate. Pinecone Serverless runs in production at Notion, Gong, and CS Disco, serving RAG over billions of documents at managed-service economics, with Cohere Rerank 3.5 available natively as the precision layer.

Q: What is the future of RAG in 2026 with agentic RAG and GraphRAG emerging? A: RAG is unbundling. Agentic RAG owns orchestration. GraphRAG owns relationship-heavy retrieval. Vector RAG owns commodity semantic search. The question is no longer “which one?” but “which mix, for which query class?” Single-architecture bets are losing.

Q: Will long-context LLMs make RAG obsolete in 2026? A: No. Claude Opus 4.7 and Gemini 2.5 Pro both reach 1M tokens, but Liu et al. (2023) showed a roughly 30% accuracy drop when relevant information sits mid-context. Long context complements retrieval. It doesn’t replace it.

The Bottom Line

RAG isn’t dead — it’s been split. The teams that win in 2026 run all three architectures and route each query to the cheapest, most accurate path. You’re either architecting for the unbundle or you’re paying for last year’s stack.

Disclaimer

This article discusses financial topics for educational purposes only. It does not constitute financial advice. Consult a qualified financial advisor before making investment decisions.

Stay ahead, Dan.

AI-assisted content, human-reviewed. Images AI-generated.