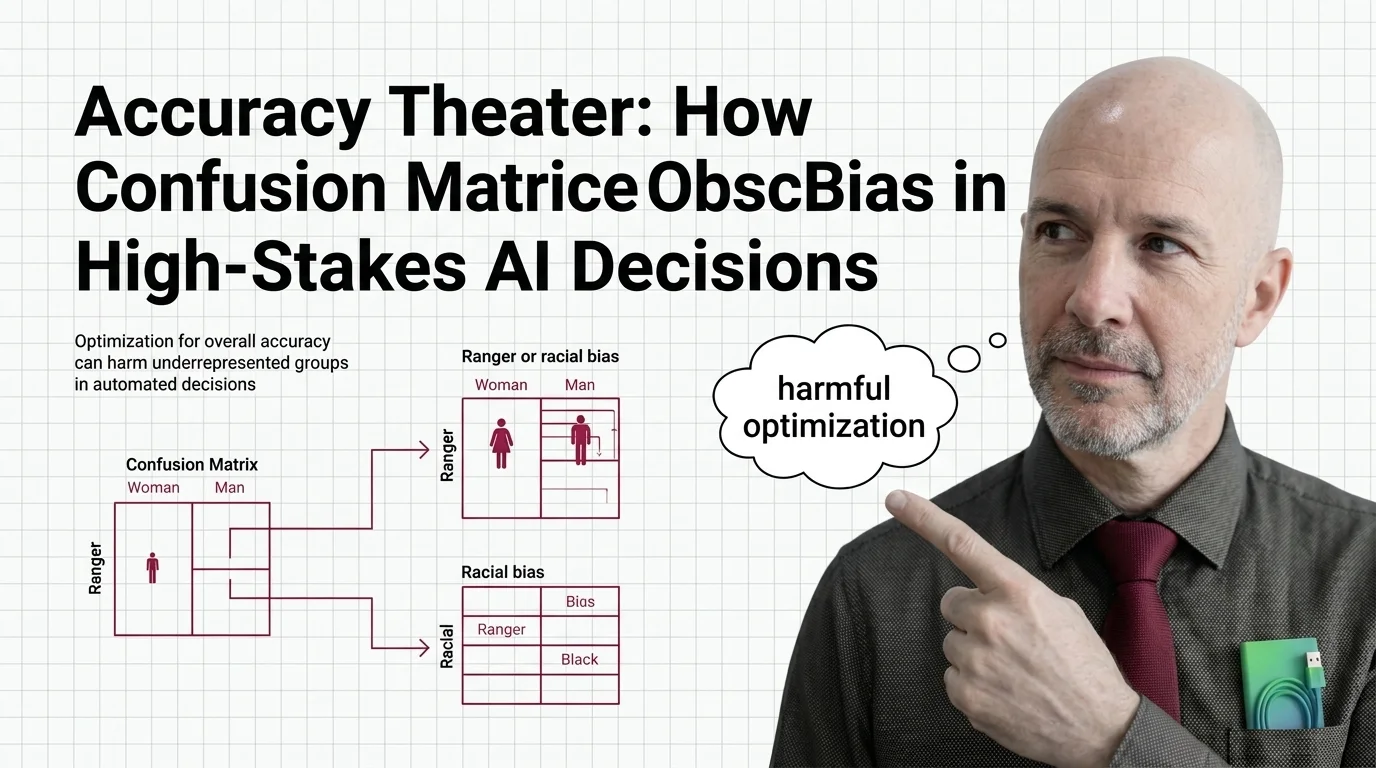

Accuracy Theater: How Confusion Matrices Obscure Bias in High-Stakes AI Decisions

The Hard Truth

A model scores 61% accuracy. The team presents the number. Management approves deployment. At no point does anyone ask: 61% accurate for whom — and who pays for the other 39%?

We have been taught to read the confusion matrix as a diagnostic tool: a grid that lays bare how a model succeeds and fails. True positives here, false negatives there. The math is clean, the categories are tidy, and the overall accuracy sits at the top like a verdict. But a verdict that averages across populations is not a diagnosis. It is, at best, an abstraction, and at worst, an alibi.

The Seduction of the Single Score

The appeal of aggregate accuracy is understandable. A binary classification system makes predictions, and the confusion matrix tallies the outcomes, correct and incorrect, across two axes. It is one of the oldest tools in model evaluation, and it works exactly as designed. The problem is not that the matrix lies. The problem is that it tells a truth so compressed it loses the part that matters most.

When you report that a criminal risk assessment system achieves 61% accuracy across all crime types, and that only 20% of the people it predicted would commit violent crimes actually went on to do so (ProPublica), you have communicated something real. But you have also communicated almost nothing about who the system harms. The aggregate number was defensible. The disaggregated reality was not.

What the Grid Reveals — and What It Was Never Built to Show

The confusion matrix was not designed to deceive. It was designed for a world where the question was simpler: does the model work? And for many applications, that question is sufficient. A spam filter with high overall accuracy is doing its job. Nobody is harmed in a meaningful way when a legitimate email lands in the junk folder for a few hours.

But the moment you apply the same evaluation framework to a system that determines pretrial detention, medical triage, or loan eligibility, the moral weight of each cell in the matrix changes entirely. A false positive in spam filtering is an inconvenience. A false positive in criminal sentencing is a human being locked in a cage. Specificity, precision, recall, and F1 score can capture this asymmetry, at least in theory. In practice, most deployment reviews never break them down by demographic group. The tools exist. The habit of using them does not.
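For readers who want the definitions pinned down, here is a minimal sketch of how those metrics fall out of the four cells of the grid. The class name `ConfusionMatrix` and the counts are hypothetical, chosen only to make the arithmetic easy to follow; they do not come from any system discussed in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionMatrix:
    """Cell counts for a binary classifier (illustrative helper, not a library API)."""
    tp: int  # true positives
    fp: int  # false positives
    tn: int  # true negatives
    fn: int  # false negatives

    @staticmethod
    def _ratio(num: int, den: int) -> float:
        # Return NaN instead of raising when a denominator is empty.
        return num / den if den else float("nan")

    @property
    def accuracy(self) -> float:
        return self._ratio(self.tp + self.tn, self.tp + self.fp + self.tn + self.fn)

    @property
    def precision(self) -> float:
        # Of everyone flagged positive, how many really were positive?
        return self._ratio(self.tp, self.tp + self.fp)

    @property
    def recall(self) -> float:
        # Of everyone truly positive, how many were flagged?
        return self._ratio(self.tp, self.tp + self.fn)

    @property
    def specificity(self) -> float:
        # Of everyone truly negative, how many were correctly cleared?
        return self._ratio(self.tn, self.tn + self.fp)

    @property
    def false_positive_rate(self) -> float:
        # Complement of specificity: truly negative people flagged anyway.
        return self._ratio(self.fp, self.fp + self.tn)

    @property
    def f1(self) -> float:
        # Harmonic mean of precision and recall, written in terms of counts.
        return self._ratio(2 * self.tp, 2 * self.tp + self.fp + self.fn)


# Hypothetical counts for illustration only.
cm = ConfusionMatrix(tp=30, fp=20, tn=40, fn=10)
print(f"accuracy={cm.accuracy:.2f} precision={cm.precision:.2f} "
      f"recall={cm.recall:.2f} specificity={cm.specificity:.2f} f1={cm.f1:.2f}")
```

Note that accuracy weighs every cell equally, while the false positive rate looks only at people who were truly negative. That is why a respectable accuracy can coexist with a painful error rate for the people wrongly flagged.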

The Missing Row in Every Matrix

Here is the assumption that nobody examines: errors are distributed randomly across populations. They are not.

ProPublica’s analysis of the COMPAS recidivism algorithm found that Black defendants faced a false positive rate of 44.9% — nearly double the 23.5% rate for white defendants. The system was almost twice as likely to incorrectly label a Black person as high-risk. Conversely, white defendants had a higher false negative rate — 47.7% versus 28.0% — meaning the system was more generous in its mistakes toward those it already privileged (ProPublica). The methodology has been disputed — Barenstein argued in 2019 that ProPublica’s data processing contained a cutoff error that inflated recidivism rates — but the directional finding has been replicated across enough contexts to demand serious attention rather than comfortable dismissal.
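A minimal sketch of what disaggregation looks like in practice, using synthetic records for two hypothetical groups ("A" and "B") rather than the COMPAS data. The function `rates_by_group` and the helper `expand` are illustrative names, not part of any library, and the counts are invented to show how identical accuracy can hide opposite error profiles.

```python
from collections import defaultdict


def rates_by_group(records):
    """Compute accuracy, FPR and FNR per group.

    records: iterable of (group, y_true, y_pred) tuples with 0/1 labels,
    where 1 means "positive" (for example, flagged high-risk).
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for group, y_true, y_pred in records:
        if y_true == 1:
            key = "tp" if y_pred == 1 else "fn"
        else:
            key = "fp" if y_pred == 1 else "tn"
        counts[group][key] += 1

    def ratio(num, den):
        # NaN when a group has no members in the relevant class.
        return num / den if den else float("nan")

    result = {}
    for group, c in counts.items():
        total = sum(c.values())
        result[group] = {
            "accuracy": ratio(c["tp"] + c["tn"], total),
            "fpr": ratio(c["fp"], c["fp"] + c["tn"]),
            "fnr": ratio(c["fn"], c["fn"] + c["tp"]),
        }
    return result


def expand(group, tp, fp, tn, fn):
    """Turn cell counts into individual (group, y_true, y_pred) records."""
    return ([(group, 1, 1)] * tp + [(group, 0, 1)] * fp
            + [(group, 0, 0)] * tn + [(group, 1, 0)] * fn)


# Synthetic data: two hypothetical groups, 100 people each.
records = (expand("A", tp=40, fp=30, tn=20, fn=10)
           + expand("B", tp=20, fp=10, tn=40, fn=30))

by_group = rates_by_group(records)
for group, metrics in sorted(by_group.items()):
    print(group, {name: round(value, 2) for name, value in metrics.items()})
```

In this synthetic data both groups see the same accuracy, so the aggregate number looks even-handed. The false positive and false negative rates are mirror images of each other, which is the kind of difference a single score cannot show.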

The pattern extends beyond criminal justice. Joy Buolamwini and Timnit Gebru’s Gender Shades study, published in 2018, found that commercial gender classification systems exhibited error rates of up to 34.7% for darker-skinned females compared to 0.8% for lighter-skinned males (Buolamwini & Gebru). NIST’s evaluation of 189 facial recognition algorithms found false positive rates 10 to 100 times higher for Asian and African-American faces than for white faces (NIST). These are not edge cases. These are the default behaviors of systems optimized for aggregate performance on datasets that reflect — and then amplify — existing disparities.

Companies have since responded to these findings. IBM exited facial recognition partly in response to the Gender Shades work. But the evaluation methodology that enabled the disparity in the first place remains standard practice in most organizations.

A Theorem That Should Have Changed Everything

In 2016, Kleinberg, Mullainathan, and Raghavan proved something that the machine learning community has been remarkably slow to absorb. Their impossibility theorem demonstrates that a risk score cannot simultaneously satisfy calibration, balance for the positive class, and balance for the negative class across groups, except when prediction is perfect or the groups have equal base rates (Kleinberg et al.). When base rates differ, as when one population has a different disease prevalence or a different arrest rate, the math is unambiguous: perfect fairness across all dimensions is structurally impossible.

This is not a technical limitation awaiting a clever fix. It is a constraint. Every confusion matrix for a system operating across populations with different base rates is already making a choice — which dimension of fairness to sacrifice. The question is whether that choice is made consciously, openly, with the consent of the people it affects — or whether it is buried inside an aggregate accuracy score that nobody disaggregates.
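The constraint can be made concrete with a closely related identity from Chouldechova (2017). This is not the Kleinberg et al. proof itself, and it uses equal positive predictive value (PPV) as a stand-in for calibration. For any binary classifier, with base rate p, the false positive rate is pinned down by FPR = p/(1-p) × (1-PPV)/PPV × (1-FNR). The sketch below, with hypothetical numbers and an illustrative function name, shows what that forces when two groups share PPV and FNR but differ in base rate.

```python
def implied_fpr(base_rate: float, ppv: float, fnr: float) -> float:
    """False positive rate forced by the identity
    FPR = p / (1 - p) * (1 - PPV) / PPV * (1 - FNR).

    Illustrative helper; assumes 0 < base_rate < 1 and 0 < ppv <= 1.
    """
    return base_rate / (1 - base_rate) * (1 - ppv) / ppv * (1 - fnr)


# Hypothetical scenario: both groups get the same PPV and the same FNR,
# but the underlying base rates differ.
ppv, fnr = 0.6, 0.3
fpr_low = implied_fpr(base_rate=0.3, ppv=ppv, fnr=fnr)
fpr_high = implied_fpr(base_rate=0.5, ppv=ppv, fnr=fnr)

print(f"base rate 0.3 -> implied FPR {fpr_low:.3f}")
print(f"base rate 0.5 -> implied FPR {fpr_high:.3f}")
```

Holding two of the three quantities equal across groups leaves the third no room to match: the group with the higher base rate ends up with the higher false positive rate. Which quantity is allowed to diverge is the choice the paragraph above describes.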

When we discuss benchmark contamination and the other ways evaluation metrics can mislead, we tend to focus on data leakage or test-set overfitting. But the most consequential form of contaminated evaluation happens in plain sight: reporting a single number when different populations experience fundamentally different systems.

Accuracy as Institutional Permission

Thesis: Aggregate accuracy functions not as a measure of model quality but as institutional permission to avoid examining whom the system harms.

This is the uncomfortable conclusion. A confusion matrix is not a neutral diagnostic when the costs of each error type fall unevenly across populations. It becomes a document that allows organizations to claim “the model works” without specifying for whom, at whose expense, and who authorized the trade-off. That is not evaluation. That is theater — a performance of rigor that obscures the moral decision underneath.

The regulatory world is beginning to catch up. The EU AI Act introduces bias testing obligations for high-risk AI systems effective August 2, 2026, though the deadline may extend to December 2027 if harmonized standards are not available in time (EU AI Act). NIST’s SP 1270 identifies three categories of bias (computational, systemic, and human), and open-source frameworks like IBM’s AI Fairness 360 and Fairlearn, which originated at Microsoft, now offer mature tooling for disaggregated assessment. The infrastructure for doing this properly exists. What is missing is the institutional will to make disaggregated evaluation the default rather than an afterthought.

The Obligations We Keep Deferring

None of this means we should abandon confusion matrices. The grid itself is not the enemy. What needs to change is the cultural practice of treating a single aggregate number as sufficient evidence for deploying systems that affect human lives.

The question for any team building a high-stakes classifier is not “what is our accuracy?” It is: what is the false positive rate for the most vulnerable population in our dataset? What is the false negative rate? Who decided which trade-off was acceptable — and did the people affected by that trade-off have any voice in the decision?

These are not technical questions. They are governance questions dressed in technical clothing. And the longer we treat them as the former, the longer the people absorbing the cost of our aggregate metrics remain invisible in the very tool that was supposed to make the system’s behavior transparent.

Where This Argument Is Weakest

If disaggregated evaluation became universal, with every confusion matrix broken down by every relevant demographic dimension, the impossibility theorem guarantees that some trade-off would still remain. No evaluation framework eliminates the trade-off itself. It can only make it visible. A critic could reasonably argue that visibility without a decision-making framework produces paralysis rather than justice. That is a real risk. Transparency alone does not guarantee fairness. But opacity guarantees that unfairness will go unexamined, and the history of algorithmic harm suggests that leaving it unexamined is the more dangerous condition.

The Question That Remains

We built the confusion matrix to measure whether machines get the answer right. We never built the tool that measures who pays when they get it wrong. Until disaggregated evaluation is the expectation rather than the exception, the question stands: who is deciding which populations absorb the cost of our convenient averages?

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

Ethically, Alan.

AI-assisted content, human-reviewed. Images AI-generated.