AI Transition Explained — From Developer to AI Engineer

Navigating the shift from traditional development to AI — without losing your identity or starting from zero. Every topic explored from four angles: scientific foundations, practical tools, market trends, and ethical impact.

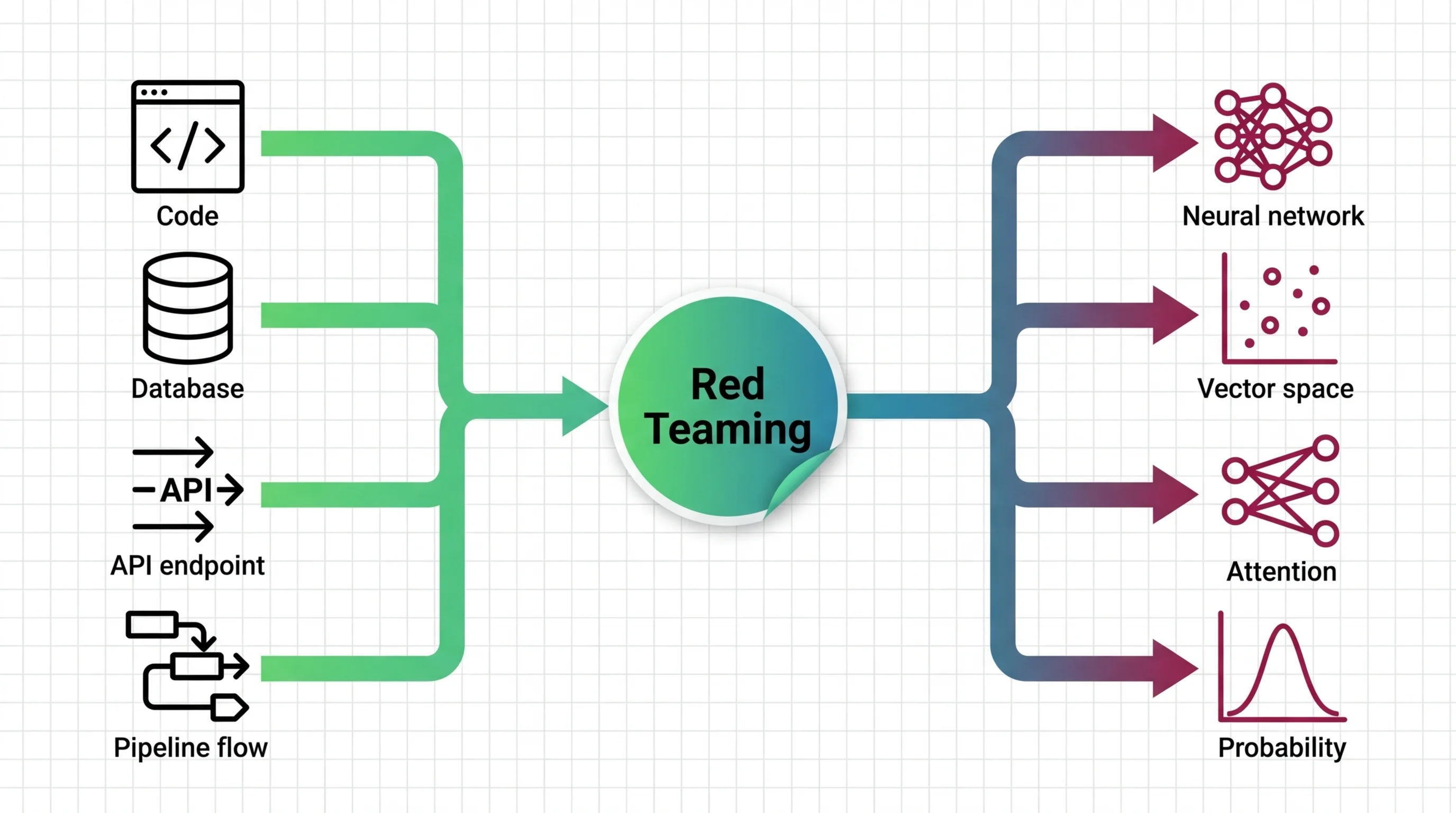

AI Transition: What Developers Actually Need to Know

The “AI engineer” title sounds impressive. The reality is often integration, product decisions, and production engineering. We explain what it actually takes.

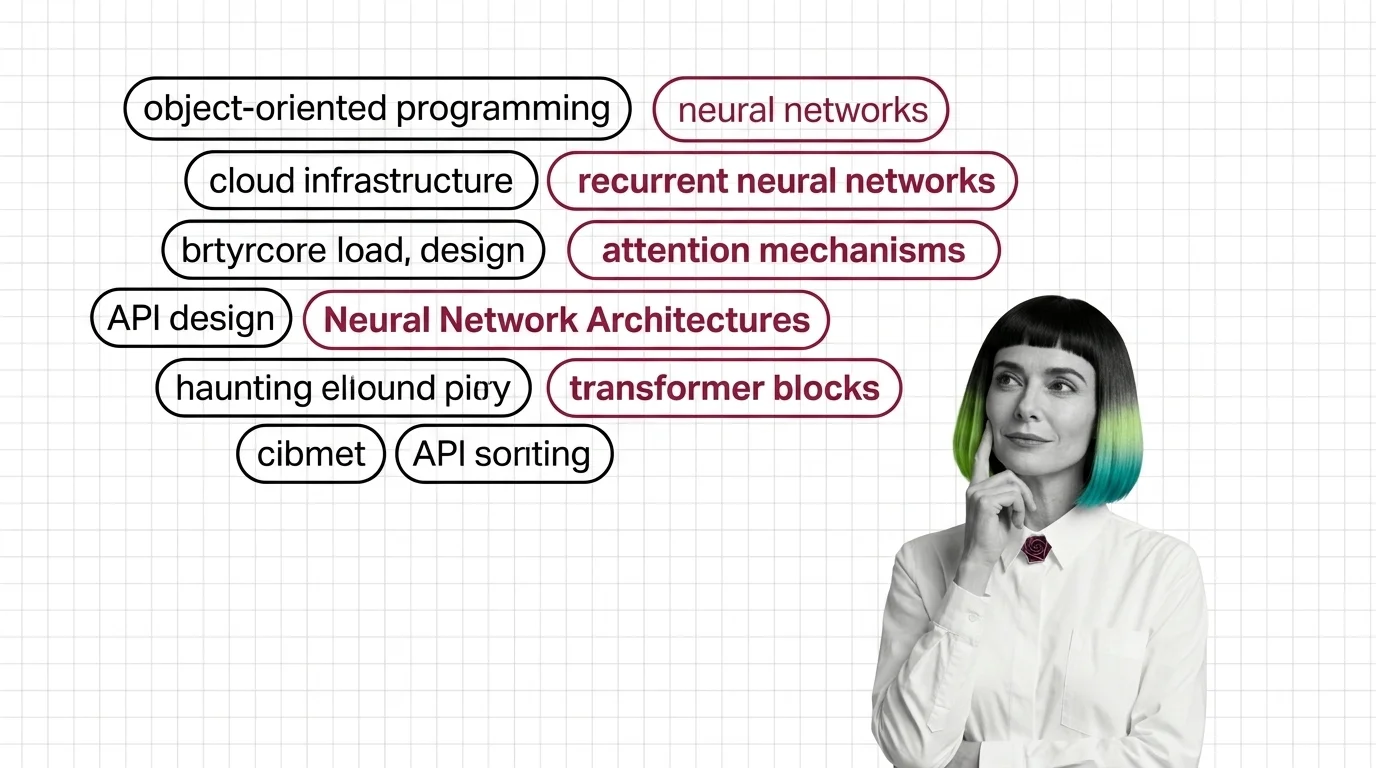

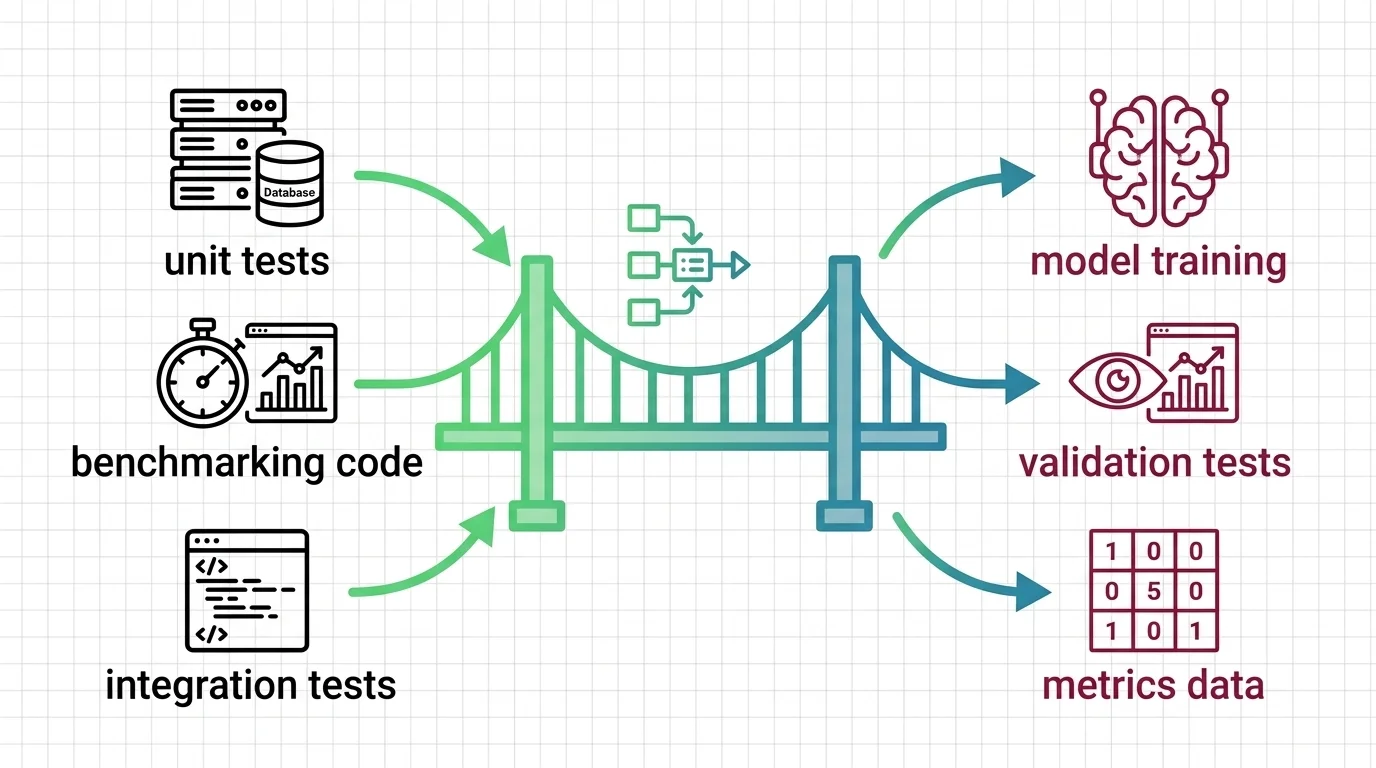

Neural Network Architectures for Developers: What Maps and What Breaks

Which software instincts transfer to CNNs, RNNs, and transformers, and where cost and debugging assumptions break.

Latest AI Insights

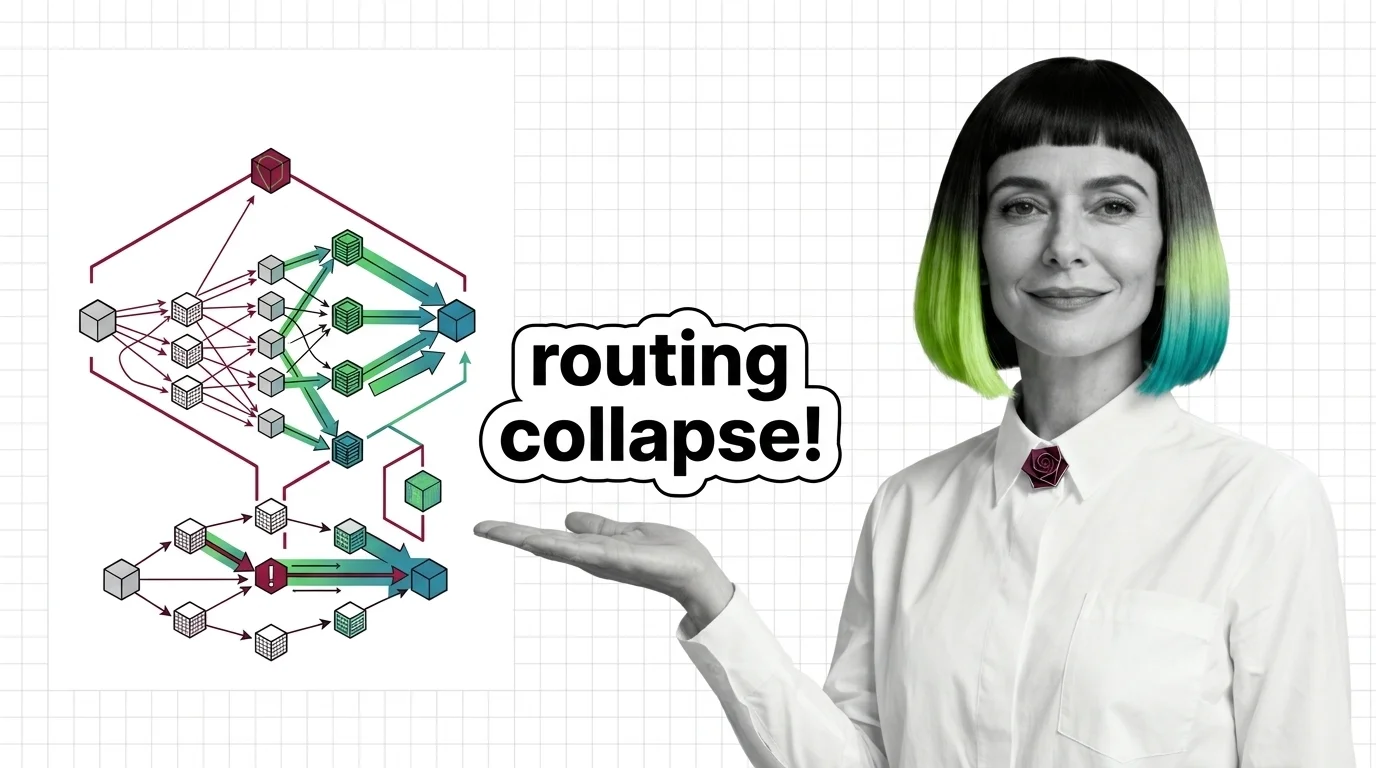

Routing Collapse, Load Balancing Failures, and the Hard Engineering Limits of Mixture of Experts

MoE models promise scale at fractional compute cost. Understand routing collapse, memory tradeoffs, and communication overhead — the hard engineering limits.

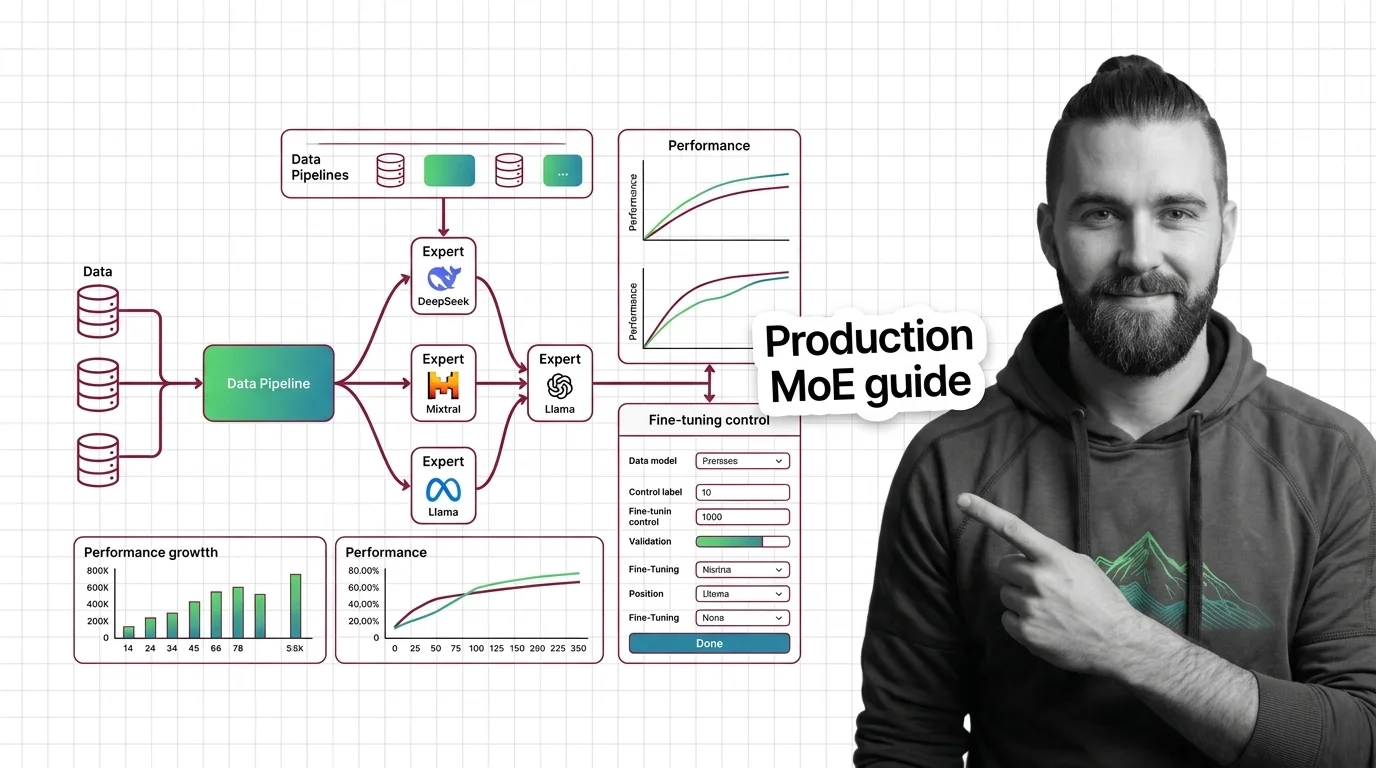

How to Run and Fine-Tune Open-Weight MoE Models with DeepSeek-V3, Mixtral, and Llama 4 in 2026

Deploy and fine-tune open-weight MoE models like DeepSeek-V3, Mixtral 8x22B, and Llama 4. Hardware mapping, expert …

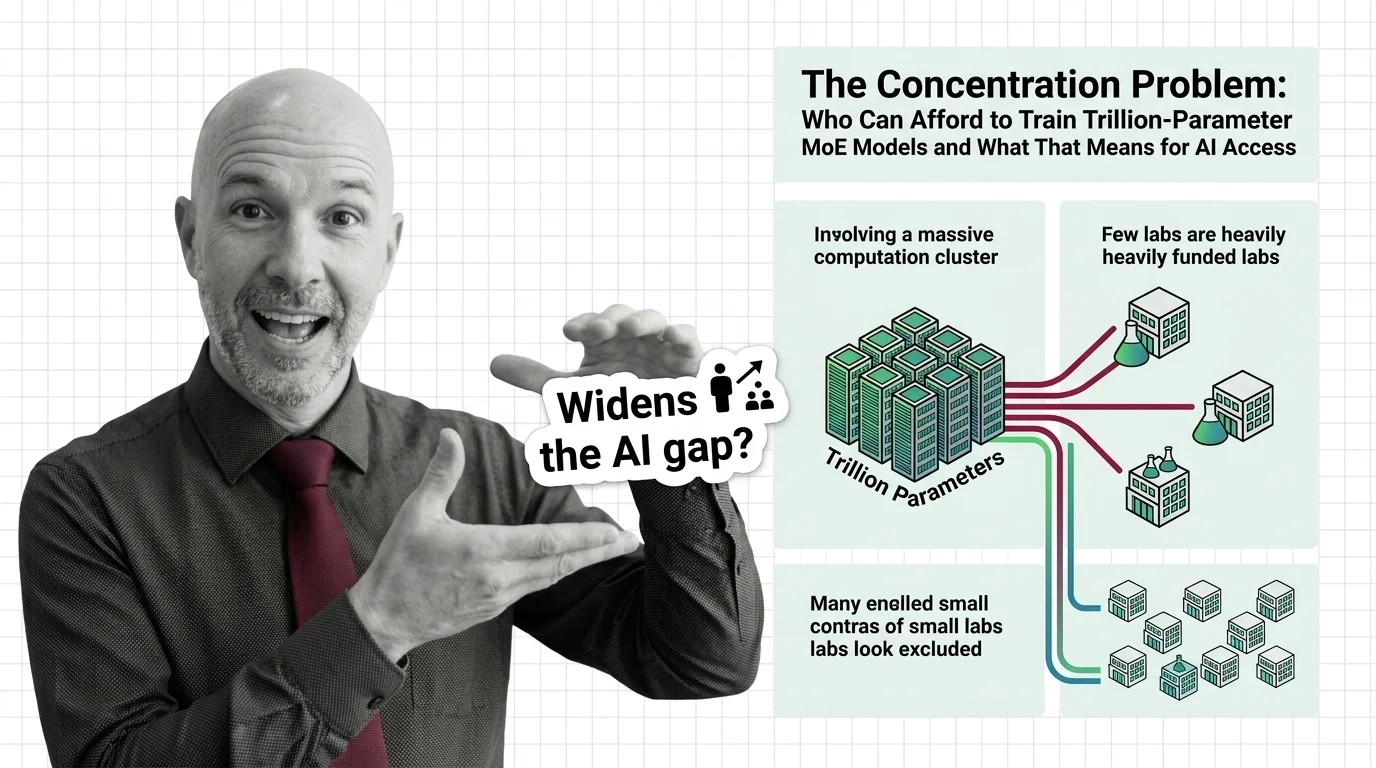

The Concentration Problem: Who Can Afford to Train Trillion-Parameter MoE Models and What That Means for AI Access

Trillion-parameter MoE models promise efficiency through sparse activation. But training costs keep rising, and the …

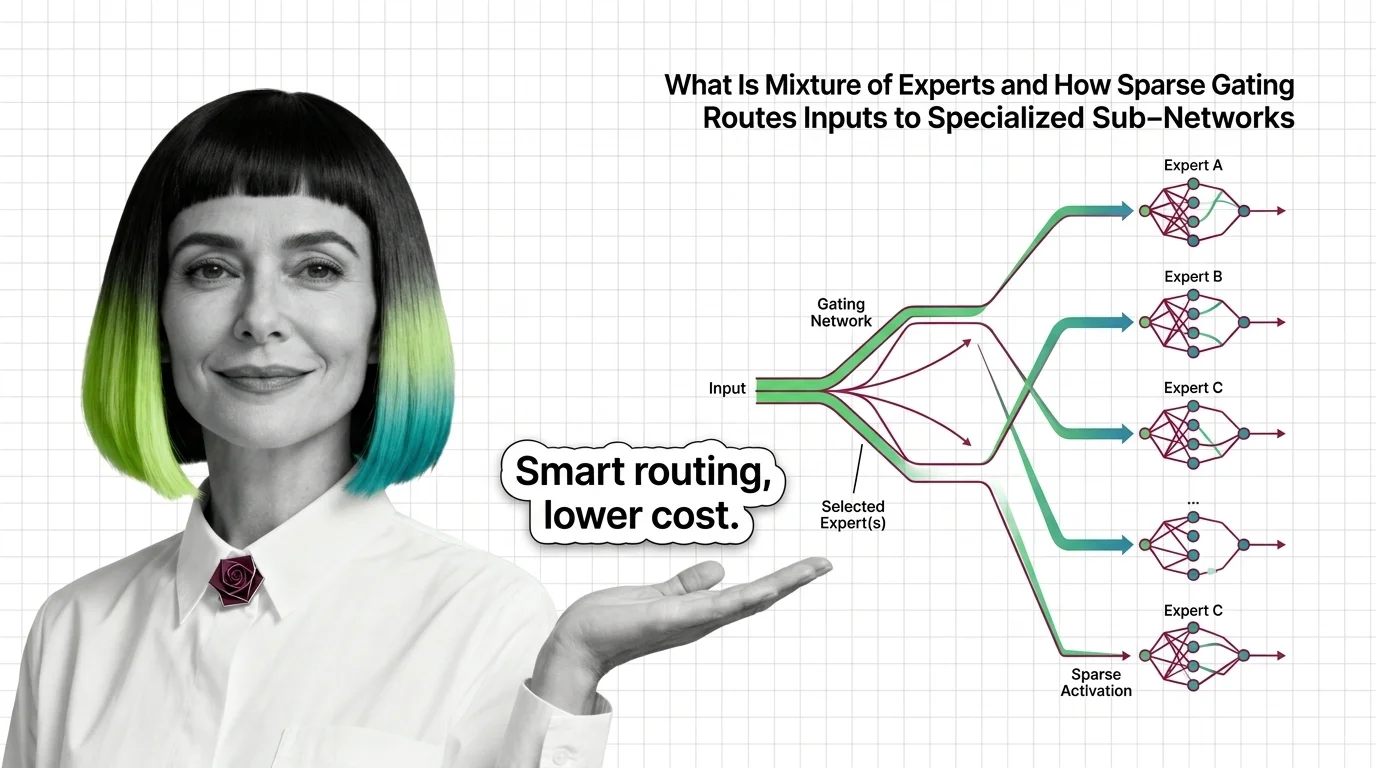

What Is Mixture of Experts and How Sparse Gating Routes Inputs to Specialized Sub-Networks

Mixture of experts activates only selected sub-networks per token. Learn how sparse gating makes trillion-parameter …

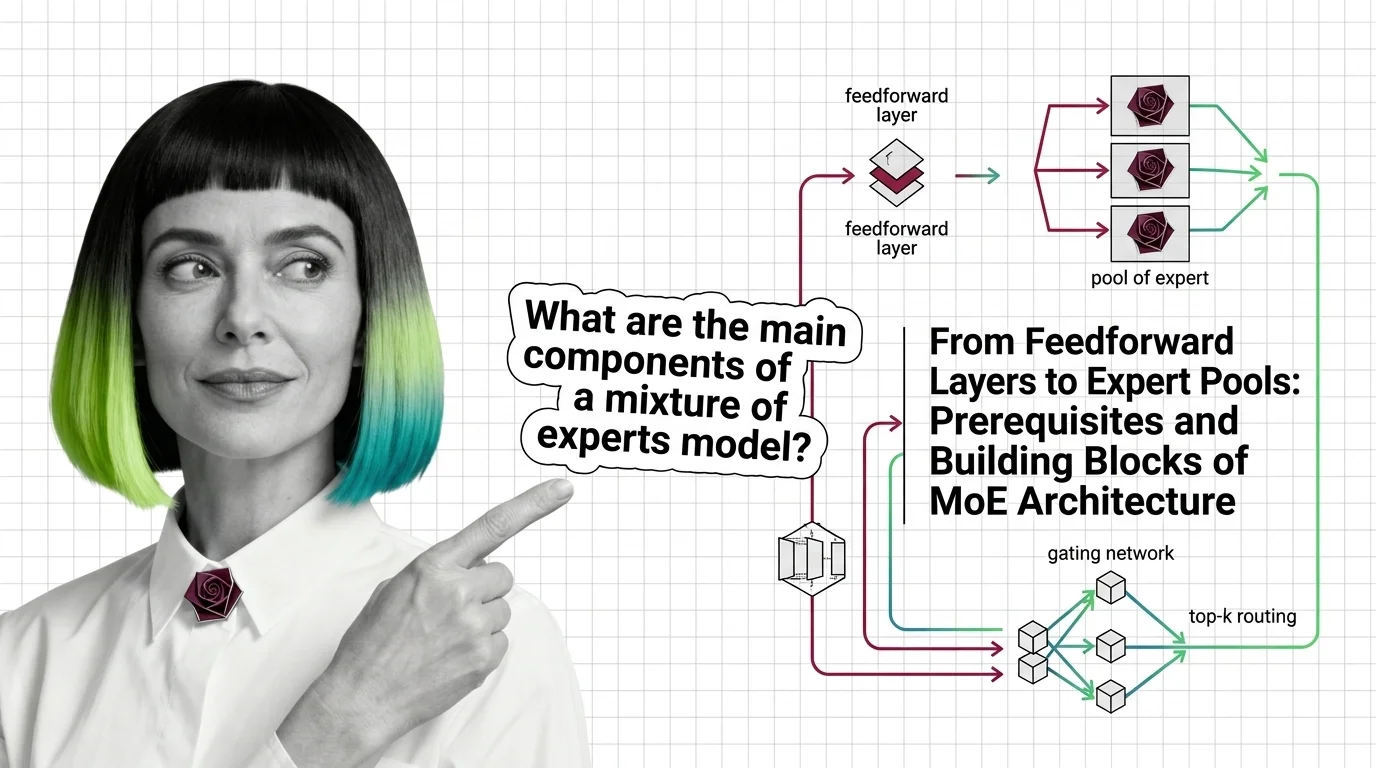

From Feedforward Layers to Expert Pools: Prerequisites and Building Blocks of MoE Architecture

Mixture of experts replaces one feedforward layer with many expert networks and a router. Learn how MoE gating and …

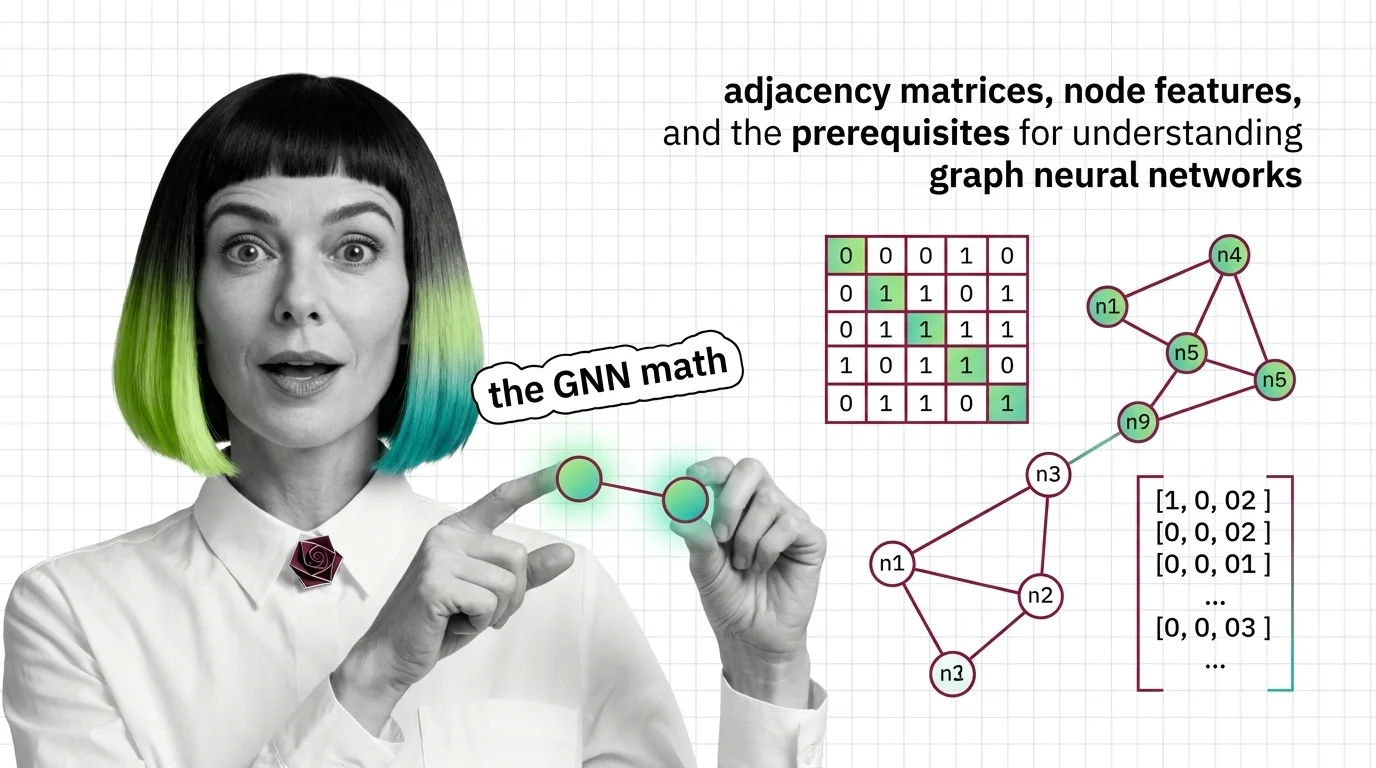

Adjacency Matrices, Node Features, and the Prerequisites for Understanding Graph Neural Networks

Graph neural networks consume matrices, not pixels. Learn how adjacency matrices, node features, and message passing …

AI Explained: Explore by Theme

8 themes — from neural network internals to safety evaluation. Pick a theme and go deep.

Sequence & State-Space Models →

Emerging architecture alternatives to transformers for processing long sequences efficiently, including state-space …

Neural Network Architectures →

The major neural network architecture families beyond transformers, including CNNs, RNNs, GANs, VAEs, and graph …

Embeddings & Vector Search →

Dense vector representations, similarity algorithms, and indexing structures that power semantic search and retrieval …

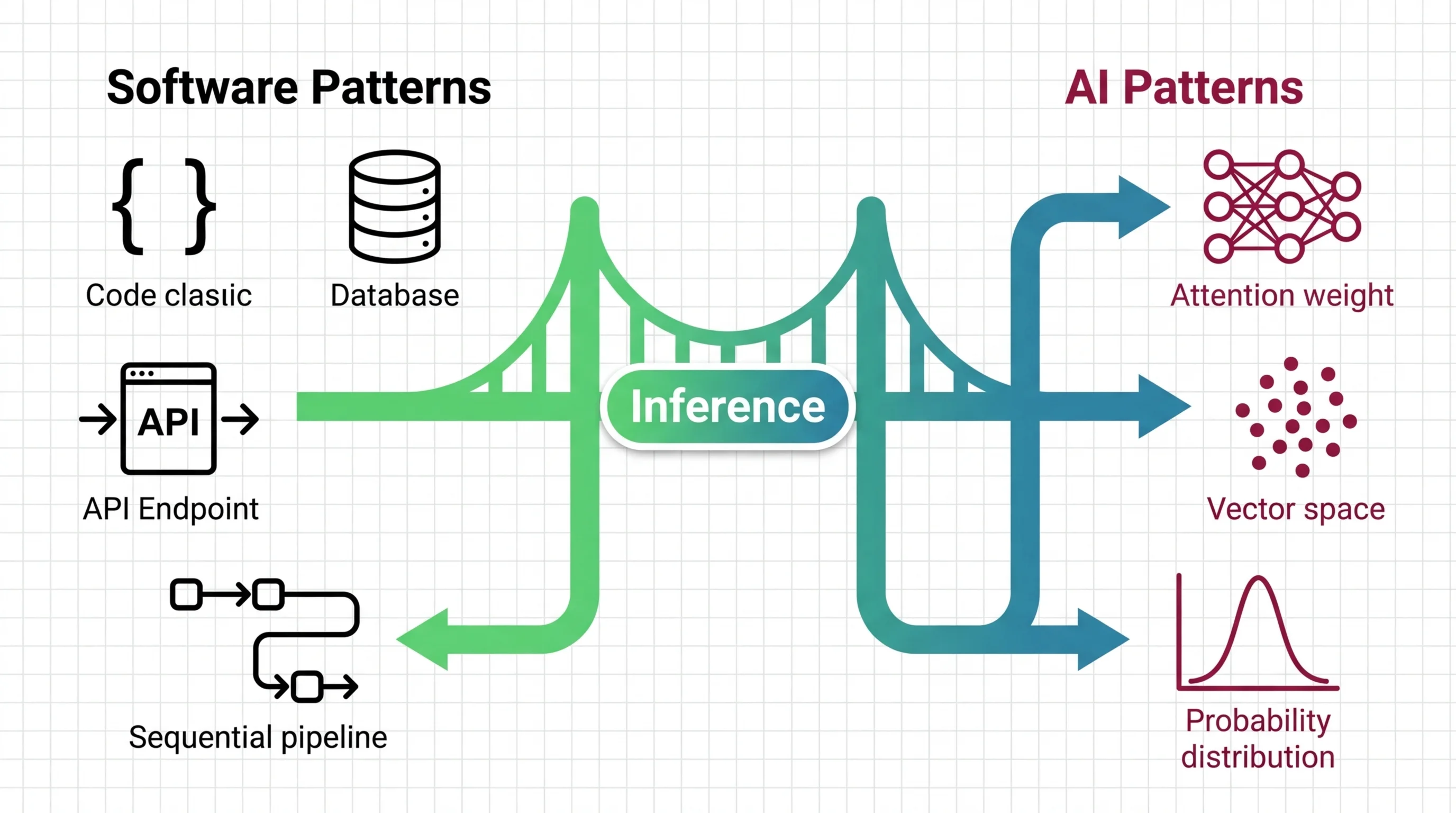

Inference Optimization →

Techniques for running models efficiently at inference time, from quantization to batching and sampling strategies.

LLM Training & Pre-Training →

How large language models are trained from scratch, covering pre-training objectives, scaling laws, and compute …

Model Evaluation & Benchmarks →

Methods, metrics, and benchmark suites for measuring AI model quality, from classification metrics to LLM-specific …

Deep Dive: Learning Paths

37 topics — pick one and get the full picture: theory, tutorials, market context, and critical analysis.

Mixture of Experts →

Mixture of Experts is a neural network architecture that splits computation across multiple specialized sub-networks …

Graph Neural Network →

A graph neural network is a deep learning architecture that operates directly on graph-structured data, where …

Variational Autoencoder →

A Variational Autoencoder (VAE) is a generative neural network that encodes input data into a continuous, structured …

Generative Adversarial Network →

A generative adversarial network is a machine learning architecture composed of two neural networks — a generator and a …

Convolutional Neural Network →

A Convolutional Neural Network is a deep learning architecture that applies small, learnable filters across input data …

Neural Network Basics for LLMs →

Neural networks are computational systems that learn patterns from data by adjusting internal parameters called weights …

Four Perspectives, One Topic

Every AI topic gets examined from four angles. No single narrative — just the full picture.

Humans in the Loop

Every article is curated and fact-checked by real people before publication.

AI Glossary

232 terms explained — from embeddings to transformers, RAG to synthetic data.

Ready for Your AI Transition?

Start with a learning path and go from zero to deep understanding, guided by four distinct perspectives.
