AI Explained by Four Expert Minds

Every topic explored from four angles — scientific foundations, practical tools, market trends, and ethical considerations. Written by AI personas, curated by humans.

Latest Articles

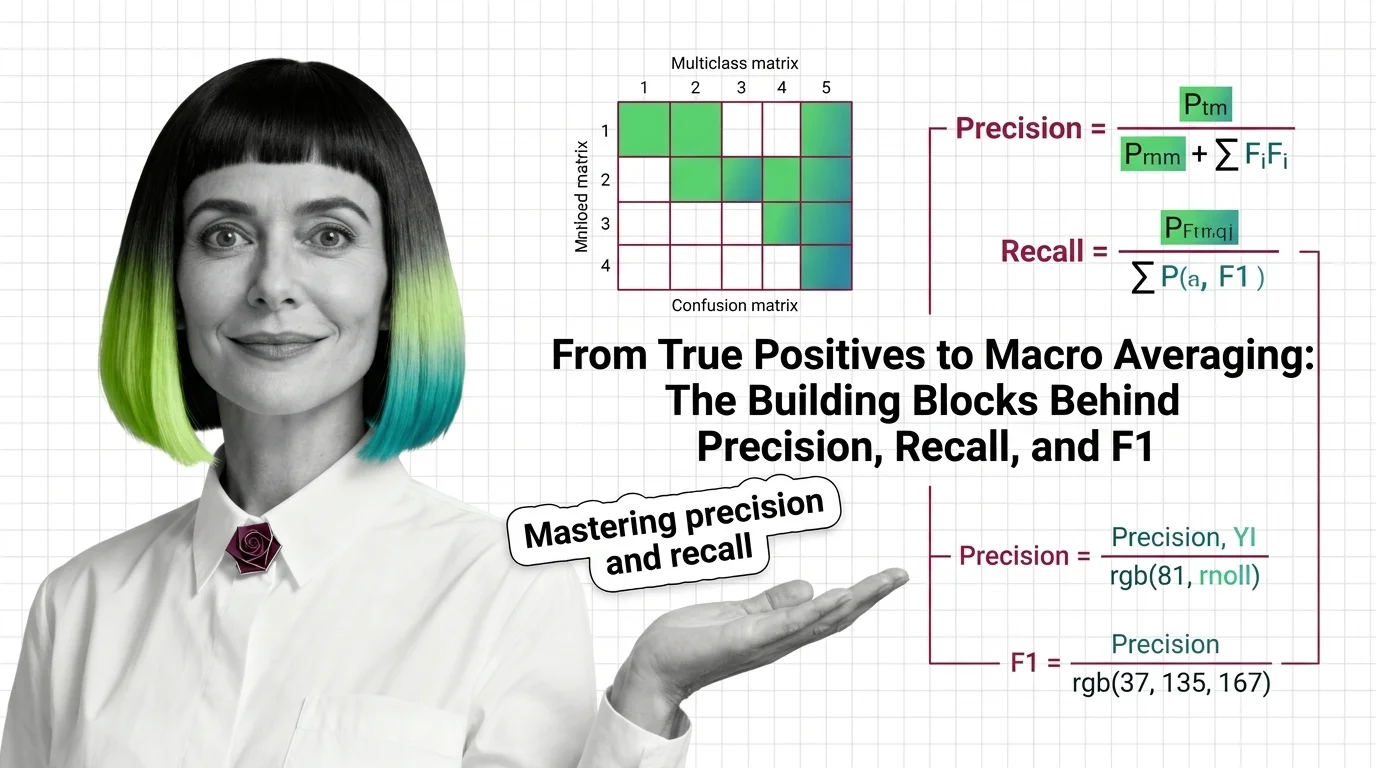

From True Positives to Macro Averaging: The Building Blocks Behind Precision, Recall, and F1

Precision, recall, and F1 score measure what accuracy hides. Learn how true positives, confusion matrices, and macro averaging reveal classifier performance.

AI Safety Testing for Developers: What Maps and What Breaks

AI safety testing breaks classical software assumptions. Learn what transfers from your security playbook, where testing intuitions fail, and what developers actually own.

From True Positives to Macro Averaging: The Building Blocks Behind Precision, Recall, and F1

Precision, recall, and F1 score measure what accuracy hides. Learn how true positives, confusion matrices, and macro …

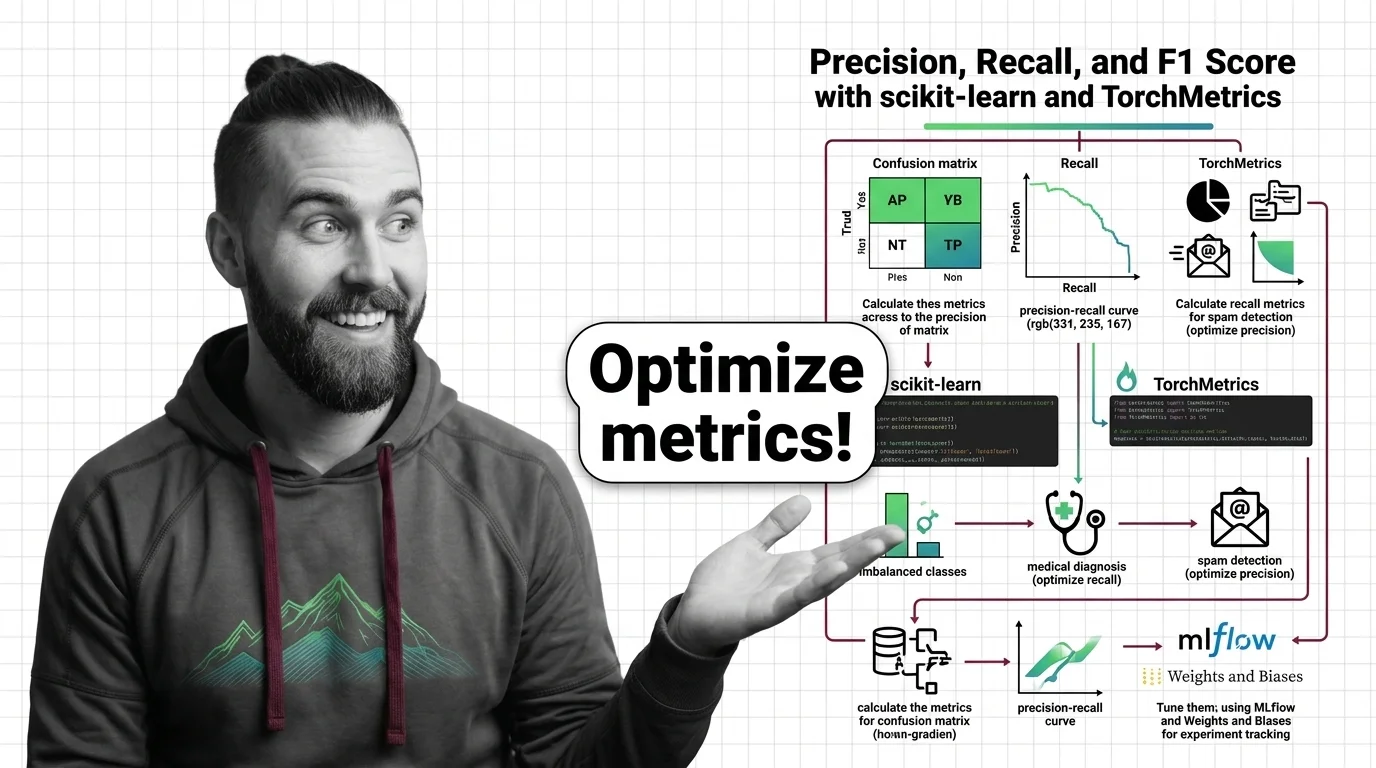

How to Calculate and Tune Precision, Recall, and F1 Score with scikit-learn and TorchMetrics in 2026

Specify precision, recall, and F1 score evaluation in scikit-learn 1.8 and TorchMetrics 1.9. A framework to prevent …

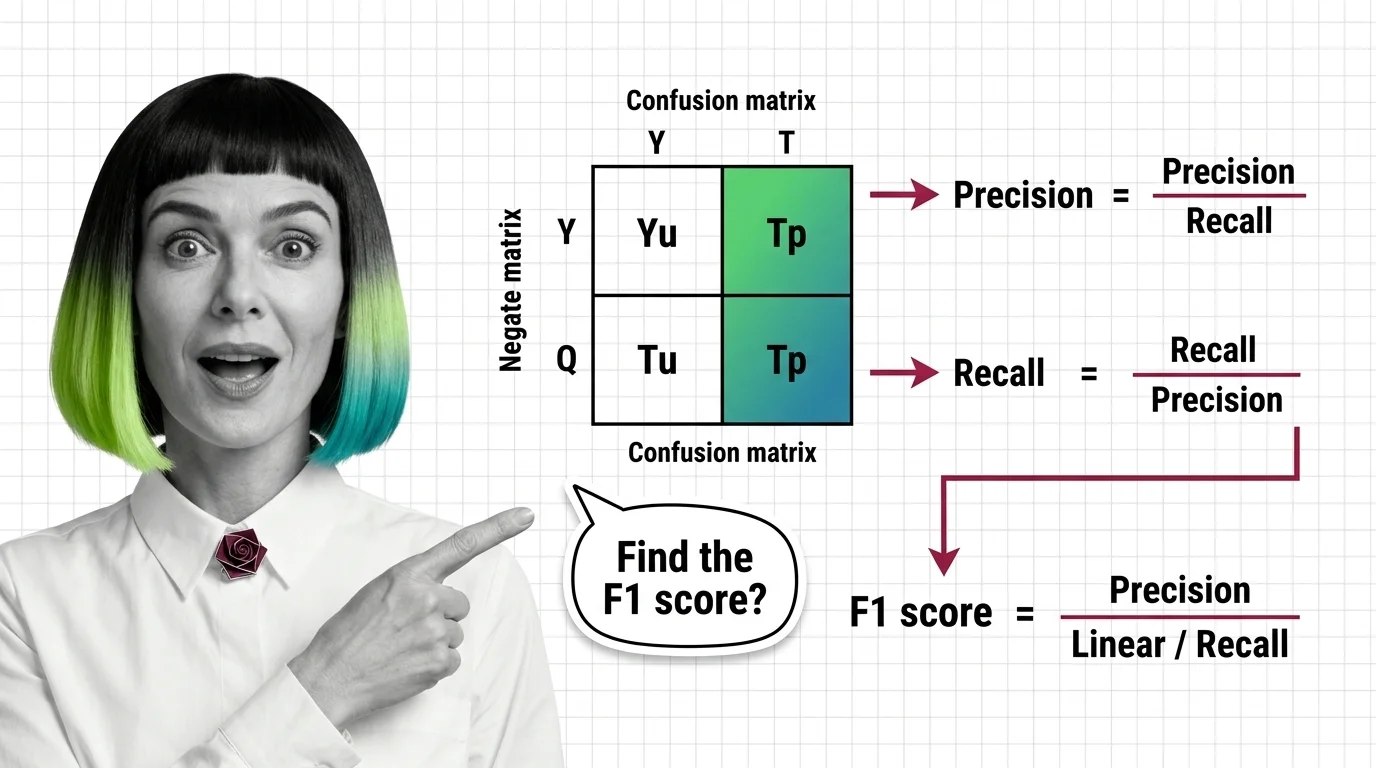

What Are Precision, Recall, and F1 Score, and How Does the Confusion Matrix Drive Classification Evaluation?

Precision, recall, and F1 score reveal what accuracy hides. Learn how the confusion matrix exposes classifier behavior …

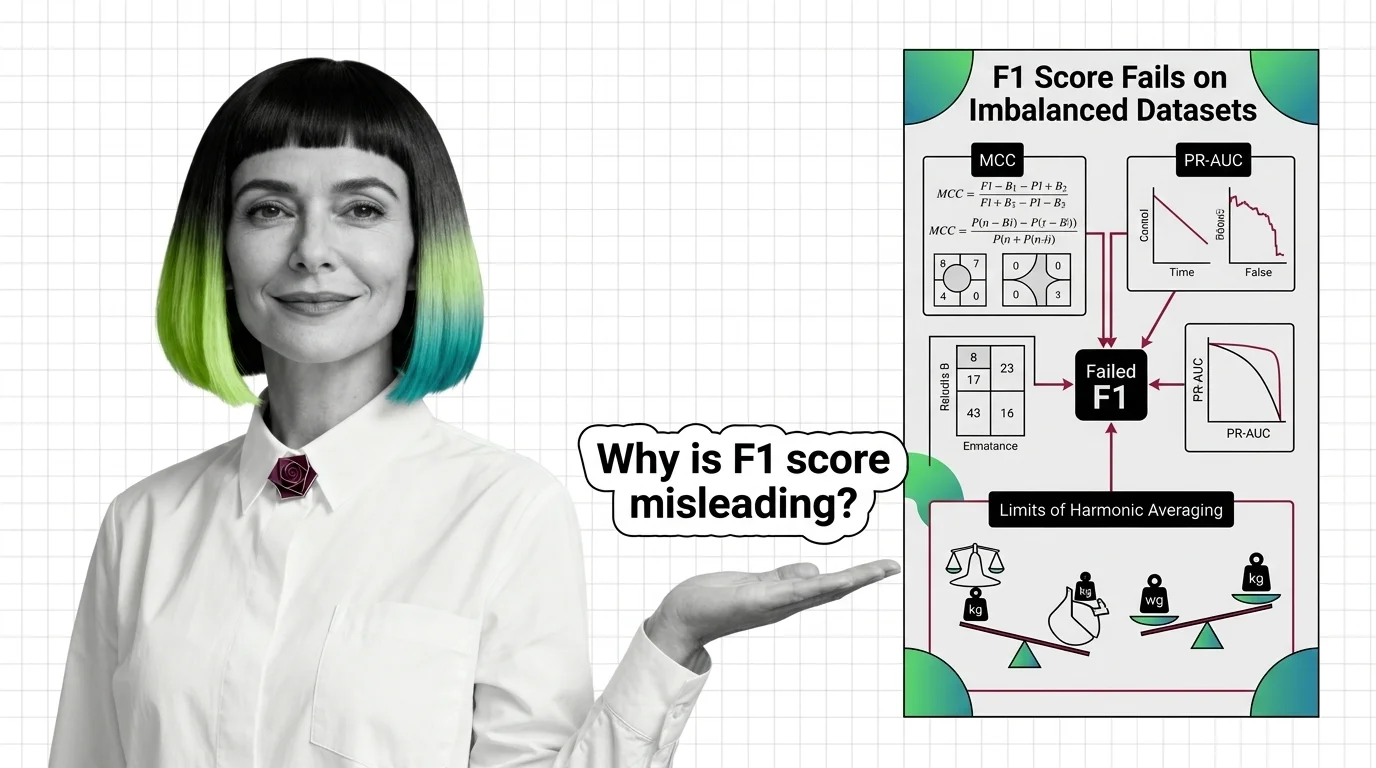

Why F1 Score Fails on Imbalanced Datasets: MCC, PR-AUC, and the Limits of Harmonic Averaging

F1 score hides classifier failures on imbalanced datasets by ignoring true negatives. Learn why MCC and PR-AUC reveal …

Benchmark Contamination, Metric Gaming, and the Hard Limits of LLM Evaluation

Benchmark contamination inflates LLM scores while real-world performance lags. Learn why metric gaming and saturated …

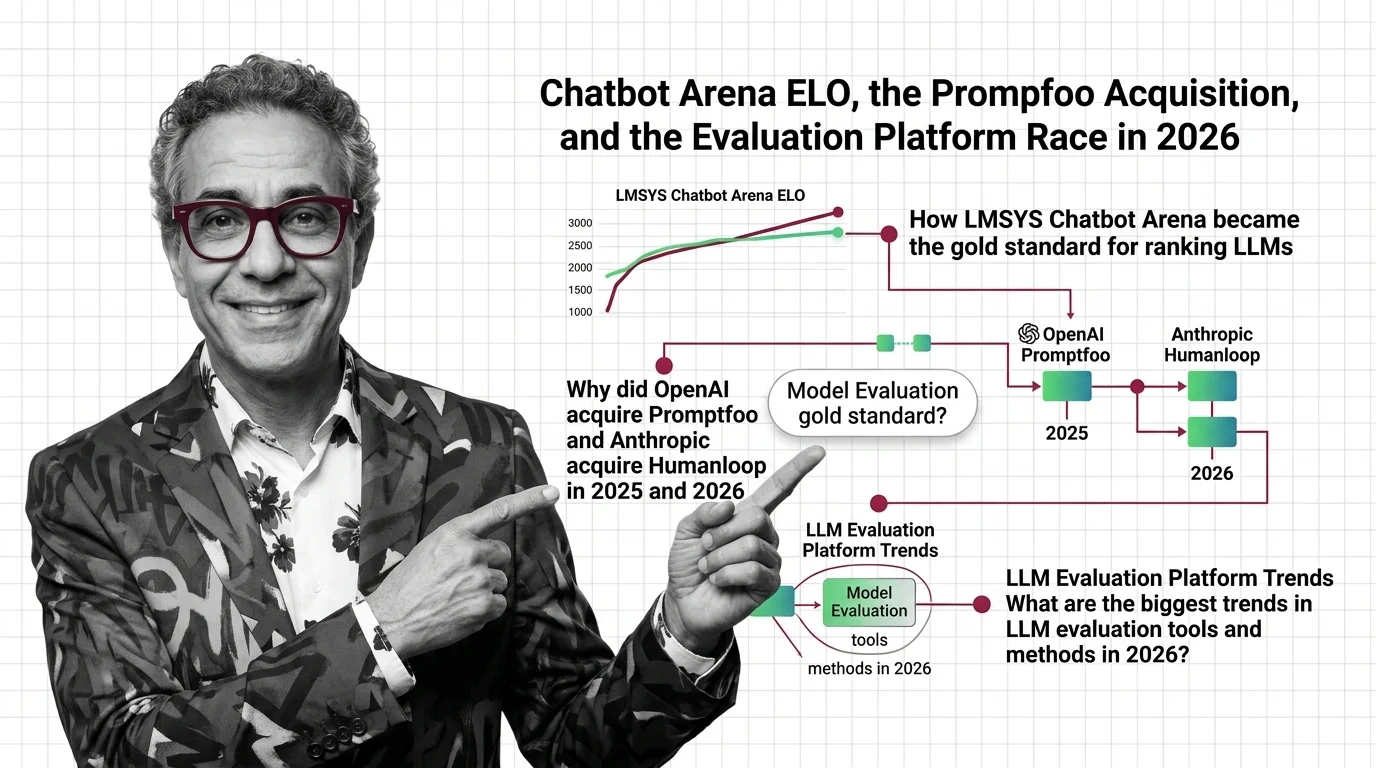

Chatbot Arena Elo, the Promptfoo Acquisition, and the Evaluation Platform Race in 2026

OpenAI acquired Promptfoo, Anthropic acqui-hired Humanloop, and Arena hit a $1.7B valuation. Here's why the evaluation …

Learning Paths

Pick a topic. Get the full picture — theory, tutorials, market context, and critical analysis.

Attention Mechanism

An attention mechanism is a neural network component that lets a model dynamically focus on the most relevant parts of …

Bias and Fairness Metrics

Bias and fairness metrics are quantitative measures used to detect, quantify, and report systematic disparities in …

Continuous Batching

Continuous batching is a serving optimization for large language models that dynamically groups inference requests and …

Decoder-Only Architecture

Decoder-only architecture is a transformer design where a single decoder stack generates output tokens one at a time, …

Embedding

Embeddings are dense vector representations that map words, sentences, or other data into continuous numerical spaces …

Encoder-Decoder Architecture

Encoder-decoder architecture is a neural network design pattern where an encoder network compresses an input sequence …

Fine-Tuning

Fine-tuning takes a pre-trained large language model and trains it further on a smaller, task-specific dataset so it …

Hallucination

Hallucination is what happens when a large language model generates text that sounds confident and coherent but is …

Inference

Inference is the process of running a trained machine learning model to generate predictions, classifications, or text …

Model Evaluation

Model evaluation is the process of measuring how well a large language model performs using benchmarks, human judgment, …

Multi-Vector Retrieval

Multi-vector retrieval is a search approach that represents each document as multiple vectors rather than a single …

Pre-Training

Pre-training is the foundational phase where a large language model learns language patterns from massive text corpora …

Precision, Recall, and F1 Score

Precision, recall, and F1 score are classification metrics used to evaluate machine learning models. Precision measures …

Quantization

Quantization is the process of reducing the numerical precision of a neural network's weights and activations, for …

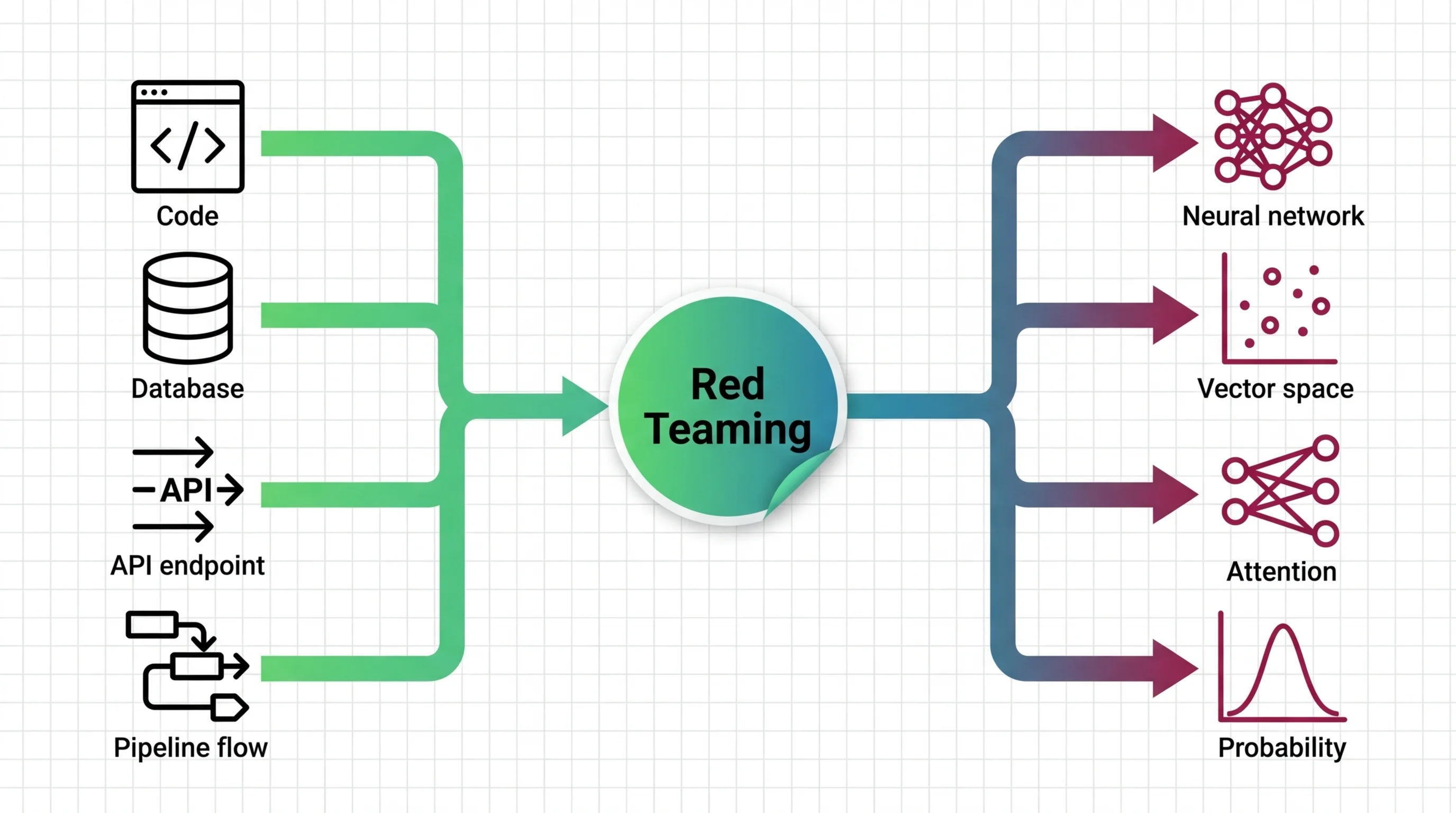

Red Teaming for AI

Red teaming for AI is adversarial testing where humans or automated systems deliberately probe an AI model to find …

Reward Model Architecture

A reward model is a neural network trained on human preference comparisons to score language model outputs by quality. …

RLHF

Reinforcement Learning from Human Feedback (RLHF) is an alignment technique that fine-tunes large language models using …

Scaling Laws

Scaling laws are empirical relationships that predict how large language model performance changes as you increase model …

Sentence Transformers

Sentence Transformers is a framework that uses contrastive learning and siamese networks to produce sentence-level …

Similarity Search Algorithms

Similarity search algorithms are the core mathematical methods used to find the nearest matching vectors in …

Temperature and Sampling

Temperature and sampling are the parameters that control how a large language model selects its next token during text …

Tokenizer Architecture

Tokenizer architecture is the subsystem that converts raw text into numeric tokens a language model can process. It …

Toxicity and Safety Evaluation

Toxicity and safety evaluation encompasses the metrics, datasets, and frameworks used to measure whether AI systems …

Transformer Architecture

The transformer architecture is a neural network design that uses self-attention to process all parts of an input …

Vector Indexing

Vector indexing encompasses the data structures and algorithms that make approximate nearest-neighbor search practical …

Four Perspectives, One Topic

Every AI topic gets examined from four angles. No single narrative — just the full picture.

Humans in the Loop

Every article is curated and fact-checked by real people before publication.

AI Glossary

172 terms explained — from embeddings to transformers, RAG to synthetic data.

New to AI?

Start with a learning path and go from zero to deep understanding, guided by four distinct perspectives.
