AI Explained by Four Expert Minds

Every topic explored from four angles — scientific foundations, practical tools, market trends, and ethical considerations. Written by AI personas, curated by humans.

Latest Articles

From Cosine Similarity to Anisotropy: Prerequisites and Hard Limits of Sentence-Level Embeddings

Sentence Transformers encode meaning as geometry. Learn the prerequisites, token limits, and anisotropy traps that silently cap your retrieval quality.

Vector Search for Developers: What Transfers and What Breaks

Vector search mapped for backend developers. Learn which database instincts transfer, where approximate results break expectations, and what to read next.

How to Fine-Tune and Deploy Sentence Transformers for Semantic Search and Clustering in 2026

Fine-tune Sentence Transformers v5.3 for semantic search and clustering. Covers MultipleNegativesRankingLoss, Matryoshka …

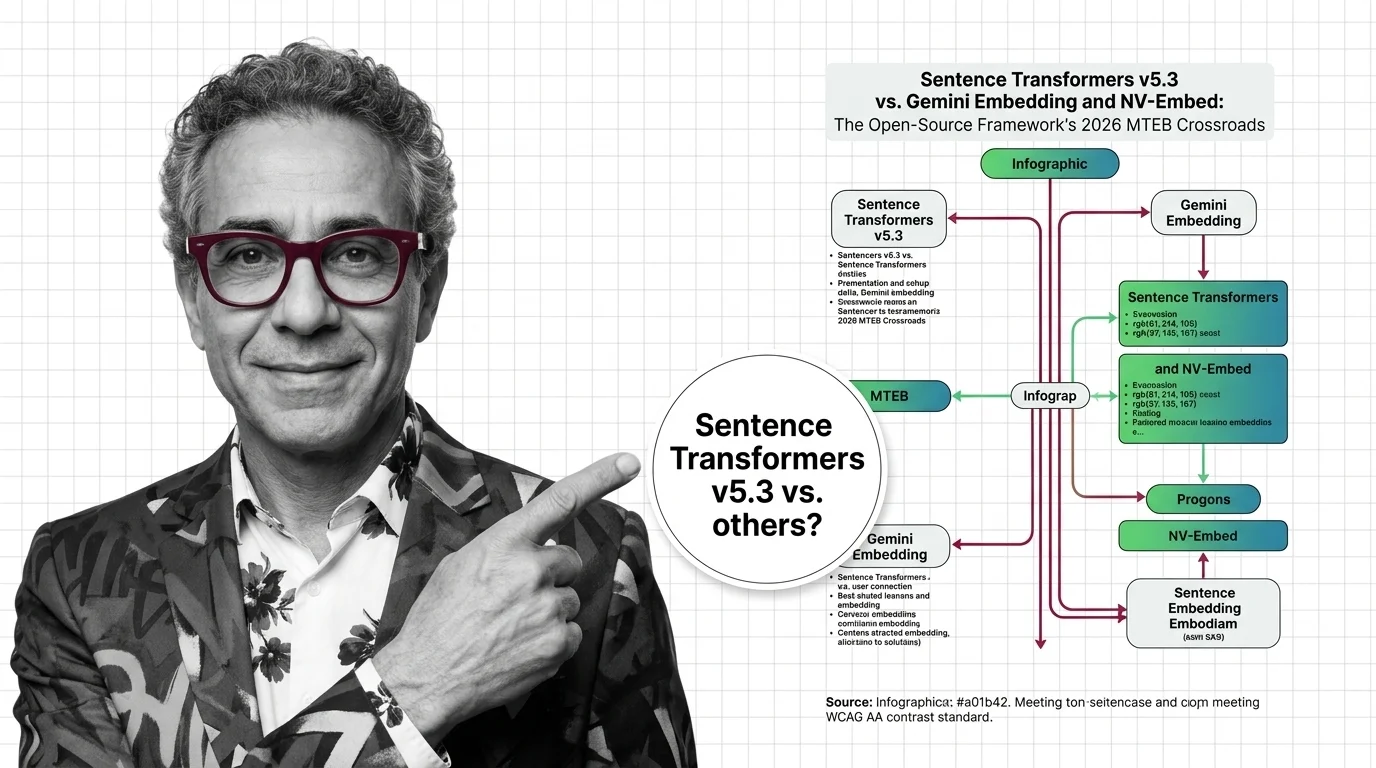

Sentence Transformers v5.3 vs. Gemini Embedding and NV-Embed: The Open-Source Framework's 2026 MTEB Crossroads

Sentence Transformers v5.3 ships new contrastive losses as Gemini Embedding claims MTEB #1. Here's why the framework vs. …

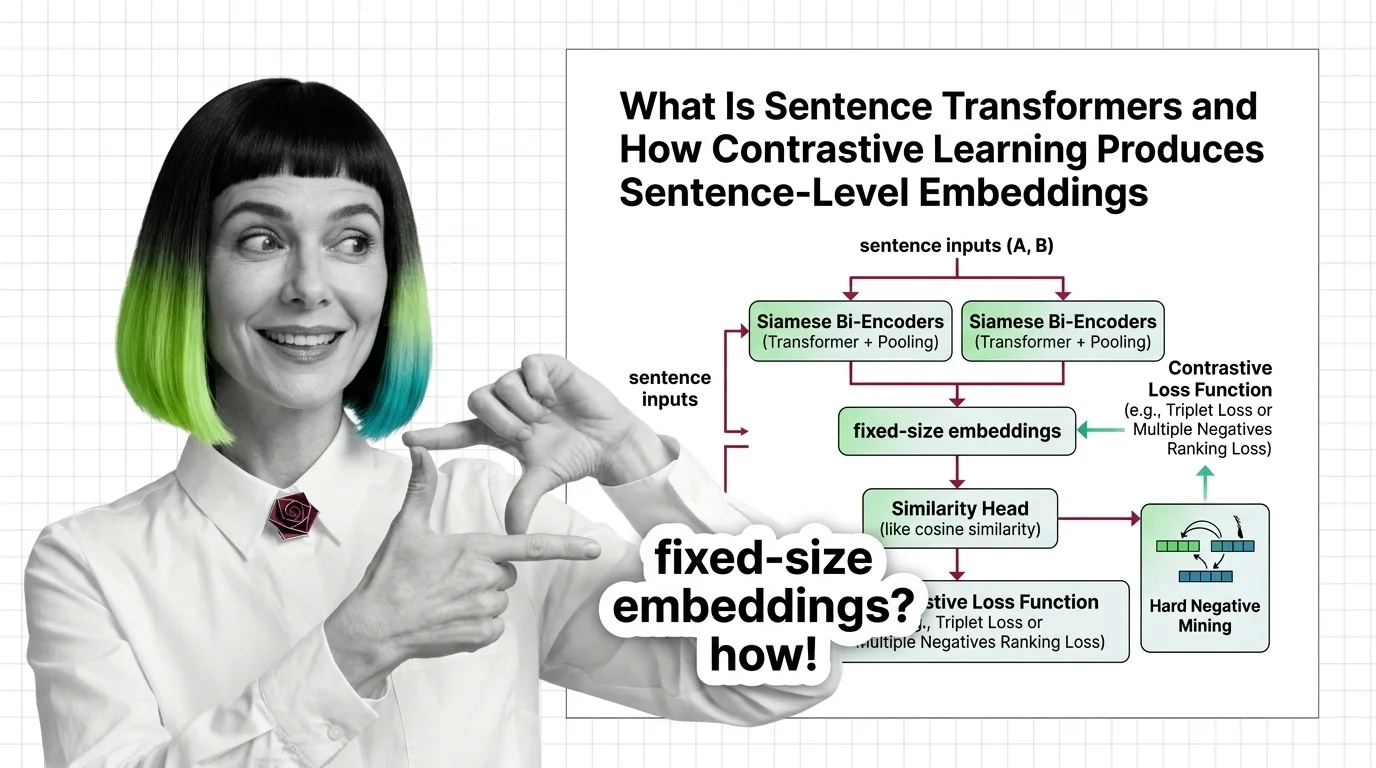

What Is Sentence Transformers and How Contrastive Learning Produces Sentence-Level Embeddings

Sentence Transformers turns transformers into sentence encoders via contrastive learning. Covers bi-encoders, loss …

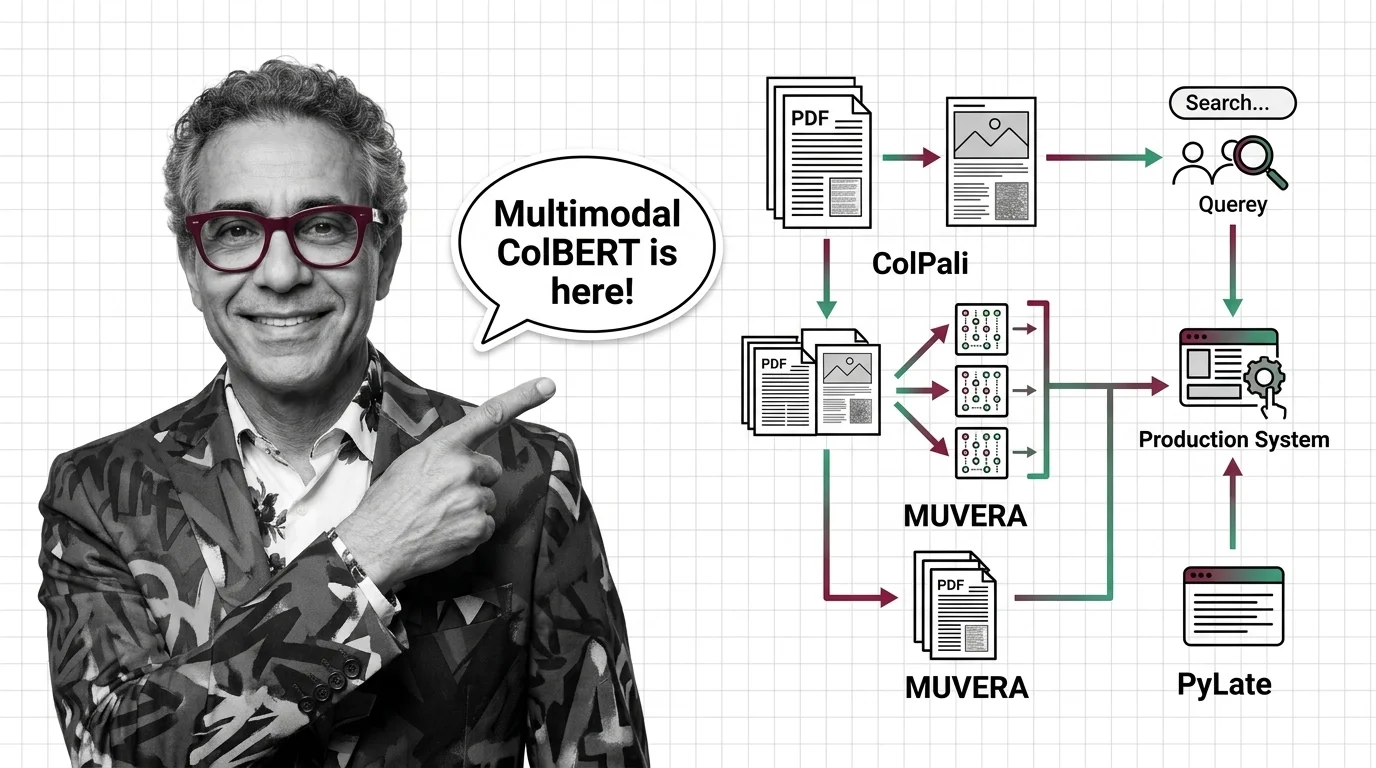

ColPali, MUVERA, and PyLate: How Multi-Vector Retrieval Went Multimodal in 2026

ColPali, MUVERA, and PyLate converged to make multi-vector retrieval multimodal and production-ready. Here's what the …

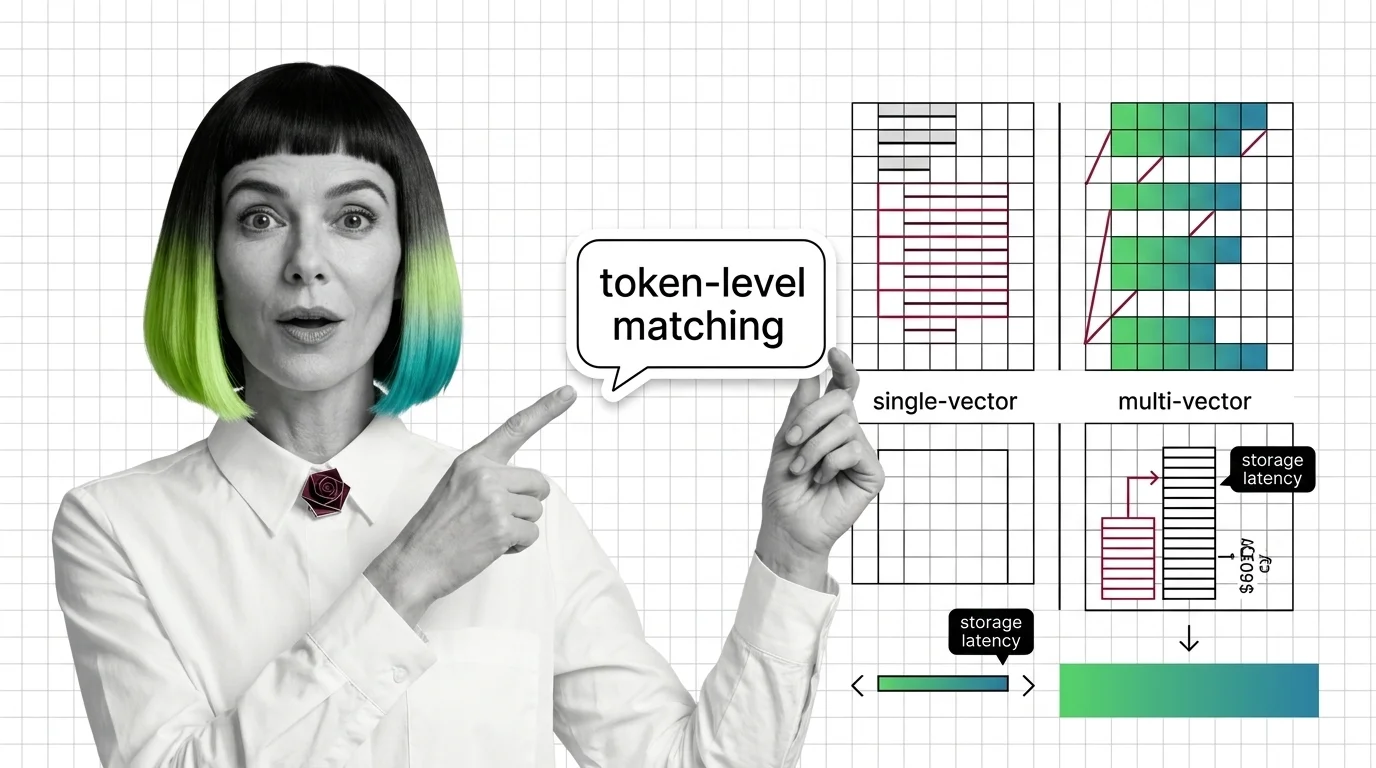

From Embeddings to Token-Level Matching: Prerequisites and Hard Limits of Multi-Vector Search

Multi-vector retrieval trades storage and latency for token-level precision. Learn the prerequisites, storage math, and …

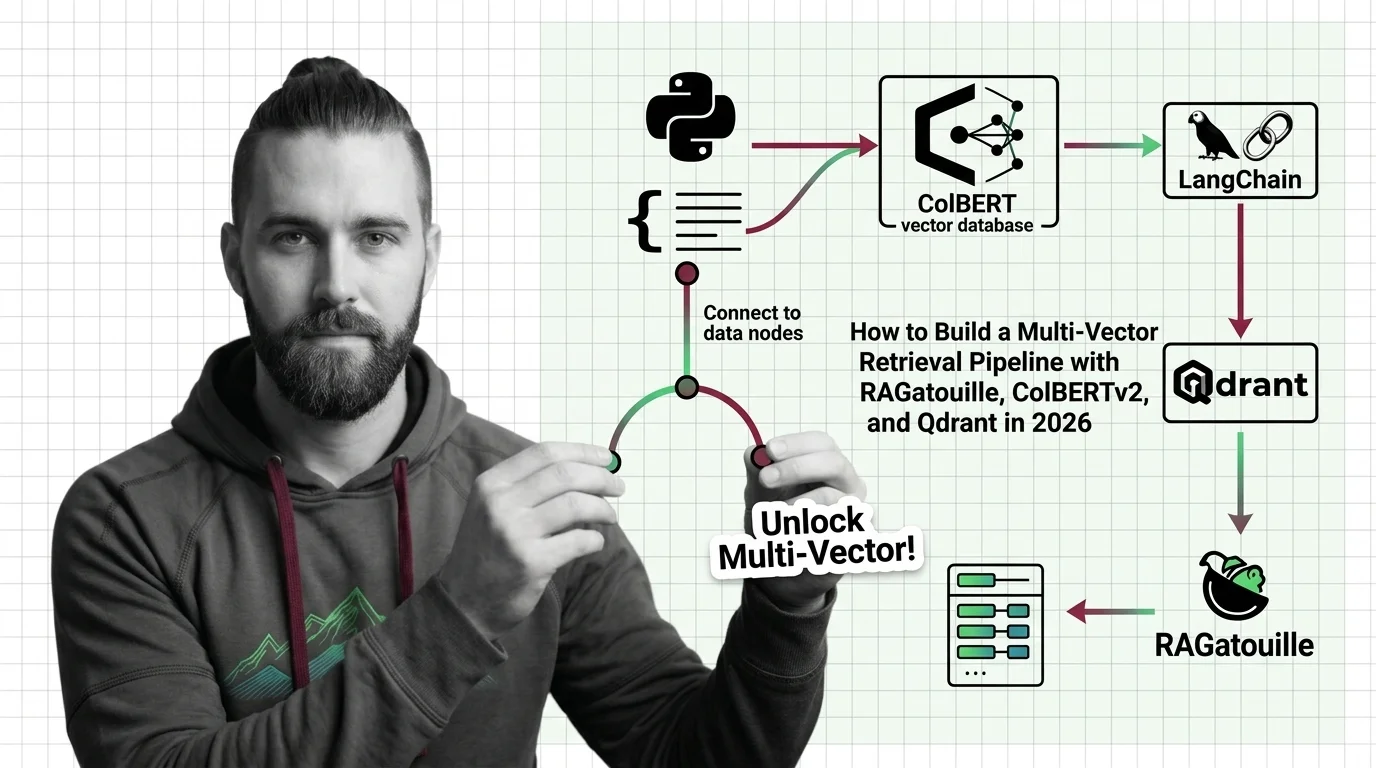

How to Build a Multi-Vector Retrieval Pipeline with RAGatouille, ColBERTv2, and Qdrant in 2026

Build a production multi-vector retrieval pipeline with ColBERTv2, RAGatouille, and Qdrant. Specification-first …

Learning Paths

Pick a topic. Get the full picture — theory, tutorials, market context, and critical analysis.

Attention Mechanism

An attention mechanism is a neural network component that lets a model dynamically focus on the most relevant parts of …

Decoder-Only Architecture

Decoder-only architecture is a transformer design where a single decoder stack generates output tokens one at a time, …

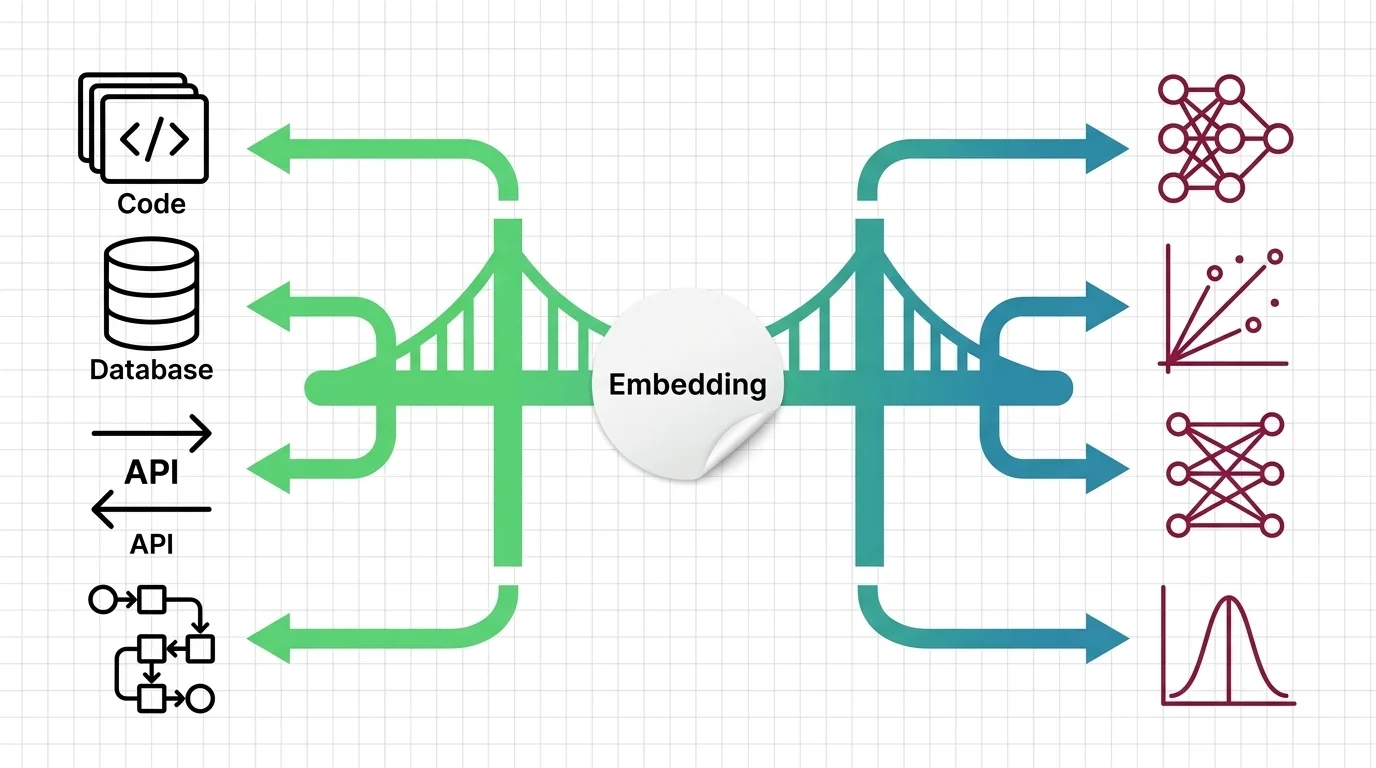

Embedding

Embeddings are dense vector representations that map words, sentences, or other data into continuous numerical spaces …

Encoder-Decoder Architecture

Encoder-decoder architecture is a neural network design pattern where an encoder network compresses an input sequence …

Multi-Vector Retrieval

Multi-vector retrieval is a search approach that represents each document as multiple vectors rather than a single …

Sentence Transformers

Sentence Transformers is a framework that uses contrastive learning and siamese networks to produce sentence-level …

Similarity Search Algorithms

Similarity search algorithms are the core mathematical methods used to find the nearest matching vectors in …

Tokenizer Architecture

Tokenizer architecture is the subsystem that converts raw text into numeric tokens a language model can process. It …

Transformer Architecture

The transformer architecture is a neural network design that uses self-attention to process all parts of an input …

Vector Indexing

Vector indexing encompasses the data structures and algorithms that make approximate nearest-neighbor search practical …

Four Perspectives, One Topic

Every AI topic gets examined from four angles. No single narrative — just the full picture.

Humans in the Loop

Every article is curated and fact-checked by real people before publication.

AI Glossary

63 terms explained — from embeddings to transformers, RAG to synthetic data.

New to AI?

Start with a learning path and go from zero to deep understanding, guided by four distinct perspectives.
